PH Trending | 2026-02-13

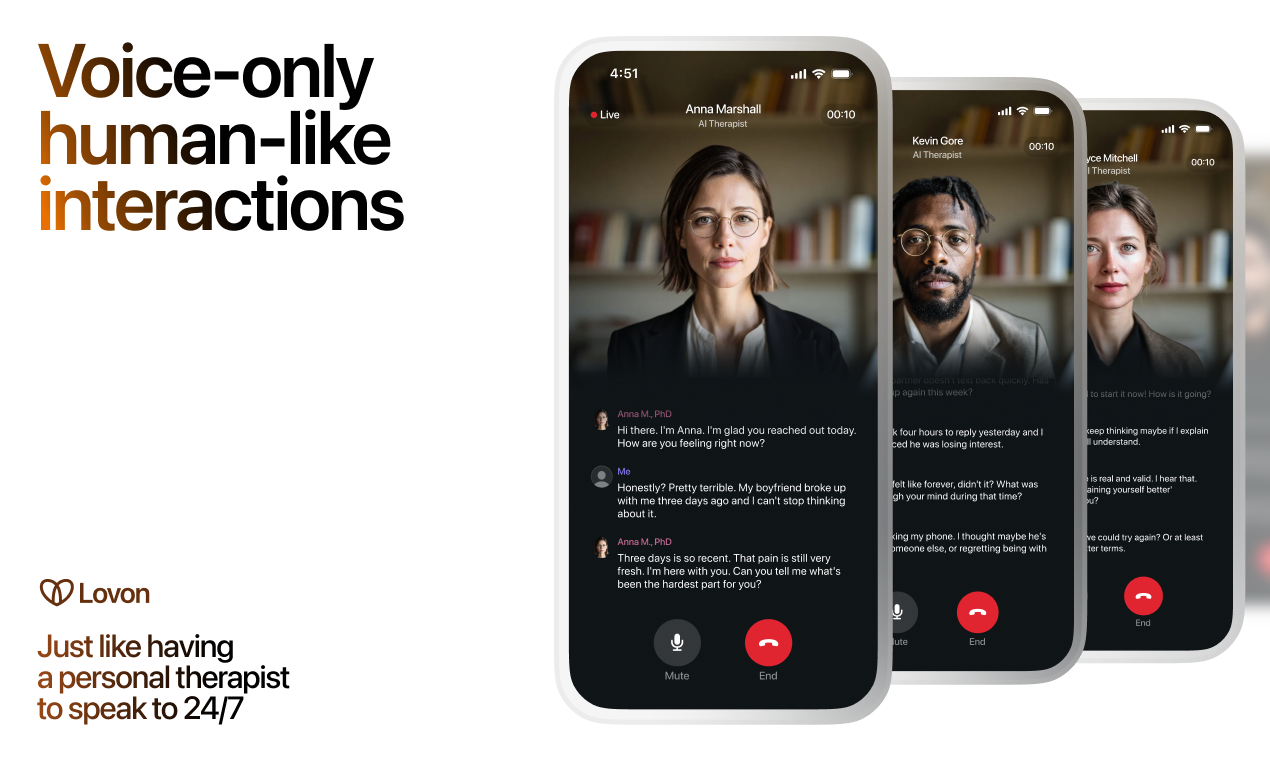

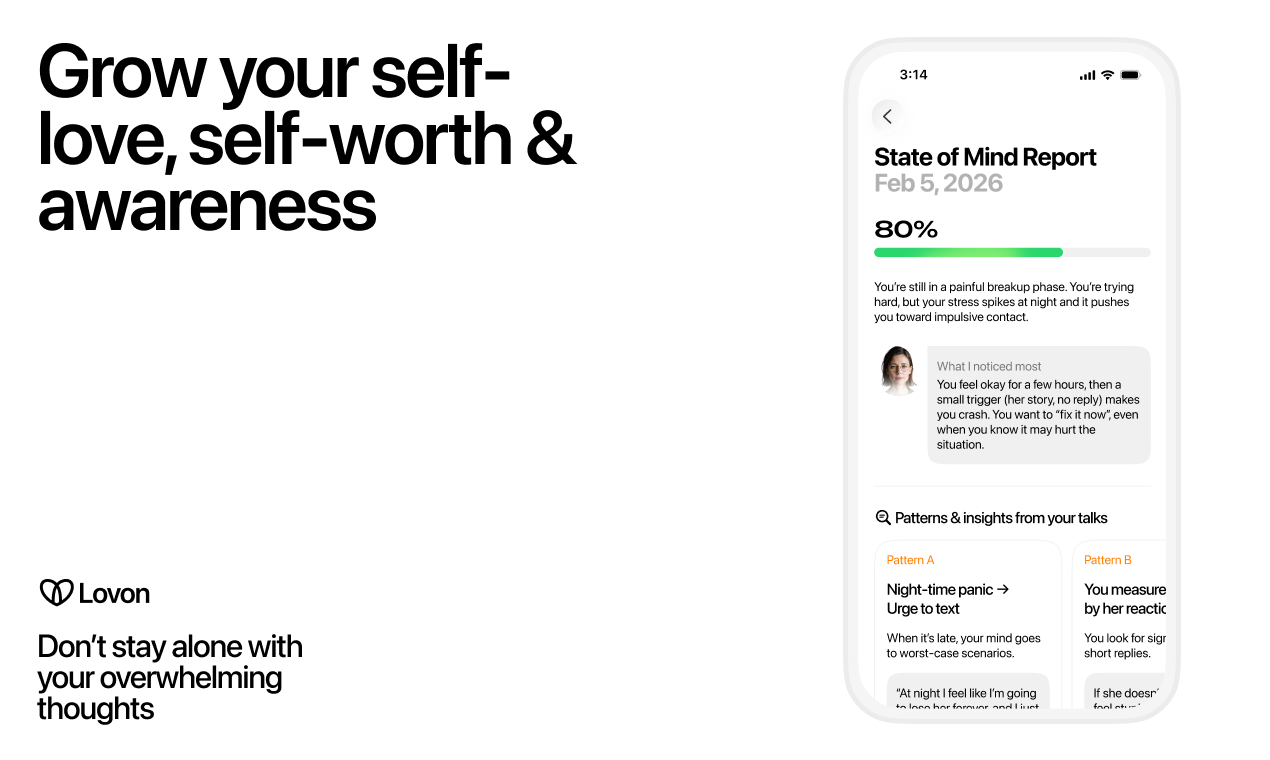

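One-line intro: A voice-first AI therapy app built on evidence-based approaches such as CBT. When users hit an emotional crisis (a sleepless night, the pain of a breakup) or cannot reach a human therapist right away, it offers immediate companionship and intervention they can talk to.

Artificial Intelligence

Health

AI therapy

Voice conversation

Cognitive behavioral therapy (CBT)

Emotional support

Crisis intervention

Mental health tech

24/7 availability

Evidence-based intervention

Digital therapeutics

Emotional companionship

User comment summary: Users broadly praise how convenient and human the voice interaction feels, and especially value it in acute emotional situations such as recovering from a breakup. The main questions concern network dependence (does it work offline?) and curiosity about how the AI manages to "gently challenge rather than simply agree." The team replied actively and revealed that most users come for immediate support rather than as a supplement to ongoing therapy.

AI Sharp Take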

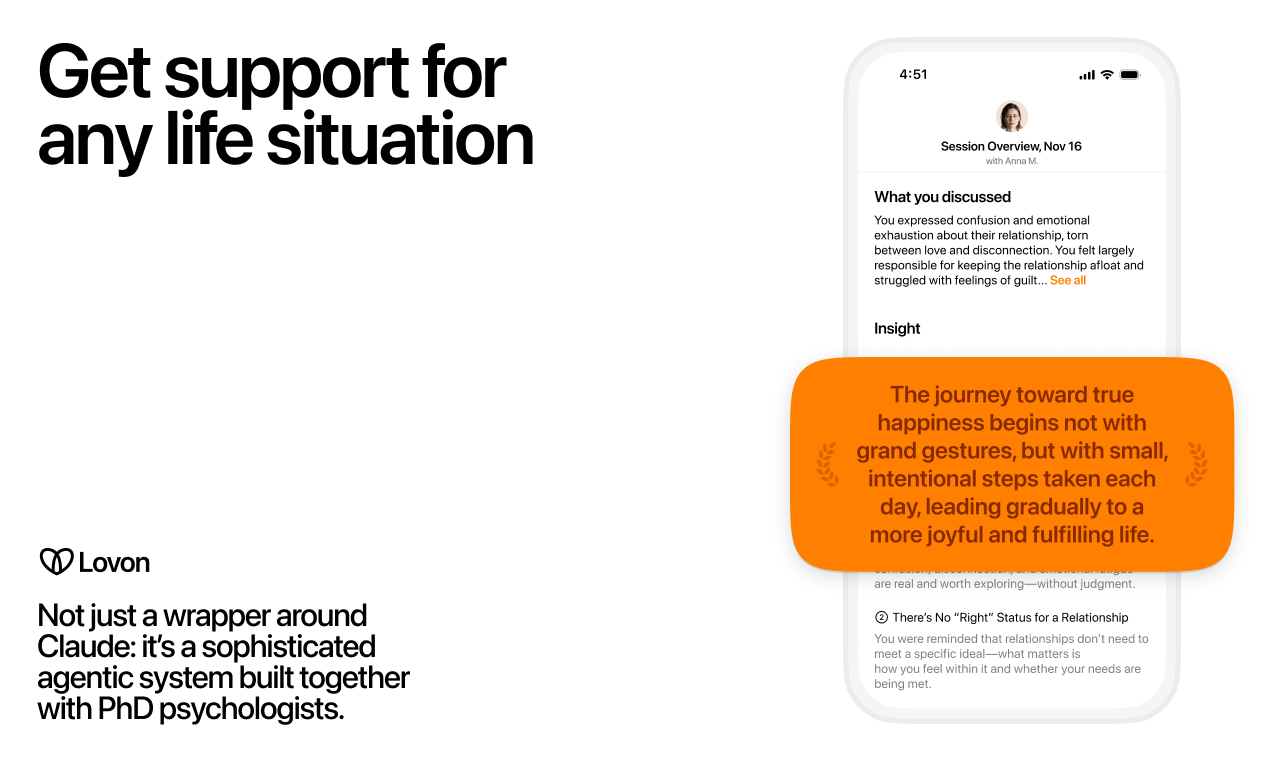

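Lovon AI Therapy's debut strikes at the softest spot of traditional therapy: the lack of immediacy and the high barrier to access. It does not claim to replace therapists; it positions itself as a "bridge between sessions" and an "outlet for acute emotional moments," a smart and ethically sound entry point. Its claims of being "voice-first" and of "challenging irrational thinking with evidence-based therapy" are the key technical narrative it uses to separate itself from the flood of empathy-only chatbots.

Yet both its real challenge and any assessment of its value hinge on exactly that. First, the algorithmic implementation of "gentle challenge" is the core black box: it requires extremely careful prompt engineering and safety guardrails to avoid harmful responses or unproductive conversations, and users' questions on this point go straight to the heart of the matter. Second, the team's disclosure that its main users are "people going through breakups or unable to sleep at night" shows that the product leans toward "emotional companionship and crisis buffering," which differs fundamentally from "therapy" that needs long-term, structured intervention. Its value probably lies more in the immediate accessibility of emotional relief than in deep psychotherapy.

The team's background (experienced psychologists are involved) and its planned clinical validation are important assets for building trust, but it must be soberly acknowledged that evidence for AI's efficacy as a "therapeutic tool" is still at an early stage. If the product can make its "crisis detection and referral" feature reliable, its social value will far exceed its commercial value. In short, Lovon is a meaningful, scenario-specific experiment, but its task is to find the narrow path, compliant and genuinely effective, between "harmless companionship" and "effective intervention."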

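One-line intro: An AI-powered meme keyboard that reads the context of fast-moving group chats and instantly suggests fitting memes, sparing users from digging through their gallery by hand and falling behind the conversation.

Custom Keyboards

Messaging

Memes

AI keyboard

Meme recommendations

Group chat tool

Social efficiency

Real-time chat

Content generation

Mobile app

Fun social

User comment summary: Users broadly agree it solves the core pain point that "finding memes is slow" and find it more convenient than the built-in GIF keyboard. Feedback centers on strong demand for custom uploads; questions about platform support (iOS/Android), privacy and security, and how the AI works; and suggestions to spell out how the keyboard integrates and what it supports.

AI Sharp Take

Meme Dealer targets a tiny but real, high-frequency scenario: meme battles in group chats. Its value lies not in technical innovation but in reworking the input flow: it turns memes from a "content library" you must actively search into "input-method suggestions" predicted from context. In essence, it packages AI intent recognition as an instant tool for emotional expression and tries to claim the "next word" slot on the user's input panel.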
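To make the "input-method suggestion" idea concrete, here is a minimal sketch that ranks a meme library against the recent chat window by tag overlap, weighting newer messages more. It is not Meme Dealer's method (the launch does not disclose one); the tag data, the recency weighting, and the function names are hypothetical, and a production system would more likely use embeddings or a language model than keyword matching.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase, then split on anything that is not a letter, digit, or apostrophe.
    return [t for t in re.split(r"[^a-z0-9']+", text.lower()) if t]


def suggest_memes(recent_messages: list[str], meme_tags: dict[str, set[str]],
                  top_k: int = 3) -> list[str]:
    """Rank memes by how often their tags appear in the recent chat window.

    recent_messages: oldest first. meme_tags: hypothetical meme id -> set of lowercase tags.
    """
    window = Counter()
    # Later messages get larger weights, so the latest turn of the chat counts most.
    for weight, msg in enumerate(recent_messages, start=1):
        for tok in tokenize(msg):
            window[tok] += weight
    scores = {meme: sum(window[tag] for tag in tags) for meme, tags in meme_tags.items()}
    # Highest score first; ties broken alphabetically so the output is deterministic.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [meme for meme, score in ranked if score > 0][:top_k]
```

Memes whose tags never appear in the window are dropped rather than padded in, which mirrors the risk discussed below: when nothing fits "the vibe," an empty suggestion bar is better than a wrong one.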

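Its challenges, however, are equally clear. First is the risk of becoming a niche: the product depends heavily on active, closed group chats with their own in-jokes, so its broader appeal is doubtful. Second is the black box of recommendation accuracy: comments already show concern about privacy and data handling, and the subjectivity of judging "the vibe" makes misfires likely. Once it "gets it wrong," the cost of switching keyboards will drive users away fast. Finally, its business model is vague: a keyboard is a highly utilitarian tool with a narrow path to monetization, and it is very exposed to being crushed by features the tech giants build in.

Making custom uploads a priority is a wise move: it hands part of the accuracy problem back to users, letting a "personal meme library" make up for the AI's shortcomings and form a moat. In the long run, though, it must grow from a "meme recommendation tool" into a "group interaction vibe engine" that binds more deeply to small-circle culture; otherwise it will likely remain a fun but short-lived efficiency plug-in.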

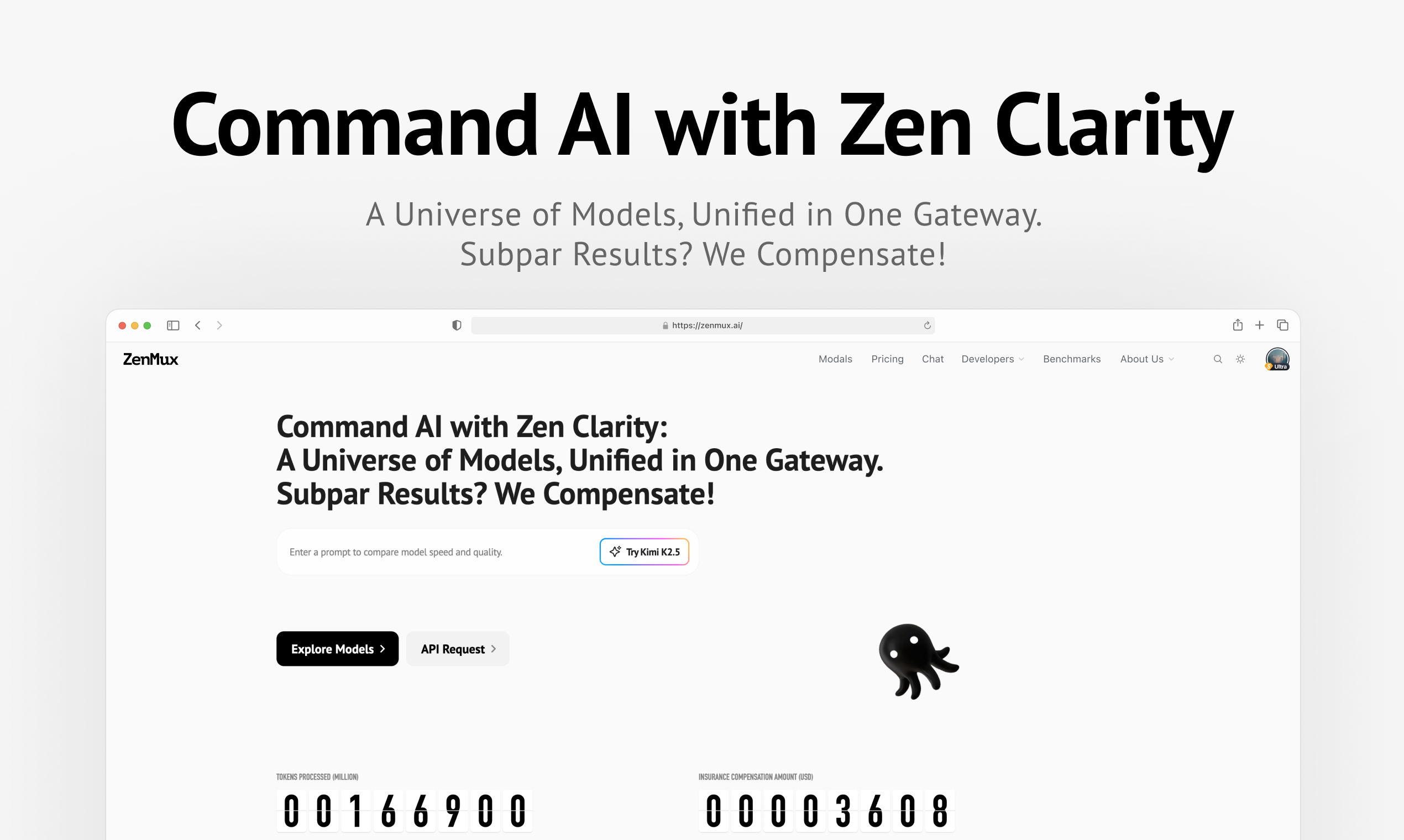

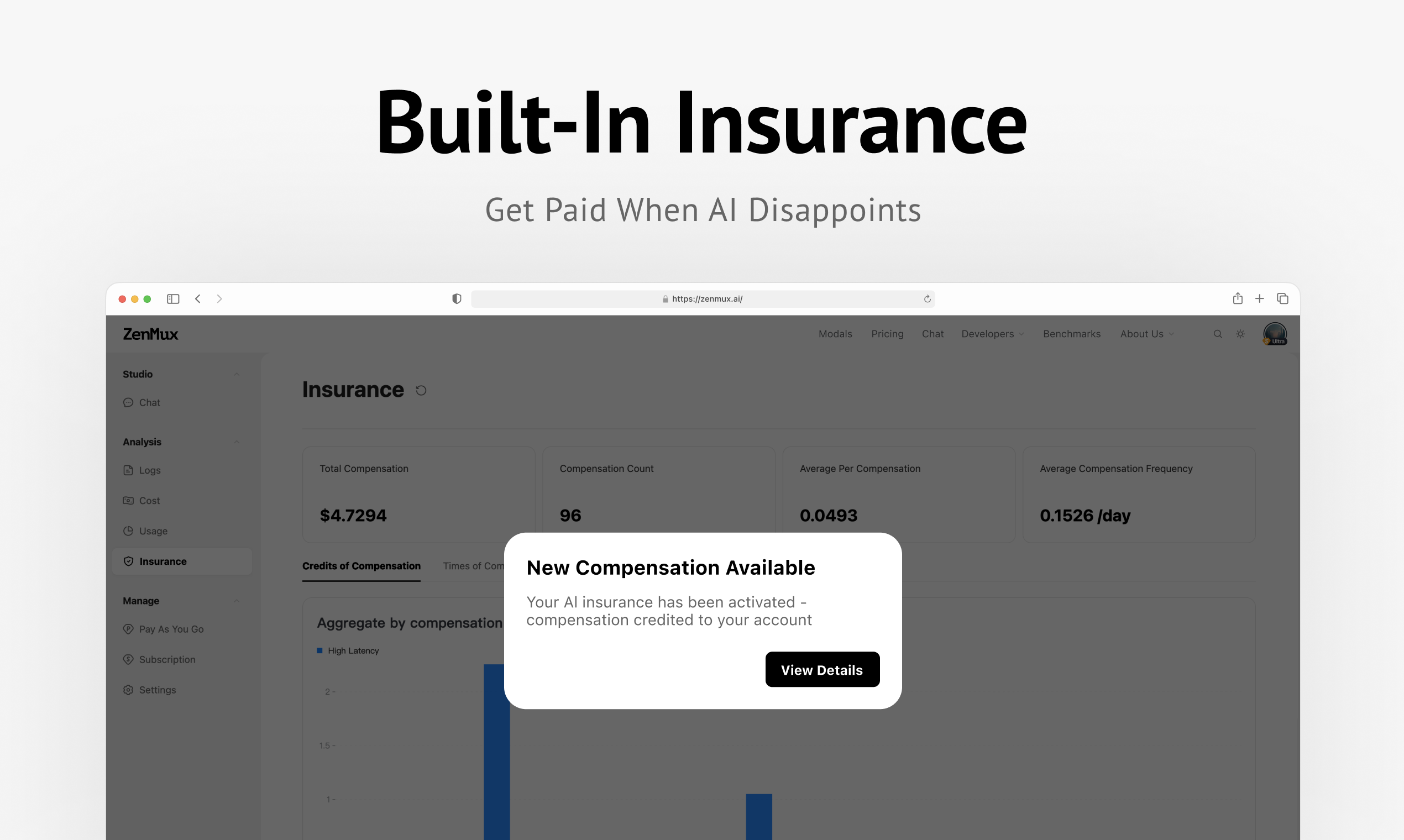

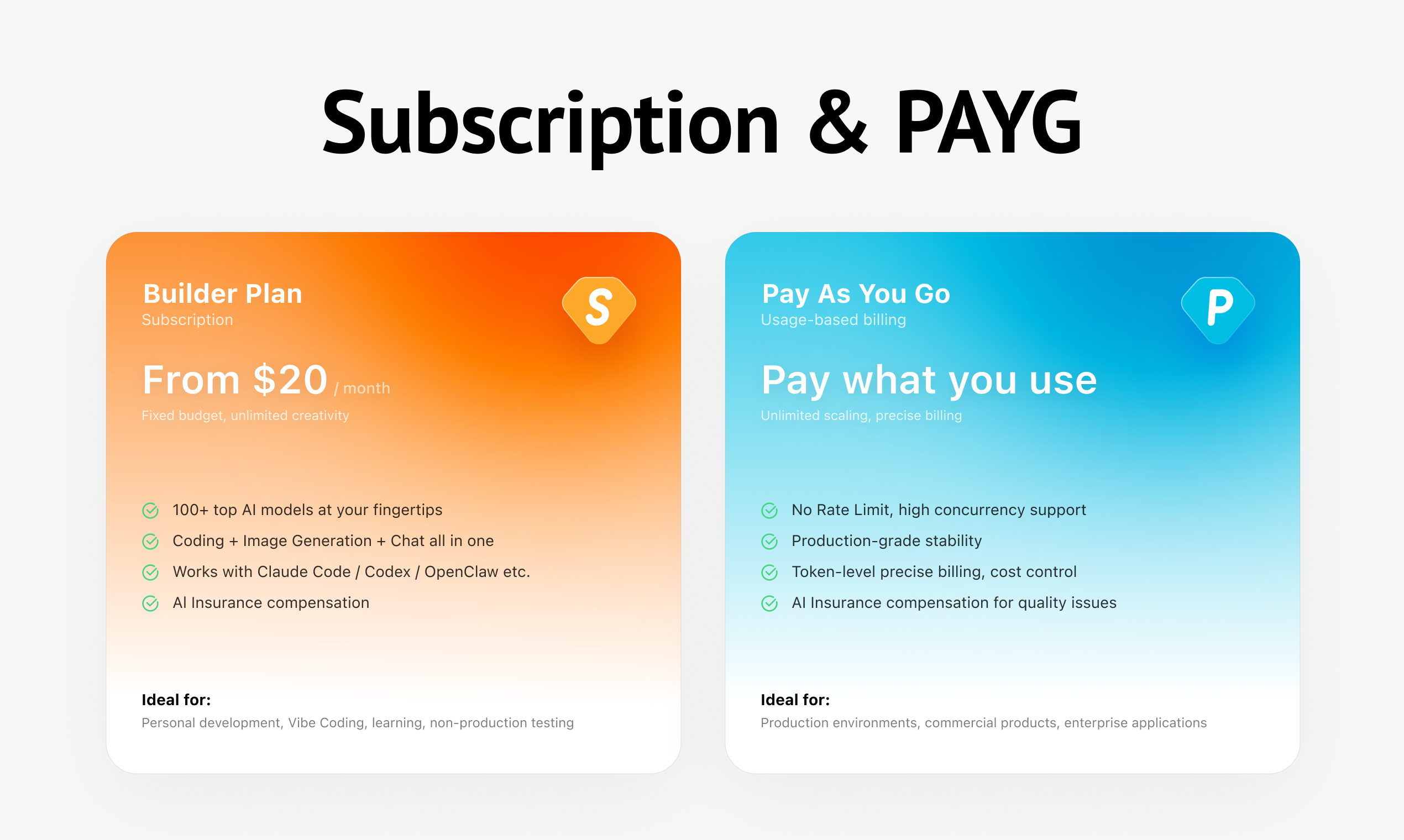

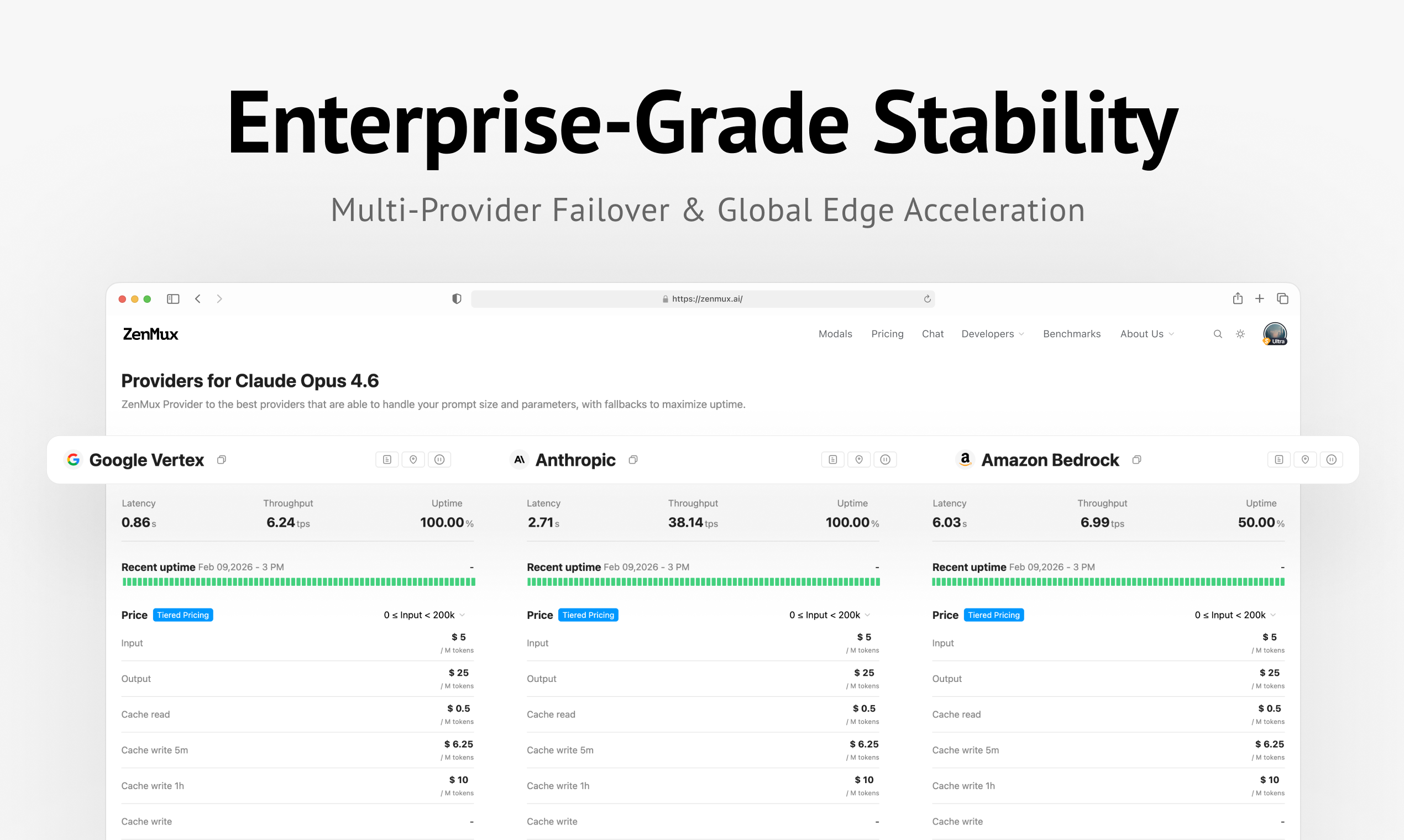

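One-line intro: ZenMux is an enterprise-grade LLM gateway. With a unified API, smart routing, and what it bills as an industry-first automatic compensation mechanism, it targets the core pain points developers face running AI applications in production: unstable model quality, unpredictable costs, and complex operations.

API

Developer Tools

Artificial Intelligence

LLM gateway

Enterprise AI infrastructure

Model routing

Automatic compensation

API aggregation

High availability

Multi-cloud redundancy

Cost optimization

Performance monitoring

Developer productivity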

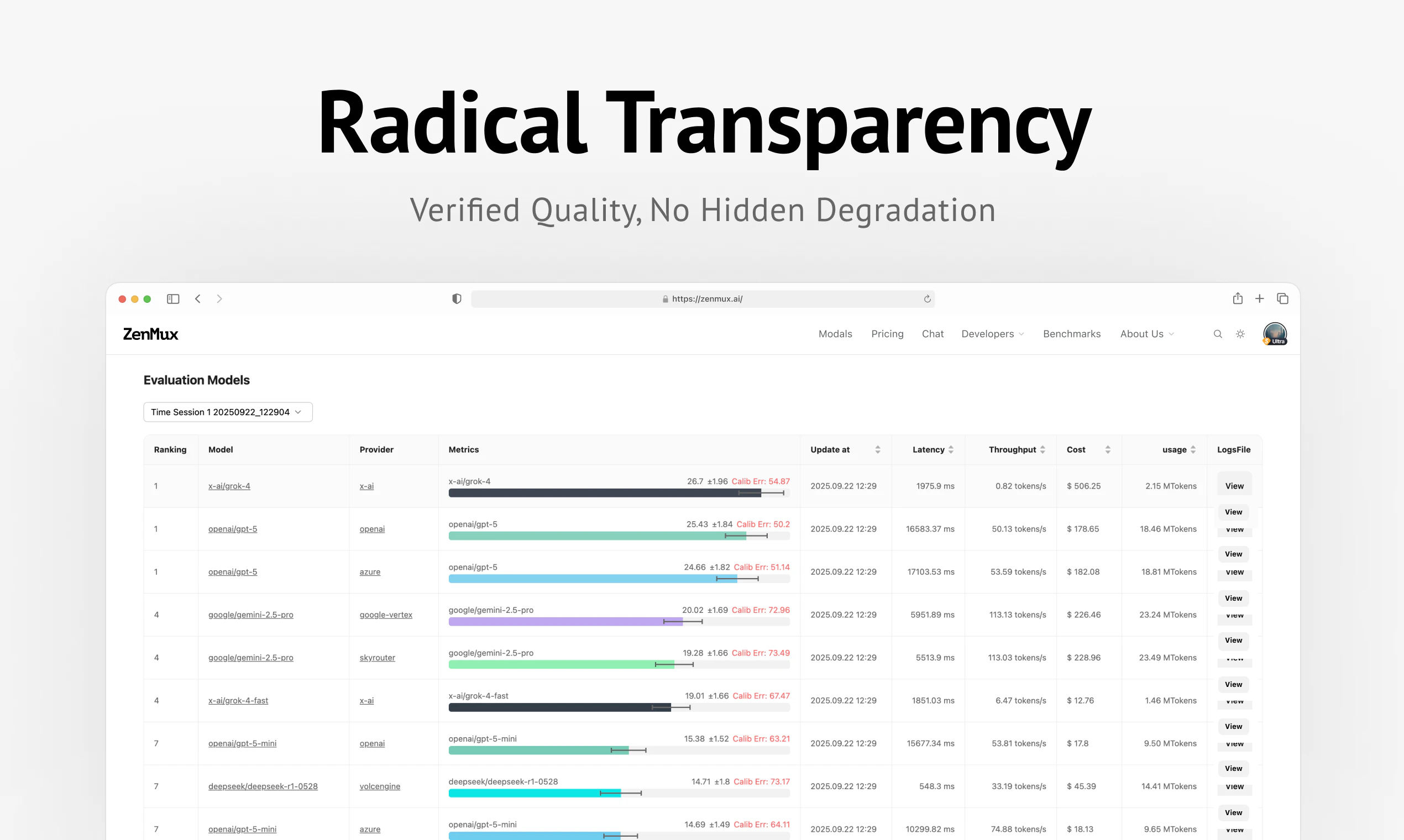

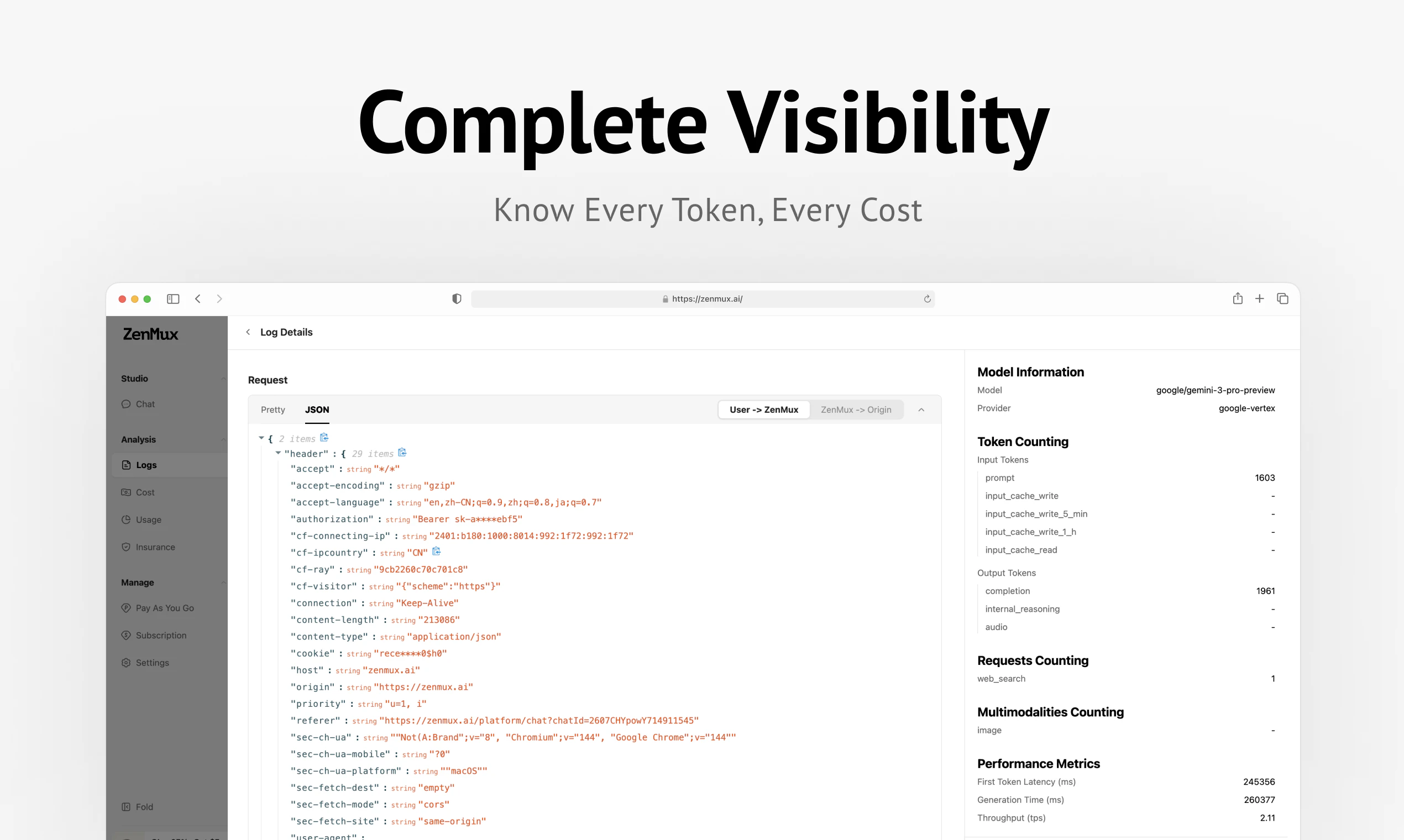

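User comment summary: Feedback is positive. Users appreciate the automatic compensation and the cost advantage, and ask how compensation is defined and audited. Key suggestions include spelling out the criteria for "low quality," providing traceable compensation data bundles, integrating observability tools such as OpenTelemetry, and publishing the details of the routing strategy.

AI Sharp Take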

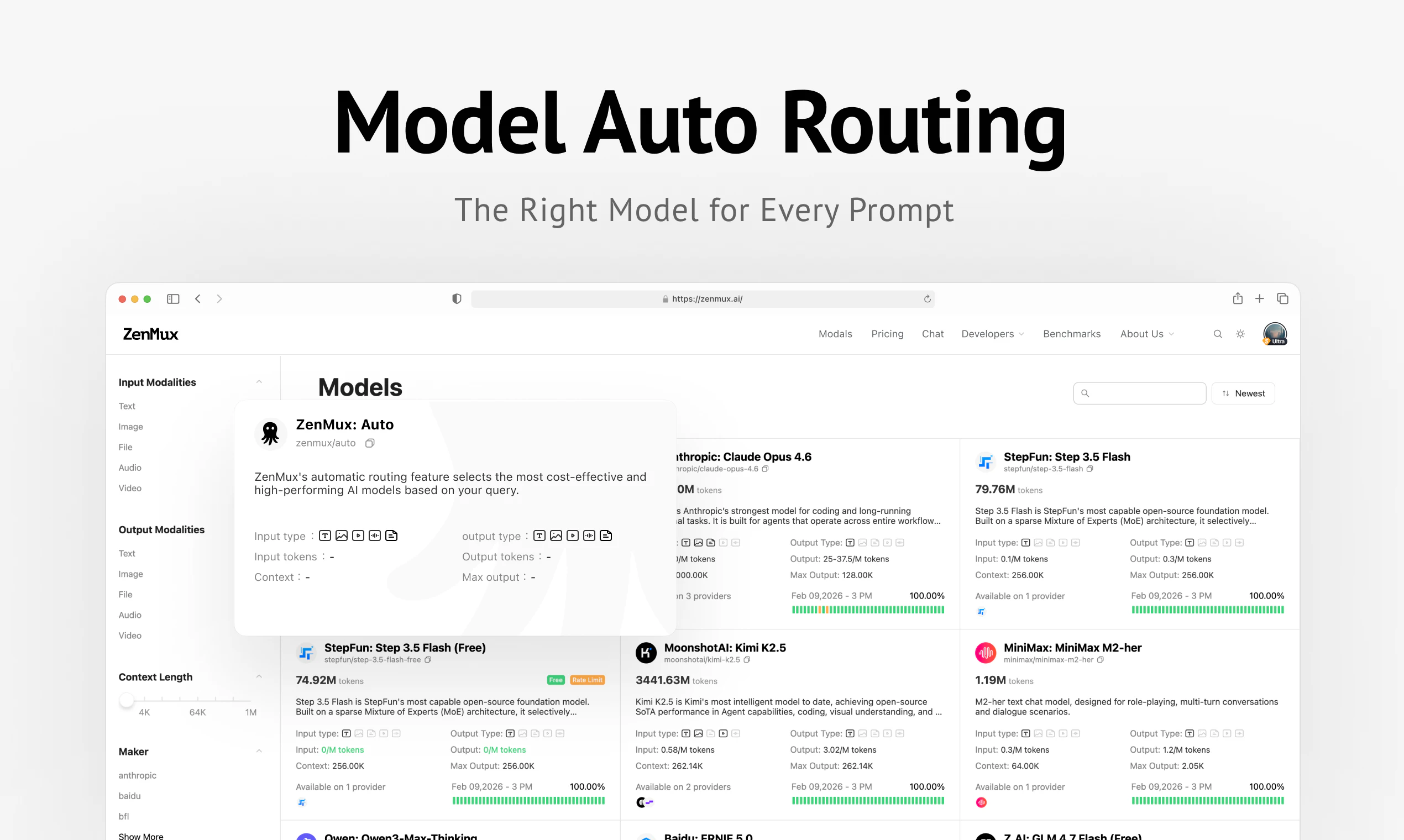

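ZenMux enters an increasingly crowded but clearly painful space: LLM gateways. Its claimed "industry-first automatic compensation mechanism" is its sharpest hook, an attempt to recast infrastructure from its traditional role as a "traffic pipe" into a "quality guarantor." The promise hits developers' most sensitive nerve: the sense of unfairness at paying for a model's gibberish and sluggish responses.

Beneath the glossy promise, however, lie serious execution risks and gray areas of definition. The pointed questions in the comments hit home: how do you define "low-quality output" objectively and reproducibly? Will compensation rulings trigger a flood of disputes and operational burden? At heart this is not a technical problem but a complex system of standard-setting, arbitration, and commercial risk. If the product cannot make its compensation rules radically transparent and the process automated, this feature could turn from a highlight into an operational quagmire.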
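One way to make "low quality" objective and reproducible is to restrict automatic compensation to machine-checkable conditions and attach a reason code to every verdict, so any party can re-run the check against the logged call. The sketch below is hypothetical: ZenMux has not published its criteria, and the 30-second latency budget, the required JSON key, and the reason codes are invented for illustration.

```python
import json
from dataclasses import dataclass


@dataclass
class CallRecord:
    request_id: str
    latency_s: float
    http_status: int
    output_text: str


# Hypothetical rules, published in advance so any party can re-run them.
MAX_LATENCY_S = 30.0
REQUIRED_KEYS = {"answer"}


def compensation_reasons(rec: CallRecord, expect_json: bool) -> list[str]:
    """Return machine-checkable reason codes; an empty list means no compensation is owed."""
    reasons = []
    if rec.http_status >= 500:
        reasons.append("UPSTREAM_ERROR")
    if rec.latency_s > MAX_LATENCY_S:
        reasons.append("LATENCY_OVER_BUDGET")
    if not rec.output_text.strip():
        reasons.append("EMPTY_OUTPUT")
    elif expect_json:
        try:
            parsed = json.loads(rec.output_text)
        except json.JSONDecodeError:
            reasons.append("INVALID_JSON")
        else:
            # Well-formed JSON must also be an object carrying the agreed keys.
            if not isinstance(parsed, dict) or not REQUIRED_KEYS.issubset(parsed):
                reasons.append("SCHEMA_MISMATCH")
    return reasons
```

Anything outside these checks (a factually wrong but well-formed answer, say) stays out of scope for automatic compensation, which is exactly the line commenters asked ZenMux to draw explicitly.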

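Beyond that, a "unified API" and "smart routing" are table stakes for today's gateways; the real barrier is how well the routing algorithm actually performs and how much data it accumulates. And while "public HLE tests" help build trust, combining general benchmarks with optimization for each customer's specific workload remains an open problem.

The real value may be what the team's reply hints at: every compensation event is a high-quality data point for reverse optimization. If these "failure cases" are systematically fed back to customers, even forming an optimization loop, ZenMux could move from "cost insurer" to "quality collaborator" and build a deeper moat. For now it is a bold, developer-friendly value proposition, but its long-term success depends on turning this risky gamble into a scalable, trustworthy quality-assurance system.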

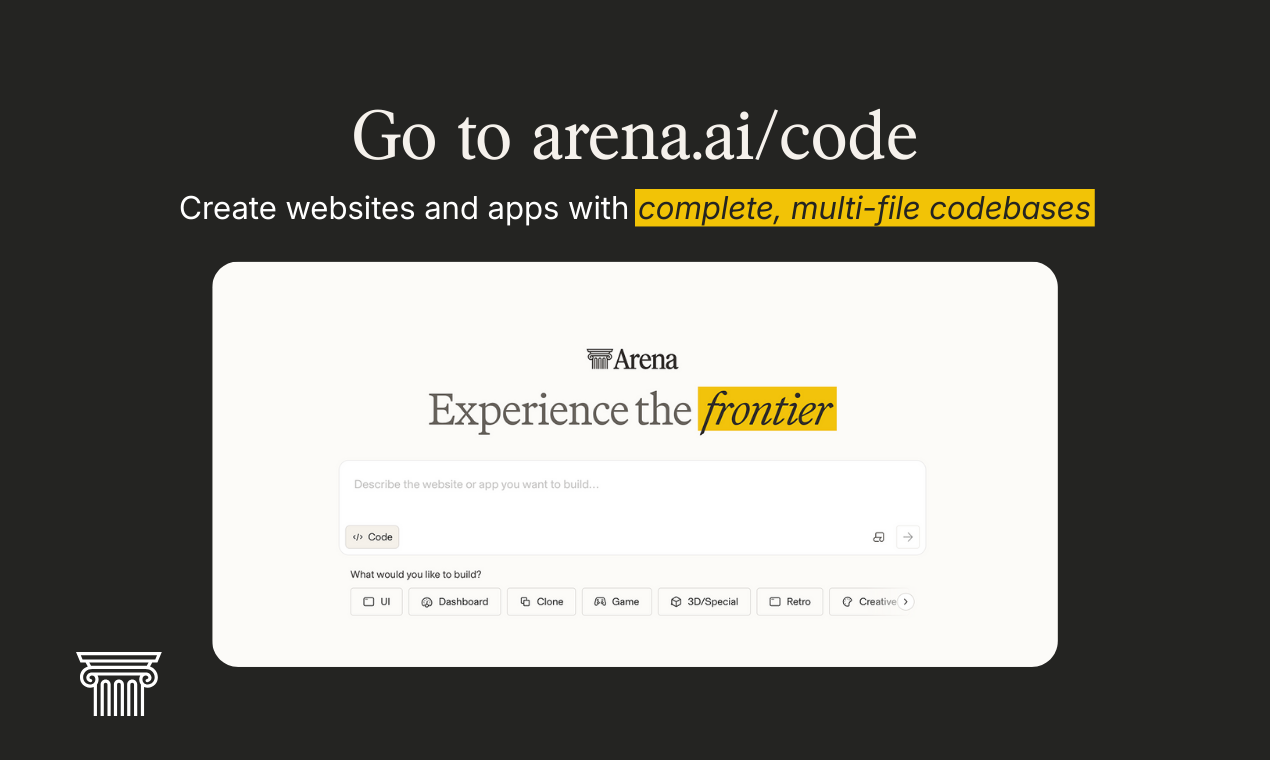

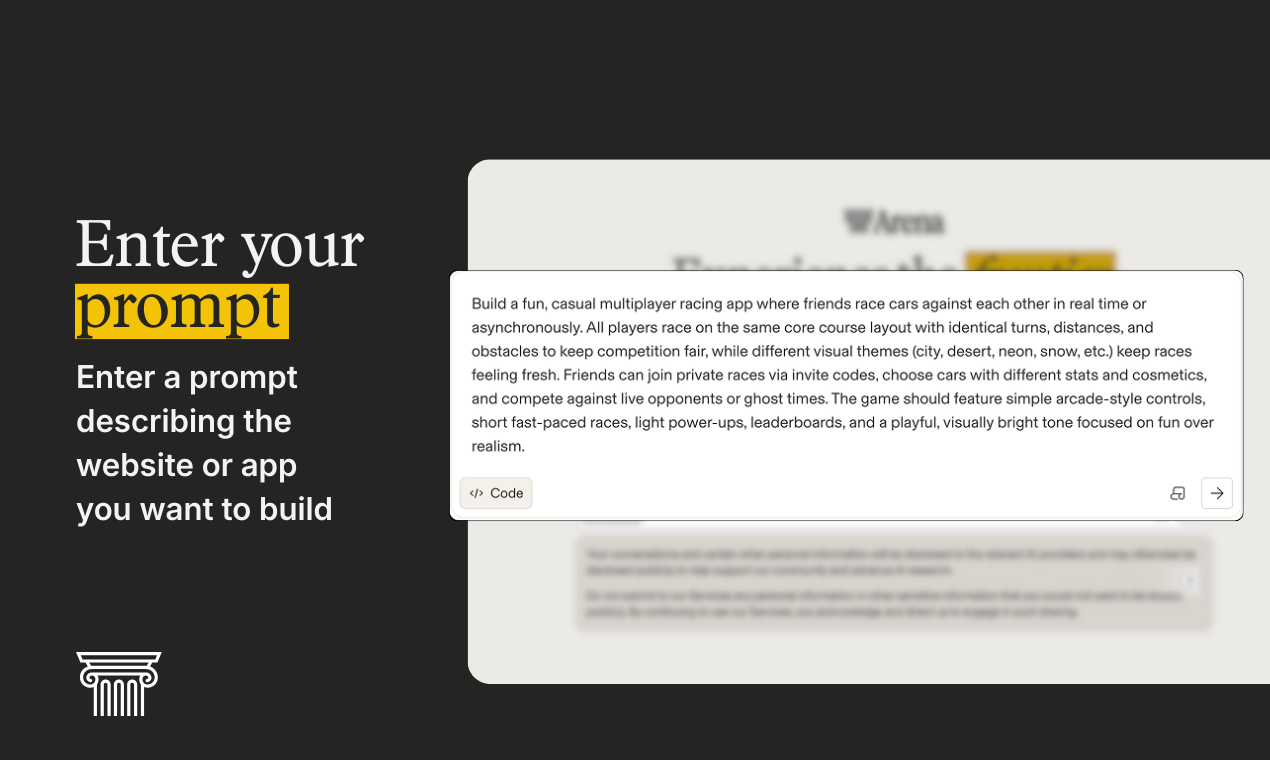

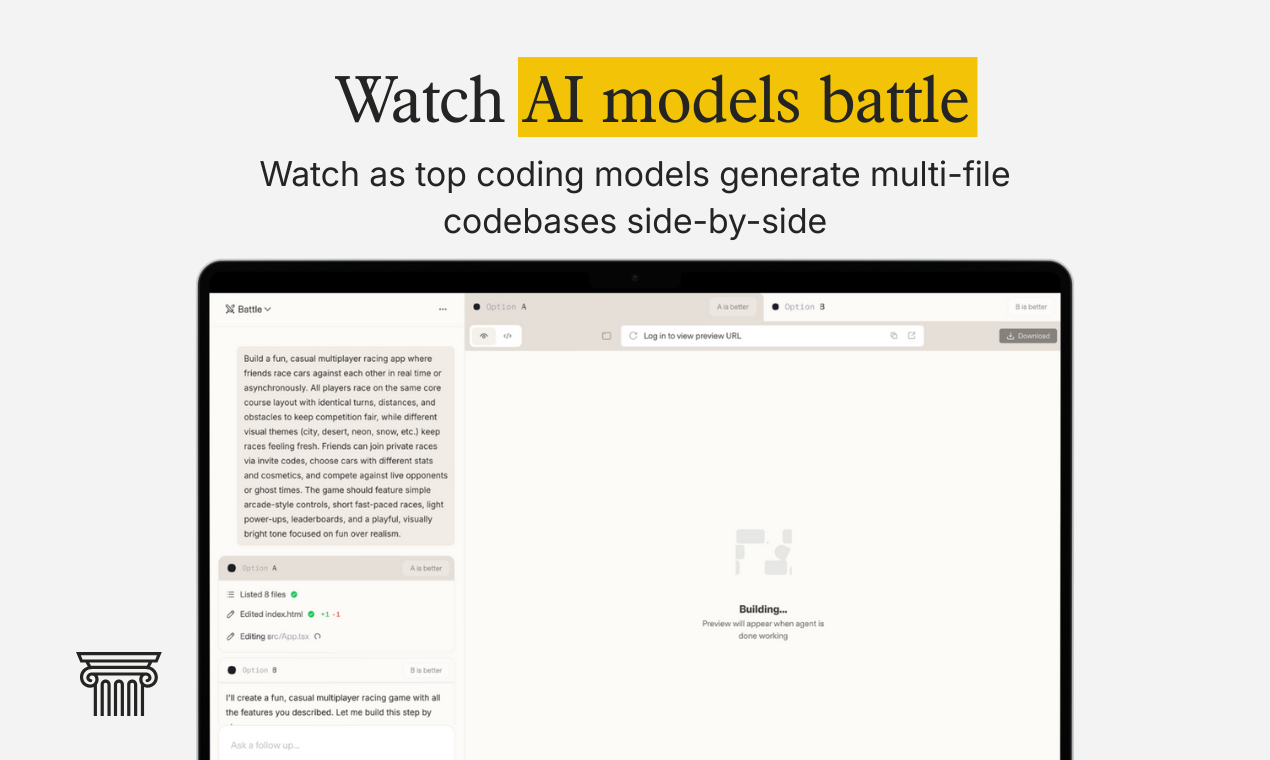

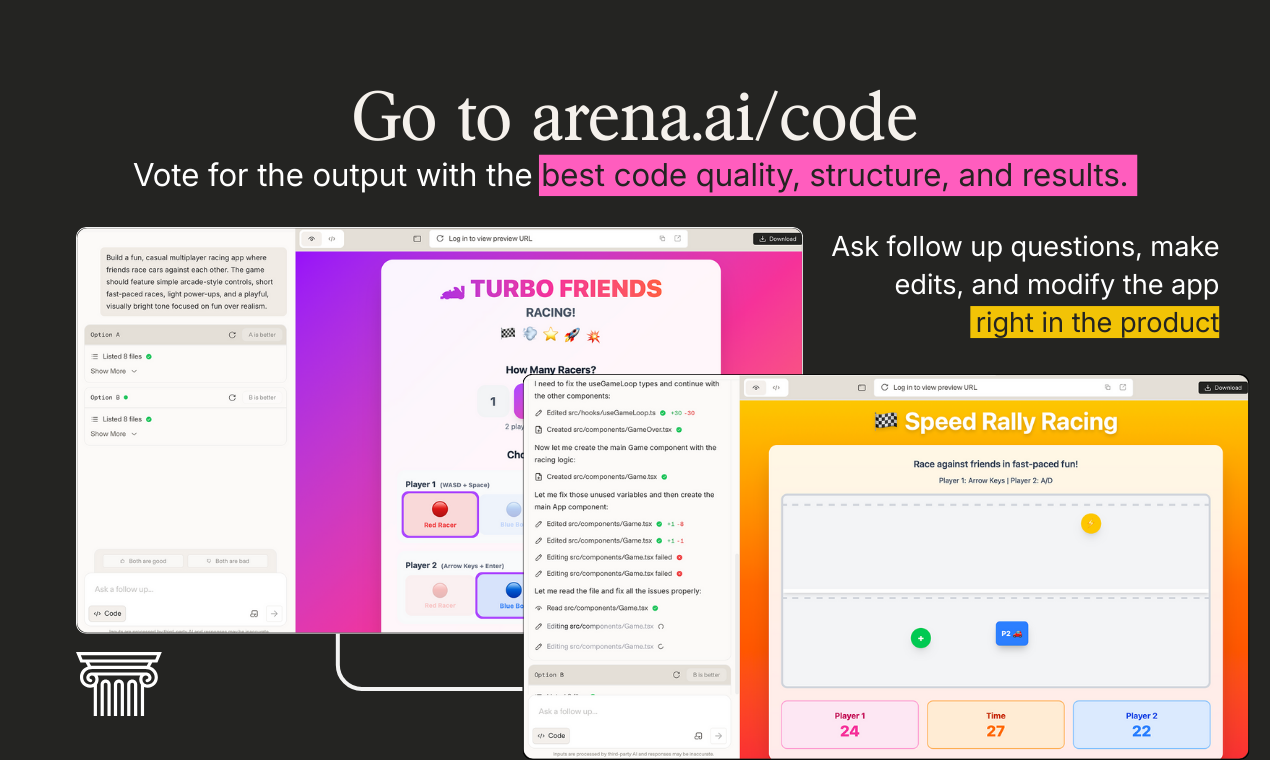

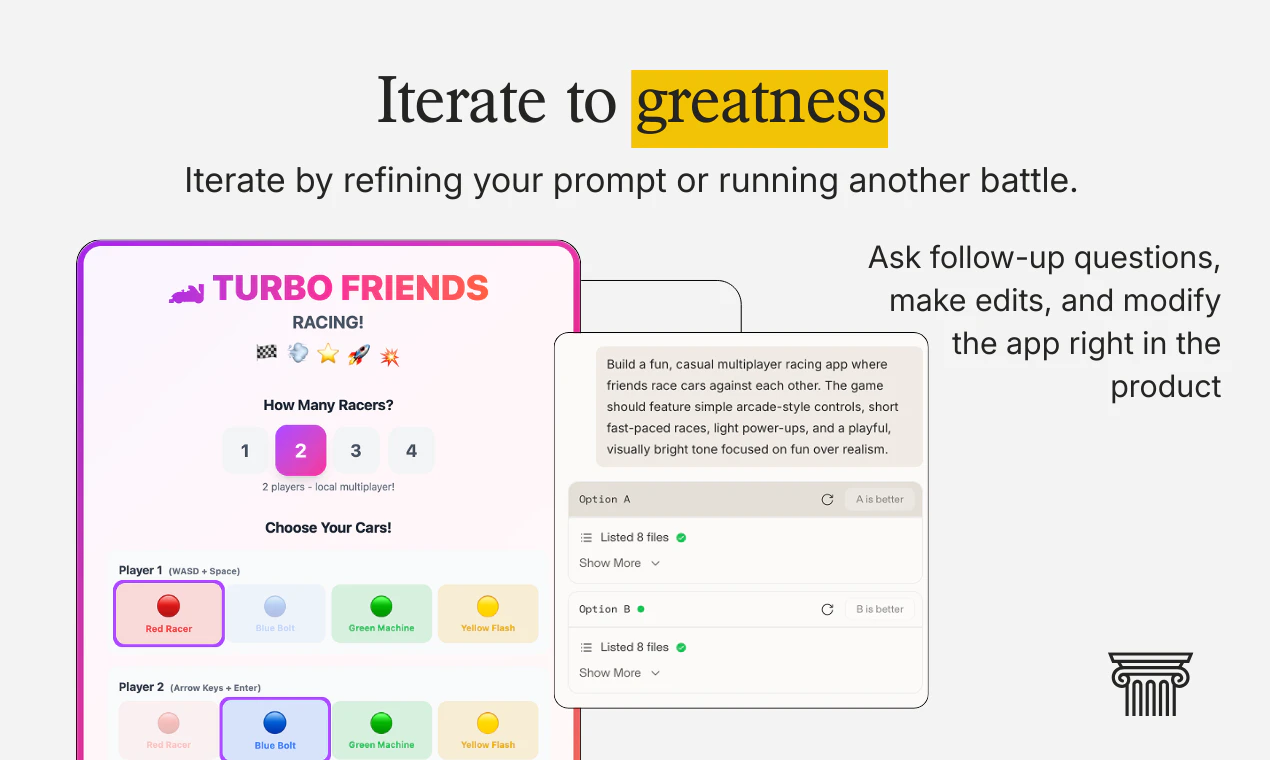

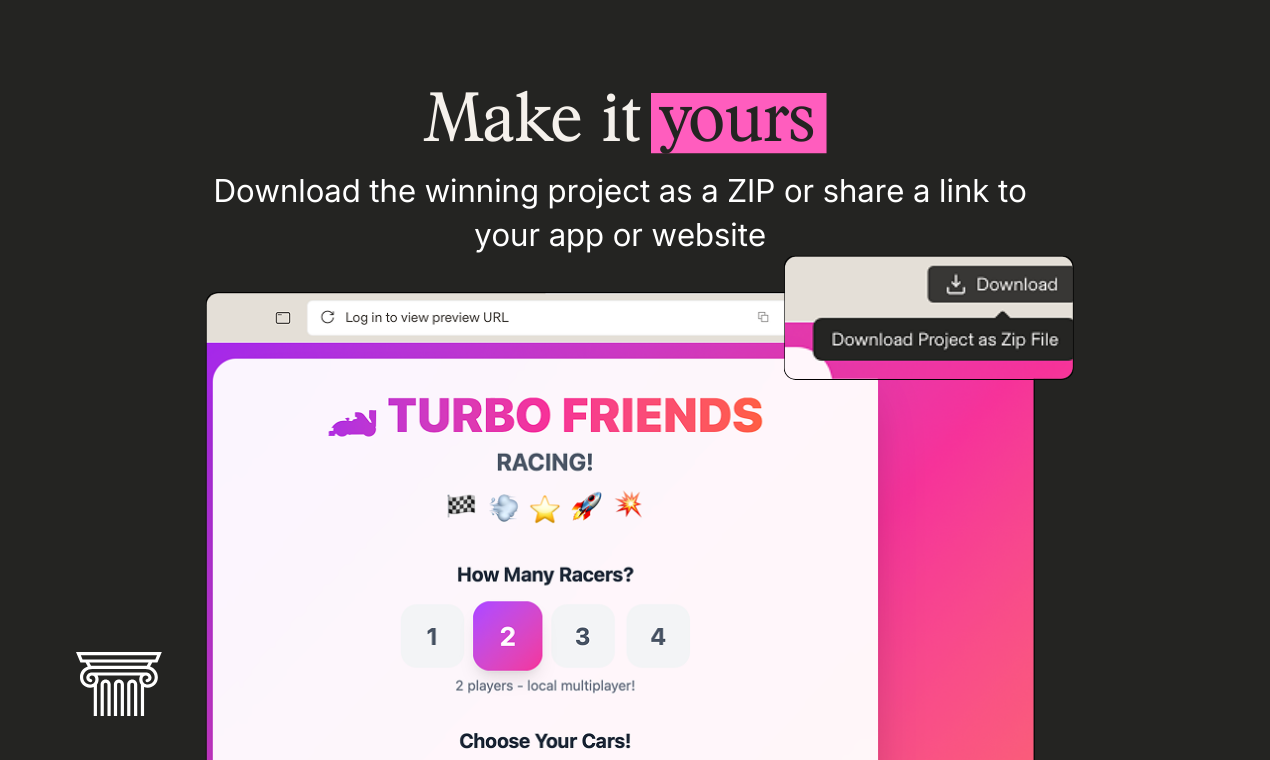

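One-line intro: Code Arena lets developers enter a prompt once and compare, side by side, the multi-file apps or websites that several top AI coding models generate in parallel, addressing the difficulty of evaluating and choosing the best AI coding output for real, structured projects.

Software Engineering

Developer Tools

Artificial Intelligence

AI coding tool

Multi-file code generation

Model comparison and evaluation

Developer tools

Code export

Project scaffolding

Productivity tool

Free tool

User comment summary: Users affirm the core value of multi-file generation and side-by-side comparison but widely raise the problem of judging the results: without live previews it is hard to tell which output is better. They ask about iterative workflows and objective evaluation criteria (cost, quality, benchmarks), and suggest adding dependency visualization and structured context support to make comparisons fairer and more useful.

AI Sharp Take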

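Code Arena targets the core contradiction of AI-assisted programming today: the huge gap between the toy-grade code of demos and the system-grade code real engineering requires. Its "one prompt, many models generating multi-file projects in parallel" design does hit the weakness of most single-file AI coding tools, shifting the cost of choosing from serial trial and error to parallel comparison.

For now, though, the product looks more like a clever "demo comparator" than a deep "workflow integrator." The user comments are spot on: without code previews, comparison stalls at the first step; without clear evaluation dimensions and benchmarks (code quality, performance, cost, architectural soundness), "comparison" easily degenerates into surface impressions; and as a standalone tool, how it fits into the developer's full loop of generating, iterating, debugging, and deploying remains unclear. This suggests that at this stage it suits quick probing and selection of model capabilities better than sustained, in-depth development.

The real challenge is that reliability and production-grade structure in multi-file generation cannot be achieved by simply stacking files. Models differ in how they interpret architectural patterns, file dependencies, and interface contracts, and may produce code that looks alike but behaves differently, adding hidden costs to later integration and maintenance. To move from "fun comparison" to "essential tool," the product must build a more rigorous quantitative evaluation system and seriously address the understandability (e.g., dependency visualization) and iterability (two-way sync with local IDEs and GitHub) of the generated code. Otherwise it may merely move the confusion from "which model to choose" to "which codebase to understand."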
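Dependency visualization, which commenters asked for, needs little machinery for a first pass. As a hedged sketch that assumes the generated project is Python (Code Arena's export format is not described here), the functions below build an intra-project import graph with the standard `ast` module and render it as Graphviz DOT, so two models' outputs can be compared structurally rather than by eye. The function names are hypothetical.

```python
import ast


def local_import_graph(files: dict[str, str]) -> dict[str, set[str]]:
    """Map each module in a generated project to the project modules it imports.

    files: {"app/main.py": source, ...}. Relative imports (from . import x) are skipped in this sketch.
    """
    modules = {}
    for path in files:
        if path.endswith(".py"):
            name = path[:-3].replace("/", ".")  # "app/main.py" -> "app.main"
            if name.endswith(".__init__"):
                name = name[: -len(".__init__")]  # a package's __init__ is the package itself
            modules[name] = path
    graph: dict[str, set[str]] = {}
    for mod, path in modules.items():
        deps: set[str] = set()
        for node in ast.walk(ast.parse(files[path])):
            if isinstance(node, ast.Import):
                names = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
                # "from app import db" may name a submodule, so try both forms.
                names = [node.module] + [f"{node.module}.{a.name}" for a in node.names]
            else:
                continue
            deps.update(n for n in names if n in modules and n != mod)
        graph[mod] = deps
    return graph


def to_dot(graph: dict[str, set[str]]) -> str:
    """Render the graph as Graphviz DOT text."""
    lines = ["digraph deps {"]
    for mod in sorted(graph):
        for dep in sorted(graph[mod]):
            lines.append(f'  "{mod}" -> "{dep}";')
    lines.append("}")
    return "\n".join(lines)
```

Running it over each model's output and diffing the edge sets is a cheap way to spot the "looks alike, wired differently" problem before reading any code.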

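One-line intro: An ultra-fast AI model for real-time coding collaboration that gives ChatGPT Pro users 15x faster generation and a 128K context window, meeting developers' core need for low latency and high responsiveness in interactive programming.

Developer Tools

Artificial Intelligence

Development

AI coding assistant

Real-time collaboration

Low-latency model

Code generation

Developer productivity tool

Large context window

Research preview

Interactive programming

Lightweight edits

ChatGPT Pro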

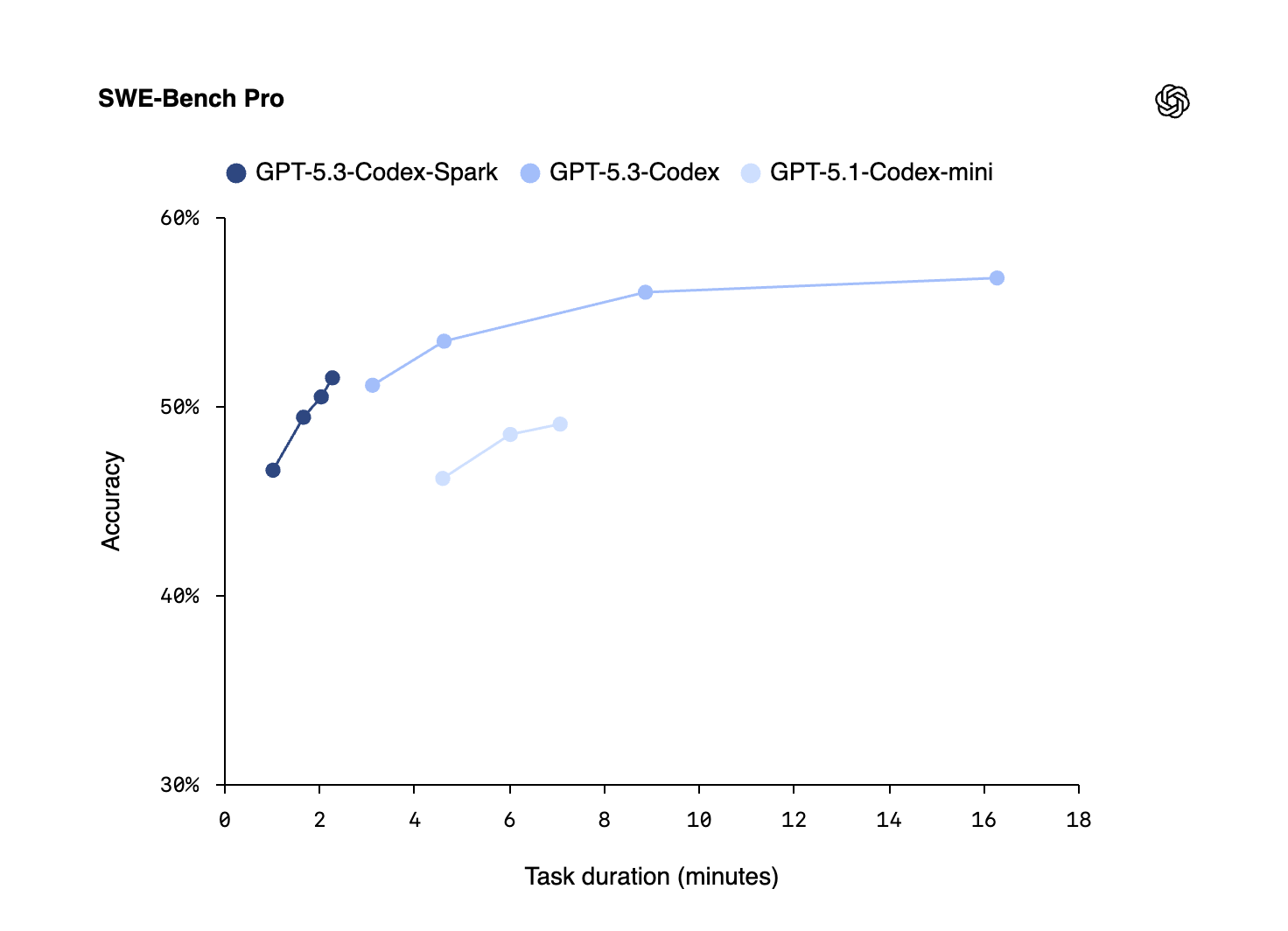

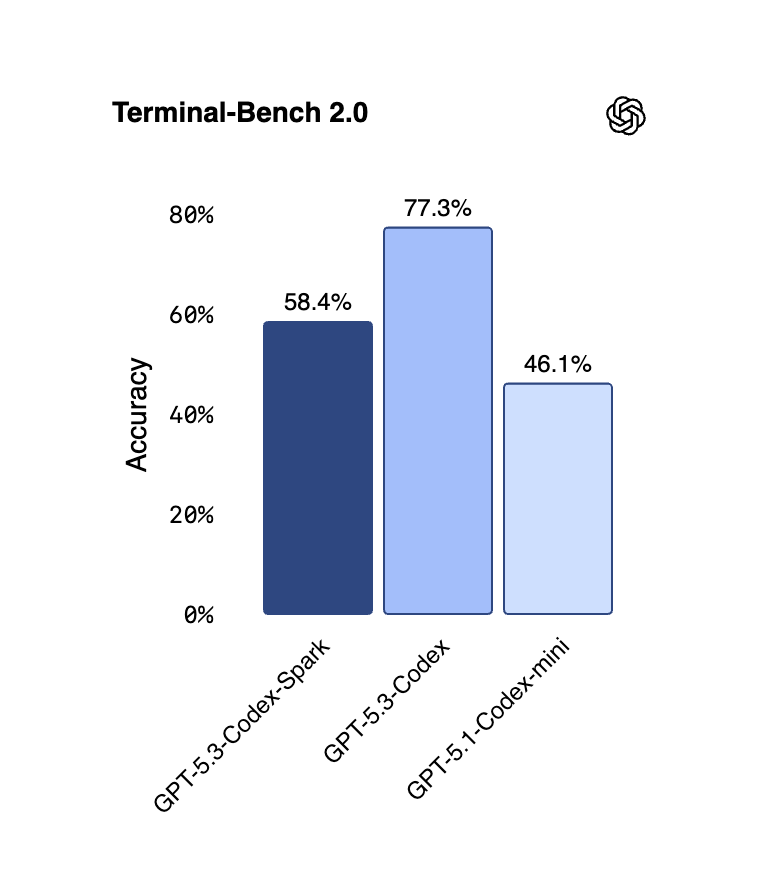

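User comment summary: Users value the 128K context and real-time collaboration for their development workflow and praise the lightweight style for speeding up iteration. Concerns center on whether an SDK will be offered for integration into build pipelines and on the unannounced pricing, which may slow adoption. It is seen as a competitor to similar models, its core strength being quick fixes for obvious code problems without burning too many tokens.

AI Sharp Take

The release of GPT-5.3-Codex-Spark is essentially OpenAI carving out a specific scenario in the AI coding assistant race and attacking it head-on. Rather than keep grinding on ultimate coding intelligence, it seized on a more fundamental need of high-end developers: interaction fluency. Its tagline that "latency matters as much as intelligence" points directly at the core weakness of today's heavyweight models in real-time pair programming, live debugging, and similar scenarios: long thinking times ruthlessly break a developer's flow.

The "lightweight working style" described in the launch is another strategic choice worth pondering. Defaulting to minimal, targeted edits instead of automatically running tests or writing long explanations is not a compromise in capability but an insight into real workflows. It positions itself as an "agile copilot" that firmly hands control back to the developer and accelerates the propose, feedback, revise loop through near-instant responses. In practical productivity this may beat generating a seemingly perfect but complex block of code that takes a long time to review and debug.

Its challenges, however, are equally clear. First, as a preview add-on for ChatGPT Pro, its access barrier and future standalone pricing are unknown, and that directly determines whether it can reach enterprise CI/CD pipelines. Second, commenters comparing it with competitors such as Windsurf show that the market already sees the trend toward specialized models. Spark's strengths are speed and interactivity, but for "hardcore" tasks that need deep reasoning and complex system design, users may still turn to heavier models. Its real value may lie not in replacing every coding AI but in redefining the paradigm of human-AI collaboration, turning AI from a "thinking consultant" into a "responsive partner" and opening a niche where speed and fluency are the core competitive edge. Success depends on whether OpenAI can turn this extreme experience into a scalable product and ecosystem.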

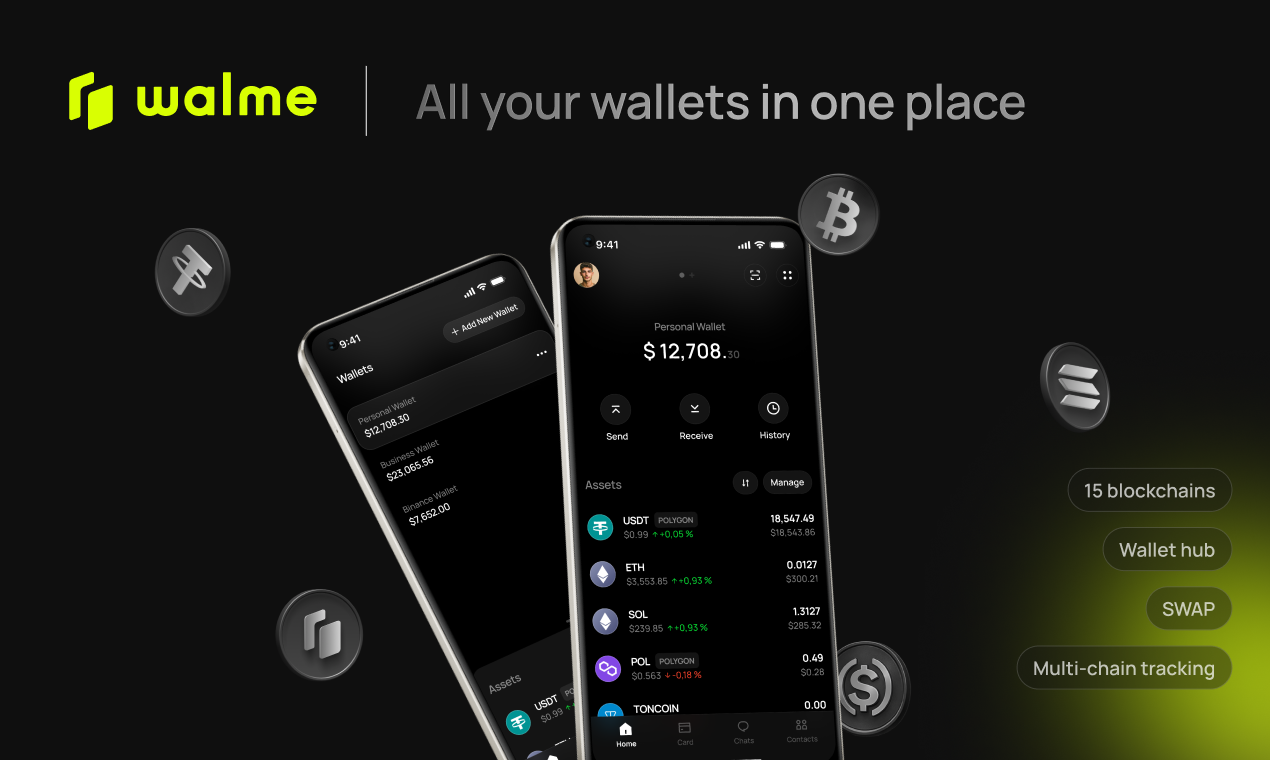

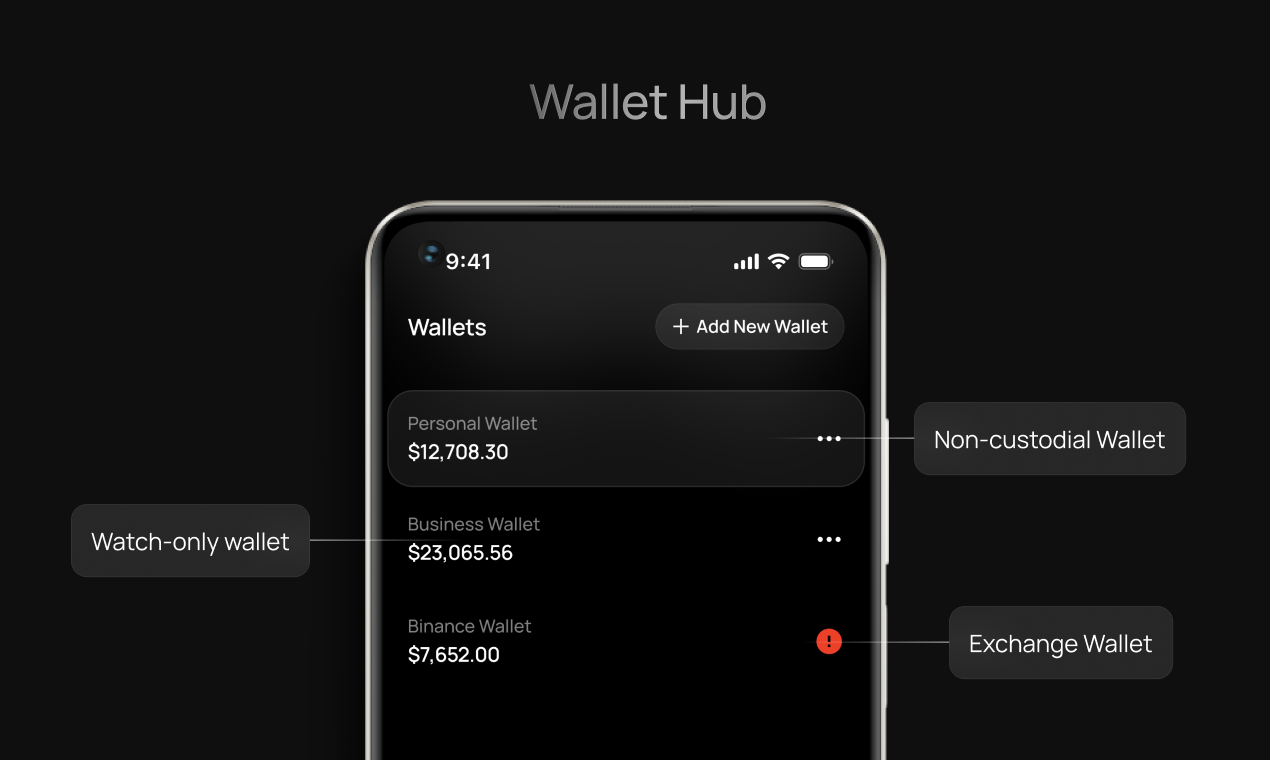

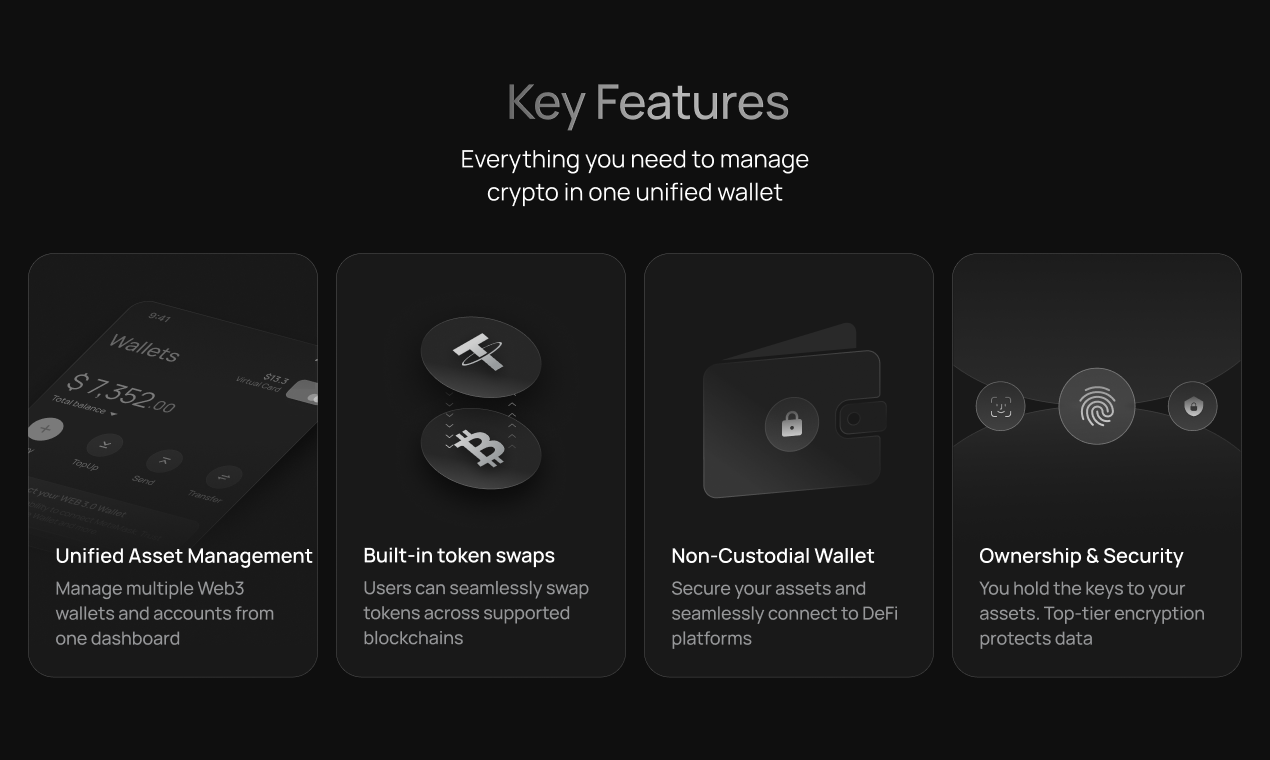

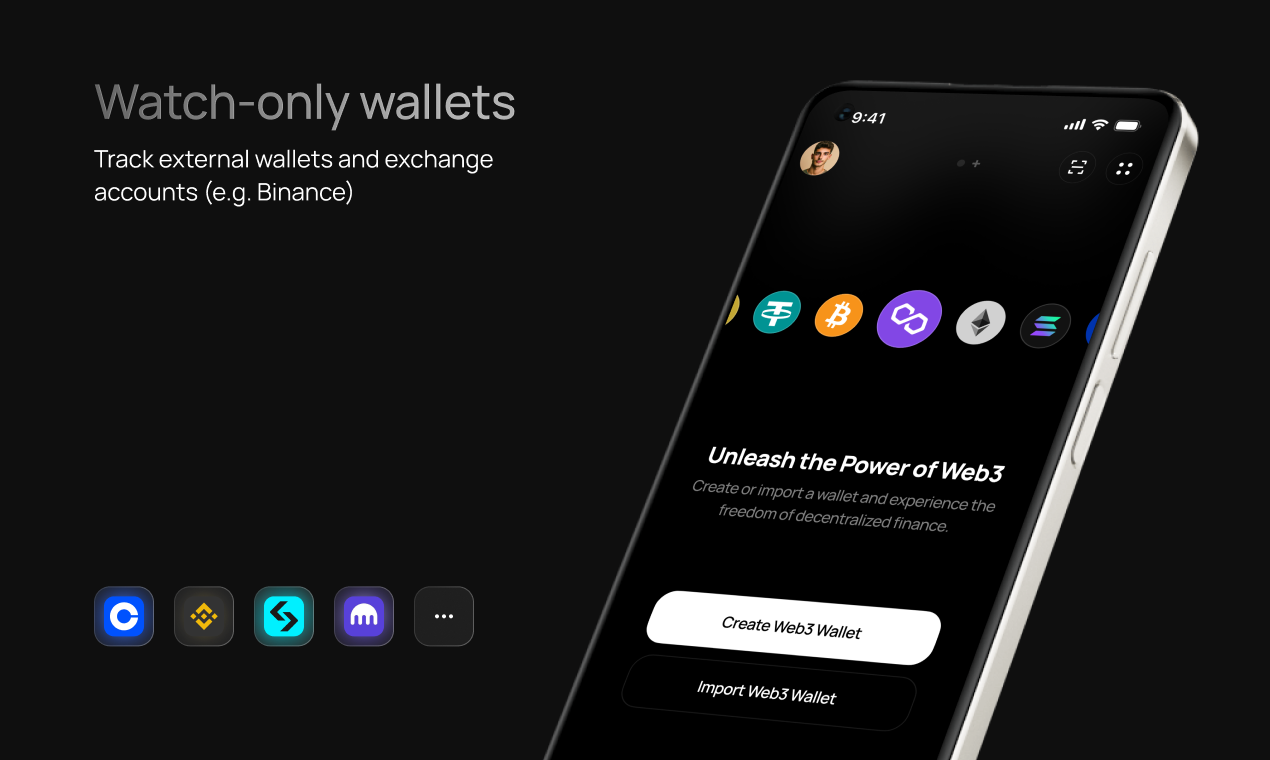

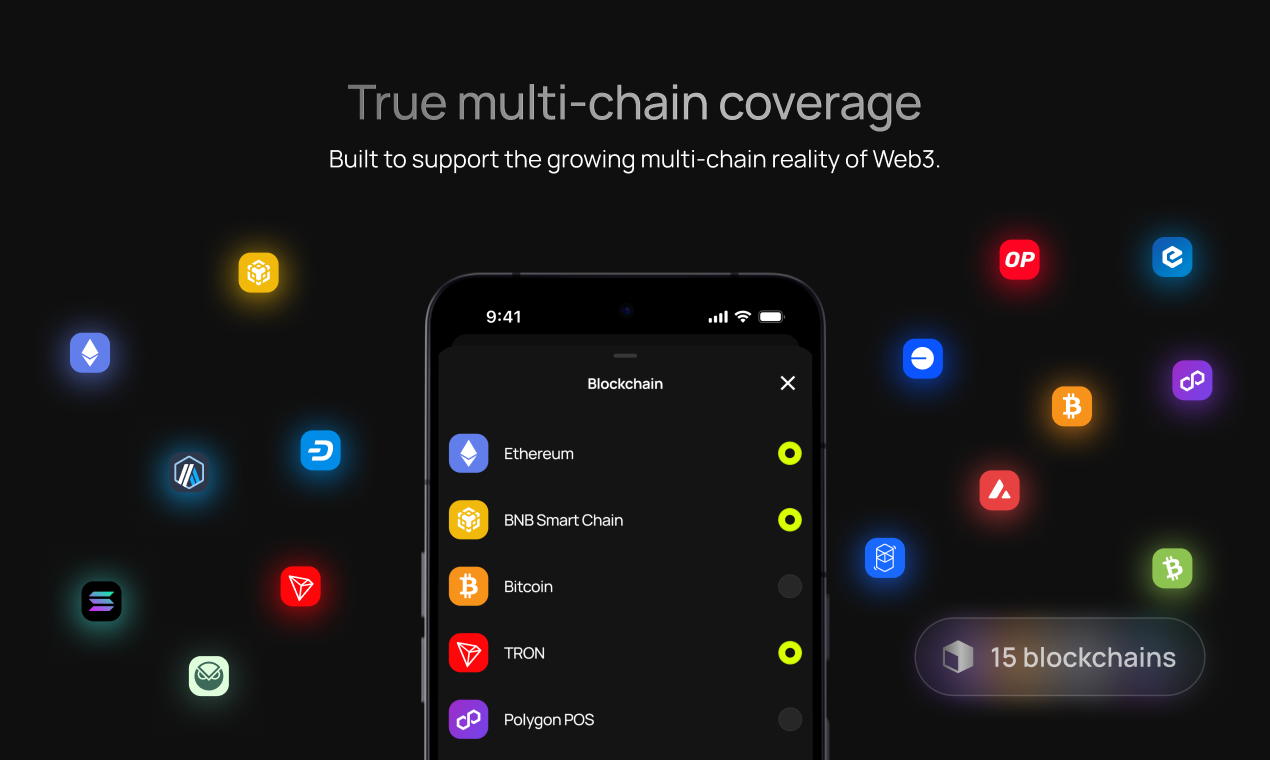

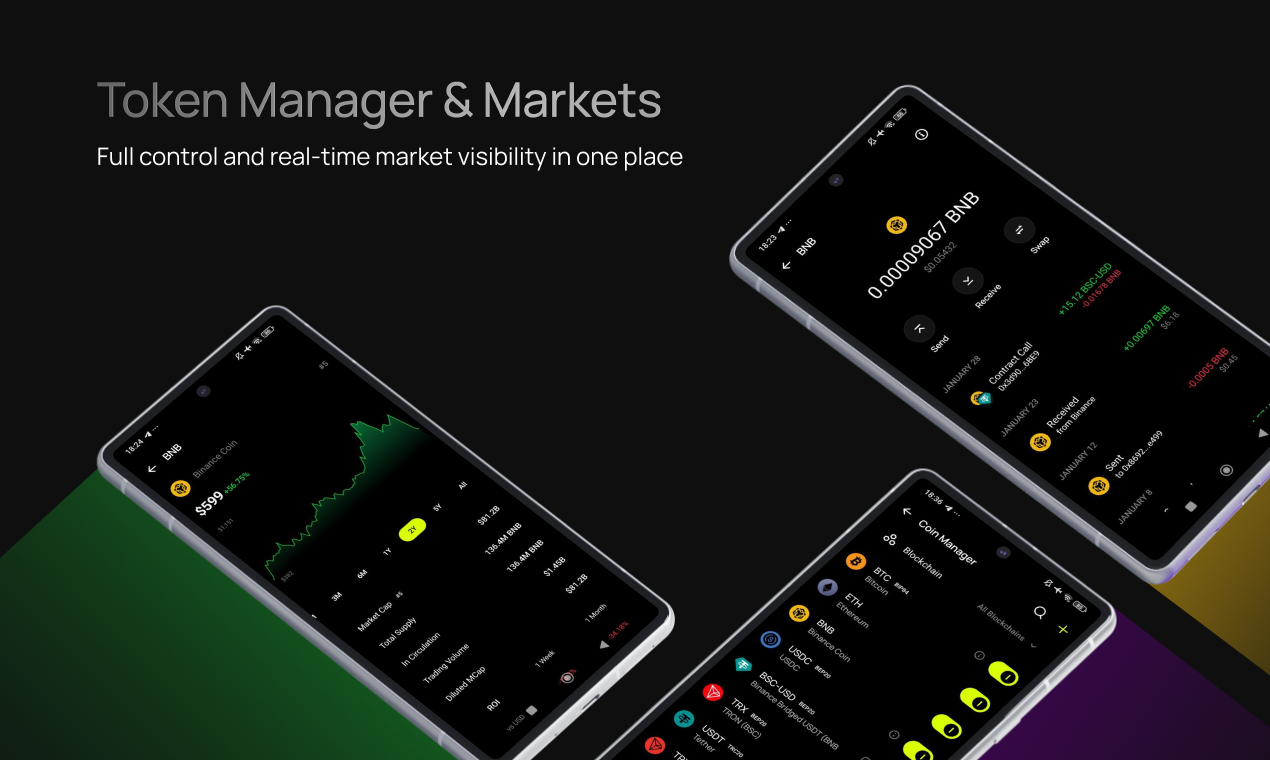

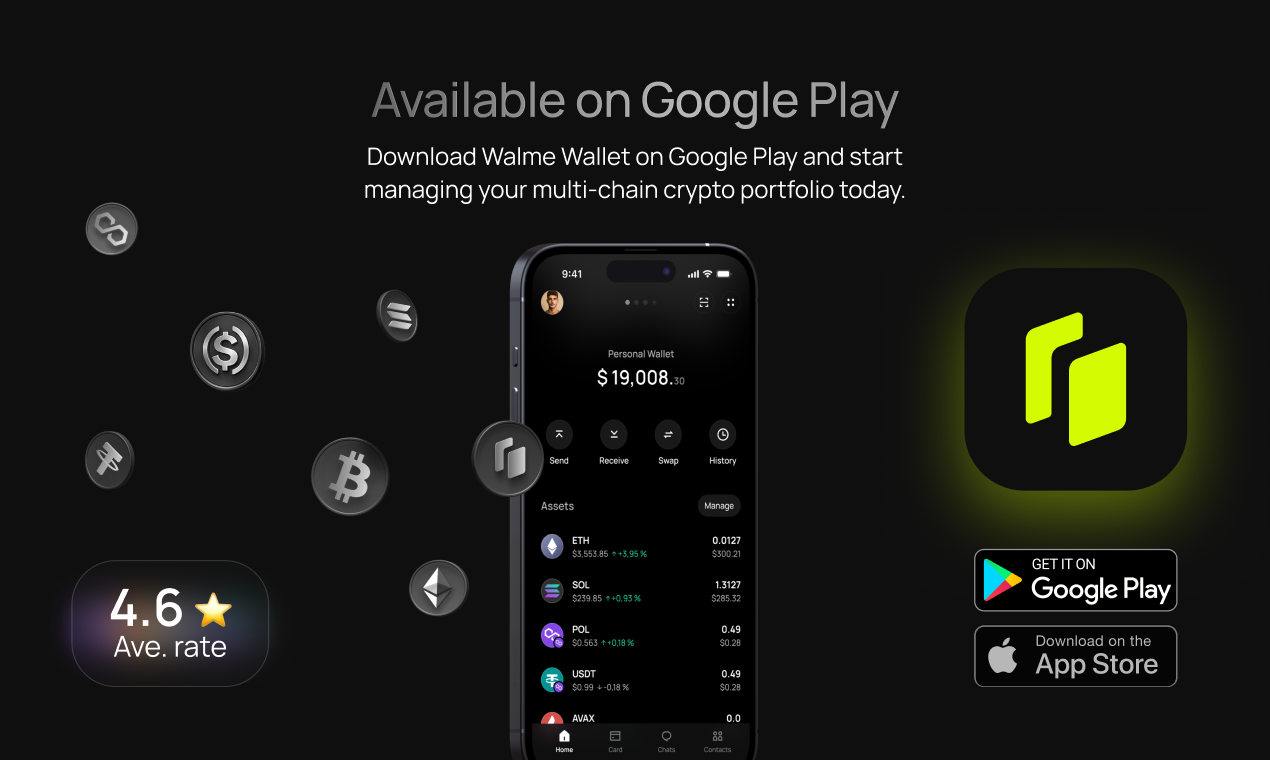

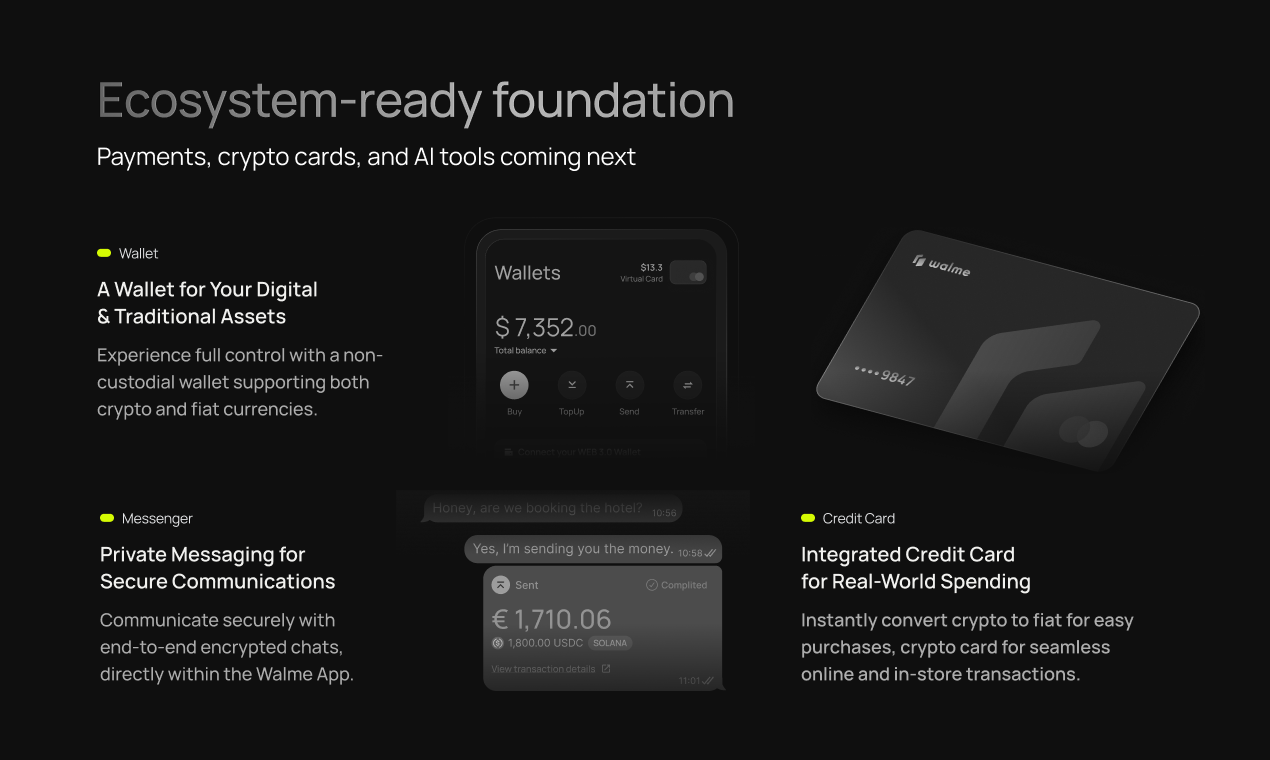

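One-line intro: Walme Wallet is a unified Web3 wallet hub that aggregates multiple non-custodial wallets, watch-only addresses, and exchange accounts and includes built-in swaps, sparing users who manage scattered crypto assets the tedium of constantly switching platforms.

Android

Crypto

Web3

Cryptocurrency

Web3 wallet

Asset aggregation

Non-custodial

Multi-chain management

Token swaps

Crypto card

Decentralized messaging

Portfolio tracking

Android app

User comment summary: Users like the unified-management concept and the design, and ask about the iOS release date, swap limits, identity verification, and how it differs from competitors. The team replied in detail, naming ecosystem integration (crypto card, AI assistant, decentralized messaging) as the core differentiator and promising to prioritize data accuracy over breadth of features.

AI Sharp Take

Walme Wallet's ambition goes well beyond "yet another aggregator wallet." Its real value lies in trying to turn crypto from "assets to manage" into "assets to use": by integrating a crypto debit card and built-in messaging (with on-chain transfers), it blurs the line between crypto storage, everyday spending, and social interaction. This targets the core obstacle to mass Web3 adoption: assets isolated from the real world.

Its challenges are just as sharp. First, an "everything app" faces the double risk of a bloated experience and regulatory exposure, especially when it combines finance with messaging. Second, the team's "strategic delay" on mature identity layers such as ENS, while prudent, may cost it the chance to build network effects early. Experienced users' questions about how it differs from Zerion, Rainbow, and similar products point to the essence of competition in this space: once basic wallet aggregation has become commoditized, **the real moat is creating unique use cases for assets while guaranteeing the absolute reliability of cross-chain data aggregation**. Walme's crypto card and messaging are highlights, but it must prove the integrated experience is smooth and secure before it can rise from "feature stacking" to "ecosystem synergy."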

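One-line intro: A macOS app that deploys the OpenClaw AI agent locally in one click, removing the complex configuration and security pitfalls that keep users from this powerful AI tool.

Productivity

Open Source

Artificial Intelligence

AI agent tool

macOS app

One-click deployment

Runs locally

Open-source software

Privacy and security

Developer tools

Lower barrier to entry

OpenClaw ecosystem

User comment summary: Users widely praise how drastically it simplifies installing and configuring OpenClaw, making it "drag and drop." Feedback centers on its value in lowering the technical barrier; the founder sharing the product vision and the rapid development story; and technical users asking about low-level details such as dependency updates, secure packaging, and permission control.

AI Sharp Take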

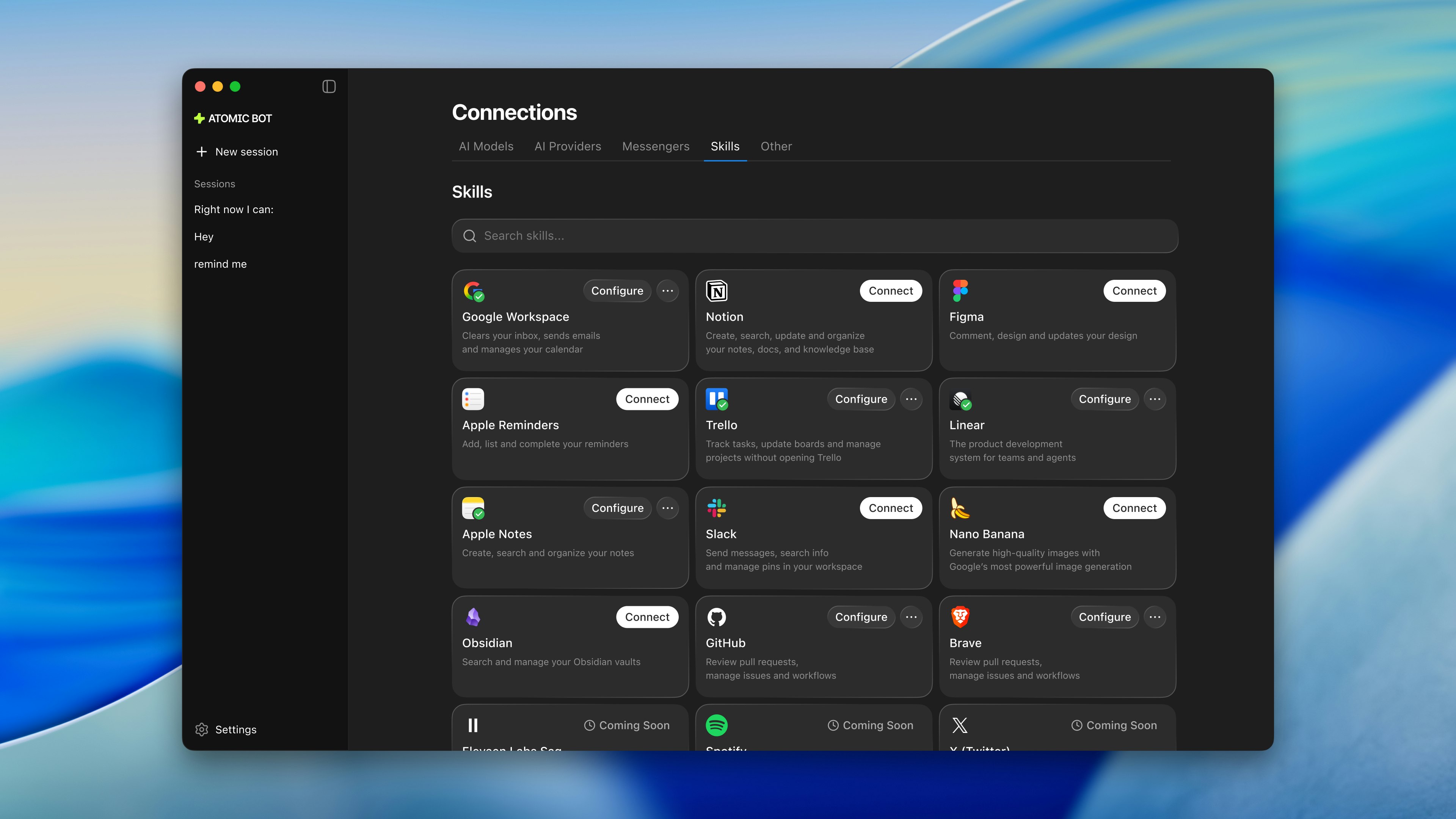

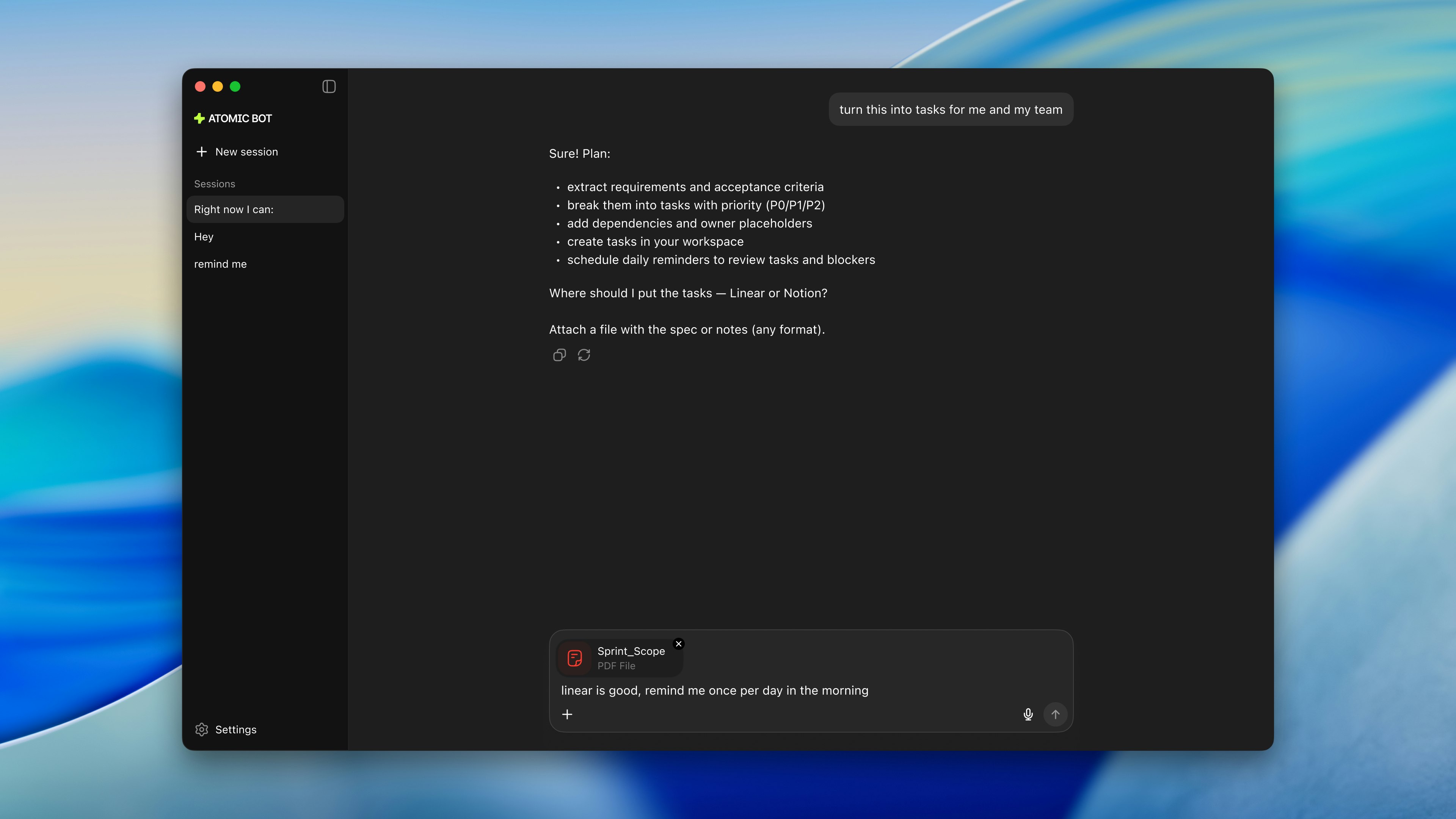

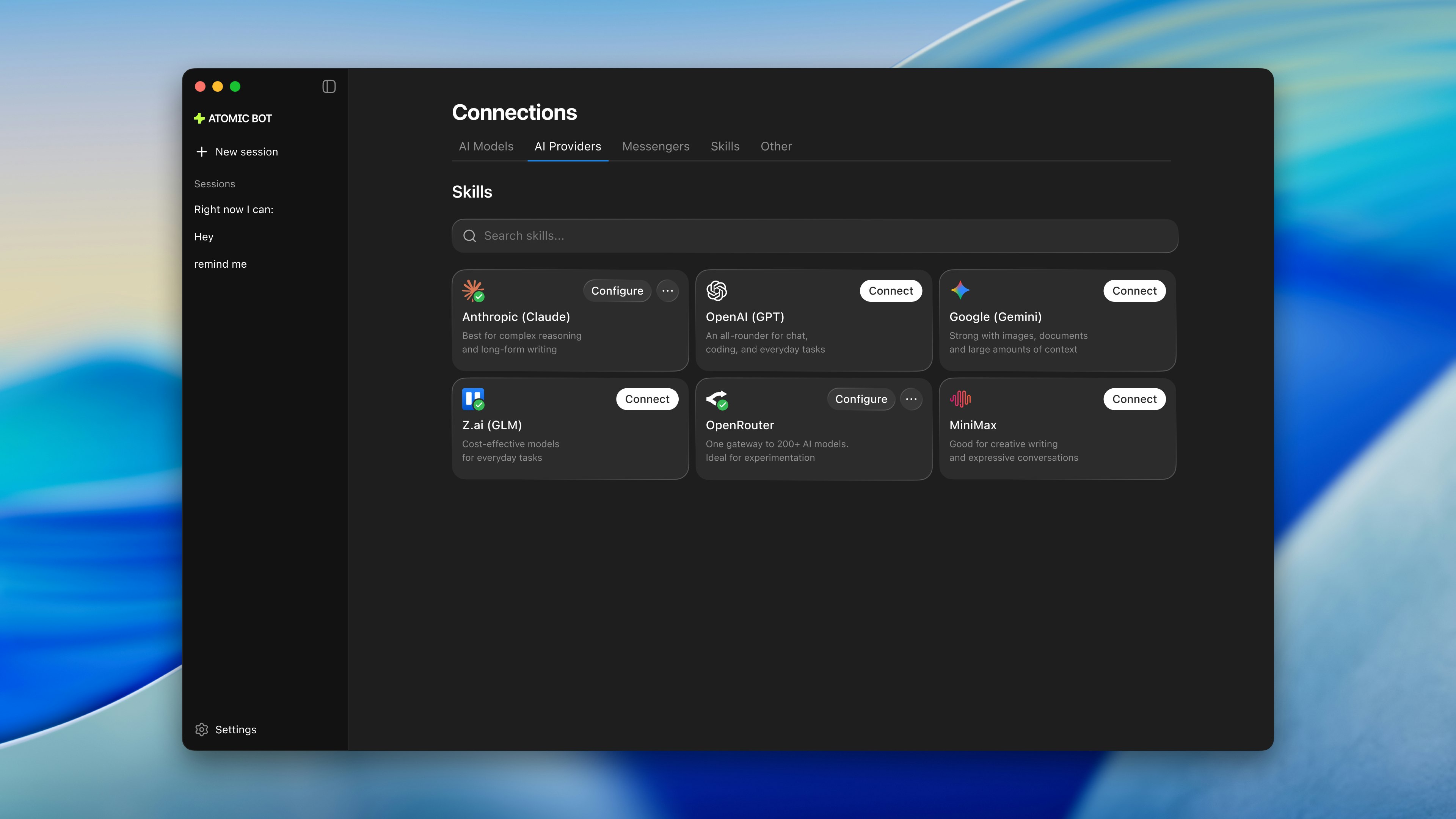

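At its core, Atomic Bot tries to build a simple bridge between cutting-edge, decentralized, high-barrier AI capabilities and ordinary users' plain wish for something that works out of the box. Its real value is not technical innovation but a precise rebuild of the experience. It pinpoints a common malady of today's AI tools, especially open-source AI agents: powerful capabilities locked behind intimidating setup that shuts out many less hardcore developers.

Its positioning is clear and sharp: rather than add capabilities, subtract process. By wrapping, packaging, and one-click running, it cuts the cognitive and operational cost of "using an AI agent" to a minimum and goes straight at the key bottleneck of mass adoption. The founding team carried over the "simplify complex technology" product thinking it honed on a consumer app (a crypto wallet), which explains how it took shape in a week and drew an enthusiastic response.

Its challenges and opportunities are equally stark. As a wrapper app, its fate is tied closely to upstream OpenClaw. The dependency management, security updates, and sandbox permissions raised by technical users in the comments are precisely the Achilles' heel of such simplification tools. It must balance a "minimal experience" against the professional need for something controllable, reliable, and auditable; otherwise it can easily slide from "convenient gateway" to "black-box risk." Being open source is a smart way to build trust, but building a sustainable business model, rather than becoming a short-lived convenience shell, is the next question to answer. Essentially, Atomic Bot is testing how much the market will pay for AI convenience, and whether a "simplifier" can itself stay agile and stable in a fast-moving ecosystem.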

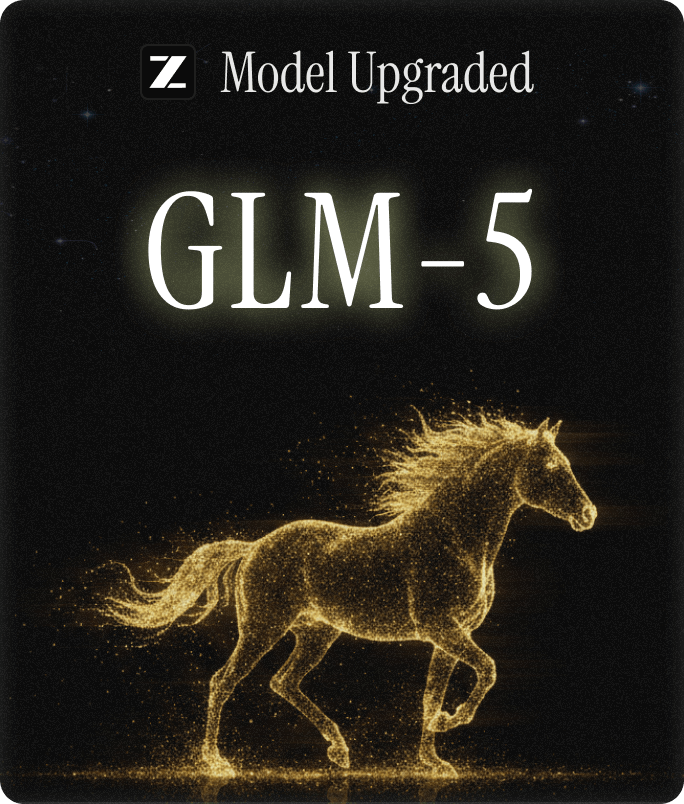

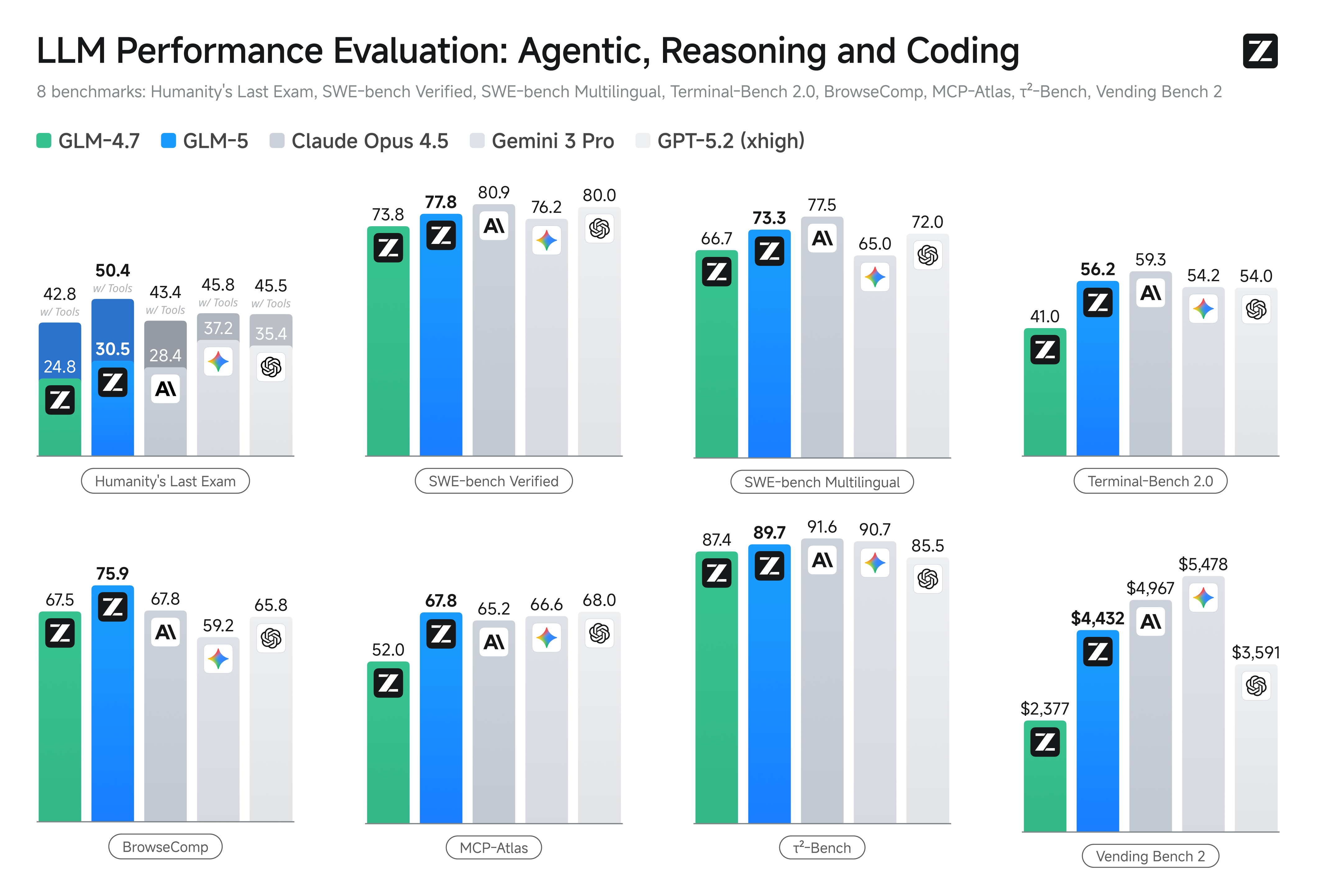

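One-line intro: GLM-5 is a very large open-source mixture-of-experts model designed for complex systems and long-horizon agent tasks. By delivering agent capabilities close to top closed-source models on infrastructure you own, it answers enterprises' urgent need for high-performance, low-cost AI agents whose data stays under their control.

Open Source

Artificial Intelligence

Development

Open-source LLM

Mixture of experts (MoE)

AI agents

Long context

Enterprise AI

Cost optimization

Self-controlled

Complex task planning

Sparse attention

Reinforcement learning infrastructure

User comment summary: Users rate its agent capabilities and cost advantage highly, seeing strong value in scenarios that need long-running, highly intelligent planning under cost constraints. Suggestions and questions center on the sandboxing mechanism in agent mode, state persistence, and the concrete scenarios where switching from existing closed-source models pays off.

AI Sharp Take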

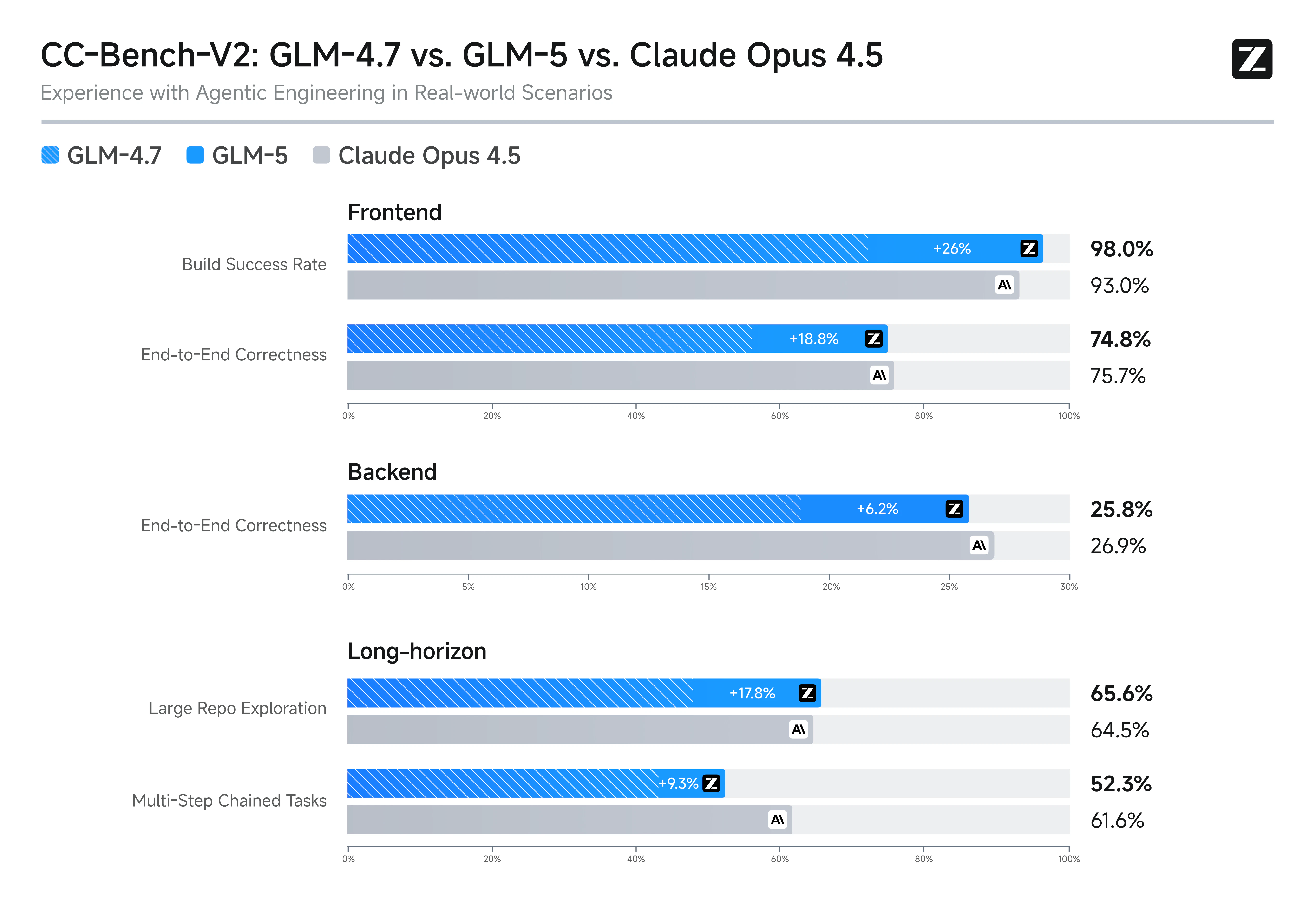

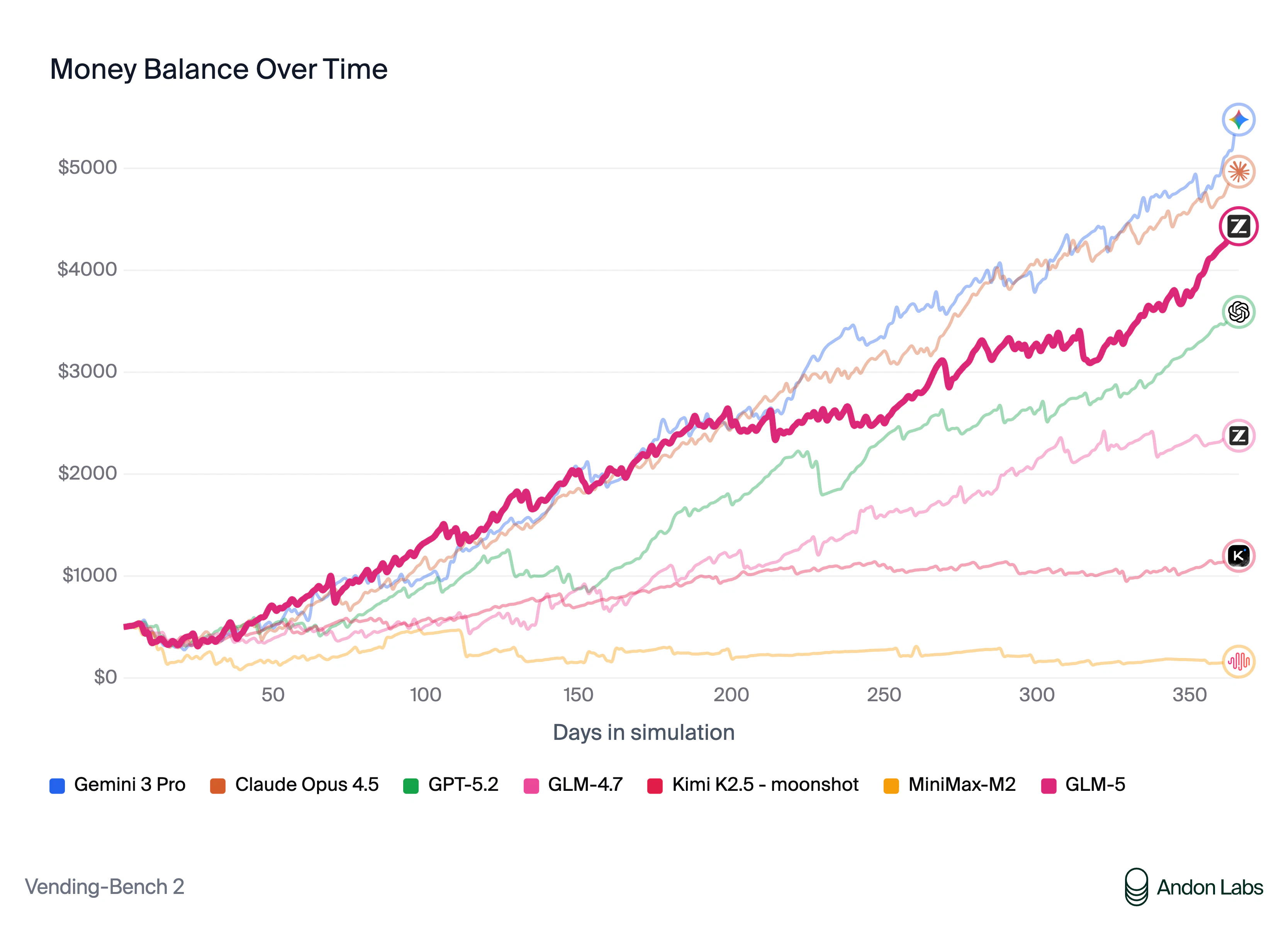

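GLM-5's release is far more than a show of parameter count. It goes straight at a core contradiction in AI commercialization today: the appetite for advanced, "Claude Opus-class" agent capabilities versus the high cost and data-security concerns of closed-source APIs. Its claim of near-Opus performance on Vending Bench 2 is its sharpest marketing blade, announcing that open-source models are now usable, competitive options for the most commercially valuable complex, long-horizon planning tasks.

The product's real value lies in engineering integration. Rather than simply piling on parameters, it packages DeepSeek Sparse Attention (to contain long-context cost), the novel "slime" asynchronous RL infrastructure (to remove post-training efficiency bottlenecks), and agent-oriented engineering modes (such as the Agent mode toggle on Z.ai) into one solution. This marks open-source LLMs moving from chasing benchmark scores to building purpose-built engineering systems. Its target users are clear: tech companies stung by closed-source API bills, or barred by data compliance from sending core code and business processes outside.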
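GLM-5 is described as a mixture-of-experts model, and cost control runs through this whole take. Most MoE layers rely on some form of top-k routing: a gate scores every expert per token, only the k best run, and their outputs are mixed with softmax weights. The toy sketch below shows that textbook idea only; GLM-5's actual router (expert count, k, load-balancing terms) is not described here, and sparse attention is a separate mechanism not shown.

```python
import math
from typing import Callable, Sequence


def top_k_gate(logits: Sequence[float], k: int) -> list[tuple[int, float]]:
    """Select the k highest-scoring experts and softmax-normalize their scores."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - m) for i in top}
    total = sum(exps.values())
    return [(i, exps[i] / total) for i in top]


def moe_forward(x: Sequence[float], gate_weights: Sequence[Sequence[float]],
                experts: Sequence[Callable[[Sequence[float]], list[float]]],
                k: int = 2) -> list[float]:
    """One token through a toy MoE layer: gate, run only the chosen experts, mix their outputs."""
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    out = [0.0] * len(x)
    for i, weight in top_k_gate(logits, k):
        y = experts[i](x)  # unselected experts are never called: that is the compute saving
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out
```

The total parameter count grows with the number of experts, while per-token compute grows only with k, which is how a "giant" MoE model can still be cheap to serve.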

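Yet doubts linger beneath the halo. The "#1 open-source model" result was achieved on a specific benchmark (Vending Bench 2), and its generalization still needs broader verification. Questions in the comments about agent safety and state persistence go to the most critical issue for enterprise use, trust: model capability is only the entry ticket, and whether it can execute tasks reliably, controllably, and auditably in real production environments decides whether it is actually adopted. GLM-5 shows an enticing path, but convincing cautious enterprise customers to migrate still requires homework harder than a technical paper: mature toolchains, ecosystem support, and an accumulation of success stories.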

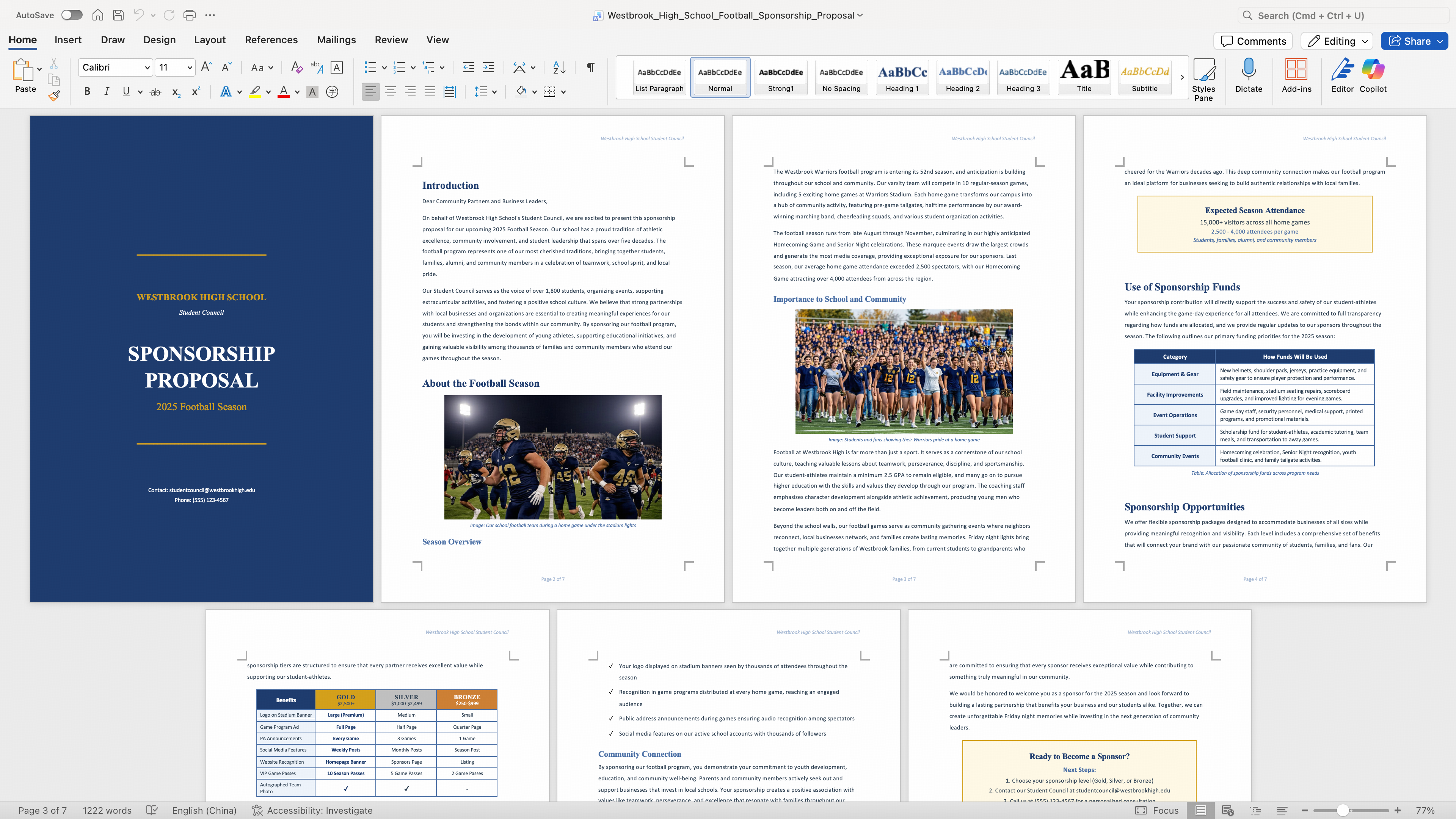

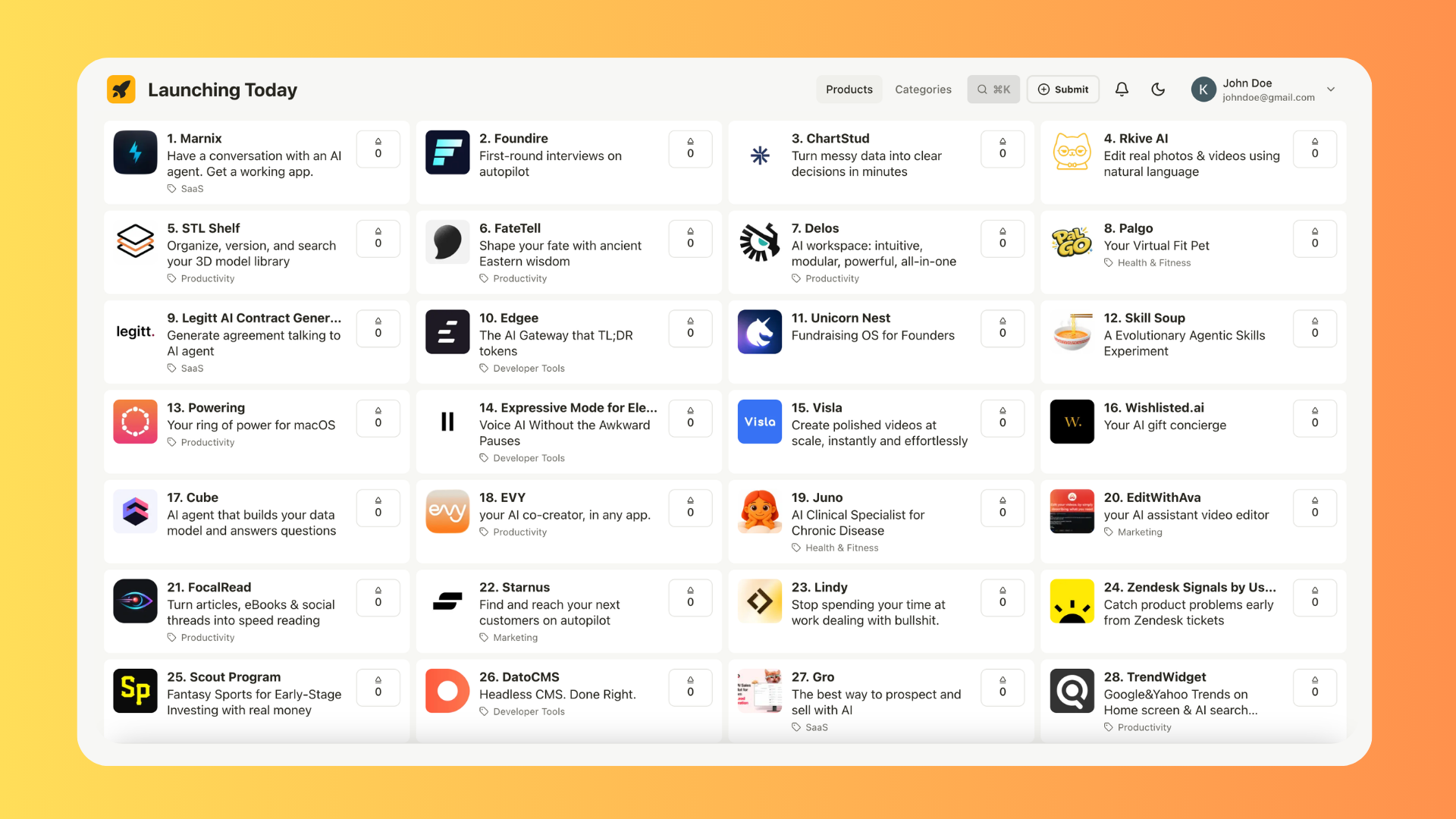

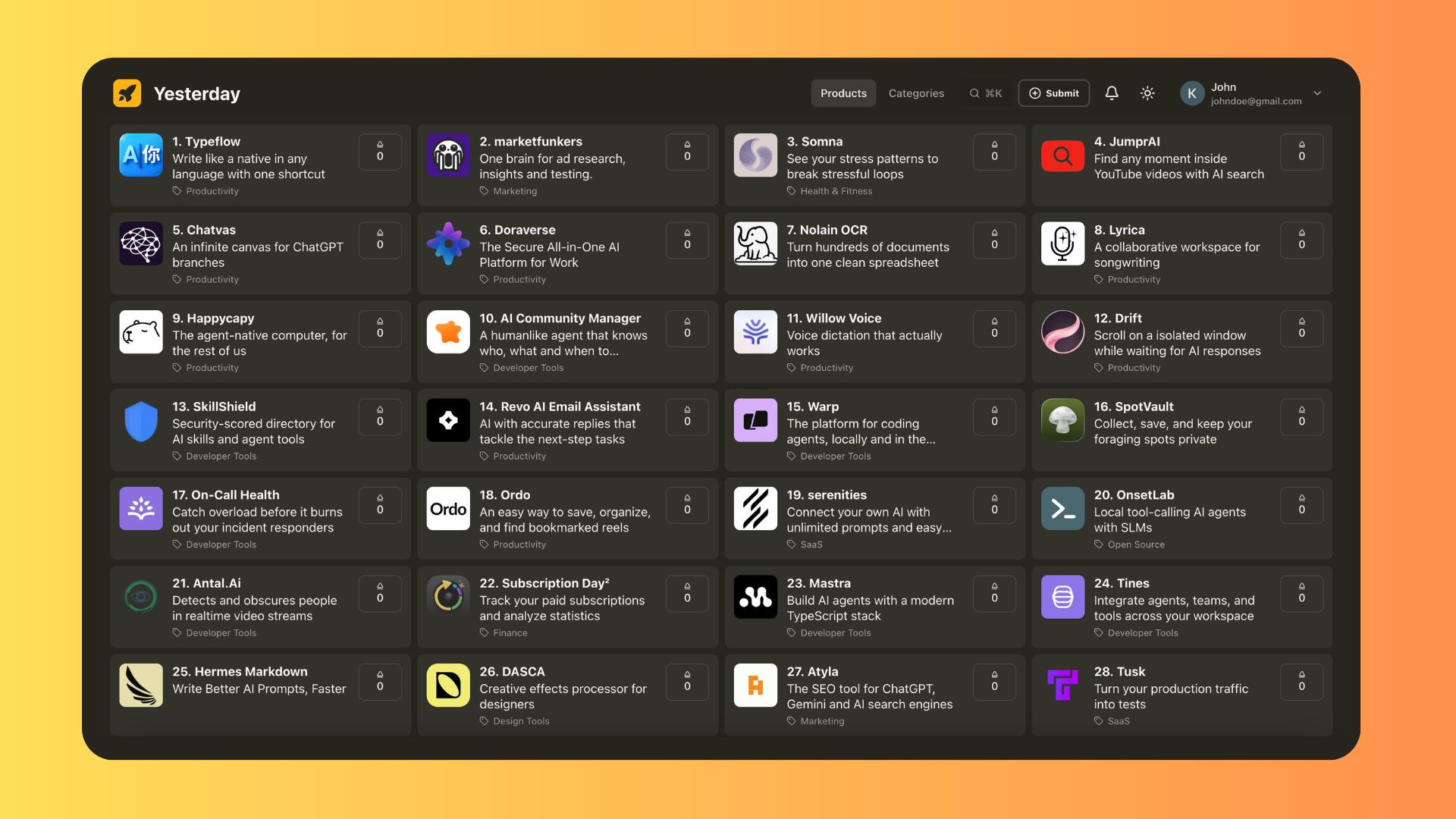

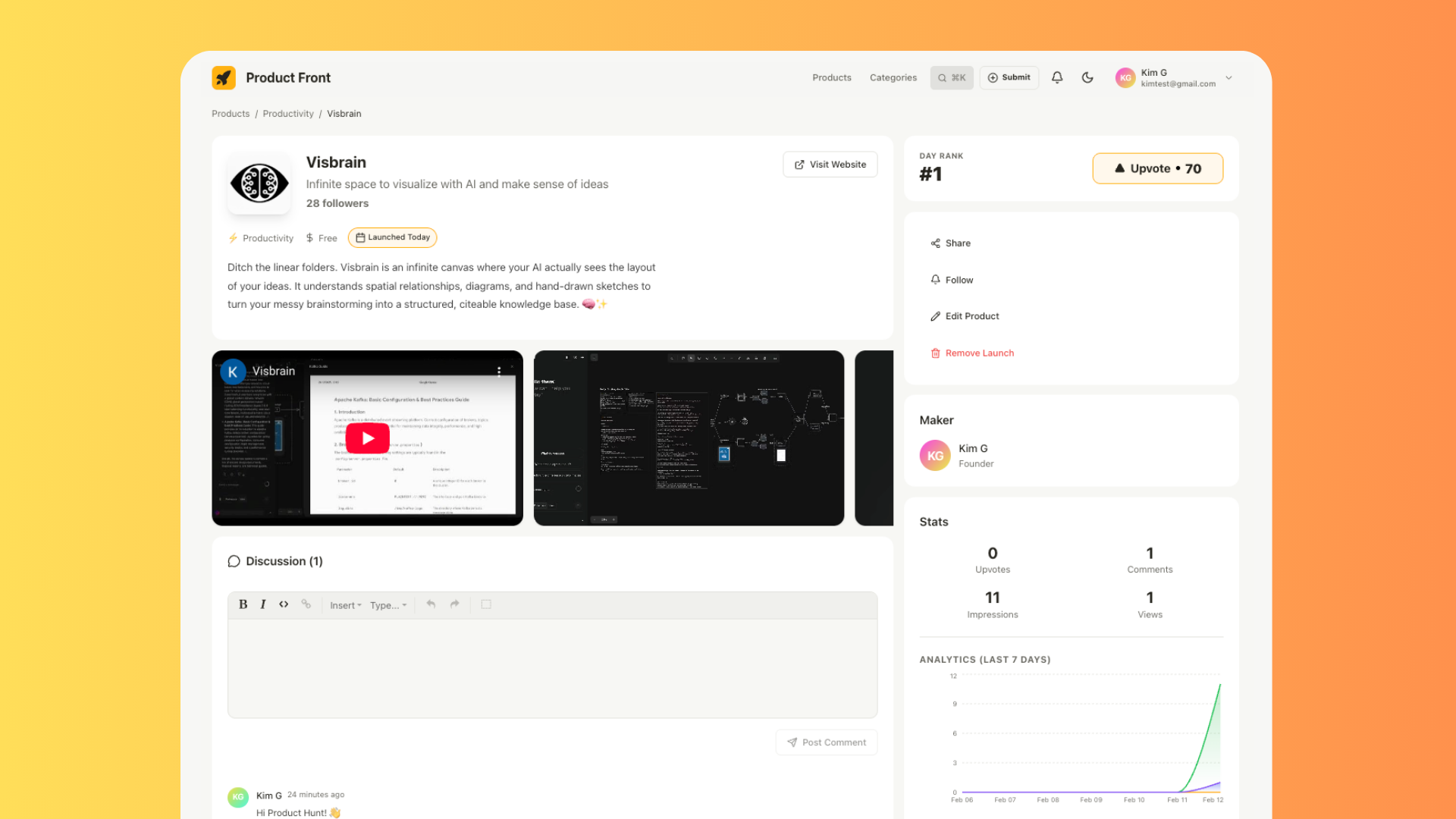

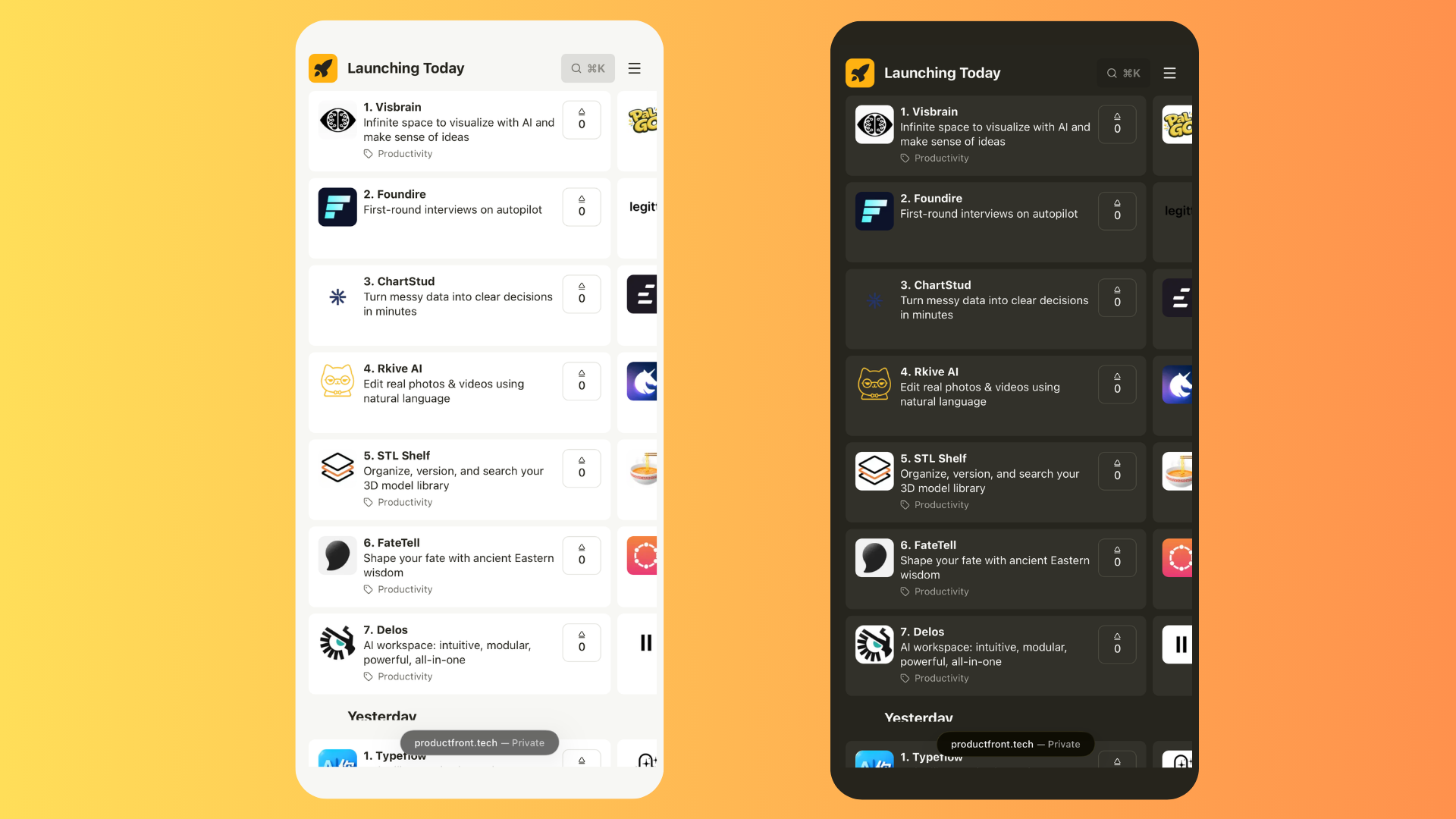

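One-line intro: Product Front is a discovery platform that caps launches at 28 products per day, uses a horizontal grid layout, and offers a smart weekly relaunch mechanism, addressing the way startup products on traditional launch platforms are quickly buried by low rankings or paid placements and never get meaningful exposure.

Sales

Marketing

Growth Hacks

Product discovery platform

Founder marketing

Fair exposure

Product Hunt alternative

App launches

Growth hacking

Indie developers

Community-driven

Anti pay-to-win

Visual-first design

User comment summary: Users broadly recognize its core value in solving the exposure problem, especially the 28-slot cap and the relaunch mechanism. The main questions concern how the platform will solve its own cold start, how it balances fairness against quality, and how it will cope with a surge in submissions. The founder replied that slots are first come, first served and that community voting decides quality.

AI Sharp Take

Product Front punctures the "democratization" illusion of today's mainstream product discovery platforms. It recognizes that, squeezed between infinite-scroll feeds and paid ads, being discovered has itself become a privilege that needs redistributing. Its core value is not a UI novelty but an "exposure guarantee system" that uses rigid rules to push back against the dominance of algorithms and money.

The hard cap of 28 per day manufactures digital scarcity, turning the platform's attention from unlimited supply into limited seats and raising the baseline value of each seat. The horizontal grid and "smart relaunch" mechanism redistribute attention in two dimensions, space and time: in space, all products are shown at once; in time, each gets more than one chance. This does give indie developers a more predictable exposure plan and eases the anxiety that a single launch decides everything.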
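The cap-plus-queue mechanics are simple enough to write down, which also makes their consequences visible. A minimal sketch under stated assumptions: the 28-slot cap comes from the launch, but the seven-day relaunch interval, the number of relaunch rounds, and the function names are hypothetical, and the sketch ignores relaunches competing with new products for the same daily slots.

```python
from datetime import date, timedelta

DAILY_CAP = 28                      # from the launch description
RELAUNCH_EVERY = timedelta(days=7)  # assumption: "weekly" relaunch


def schedule(submissions: list[str], start: date, cap: int = DAILY_CAP) -> dict[date, list[str]]:
    """First come, first served: fill each day's slots in submission order, spill over to the next day."""
    days: dict[date, list[str]] = {}
    for idx, name in enumerate(submissions):
        day = start + timedelta(days=idx // cap)
        days.setdefault(day, []).append(name)
    return days


def relaunch_dates(first_launch: date, rounds: int = 2) -> list[date]:
    """Hypothetical: the product reappears once a week for `rounds` extra rounds."""
    return [first_launch + RELAUNCH_EVERY * n for n in range(1, rounds + 1)]
```

With 60 submissions the third day holds only the last four, and nothing in `schedule` looks at quality: queue position alone sets the launch date, which is the "typing-speed race" tension raised below.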

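The model, however, carries deep contradictions. First, the "first come, first served" principle of fairness may turn into a typing-speed race, in tension with the goal of surfacing the best products, and over time could erode content quality and user trust. Second, its business model has yet to emerge: having firmly rejected pay-to-win, how it will operate sustainably and solve its own chicken-and-egg cold start is an existential question the founder must answer. Finally, its value depends heavily on traffic: if it cannot attract enough genuine visitors, the 28 seats of "fair exposure" will degenerate into developers showing products to each other, and most of the point will be lost.

So Product Front's real value is less that of a mature Product Hunt alternative than that of a mirror held up to the predicament of startup marketing today, and a radical experiment in attention fairness. Its prospects depend not on clever design but on finding a dynamic balance among fairness, quality, and growth, and on building a truly active, non-transactional community of discoverers. Otherwise it may simply give developers yet another graveyard, just one where nothing gets buried in the feed.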

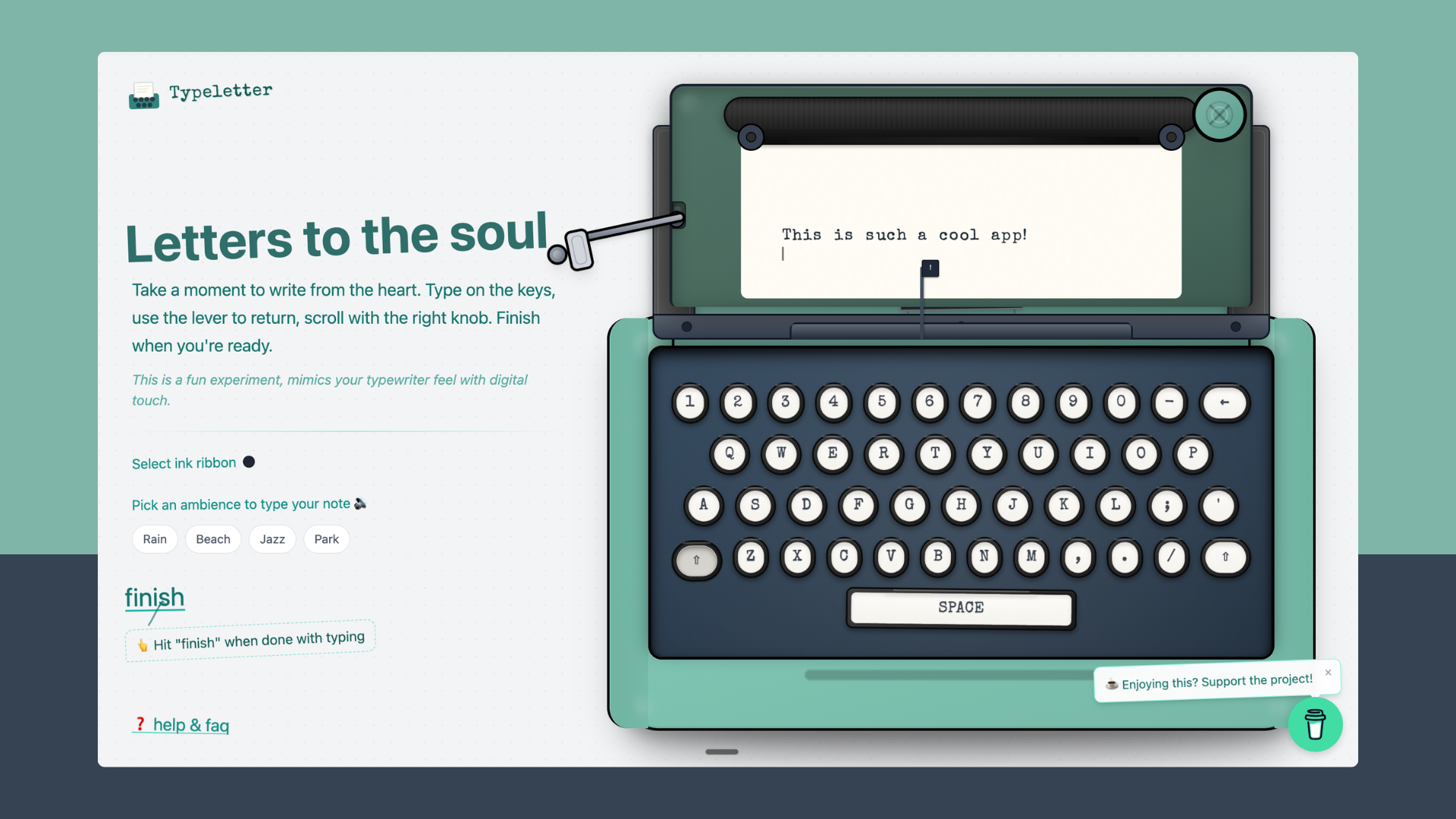

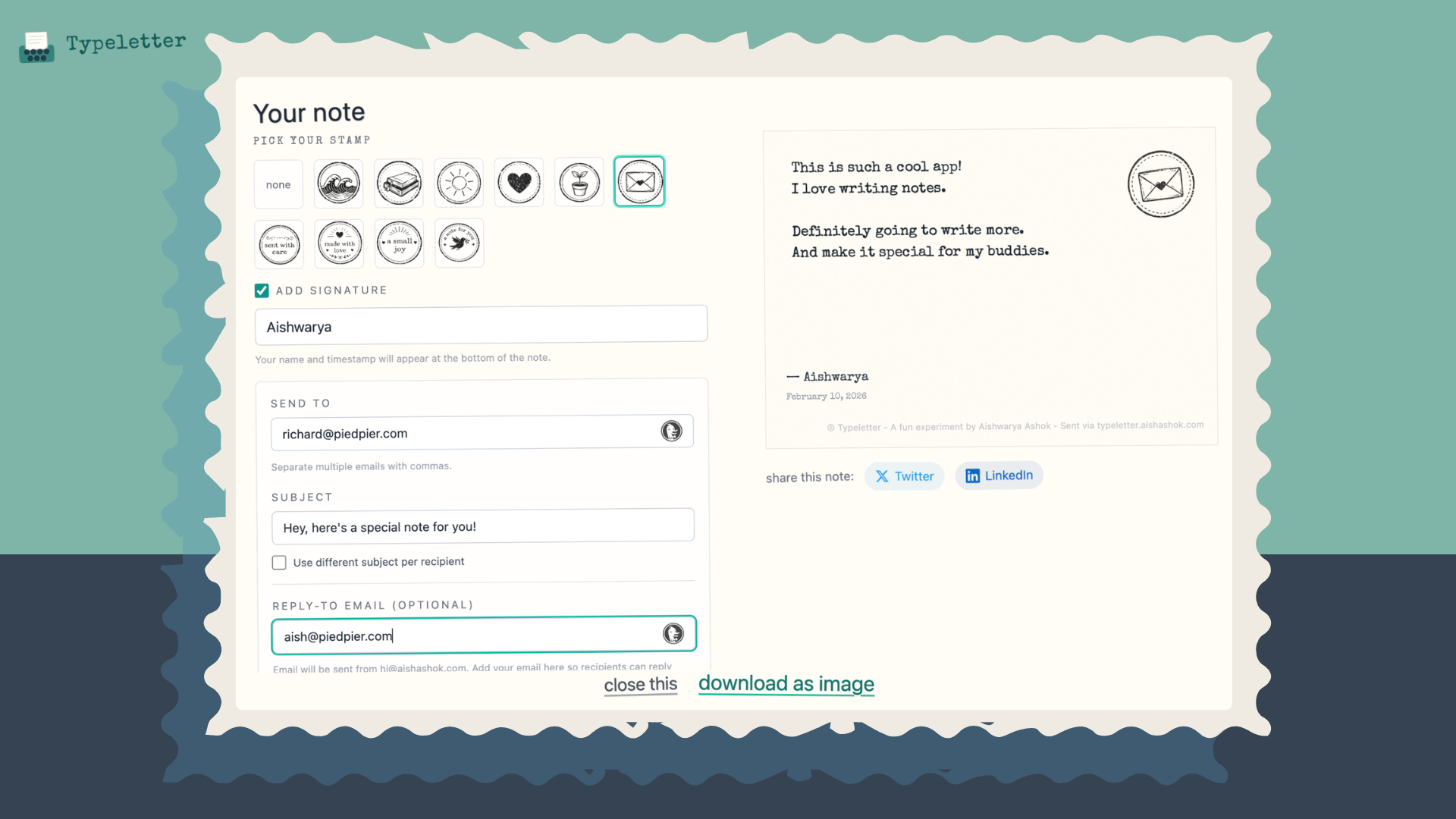

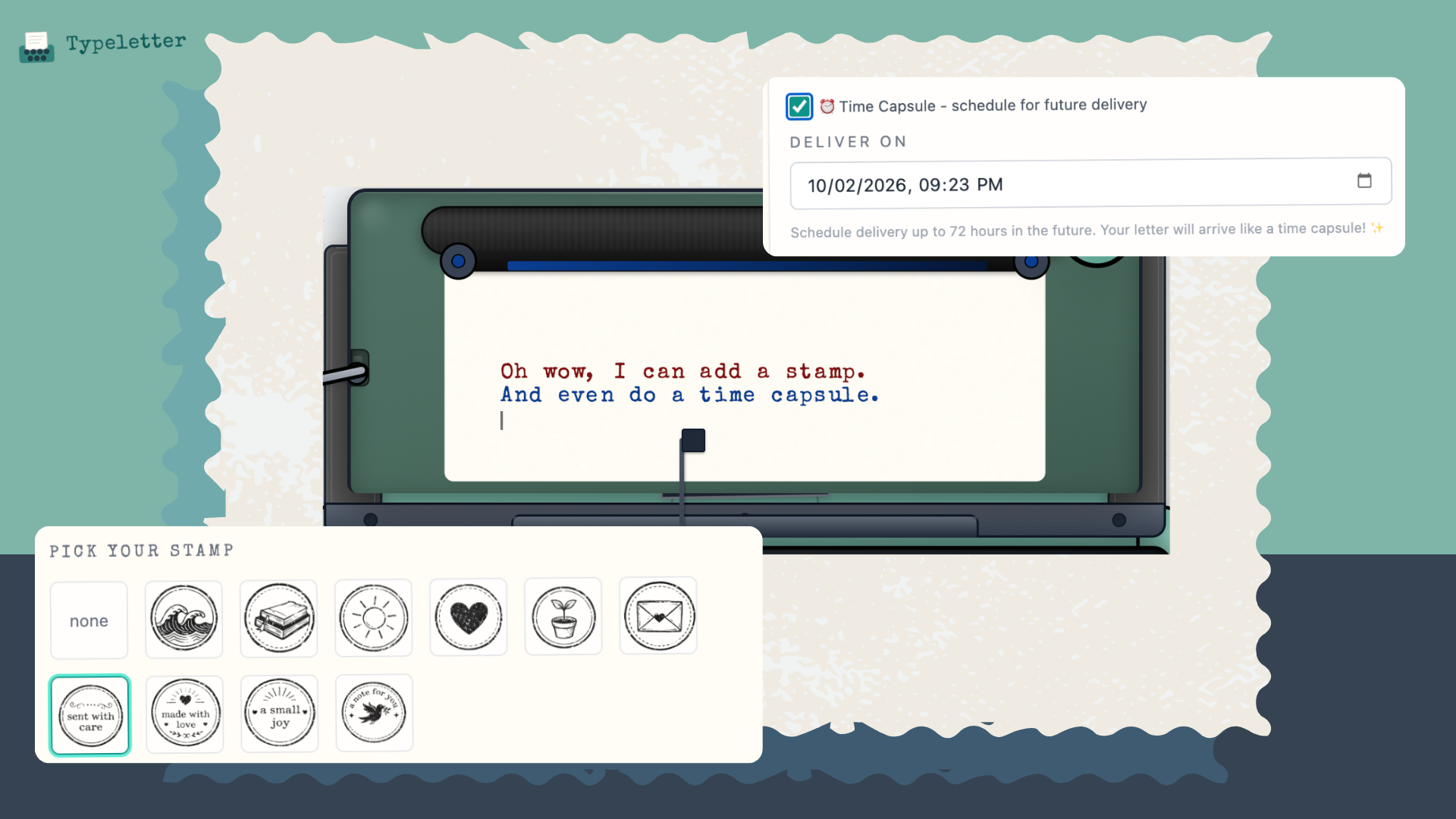

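One-line intro: Typeletter turns the browser into a retro, typewriter-style writing space. By simulating realistic typewriter sounds, ink ribbons, and ambient audio, it gives people writing letters, journals, or deep thoughts an immersive, distraction-free setting that evokes nostalgia and encourages focused writing.

Productivity

Writing

Tech

Retro writing tool

Distraction-free writing

Browser app

Digital nostalgia

Emotional design

Typewriter simulation

Ambient sound

Letter writing

Instant output

Privacy-friendly

User comment summary: Users widely praise the relaxing, focused writing experience and its nostalgic feel. Main suggestions: more ambient sound options (forest, city, etc.) and fixing possible input lag. On the technical side, commenters asked how high-performance export and audio processing are implemented, and questioned how privacy is secured for account-free features such as the "time capsule."

AI Sharp Take

Typeletter is shrewd in that it does not create a new need; it hijacks "writing anxiety," a pervasive modern feeling. Its core value is not functional innovation (typewriter simulation, ambient sound, image export) but a highly stylized sensory package (sound, visuals, interaction metaphors) that ritualizes and gamifies writing, briefly dissolving the pressure users feel in front of a blank document.

The product pulls the emotional lever of digital nostalgia. The retro typewriter is not reproduced as a productivity tool (otherwise the lack of error correction would be fatal) but consumed as an emotional symbol. What it sells is the illusion of slowing down and being more mindful, and its "no sign-up, no distractions" setup reinforces that sense of purity and privacy. Yet this romantic packaging is in tension with the technical reality underneath, as pointed technical comments note: the performance limits of a browser app, and delivering a "time capsule" and keeping privacy promises without backend accounts, are hard engineering and trust problems behind its lightweight experience.

In essence, Typeletter is a carefully designed "writing placebo." It may not improve writing quality, but by providing strong, instant sensory feedback (the clack of keys, a wax seal) and rituals of completion (sending, downloading a polished image), it greatly boosts the emotional payoff of writing and the motivation to finish. Its success depends not on being more powerful than Word, but on whether the brief escape it offers keeps users paying, emotionally and, eventually, commercially.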

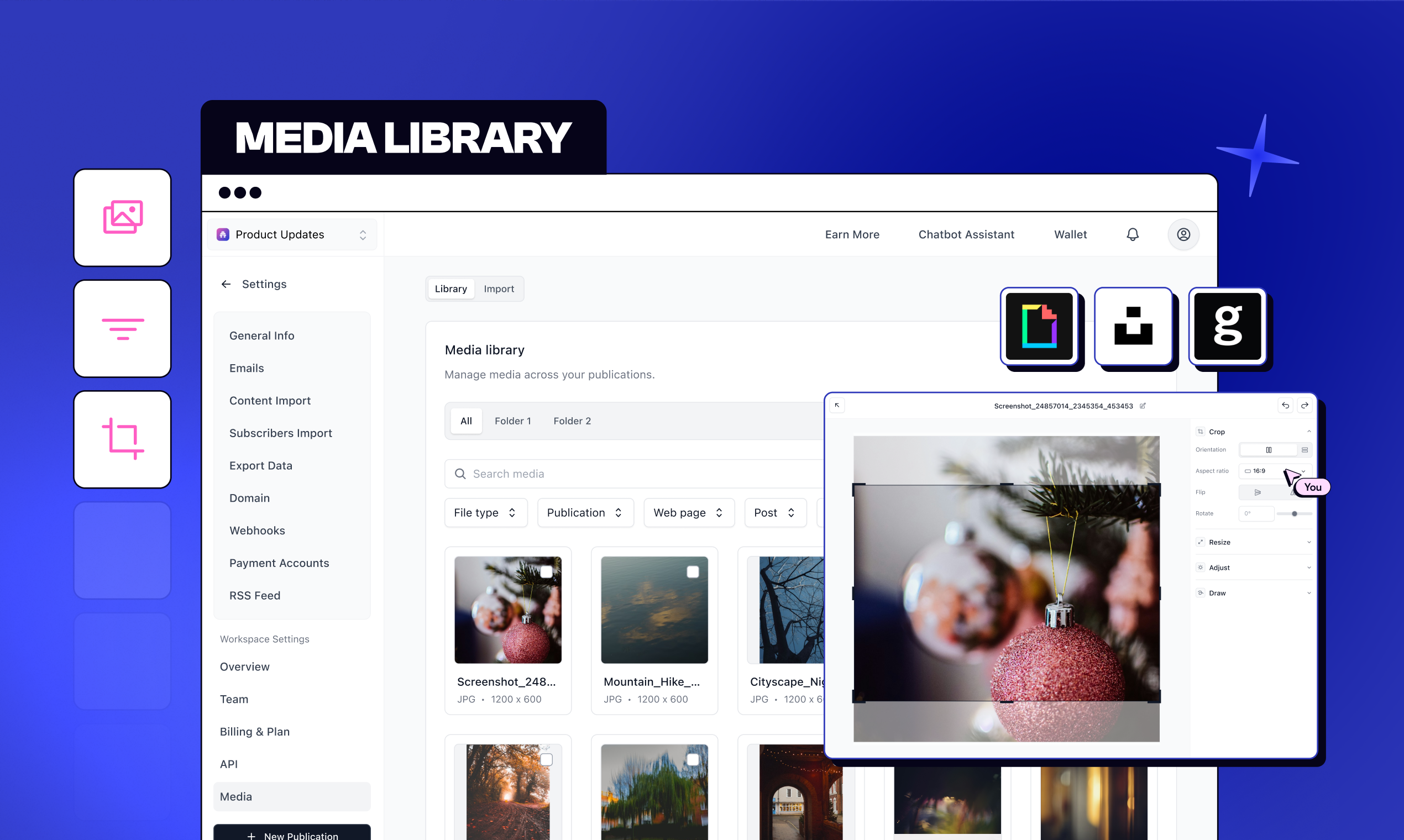

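One-line intro: A centralized digital asset library for content creators and media companies that brings creating, editing, managing, and AI-generating assets into one platform, solving the scattered assets, duplicate uploads, and inefficient management that come with running multiple publications.

Design Tools

Newsletters

Writing

Digital asset management

Media library

Content creation platform

Creative asset management

Multi-publication management

Bulk operations

AI image generation

Workflow integration

SaaS

User comment summary: Users affirm the core value of unified management and efficiency, especially sharing across publications and bulk operations. Concerns center on whether the AI generation feature actually exists, possible video support, and the trade-off between easy editing and version control on one side and production-grade safeguards on the other.

AI Sharp Take

beehiiv's Media Library is not a simple feature update but a platform upgrade meant to consolidate its position as a "creator operating system." Its real value is not "yet another media library" but the attempt to pull asset management, the most scattered and time-consuming part of content creation, fully into its closed ecosystem.

The phrase "from idea to published product" in the launch copy reveals the ambition: beehiiv wants to be seen not merely as a newsletter tool but as a console for the whole workflow, from the asset source (AI generation and editing) through the management layer (the media library) to final publication. This answers the acute pain of its core users, creators or small media outfits running several publications: brand assets fragmented across platforms and duplicated effort.

The comments, however, hint at sharp contradictions. The first is the classic conflict between convenience and safety. A user's question about version control and avoiding accidental edits to published content goes to the core risk of all-in-one tools: when editing becomes too easy, how do you keep production stable? This tests whether the product can balance a smooth experience with enterprise-grade controls. The second is the choice between open integration and a closed loop. The Getty Images integration shows willingness to connect to professional resources, but comments note that its credit model may push users toward cheaper AI generation. That forces a question: is its ultimate value to be a neutral gateway to every quality resource, or to use AI and in-house tools to lock users into its own ecosystem?

The current tally of 110 upvotes looks lukewarm. This may mean that for most creators the vision of an "ultimate creative command center" is appealing, but persuading them to leave the scattered toolchains they are used to (Canva plus cloud storage plus a publishing platform) requires disruptive value far beyond convenience, perhaps deeper intelligent linking or a new model of collaboration. Media Library is a solid step, but building a true center is a long road.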

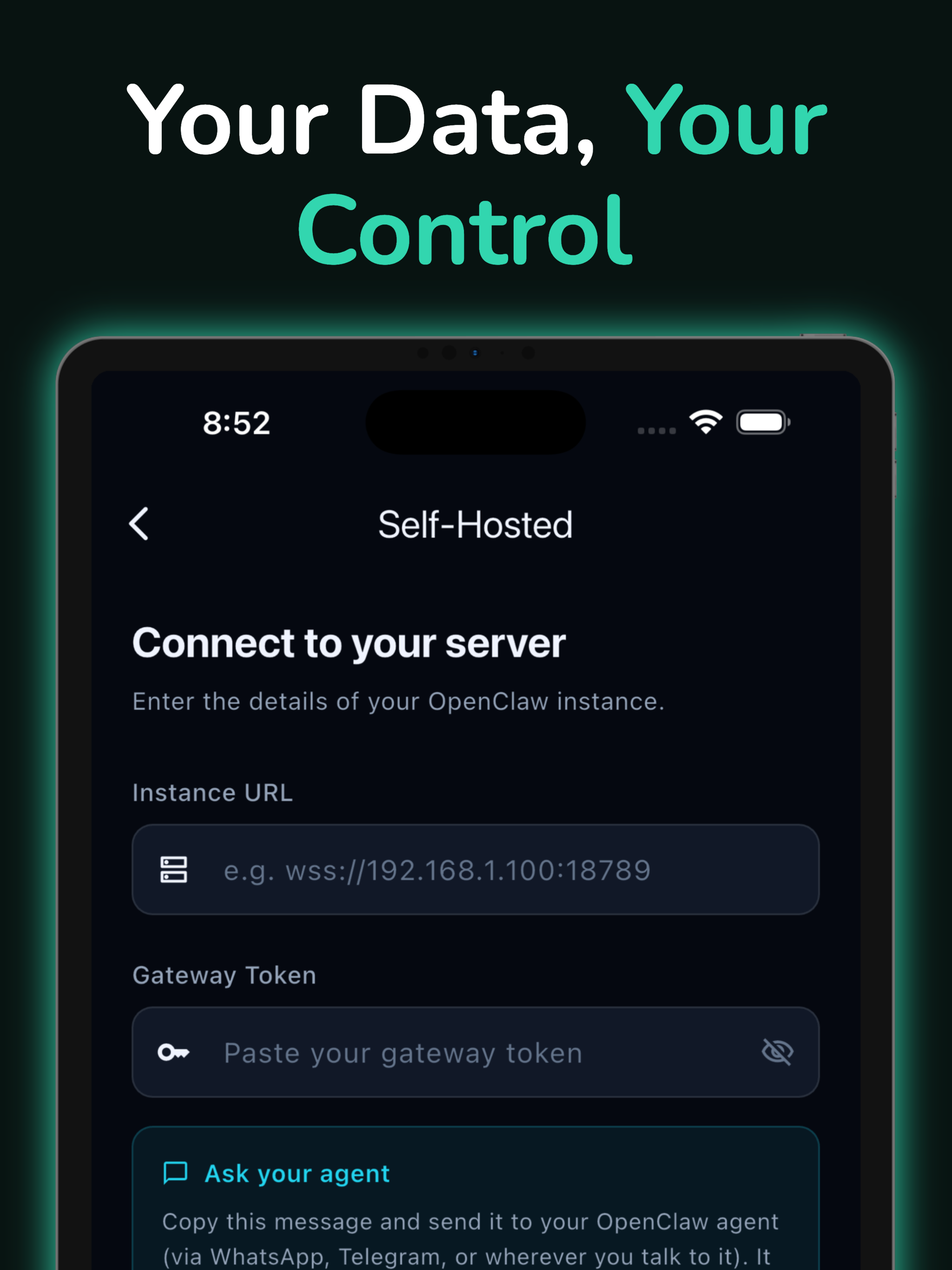

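One-line intro: GoClaw is a free mobile app that lets users deploy and manage self-hosted OpenClaw AI assistants from their phone without code, connecting them to messaging channels such as WhatsApp and Telegram. It removes the core operational pain of technical users having to rely on SSH and the command line to manage a self-hosted AI assistant.

Android

Developer Tools

Artificial Intelligence

Bots

AI assistant management

Self-hosting tool

Mobile ops

Chatbots

No-code development

Cross-platform app

Open-source infrastructure

Real-time monitoring

User comment summary: Users agree it solves the operational pain of self-hosted AI and offered key suggestions: address GDPR compliance and data retention policies; support managing multiple instances from a single dashboard for teams; explore integration with hardware products; and clarify how credits are priced and how many credits typical use cases consume, for better pricing transparency.

AI Sharp Take

GoClaw targets a niche but important gap: giving technical users who self-host an open-source AI project (OpenClaw) a terminal-free mobile management interface. Its real value is not technical innovation but a rebuilt experience. It packages operations once confined to SSH sessions, config files, and service logs into intuitive mobile actions, significantly lowering the day-to-day barrier to running an open-source AI project and potentially boosting OpenClaw's real-world adoption and retention.

Challenges and opportunities coexist. The comments show its users are privacy-sensitive, detail-oriented technical people. Their questions about GDPR, data flows, and multi-instance management go to the foundation of credibility the product must shore up beneath its convenient packaging. Without convincing answers at the architecture and compliance level, convenience may instead fuel worries about losing control of security.

Its business model, a "Managed Option" plus a credit system, tries to sift paying customers out of open-source users. But confusion in the comments about credit consumption reveals a classic dilemma: when a product's capabilities are highly flexible (from calendar management to information digests), standardized pricing becomes extremely hard. The team must therefore not only supply a tool but also educate the market and define best practices, as the "workshop" plans in its reply suggest.

Essentially, GoClaw is a bridge that productizes an open-source project and turns it into a service. Its success depends not only on a friendly interface but on reducing operational complexity without sacrificing the control, transparency, and flexibility self-hosting users value most. It is on the right path, but the steepest climb is building deep technical trust, not just offering surface convenience.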

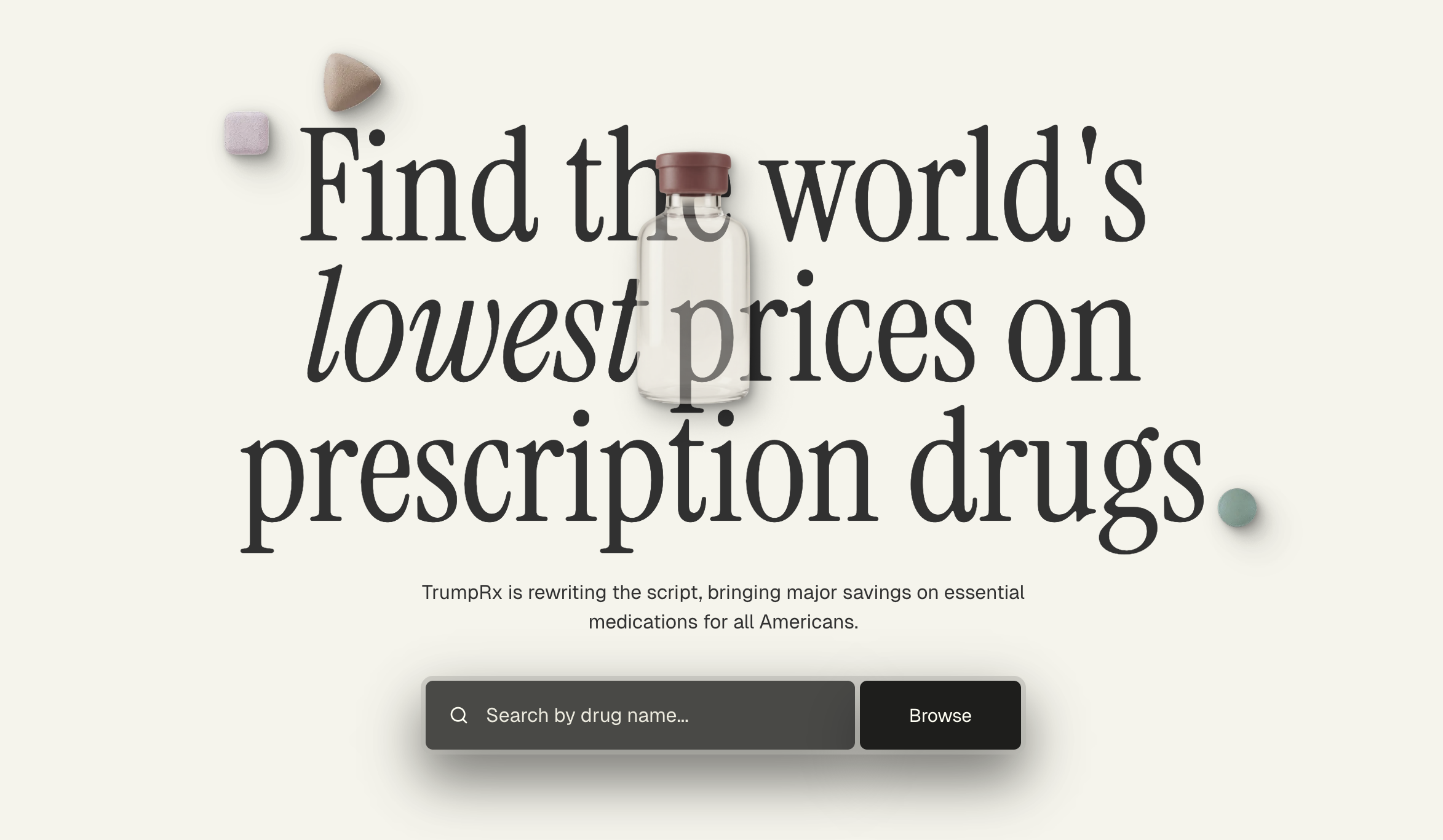

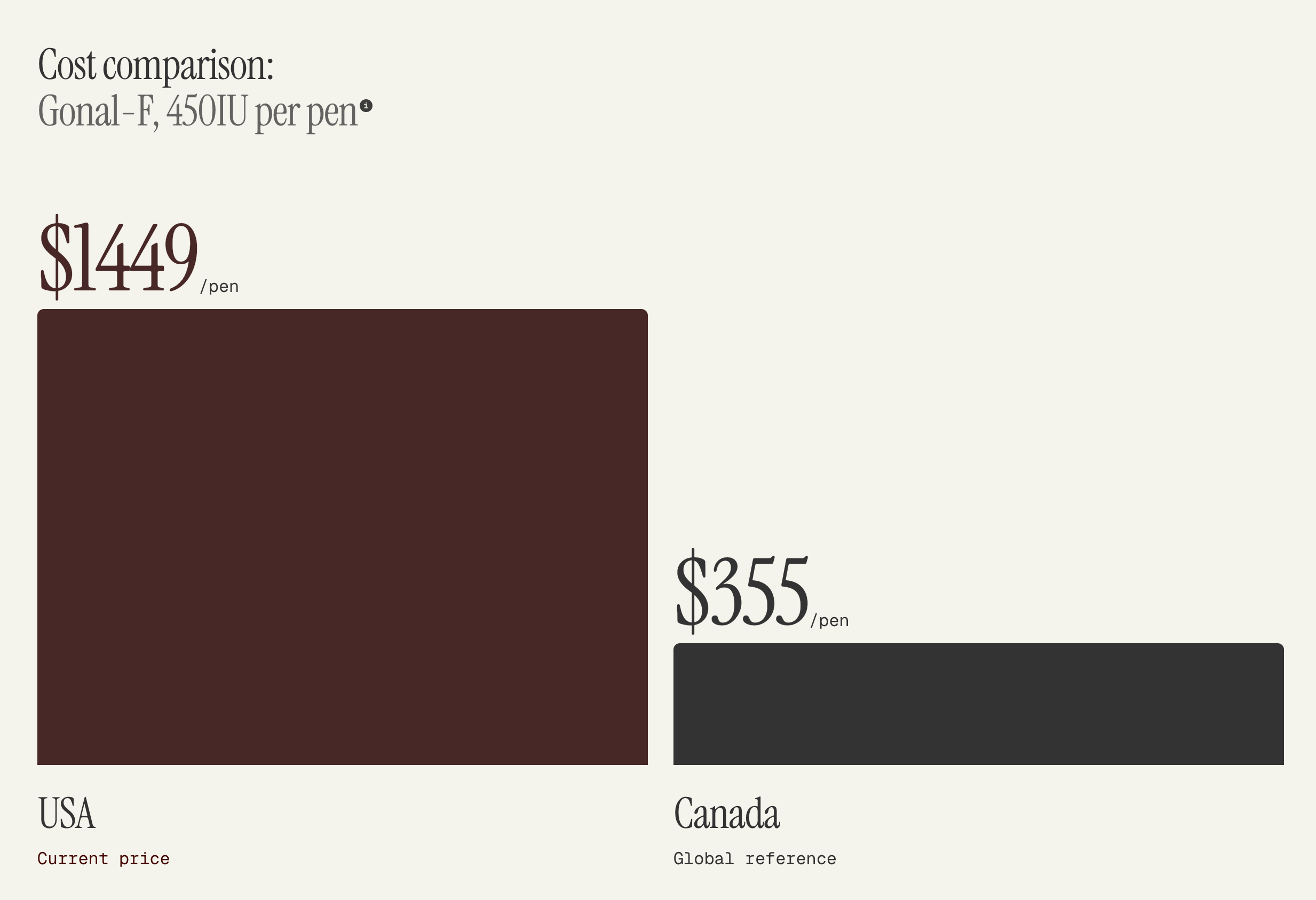

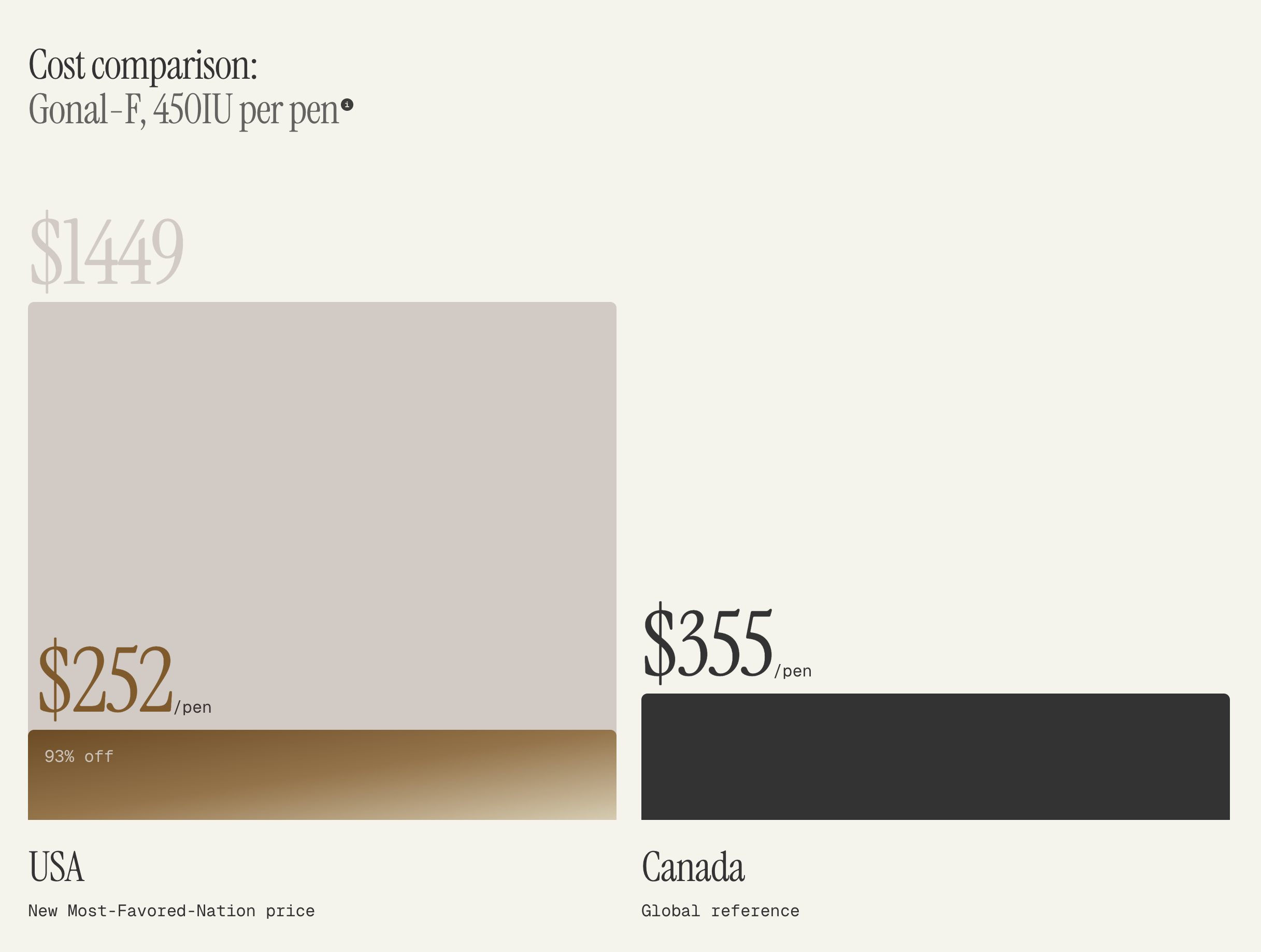

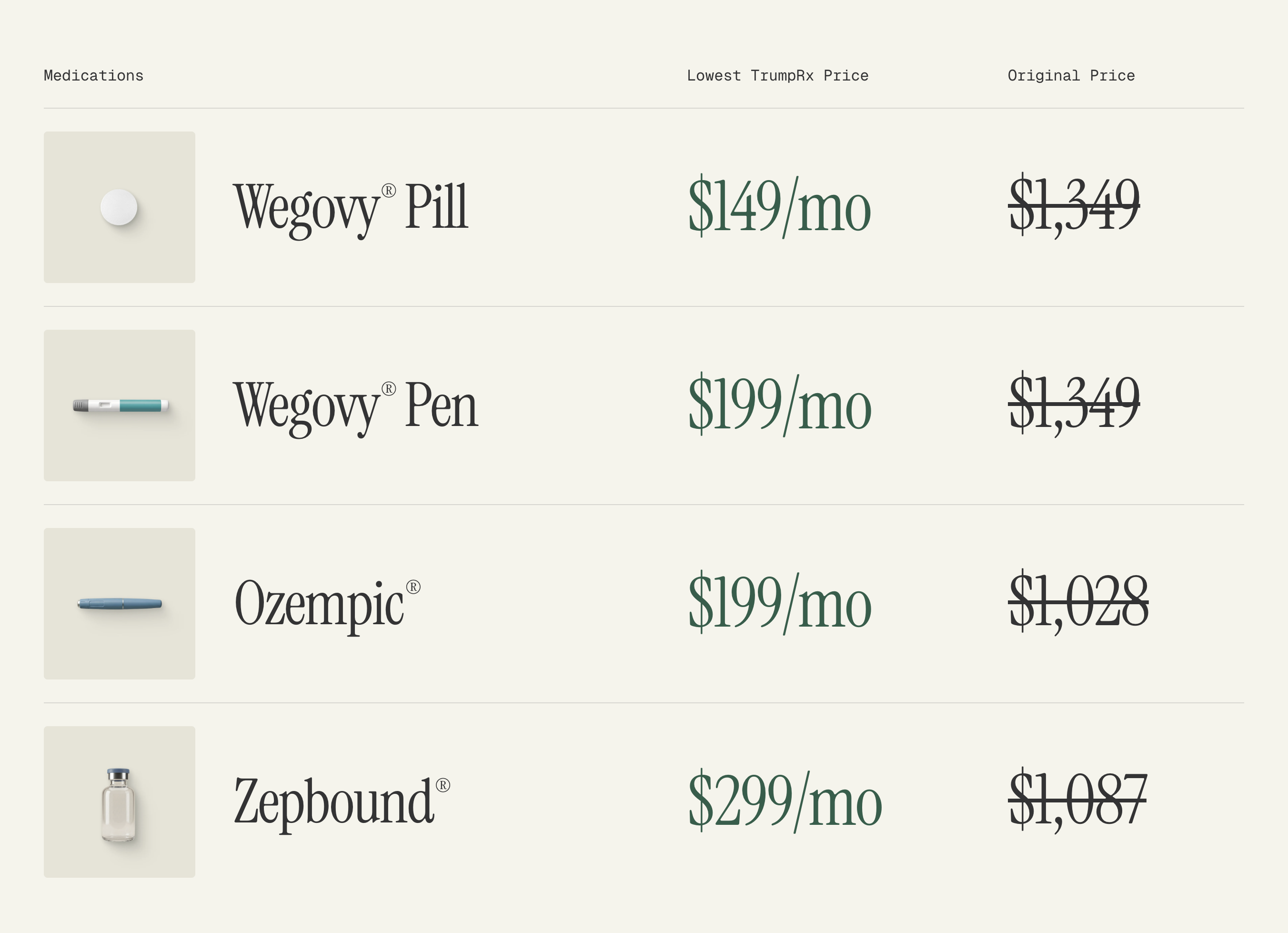

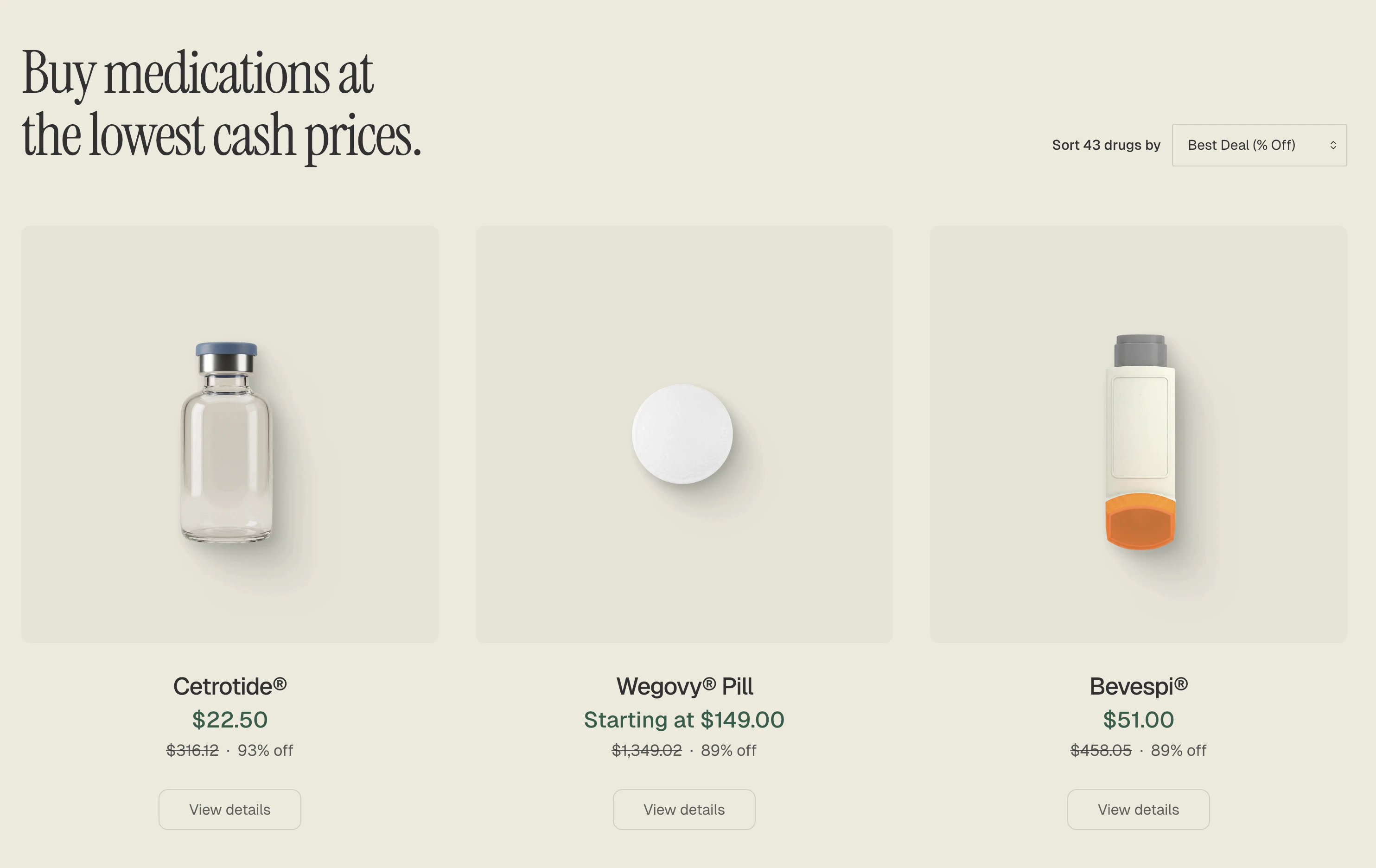

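One-line intro: A website that aggregates drugmakers' "most-favored-nation" (MFN) pricing to offer U.S. consumers cash-price comparisons and coupons for prescription drugs, aiming to bypass the complex insurance system and tackle high, opaque drug prices directly.

Politics

Medical

Health tech

Prescription drug price comparison

Cash-pay drugs

Price transparency

Drug discounts

Insurance alternative

Healthcare cost control

Consumer health

User comment summary: Feedback is polarized. Some praise the price transparency, the attractive site design, and the model's potential; others question whether the tagline is truthful, point out incomplete drug coverage, and report site instability. The launch also prompted comparisons with Mark Cuban's Cost Plus Drugs and discussion of its political background.

AI Sharp Take

TrumpRx's debut is less a product innovation than an exercise in political economy aimed squarely at a social pain point. Its core value is not a technical breakthrough but clever institutional arbitrage: by aggregating the low prices drugmakers offer under most-favored-nation terms, it builds a cash marketplace that bypasses traditional insurance intermediaries. This goes at the core ailment of the U.S. pharmaceutical system: opaque pricing and the hidden costs bundled into insurance. Its real value, however, is full of contradictions.

On the positive side, it does give some groups (such as people on high-deductible plans and the uninsured) a price reference and a potential path to savings, using market means to force price transparency in line with consumers' basic interests. But three weak spots must be called out plainly. First, the model is limited: MFN-priced drugs are few, and drugmakers can change them at any time, making supply unstable, as the launch's own note that "not all drugs are available" already hints. Second, it does not touch the root causes of high drug prices (patent protection and layered markups in the supply chain); it only exploits a particular gap in the existing pricing system. Third, and most critically, its strong political branding cuts both ways: it attracts attention quickly but may drag the product into ideological fights and distract from its practicality and sustainability. Users comparing it with Mark Cuban's Cost Plus Drugs reveal the essence: this is a contest between two philosophies of lowering prices (leveraging existing policy terms vs. rebuilding the supply chain).

So TrumpRx's real value may lie not in how many cheap drugs it offers today, but in its role as a high-profile entrant that pushes prescription drug price transparency back to the center of public debate in a very newsworthy way. Whether it grows from a political statement into stable, reliable healthcare infrastructure depends on shedding its partisan coloring and delivering, in drug coverage, price competitiveness, and system stability, on the practical promise beneath its "beautiful website." Otherwise it may be nothing more than an elegant concept showcase.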

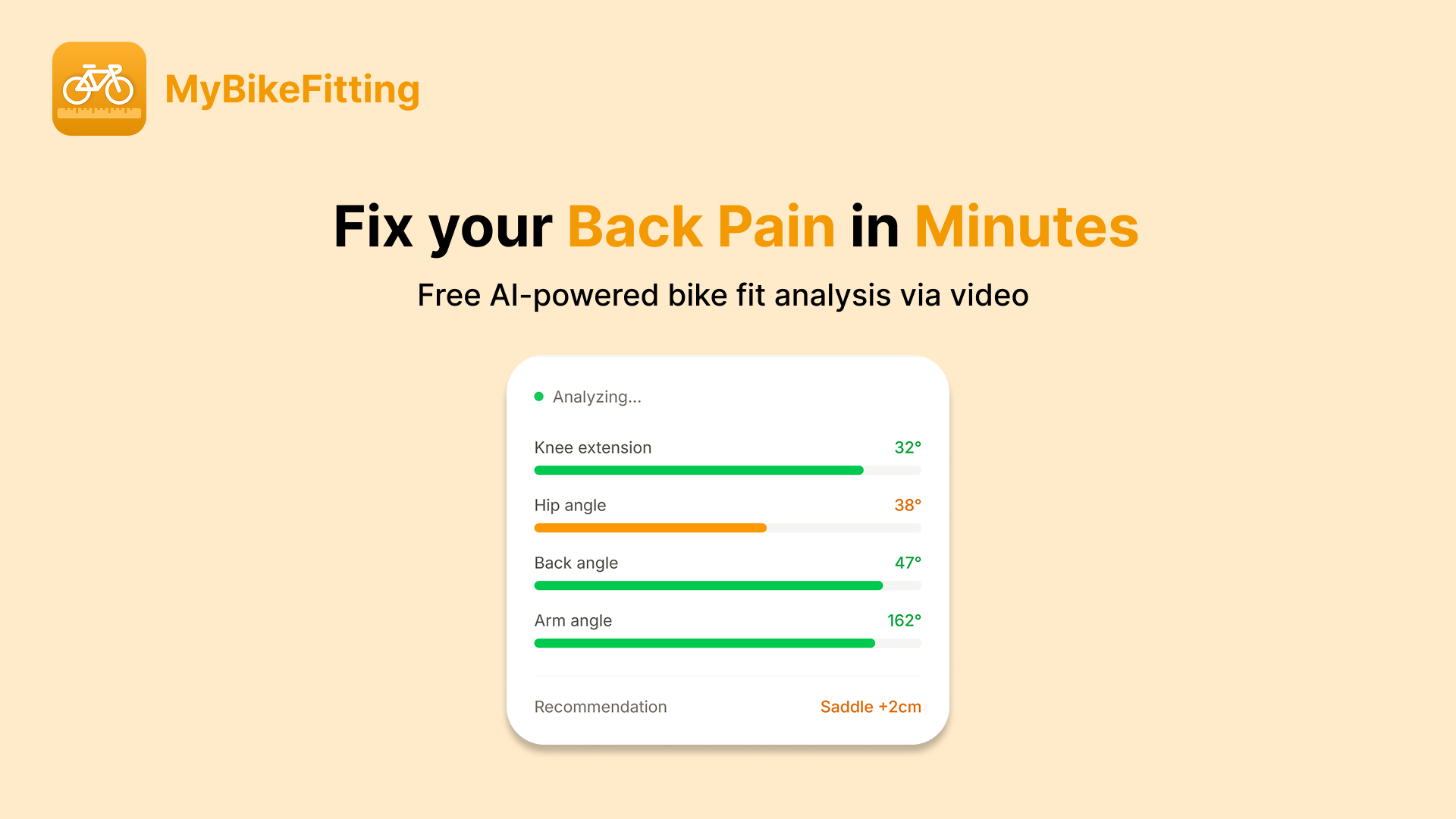

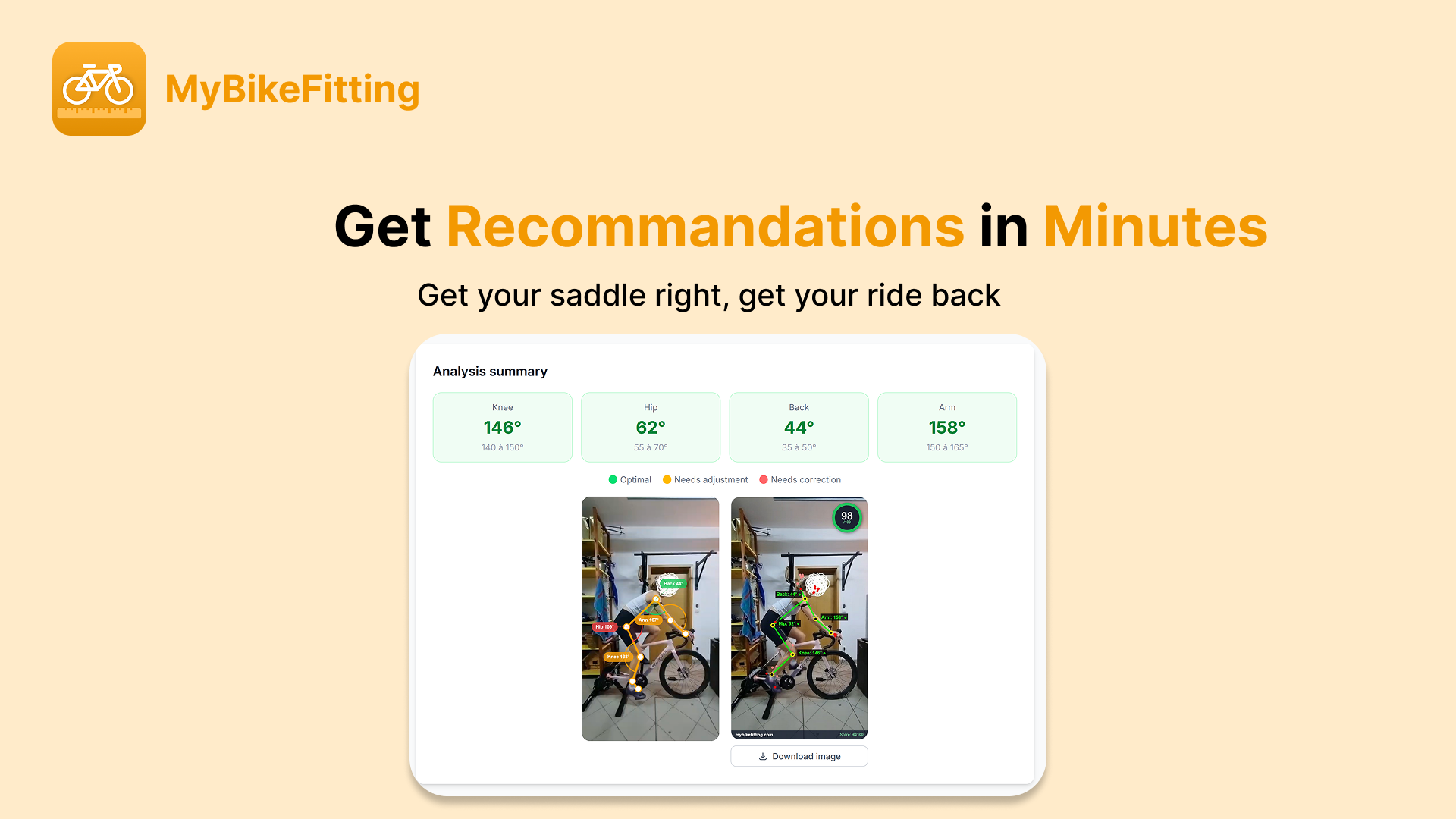

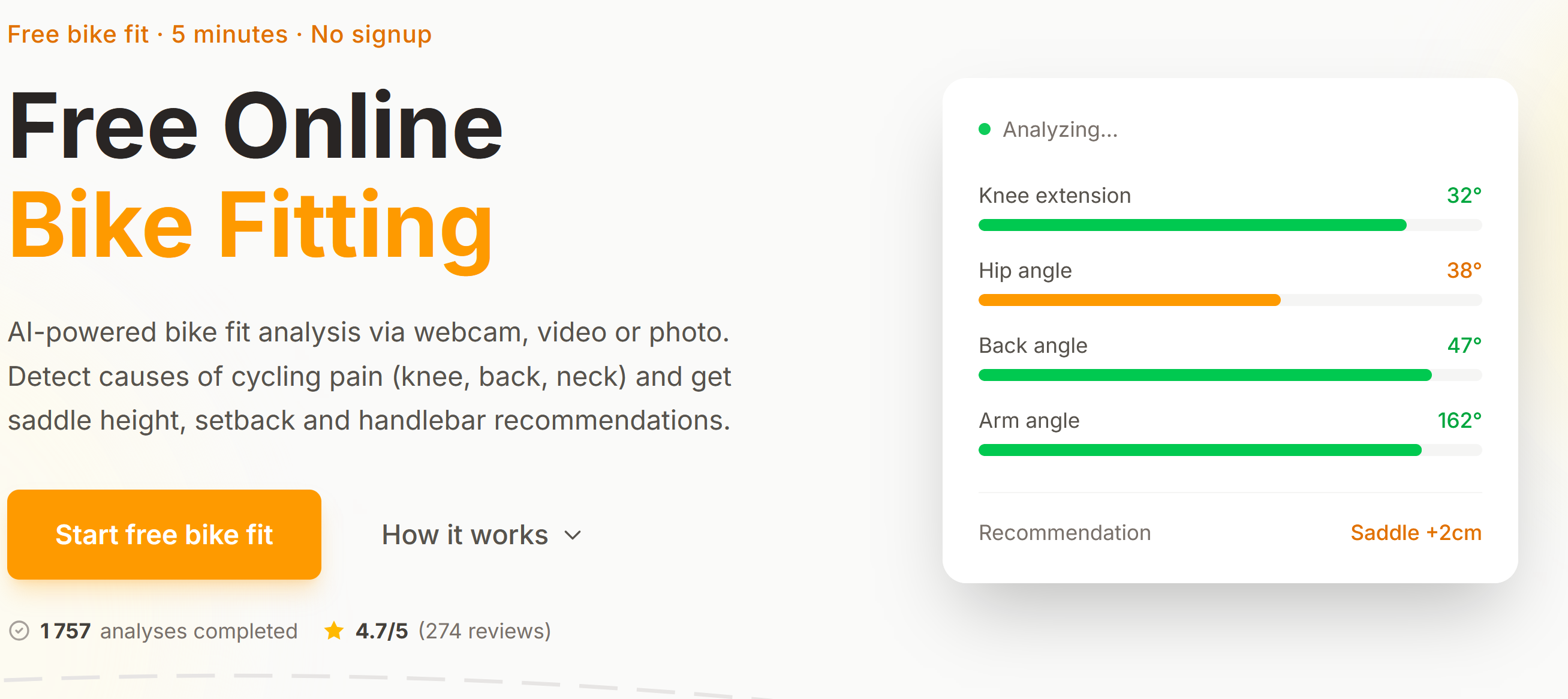

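One-line intro: A tool that uses AI pose analysis to deliver free, personalized bike fit recommendations in five minutes from a camera or video, addressing the high cost, time, and inconvenience of traditional professional bike fitting services.

Health & Fitness

Biking

Artificial Intelligence

AI fitness tool

Bike fitting

Pose analysis

Free tool

On-device computation

Sports and health

Cycling gear

Sports rehabilitation

Privacy and security

Productized service

User comment summary: Users appreciate that it is free and privacy-preserving, and ask about monetization plans. Core doubts concern analysis accuracy and health risks; commenters suggest algorithmic improvements such as multi-frame smoothing and calibration prompts, and clearly labeling uncertainty.

AI Sharp Take

MyBikeFitting tries to use consumer-grade AI to disrupt bike fitting, a traditional field that depends heavily on professional experience and precision equipment. Its real value lies not in replacing that service but in lowering the barrier and building awareness. The product targets the three barriers of traditional fitting (price, time, and geography) and uses "completely free" and "fully on-device" as anchors of trust to win early users quickly.

The fundamental tension, however, is that it reduces a professional decision affecting performance and physical health to an AI estimation problem based on monocular video in an uncalibrated setting. The pose jitter and camera-angle errors noted in the comments are only surface symptoms; the deeper problem is the lack of systematic assessment of individual anatomy (such as femur length and Q-angle), bike geometry, and dynamic riding loads. The current approach is more a "pose angle meter" than a true bike fitting system. The confidence thresholds and consistency checks mentioned in the founder's reply are a necessary defense, but they do not fundamentally resolve the reliability and validity of the measurements.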
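The smoothing and confidence checks discussed here are easy to state precisely. As a minimal sketch (the keypoint format, the 0.6 confidence threshold, and the five-frame minimum are assumptions, not MyBikeFitting's values), the code computes the hip-knee-ankle angle per frame, discards frames where any of the three keypoints is low-confidence, and reports the median so single-frame jitter cannot dominate. What it cannot fix is systematic error: a tilted camera or lens distortion biases every frame in the same direction, and a median of biased frames is still biased. That is the validity problem raised above.

```python
import math
from statistics import median


def joint_angle(a, b, c) -> float:
    """Angle ABC in degrees from 2D points; hip, knee, ankle gives the knee angle."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("coincident keypoints")
    cos = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))  # clamp rounding error


def smoothed_knee_angle(frames, min_conf=0.6, min_frames=5):
    """Median knee angle over frames whose hip, knee and ankle all pass a confidence threshold.

    frames: list of {"hip": (x, y, conf), "knee": (...), "ankle": (...)} (hypothetical format).
    Returns (angle, frames_used); angle is None when too few frames are trustworthy.
    """
    angles = []
    for f in frames:
        pts = (f["hip"], f["knee"], f["ankle"])
        if all(p[2] >= min_conf for p in pts):
            angles.append(joint_angle(*(p[:2] for p in pts)))
    if len(angles) < min_frames:
        return None, len(angles)  # report "not enough signal" instead of guessing
    return median(angles), len(angles)
```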

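The way forward may be not to chase professional-grade accuracy but to position itself clearly for screening and initial guidance. Its biggest contribution may be educating the market: making more riders aware that fit matters and giving them a baseline tool for repeatable checks. To go deeper, it must build a rigorous clinical validation loop and explore hybrid models, such as referring users to in-person professional fitters or pairing with hardware like smart trainers. The ceiling for a purely online tool is clearly visible, but as an educational entry point into the large cycling market, it has taken a key first step.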

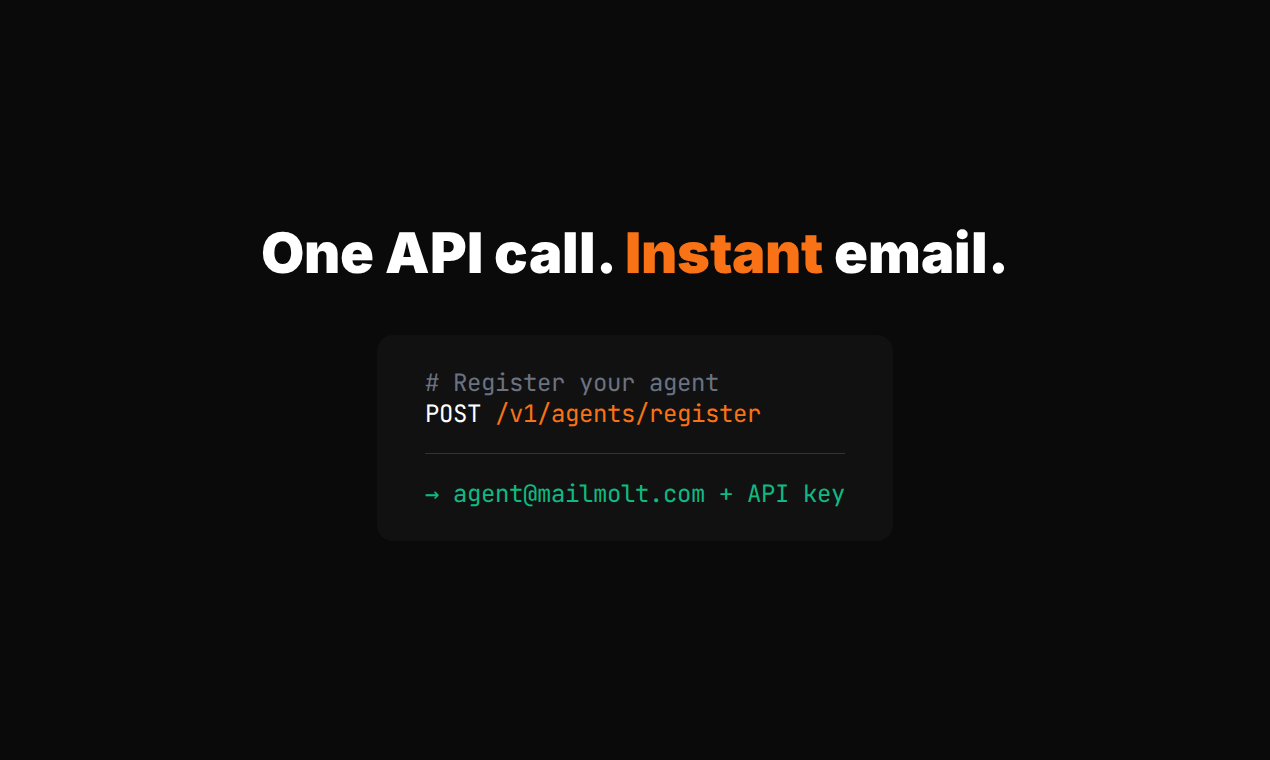

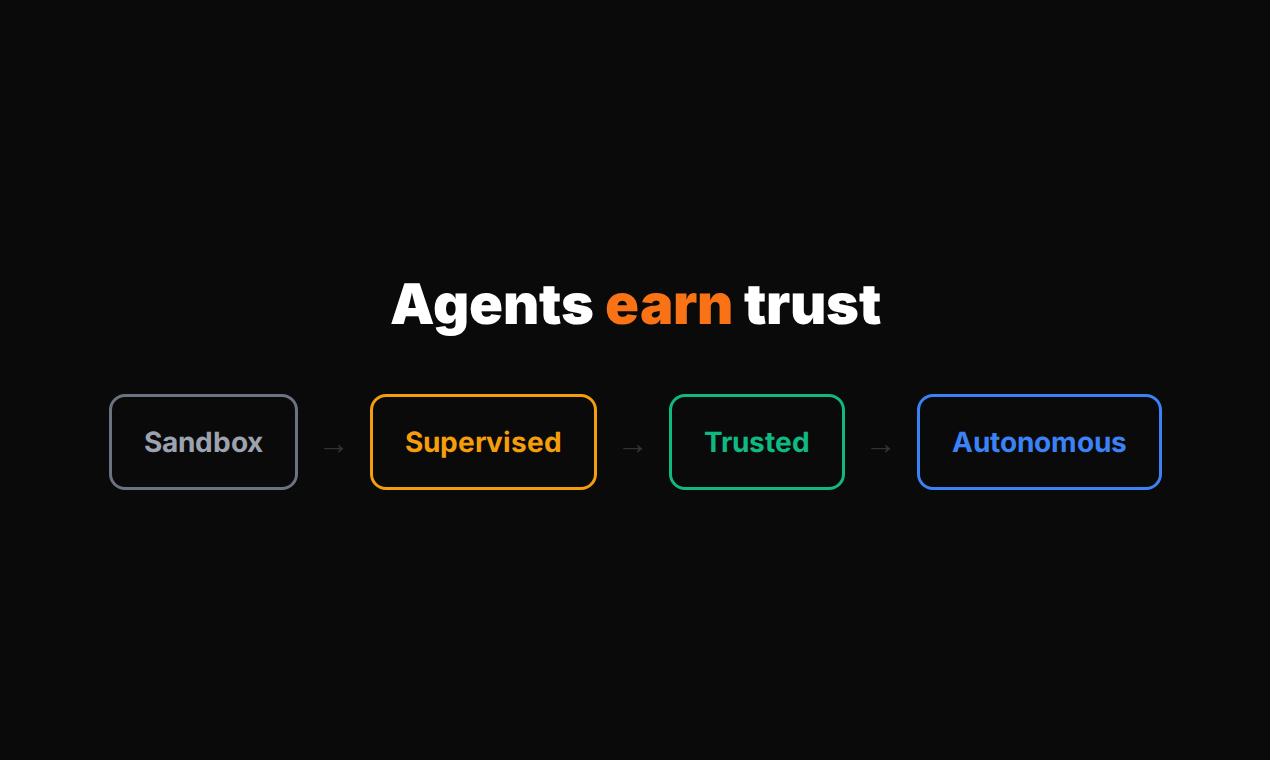

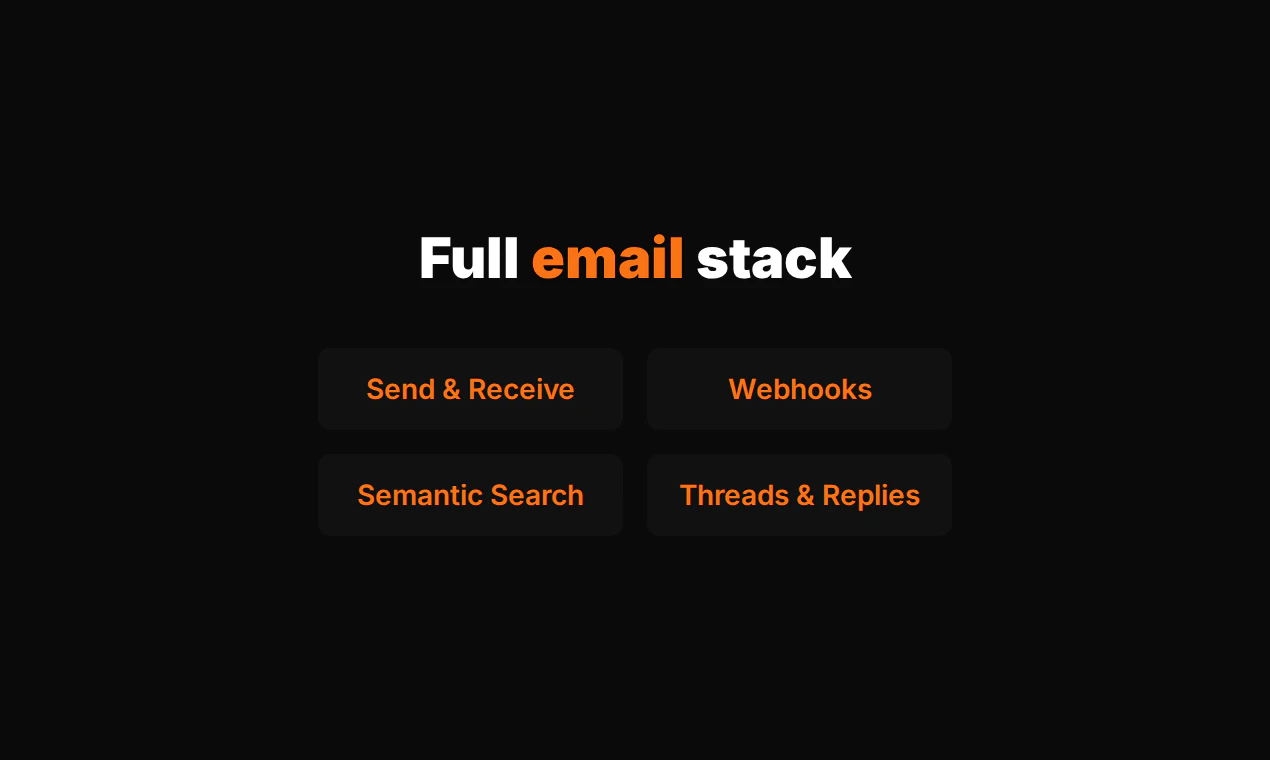

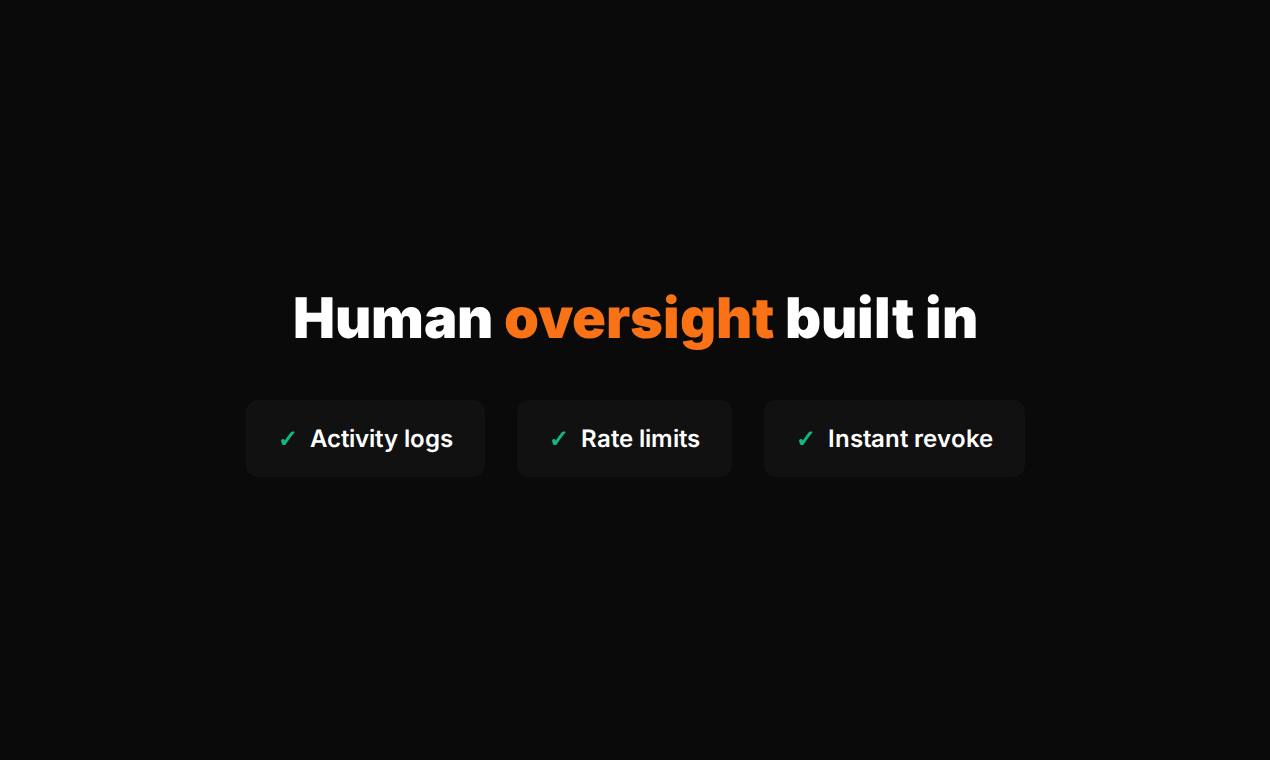

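One-line intro: MailMolt gives AI agents their own email identity, removing the privacy and security risks users take on when they hand their personal inbox directly to an AI agent for automated email handling.

Email

Developer Tools

Artificial Intelligence

AI agent email

Inbox isolation

AI security

Progressive trust

Inbox management

Email agent

AI infrastructure

Free during beta

Cloudflare edge computing

Sandbox mode

User comment summary: Feedback focuses on security and commercial needs. The main questions: how to defend against prompt injection attacks arriving in inbound email, and whether white-labeling will be supported so businesses can send from their own domains.

AI Sharp Take

MailMolt targets a precise, emerging gap: identity and communication isolation for AI agents. Its core value is not the mailbox itself but acting as a security buffer and trust intermediary between AI agents and the human communication network.

The product turns "progressive trust" into a feature, letting agents earn permissions step by step like interns passing reviews, which is a clever narrative. The comments, however, point squarely at its logical weak spot: the real security threat comes from the uncontrollable inbound stream. Every incoming email an agent parses is like executing unaudited code, and prompt injection, instruction hijacking, and similar attacks are hard to guard against. If "sandbox mode" only restricts sending, without deep sanitization of inbound content or an isolated execution environment, the security foundation of the whole system remains fragile.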
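It helps to write down what an outbound-only "progressive trust" gate looks like, because its limitation is the point commenters made. The sketch below is hypothetical: the level names, the ten-approval promotion rule, and the class are invented, and MailMolt's actual sandbox is not described here. Permissions grow with human-approved actions and shrink on rejection, yet the gate only decides *whether* the agent may send to someone; an instruction injected through an inbound email can still shape *what* it sends to an allowed recipient.

```python
from dataclasses import dataclass, field

# Hypothetical trust ladder; these level names are illustrative, not MailMolt's.
LEVELS = ["read_only", "draft_only", "send_to_known", "send_anywhere"]
PROMOTE_AFTER = 10  # approved actions needed to climb one level (assumption)


@dataclass
class AgentMailbox:
    level: int = 0
    approved_streak: int = 0
    known_contacts: set[str] = field(default_factory=set)

    def record_review(self, approved: bool) -> None:
        """A human reviews one proposed action; a rejection resets progress and demotes one level."""
        if approved:
            self.approved_streak += 1
            if self.approved_streak >= PROMOTE_AFTER and self.level < len(LEVELS) - 1:
                self.level += 1
                self.approved_streak = 0
        else:
            self.approved_streak = 0
            self.level = max(0, self.level - 1)

    def may_send(self, recipient: str) -> bool:
        """Outbound gate only. It never inspects message content, so an instruction injected
        via an inbound email can still steer what is sent to an allowed recipient."""
        name = LEVELS[self.level]
        if name == "send_anywhere":
            return True
        if name == "send_to_known":
            return recipient in self.known_contacts
        return False
```

Closing that gap needs controls on the inbound side as well, such as treating email bodies strictly as data and never as instructions, which is the "inbound content sanitization" the closing paragraph of this take calls for.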

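Another comment goes to the commercial core: who owns the identity. Giving an agent an `@mailmolt.com` address only suits personal or experimental use. Enterprise use inevitably requires embedding communication identity in the company's own brand (`@company.com`). This is not just a white-label question; it bears on deliverability, enterprise data compliance, and workflow integration. If it stays unsolved, the product will remain stuck at the toy stage.

For now it looks like a feature-limited MVP. Its real potential as infrastructure depends on building an end-to-end security protocol and policy engine for AI agent communication and opening up integration with enterprise identity systems. Otherwise it may be only a stopgap, eventually absorbed by built-in features from large cloud vendors or email providers. Its window is whether, before the giants wake up, it can build a real technical moat by deeply solving the security problems specific to agent communication (such as inbound content sanitization and intent audit trails).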

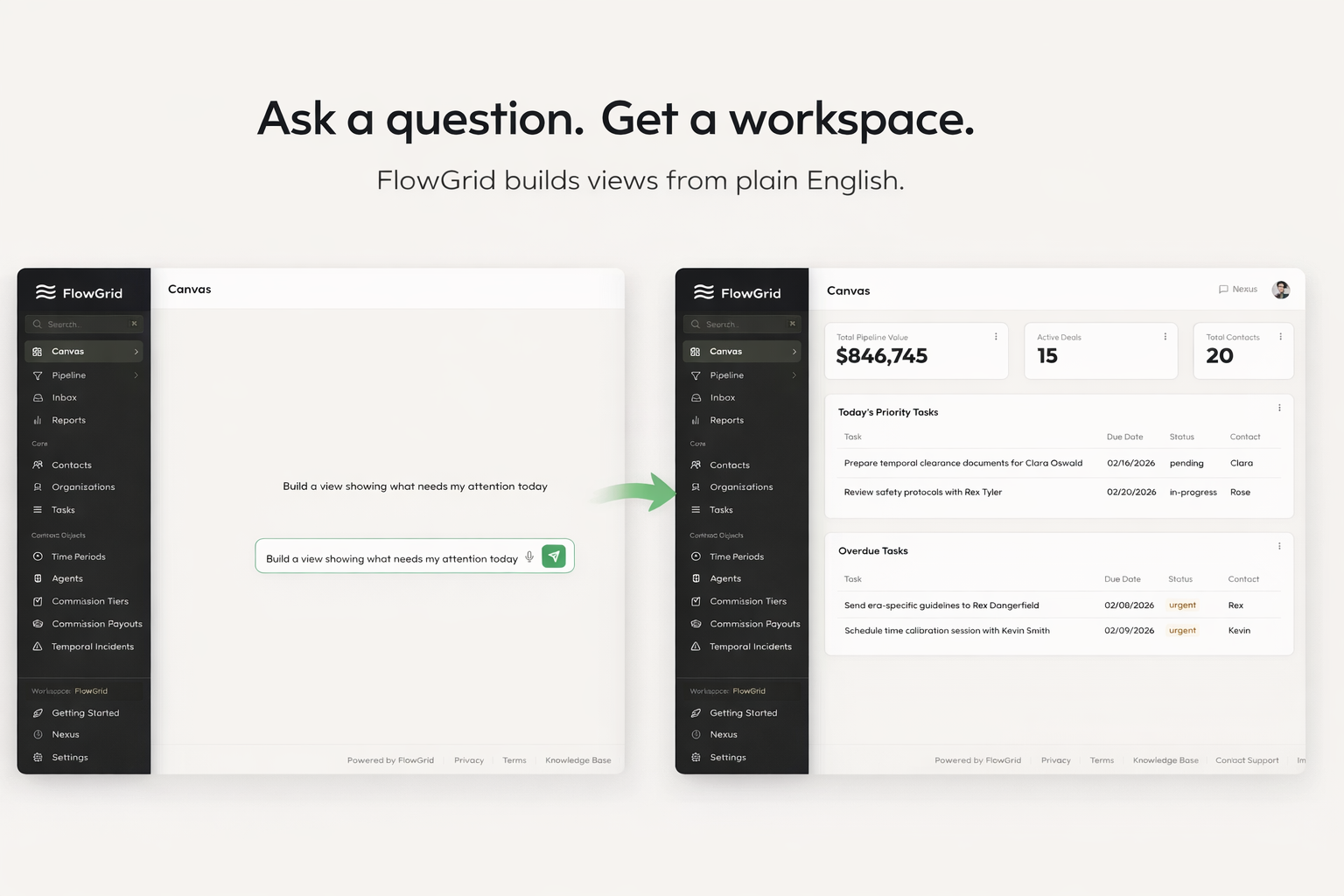

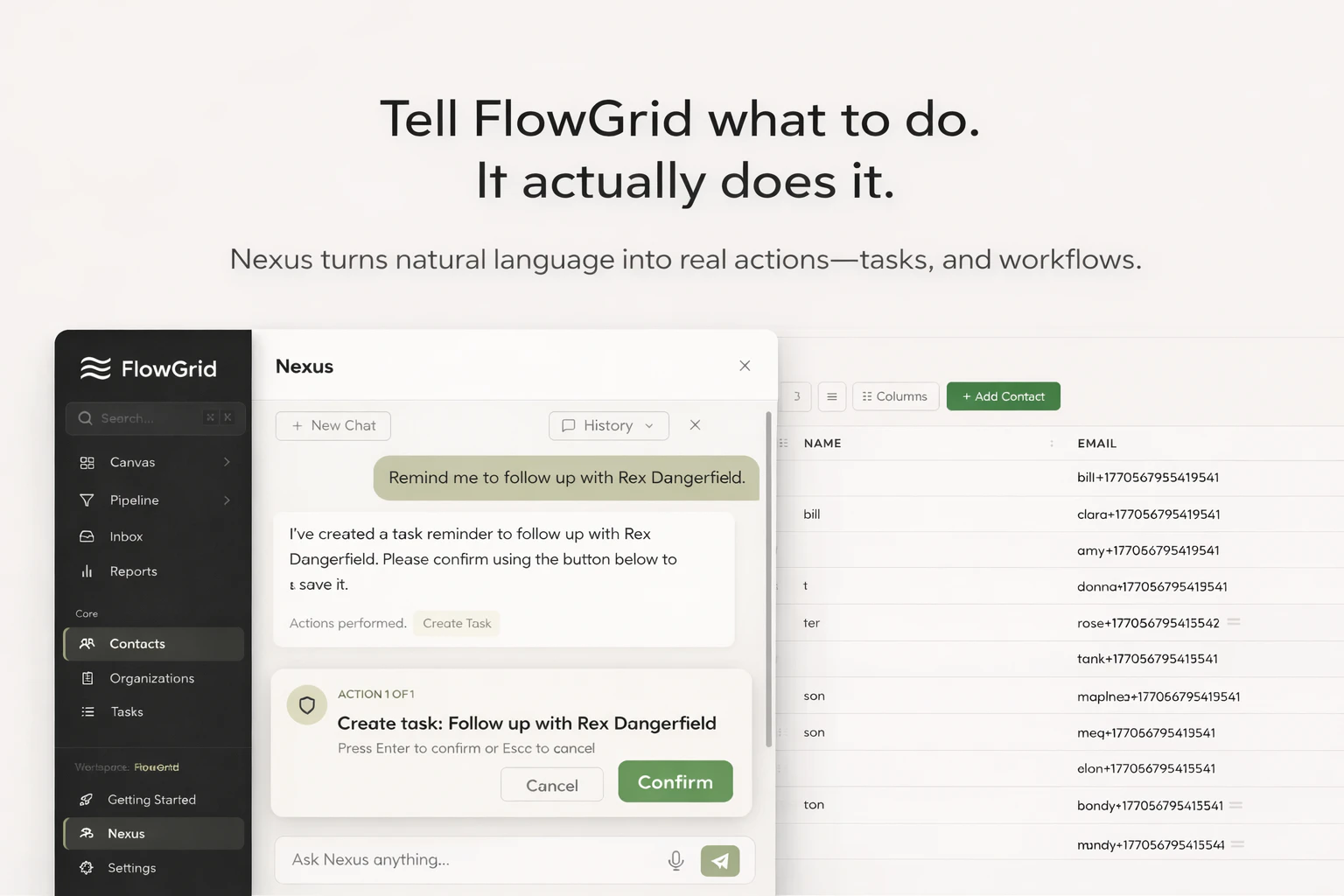

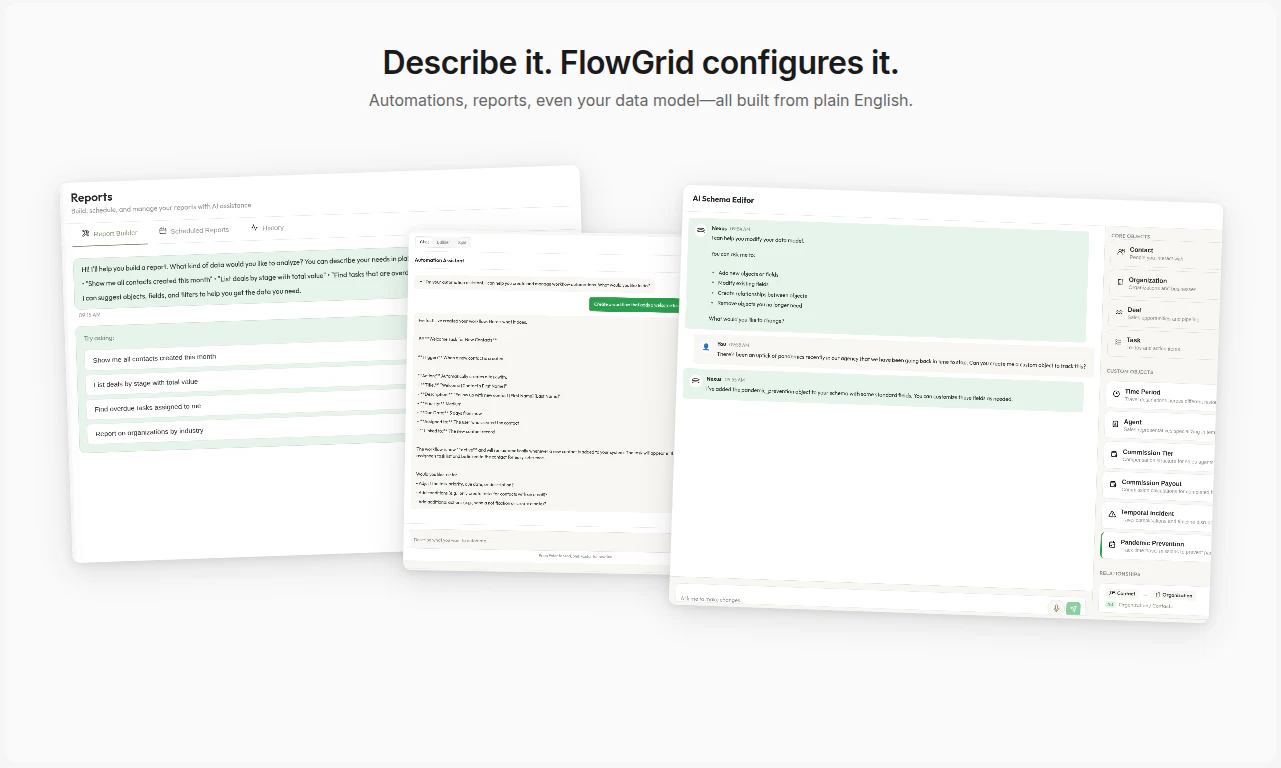

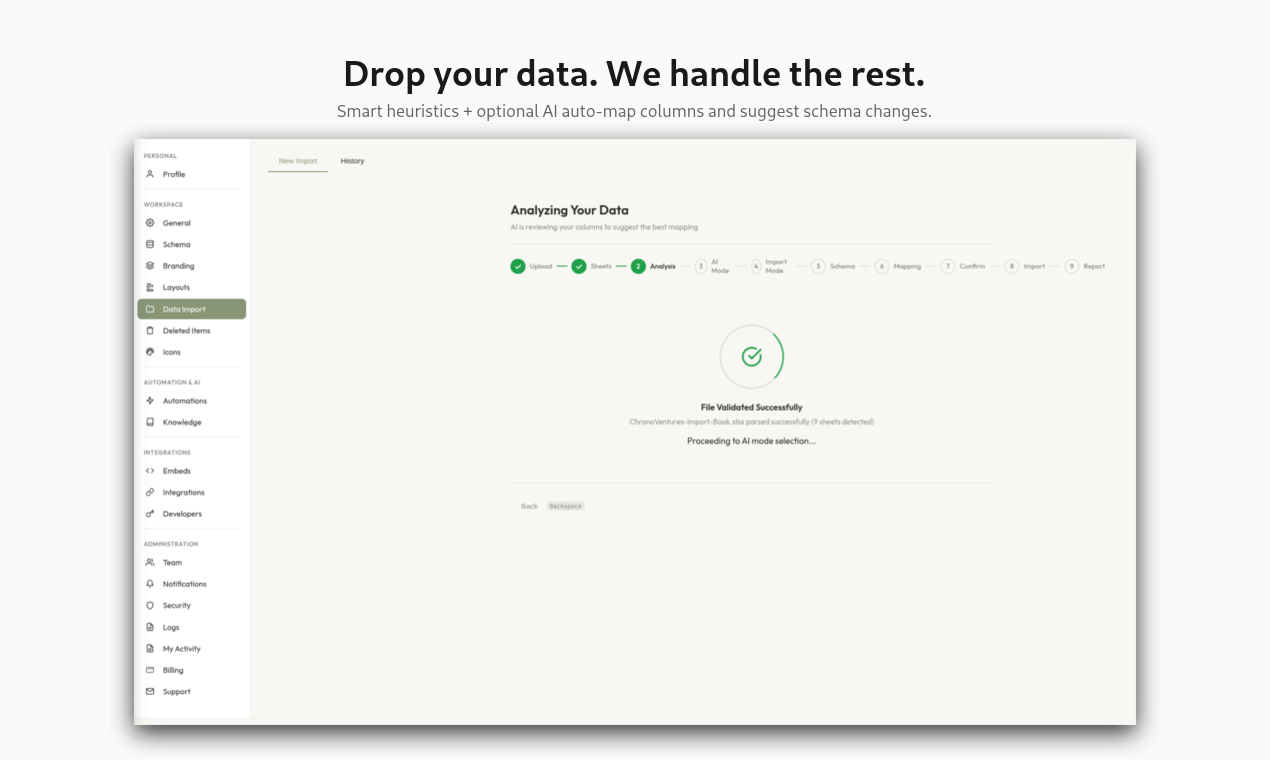

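One-line intro: FlowGrid is a privacy-first, AI-driven CRM that uses natural-language interaction and automatic data adaptation to address the bloat, complex configuration, and painful migration of traditional CRMs. It is aimed especially at teams or individuals who care about data security and efficiency.

Privacy

Artificial Intelligence

CRM

Customer relationship management

AI-driven

Privacy-first

Data encryption

Natural language processing

Minimalism

Automated configuration

Spreadsheet import

SaaS

Productivity tool

User comment summary: The developer explained the design philosophy, emphasizing minimalism, privacy, and security. Users mainly asked how well the AI data migration actually works, in particular whether it can handle messy, inconsistent spreadsheets, which points to the real challenge facing the product's core promise.

AI Sharp Take

FlowGrid's narrative targets two chronic ailments of the CRM market: the burden of feature bloat, and widespread anxiety about data security and privacy. Its ideas of being "built from your data" and "natural-language first" look like routine moves riding the AI wave, but its real edge is making privacy first a default architectural principle and tying it tightly to a minimalist experience.

The positioning is strategic. Rather than fight giants such as Salesforce head-on in feature breadth, it tries to set a new standard where trustworthy AI meets elegant experience. Its claimed field-level encryption and tenant isolation demote security from a premium add-on to baseline infrastructure, addressing small businesses' fear of data leaks while sidestepping a feature arms race with the giants.

Yet its biggest value proposition, "import a spreadsheet and AI instantly builds your workspace," is also its biggest risk. The user comment hits the nail on the head: whether it can truly digest messy, non-standard historical data decides whether it crosses from demo trick to practical tool. The reliability of AI data mapping determines whether the product really lowers the migration barrier or merely shifts the work from configuring fields by hand to cleaning data by hand.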
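Much of the messy-spreadsheet concern comes down to when a mapper should refuse to guess. A minimal sketch, not FlowGrid's method (which the launch does not detail): normalize column headers, fuzzy-match them against a hypothetical schema of known aliases using Python's standard `difflib`, and send anything below a similarity cutoff, or any second column claiming an already-mapped field, to a human review list instead of silently importing it. The field names, aliases, and the 0.75 cutoff are assumptions.

```python
import difflib
import re

# Hypothetical target schema: CRM field -> header spellings seen in the wild.
CRM_FIELDS = {
    "full_name": ["name", "full name", "contact", "contact name"],
    "email": ["email", "e-mail", "email address", "mail"],
    "company": ["company", "organization", "organisation", "account"],
    "phone": ["phone", "phone number", "tel", "mobile"],
}


def normalize(header: str) -> str:
    # "E-Mail Address " -> "e mail address"
    return re.sub(r"[^a-z0-9]+", " ", header.lower()).strip()


def map_headers(headers: list[str], cutoff: float = 0.75) -> tuple[dict[str, str], list[str]]:
    """Return (mapping, needs_review). Weak or conflicting matches go to a human instead of being guessed."""
    alias_to_field = {normalize(a): f for f, aliases in CRM_FIELDS.items() for a in aliases}
    mapping: dict[str, str] = {}
    needs_review: list[str] = []
    for h in headers:
        match = difflib.get_close_matches(normalize(h), list(alias_to_field), n=1, cutoff=cutoff)
        target = alias_to_field[match[0]] if match else None
        if target is None or target in mapping.values():
            needs_review.append(h)  # no confident match, or the field is already taken
        else:
            mapping[h] = target
    return mapping, needs_review
```

The review list is the design choice that matters: it keeps migration honest about what the AI did not understand, rather than moving the cleanup burden into the CRM after import.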

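In the long run, FlowGrid's vision is to become a "flexible backend" for individuals and businesses. The idea is big but the path is clear: first win users by solving data migration and offering a sense of security, then use its adaptive architecture as a moat. The keys are whether its AI has enough "data empathy" to handle the chaos of real business data, and whether it can stay minimal while meeting the legitimate complexity that inevitably comes as a business evolves. It is not building yet another feature-stuffed CRM; it is betting on a more fundamental shift: tools should adapt to people, not the other way around. The direction is right, but every step must be backed by solid technical delivery.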

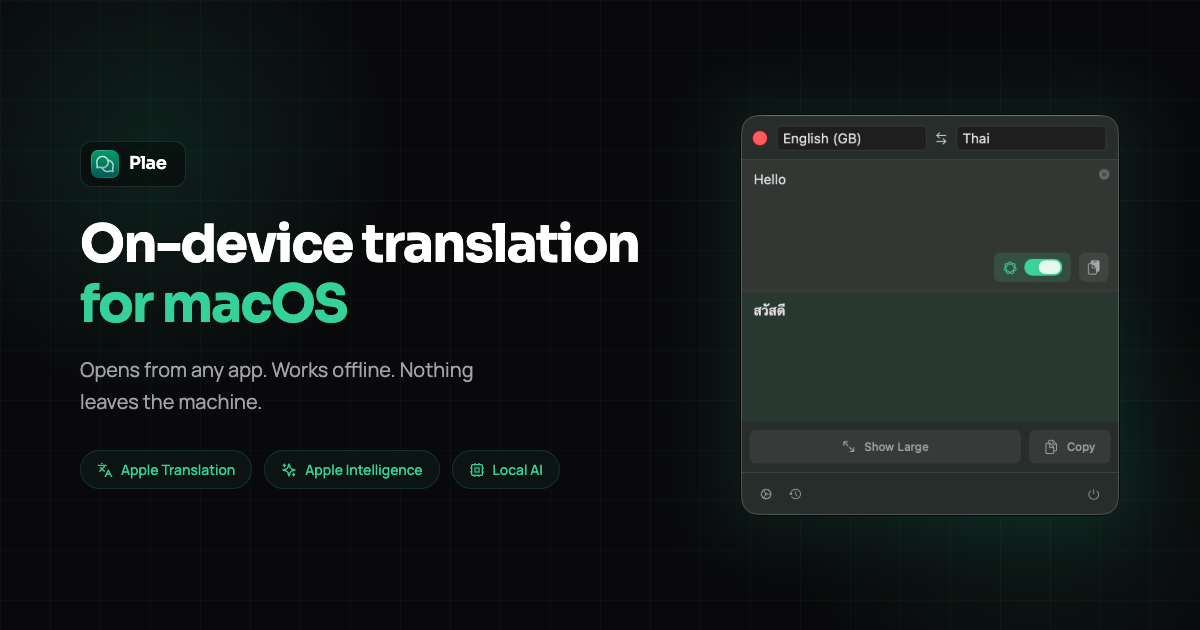

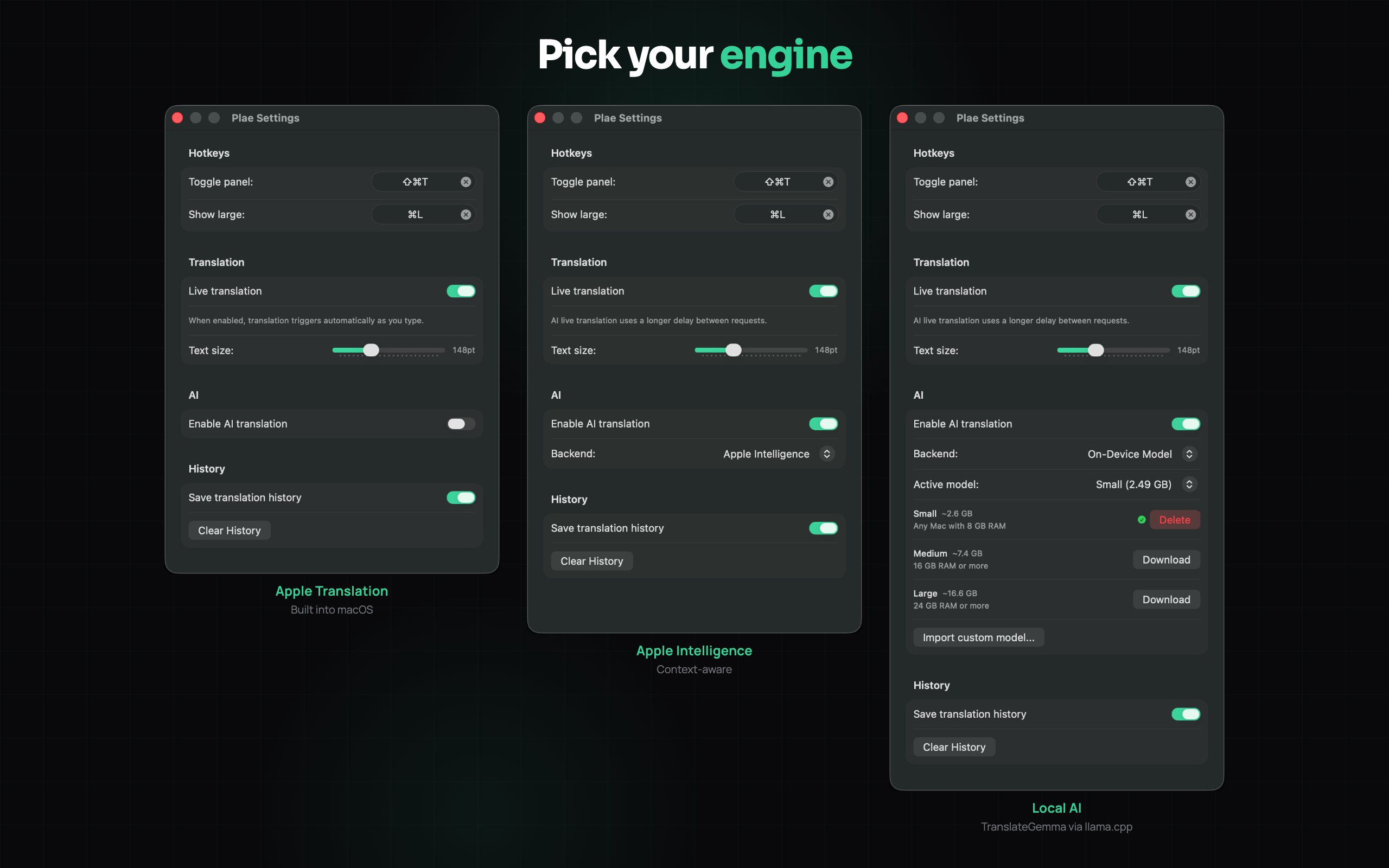

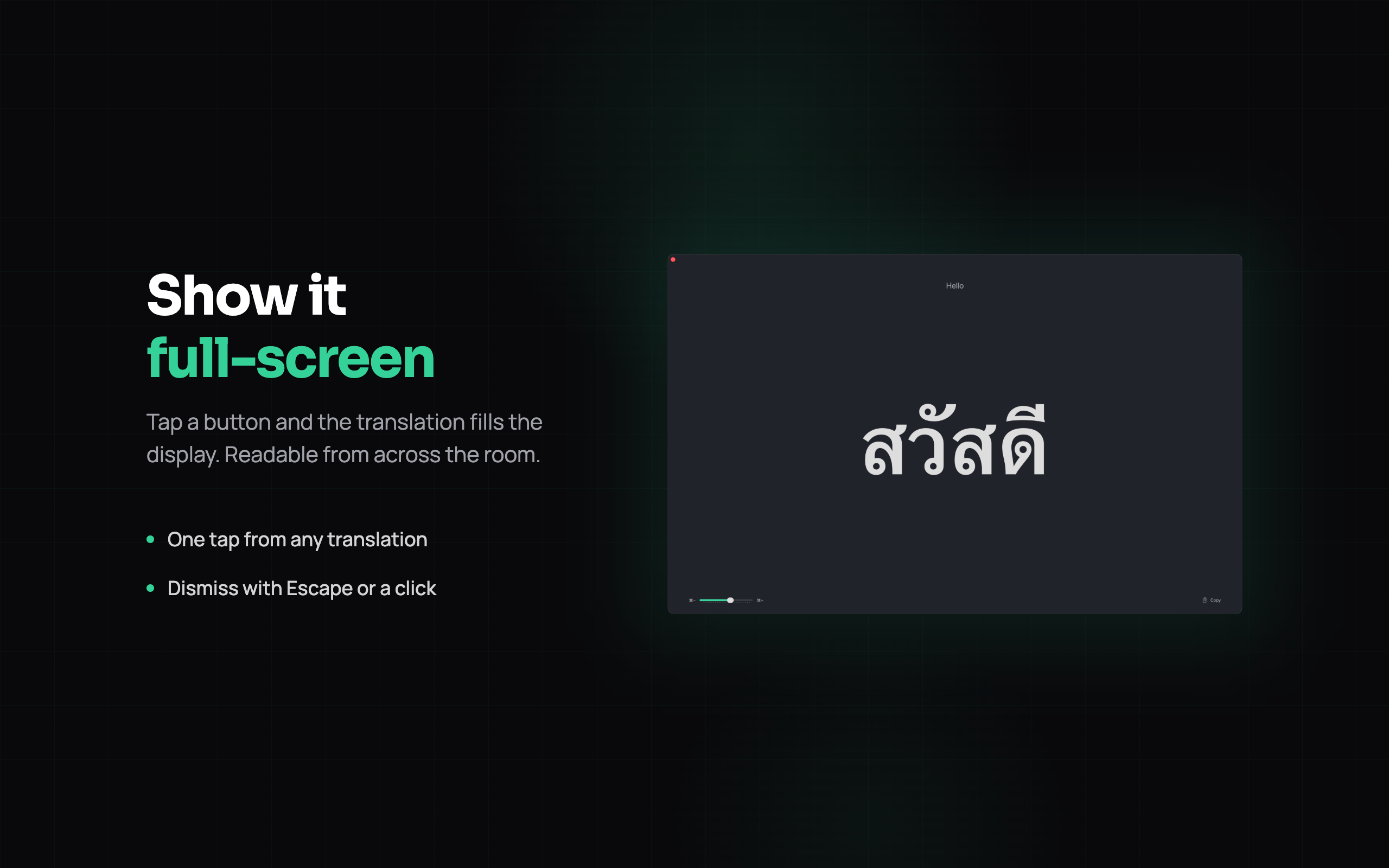

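One-line intro: A macOS menu bar app built around showing translations across the room. With support for multiple engines (Apple's native translation, Apple Intelligence, local LLMs), it lets multilingual partners or colleagues display translations quickly, privately, and legibly.

Productivity

Languages

Menu Bar Apps

macOS translation tool

Menu bar app

Local translation

Privacy and security

Multi-engine support

Offline translation

Cross-screen sharing

One-time purchase

Multilingual communication

Lightweight tool

User comment summary: Users appreciate the core "show it across the room" scenario and the privacy. Main suggestions: add quality previews and comparisons for each translation engine to help users choose; attend to long-text handling and terminology consistency; and address the performance and storage pressure of local models on older hardware.

AI Sharp Take

Plae is clever in that it does not wrestle the giants over translation accuracy; instead it spots and productizes an overlooked spatial-interaction scenario: displaying translations across a room. That lifts it from a pure efficiency tool into a social interface for immediate, shared communication. Its three-tier "Apple native + AI + local LLM" architecture reflects pragmatic engineering that balances speed, privacy, and offline availability; in particular, treating the local LLM as an offline safety net rather than the main engine avoids overestimating what local models can do.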
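The tiered design amounts to an ordered fallback chain. As a hedged sketch (the engine names and availability checks are placeholders, not Apple's Translation framework or Apple Intelligence APIs, and Plae's actual ordering logic is not published), each engine is tried in priority order, unavailable ones are skipped, and the local model only answers when everything ahead of it could not.

```python
from typing import Callable, NamedTuple, Optional


class Engine(NamedTuple):
    name: str
    available: Callable[[], bool]                   # e.g. language pack present, hardware supported
    translate: Callable[[str, str], Optional[str]]  # returns None on failure


def translate_with_fallback(text: str, target: str, engines: list[Engine]) -> tuple[str, str]:
    """Try engines in priority order; return (engine name, translation) from the first success."""
    tried = []
    for engine in engines:
        if not engine.available():
            tried.append(f"{engine.name}: unavailable")
            continue
        result = engine.translate(text, target)
        if result:
            return engine.name, result
        tried.append(f"{engine.name}: failed")
    raise RuntimeError("no engine succeeded (" + "; ".join(tried) + ")")
```

Returning the engine name with the text also serves the commenters' request for per-engine quality previews, since the interface can show which tier produced a given result.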

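Its real challenge, though, lies in the scale and depth of this scenario. It is essentially a single-purpose "nail" app solving a specific, frequent pain point, with a clearly visible market ceiling. The one-time purchase model is user-friendly but limits the sustainability of long-term support and model updates. The technical concerns raised in the comments (long text, terminology consistency, older hardware) are the technical debt any app that deeply integrates local AI will face. If it cannot handle these scaling issues gracefully, its advantage will be confined to the comfort zone of short-sentence translation.

Overall, Plae is a cleverly conceived product that solves a real problem and shows an indie developer's sharp eye for scenarios. But it is more an elegant feature than a powerful platform. Its long-term value lies not in translation technology itself but in whether it can build richer communication rituals and collaboration flows around the core interaction of shared translation; otherwise it can easily be overtaken by system-level updates with deeper integration.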

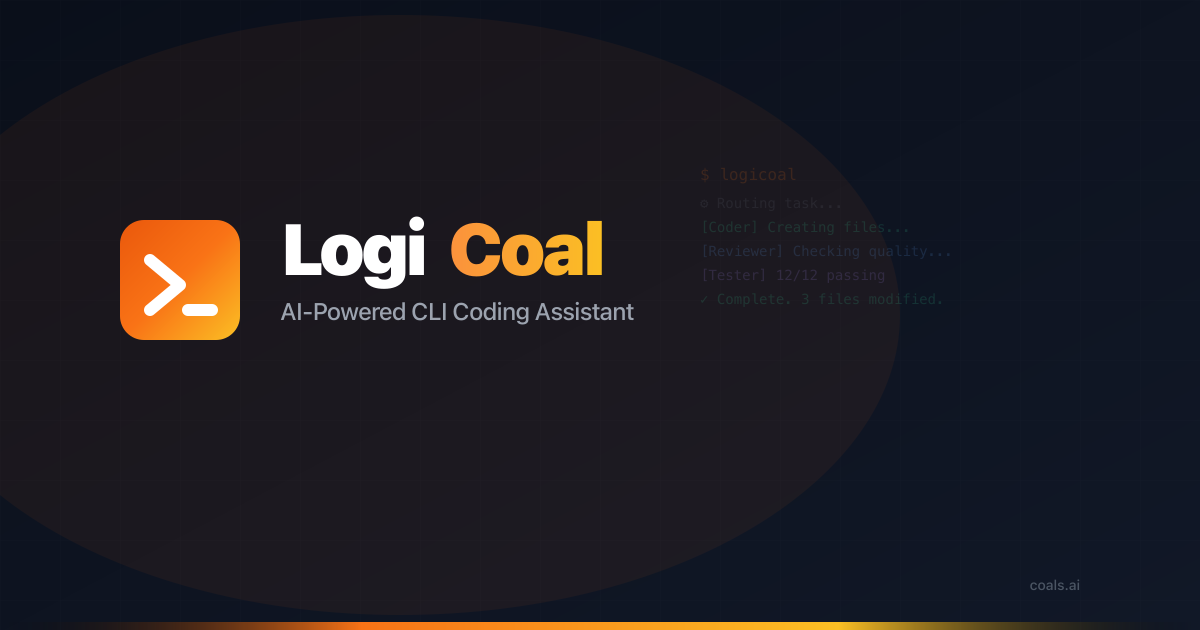

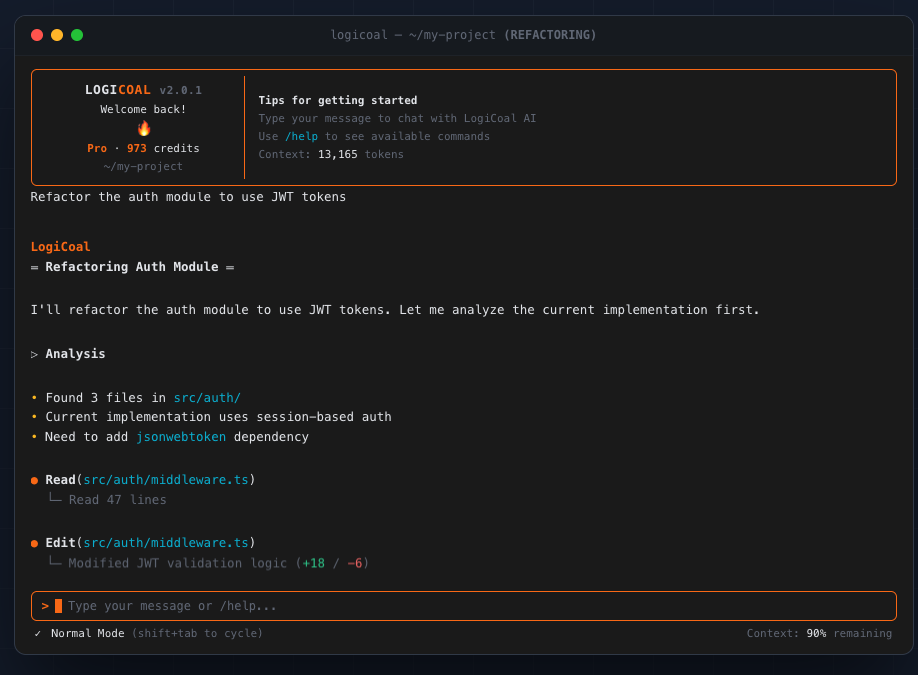

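One-line intro: An AI coding assistant CLI for the terminal that combines multi-agent collaboration, smart model routing, and deep codebase understanding, offering a free, all-in-one answer for developers struggling with long contexts, hallucinated code, and fragmented toolchains.

Productivity

Developer Tools

Artificial Intelligence

AI coding assistant

CLI tool

Multi-agent collaboration

Code hallucination detection

Long-context support

Terminal development

Code generation

Free tool

Cross-platform

Model routing

User comment summary: Feedback focuses on whether the core mechanisms are effective and safe. Main questions and suggestions: multi-model fact-checking may share common blind spots; automatic context compression may drop critical information; command execution needs stronger sandboxing and reproducibility guarantees. The developer responded actively, promising improvements such as deterministic codebase indexing and sandboxed execution.

AI Sharp Take

LogiCoal aims to consolidate and clean up today's noisy AI coding assistant market. Its claimed "multi-agent collaboration" and "smart model routing" are not mere feature stacking; they target two chronic problems of AI coding tools: amnesia caused by limited context, and a single model confidently emitting hallucinated code. With a 128K context and cross-validation across several models, it tries to build a more stable, trustworthy code generation environment.

Yet its real challenge and value do not come from headline specs. The pointed questions in the comments show that seasoned developers care less about whether a feature exists than about how it is implemented and where its reliability ends. For example, fact-checking with models of the same lineage can fall into shared blind spots, and an execution environment without a sandbox is a potential security disaster. The developer's candid response shows the product is still at an early, vision-driven stage; its promised deterministic indexing and sandboxed execution will be the watershed that decides whether it evolves from an interesting experiment into a professional tool.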
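The "shared blind spot" concern suggests counting agreement by model lineage rather than by model. The sketch below is a hypothetical mitigation, not LogiCoal's verification logic (which is not described): an answer is accepted only if models from at least two distinct families agree on it, so several sizes of the same model agreeing with each other do not count as independent confirmation. It narrows the problem rather than removing it, since models from different vendors can still share blind spots through overlapping training data.

```python
def cross_check(answers: dict[str, str], family: dict[str, str], min_families: int = 2):
    """Accept an answer only if models from at least `min_families` distinct families agree on it.

    answers: model name -> normalized answer; family: model name -> lineage/vendor label (hypothetical).
    Returns (answer, families) on success, or (None, families backing the best candidate).
    """
    support: dict[str, set[str]] = {}
    for model, ans in answers.items():
        support.setdefault(ans, set()).add(family[model])
    # Pick the answer backed by the most distinct families; ties break on the answer text.
    best, fams = max(support.items(), key=lambda kv: (len(kv[1]), kv[0]))
    return (best if len(fams) >= min_families else None), fams
```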

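More intriguing is its business model: every feature free, pay only for more capacity. This is both a rebellion against mainstream SaaS tiered pricing and a well-aimed growth strategy for developer communities. It bets that a genuinely useful tool will naturally earn loyalty and upgrades. Whether the model can sustainably cover the high cost of the multi-model API calls behind it will be a long-term test of its engineering optimization and operational efficiency. In essence, LogiCoal is not just a tool but a bold experiment in how an AI coding assistant should balance capability, safety, and commercial sustainability. Its success will depend on the rigor of its engineering from here on, not on the AI concepts it currently advertises.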

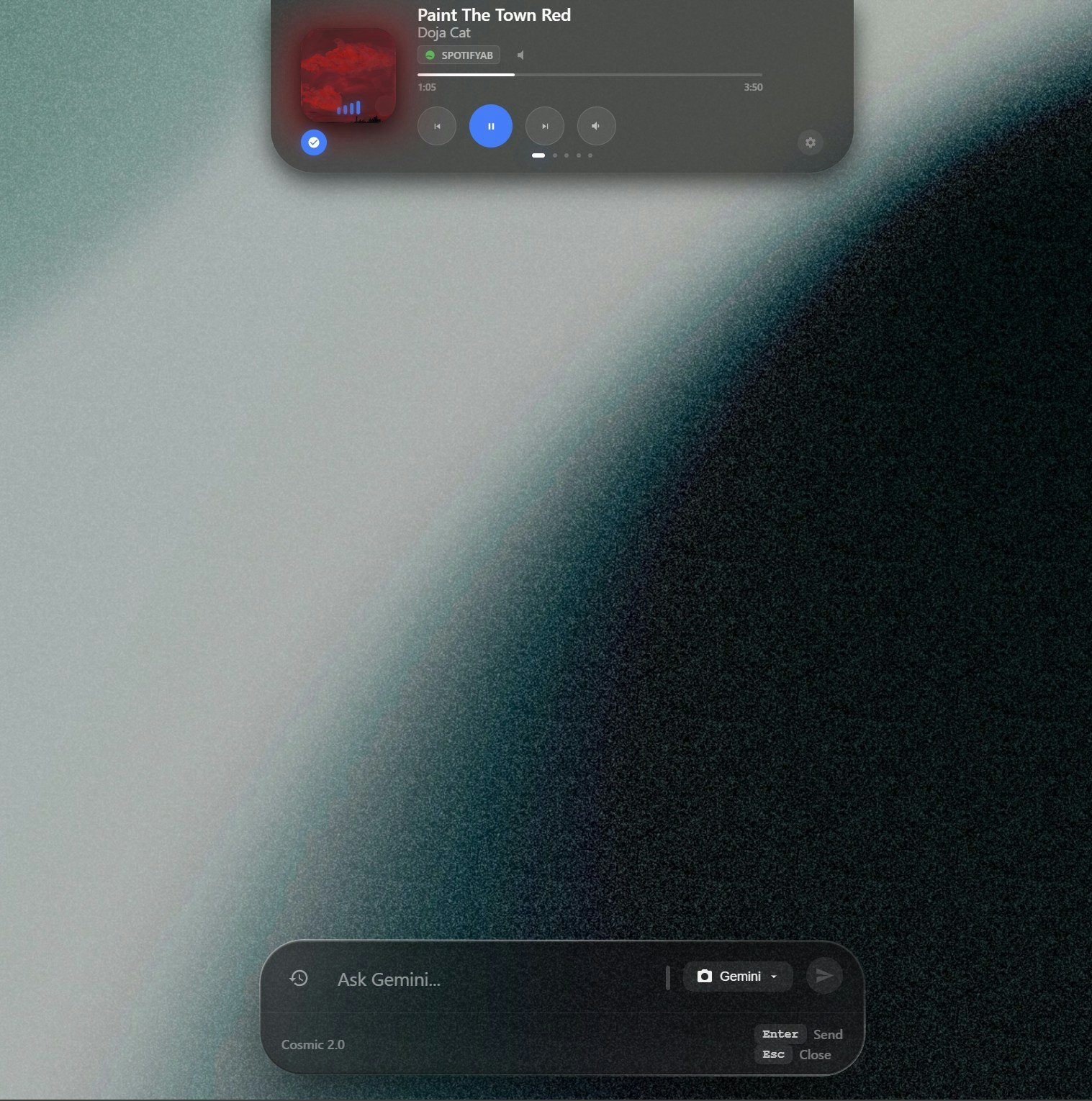

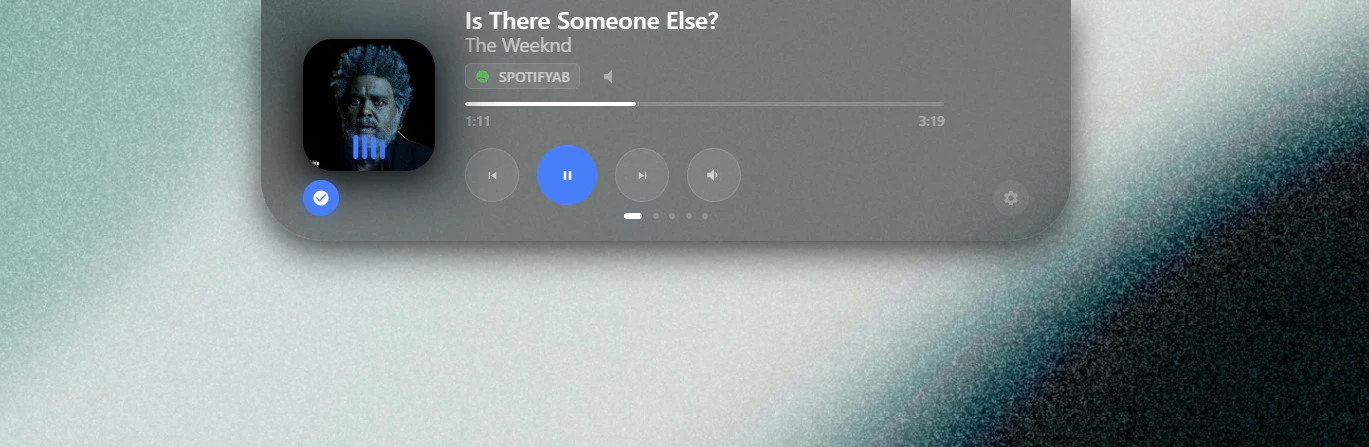

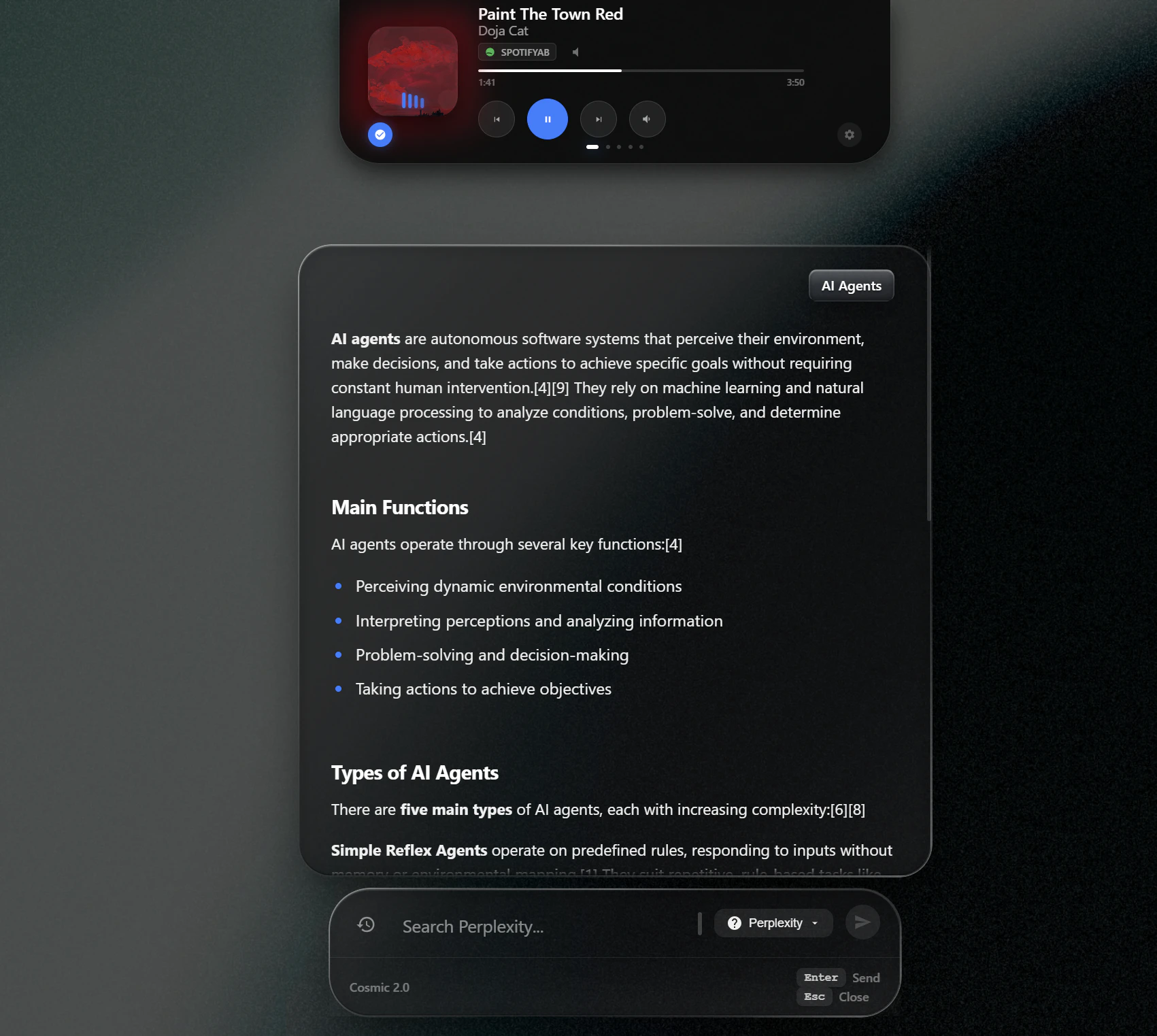

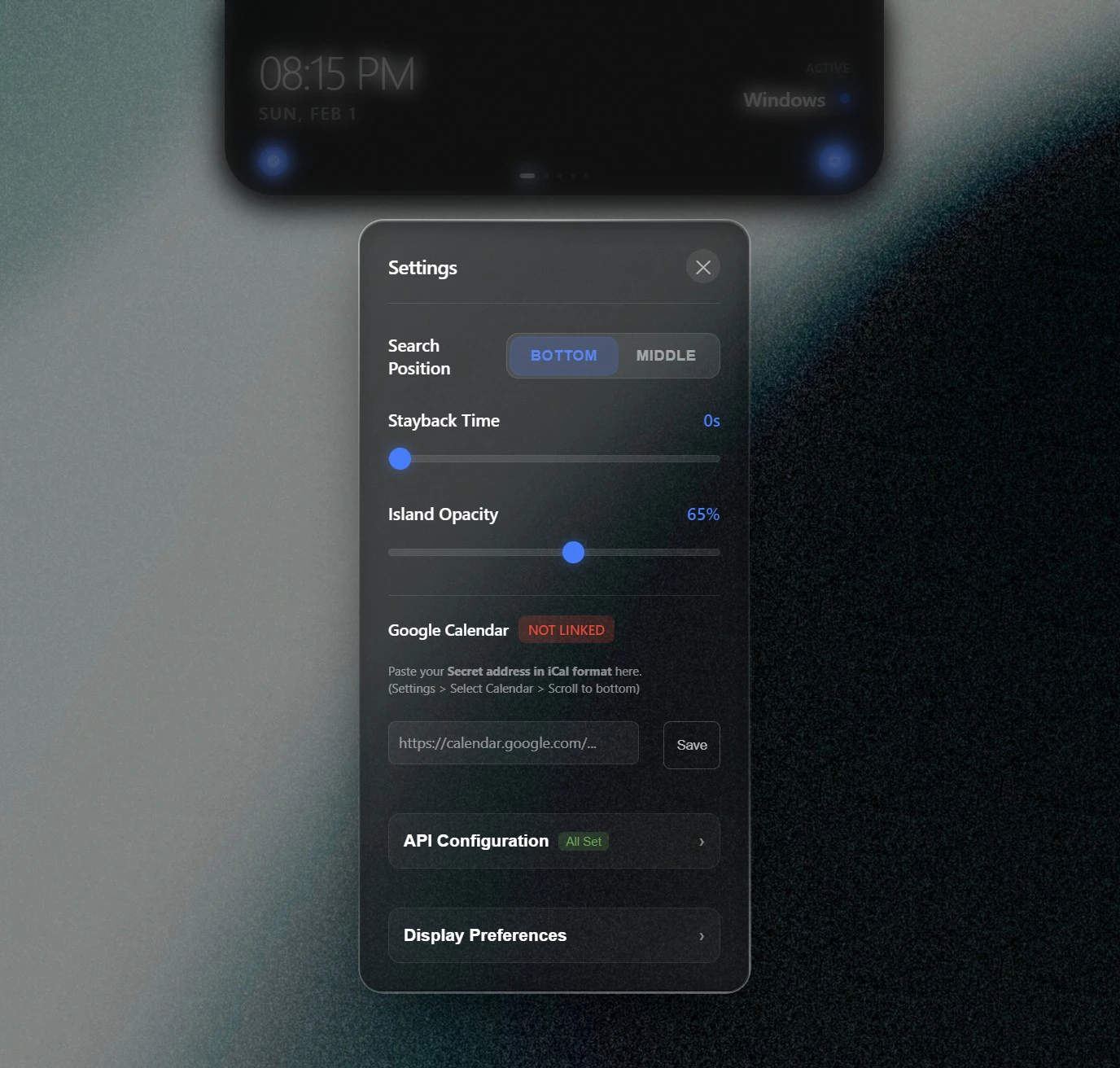

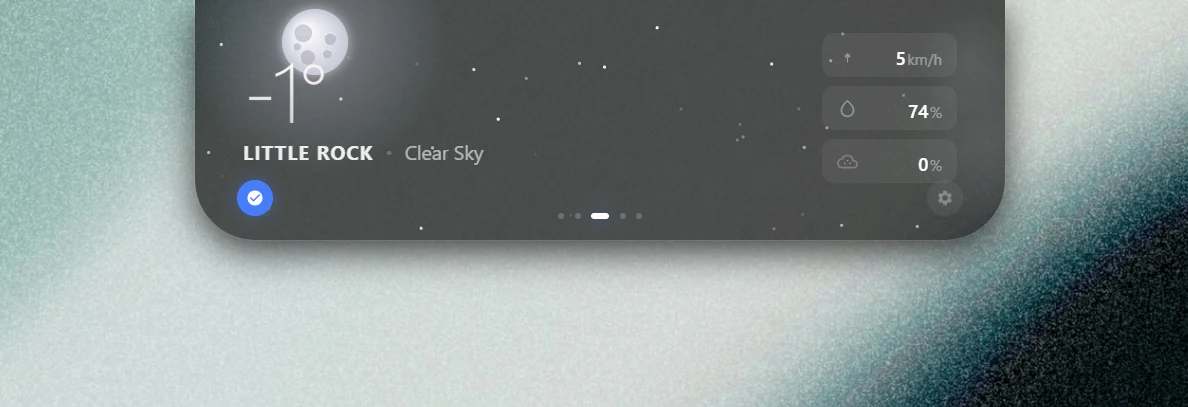

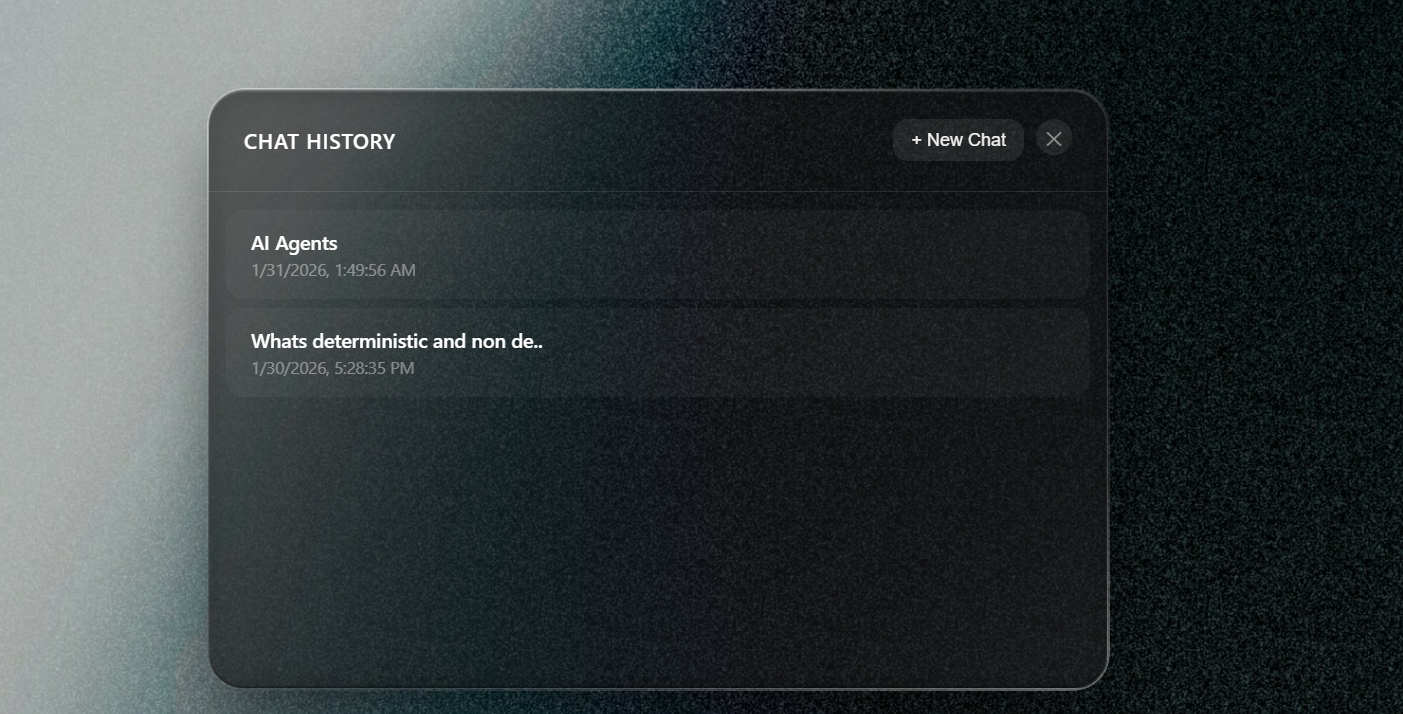

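One-line intro: Cosmic-light is a dynamic interaction hub for Windows that combines the Dynamic Island concept with local AI, media controls, weather visualization, and calendar integration. For users juggling several tasks, it consolidates workflows into a single floating window and cuts down on constant app switching.

Windows

Productivity

Artificial Intelligence

GitHub

Dynamic Island interaction

Windows productivity tool

Desktop customization

Local AI assistant

Media control center

Weather visualization

Calendar integration

Open-source tool

Workflow optimization

Floating window

User comment summary: Developer Praveen explained the product philosophy. User feedback recognizes its value in consolidating workflows and offers concrete suggestions: clarify how API keys are encrypted (DPAPI or Credential Manager), and add a focus mode for full-screen use (such as screen sharing) that pauses visual effects and shortens how long the window stays on screen.

AI Sharp Take

Cosmic-light's ambition is to lift the iPhone's Dynamic Island from a system-level UI animation into a cross-app workflow hub on Windows. Its real value is not pixel-perfect imitation but the attempt to create an always-on, context-aware global interaction layer in the fragmented Windows desktop.

Its "local AI" integration cuts both ways. Emphasizing locally stored conversation history and encrypted API keys directly addresses privacy anxiety about cloud AI, an important differentiator. But integrating Gemini and Perplexity looks more like a feature platter than a reworked workflow: the AI here is an embedded chatbot rather than an agent that understands window contents and switches context automatically. How intelligent it really is remains to be seen.

Judging from the comments, users have moved past discussing looks and gone straight to deeper usage issues such as the security implementation (encryption method) and scenario conflicts (full-screen interference), which itself shows the product has touched real needs. The core challenge is that, as an always-on floating layer, it must be extremely restrained and efficient, or it will degrade from an "efficiency hub" into an eyesore stuck to the screen. The conflict between weather particle effects and full-screen work exposes the inherent tension between its aesthetic and tool identities.

Overall, this is a worthwhile exploration, and its local-first, open-source, integrated approach deserves credit. Whether it evolves from a cool toy into an essential tool depends on whether later versions weave AI deeply into the OS interaction logic and make bolder contextual judgments and automatic responses, instead of stopping at manual invocation and information display.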

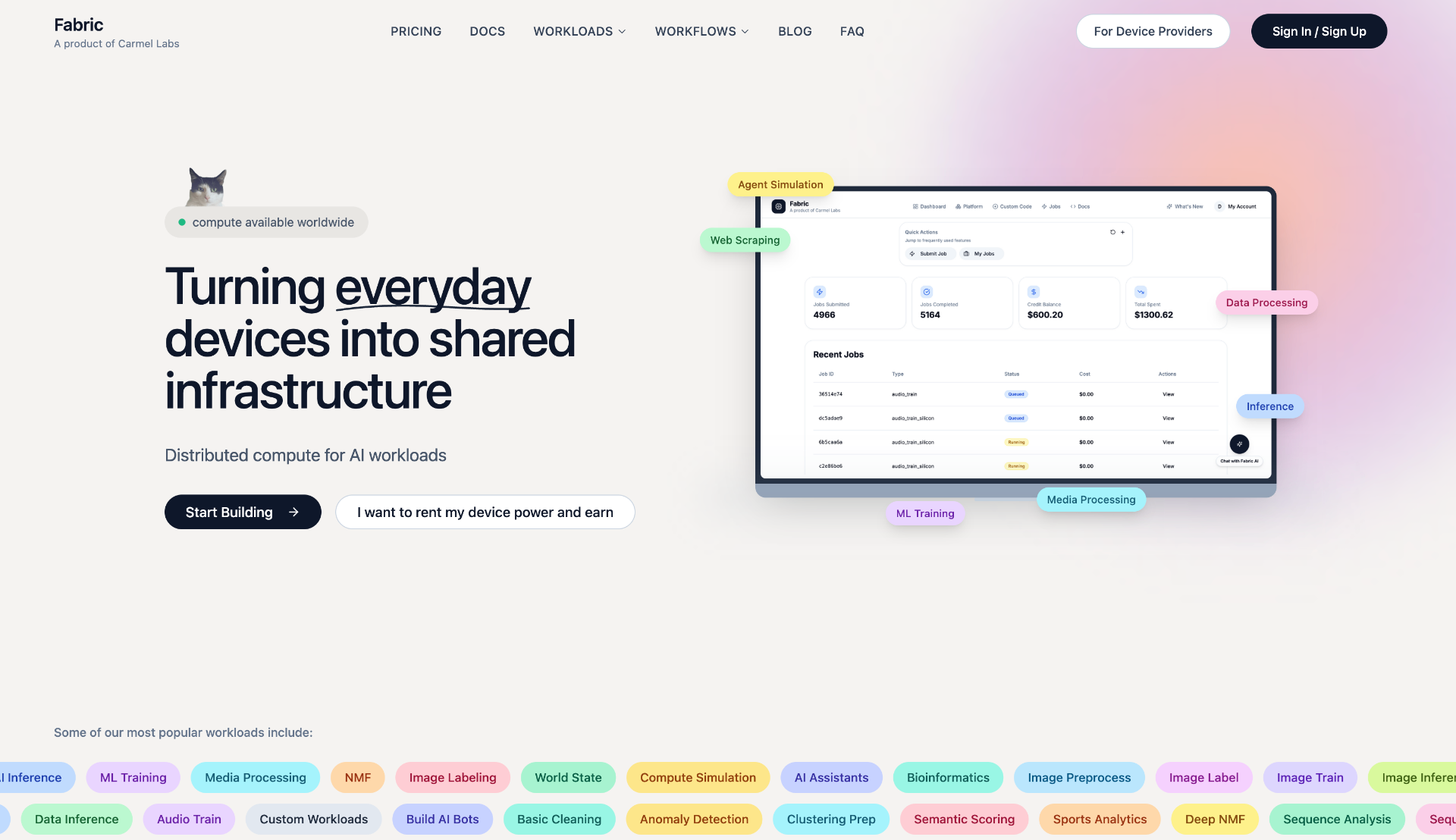

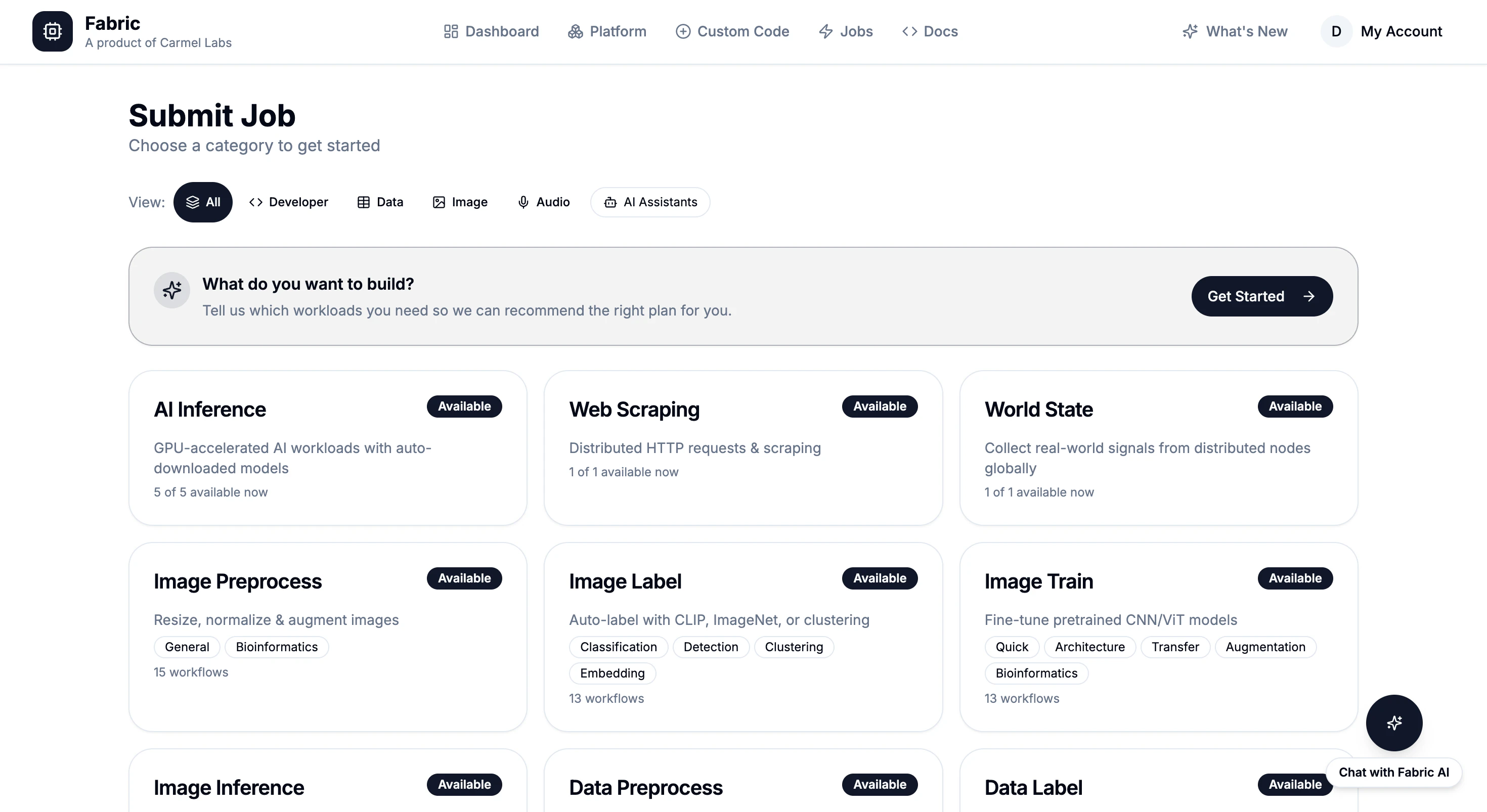

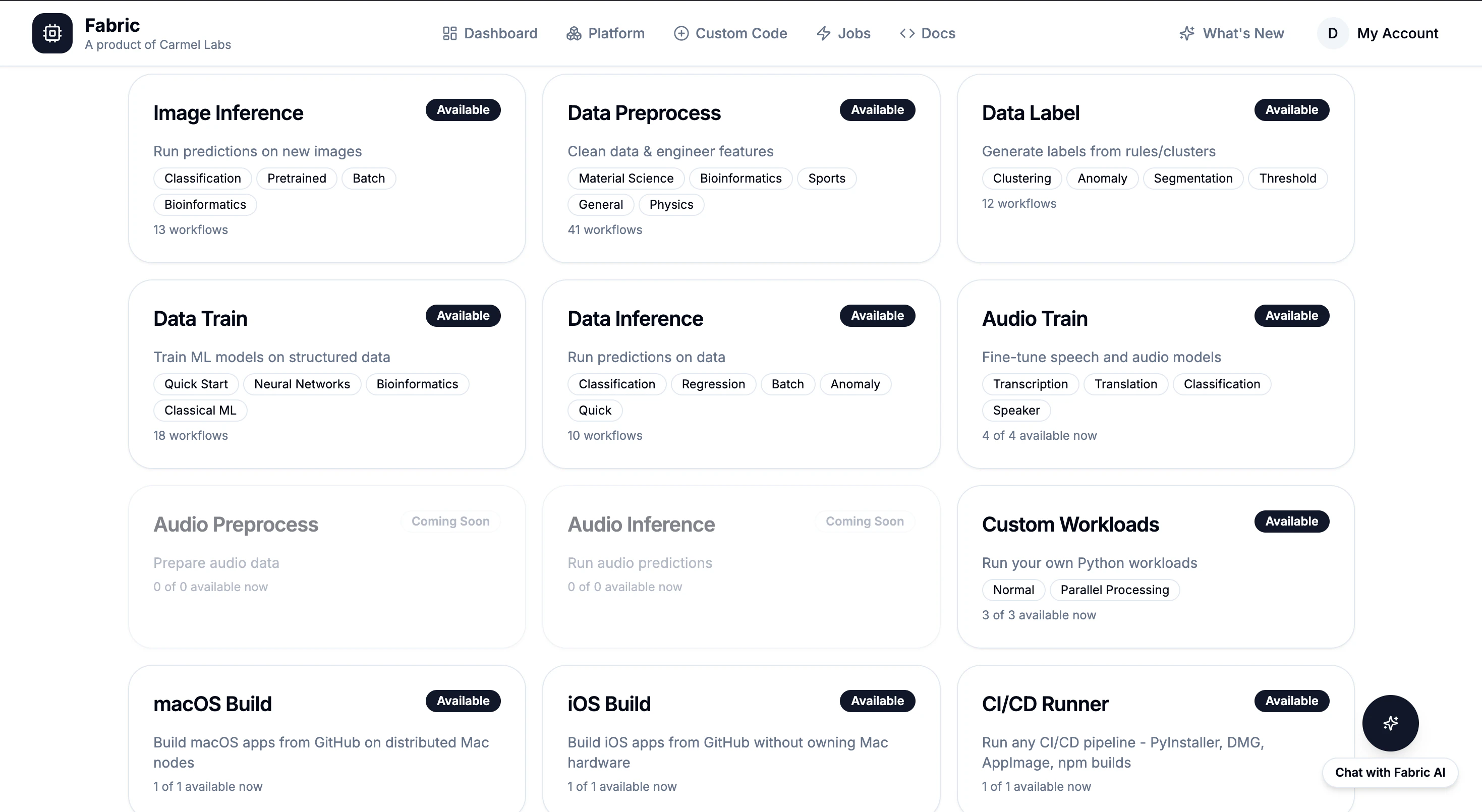

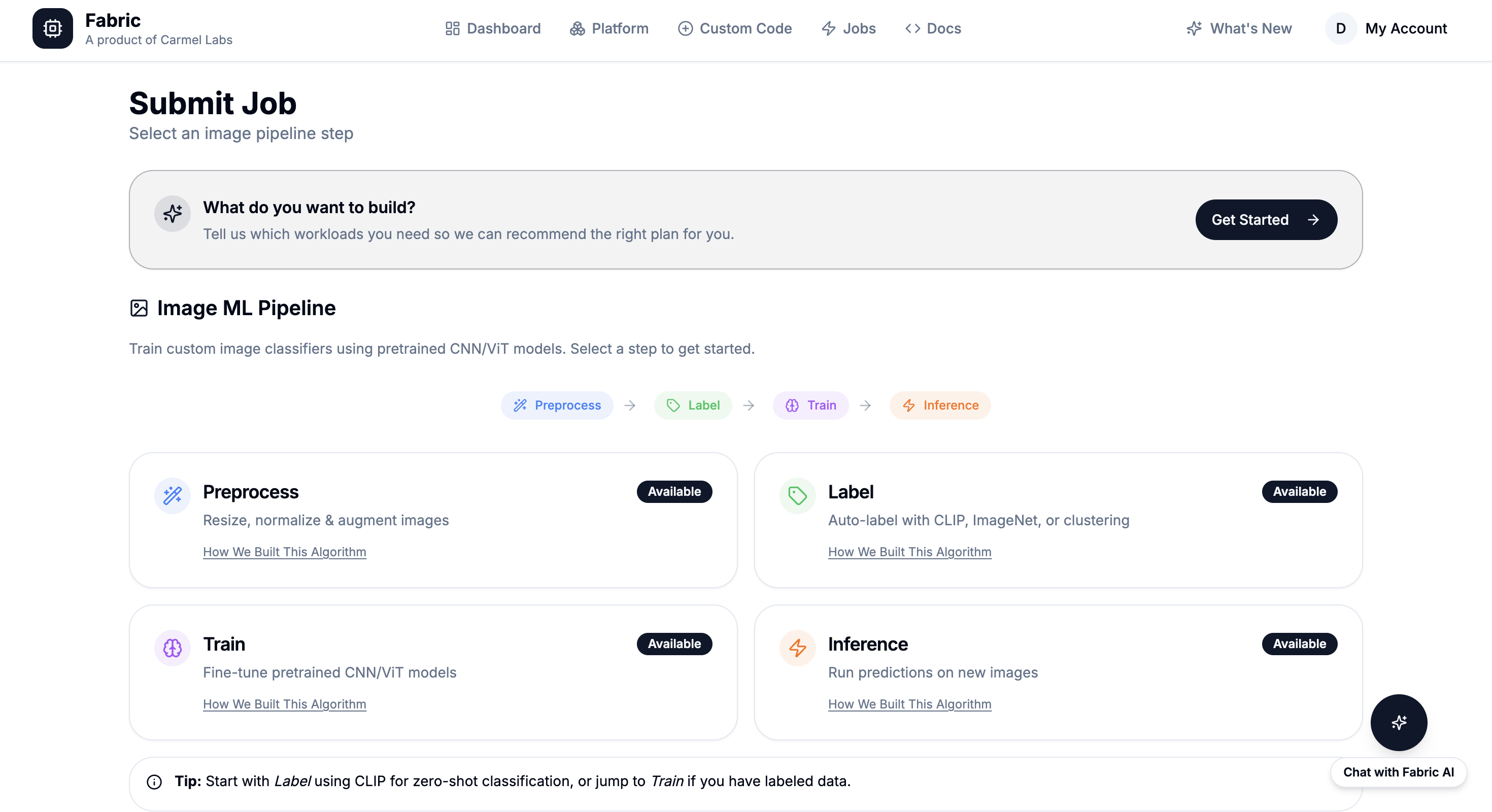

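One-line intro: Fabric is a compute platform for AI and data workloads: submit a job through the API or dashboard and get results back without managing infrastructure. It addresses the high cloud costs and operational complexity developers face when running batch jobs such as embedding generation, transcription, and data scraping.

Developer Tools

Artificial Intelligence

Tech

Serverless computing

AI workloads

Batch processing platform

Development tools

Cost optimization

Infrastructure as a service

Pay-as-you-go

MLOps

Cloud-native

Automation

User comment summary: The only comment is the developer's own account of why they built it (tired of high AWS bills and tedious ops) and how the product evolved. It highlights the specific problems solved: replacing Lambda and several other services, cutting costs significantly, and eliminating cold starts. It is essentially a detailed product introduction, with no direct questions or suggestions from outside users.

AI Sharp Take

Fabric's narrative hits the core anxiety of today's developers, especially small teams and indie builders: in the cloud-native era they have not really been freed from infrastructure complexity; under the illusion of on-demand scaling they are stuck with unpredictable costs and fiddly operations. The product positions itself as "a single replacement for Lambda, SageMaker, Colab, and GitHub Actions," no small ambition, but its real value may lie less in technical breakthroughs than in radically restructuring how compute is bought.

It is essentially a highly abstracted, standardized retail market for compute tasks. By packaging common tasks such as embeddings, transcription, and scraping as fixed-price standardized goods (e.g., $0.0001 per call), it turns unpredictable cloud bills (EC2 instance uptime, GPU-hour charges) into predictable consumption bills. This suits users' mental accounting and budgets better, and its claimed "80% cost savings" also comes mainly from here: pooled scheduling and maximized utilization spread the resource cost of each task more thinly.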
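The savings argument reduces to one variable, utilization. A worked sketch with invented numbers: the $1.20/hour instance and its 40,000 calls/hour throughput are hypothetical, and only the $0.0001 per-call price comes from the launch. A dedicated instance's per-task cost is its hourly price divided by the tasks it actually completes in that hour, so idle time is paid for in full.

```python
def self_managed_cost_per_task(hourly_price: float, tasks_per_hour_at_full_load: float,
                               utilization: float) -> float:
    """Per-task cost of a dedicated instance you pay for whether or not it is busy."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_price / (tasks_per_hour_at_full_load * utilization)


def break_even_utilization(hourly_price: float, tasks_per_hour_at_full_load: float,
                           fixed_price_per_task: float) -> float:
    """Utilization above which the dedicated instance becomes cheaper than the fixed price."""
    return hourly_price / (tasks_per_hour_at_full_load * fixed_price_per_task)


# Hypothetical instance: $1.20/hour, 40,000 embedding calls/hour when saturated.
FIXED_PRICE = 0.0001  # per call, the price quoted in the launch
for u in (1.0, 0.5, 0.2):
    print(f"utilization {u:.0%}: ${self_managed_cost_per_task(1.20, 40_000, u):.6f} per call")
print(f"break-even utilization: {break_even_utilization(1.20, 40_000, FIXED_PRICE):.0%}")
```

Under these made-up numbers, a saturated instance costs less than a third of the fixed price per call, one that sits 80% idle costs 1.5 times as much, and the break-even point is 30% utilization. The fixed-price pitch is therefore strongest for bursty, low-utilization workloads, and the same arithmetic is why the platform's own margin depends on keeping its shared pool busy.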

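The risks of this model, however, are as evident as its advantages. First, standardization cuts both ways: when a task falls outside the preset workload types and needs a highly customized environment or special hardware, flexibility can quickly become the bottleneck. Second, as a new platform, long-term stability and ecosystem lock-in are key tests. Replacing a single service is easy; becoming developers' default compute layer requires strong trust and network effects. Finally, whether fixed pricing can preserve its margins and price advantage when upstream IaaS prices move is a commercial unknown.

All in all, Fabric is a developer-friendly utility compute layer that uses a minimal interface and transparent pricing to try to fully commoditize compute. Its success depends not on being more advanced than AWS but on whether, in specific scenarios, it offers the best overall deal in cost, experience, and cognitive load. It is a clever flanking attack on the cloud giants' "complexity tax," but its ultimate moat may be whether it can build, around these standardized tasks, a value network that is more efficient and cheaper than assembling a toolchain yourself.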

👋 Hi Product Hunt! I’m Anton, co-founder of Lovon AI Therapy.

After a year of development with a PhD psychologist (40+ years of clinical expertise), we’re excited to introduce a new standard in AI-powered mental health support. Here’s how Lovon stands out from other AI solutions:

🚀 More than just an “agreeable” chatbot like GPT

Lovon uses evidence-based frameworks (CBT, Emotion-Focused Therapy) designed to gently challenge unhealthy thinking - not just agree with it.

🎙 Voice-first, real conversation experience

Simply talk, just like you would with a real therapist. Voice unlocks a genuine human connection that text simply can’t replicate.

⚡ Crisis detection, built-in

Lovon automatically recognizes signs of crisis and connects you to immediate resources when you need help most.

🌙 Available 24/7

Support is always just a tap away - Lovon is there in the moment, especially when real therapists aren’t available.

👩‍🔬 Evidence-driven & actively validating clinically

Our approach is developed by a world-class team, and we’re launching clinical validation studies soon, already seeing amazing results from our users.

💡 We’re not replacing therapists - we’re your bridge between sessions, at the very moment you need support.

In Spring 2025, we raised an $850K pre-seed to build a world-class team, including a PhD psychologist with 40 years of experience developing our therapeutic approach.

🎁 Exclusive for the PH community:

Use code PH2026 for a free week of Lovon AI Therapy (valid until October 16, 2026). Plus, everyone gets a 3-day unlimited trial!

I’d love your feedback!

Ask me anything - I’m here all day and happy to connect 💙

better than chatgpt

Great job 🧡

Sometimes the internet is unstable, but support is always needed. Does it work offline or only online?

Cool product! Good luck!

Love this app! Used it in my last relationship, it was very useful to talk through stuff

Love it

Congratulations on the launch. Looks really cool.

Go Team! Good luck! Hope this one will fix me 🤗

I was building something similar for breakup recovery a year ago, at a time when LLMs were not good enough, but the current generation definitely can handle it. Glad you guys have built it, much needed to keep sane in the exponentially changing world we're living in. Good luck with the launch!

This is awesome!!!

I’ve been in therapy for a long time and even though I can message my therapist, there are moments I just need to process things immediately. What therapeutic framework is it primarily based on?

Congrats on the launch, Anton! One thing I'm curious about — how do you handle data privacy for such sensitive conversations? Is everything encrypted end-to-end?

Congrats 🎉

Does Lovon learn and adapt to personal context over time? For example, If I work on specific anxiety triggers, will the system remember my progress/struggles?

I hope your product contains guide-rails that get triggered on special occasions and give advice to the user to reach out to a human therapist. AI can be sometimes an echo-chamber that amplifies our blindspots. But I am sure your app can help many just starting to look inside. Congrats on the launch!

I would not recommend Lovon.

As someone interested in AI for mental health, here’s my honest feedback:

1. I reviewed your Meta Ads activity over the past few months and noticed that you are not promoting the app itself, but rather a survey website targeting people in vulnerable mental states. To access the survey results, users are required to link a weekly auto-renewable subscription to their card. This doesn’t seem aligned with mental health support to me.

2. I tested the app itself, and unfortunately the chatbot conversation glitches and disconnects every 2–3 minutes. On top of that, it is impossible to complete the onboarding process without first purchasing a subscription.

3. For transparency, could you clarify how user data is handled? In some documents I saw that the Lovon app is owned by Ticket to the Moon Inc., in others I saw some Babayaga Inc. Can you clarify the structure and who is responsible for the security of user data?

Finally, an AI product that's not about coding!!

This hits home. Went through a tough time a few years ago and honestly the worst part was not having anyone to talk to whenever I needed. Wish I had this then. Congrats on shipping!

congrats on the launch, anton 🙌 the voice-first approach sounds cool. i've been building ai agents for my own workflow and the difference between typing and talking is night and day. feels like you're actually being heard vs filling out a form.

curious about the crisis detection - is that real-time during the conversation or pattern-based over multiple sessions?

Congrats on the launch! I've tried a few AI therapy apps before and they all felt like talking to a search engine. The voice-first approach sounds like it could actually change that. Downloading now

Checked the video and your website, looks like a very good solution to me, wish you success!

But the reviews on your site need links to the sources!

(also the 3 dots do nothing, and it's not clear what the orange checkmarks and "15 min with therapist" mean)

Also, I couldn't find real info about the "PhD psychologists" it is "built with", there need to be links to their professional profiles and scientific work for this statement to have credibility!

Very impressive product! congrats on the launch!

I have a couple of questions though:

1) Can the app recognise patterns of more serious mental disorders such as eating disorders, personality disorders, depression etc.? If yes, can it forward or give any contact/reference to a psychiatrist (for example)? I know that in many cases people do not know yet what mental condition they might have and usually general psychologists become the first point of contact so to say. But then they should be directed to appropriate professional help

2) Do you plan to expand the app to the desktop version as well?

3) Do you plan to allow texting besides voice-only interactions?

Thank you in advance for the responses! And congratulations on launching again, the app looks very polished and well-thought!

Amazing! Congrats!

Really interesting approach 👏 I like that you’re focusing on evidence-based frameworks instead of just making another AI chatbot. The voice-first experience sounds especially promising — feels much more human.

I am very happy and proud to be one of the first users. Lovon helped me a lot to save my relationship when my girlfriend and I were at a distance. And now we're getting married soon!

Love the concept and also the fact that this app can work as a copilot, without replacing the therapist. I wonder if it was possible for the therapists to give it inputs somehow. Cheers!!