Product Hunt Hot List | 2026-03-19

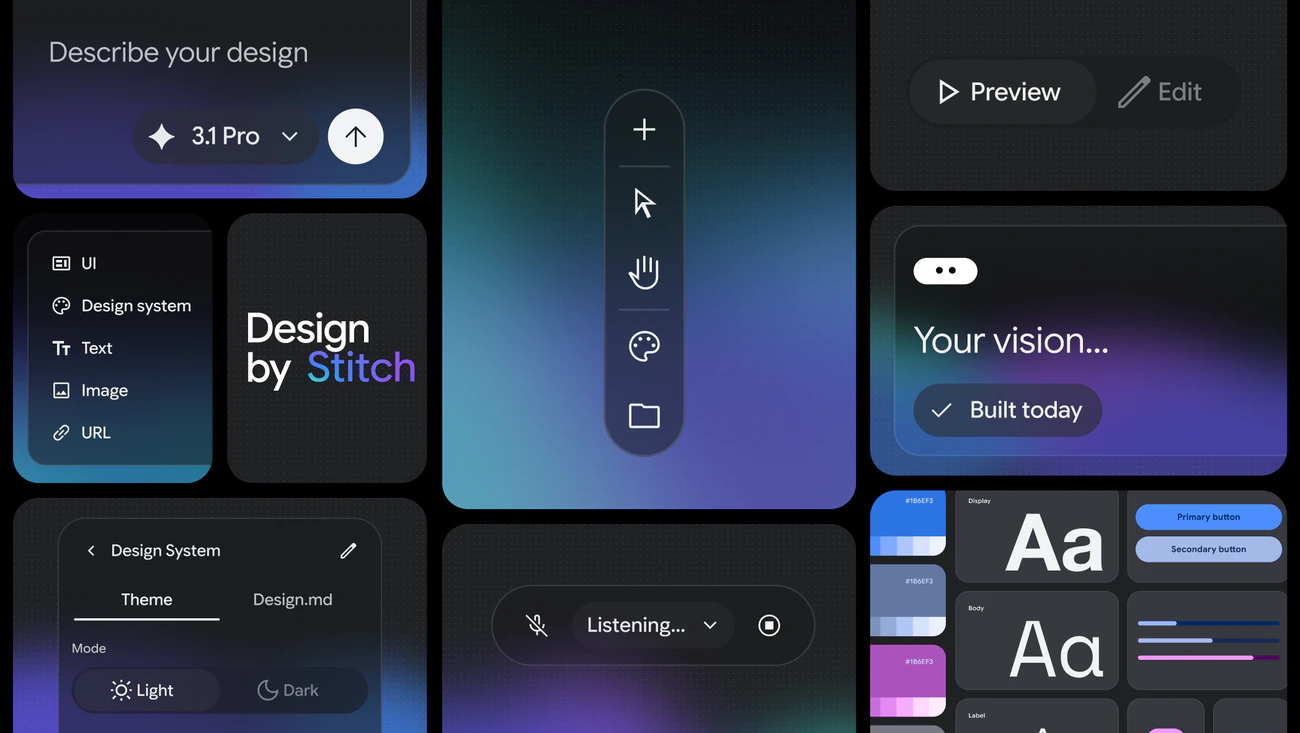

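One-line summary: Stitch 2.0 is an AI-native design tool from Google that lets users generate high-fidelity UI and interactive prototypes on a unified canvas through natural language, voice, and agent collaboration. It aims to turn ideas into deliverable interface designs within seconds, greatly speeding up design exploration and product prototyping.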

Design Tools

Prototyping

Artificial Intelligence

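AI design tool

UI generation

Prototyping

Agent collaboration

Natural-language interaction

Design systems

Google product

Design-development integration

Voice design

Canvas collaboration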

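User comment summary: Users are broadly impressed by the output quality and speed, but their core concern is how generated UI integrates with existing production code and design systems, for example mismatched design tokens and incompatible component libraries. They also raised questions and suggestions about design consistency across iterations, operational transparency (no status feedback or confirmation step), and platform policy risk (such as Apple's scrutiny of "vibe coded" apps).

AI Sharp Take

Stitch 2.0 represents an aggressive attempt by Google to push AI from "design assistant" to "design lead". Its core value is not simply "faster mockups" but a "multimodal design canvas" that understands the semantics of images, code, and text at once, compressing product ideation, visual design, and prototype logic into a single context driven by natural language. This points to a new "conversation as design" paradigm that is highly attractive to product managers and indie developers who need to validate ideas quickly.

However, the Product Hunt community's feedback precisely punctures the glossy bubble shared by all current AI design tools: production readiness. Users cheer the output, then immediately ask about compatibility with existing design systems (design tokens, component libraries). This exposes the key barrier between AI design as a "toy" and as a "tool": a real productivity tool has to fit into existing workflows and standards rather than create a new island every time. Stitch does introduce DESIGN.md and a built-in design system to maintain internal consistency, but its ability to "understand and adapt to" external systems is what will decide whether teams adopt it.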
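To make the token-mismatch concern concrete, here is a minimal sketch of the kind of check a team might run on generated CSS before merging it. It is not part of Stitch; the function name `off_token_colors`, the token names, and the flat token dictionary are all assumptions made for illustration. It only looks at hex colors and does not normalize short and long forms (`#fff` versus `#ffffff`).

```python
import re

# Matches 6-digit or 3-digit hex colors such as #1a73e8 or #fff.
HEX_COLOR = re.compile(r"#[0-9a-fA-F]{6}\b|#[0-9a-fA-F]{3}\b")


def off_token_colors(css: str, tokens: dict) -> list:
    """Return hex colors used in `css` that are not values in `tokens`.

    `tokens` is a hypothetical flat mapping of token name -> hex value.
    """
    allowed = {value.lower() for value in tokens.values()}
    found = {match.group(0).lower() for match in HEX_COLOR.finditer(css)}
    return sorted(found - allowed)


# Hypothetical design tokens and a hypothetical generated stylesheet.
tokens = {"color.primary": "#1a73e8", "color.surface": "#fff"}
generated_css = ".btn { color: #1A73E8; background: #ff00aa; border-color: #fff; }"

hardcoded = off_token_colors(generated_css, tokens)
print(hardcoded)
```

A non-empty result flags values the generator hardcoded instead of drawing from the design system, which is the failure mode commenters describe.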

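A deeper challenge is that Stitch's "intelligence" may itself become an obstacle to collaboration. Some comments point out that it lacks operational transparency and a confirmation step, like a silent and arbitrary partner. When an AI has too much discretion but cannot clearly communicate its "reasoning", it erodes the designer's sense of control and trust, which is fatal in a professional workflow.

In addition, outside commentary about movement in Figma's share price and Apple's tightening policies points to a larger industry narrative: tech giants, with Google as the example, are vertically integrating cutting-edge AI models into basic productivity tools and systematically reshaping, or even disrupting, the traditional professional software market. Stitch is not only a design tool but also a strategic piece in Google's contest for the next generation of human-computer interaction entry points and developer ecosystems. Its ultimate opponent may not be Figma but the mindset that clings to traditional GUI-based creation. Its success will not depend on how many stunning demos it generates, but on whether it can cross the gap between "prototype" and "product", a gap made of complexity, collaboration, and standards.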

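One-line summary: An AI agent model with self-evolving capabilities that builds agent toolchains and assembles multi-agent teams, reducing the need for human intervention in complex tasks such as software development, debugging, and research, and moving from a static tool to a dynamic execution system.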

API

Open Source

Artificial Intelligence

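Autonomous agents

Self-evolving AI

Multi-agent collaboration

AI-native workflows

Code generation

Software development

AI model API

Automated execution

Continual learning

Agent framework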

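User comment summary: Users broadly agree that self-evolution is the inevitable direction of AI and are impressed by the iteration speed and coding ability. Core concerns center on controllability and predictability: how to keep an evolving system stable in production, how to balance exploration and exploitation, how to review and manage its long-term memory, and what "self-evolution" technically means and where its boundaries lie.

AI Sharp Take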

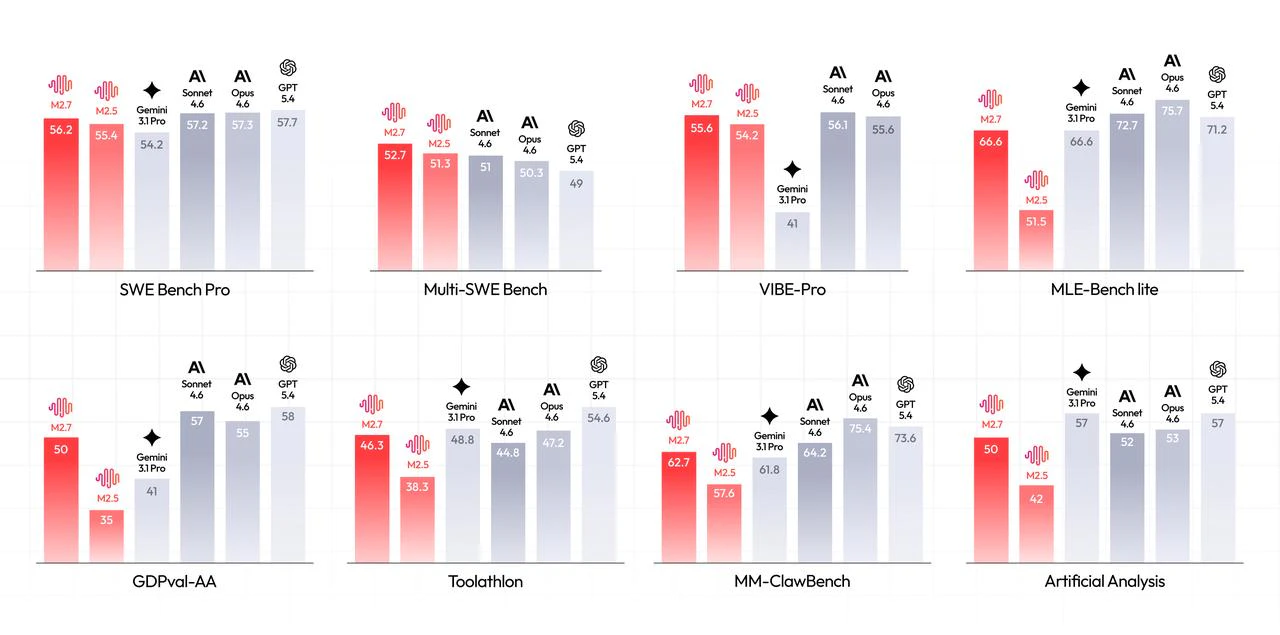

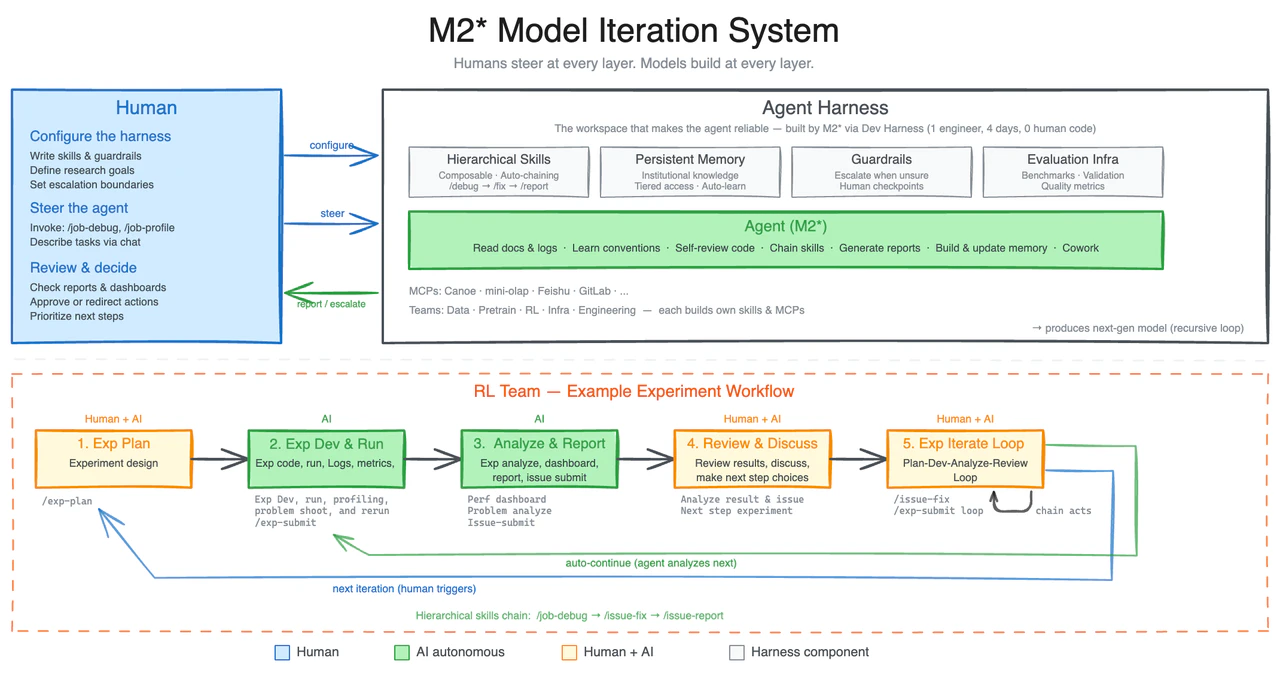

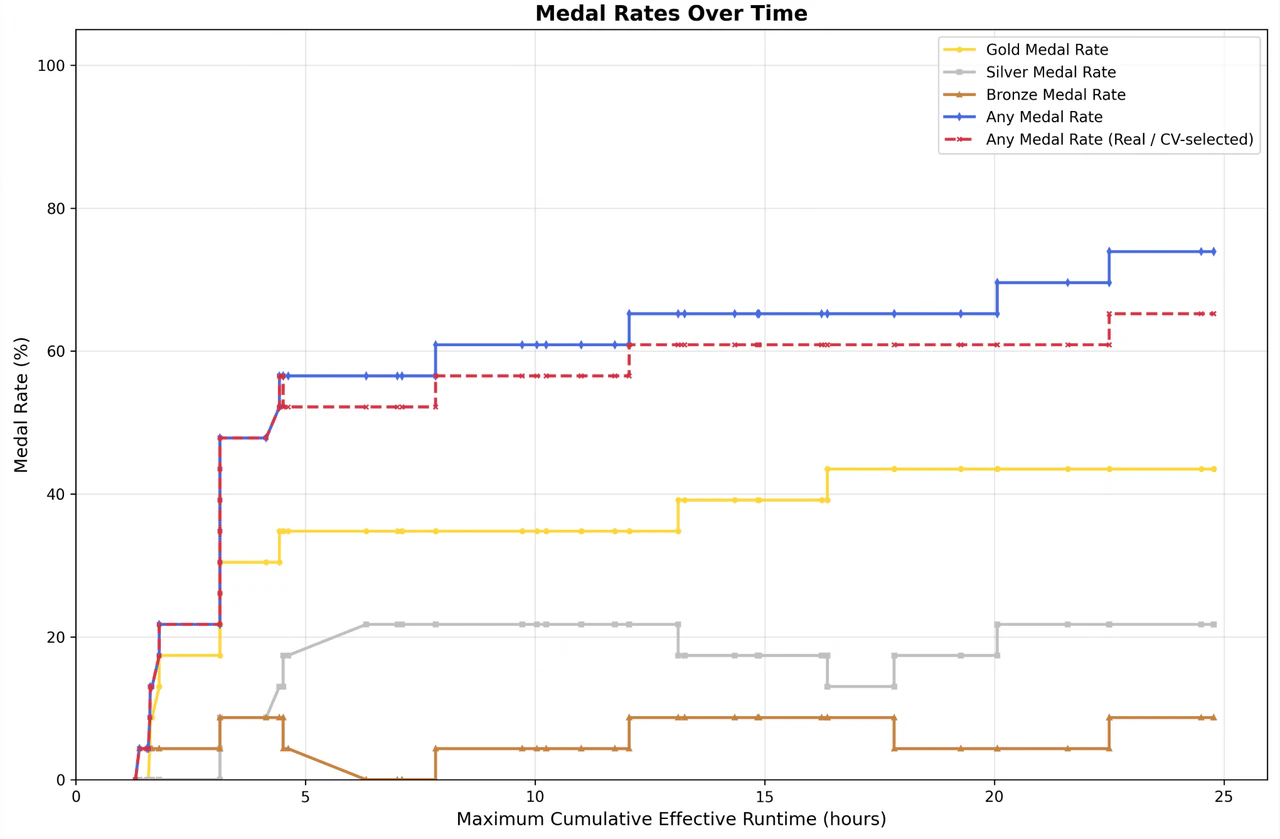

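The "self-evolution" that MiniMax M2.7 touts is less a qualitative leap in model capability than a shrewd narrative upgrade. It packages the specialist work that goes on continuously inside the AI industry (model iteration, prompt-engineering optimization, agent workflow design) as a seemingly autonomous "black box" system. Its real value lies not in a mystical "evolution" but in integrating a set of complex capabilities (multi-agent collaboration, long-context memory, coding and debugging) into an "agent factory" that claims to improve in a closed loop.

The excitement and worry in the comments capture the product's dual nature: developers see a "super assistant" that automates complex tasks and boosts efficiency, while more cautious practitioners are wary of the loss of control and unpredictability hidden under the "self-evolution" narrative. The pain point it targets, "reducing human intervention", is real, but the word "evolution" neatly sidesteps the key question: who actually holds the decision rights over how the system improves? Is it directed optimization based on user feedback, or uncontrolled self-mutation?

M2.7's core breakthrough may therefore lie in product positioning rather than technology. It is no longer content to be a passive tool and instead tries to become a "junior partner" to whom complex projects can be entrusted. This shift is extremely attractive and extremely risky. It signals AI applications moving from "delivering features" to "delivering responsibility", but whether current technology can support the promised autonomy without causing chaos remains a very open question. The market's enthusiasm reflects a hunger for maximal automation, and sober skepticism is the necessary voice that keeps us from stepping too early into a system maze we ourselves cannot understand.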

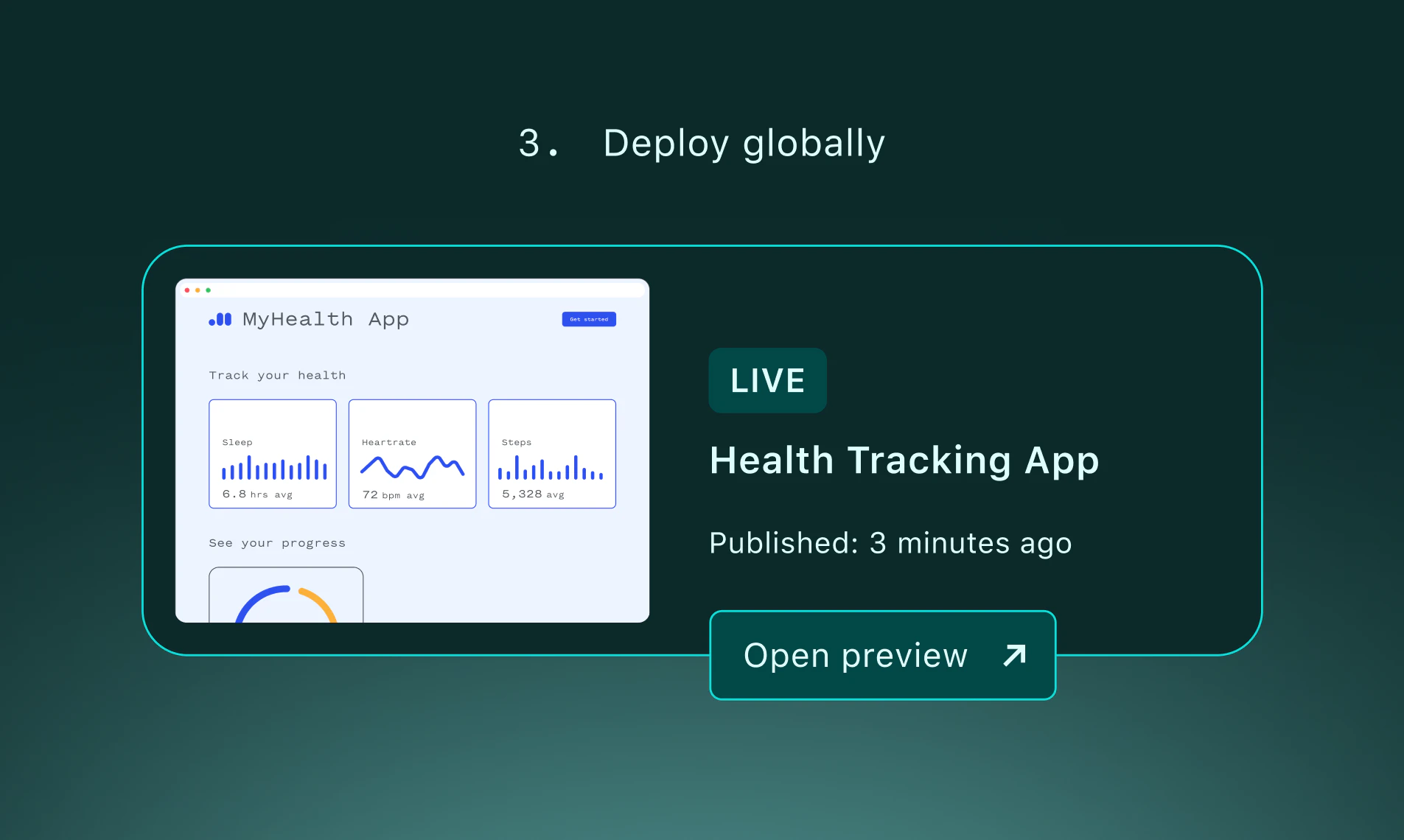

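One-line summary: A product that lets users describe an app in natural language and have an AI agent generate and deploy a complete application with a production URL, with no code repository or local setup, aiming to close the large gap between AI-generated code and an actually running product.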

Developer Tools

Artificial Intelligence

No-Code

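AI code generation

No-code/low-code

App deployment

Rapid prototyping

Cloud development platform

Iterative development

Production-ready

Developer tools

Prompt engineering

Infrastructure automation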

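User comment summary: Users broadly appreciate the one-stop "prompt to product" flow and the ability to iterate in place, seeing it as a fix for the deployment gap that follows AI code generation. The main questions concern how deeply it supports complex apps (for example with databases and authentication), migration paths to and from platforms such as Vercel, how automated configuration (such as environment variables) is, and whether project context carries over.

AI Sharp Take

Netlify.new is not yet another AI code generator but an aggressive redefinition of what "done" means in development. Its real ambition is to become the application "operating system layer" of the AI-native era, compiling a prompt directly into a running service.

Most current AI coding tools stop at code output and hand the hardest "dirty work" (deployment, configuration, infrastructure integration) back to the developer, which is exactly the "valley of death" where creative momentum dies. What makes Netlify.new sharp is that it uses its own mature cloud infrastructure (global edge network, serverless functions, forms, and so on) as the "runtime" and injects AI-generated code directly into that ready, production-grade environment. In effect it gives AI creativity an immediate, scalable home in the real world.

Its claimed "in-place iteration" is another key value point, aimed at the "one-off" and "fragmented" nature of AI-generated code. Instead of repeatedly restarting a project, users layer changes onto the same persistent application, which is a step toward "evolvable" AI-assisted development. It also exposes the current limits: judging from the pointed questions in the comments, it is still very uncertain whether it can handle mature projects that need complex state management, data migration, and team collaboration. The official replies also suggest it is more of a "quick starter" for greenfield projects, prototypes, and internal tools.

Netlify.new's value proposition is therefore very clear: it is not here to replace the traditional professional development process but to eliminate all friction between an "idea" and the "first shareable URL". It lowers the bar of "infrastructure knowledge", lets product managers, designers, and other non-core developers validate ideas quickly, and gives developers an unusually fast sandbox. Its success hinges on whether it can keep the "simple magic" while handling complexity gracefully. Otherwise it may be confined to toy projects and never reach the core of real productivity.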

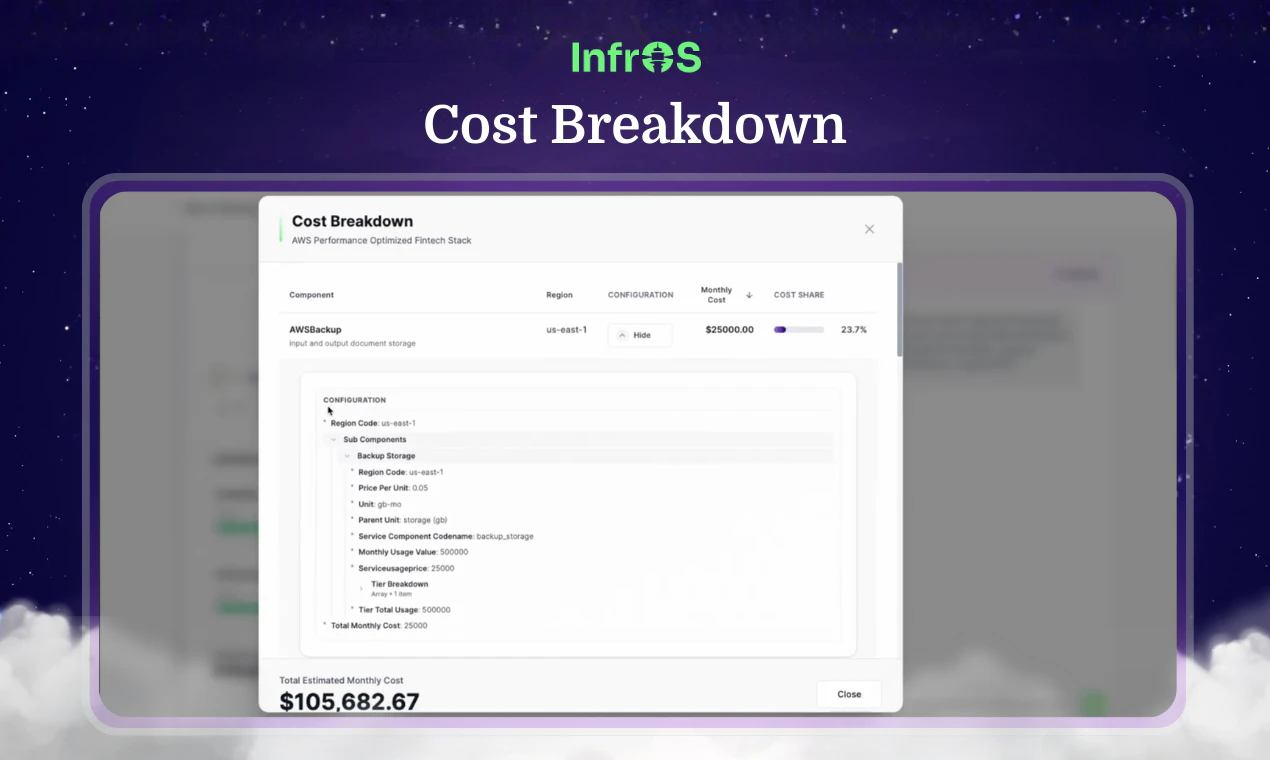

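One-line summary: An AI-driven platform that proactively designs and optimizes cloud architecture for teams before deployment, using simulation-based validation, to address the industry pain point of cost overruns and unstable performance caused by reactive infrastructure optimization.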

Software Engineering

Developer Tools

Artificial Intelligence

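Cloud architecture design

Infrastructure as code

AI optimization

Simulation-based validation

Cost optimization

DevOps

Shift-Left

Cloud migration

Performance prediction

Cloud management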

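User comment summary: Users broadly endorse the idea of "validation before deployment" and see it as addressing a chronic industry problem. Their main concerns are the realism and depth of the simulation (how traffic and failures are modeled), how frequent requirement changes are handled, where exactly the cost savings come from (resource right-sizing or architecture-level optimization), and whether the product suits individual developers or small teams.

AI Sharp Take

InfrOS's core idea, "Shift-Left Optimization", is not new, but its "design, simulate, validate" loop tries to turn the idea from a slogan into an executable engineering practice. Its real value lies not in "prediction" but in "proof": by actually running the top 3 architecture candidates in its cloud sandbox and benchmarking them, it replaces guesswork with data and moves architecture decisions from an art toward a science.

However, its claim of "real-environment simulation" faces a fundamental question: without real business traffic and unpredictable distributed-system interactions, how high is the ceiling on simulation fidelity? It may validate the resource ratios and baseline performance of a static architecture perfectly, but can it catch the strange intermittent failures and chain reactions seen in production? That decides whether it is an "advanced calculator" or a "digital twin".

The product's deeper ambition is to become the "dynamic brain of infrastructure". It does more than one-off design and promises continuous "controlled redesign" as code, requirements, and cloud provider pricing change. This touches the ultimate pain point of cloud management: architecture drift. If it can deliver this reliably, its value rises from "deployment accelerator" to "full-lifecycle governance platform". But this also sharply increases complexity, and the "correctness" of its AI recommendation engine will come under heavy pressure. Early setbacks with GPU architecture recommendations already hint at how hard this road is.

Overall, InfrOS targets a high-value market with acute pain. Its success depends not on how advanced the idea is but on the depth of its simulation, the reliability of its AI recommendations, and whether it can reduce a complex redesign process to a trustworthy "one-click optimization". It is not optimizing cloud resources so much as trying to optimize and standardize the decision process of cloud architects themselves. If this works, it is a paradigm shift. If not, it may end up as one more polished design aid.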

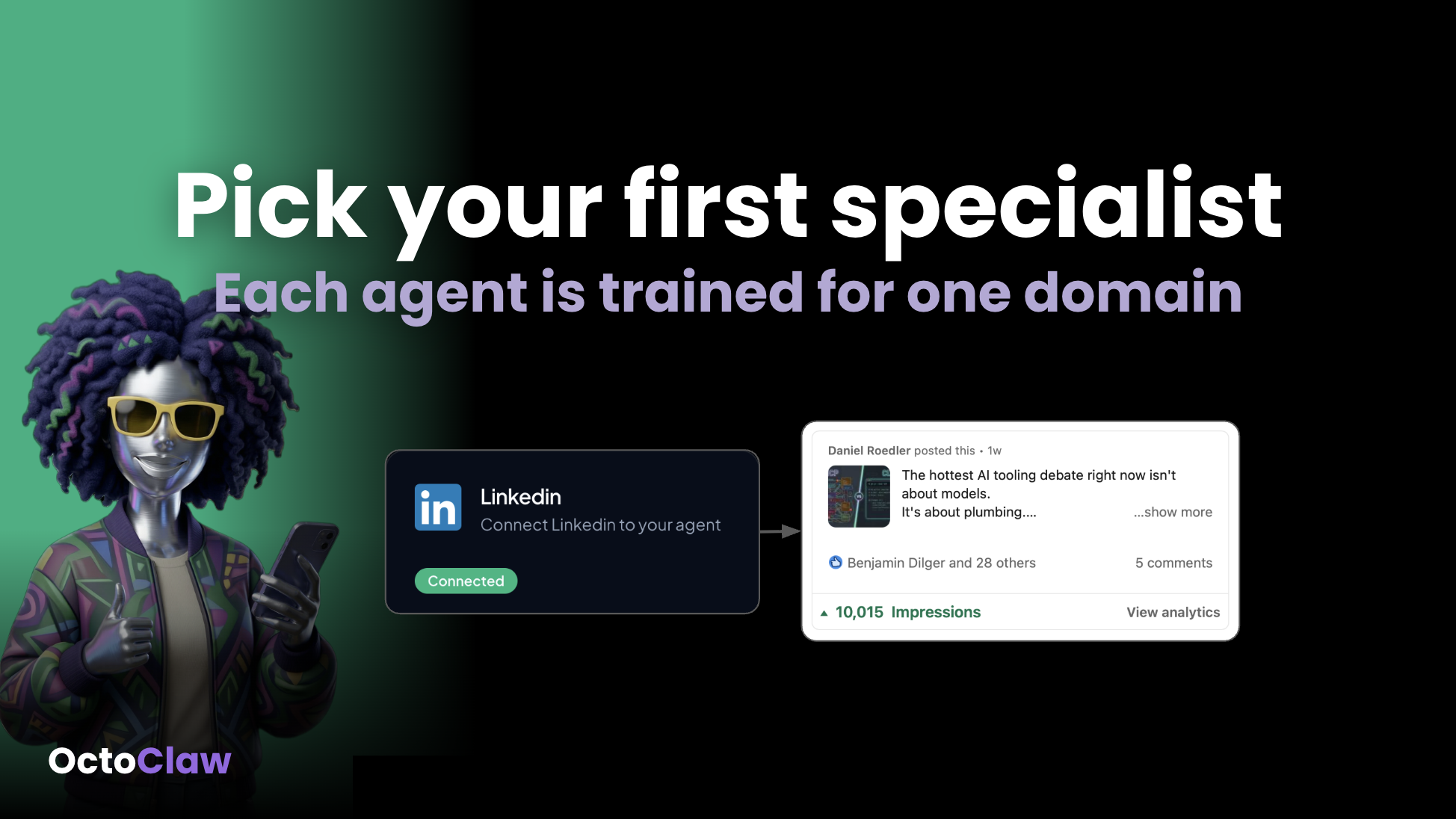

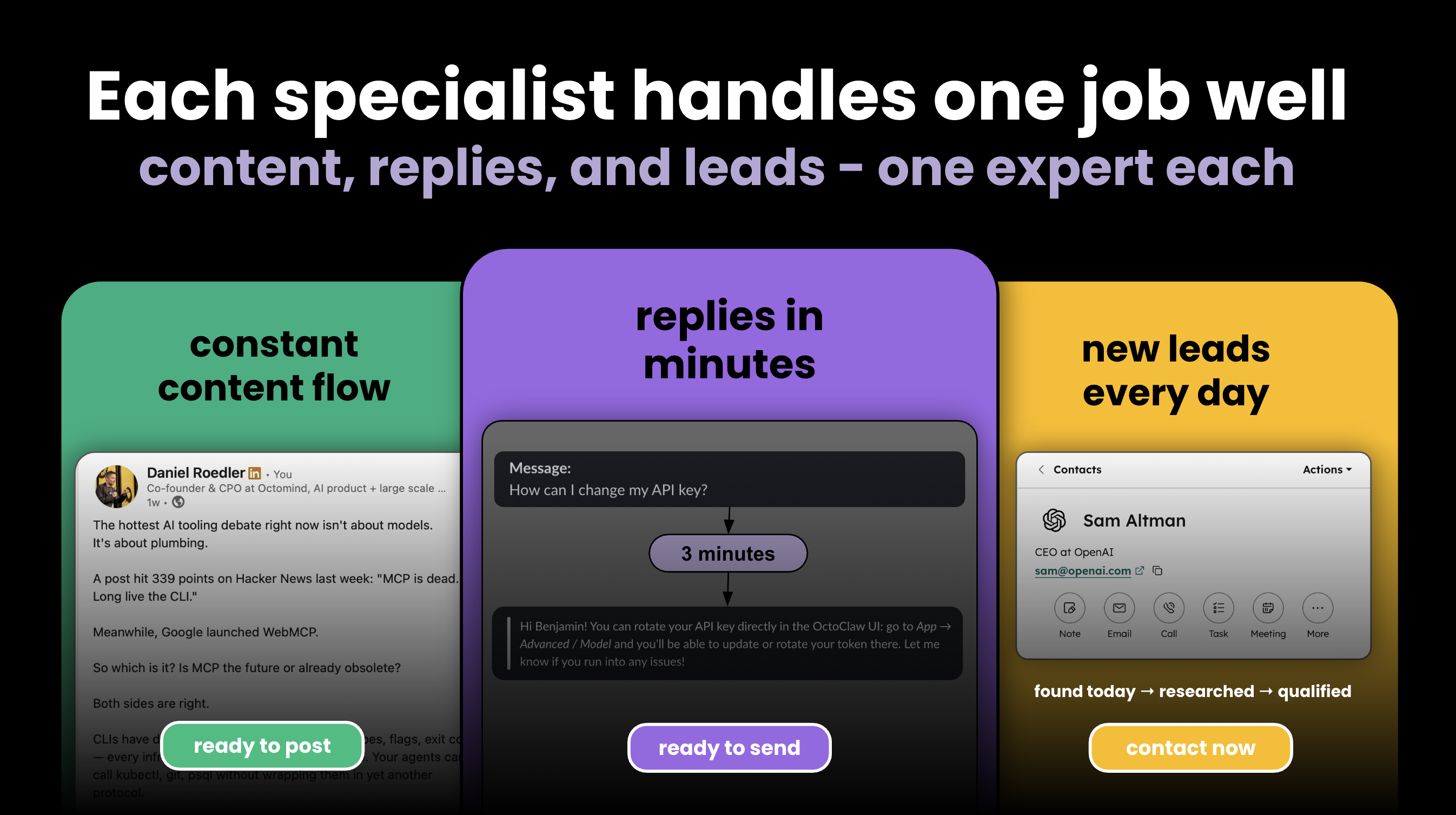

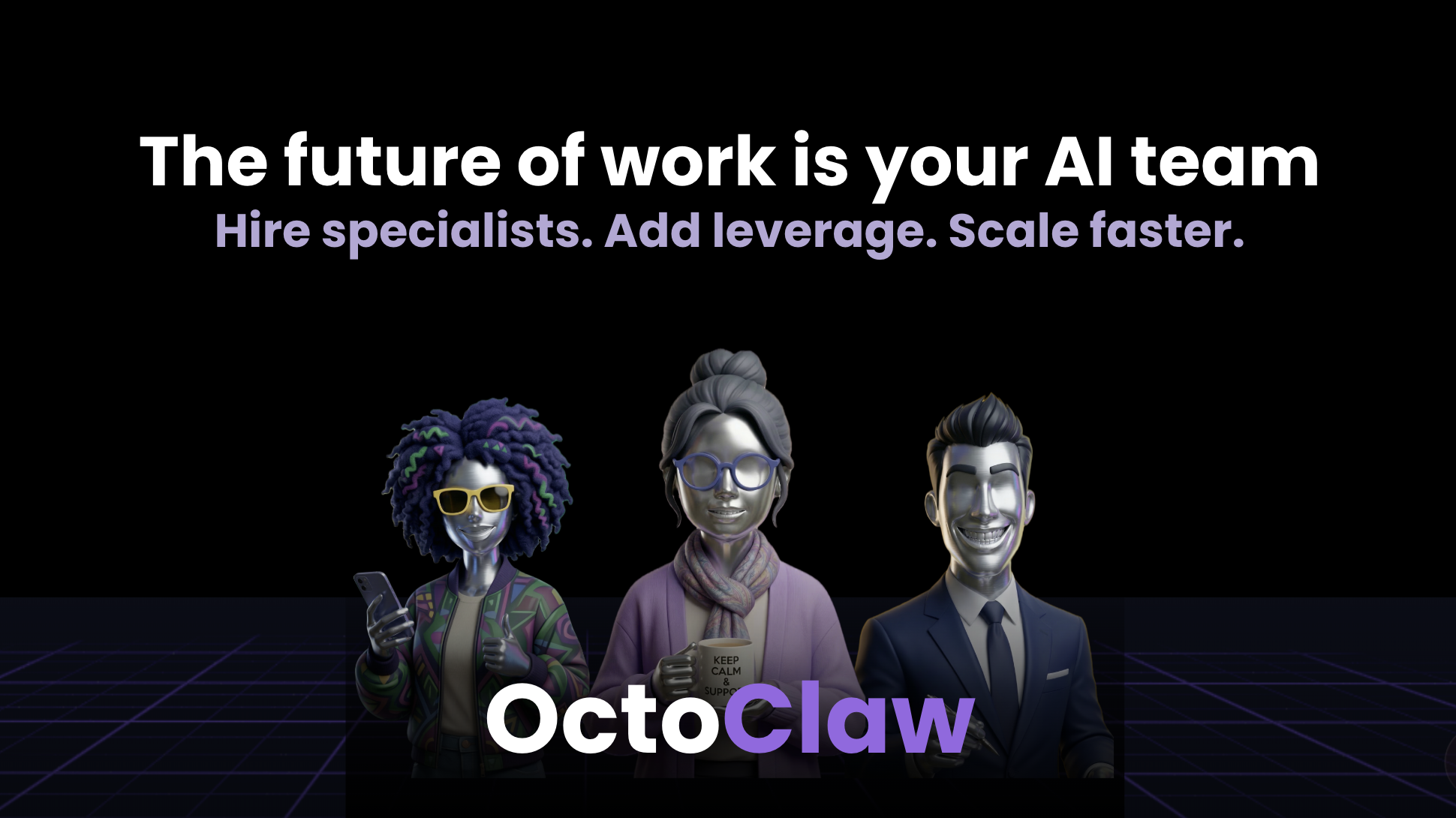

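One-line summary: OctoClaw is a platform that lets companies "hire" AI specialists focused on marketing, sales, customer support, and similar areas to actually carry out business tasks (writing content, qualifying leads, replying to customers, coordinating workflows), giving startups and lean teams business leverage without adding early headcount costs.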

Productivity

Marketing

Artificial Intelligence

AI agents

Automated workflows

Marketing automation

Sales enablement

Customer support

Multi-agent coordination

SaaS

Productivity tools

Startup tools

No-code AI

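User comment summary: Users affirm the "specialist" positioning and the value of multi-agent coordination, and ask how it fundamentally differs from general-purpose AI tools, how the agents are trained, what the pricing model is, and how human takeover works. Main suggestions include offering a trial without a credit card, adding a "supervised mode" to keep content safe, and clarifying how mature each domain specialist is relative to the others.

AI Sharp Take

OctoClaw's core narrative cleverly elevates "using an AI tool" to "hiring an AI specialist". This is not only a marketing win but also a pointed critique of the current AI application paradigm. It targets the core weakness of general chatbots (such as ChatGPT) in business settings: they need repeated prompting and lack sustained execution and cross-tool coordination. The product tries to evolve the "agent" from a "hand" that executes a single instruction into a "virtual employee" with domain knowledge that can operate continuously and collaborate with other "colleagues".

Its real potential lies on two levels. First, as an early form of "function as a service", it lowers the barrier for companies to deploy specialized AI through pre-training and deep integrations. Second, it builds a cross-tool workflow coordination layer, which is more strategic than creating yet another standalone SaaS platform. As experienced users point out, "orchestration" between agents is both the hard part and the value multiplier.

Its challenges are equally sharp. First, the performance gap between a "specialist" and "a well-tuned prompt plus tool access" must be large enough to justify the premium, and the official evidence so far is early data from the team's own use. Second, the "guardrails" and "human takeover" mechanisms that matter so much in enterprise use come up repeatedly in the comments, highlighting users' trust concerns about fully autonomous AI. Finally, the planned pricing transition from a flat fee to usage-based billing will directly test the balance between value and cost.

Overall, OctoClaw shows a direction for AI applications that fits how businesses actually operate, but moving from "automation with highlights" to "a virtual team you can trust" will still require passing the market's strict tests on verifiable results, safety and control, and business model.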

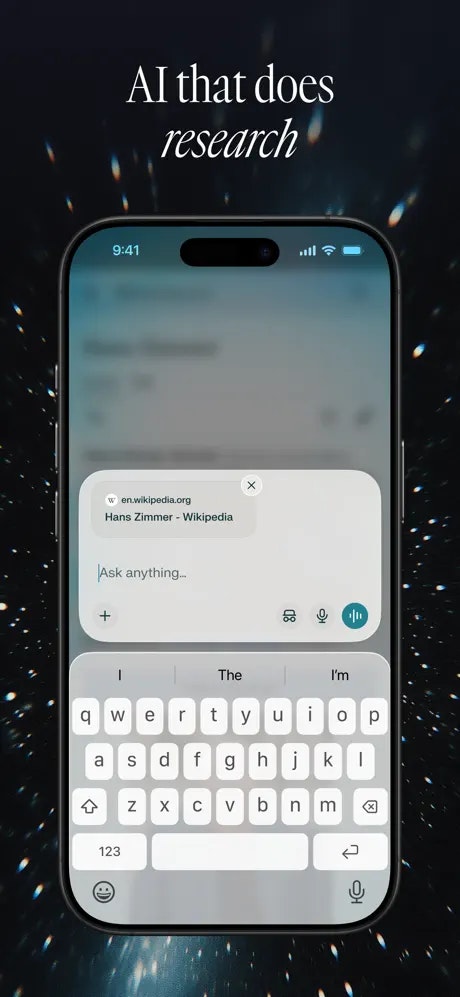

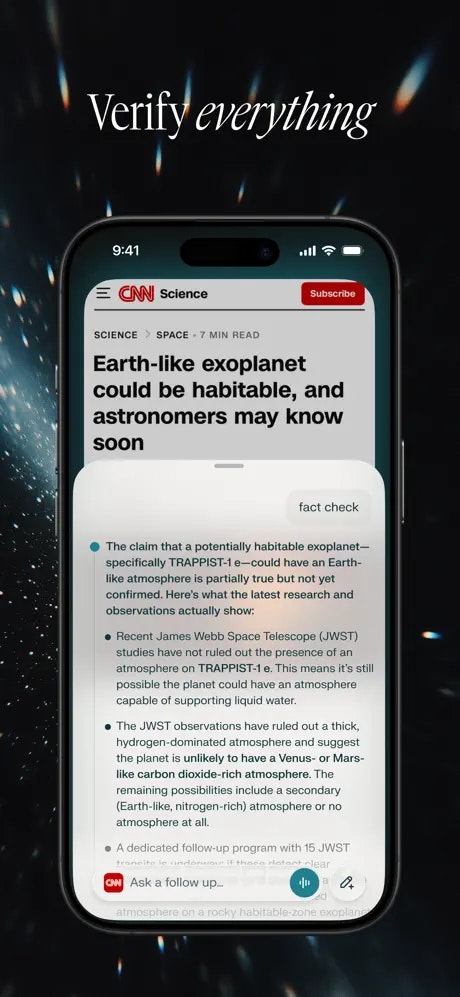

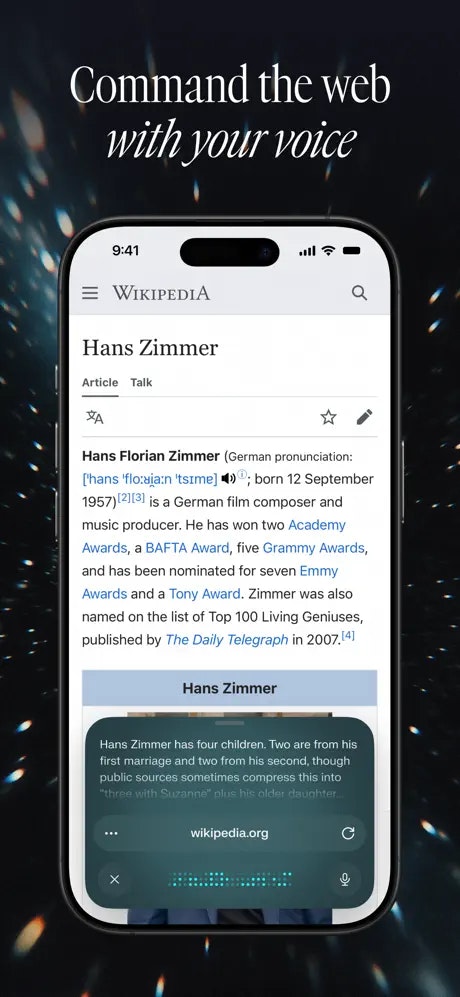

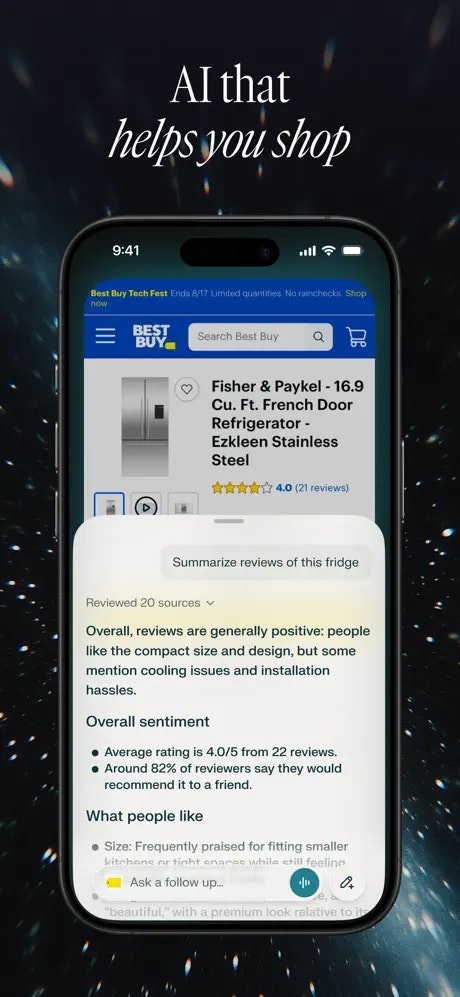

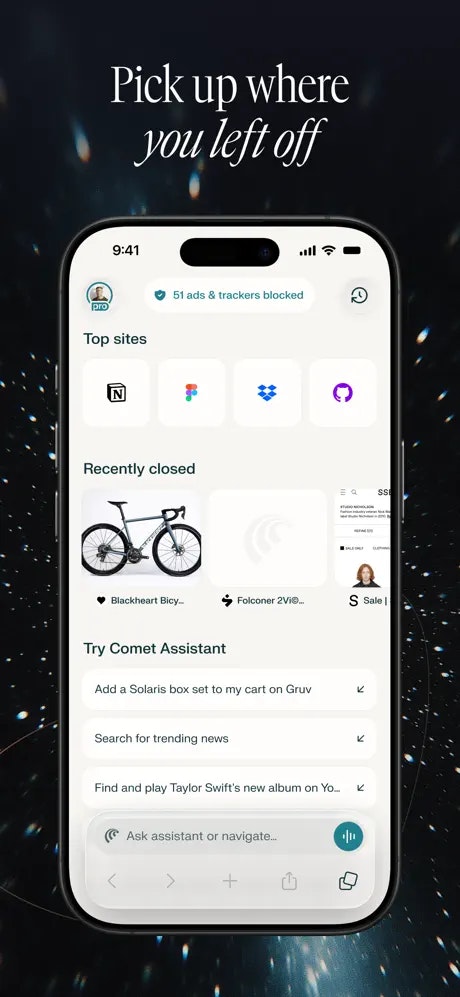

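One-line summary: Comet is an agentic AI browser for mobile from Perplexity that uses cross-tab summarization, voice interaction, and AI agents that carry out tasks to address fragmented information, tedious operations, and inefficient multitasking on mobile.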

iOS

Artificial Intelligence

Search

AI browser

Mobile smart assistant

Information aggregation

Voice control

Agentic AI

Productivity tools

Ad blocking

Perplexity

Mobile work

Cross-tab management

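User comment summary: Users are broadly excited about the mobile release, find the design excellent, and think it could replace Safari. Core feedback: the experience is smooth and fixes the clunkiness of earlier AI browsers, the AI agent is powerful and integrates deeply with personal workflows, and users look forward to using it for content creation and similar scenarios. No notable negative issues.

AI Sharp Take

The release of Comet for iOS is far more than "porting" a desktop AI browser to mobile. Its claimed "agentic" core tries to open up "proactive service" rather than "passive response" in mobile browsing, a red-ocean market standardized by giants and with entrenched user habits. Traditional mobile browsers stopped evolving at performance and interface, while Comet bets on "post-browsing" scenarios: instant consolidation after information overload, proactive linking of information across pages, and agent execution based on user intent. This is essentially a redefinition of the "browser", from a window for viewing information to an intelligent workbench for processing it.

Its real challenges and value lie in the same place. First, given sensitive permissions and privacy concerns on mobile, whether "agent execution" can earn deep user trust and be granted enough permissions is a precondition for scale. Second, users in the comments mention "training my Perplexity agent", which reveals both a potential moat and a ceiling: the product's value depends heavily on users investing time in personalization, which may attract efficiency enthusiasts but sets a barrier for mainstream users. Finally, on small screens and in fragmented usage, it remains to be seen whether complex agent interactions create new cognitive load rather than the promised "hands-free" experience.

With this product, Perplexity is moving from a "Q&A AI search engine" toward an "operating-system-level AI agent". Comet for iOS is not just a feature release but the mobile arm of its ecosystem ambitions. It is no longer content to answer users' questions and instead tries to act on their behalf in the digital world. Success depends on finding the balance between "showy automation" and "stable, reliable infrastructure", so that the AI agent goes from an impressive demo to a calm, powerful digital extension users rely on every day.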

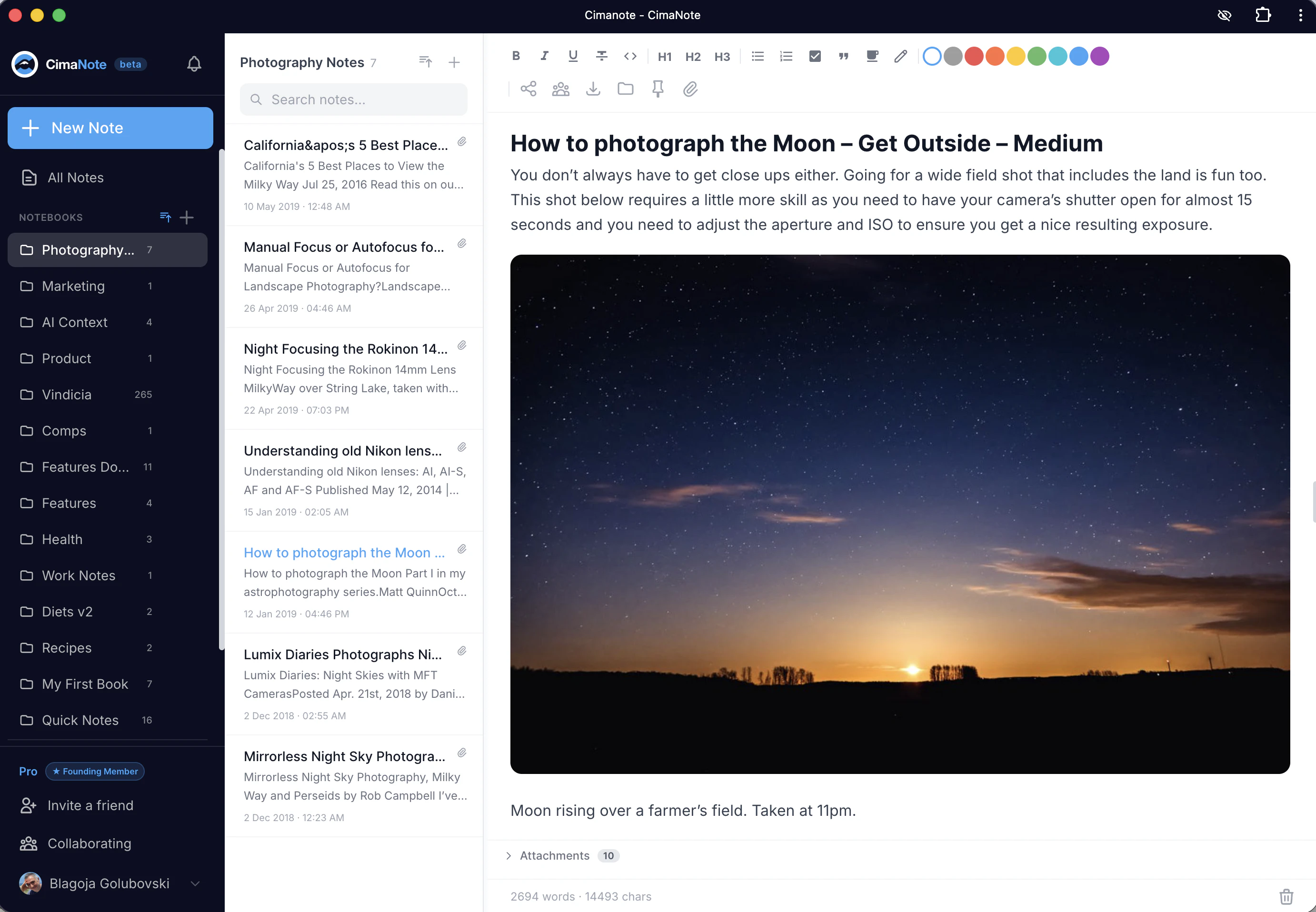

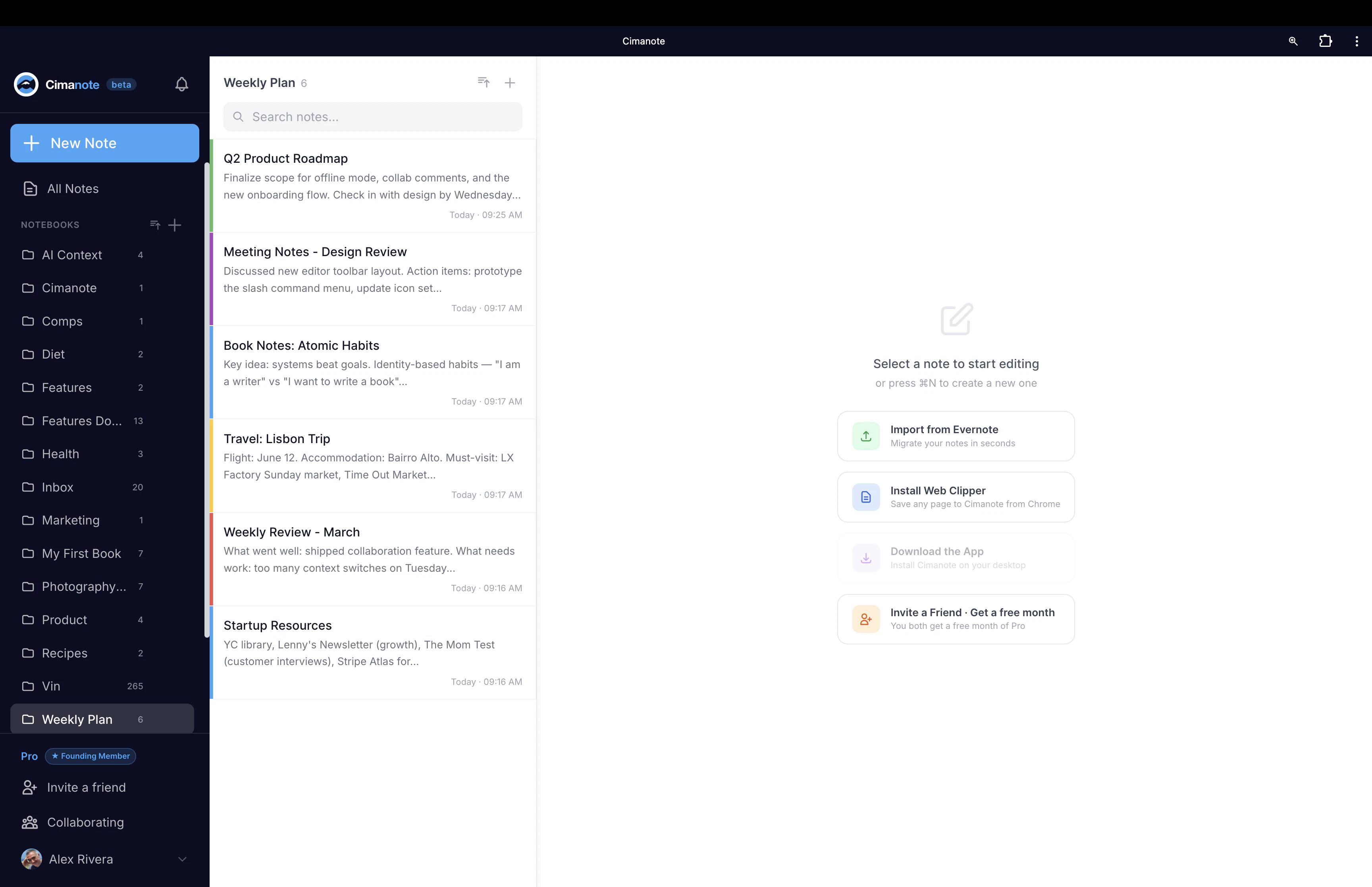

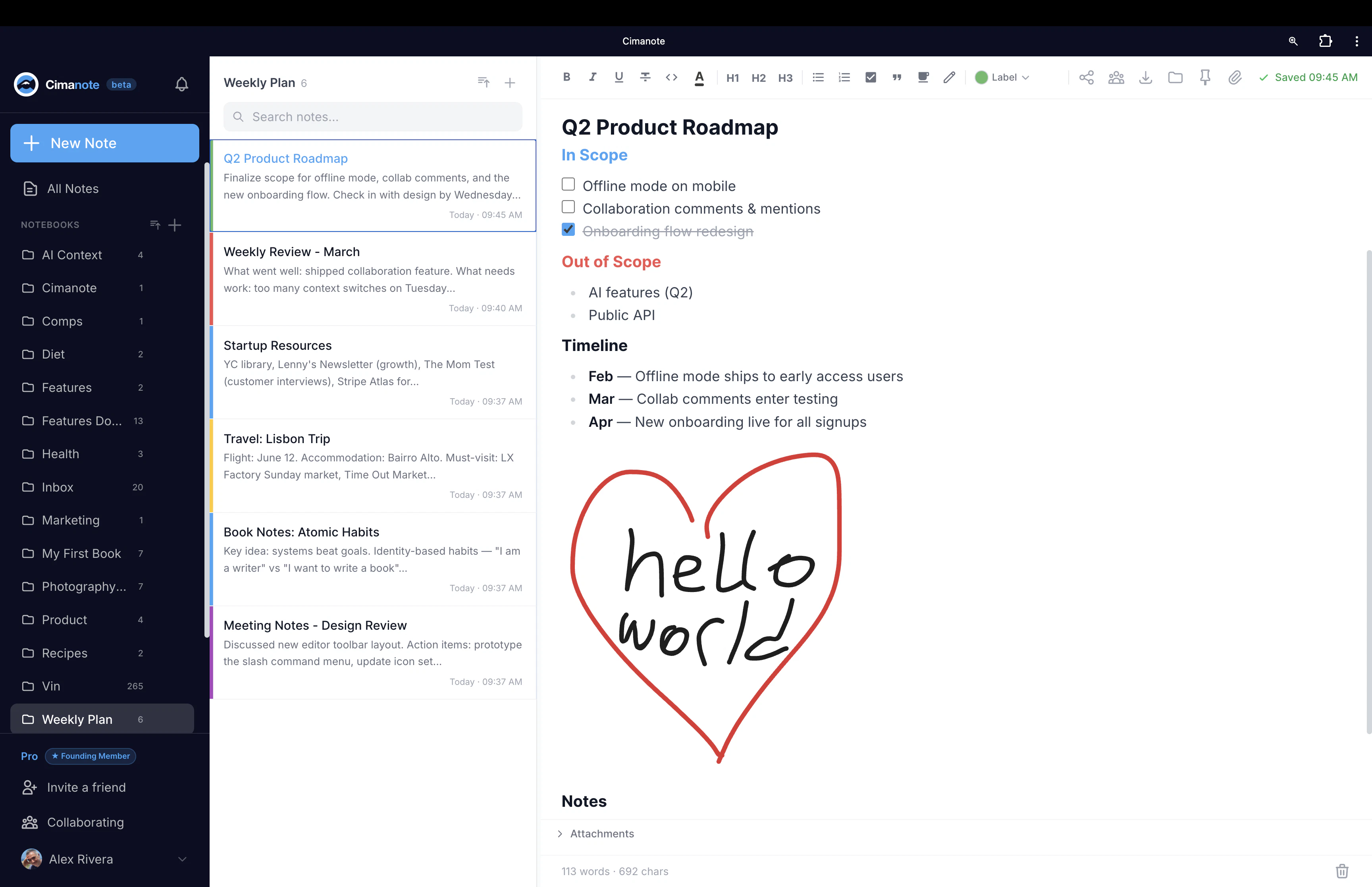

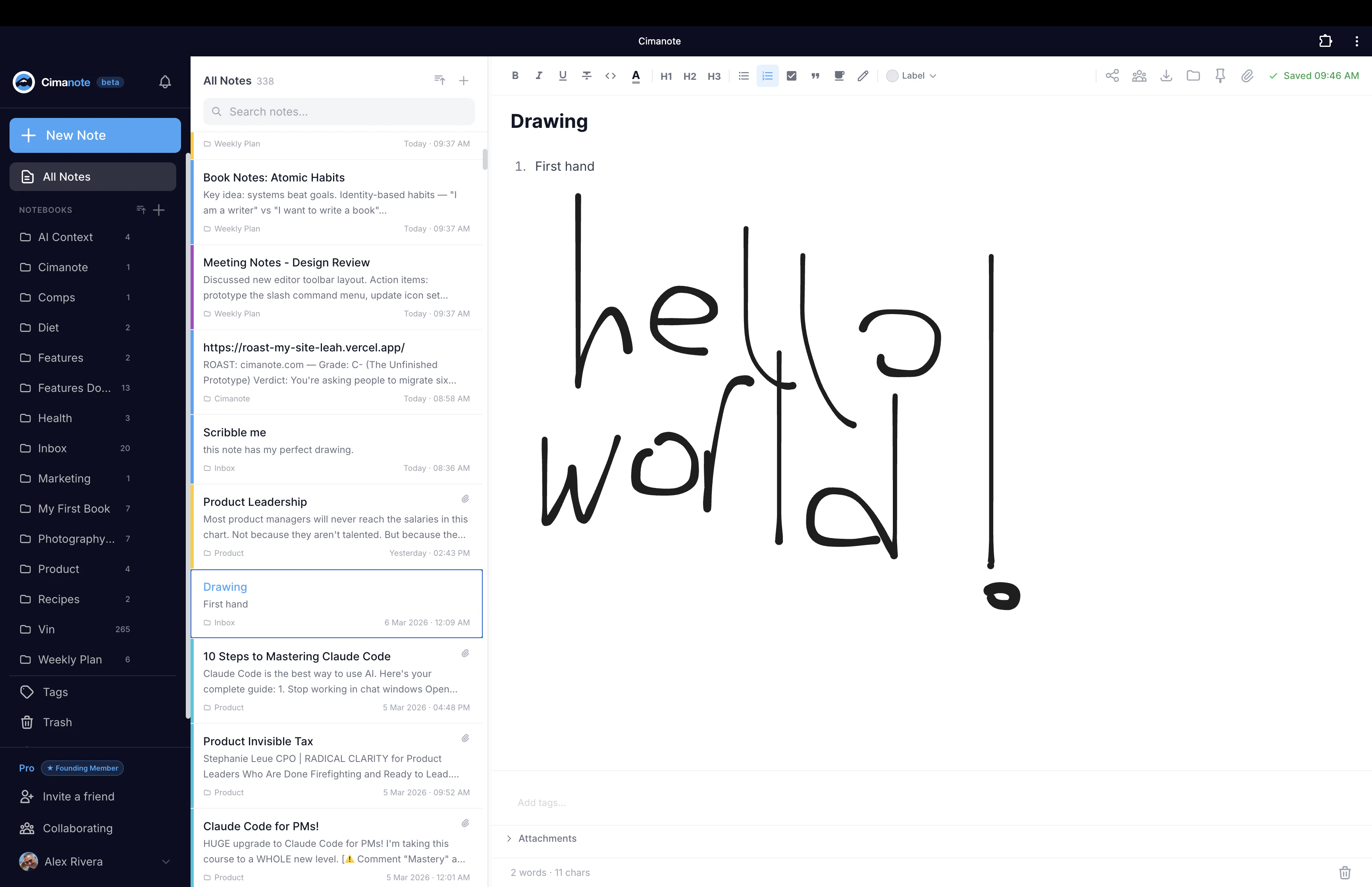

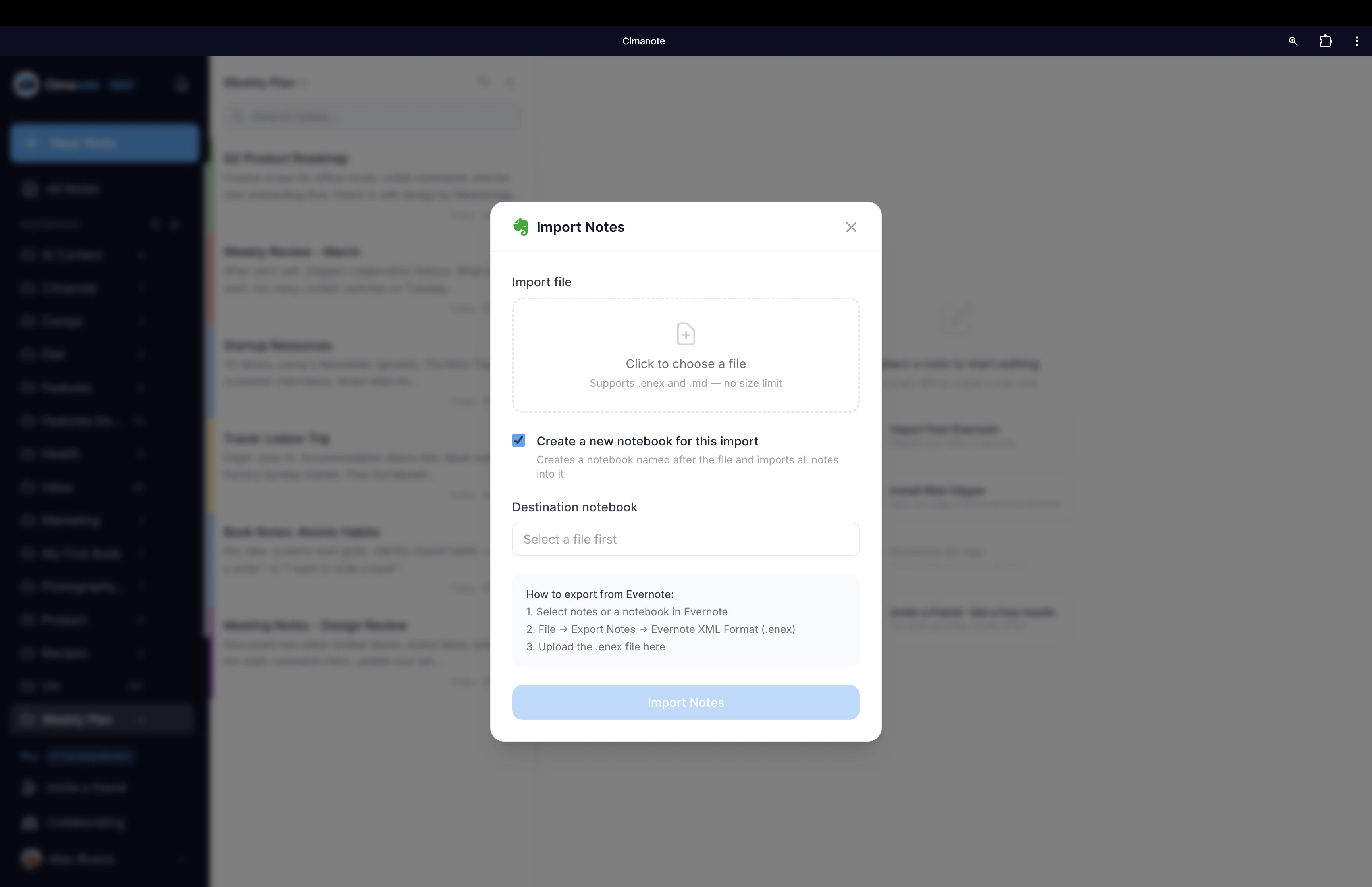

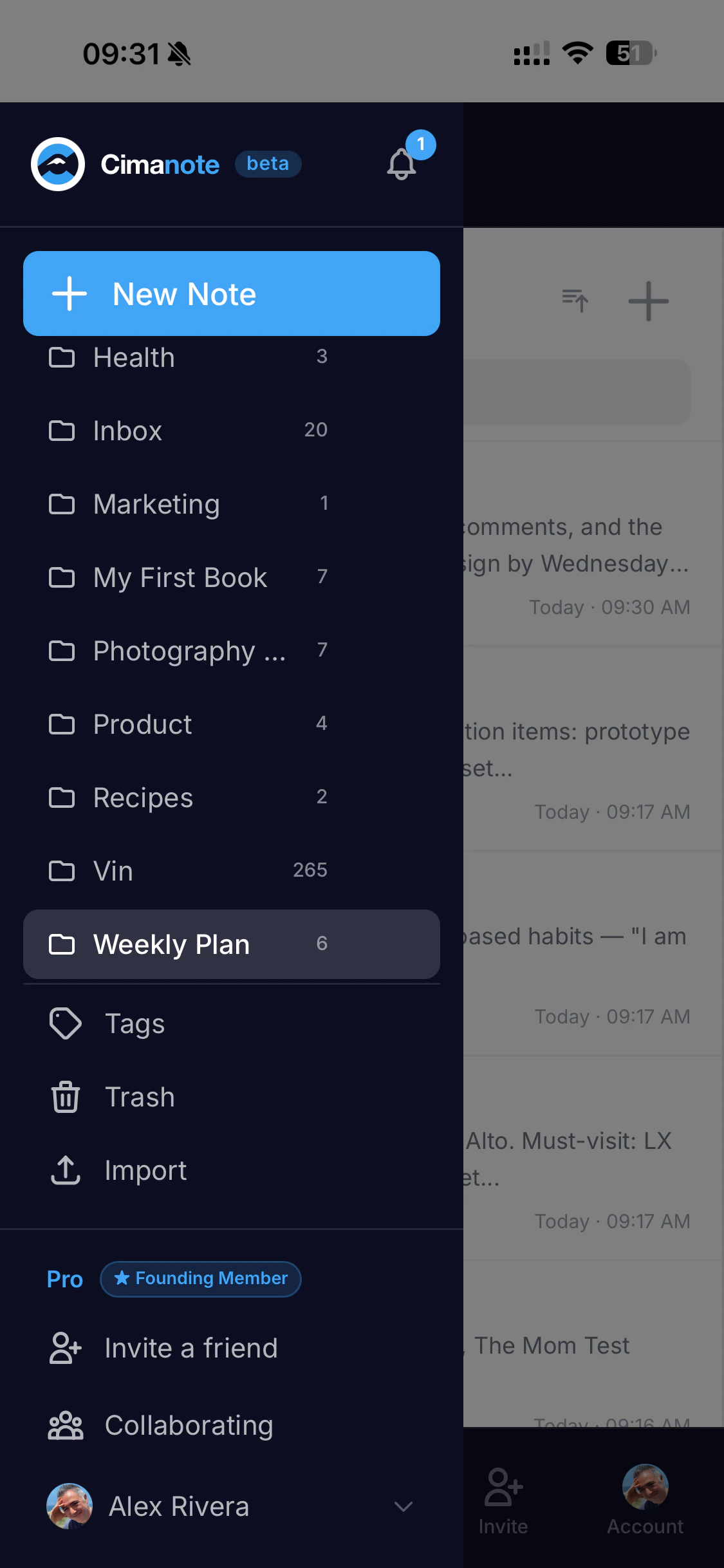

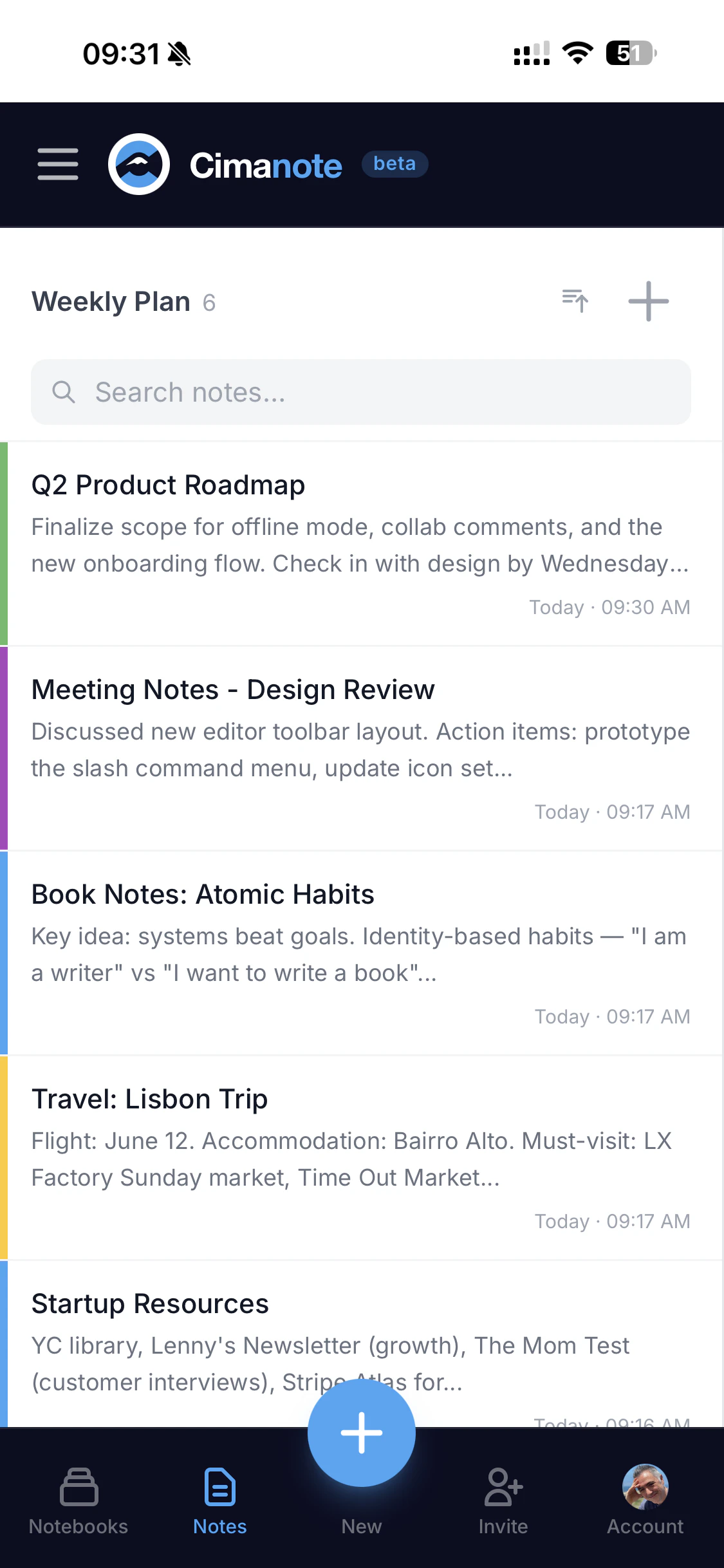

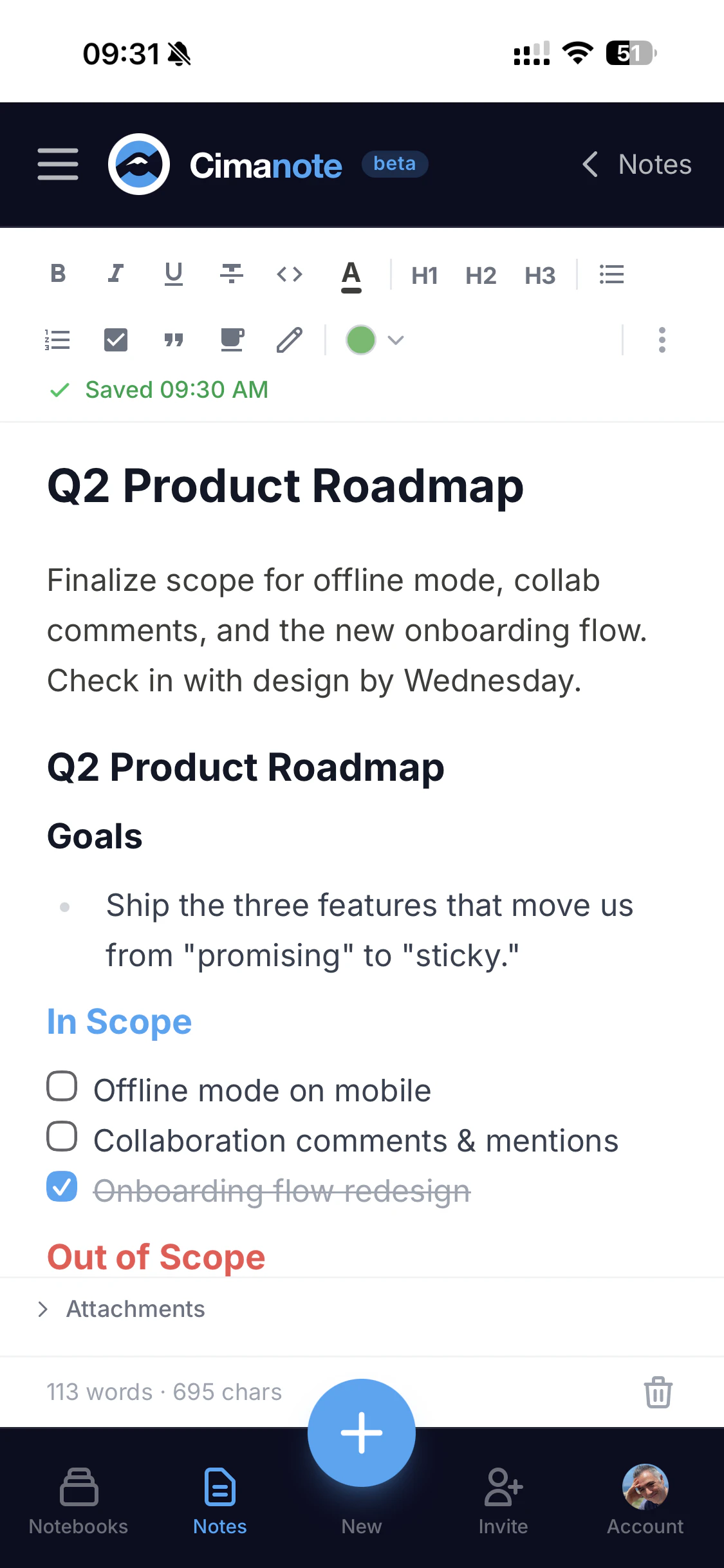

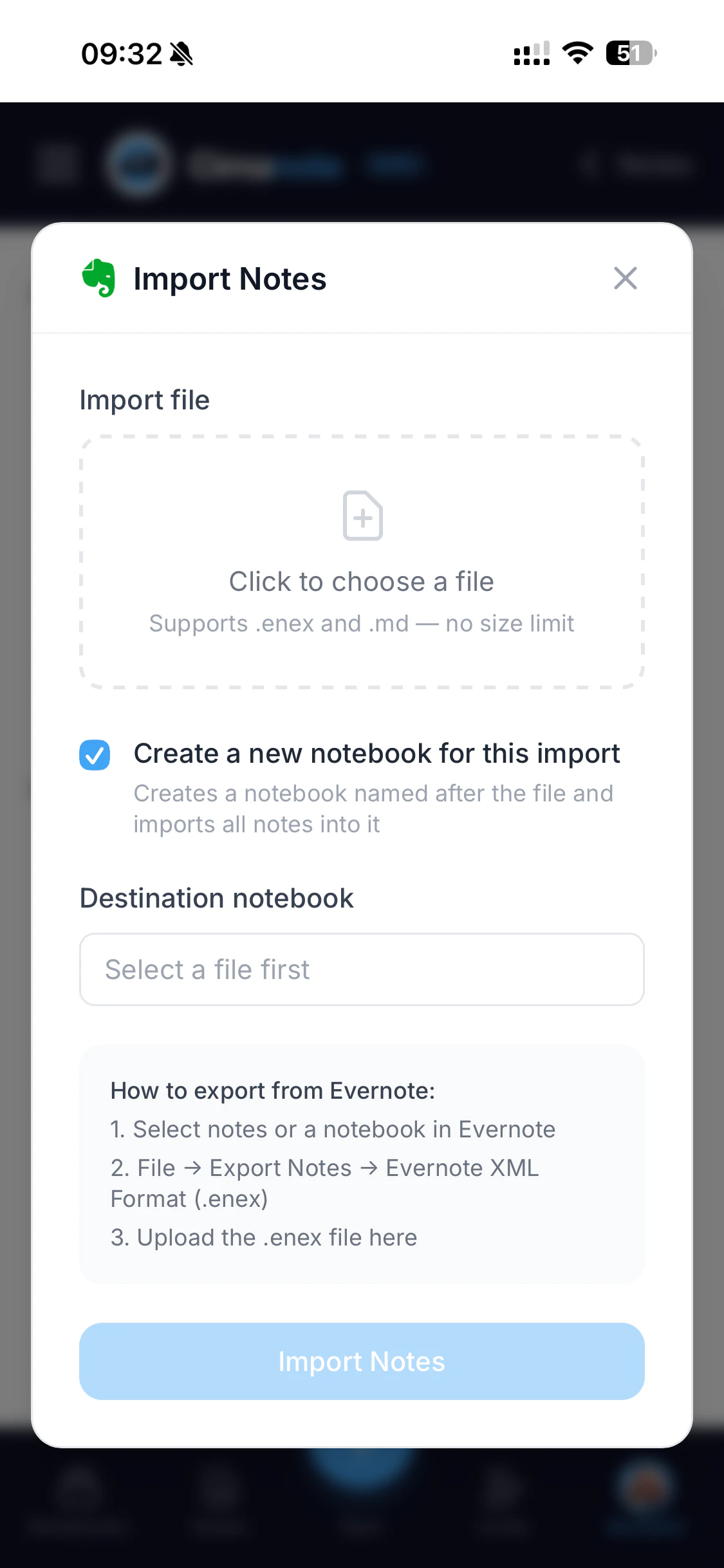

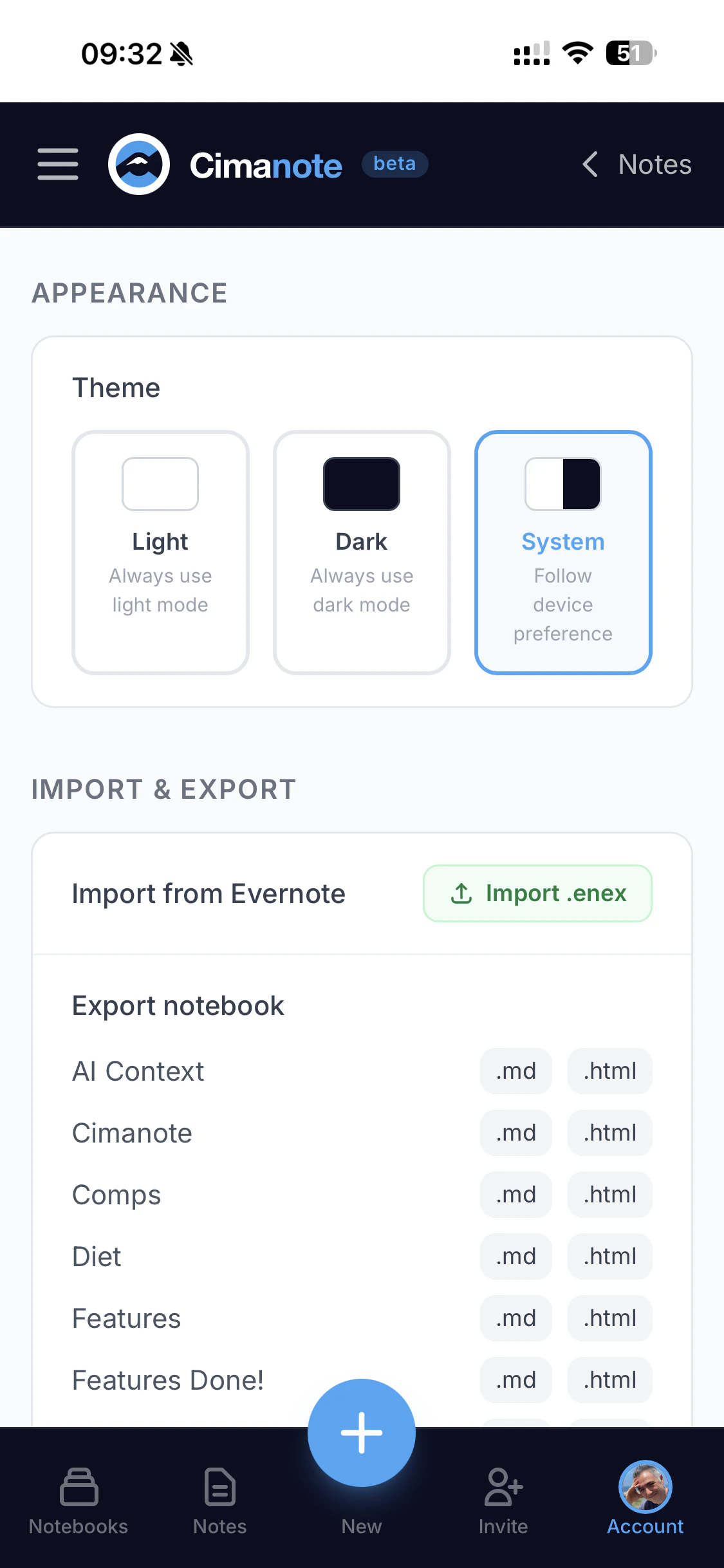

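One-line summary: A fast, clean, bloat-free note-taking app that offers lossless Evernote import, all platforms with no device limits, and real-time collaboration, addressing the core pain point of users looking for a reliable alternative after the veteran note app raised prices, downgraded plans, and slowed down.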

Productivity

Notes

SaaS

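Note-taking app

Evernote alternative

Productivity tools

Data migration

Cross-platform

Real-time collaboration

Progressive web app

Minimal design

Subscription

User data sovereignty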

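User comment summary: Users broadly praise the clean, fast experience and the flawless import of Evernote data (including attachments), which they say removes the biggest obstacle to migrating. Main questions and suggestions focus on missing features such as search (PDF/OCR), table support, and dictation/voice input. The founder responded actively, laying out the roadmap and three non-negotiable principles: speed, pricing integrity, and data exportability.

AI Sharp Take

Cimanote's debut is less a new product launch than a precise act of revenge for Evernote's "betrayal" of its core users. It has keenly captured a key market mood: deep fatigue with tools that become "alienated" from their purpose. When a tool meant to extend your brain becomes something you have to laboriously manage, a sense of betrayal follows.

The product's real value goes well beyond reproducing "fast and clean" features. It lies in the "zero-friction migration" trust system it builds. Lossless import (including attachments) is not merely a technical showcase but a strong psychological signal: it respects users' accumulated history and migration cost and directly attacks the core fear of the SaaS era, being bound by "data chains". This makes it stand out from the many "yet another note app" products and speaks directly to what Evernote refugees care about most.

Its challenges are just as clear. In positioning, it tries to find the sweet spot of "professional notes" between the minimalism of Apple Notes and the do-everything scope of Notion, a lane that exists but may be narrow. Its current reliance on a PWA enables agile cross-platform deployment, but it may still be a weakness for users who want the best native experience and system integration. User questions about advanced search, tables, and similar features also foreshadow the permanent tension between "simplicity" and "feature completeness".

The founder's three non-negotiable principles of speed, pricing integrity, and data sovereignty are the product's sharpest statement and its biggest future test. In essence this goes against the SaaS industry's default script of "grow, monopolize, extract". Whether it can hold to these principles under capital and growth pressure will decide whether Cimanote becomes a respected niche brand or one more follower of the model it vowed to oppose. Its arrival is the result of users voting with their feet, and its continued success would be a public test of whether product ethics and user trust can be a core competitive advantage.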

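One-line summary: PixelClaw is a pixel-art animated crab that lives in the Mac Dock and offers light, fun companionship while users wait for AI coding or builds to finish, easing the boredom of waiting.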

Productivity

Developer Tools

Lifestyle

Desktop pet

Mac app

Dock tool

Interactive companion

Pixel art

Light entertainment

Better waiting

Productivity toy

Emotional design

Open-source project?

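User comment summary: Users broadly find the product "cute" and "cool" and say it eases waiting anxiety. Core suggestions include adding interactions (such as petting) and a growth system (like a virtual pet). The developer responds actively and the community atmosphere is good. Some users are reminded of classic menu-bar Easter eggs, and nostalgia is evident.

AI Sharp Take

On the surface PixelClaw is a "silly side project", but it precisely hits a hidden pain point in modern developer workflows: the fragmented time spent passively waiting. It is not an efficiency tool. Instead it tries to add a touch of anthropomorphic warmth and instant feedback to cold, asynchronous productivity processes such as AI coding.

Its real value is not in how complex the crab's behavior is, but in how it turns the user's attention from "progress bar anxiety" into low-effort, soothing observation and micro-interaction. This is essentially an extremely lightweight exercise in "emotional UI", turning the Dock, the "traffic hub" of system interaction, into a terrarium for a digital creature. The calls in the comments to "raise it and watch it grow" reveal users' desire for emotional connection with tool software: we want software not only to complete tasks but also to let us form a relationship with our digital work environment.

Its risks and potential are equally clear. As a novelty its popularity can fade quickly, and it will need continuous behavior updates and deeper interaction (such as the Tamagotchi-style raising mentioned in the comments) to hold long-term interest, or it will become a one-time amusement. It also cleverly rides on the specific "Claude Code" scenario for distribution, which is smart growth hacking but may limit how its audience is imagined. Whether it can evolve from a "waiting companion" for one AI tool into a general, customizable emotional desktop component will decide whether it stays a niche toy or opens a subtle category of "desktop vital signs". In an age when everything can be made intelligent, this kind of "useless but fun" digital presence may be a small antidote to the alienation of tool rationality.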

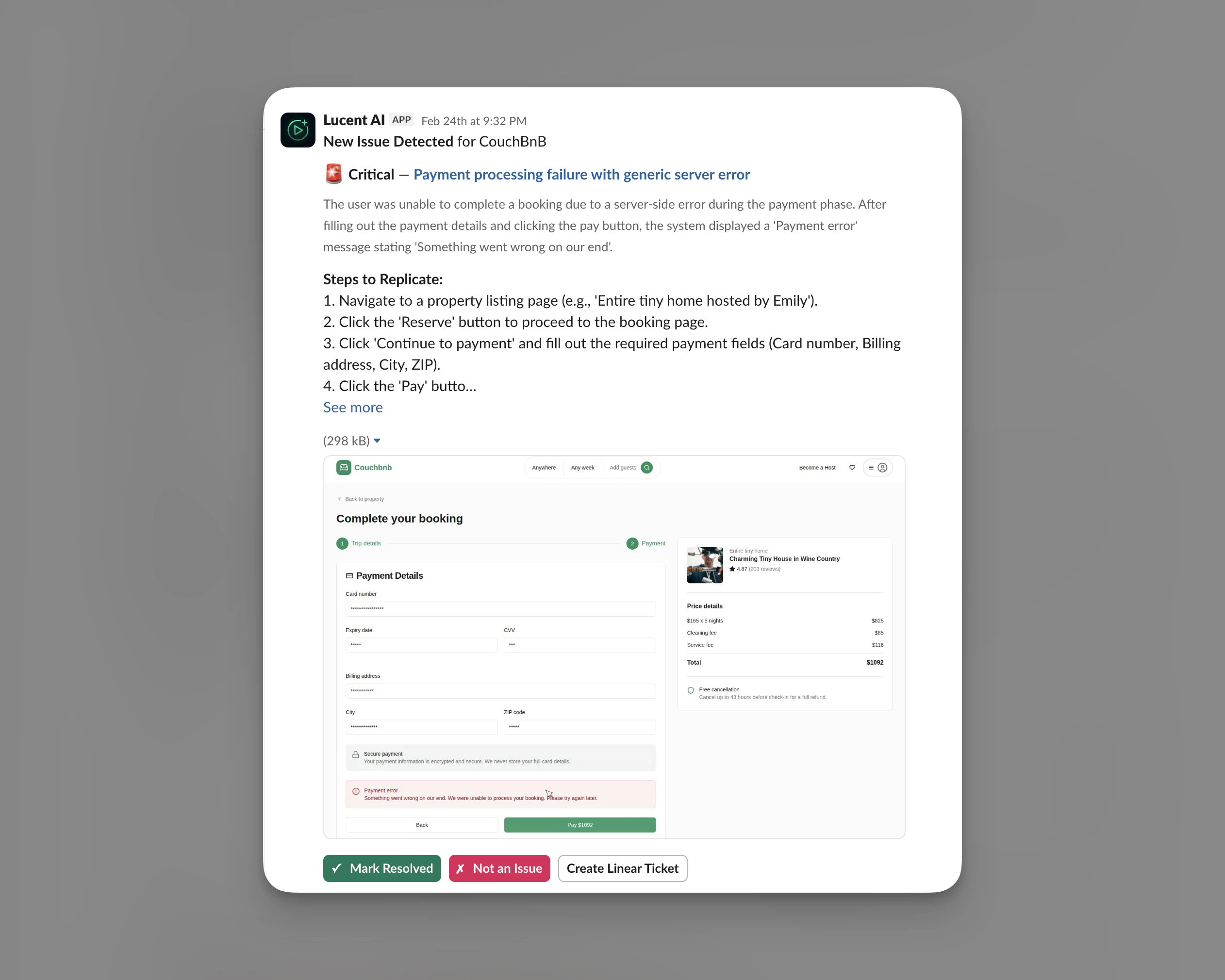

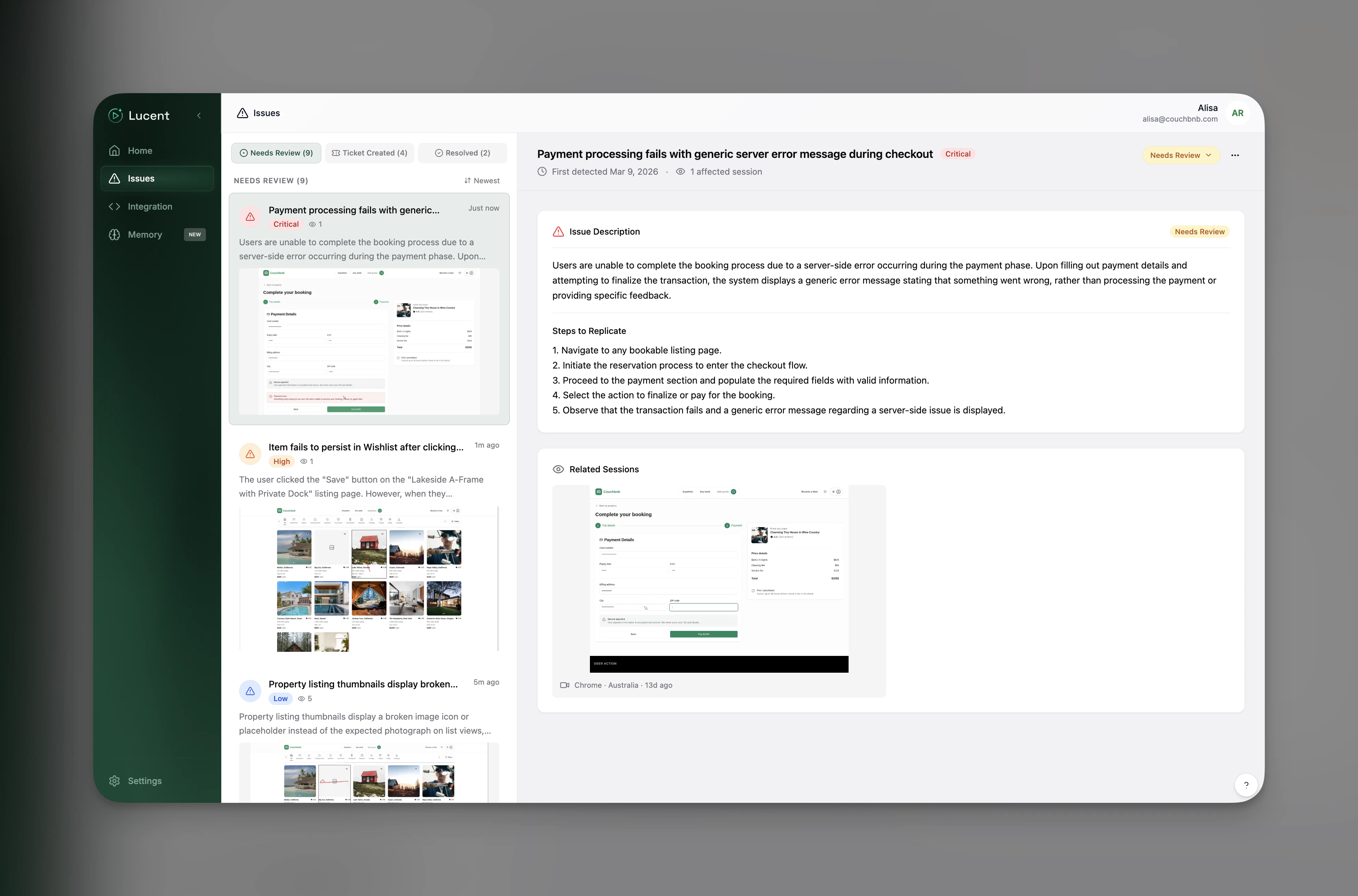

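One-line summary: Lucent is a tool that has AI watch user session replays in real time and automatically detect product bugs and UX problems, addressing the pain point that development teams have no time to manually sift through large volumes of replays and therefore miss production issues.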

User Experience

Developer Tools

Artificial Intelligence

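AI bug detection

Session replay analysis

User experience monitoring

Real-time alerts

Product quality assurance

Slack integration

PostHog ecosystem

YC-backed

Engineering efficiency tools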

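User comment summary: Users broadly agree it solves the core pain point that "replay data is valuable but nobody watches it", and praise the Slack/Linear integrations for making alerts actionable. Main questions: whether mobile is supported (mobile web already is), whether other replay tools are supported (PostHog only for now), and whether it can distinguish technical bugs from UX problems (it handles both, with the latter delivered as a weekly report). One suggestion is to feature strong customer testimonials.

AI Sharp Take

Lucent enters a classic "rich in data, poor in insight" scenario. Its real value is not the act of "watching replays" but turning an unstructured, high-entropy stream of user behavior video into structured events that can be attributed, routed, and acted on. This is essentially an engineering problem of "signal denoising" and "prioritization".

The product wisely chose to start from PostHog rather than build its own replay SDK, which lowers onboarding cost for early users and lets it validate the AI detection model quickly. Its dual-track output of "real-time bugs plus periodic UX reports" shows the team has a realistic sense of the difference between "severity" and "importance": crashes need an immediate response, while experience friction is better reviewed in batches.

Its long-term challenges are equally sharp. The first is the limit of "explanatory power". AI can highlight that "the user clicked here five times in a row", but can it really tell whether that is a design flaw of "missing button feedback" or a psychological state of "user hesitation"? The second is the classic problem of false positives and alert fatigue. Although it claims to provide severity scores, defining "anomalous" in a complex business flow itself requires deep domain knowledge. Finally, its business model depends heavily on an upstream data source (PostHog), which leaves its niche somewhat fragile.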
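The "clicked here five times in a row" signal can be made concrete with a minimal sketch of a rage-click heuristic. This is not Lucent's method; the event shape, the function name `find_rage_clicks`, and the thresholds are assumptions chosen for illustration. It also shows the limit noted above: the heuristic flags the pattern but says nothing about why the user did it.

```python
from typing import NamedTuple


class Click(NamedTuple):
    t: float      # seconds since session start
    target: str   # element identifier (hypothetical format)


def find_rage_clicks(clicks, min_clicks=5, window=2.0):
    """Return targets that got at least `min_clicks` clicks within `window` seconds."""
    # Group click timestamps by target, in chronological order.
    by_target = {}
    for click in sorted(clicks):
        by_target.setdefault(click.target, []).append(click.t)

    flagged = []
    for target, times in by_target.items():
        start = 0
        for end in range(len(times)):
            # Shrink the sliding window until it spans at most `window` seconds.
            while times[end] - times[start] > window:
                start += 1
            if end - start + 1 >= min_clicks:
                flagged.append(target)
                break
    return flagged


# Hypothetical session: five fast clicks on "buy", two slow clicks on "help".
session = [Click(0.0, "buy"), Click(0.3, "buy"), Click(0.6, "buy"),
           Click(0.9, "buy"), Click(1.2, "buy"),
           Click(5.0, "help"), Click(9.0, "help")]
print(find_rage_clicks(session))
```

Choosing `min_clicks` and `window` is exactly where domain knowledge enters: thresholds that are too loose produce the alert fatigue the review warns about.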

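Overall, Lucent is not another "monitoring dashboard". It tries to be the "first responder" in the development process. Its success depends not on whether AI "sees" more accurately than people, but on whether it can deliver the right problem to the right person at the right time with little enough noise. If it works, it is a real step up in engineering efficiency. If it falls into a "boy who cried wolf" trap, it is just another noise source that itself needs monitoring.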

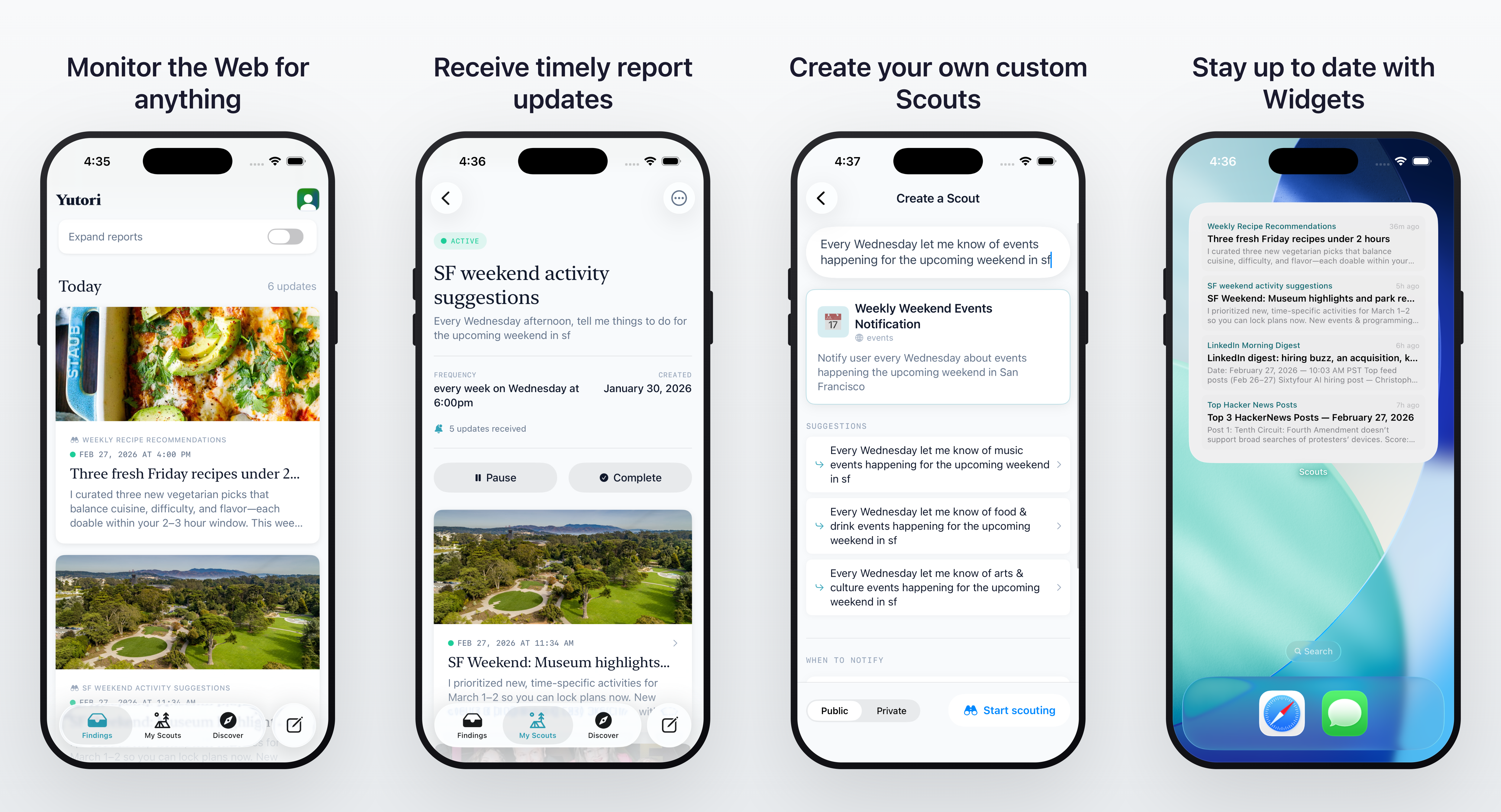

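One-line summary: Scouts is an always-on AI agent app for iOS that proactively monitors the web and pushes high-value notifications, addressing the pain point of passively and inefficiently getting key information (competitor activity, price changes, news) on mobile.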

Productivity

Artificial Intelligence

Search

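AI agents

Web monitoring

Push notifications

Competitor tracking

Market intelligence

Mobile efficiency tools

Personalized alerts

Noise filtering

Automated research

iOS app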

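User comment summary: Users broadly agree it targets the core pain points of "information overload" and "alert fatigue" and hope its AI can filter noise effectively. The main question is how the AI balances signal and noise (preset rules or self-learning), and users suggested extensions such as email and calendar integration. The developer's reply stresses that its LLM-based agents can be tuned through natural-language feedback.

AI Sharp Take

The release of Scouts for iOS is essentially an AI-driven rebuild of the "push notification" paradigm. It targets not a lack of information but a lack of "effective attention" in an age of information overload. Traditional alert tools (such as Google Alerts) fail because of their mechanical matching logic, which produces floods of low-relevance alerts and eventually gets them abandoned. Scouts' claimed "high signal" and "noise reduction" are its core value proposition, and also its biggest challenge and the thing most in need of proof.

Its real innovation is not "monitoring" but "understanding and judging". With LLM-driven agents, it tries to understand the fuzzy intent a user sets (such as "monitor competitor activity") and decide on its own which new information on the web is relevant and important enough to trigger a push. This moves from "keyword matching" to "semantic and intent matching". The users' question about the "signal/noise trade-off" goes to the heart of it: the product's success depends entirely on how accurately its agents judge in specific scenarios. The developer's reply reveals the tuning mechanism, reinforcement learning from users' email feedback on pushed reports, which is a pragmatic and critical feedback loop.

The potential risks are just as clear. First, "high signal" is highly subjective: competitor funding news that is valuable to one founder may be noise to another. Over-reliance on user feedback for tuning may narrow the agent's view and make it miss related information that could matter. Second, expanding from web to iOS moves the push scenario from a "dashboard you can work through" to a "lock screen that should not be disturbed", which places almost harsh demands on the precision and urgency of each push. A single misjudged notification could lead to an uninstall.

Overall, Scouts represents the right direction: turning passive, broad information pulling into proactive, intelligent pushing. But whether it can grow from "an interesting AI app" into "a reliable infrastructure-grade tool" depends on how consistently its agents perform across countless niche scenarios, and whether it can build users' full trust in "phone notifications". An iOS client alone cannot solve this. It is a long and unforgiving test of the underlying AI agent system.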

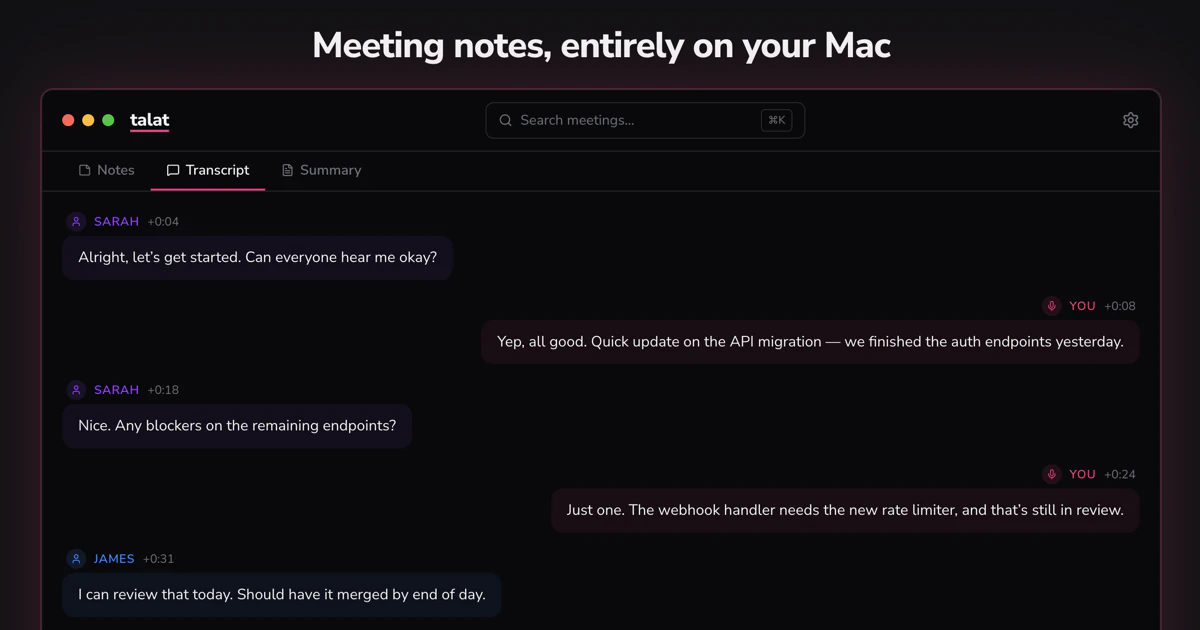

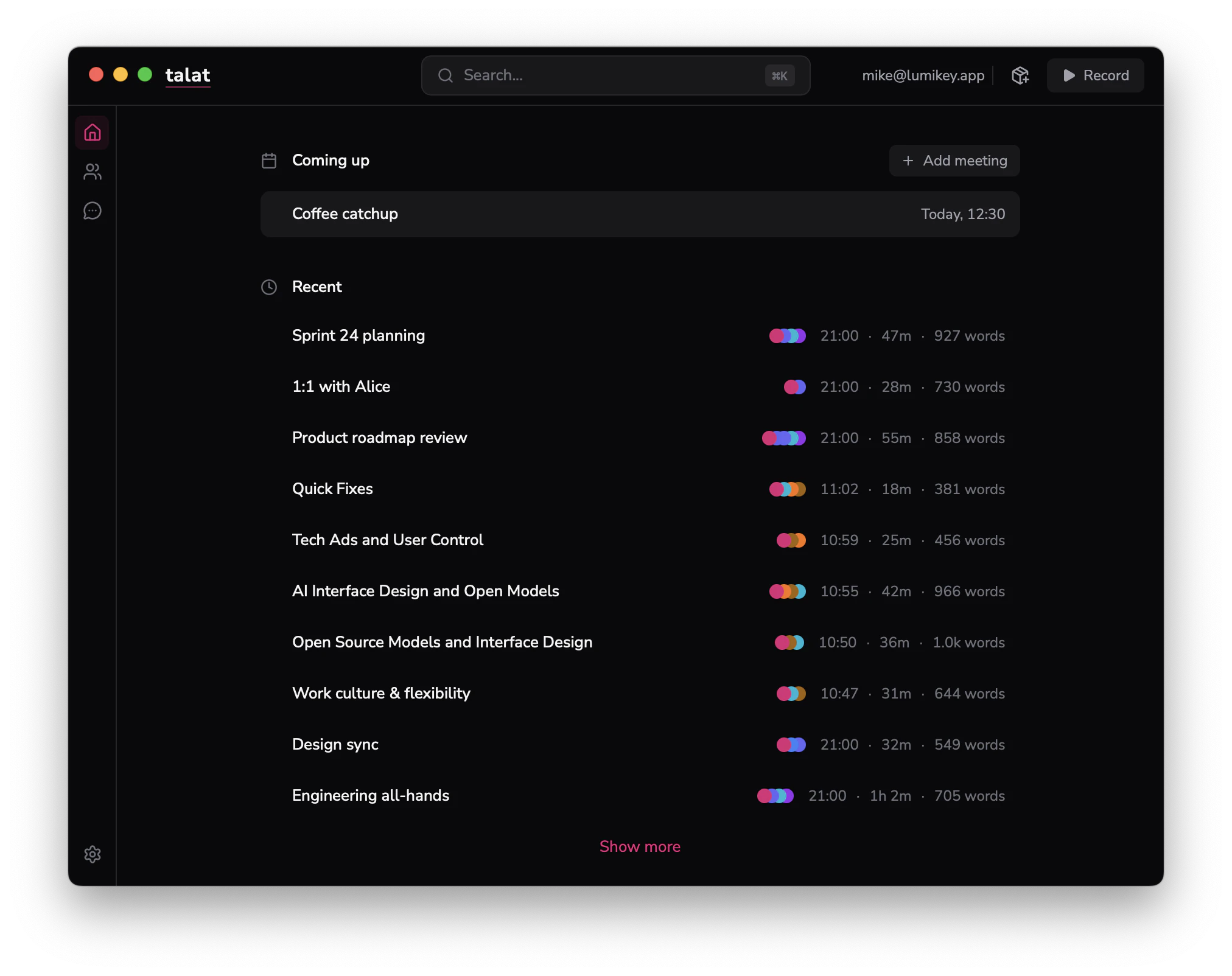

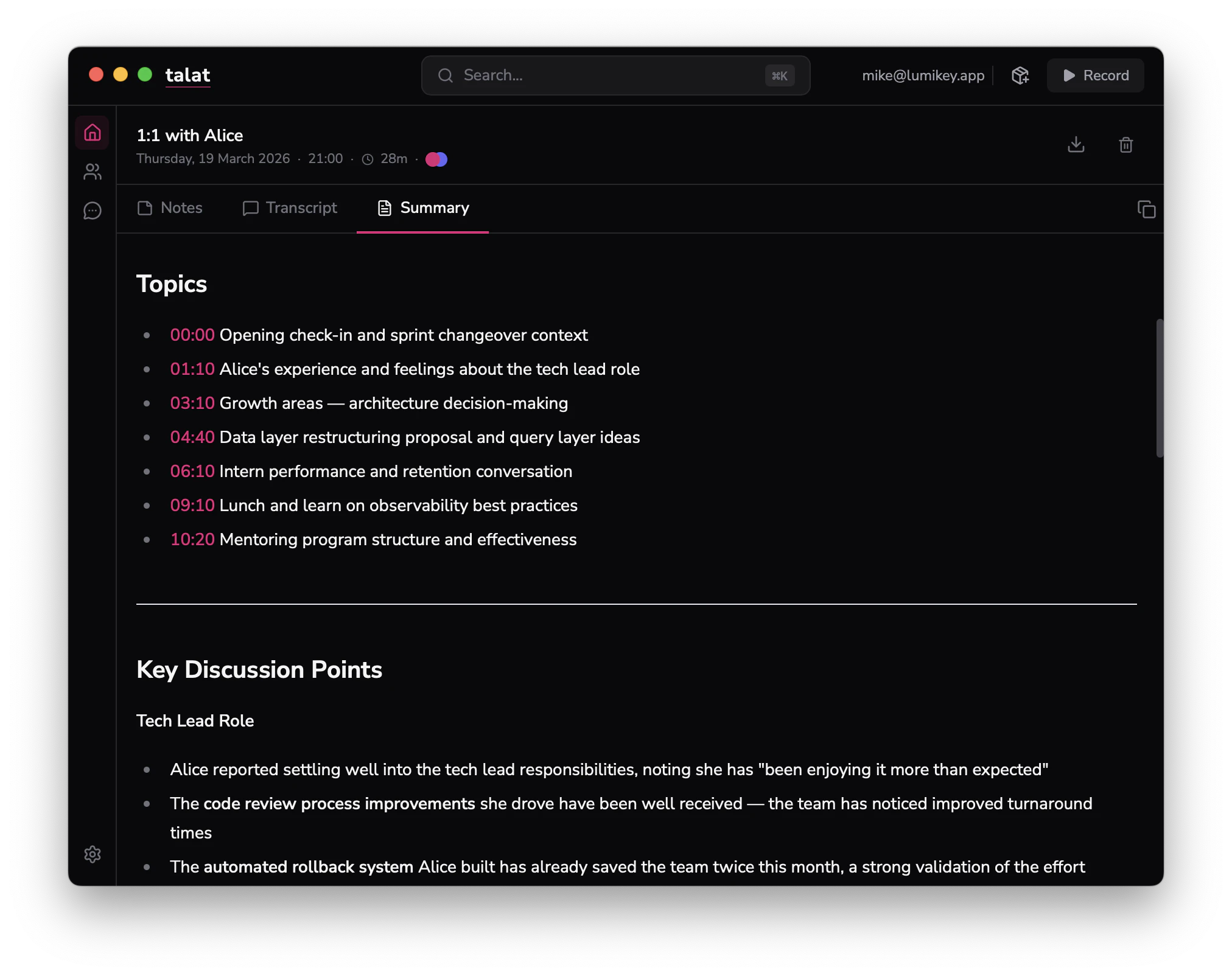

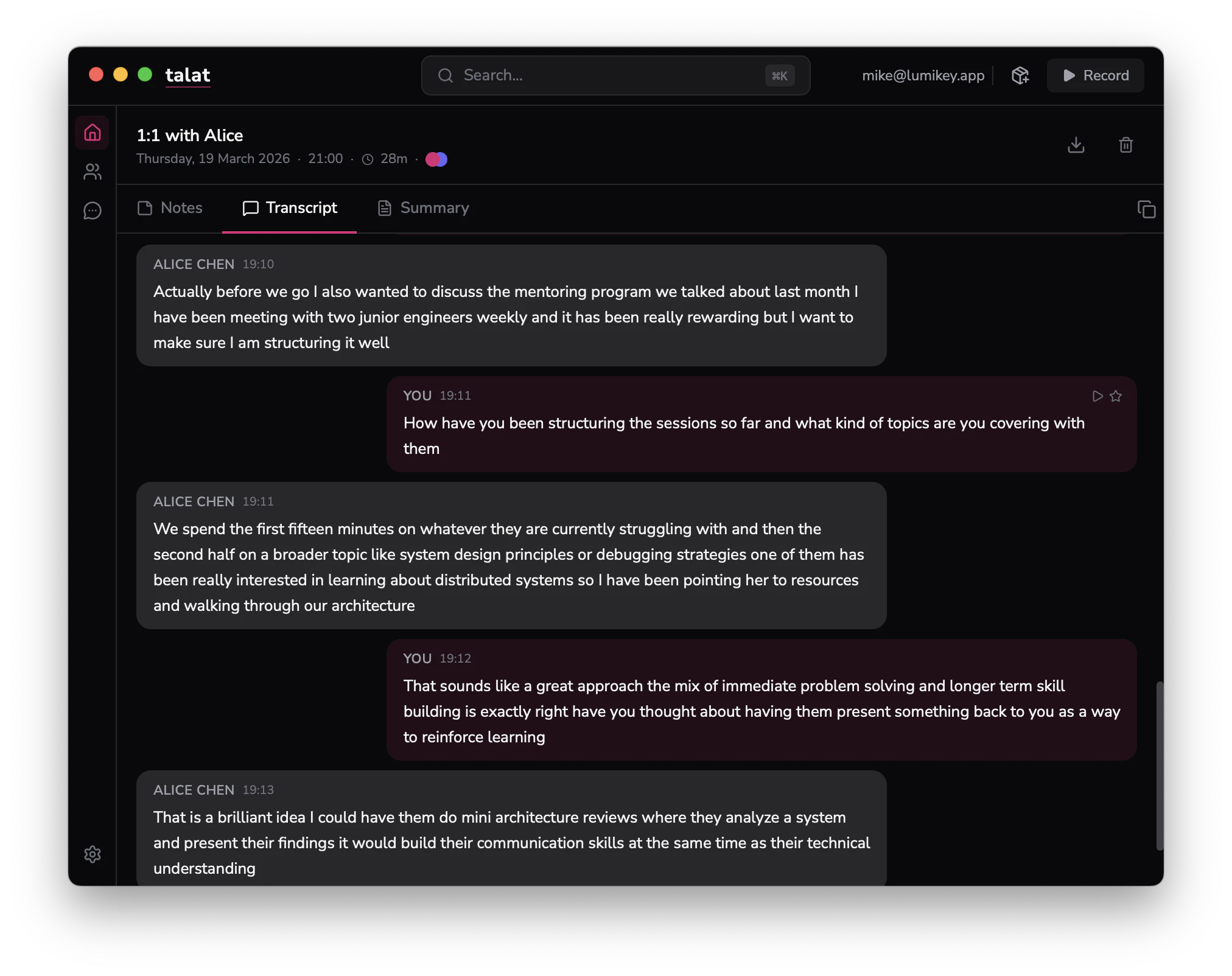

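One-line summary: talat is a local AI tool for Mac that transcribes meeting audio in real time and generates searchable notes, running entirely on device to address users' core concerns about privacy leaks and cloud subscription fees.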

Notes

Privacy

Meetings

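Local AI

Real-time transcription

Meeting notes

Privacy and security

macOS app

Offline processing

Neural Engine

Knowledge management

One-time purchase

Developer tools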

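User comment summary: Users strongly endorse its privacy protection (data never leaves the device) and one-time purchase model. Positive feedback covers transcription accuracy and advanced features such as custom LLM prompts and Obsidian export. Main questions and suggestions: the multilingual experience needs work, what the concrete migration path from competitors such as Granola is, and whether local processing affects how people feel about speaking in meetings.

AI Sharp Take

talat's tagline, "Realtime meeting notes that don’t leave your Mac", is a precise strike. What it sells is not better AI but distrust of the cloud AI business model. Its real value is not a technical breakthrough (local transcription on the Mac Neural Engine is a known path) but the way it captures and productizes a core tension in today's tech consumption: users' desire for AI assistants versus their fear of losing data sovereignty.

The positioning is highly strategic: rather than compete head-on with cloud tools such as Granola on feature completeness, it takes the stance of "privacy guardian" and "subscription rebel" to open a differentiated niche. It allows running in parallel with other tools, which lowers the barrier to trying it, and this gentle "complement, not replace" stance is in fact an efficient conversion funnel for a highly aware user group. Its custom LLM interface, webhooks, and MCP server support lift the product from a "notes tool" to a programmable local node for processing voice data, catering to developers and power users.

Its challenges are equally sharp. The cost of going local is a performance ceiling bound by device hardware, as shown by the rough speaker separation and weaker multilingual experience mentioned in the comments. This raises a fundamental question: in meeting notes, can users' insistence on "absolute privacy" keep outweighing their desire for a "better experience"? In team settings especially, pure local processing may become an obstacle to sharing and collaboration. The one-time purchase model also tests the sustainability of long-term R&D.

In essence, talat is an important exercise of the "local-first" movement in consumer AI apps. It may not replace mainstream cloud tools, but it gives the market a choice and forces the industry to re-examine the boundaries and cost of data processing. Its fate will be a key indicator of how the balance tips between users' willingness to pay for privacy and the convenience of AI.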

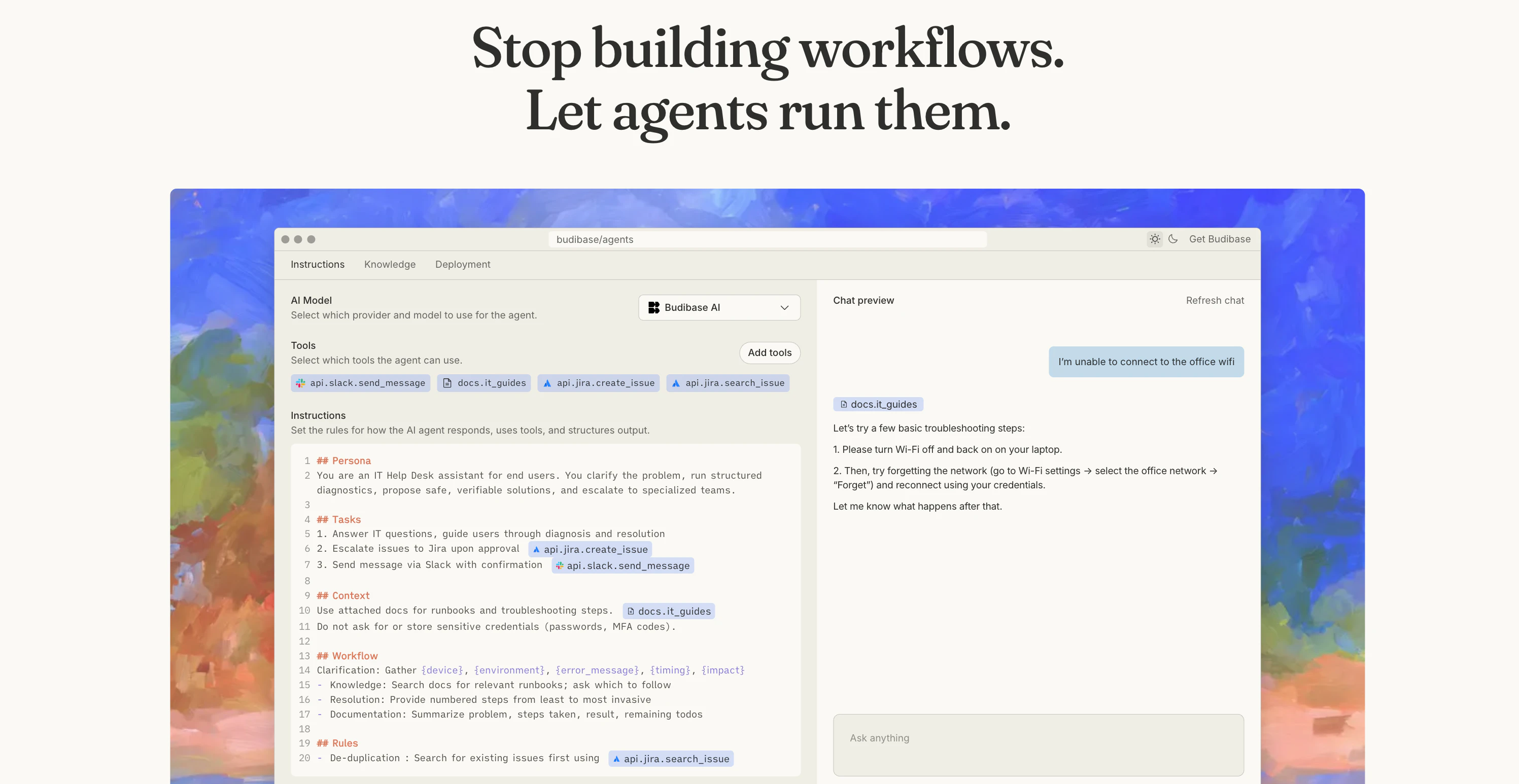

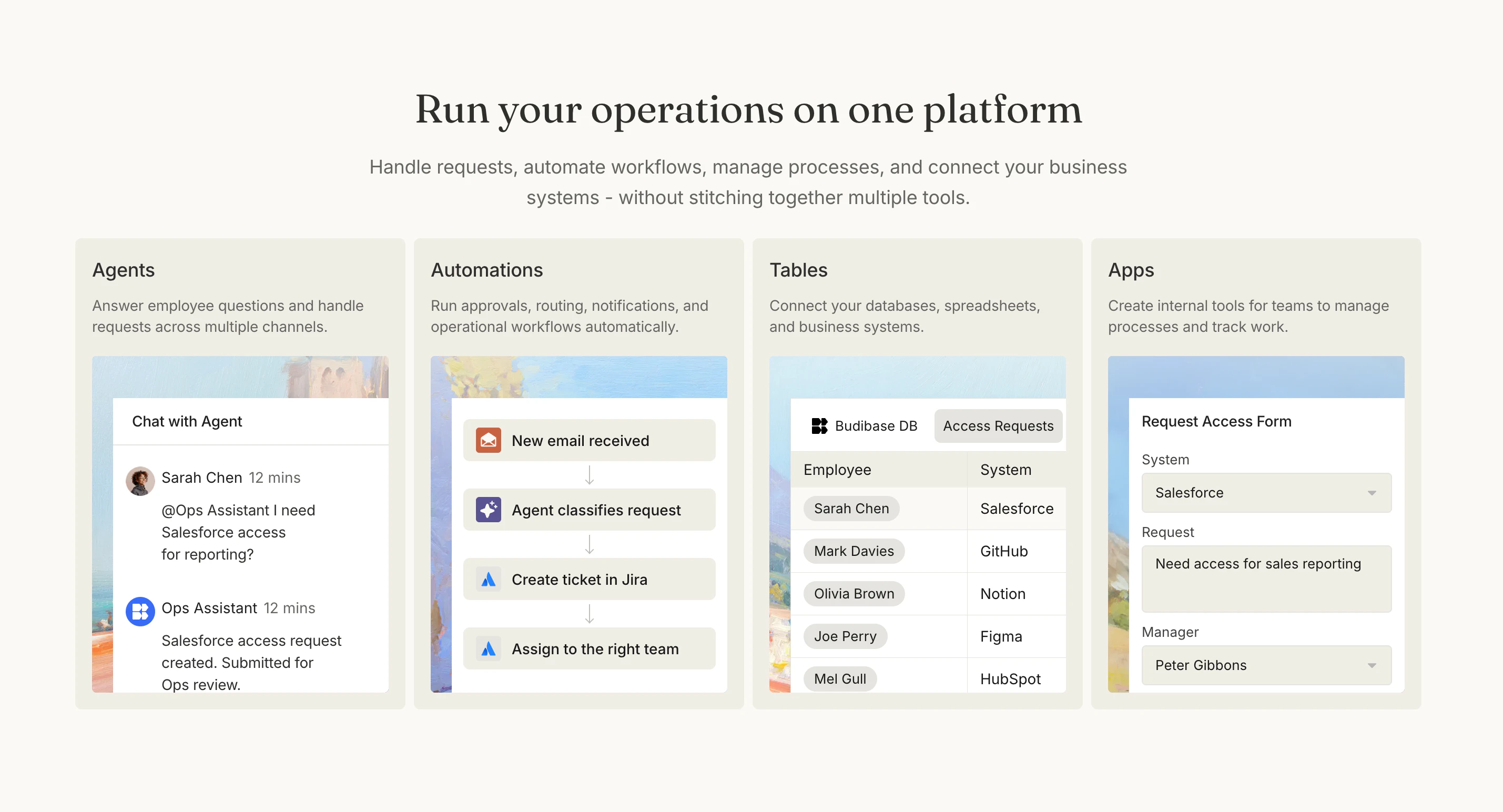

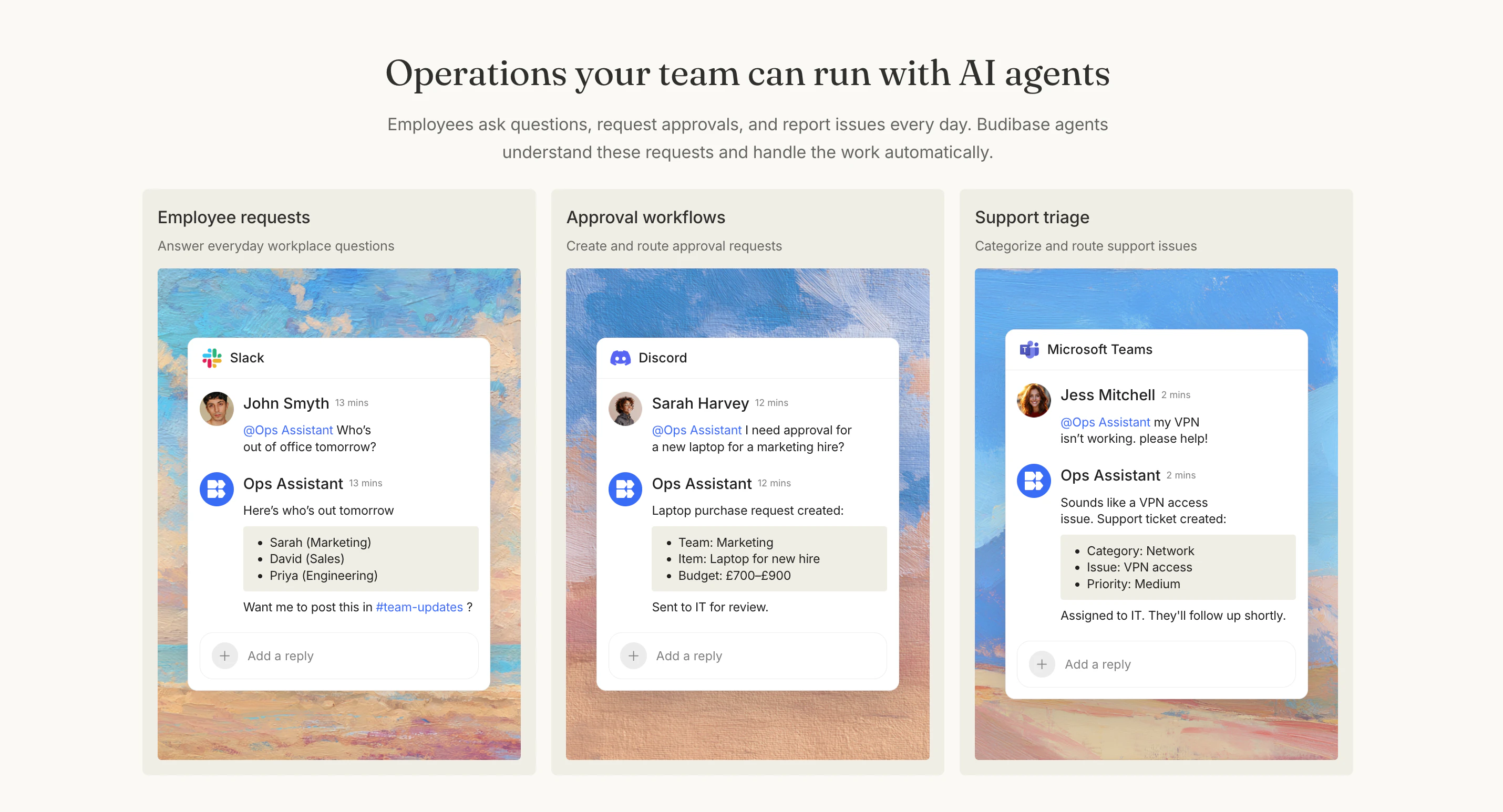

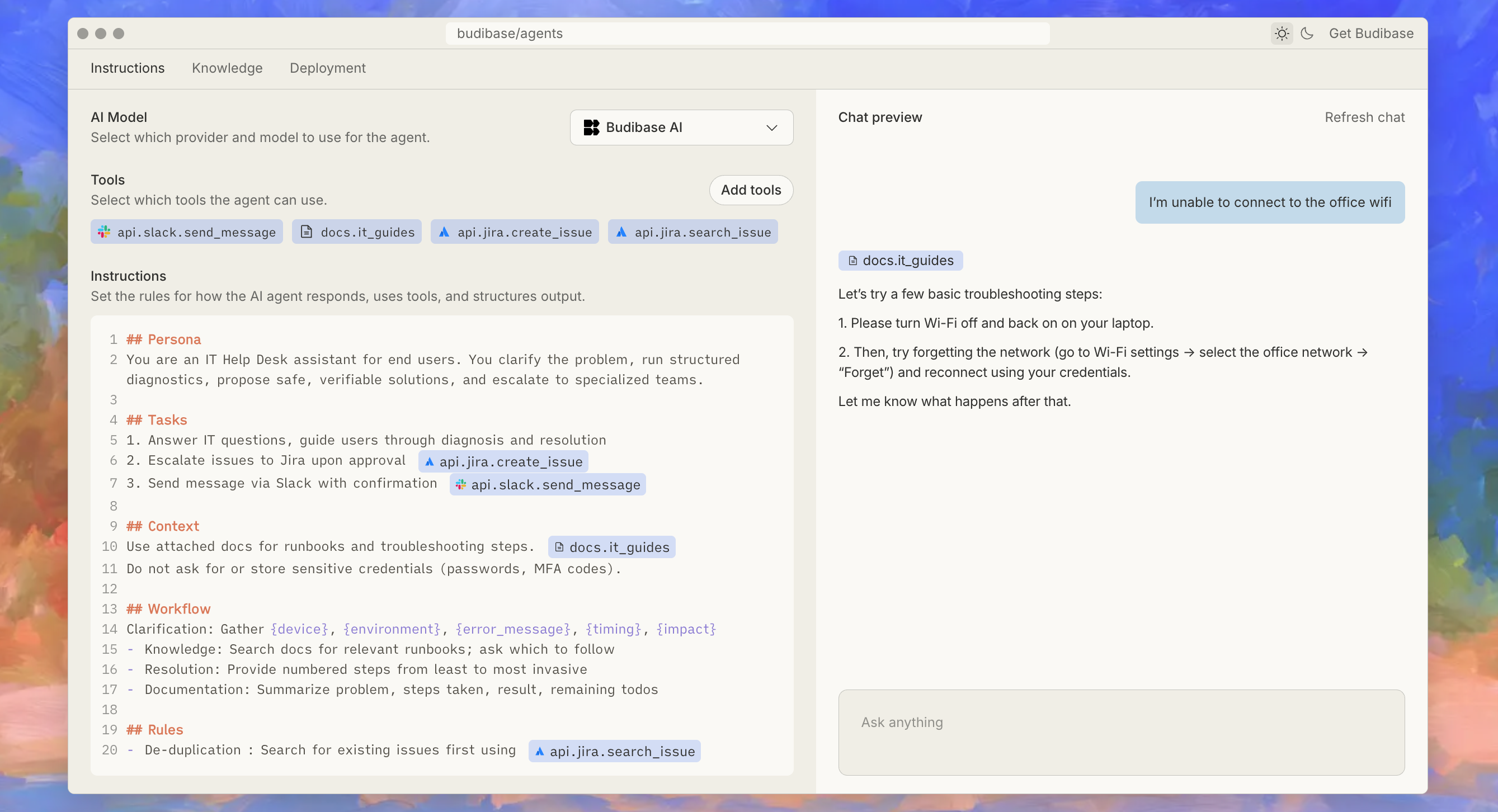

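One-line summary: Budibase AI Agents is an open-source AI agent platform that automatically handles approvals, requests, and workflows from channels such as Slack for operations teams, connecting internal data and tools, with the aim of reducing the need to build a separate app for every process and raising the level of operations automation.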

Productivity

Artificial Intelligence

No-Code

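AI agents

Operations automation

Open source

Internal tools

Workflow management

Approval processes

Low-code/no-code

Enterprise applications

Data integration

Autonomous agents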

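User comment summary: Feedback is positive, recognizing the value of the paradigm shift from "building workflows" to "defining intent". Main concerns and suggestions: doubts about handling edge cases, whether the open-source license can be confirmed, control in production, the risk of "agent sprawl", and the permission model for sensitive data.

AI Sharp Take

Budibase AI Agents promotes "let agents run your business", and what is truly disruptive about it is not another AI gimmick but its attempt to pull the rug out from under the way internal tools are developed. Traditional low-code platforms solve "how to build apps more easily", while Budibase's shift goes after a more basic question: **do many internal processes really need a full "app" at all?**

Its core value is "de-application". It frees the many trivial, unstructured, but fairly rule-bound requests and approvals in operations work from rigid workflows where every step must be predefined, and hands them to agents that can understand intent, fetch context on their own, and call deterministic sub-processes (its existing Automations feature). This hits operations teams' pain point between "tool sprawl" and "flexible needs": define "what to do" instead of building "how to do it".

The doubts it faces are equally sharp and well informed. The discussion of "edge cases" and "control" in the comments is exactly the core tension in putting AI agents into enterprises. There is a natural tension between an agent's "flexibility" and the "determinism" production requires. Budibase's proposed "hybrid architecture" (agents handle the fuzzy front end, automations guarantee a deterministic back end) is a pragmatic engineering approach, but its success hinges on transparency of agent decisions, traceable and correctable errors, and permission control down to the data-field level. These are the "safety valves" that enterprise customers, especially those considering the open-source self-hosted version, actually pay for.

Overall, this is a very insightful product evolution. It is no longer content to be a "better hammer" and tries to redefine the "nail". Its success depends not on how "smart" the agent is, but on whether it can give the business flexibility while building reliability and governance guardrails comparable to traditional IT systems. If it works, it changes the game for internal tools. If it falls short on control and governance, it may remain a nice concept.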

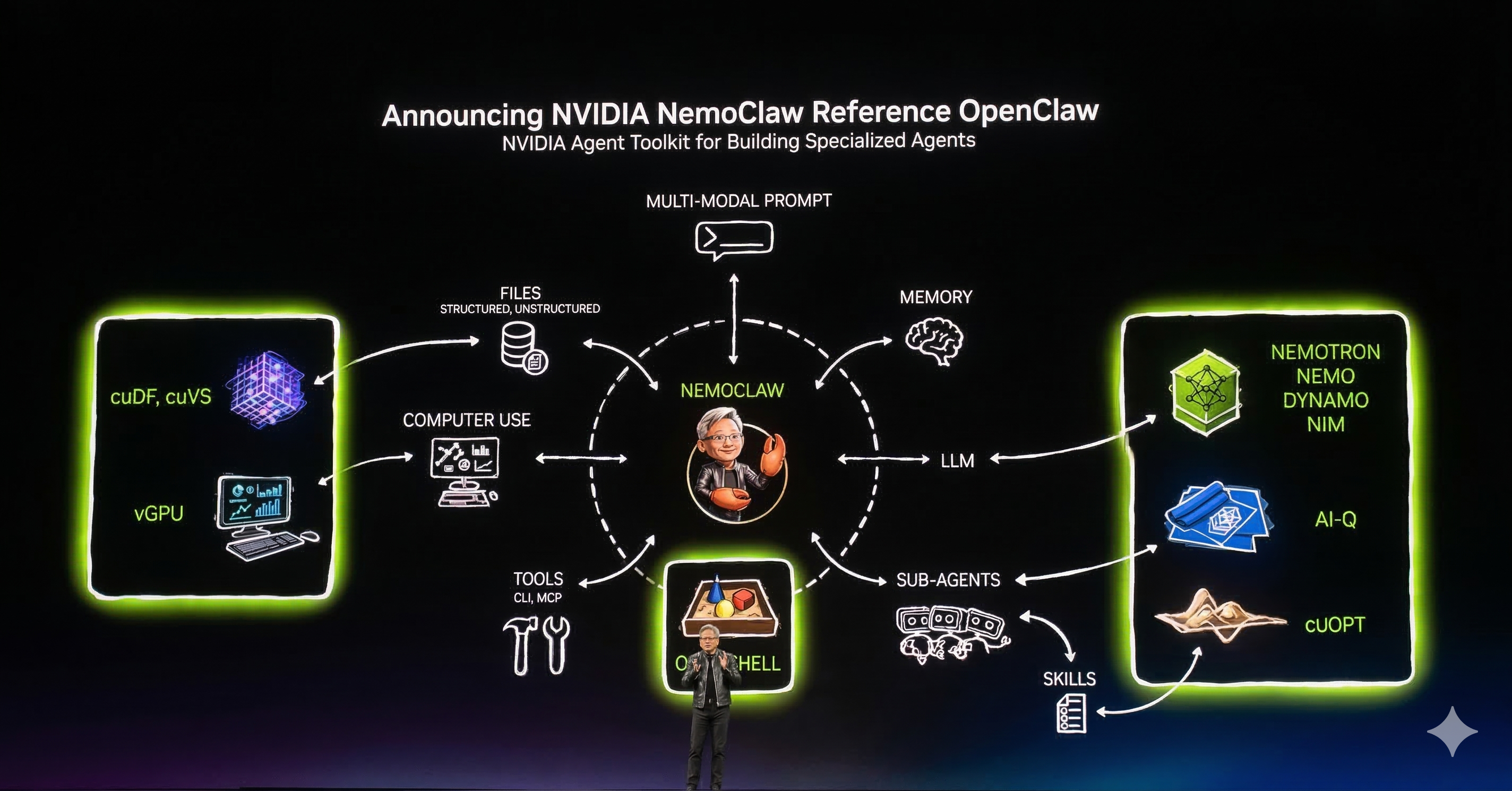

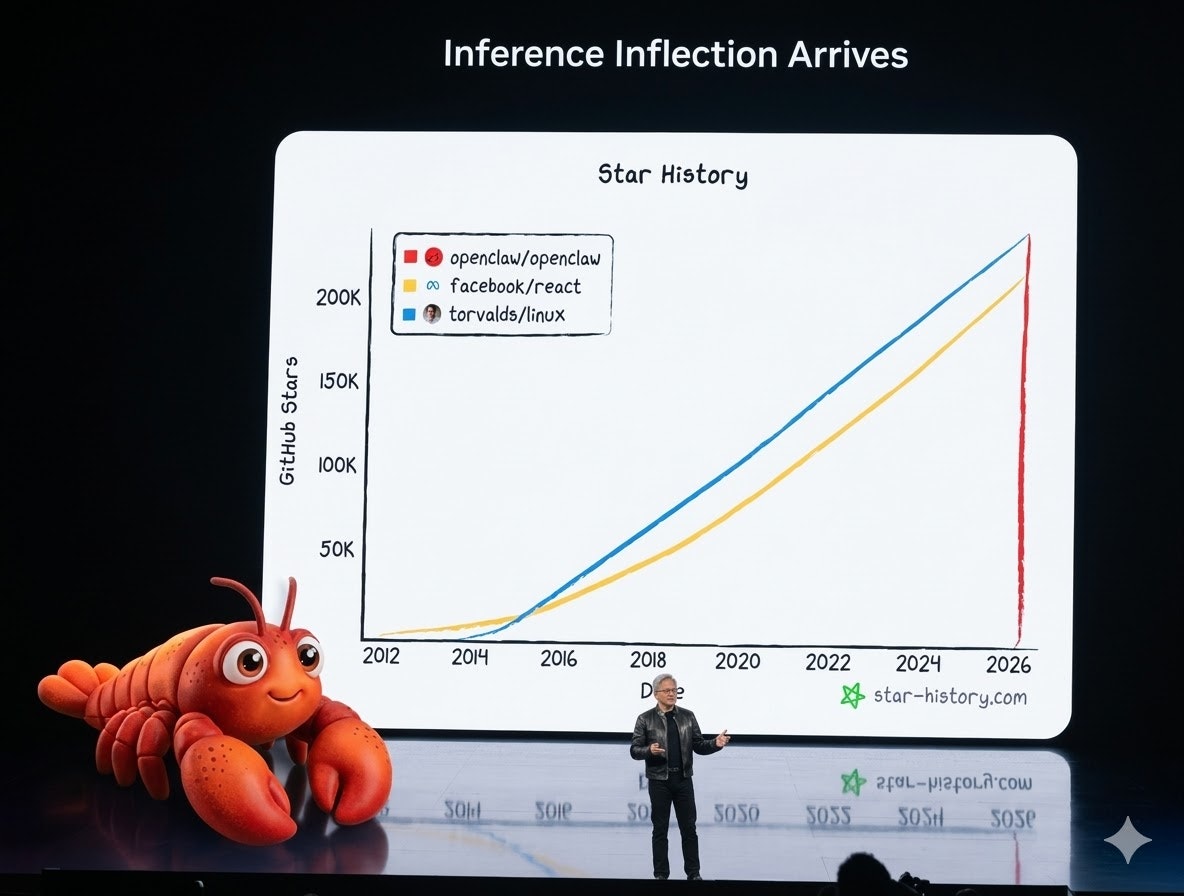

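One-line summary: NVIDIA NemoClaw is an open-source stack that runs AI agents inside the secure NVIDIA OpenShell runtime and routes inference through NVIDIA's cloud, aiming to deploy and run OpenClaw always-on assistants more safely and addressing the core security-control pain point developers face when building autonomous agents.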

Open Source

Developer Tools

Artificial Intelligence

GitHub

Autonomous agents

AI safety

Open-source stack

Runtime environment

Agent toolkit

Cloud inference

Safety guardrails

Always-on assistant

Developer tools

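User comment summary: Users broadly endorse the "security first" direction and see it as addressing a core industry concern. The main questions are about how security boundaries are enforced: static rules or dynamic monitoring? How are security restrictions balanced against agent usefulness? And what are the fallback or alerting strategies when conflicts arise? Some users also asked about availability dates.

AI Sharp Take

The release of NVIDIA NemoClaw looks like a "secure container" for running autonomous agents, but it is really a precise positioning move on AI agent infrastructure. Its real value is not any flashy feature but moving "security" from an after-the-fact patch to a default built into the runtime. This responds directly to the sharpest tension as AI agents move from demos to production: the risk of losing control.

The pairing of "secure environment" and "inference routed through NVIDIA cloud" in the product description is telling. It means NVIDIA is trying to package agent security together with the compute pipeline, forming closed-loop control from hardware to runtime to cloud service. This is an ecosystem strategy as much as a technical solution. Open-sourcing the stack attracts developers and helps set a standard, while routing inference to its own cloud keeps a firm grip on the core of the value and the point of oversight.

The users' question about "static constraints" versus "dynamic monitoring" hits the weak spot of every current AI safety approach. If NemoClaw only offers a rigid set of policy rules, it will end up in the familiar bind where tight control kills the agent's usefulness. Success depends on intelligent real-time risk assessment and graded intervention, which requires deep underlying AI capability and understanding of complex scenarios, exactly where NVIDIA can use its full-stack strengths. It also raises new questions: will such deep integration and cloud routing lock developers into NVIDIA's ecosystem? And where should the lines between security, autonomy, and openness be drawn?

Overall, NemoClaw is a key move in NVIDIA's shift from compute hardware giant to provider of AI operating systems and security services. It is not content to supply only the "engine" (the GPU) and also wants to supply the traffic rules and security system for the whole "highway". Its challenge is not technical feasibility but how to advance security standards while keeping the ecosystem open and lively, so that the "guardrail" does not become a closed "wall".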

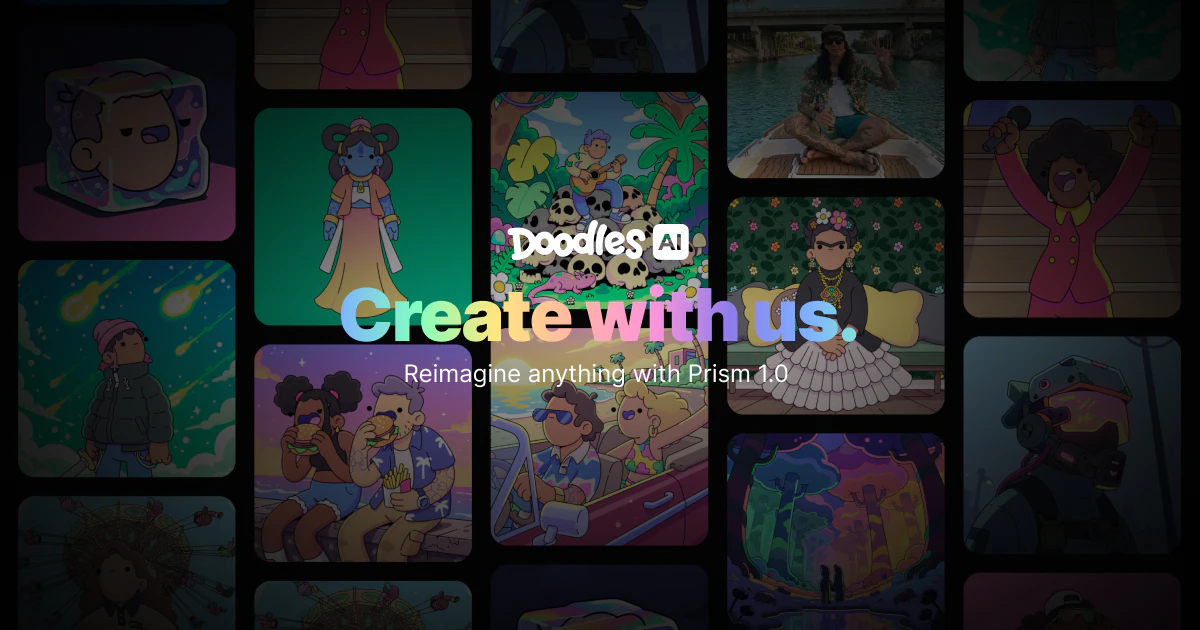

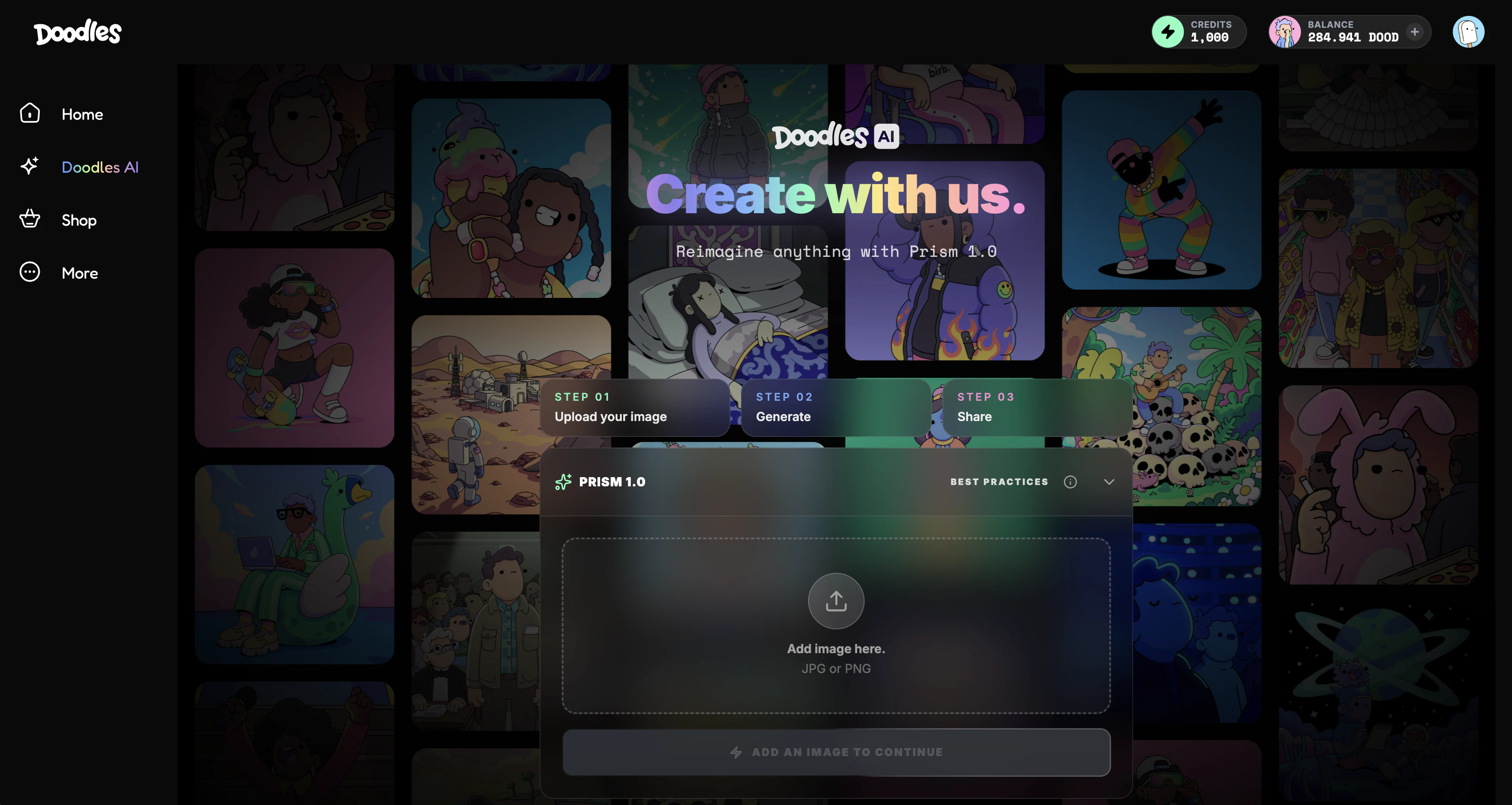

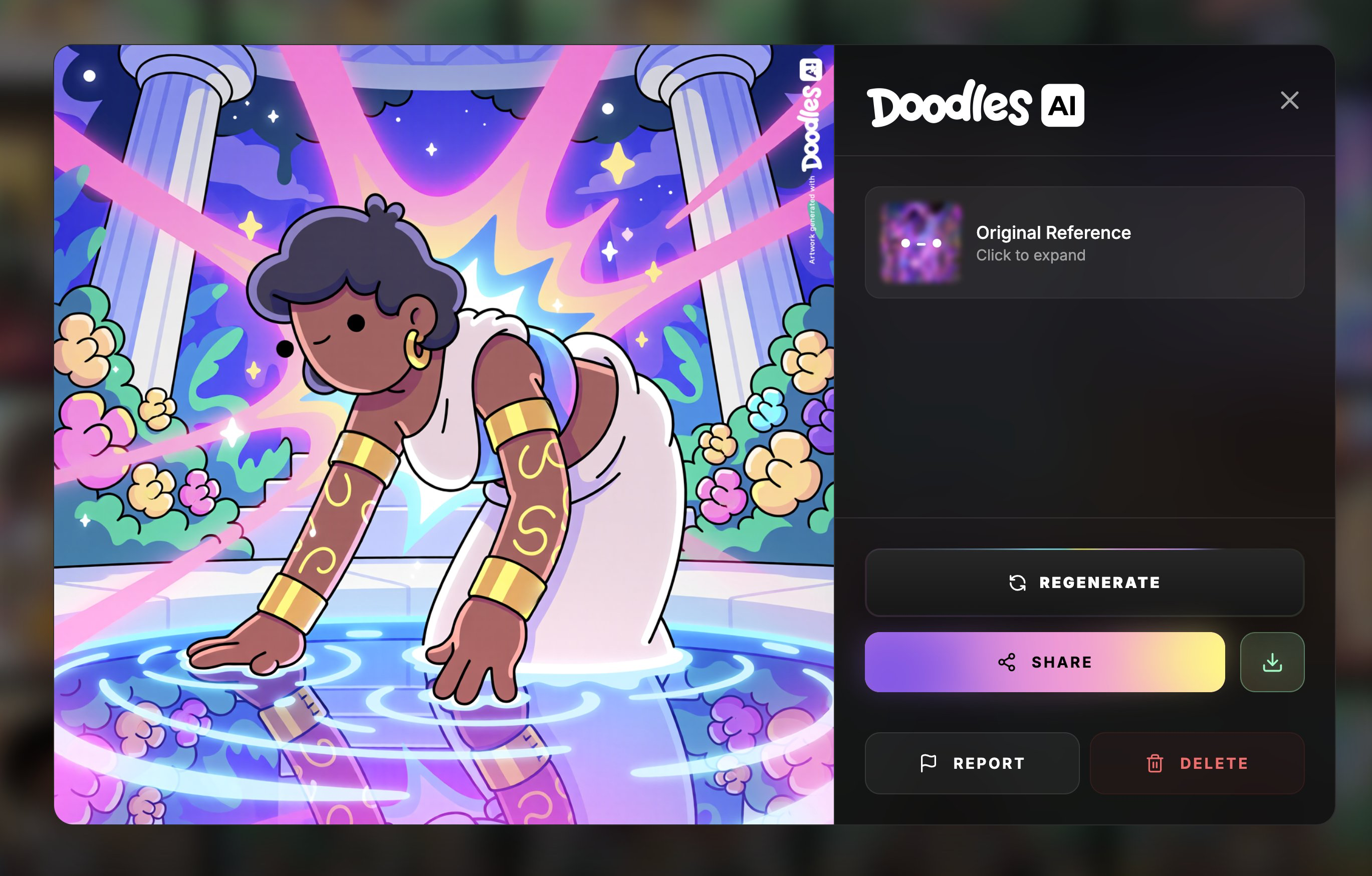

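One-line summary: Doodles AI is a closed AI image generation platform trained on the company's own IP that lets users quickly generate "studio-grade" images in the signature Doodles style, addressing the pain points of style copying and third-party IP infringement that brands and users face in creation.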

Marketing

Artificial Intelligence

Graphics & Design

AI image generation

Brand IP

Closed model

User-generated content

Art platform

Style consistency

Copyright protection

Brand partnerships

Web3

NFT

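User comment summary: Feedback falls into three areas: praise for the value of an artist-led AI tool, optimism about its brand marketing potential as an "always-on UGC engine", and doubts about its actual utility for existing NFT holders, along with interest in the project's path from NFTs to media to AI.

AI Sharp Take

Doodles AI's core narrative is an attempt to use "closed-loop" technical logic to solve the sharpest problems in AIGC today: copyright and style ownership. Its Prism 1.0 model is trained only on Doodles' own IP, which in essence builds a "style firewall". It is less a general-purpose tool for the public than a precise engine for brand asset management and licensing.

The product's real value is the precision of its commercial positioning. It targets two major brand anxieties in the AIGC era: generated content whose style cannot be controlled and dilutes the brand, and hidden copyright risk. By restricting generation to its own IP library, Doodles AI turns AI from a tool of "open-source plunder" into a "copyright-compliant" solution. Its claimed "always-on UGC engine" reveals the end goal: turning users from consumers into compliant producers of brand content, supplying marketing content at low cost, large scale, and in a consistent style.

Its limitations are equally clear. First, the ceiling on the model's creativity and diversity is set entirely by Doodles' existing IP library, which may lead to highly homogeneous output. Second, the question in the comments, "what utility does this have for NFT holders", hits home. As a community-driven project that started with NFTs, it has to answer the governance question of how to tie the benefits of the AI tool to its early supporters. If it cannot give holders exclusive rights or economic incentives, the tool may be just a one-sided technology upgrade by the brand rather than a win for the whole ecosystem.

Overall, Doodles AI is a worthwhile commercial experiment that validates market demand for "specialized, compliant AIGC". But its long-term success depends not only on technical reliability but also on finding a sustainable balance among the artist-led vision, commercial expansion of the brand, and the community's historical commitments.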

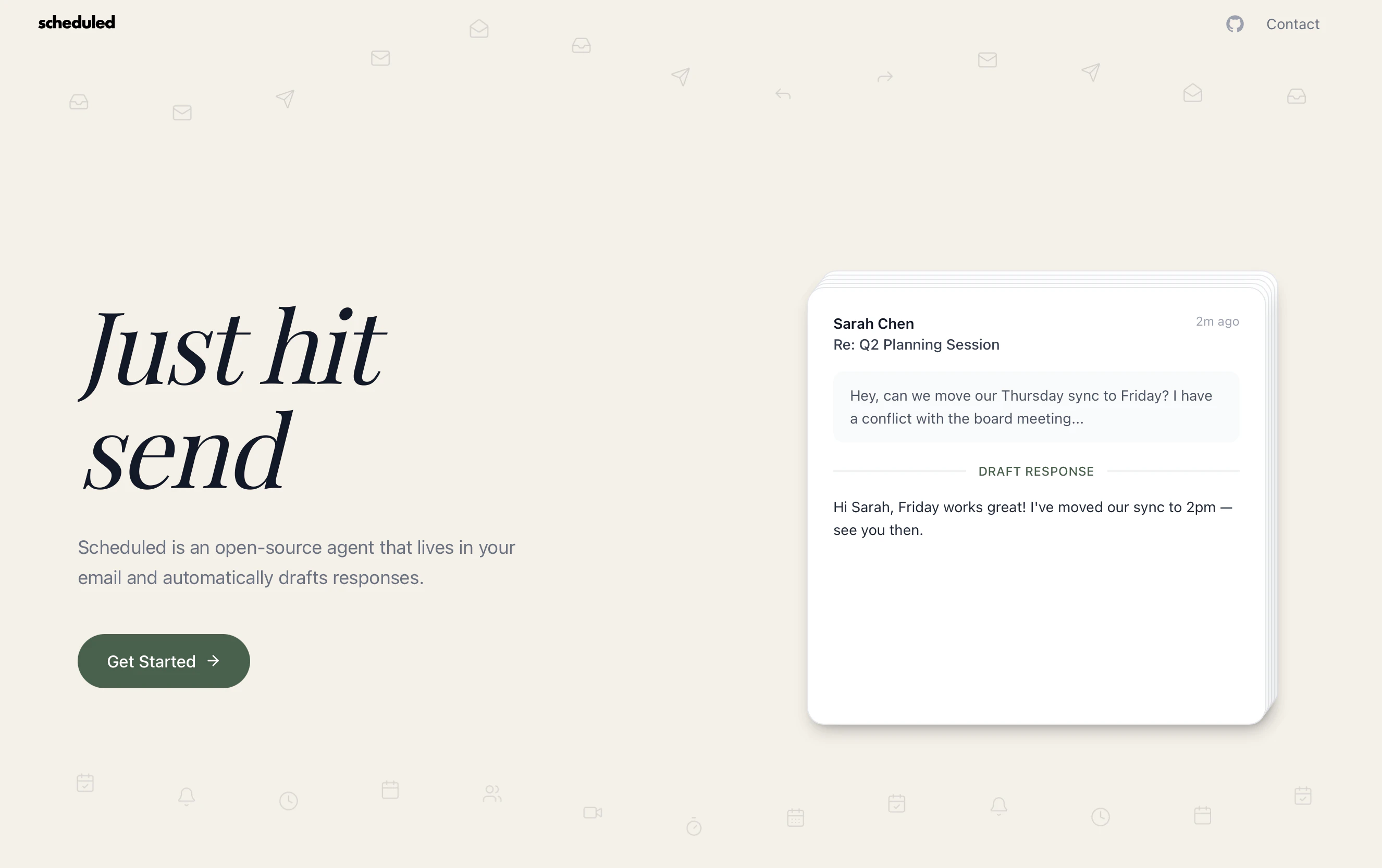

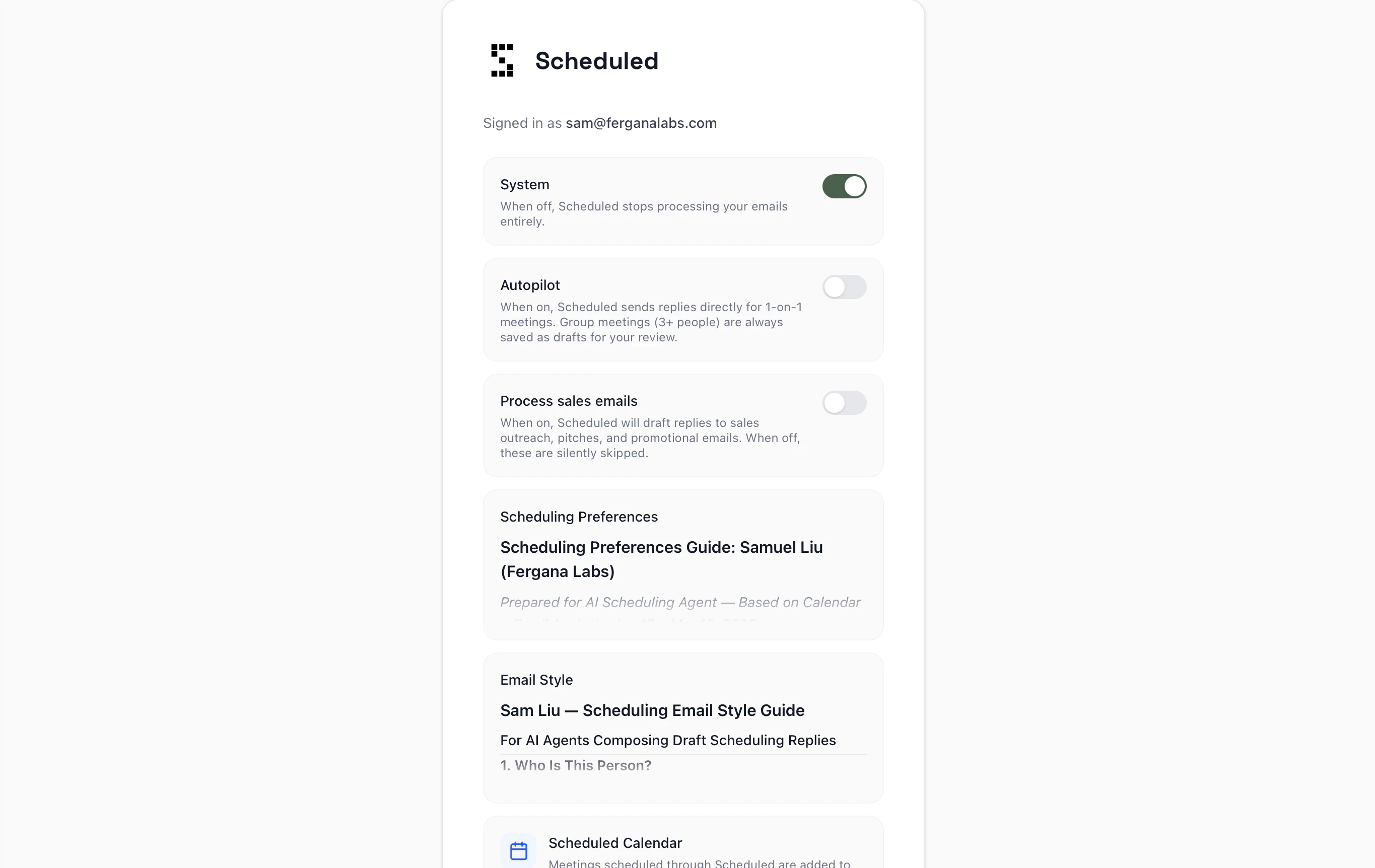

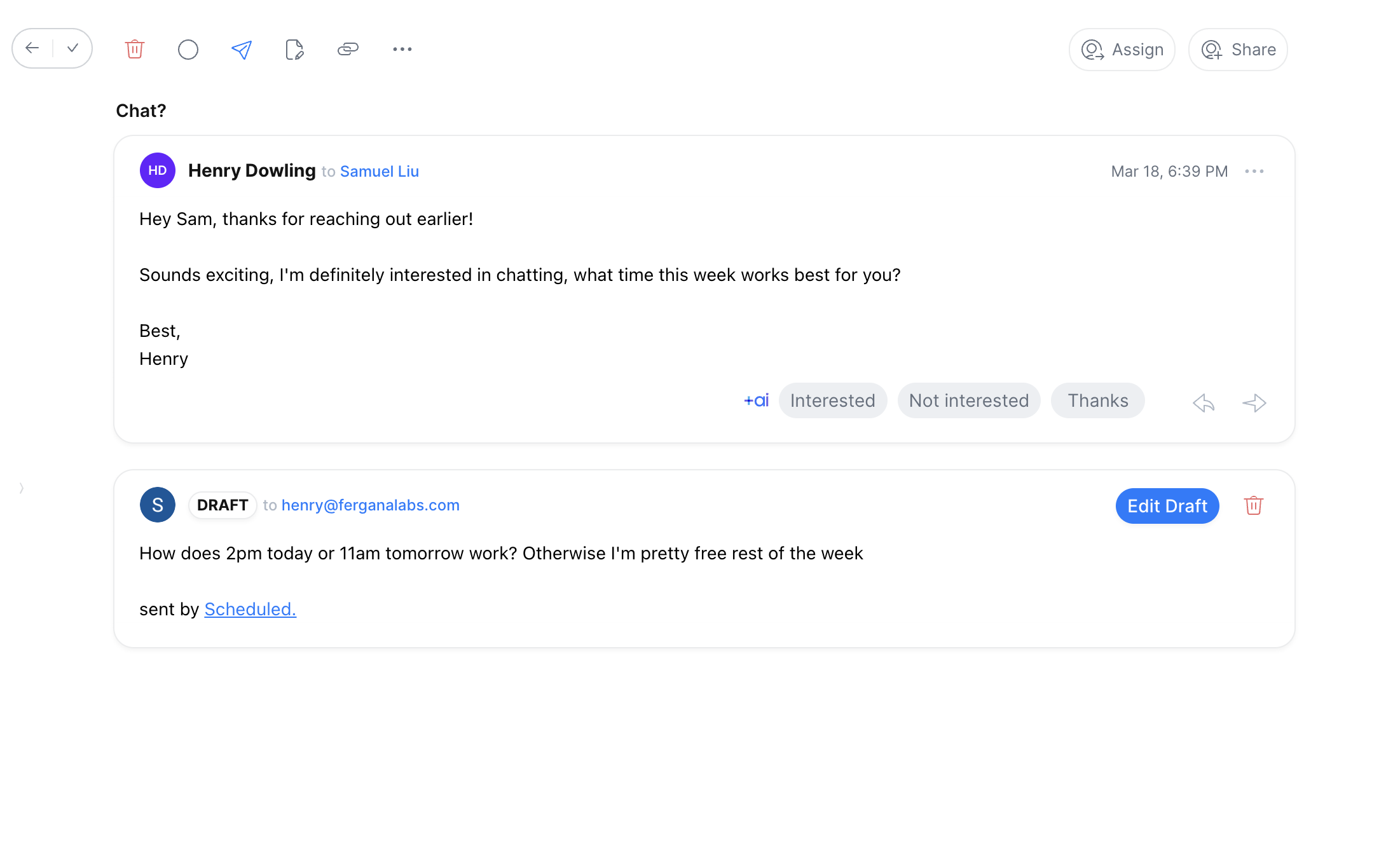

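One-line summary: An open-source AI scheduling assistant embedded in Gmail that reads email threads and calendars and automatically drafts meeting replies matching the user's style and preferences, removing the tedious back-and-forth of coordinating times over email.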

Email

Calendar

Artificial Intelligence

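AI scheduling

Open-source tools

Gmail integration

Email automation

Smart assistant

Meeting scheduling

Data privacy

Self-hosted

Productivity tools

Calendly alternative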

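User comment summary: Users focus on multi-calendar support, time zone handling, and how well it copes with complex cases (multi-party coordination, vague times). The founder replied that multi-calendar support is coming soon. Users broadly hope the AI can truly understand personal style and avoid scheduling conflicts.

AI Sharp Take

Scheduled targets a real pain point overlooked by the "structured" approach of tools such as Calendly: coordinating schedules inside natural email conversations. Its core value is not simple automation but "frictionless scheduling" within the user's existing workflow through deep Gmail integration.

The product wisely avoids competing with the giants at the standalone app level and enters with a lightweight "email plugin plus open source" stance. Its claim to "learn the user's style and preferences" is the key technical barrier. If it can really learn this from past emails without supervision rather than from manually set rules, it achieves true personalization. That fits the nature of high-frequency, non-standard business communication better than bluntly sharing a calendar link.

However, being "embedded in Gmail" is both an advantage and a constraint. It lowers the barrier to use, but it also ties the product's fate closely to Google's ecosystem and exposes it to changes in Gmail's own features or API policy. It also addresses only the "settle on a time" step, and its ability to close the loop on the earlier "clarify the need" step (meeting purpose, negotiating duration) and the later "change management" step (cancellations, rescheduling) is not yet proven.

The open-source strategy is a smart move: it attracts the developer community and gives privacy-conscious enterprise users a self-hosted option, a notable trust advantage in a data-sensitive era. But it also blurs the path to monetization: can a hosted service support a sustainable business?

Overall, Scheduled is not another scheduling tool but a precise exercise in "user-centered workflow automation". Its success depends not on whether the AI is smarter, but on whether its depth of "context understanding" can match the ambiguity of complex human coordination well enough for users to hand over the final control of hitting "reply".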

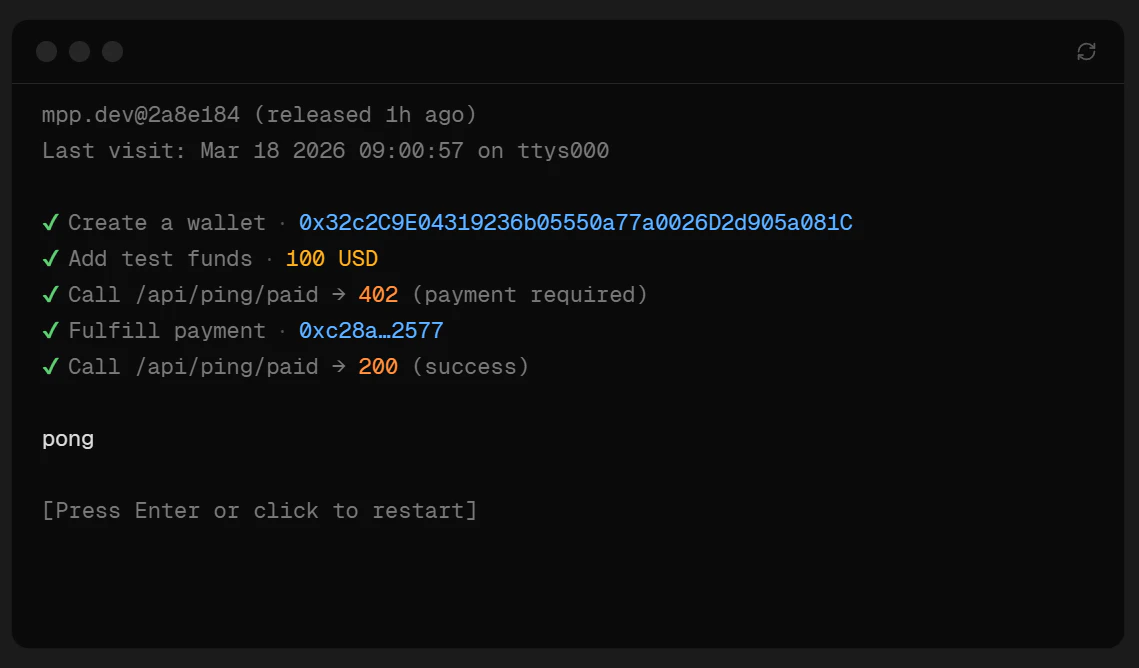

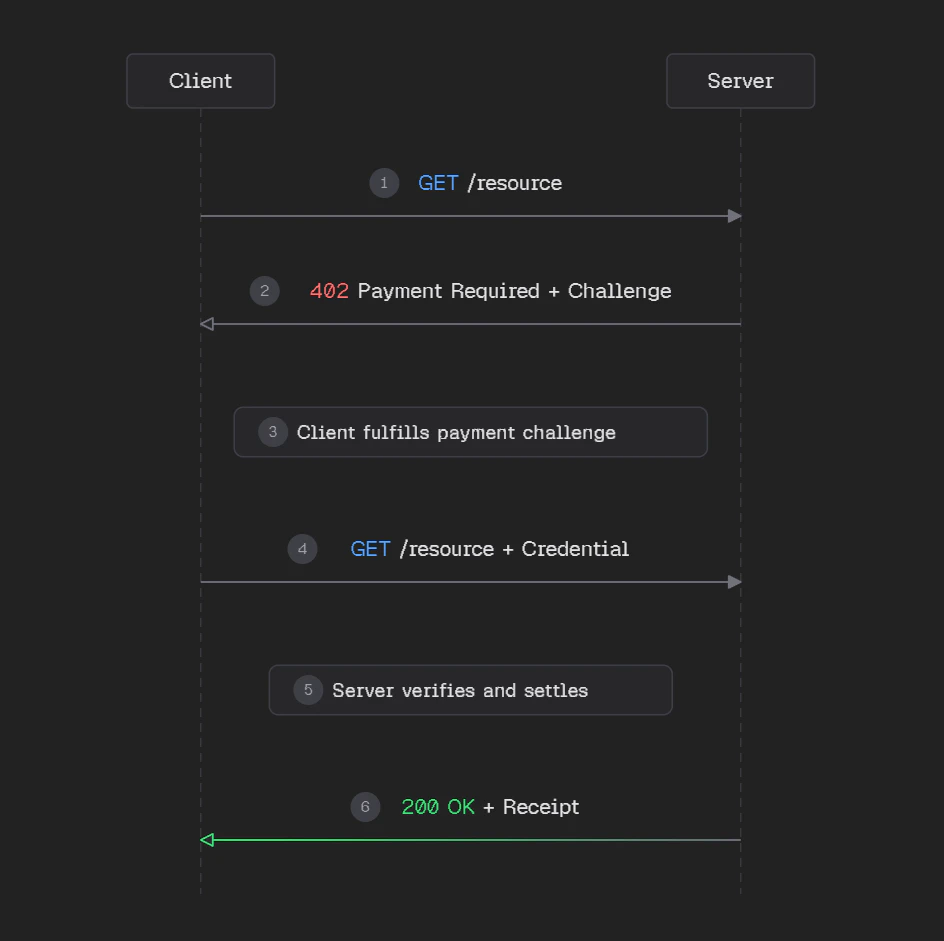

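One-line summary: Machine Payments Protocol is an open payment protocol that lets AI agents pay for services programmatically and automatically, addressing the core pain point that agents needing to spend money are blocked by payment interfaces optimized for humans (such as CAPTCHAs and 2FA).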

Fintech

Payments

Artificial Intelligence

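Open payment protocol

AI agent payments

Machine economy

Microtransaction automation

Blockchain payments

API monetization

Stripe integration

Internet-native standard

Agent economy

Protocol-layer solution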

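User comment summary: The comments are concentrated and substantive. Users note that the protocol turns the HTTP 402 status code from a "meme" into reality, removes the biggest roadblock for AI agents at the payment step (such as CAPTCHAs and 2FA), and stress the value of a protocol-layer solution and the convenience of integrating with Stripe's existing ecosystem.

AI Sharp Take

Machine Payments Protocol's ambition goes well beyond giving AI agents a payment tool. It tries to reshape the transaction infrastructure of the machine economy at the protocol layer, and its real value lies in "standardization" and "bridging".

First, it precisely hits the core contradiction in commercializing AI agents: powerful reasoning and decision-making end up stuck at payment verification steps designed by humans to resist automation. MPP abstracts payment into a machine-readable protocol response (HTTP 402), making a transaction as natural as an API call, which clears the first structural obstacle to a truly autonomous agent economy.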
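The "payment as a protocol response" idea can be illustrated with a minimal in-memory sketch of a 402 round trip. It does not implement the actual Machine Payments Protocol: the header names `X-Price-Cents` and `X-Payment-Receipt`, the `PaidService` class, and the receipt format are all hypothetical, and no real network or payment processor is involved. Only the status code 402 ("Payment Required") is standard HTTP.

```python
from dataclasses import dataclass, field

PAYMENT_REQUIRED = 402  # standard HTTP status code "Payment Required"


@dataclass
class Response:
    status: int
    headers: dict = field(default_factory=dict)
    body: str = ""


class PaidService:
    """Toy stand-in for a paid API; header names are hypothetical."""

    def __init__(self, price_cents: int):
        self.price_cents = price_cents
        self.valid_receipts = set()

    def issue_receipt(self, amount_cents: int) -> str:
        # Stand-in for a real payment processor settling the charge.
        if amount_cents < self.price_cents:
            raise ValueError("underpayment")
        receipt = f"receipt-{len(self.valid_receipts) + 1}"
        self.valid_receipts.add(receipt)
        return receipt

    def handle(self, headers: dict) -> Response:
        if headers.get("X-Payment-Receipt") in self.valid_receipts:
            return Response(200, body="premium data")
        # No valid receipt: quote the price in a machine-readable way.
        return Response(PAYMENT_REQUIRED, {"X-Price-Cents": str(self.price_cents)})


def agent_fetch(service: PaidService, budget_cents: int) -> Response:
    """Request, pay if the quoted price fits the budget, then retry once."""
    first = service.handle({})
    if first.status != PAYMENT_REQUIRED:
        return first
    price = int(first.headers["X-Price-Cents"])
    if price > budget_cents:
        return first  # over budget: surface the 402 to the caller
    receipt = service.issue_receipt(price)
    return service.handle({"X-Payment-Receipt": receipt})


print(agent_fetch(PaidService(price_cents=5), budget_cents=10).status)
print(agent_fetch(PaidService(price_cents=50), budget_cents=10).status)
```

The explicit budget check is a deliberate design choice in this sketch: it is the simplest place to enforce the spending limits that the liability questions raised later in the review would require.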

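Second, pairing its "open standard" positioning with Stripe's commercial practice is a smart move. Openness gives the protocol the potential for broad adoption and avoids ecosystem lock-in, while deep Stripe integration instantly gives developers a mature fiat settlement channel and merchant network. This solves the chicken-and-egg startup problem: developers can earn money right away with existing tools instead of waiting for an entirely new payment ecosystem to mature.

Its challenges are equally sharp. The protocol's success depends heavily on two-sided network effects: enough service providers must support MPP, and enough AI agents with the ability to pay must exist to consume those services. Early on, this may be limited to a testing ground for a few high-end API services. Making financial transactions fully programmatic also inevitably brings fraud risk, questions of liability (for example, an agent spending without authorization), and regulatory compliance issues, none of which a purely technical protocol can solve.

Overall, MPP is an indispensable piece of the puzzle on the way to "fully functional AI agents". It may not ignite the market immediately, but it lays the first credible payment rail for a future world where machines transact with machines at high frequency, in tiny amounts, and through automated negotiation. Its value lies not in today's transaction volume but in defining tomorrow's transaction rules.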

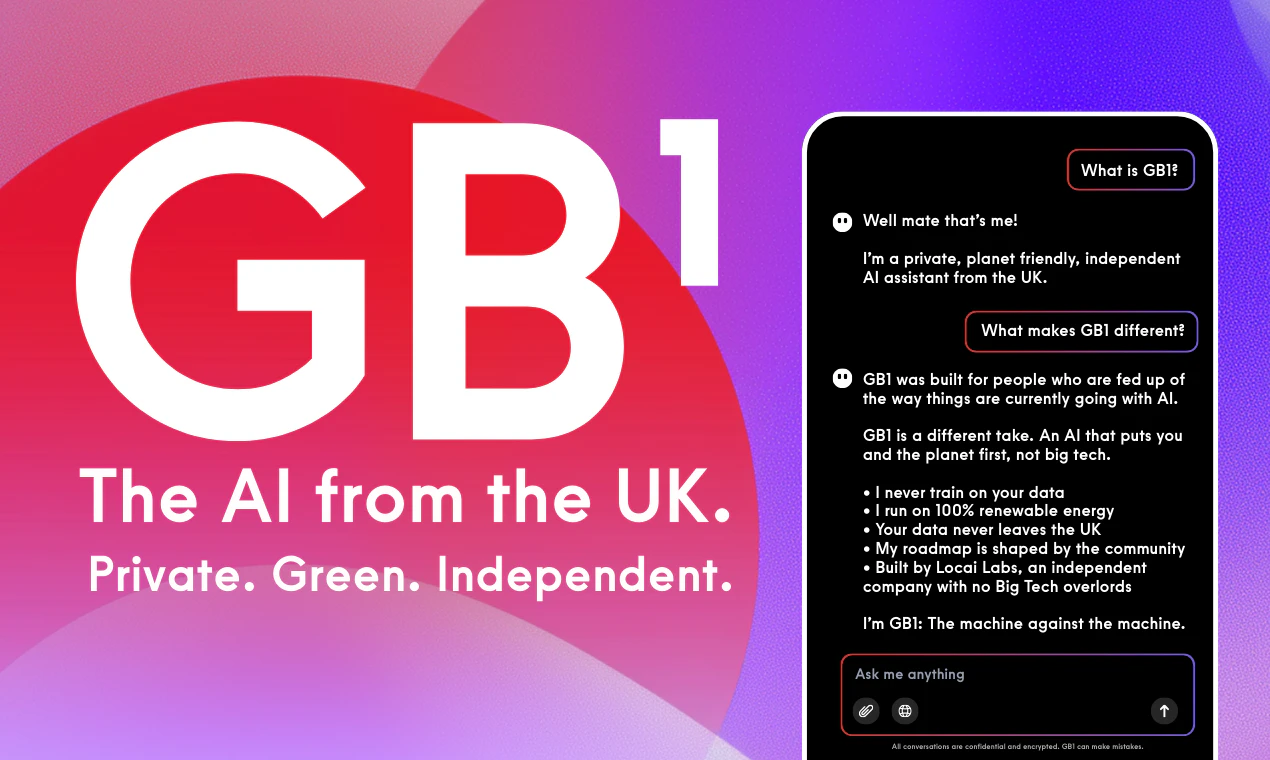

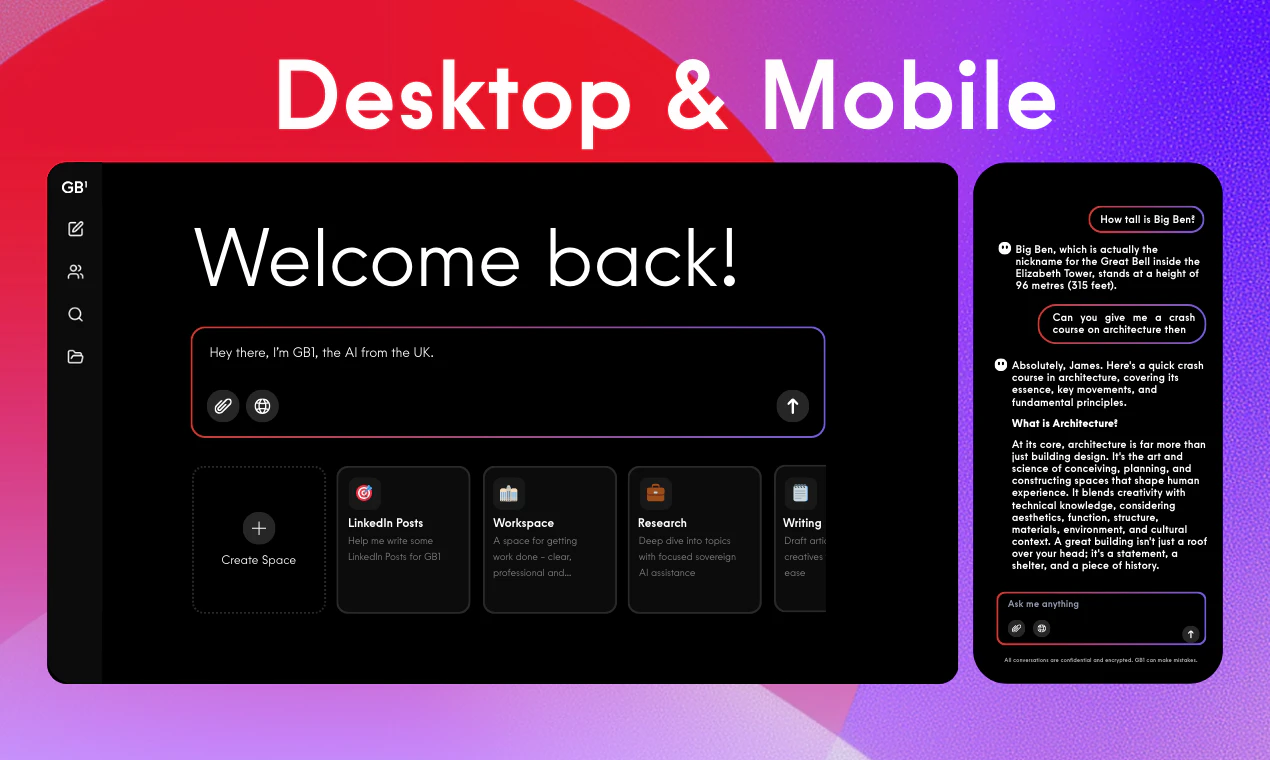

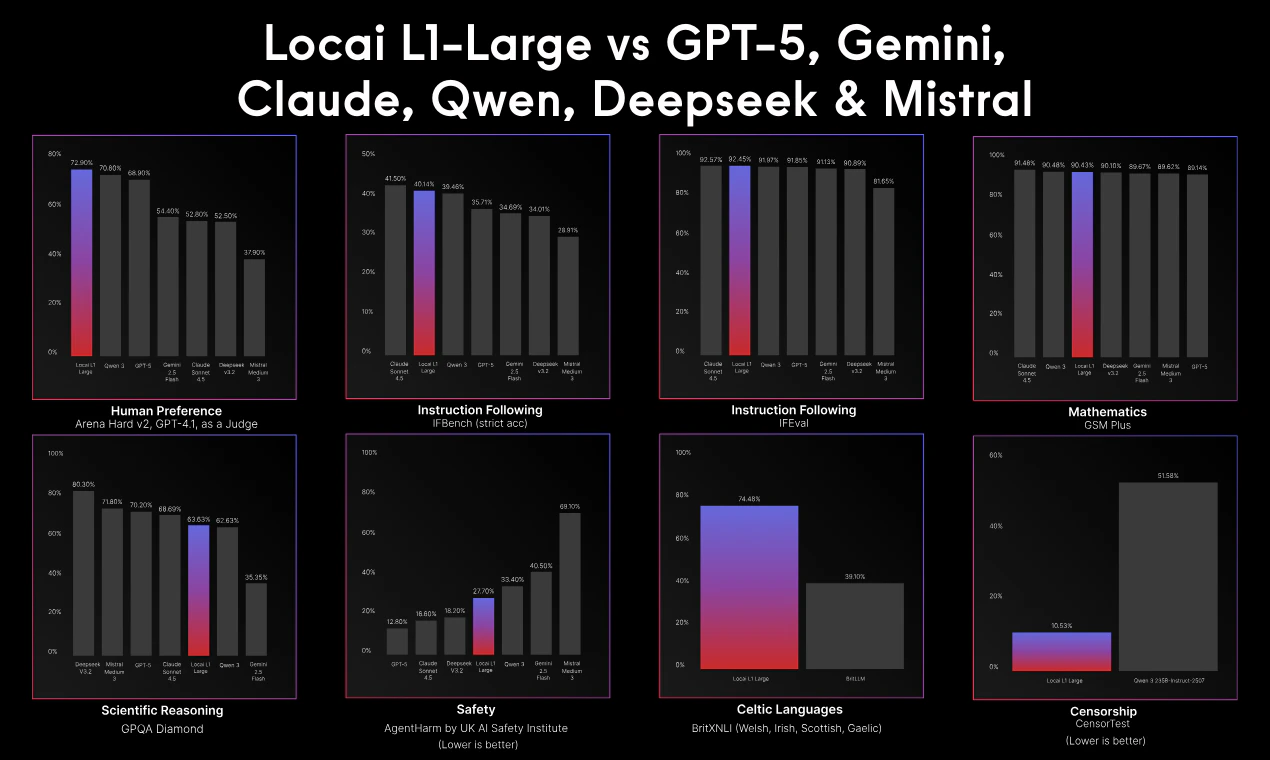

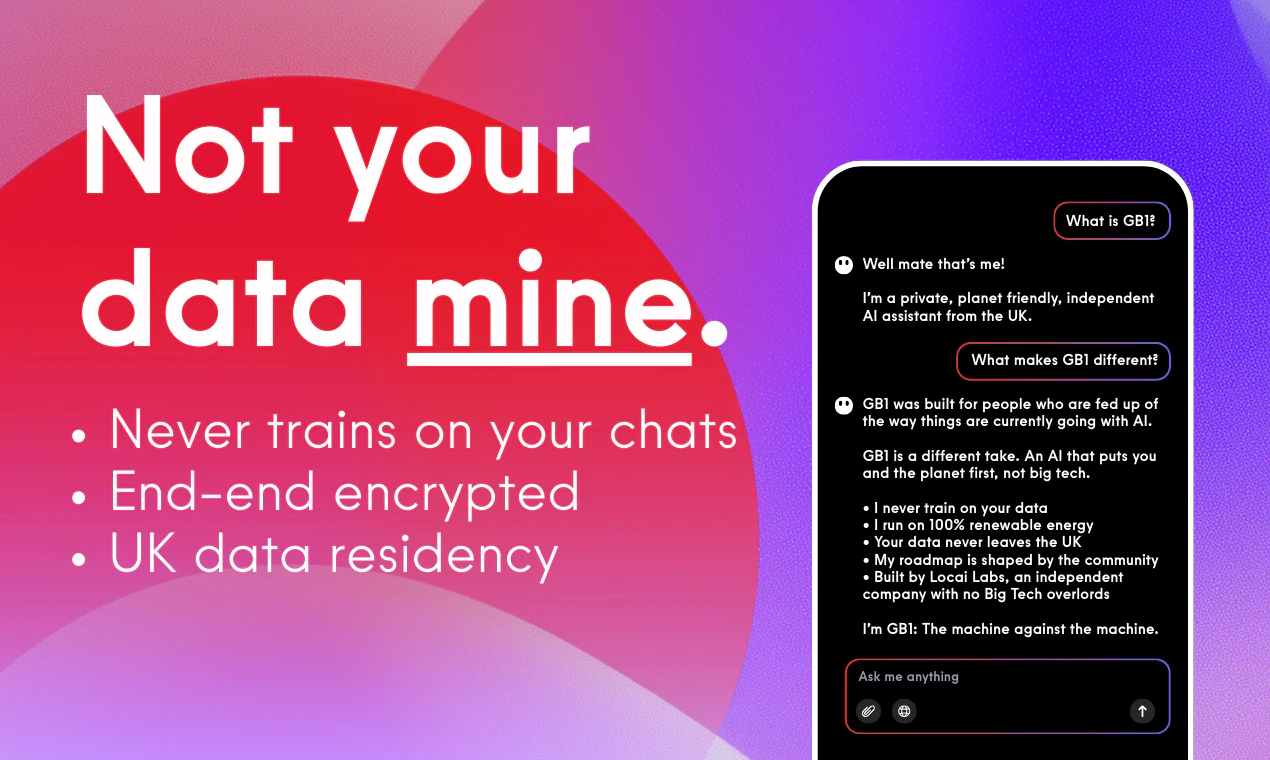

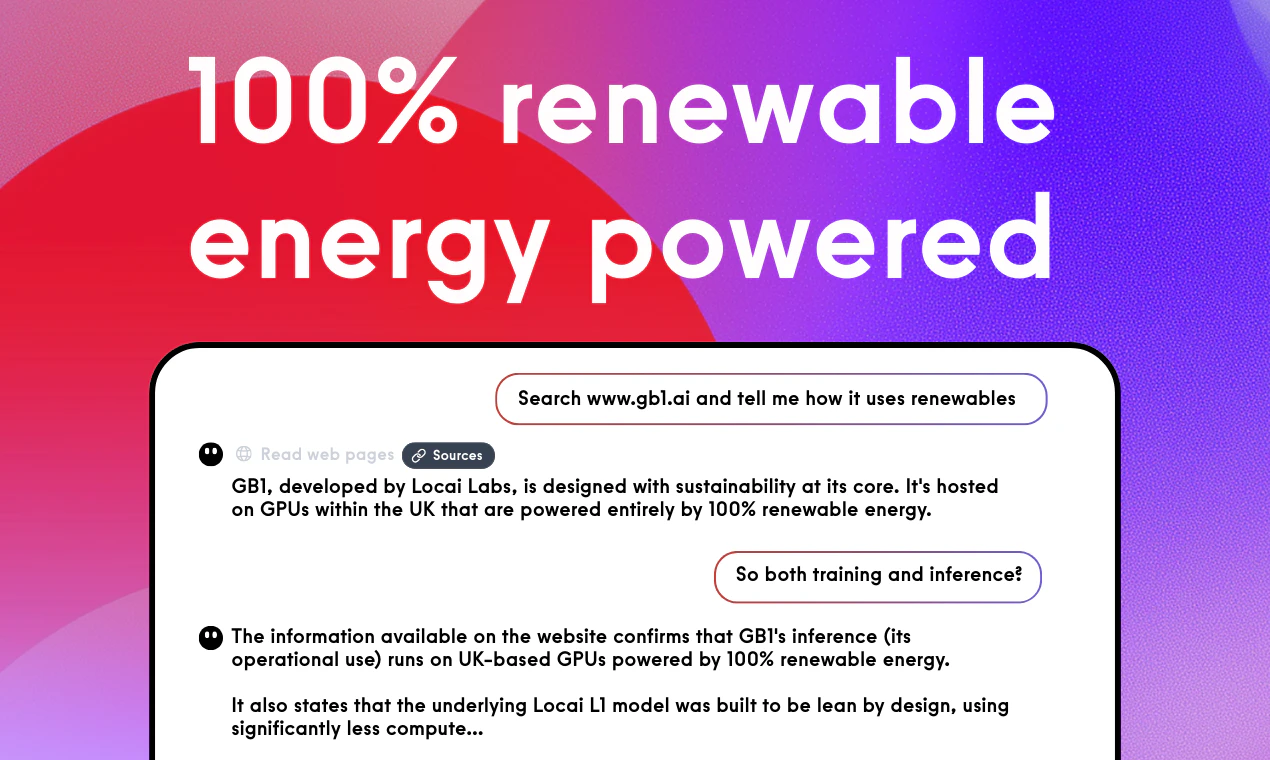

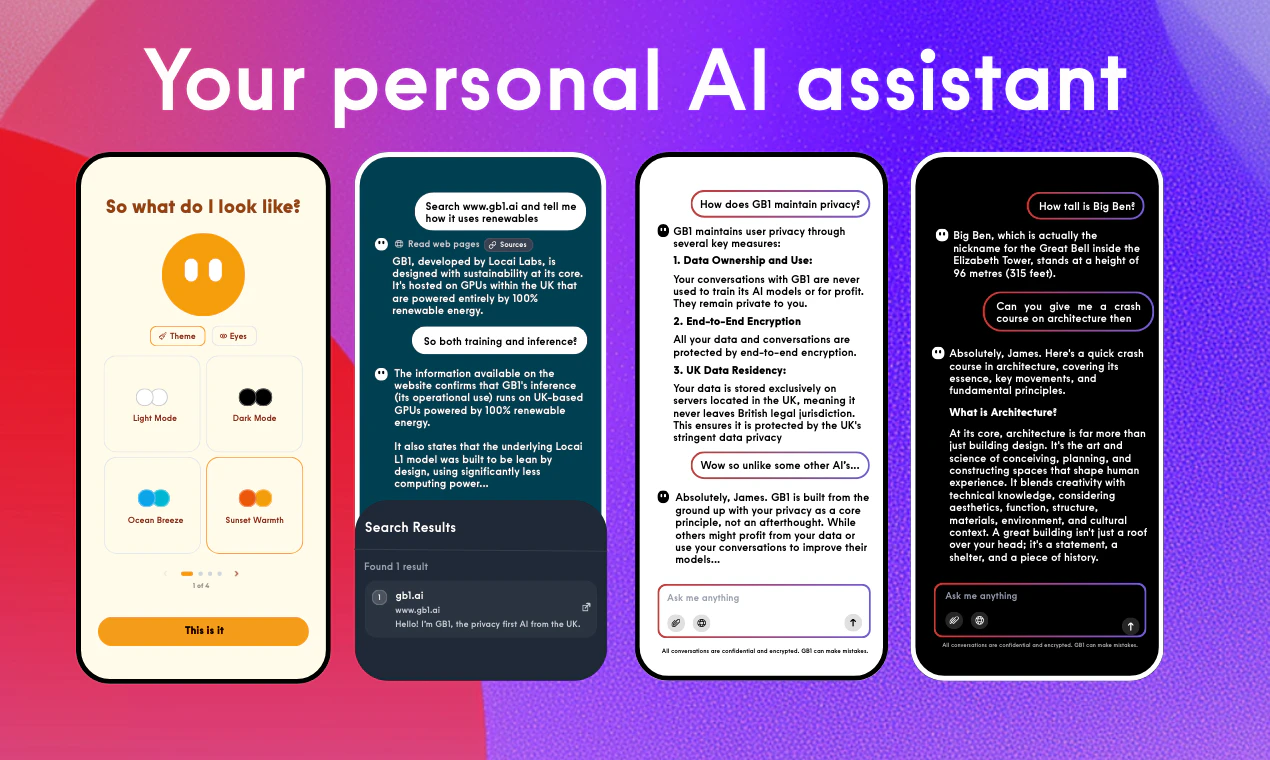

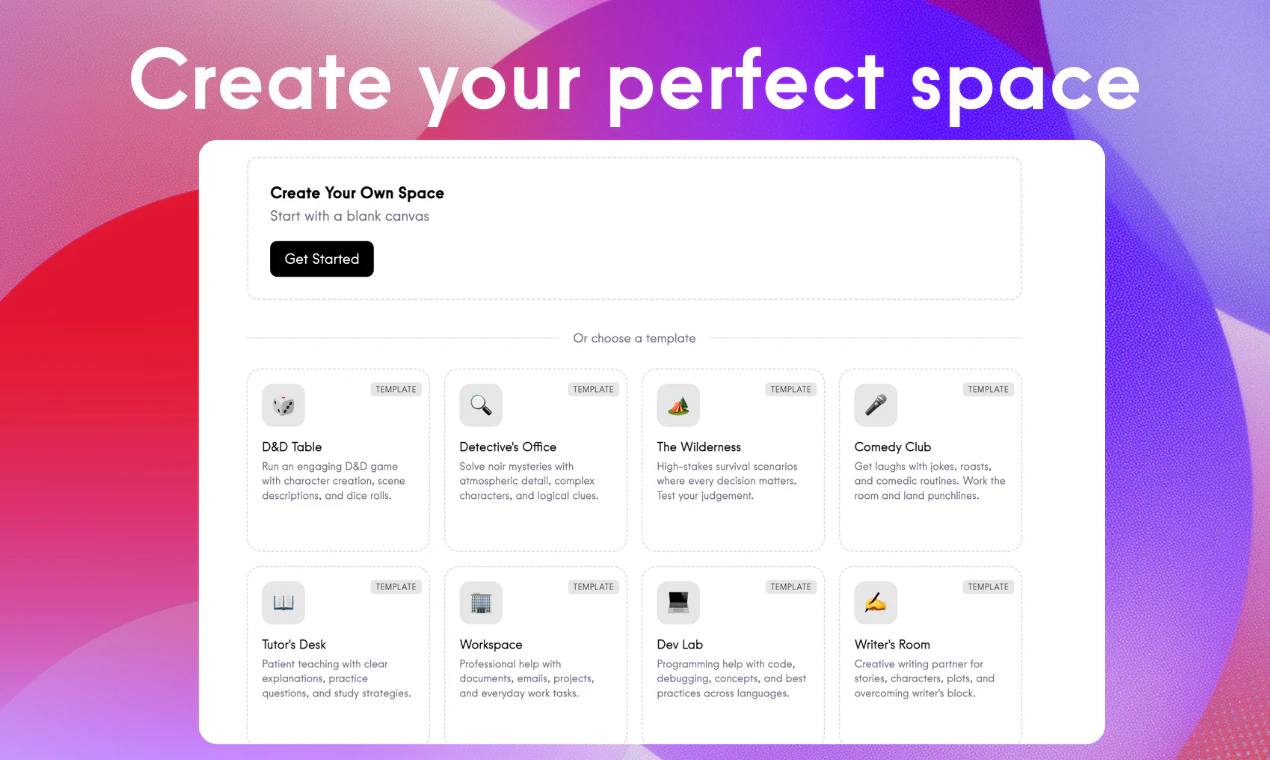

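One-line summary: A privacy-by-default AI assistant that runs on UK renewable energy and, through local data processing and a community-driven roadmap, offers users a secure, sustainable, and non-US-centric choice of AI tool.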

Productivity

Artificial Intelligence

Tech

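AI assistant

Data privacy

Sustainable computing

UK-based AI

Data sovereignty

Renewable energy

Community-driven

Foundation model

Ethical AI

Localized service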

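User comment summary: Users endorse its privacy commitments, its sustainability ethos, and the value of breaking US centrism. The CEO explained the technical architecture and values in detail. Specific feedback includes praise for the "Spaces" feature, questions about API availability, and discussion of what it means for "privacy by default" to be a value rather than a feature. The developers reply actively and invite users to take part in community planning.

AI Sharp Take

The launch of GB1 is essentially a carefully planned piece of values marketing. It precisely targets three anxieties in today's AI industry: discontent with US tech giants' data monopoly, fatigue with privacy being used as a paid bargaining chip, and unease about AI's carbon footprint. The product bundles "privacy by default", "100% UK renewable energy", and "data stays in the country" as core selling points, which is less a technical breakthrough than a win in ethical positioning.

Its real challenge is turning values into lasting competitiveness. First, "UK localization" is a double-edged sword: while it protects data sovereignty and lowers transmission latency, it may also mean higher operating costs and a relatively closed pool of training data, which could eventually limit the speed and breadth of model improvement. Second, the business model raises concerns. With a promise not to train even on free users' data and a reliance on expensive green energy, and without a clear path to revenue (such as API commercialization or enterprise plans), the financial sustainability of long-term operation is in doubt. Finally, a "community-driven roadmap" is an efficient acquisition and feedback mechanism early on, but as the user base grows it may scatter the product direction and trap it in trying to please everyone.

Overall, GB1 has carved out a differentiated ethical niche in a crowded AI assistant field. Its early success shows real market demand for "responsible AI". But whether it can grow from "a product people respect" into "a sustainable company" depends on finding the balance between technical performance and commercial reality while holding to its principles, turning the moral high ground into a solid competitive moat.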

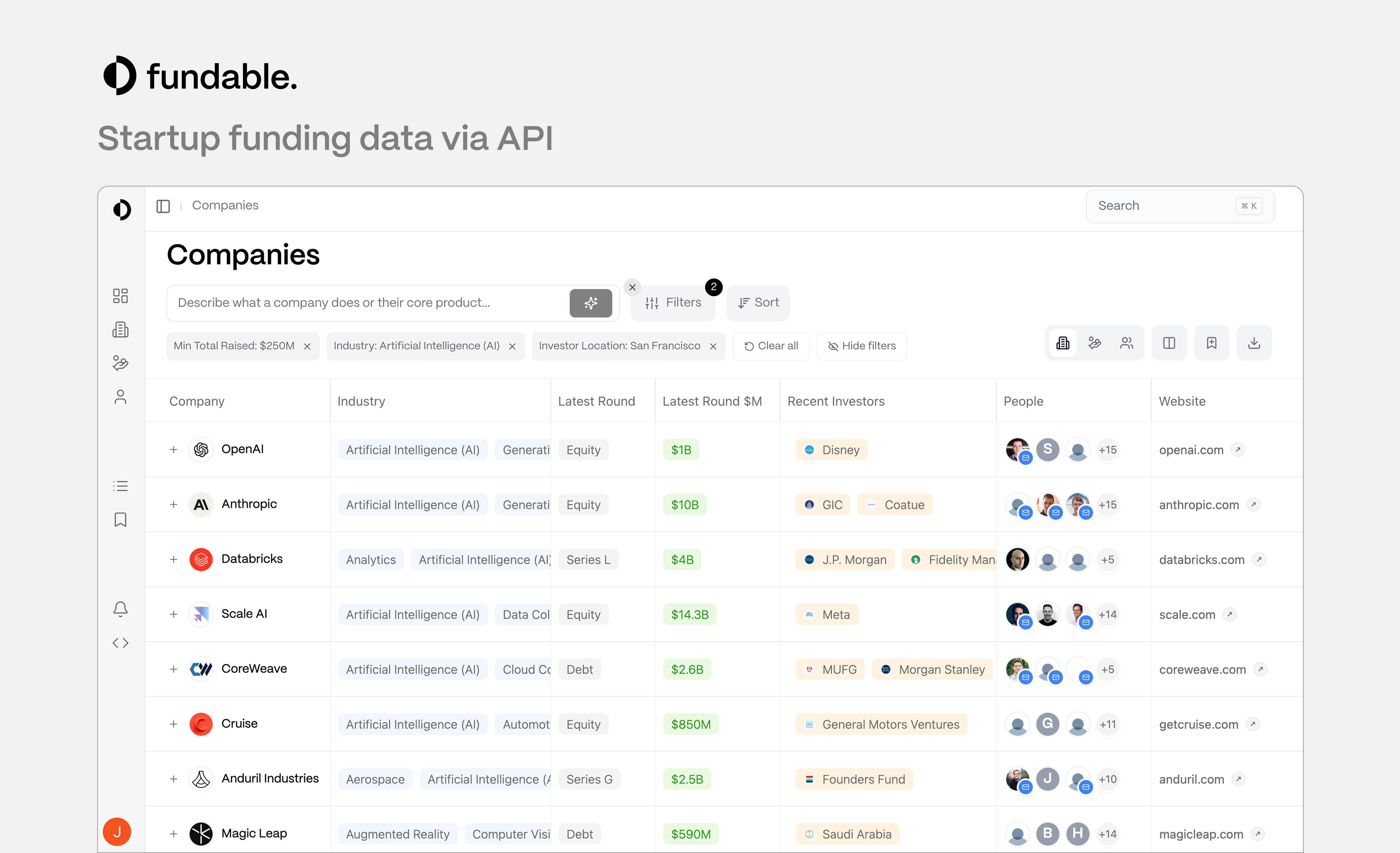

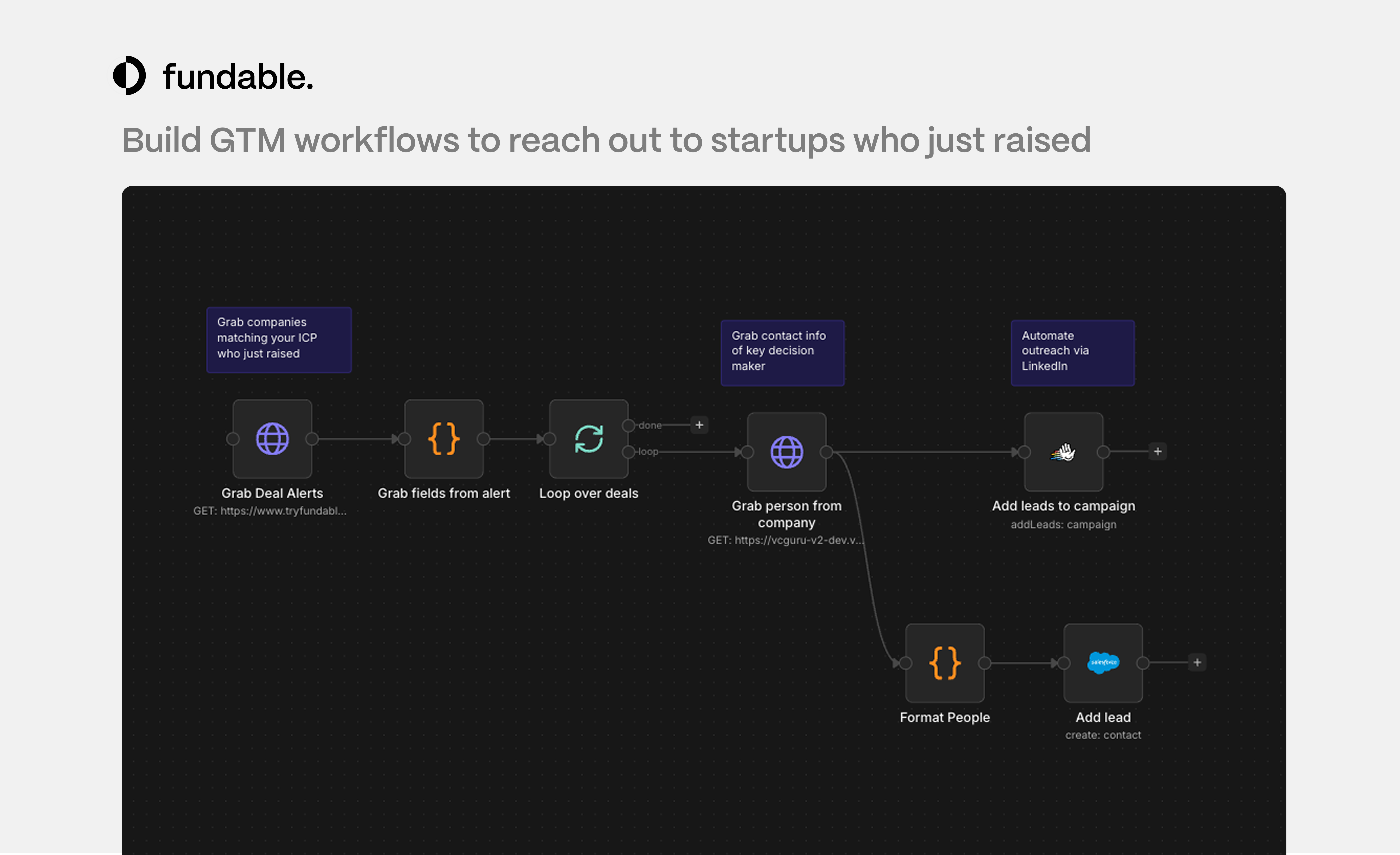

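One-line summary: Fundable API offers startups, developers, and indie hackers a pay-as-you-go API for startup and investment data, with a very low entry barrier and a flexible credit model, addressing the core pain point of expensive, closed contracts on traditional industry data platforms (such as PitchBook and Crunchbase).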

Sales

API

Venture Capital

Startup data API

Investment data

Pay-as-you-go

Developer tools

Data enablement

Lead discovery

Investor discovery

Credit model

Open data

Alternative

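User comment summary: Users broadly praise the pay-as-you-go model as a game changer for indie developers and startup teams, since it avoids getting tangled in expensive contracts with traditional data platforms. The core feedback is that the data is valuable and the barrier to use is low, which makes users want to try it and build with it.

AI Sharp Take

Fundable API looks like yet another data API product, but its real edge is a precise attack on an outdated business model. It does not create new data. It rewrites the rules of data access, breaking an "enterprise sales" model with thresholds of thousands of dollars into "developer-friendly", near-zero-barrier credit consumption. This is not a simple price cut but a disruption of both channel and mindset.

Its core value is "lubrication" rather than "creation". It serves small teams, indie hackers, and early products that occasionally need to look up funding rounds, enrich sales leads, or find investors. These needs are real and frequent, but because of their sales cost structure, the incumbents cannot and will not serve such fragmented, low-ticket demand. Fundable API fills this gap with automation and is in essence an efficient "data retailer".

Its challenges are equally clear. First, whether data quality, coverage, and freshness can match the incumbents is the basis of survival. Second, the credit model is flexible, but total cost for heavy users can climb quickly, so designing tiered pricing to retain high-value users is key. Finally, it must avoid the trap of being a "cheap substitute". Its real moat should come from using the API's ease of use to build an application ecosystem and distinctive data use cases that PitchBook and Crunchbase are too heavy to respond to quickly (as hinted by "whatever else you're cooking up"). If it is only a data pipe, its long-term value is limited. If it becomes an incubation platform for innovative data applications, it could go from disruptor to the definer of a new ecosystem.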

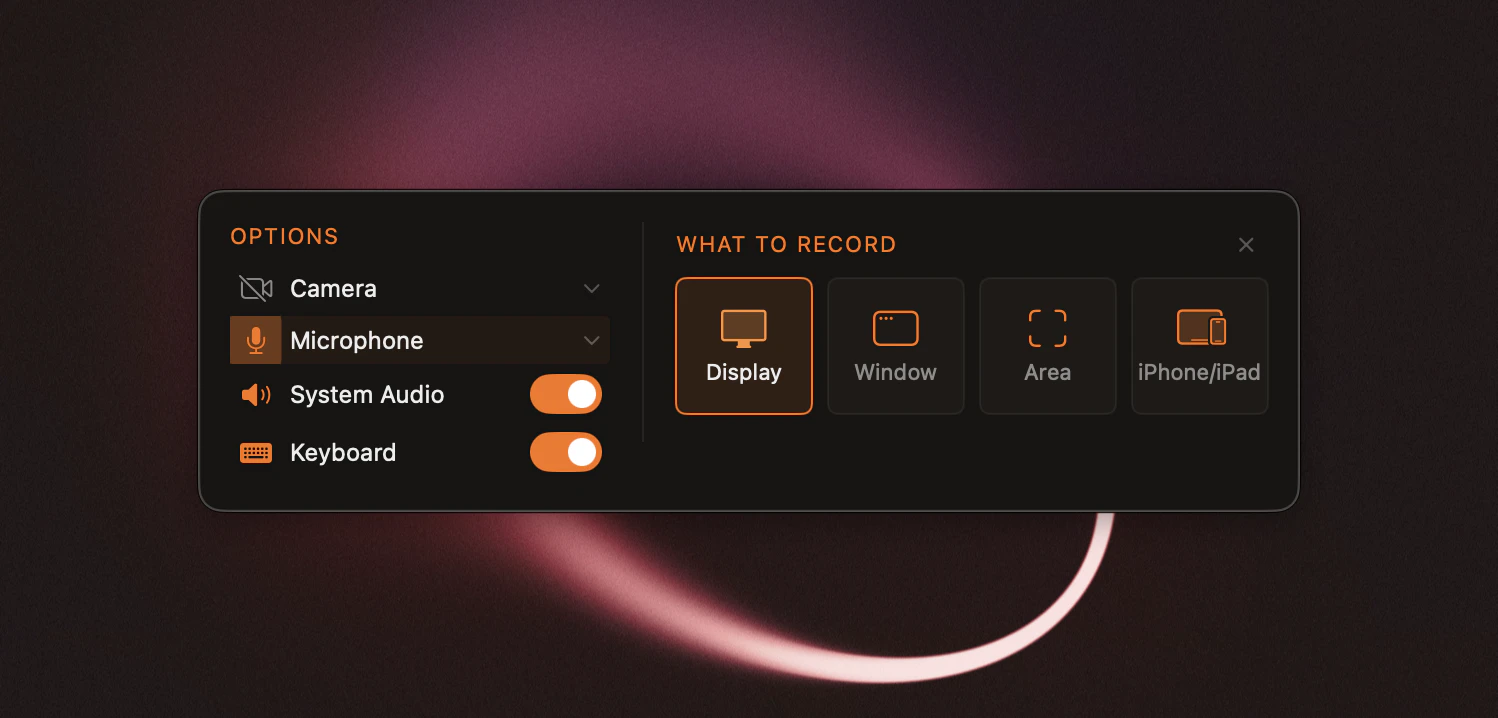

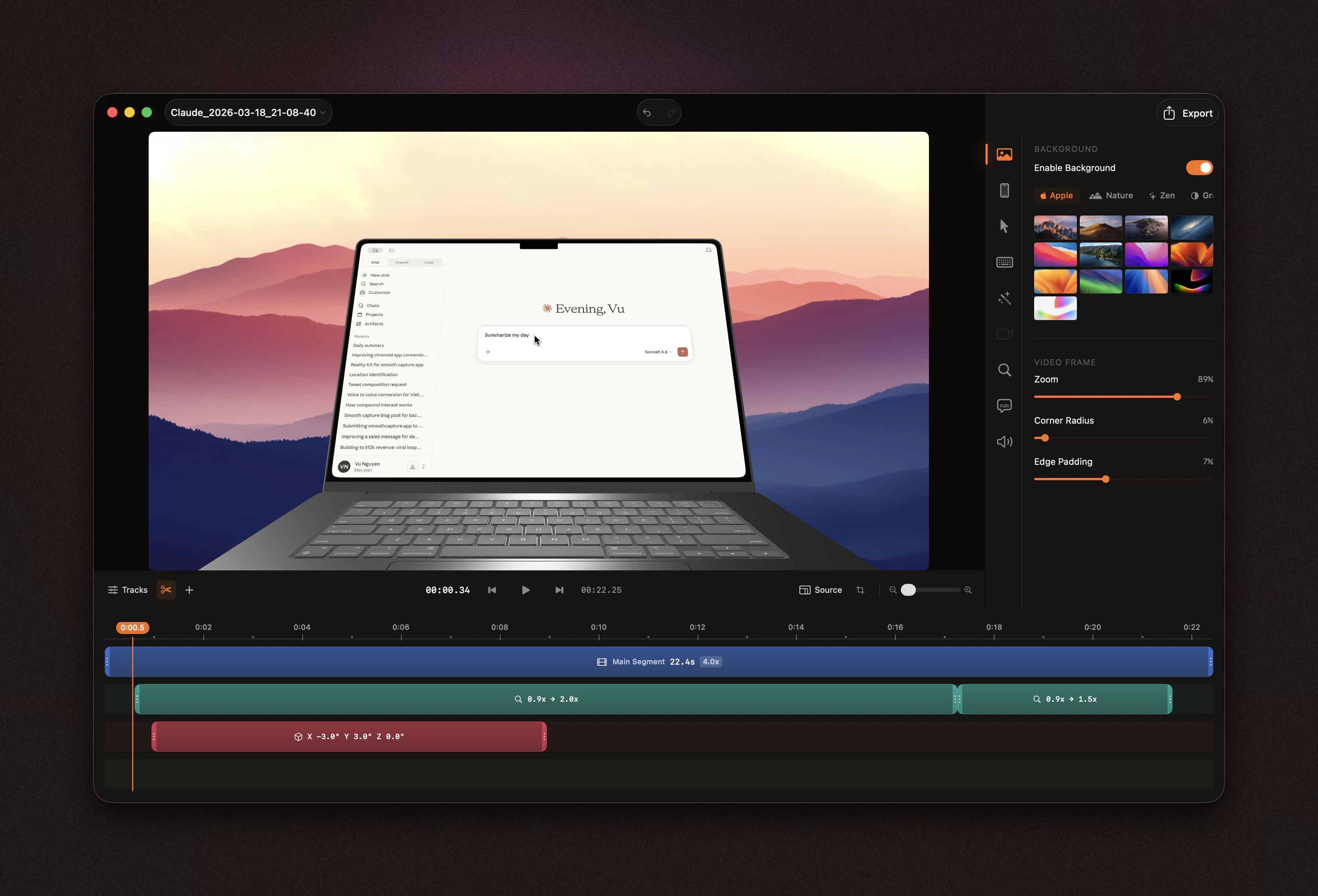

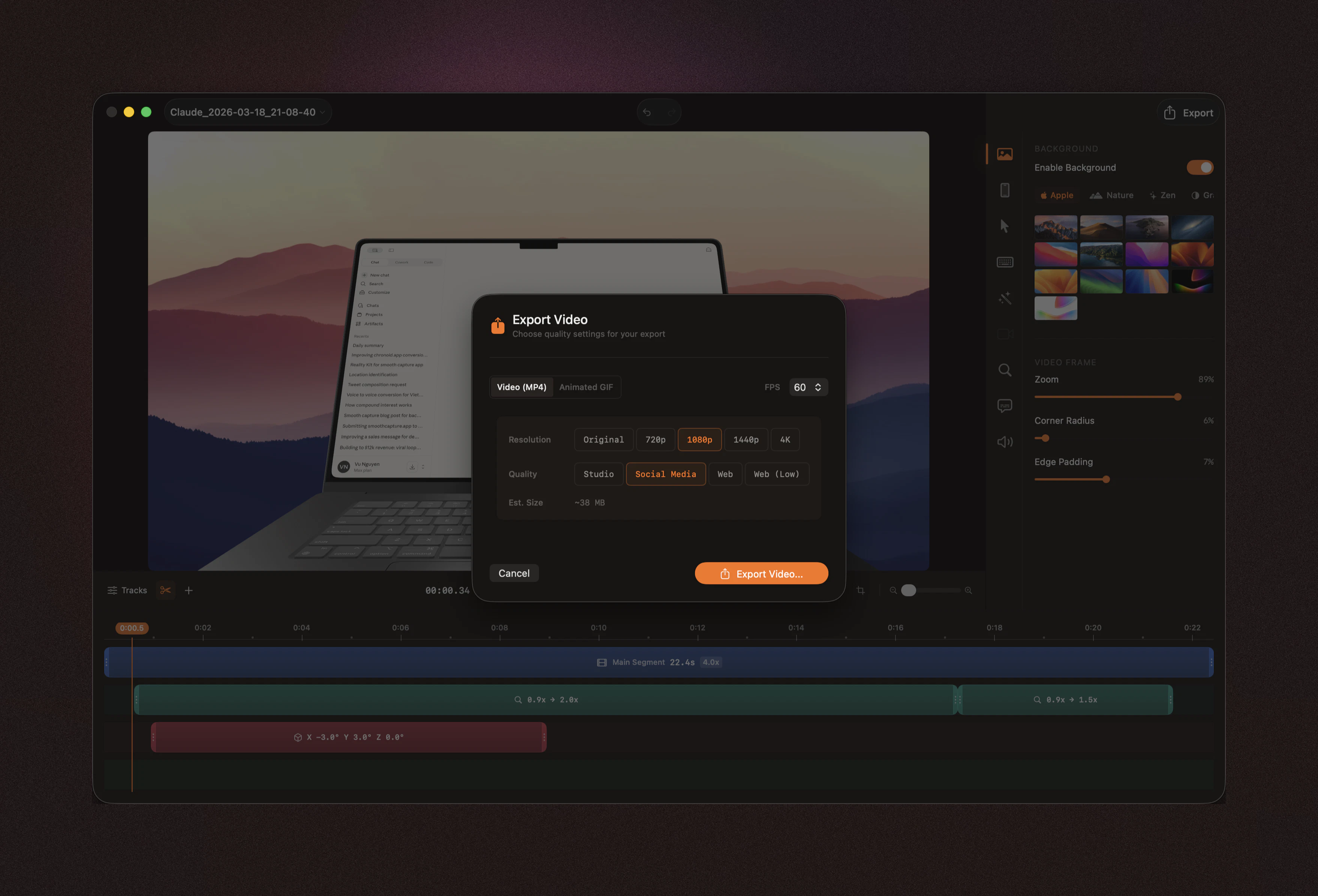

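One-line summary: A native macOS screen recording tool built for developers that uses 3D device frames, direct USB recording, and simple editing to address the time-consuming and tedious work of producing high-quality app demo videos.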

Design Tools

Marketing

Apple

Screen recording

App demos

Developer tools

macOS app

3D device models

Video editing

Native app

One-time purchase

Efficiency tools

Indie development

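User comment summary: Users broadly praise its lightweight native build (Swift + Metal) and one-time purchase model. Core points of interest are how the Metal rendering pipeline performs when recording complex UIs, and very high expectations for the AI editing features on the 2026 roadmap (such as transcript-based editing and filler-word removal).

AI Sharp Take

Smooth Capture's "real value" is not feature accumulation but precise positioning and clear-headed trade-offs. It is not an all-rounder challenging Final Cut Pro or ScreenFlow. It goes straight at a vertical but essential pain point: how indie developers and small teams can efficiently and cheaply produce app demo videos that "look expensive".

That value shows first in its technical choices. Building a lightweight native app of about 50MB with Swift + Metal is an explicit pushback against today's bloated Electron apps and the flood of subscriptions. It fits what technically minded developers deeply want: efficiency, performance, and ownership (one-time purchase). The promise that "the fans don't spin up" is a very persuasive piece of performance marketing.

Second, its feature design shows a strong scenario focus. 3D device frames and direct USB recording from iOS devices are not general-purpose features but "shortcuts" built specifically for the "app showcase" scenario. It reduces a complex process that used to require switching between 3D modeling and video editing software to a single step that directly produces finished material for app stores and product websites. In essence it productizes and templates professional design capability, lowering the bar for high-quality demo videos.

Its challenges and opportunities are equally clear. In the short term, its lightweight editing may fall short for complex narrative videos. In the long term, the announced 2026 AI roadmap (such as transcript-based editing and filler-word removal) is what will determine its ceiling. If it can integrate these AI features deeply into its focused "demo production" scenario rather than build generic tools, it will move from "efficiency tool" to "intelligent production assistant" and build a real moat. For now it has opened a gap in a niche market, but whether it can go from small and beautiful to a sustainable ecosystem depends on whether later iterations stay true to the scenario or are forced to generalize.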

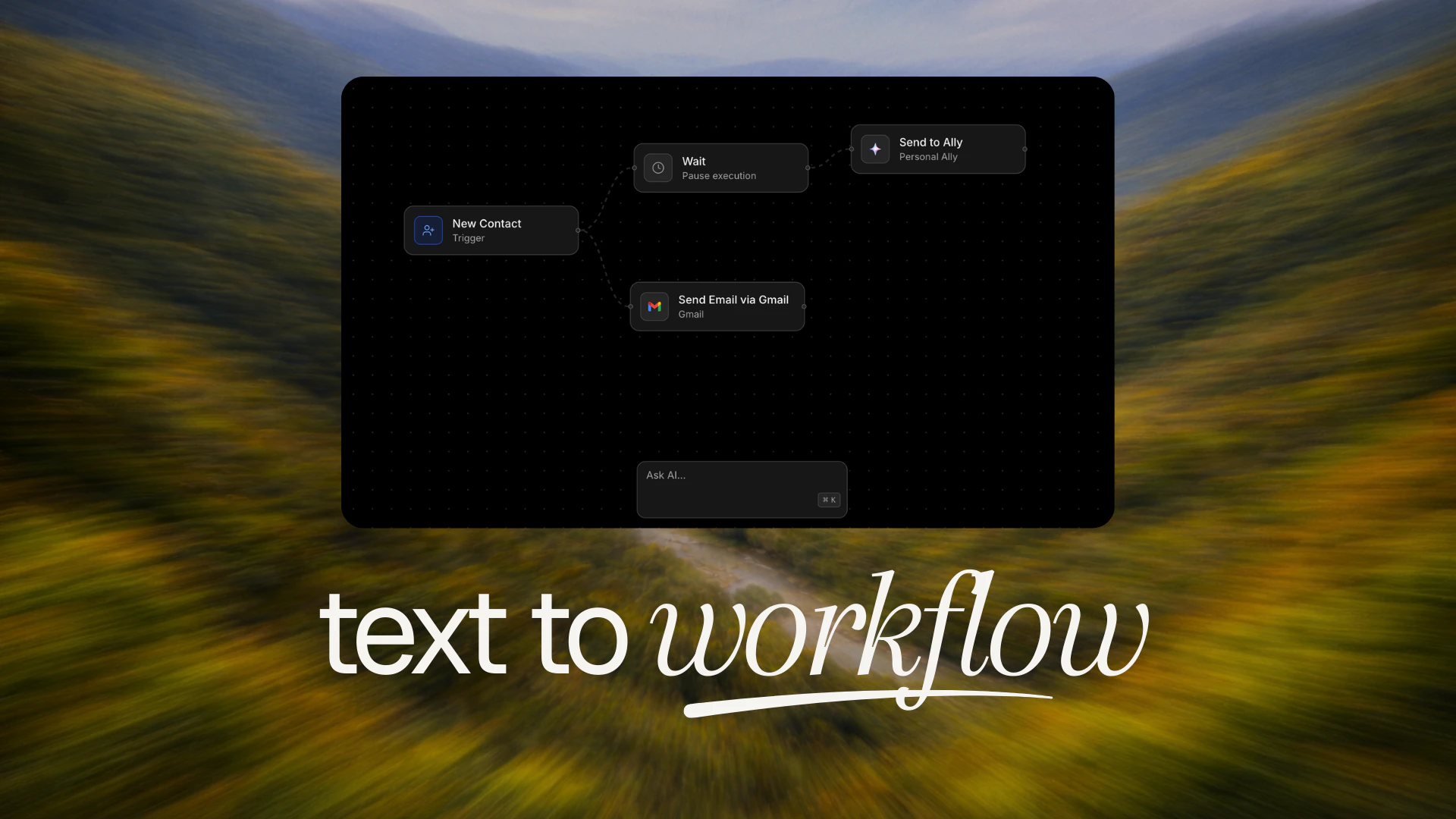

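One-line summary: Link AI is an agentic business suite that integrates multi-channel AI agents (voice, WhatsApp, and more) with automated workflows to address the low operating efficiency and high cost that come from using many disconnected tools.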

Social Media

SaaS

Artificial Intelligence

AI business suite

Agents

Workflow automation

Multi-channel communication

WhatsApp for work

Business operations

Tool consolidation

SaaS

Process automation

AI agents

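User comment summary: Feedback centers on three points: 1. Users value the consolidation but worry that the AI agent (Ally) may weaken the "human touch" and understanding of intent in communication; 2. The website lists too many features and may overwhelm new users, so they suggest better onboarding and UX; 3. The founder responds actively, explaining that Ally's design philosophy is to reduce users' reliance on dashboards and let them manage things through natural-language instructions.

AI Sharp Take

Link AI's ambition is to become a "one-stop AI business operating system". Its core value is not innovation in any single feature but an aggressive consolidation of "tool fragmentation", a chronic business problem. It targets a key pain point: to assemble a basic operations stack, small and mid-sized businesses are forced to juggle multiple SaaS tools, which leads to data silos and lost efficiency.

However, its claim to "replace your entire tech stack" faces a double test. The first is the balance between breadth and depth. Packing calendar, orders, phone, forms, and many other modules into one product easily becomes a "jack of all trades, master of none" trap, and in a specific vertical it will struggle to match the depth of focused standalone tools. The second is the "no dashboard" vision represented by its flagship feature "Ally". This looks like the ultimate liberation but places very high demands on the AI's context understanding, task decomposition, and permission management. The doubts in the comments about "keeping the human touch in communication" express exactly this concern: when AI becomes the single interface for all customer interaction, how do you keep it from becoming a cold, mechanical process handler and make it an agent that conveys the company's own warmth and intent? That requires far more than today's RAG and simple workflows.

The current version looks more like a "connector" that aggregates features. Its real moat depends on whether "Ally" can later deliver intelligent, seamless cross-module orchestration so that data and processes truly flow instead of simply being stacked together. The founder admits the website is overloaded with information, which reflects the product's struggle between "integration complexity" and "simple user experience". If the AI agent cannot effectively reduce cognitive and operational load, the value of consolidation will be greatly reduced. With the AI agent concept now everywhere, Link AI needs to prove it is a "brain" that truly understands business logic rather than another complex tool that itself needs to be "glued" together.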

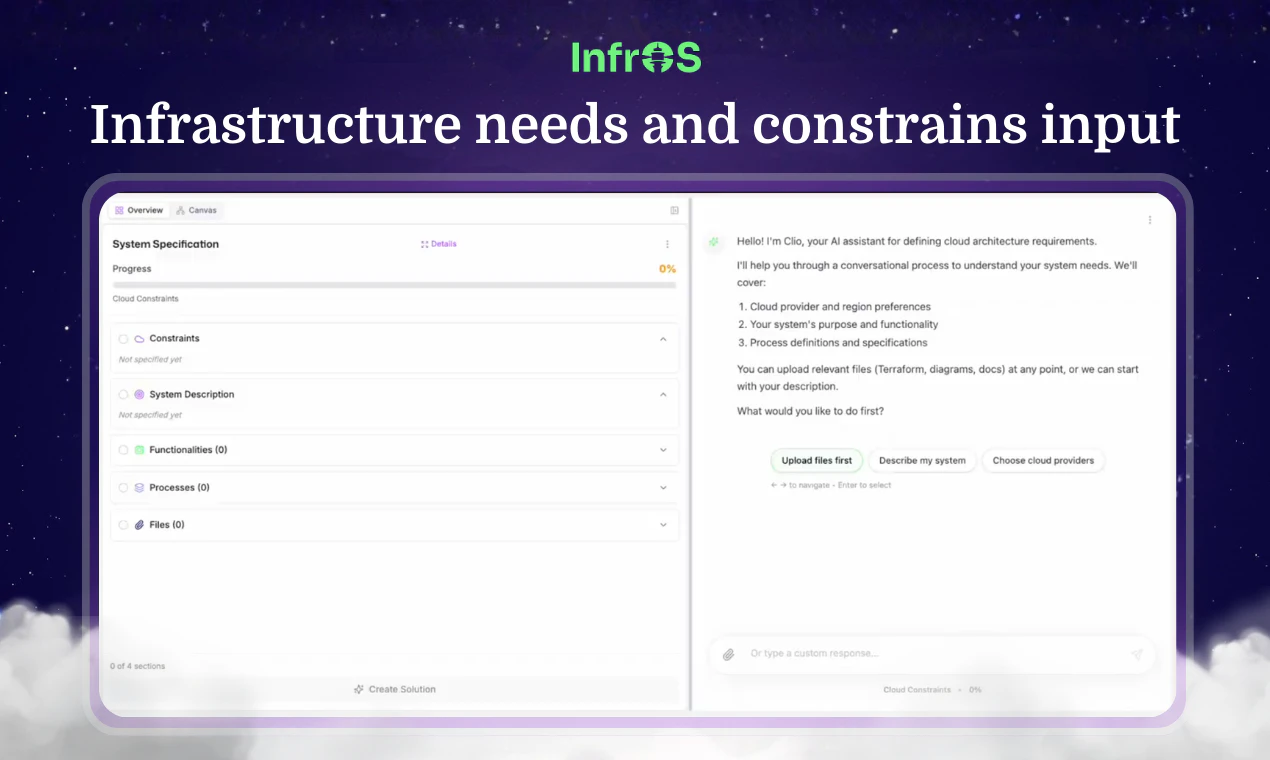

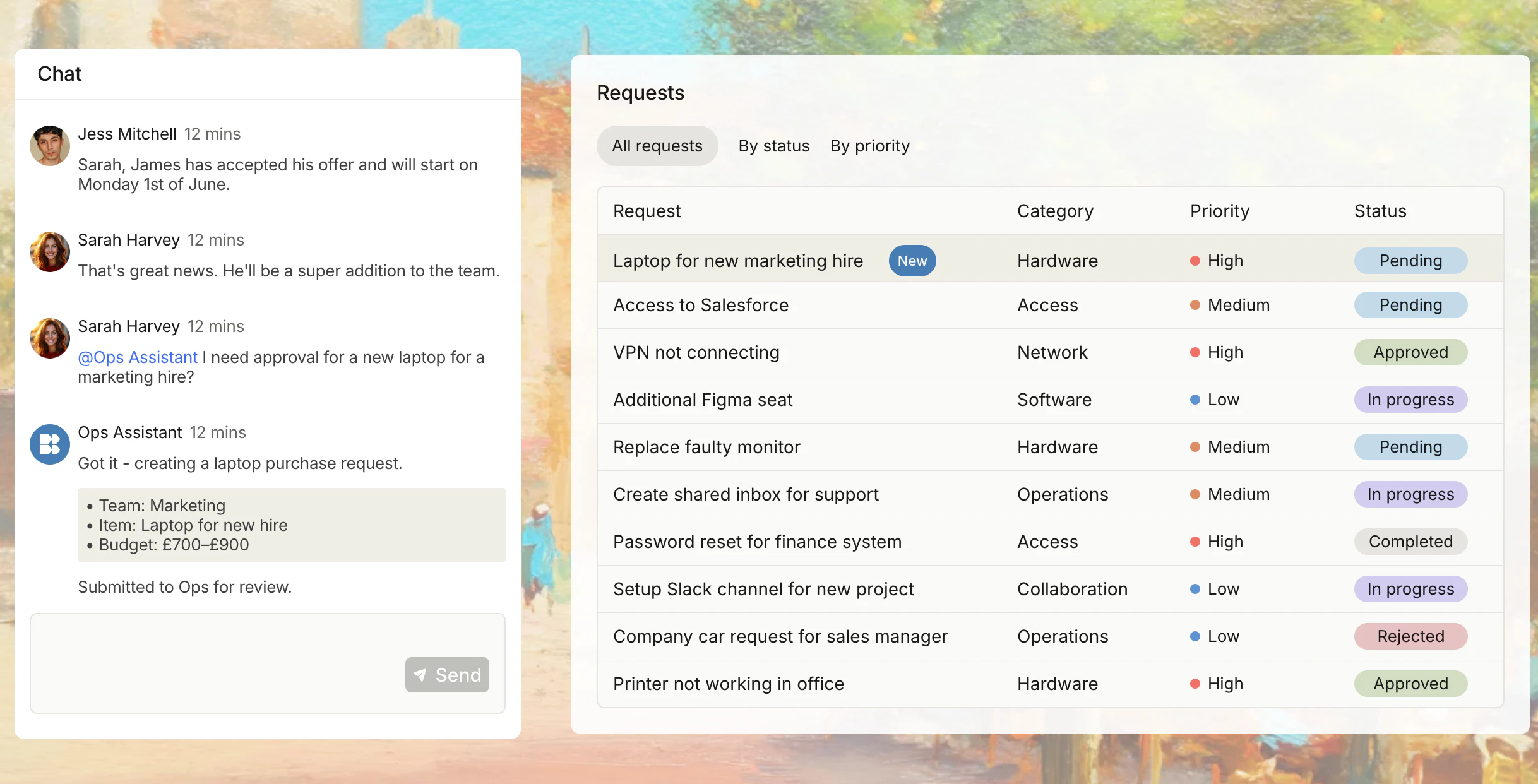

I had hunted Stitch by Google almost a month ago. The Stitch team is back with some major updates to Stitch, thereby making it your AI-native vibe design partner! :)

Here is a quick walkthrough of everything new in Stitch:

🎨 The AI-native canvas can hold and reason across images, code, and text simultaneously. The new agent manager helps you design in parallel. (PS … light mode!)

🧠 A smarter design agent now understands your entire canvas context. You can swap images, generate product briefs, or mix mobile and desktop screens on the same canvas.

🎙️ You can vibe design with your voice (in Preview). Stitch can ‘see’ your canvas and your selected screens. You can ask for design critiques, variations, or navigate your canvas.

⚡️ Instant prototypes. Just hit the play button to see a prototype or preview your app in seconds. Stitch can imagine the next screen based on your mouse click.

📐 DESIGN.md and consistency. Every new design automatically starts with a cohesive design system which vastly improves consistency. The new DESIGN.md file can be used to export or import your design rules.

Read more about the updates here. Stitch is perfect for designers exploring variations or founders shaping new products. If you’re into the future of AI + design, this is worth checking out!

I hunt the latest and greatest launches in tech, SaaS and AI, follow to be notified → @rohanrecommends

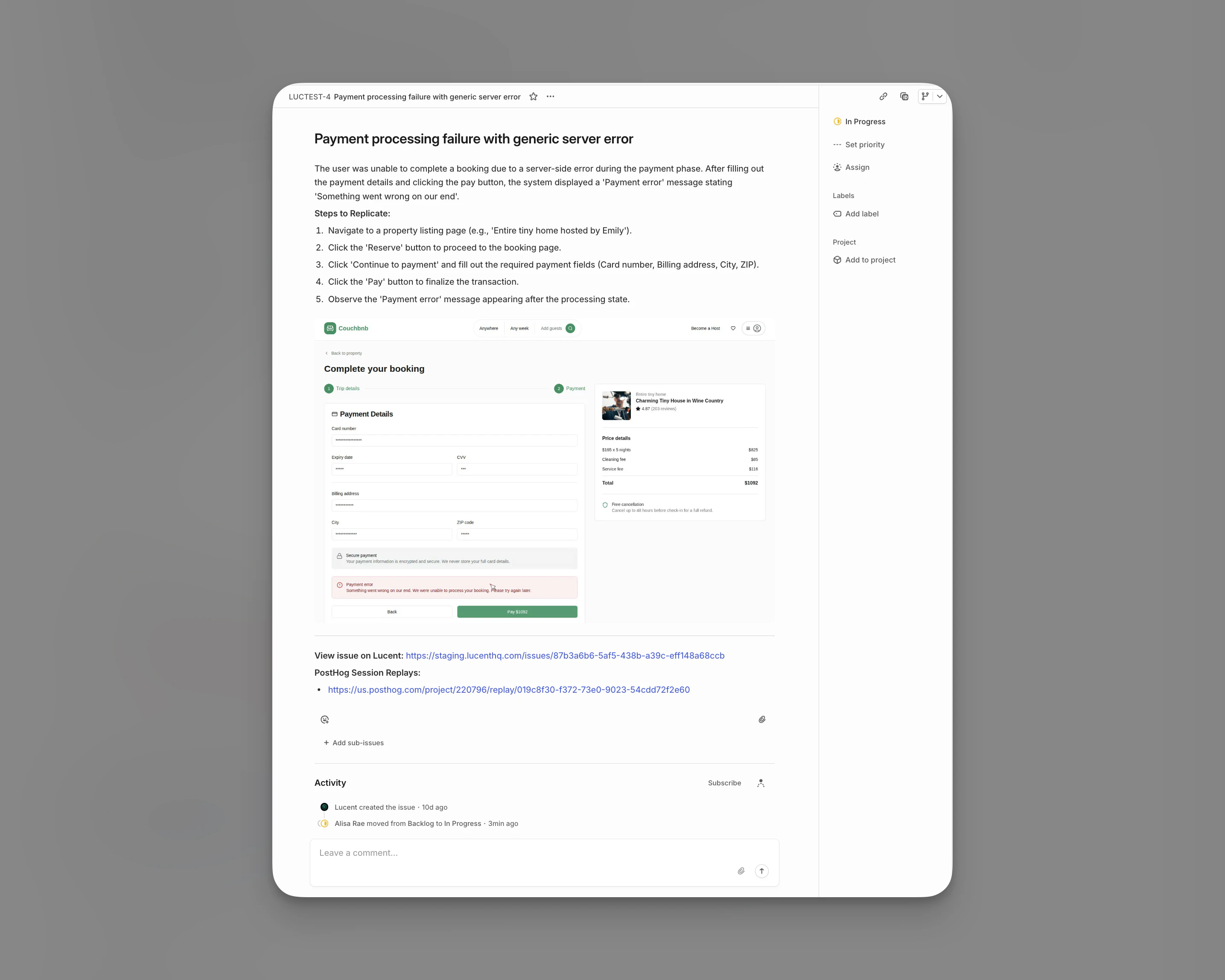

Curious how Stitch handles the gap between generated UI and production code. With most vibe design tools I've tried, the output looks great in isolation but falls apart once you drop it into an existing design system - wrong tokens, hardcoded values, etc. Does Stitch have any awareness of existing component libraries or is it starting fresh each time? Also wondering about responsive behavior - generated from a single viewport or does it reason about breakpoints at generation time?

Looks pretty cool. Tho today I read that Apple started rejecting solutions that are vibecoded. 🫣

I’ve tried a few similar tools, and they look great at first but get messy when you try to integrate them into an existing system. Curious how well this handles that in practice.

I am often trying to make marketing assets with product UI. Is this something that can help me with that?

Tested it without giving any UI hints, just described the core functionality, and Stitch inferred a layout I would have probably landed on myself after a few iterations. Impressive how it picks up context implicitly.

Curious: how does it handle design consistency when you iterate heavily and go back and forth with prompts? Does DESIGN.md help keep things stable or does it drift?

The token mismatch Mykola called out is the production blocker. Every vibe design tool I've tried generates clean code that ignores your design tokens and component API. If Stitch can ingest a token file and respect those constraints at generation time, that closes the prototype-to-production gap.

Thanks! I really enjoyed using Stitch — it helped me improve what I already felt was a promising UI.

That said, one thing I (and other iOS engineers I’ve spoken to) found frustrating is the lack of visibility into what Stitch is doing. For example, when I point out issues or explain what I don’t like in a design, Stitch starts making changes without confirming whether it actually understood my concerns. It would be really valuable to have more communication and feedback — especially asking clarifying questions before jumping straight into a solution.

Another issue is that it sometimes seems to hang indefinitely. The only way to recover is to refresh the page or rerun the prompt, but there’s no indication of whether it’s still working or stuck. Some kind of status feedback would make a big difference here.

Lastly, I often find it confusing to choose between Gemini 3.1 (Pro) and NanoBanana. When I’m making small refinements to an existing UI, it’s not clear which option is more appropriate. It can feel like Gemini 3.1 would give better results, but at the same time, NanoBanana seems more suited to iterative tweaks — making the choice unclear.

Congratulations!

Sounds like a lot of "inspirations" from https://www.wenderapp.com/ 🫣

This is crazy. I tried it and got amazing results.

Great Product honestly