PH Hot List | 2026-04-15

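One-line summary: Fathom 3.0 is an AI meeting notetaker that records without a bot joining the call, offers AI search across the whole account, and integrates with mainstream AI assistants, addressing the core difficulty of staying engaged in a video meeting while also keeping good notes.

Productivity

Meetings

Artificial Intelligence

AI meeting assistant

Smart notes

Meeting transcription

Knowledge management

Productivity tools

SaaS

Conversational AI

Sales enablement

Remote collaboration

Workflow integration

User comment summary: Users broadly praise its accuracy and the way it has transformed their workflows, singling out live summaries, action-item extraction, and search. Main feedback: requests for switching between multiple Google accounts to better serve freelancers; a wish that the iOS app be included in existing subscriptions; and a few small rough edges, such as having to launch the app before the Zoom plugin can be used.

AI Sharp Take

On the surface, Fathom 3.0 looks like a pile of new features; in substance it is a clear shift in strategic focus, from "recording meetings" to "digesting and activating meeting knowledge". Its core value has moved beyond the race on transcription accuracy toward building a private knowledge graph that has meeting data as its source.

"Bot-free capture" looks like a fix for a small social awkwardness, but it actually lowers the psychological barrier to use and is a key design choice for driving company-wide adoption. "AI search across all history" links scattered meeting records into a queryable organizational memory, and that is the real efficiency multiplier for knowledge workers. The deep integration with Claude and ChatGPT is a shrewd move as well: Fathom positions itself as the "data pipeline" of the AI ecosystem rather than trying to compete with the giants at the generative-AI application layer.

The user comments reveal its real moat: it applies to so many scenarios that it can spread into sales, customer service, coaching, engineering, and other functions, creating cross-functional, hard-to-drop demand. The shift from "personal productivity tool" to "core team workflow" is what lets it resist erosion from the built-in features of platforms such as Zoom and win users back from Gong. That is also where its challenge lies: how does it balance its original "lightweight and easy" promise against increasingly complex demands from different verticals? And as the meeting knowledge base grows, delivering smarter knowledge linking and insight, beyond keyword retrieval, is the next question it must answer.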

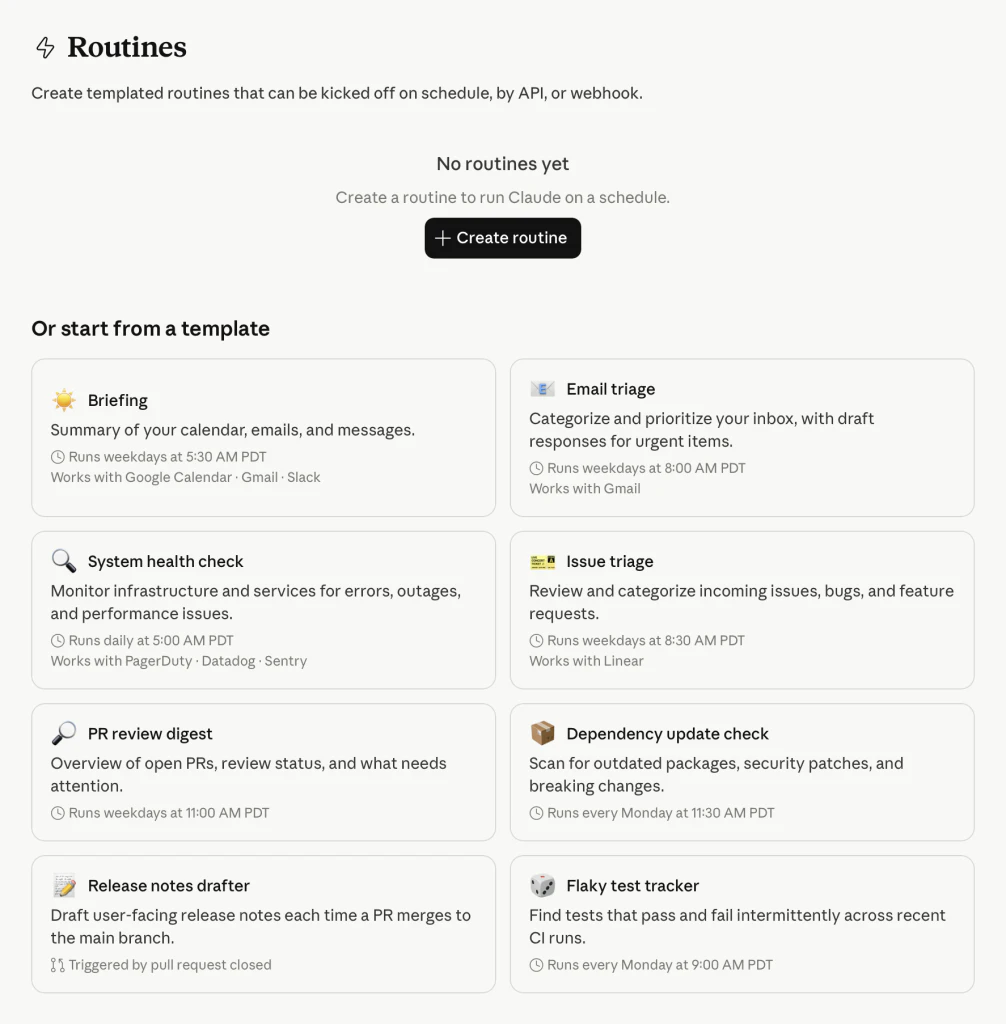

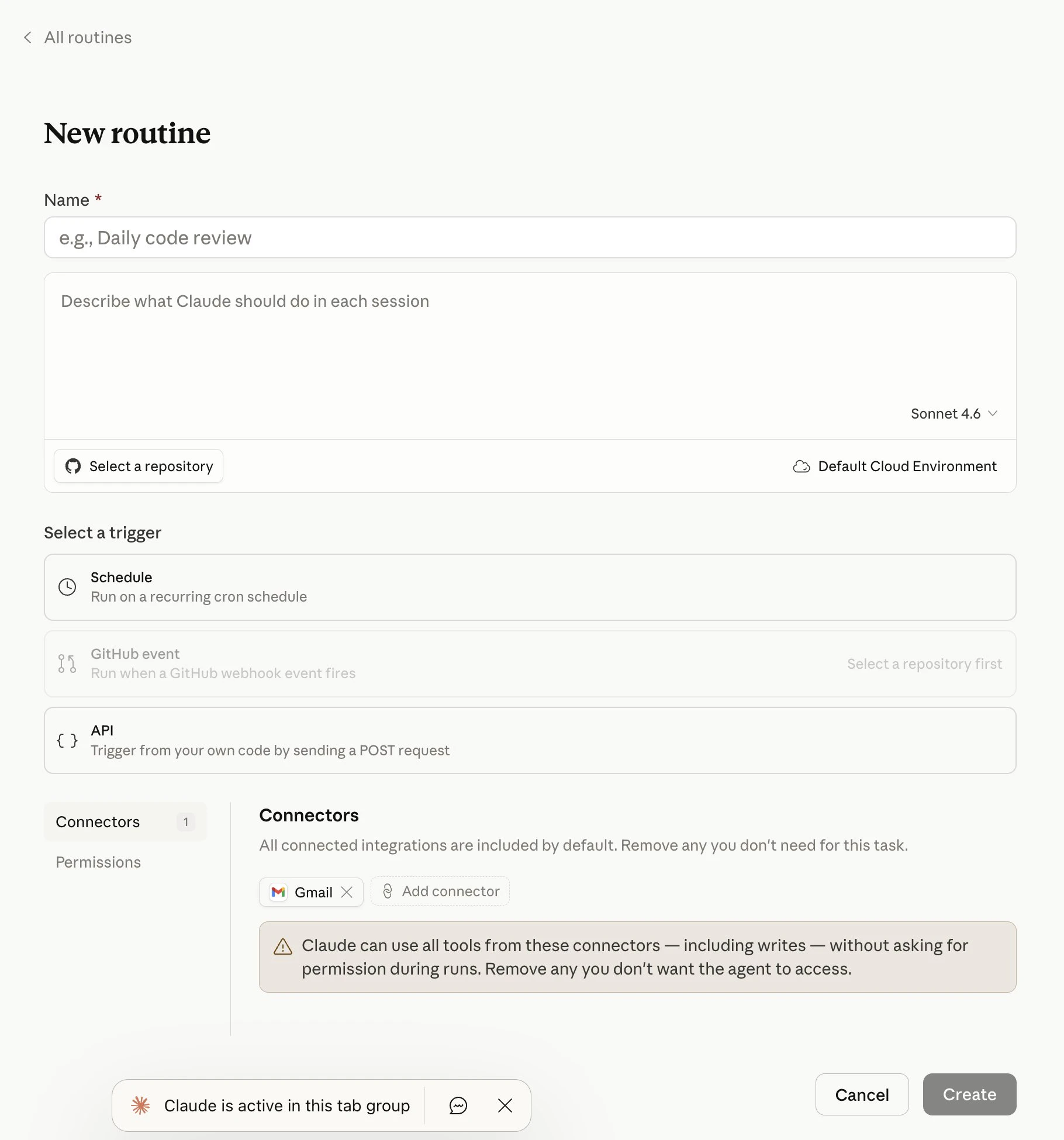

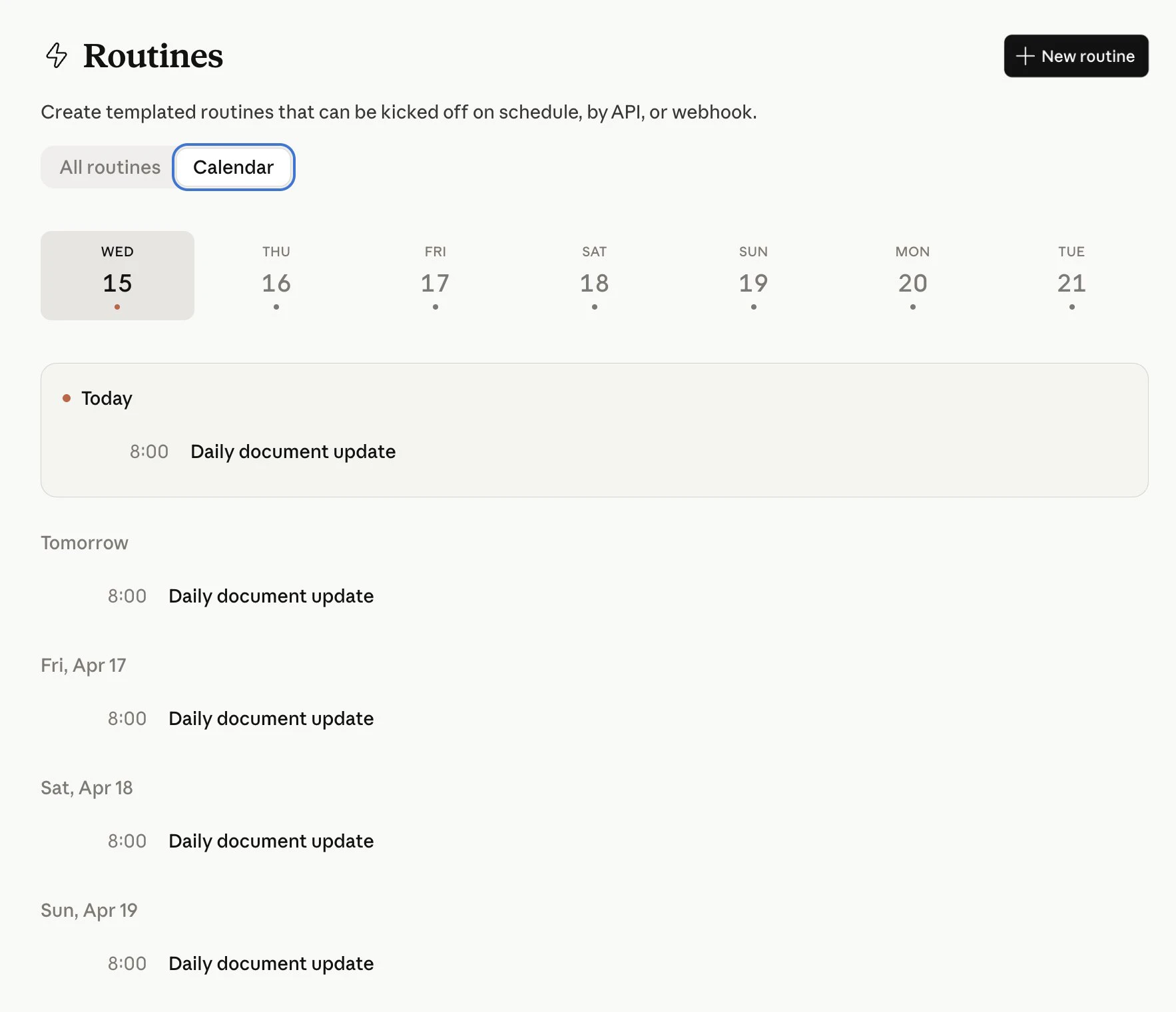

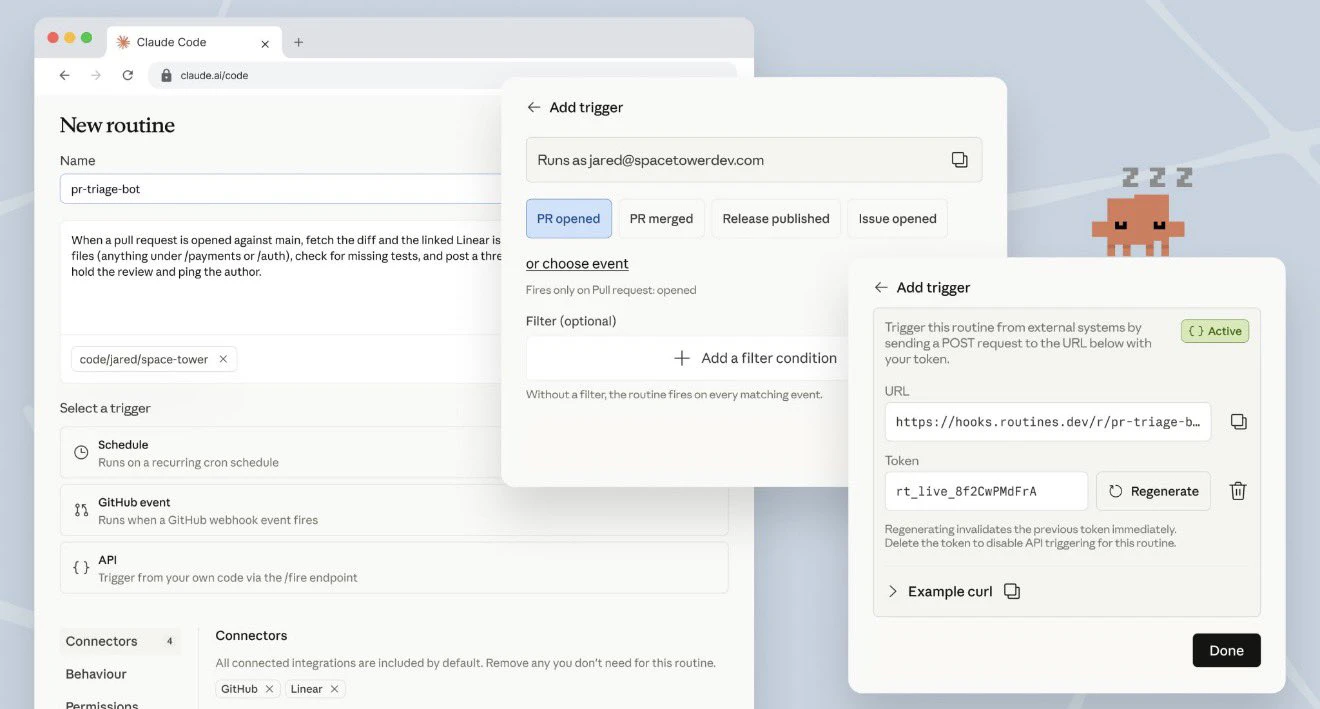

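One-line summary: A tool that runs AI coding automations on Anthropic-hosted infrastructure. Triggered by a schedule, an API call, or a GitHub event, it automatically handles repetitive work for engineering teams, such as code review, ticket triage, and deployment verification, without developers keeping a local environment running.

Productivity

API

Developer Tools

AI coding automation

DevOps automation

Serverless architecture

Scheduled tasks

GitHub integration

Engineering team productivity tool

Intelligent code review

Continuous integration and delivery

Anthropic ecosystem

SaaS

User comment summary: Users broadly endorse the core value of "no local babysitting", which saves a great deal of time. Main concerns: how it differs from existing features (such as dispatches), what the cap of 15 runs per day means for teams in practice, how far the automated results can be trusted, and technical details such as keeping context across PR sessions.

AI Sharp Take

Claude Code Routines is essentially an "AI agent as a service" product. What is disruptive is not the automation itself but the recasting of AI coding capability from an "interactive tool" into a "schedulable infrastructure resource". It marks a key step in AI-assisted development from "copilot" mode toward "autopilot" mode.

The product neatly wraps three kinds of triggers (schedule, API, GitHub events) into one package, reducing the cognitive load and operational cost of embedding AI into a CI/CD pipeline. Its biggest selling point, hosted execution on Anthropic's infrastructure, looks like an implementation detail but is a deliberate piece of business-model design: by locking in the execution environment, it turns users from scattered "API callers" into "ecosystem residents" who depend deeply on the hosted platform. That builds a deeper moat for Anthropic.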
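To make the "three triggers, one routine" idea concrete, here is a minimal Python sketch of a routine that accepts schedule, API, and GitHub triggers through a single entry point and enforces a daily run cap. It does not reflect the product's actual interface: the `Routine` class, the `fire` method, the trigger names, and the assumption that the 15-run cap applies per routine are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Callable, Dict

# The 15 runs/day figure comes from the user comments; treating it as a
# per-routine cap is an assumption made for this sketch.
DAILY_RUN_CAP = 15
KNOWN_TRIGGERS = {"schedule", "api", "github"}


@dataclass
class Routine:
    """Hypothetical routine: one action reachable from three trigger types."""
    name: str
    action: Callable[[dict], str]
    runs_by_day: Dict[date, int] = field(default_factory=dict)

    def fire(self, trigger: str, payload: dict, today: date) -> str:
        if trigger not in KNOWN_TRIGGERS:
            raise ValueError(f"unknown trigger: {trigger}")
        used = self.runs_by_day.get(today, 0)
        if used >= DAILY_RUN_CAP:
            # Over the cap: the run is skipped rather than queued.
            return "skipped: daily cap reached"
        self.runs_by_day[today] = used + 1
        # Every trigger type funnels into the same action with a uniform context.
        return self.action({"trigger": trigger, **payload})
```

Funnelling every trigger into one `fire` call is what keeps the quota and the audit trail in a single place, and it is also why a team-wide cap would be felt quickly once several event sources point at the same routine.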

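The potential risks should not be ignored, however. First, "black-box automation" raises a question of accountability: when AI-run code review or deployment verification gets it wrong, how is the chain of responsibility defined? Second, the cap of 15 runs per day exposes the cost control inherent in a hosted service and hints that large-scale, high-frequency enterprise use may face steep upgrade fees. Finally, the commenters' doubts about the "trust threshold" go to the heart of the matter: will engineering teams really dare to hand critical steps, such as PR merges or production deployment verification, entirely to an AI agent whose decision logic cannot yet be fully explained?

The product's success will depend not on how many automation features it has, but on whether Anthropic can build an "AI agent reputation system" that is as stable and reliable as a human engineer. Otherwise it is likely to stay at the level of an "advanced script" for low-risk, highly repetitive tasks, and struggle to reach the core decision-making of software development.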

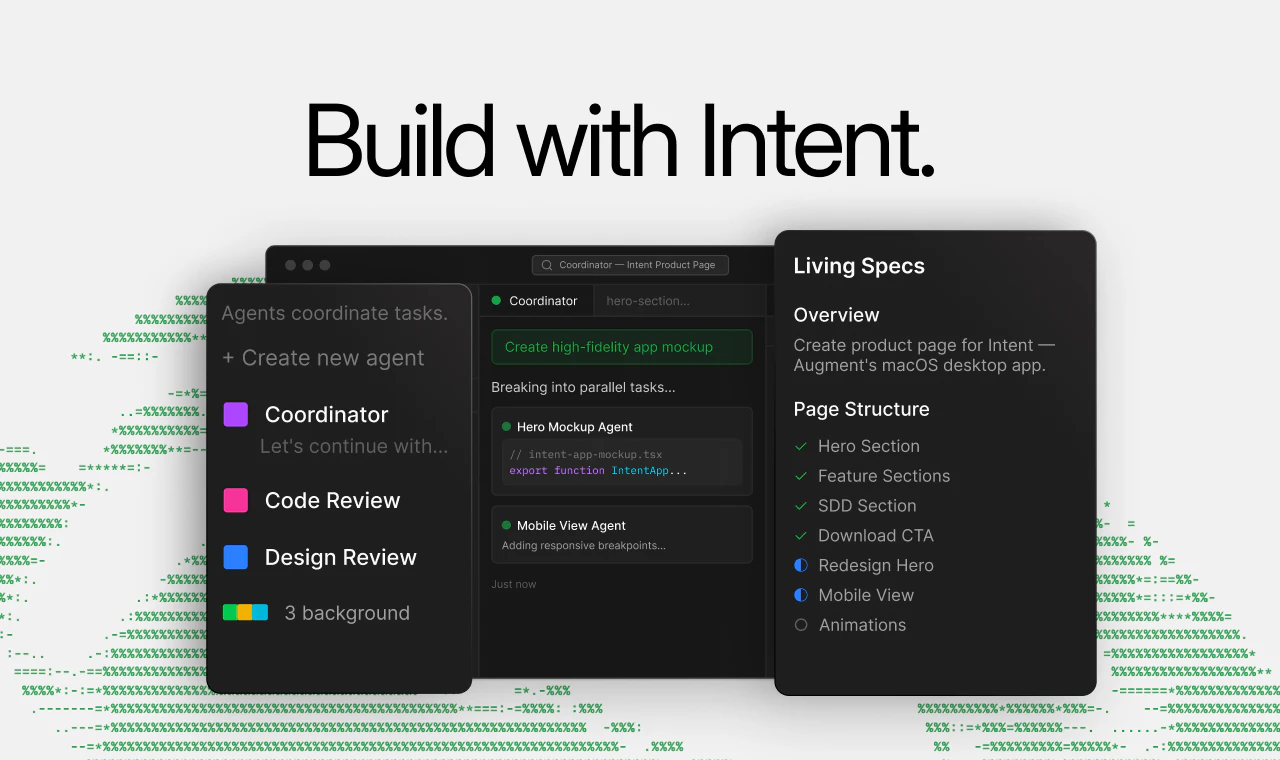

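One-line summary: Intent is a developer workspace designed for agent-driven development. It turns feature requirements into specifications, and a team of AI agents then collaborates in isolated workspaces across the whole flow from implementation to verification, addressing the broken context, progress tracking, and result verification problems developers face when coordinating multiple AI coding assistants.

Productivity

Developer Tools

Artificial Intelligence

AI agent development platform

Developer workspace

Multi-agent collaboration

Spec-driven development

Automated code generation and verification

Git-integrated development environment

Intelligent coding assistant

Software engineering automation

Agent orchestration layer

User comment summary: Users strongly endorse its "coordinator-specialist" multi-agent architecture and "living spec" design, seeing them as a key evolution in agent-driven development. Main concerns: how well it actually handles complex, messy codebases; how far the quality of the output code can be trusted; merge conflicts that parallel tasks may produce; and how deeply it integrates with traditional SDLC tools.

AI Sharp Take

Intent is not yet another "souped-up Copilot"; its real ambition is to rebuild the underlying workflow of AI-assisted development. It drops the popular single-prompt interaction model in favor of an engineering-style paradigm of "spec-driven, multi-agent collaboration", which targets the core weaknesses of today's AI coding tools: fragmented context and a lack of verifiability.

The product's core value lies in two kinds of "packaging". First, it packages a complex agent orchestration layer, turning the months of engineering a team would spend building its own multi-agent scheduling system into an out-of-the-box service. Second, it packages a complete development environment, placing code generation, testing, verification, and debugging inside a unified, isolated Git workspace, so that the AI's output is no longer an isolated code snippet but a preliminarily verified changeset that can go straight into an existing Git workflow.

The challenges it faces are just as sharp. First, the quality of the spec sets the ceiling on the output, which requires developers to move from "writing prompts" to "writing rigorous, machine-executable specs", and that carries a learning cost. Second, whether agents can truly understand implicit cross-module dependencies in tightly coupled legacy systems still needs validation at scale. The commenters' worries about merge conflicts and dependency management are exactly the gap it must cross on the way from tech demo to engineering practice.

In essence, Intent tries to apply AI and automation to the software-engineering ideas of "division of labor" and "continuous integration". It is no longer content to be a "copilot"; it wants to be the "autopilot system" for the whole development crew. The key to success is whether, in the complex and chaotic airspace of real development, the system can navigate smoothly or will need frequent human takeover because it cannot handle countless edge cases. Its direction suggests that AI-assisted development is moving from a "tool enhancement" stage to a new contest over "process reshaping".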

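One-line summary: Lovable Desktop is a fast, lightweight local desktop app that organizes projects in tabs and connects to local MCP servers, addressing the scattered tools and weak integration developers face in multi-project workflows.

Design Tools

Artificial Intelligence

Vibe coding

Desktop app

Local-first

Workflow optimization

Developer tools

MCP integration

Tab management

Productivity tools

Native Mac experience

User comment summary: Users broadly endorse the core value of local MCP connections, saying it noticeably improves workflow efficiency. Questions and suggestions center on: wanting concrete examples of MCP services that have been integrated successfully; asking whether a secure code sandbox will be added; hoping for integrations with specific ecosystems such as Xcode; and reporting limited ability on some complex tasks as well as problems accessing the download.

AI Sharp Take

The Lovable Desktop app slots neatly into an emerging market gap: a local workbench for AI-native developers. Its "fast, lightweight" claim targets the inherent latency and bloat of web-based AI tools (such as the various browser-based AI IDEs), while "local MCP support" is its real killer feature.

MCP (Model Context Protocol) is essentially a conduit that connects AI models to external data and tools. By making it "local", Lovable lets developers plug self-built or private toolchains (local databases, internal APIs, custom scripts) into their AI-assisted development flow safely and efficiently without going through the cloud, meeting both efficiency and data-security needs. It is trying to become a new form of the "local terminal" for the AI era: a hub organized around projects and tabs, with AI as a collaborator, and control held firmly on the local machine.

Its challenges are equally clear. First, the ecosystem barrier: the commenters' question about "which MCP servers have been connected" exposes an early-stage weakness, namely that its value depends entirely on the richness of the third-party MCP ecosystem. Second, fuzzy positioning: it sits between general-purpose AI workbenches (such as Cursor) and vertical design tools (such as Figma), and the commenters' doubts about Xcode integration and complex-task ability show that it needs to choose more clearly between "broad and complete" and "deep and focused". Finally, while "local-first" protects security, it may also get in the way of collaboration and sharing.

Its real value is not in replacing any particular development tool but in trying to redefine how tools "connect". If it can build a thriving marketplace of local MCP plugins and settle into a stable core workflow, it could become the entry point to a new generation of developer desktops. Otherwise it may end up as an interesting but niche shell around a collection of productivity plugins. Its fate rests on the ecosystem, not on the features themselves.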

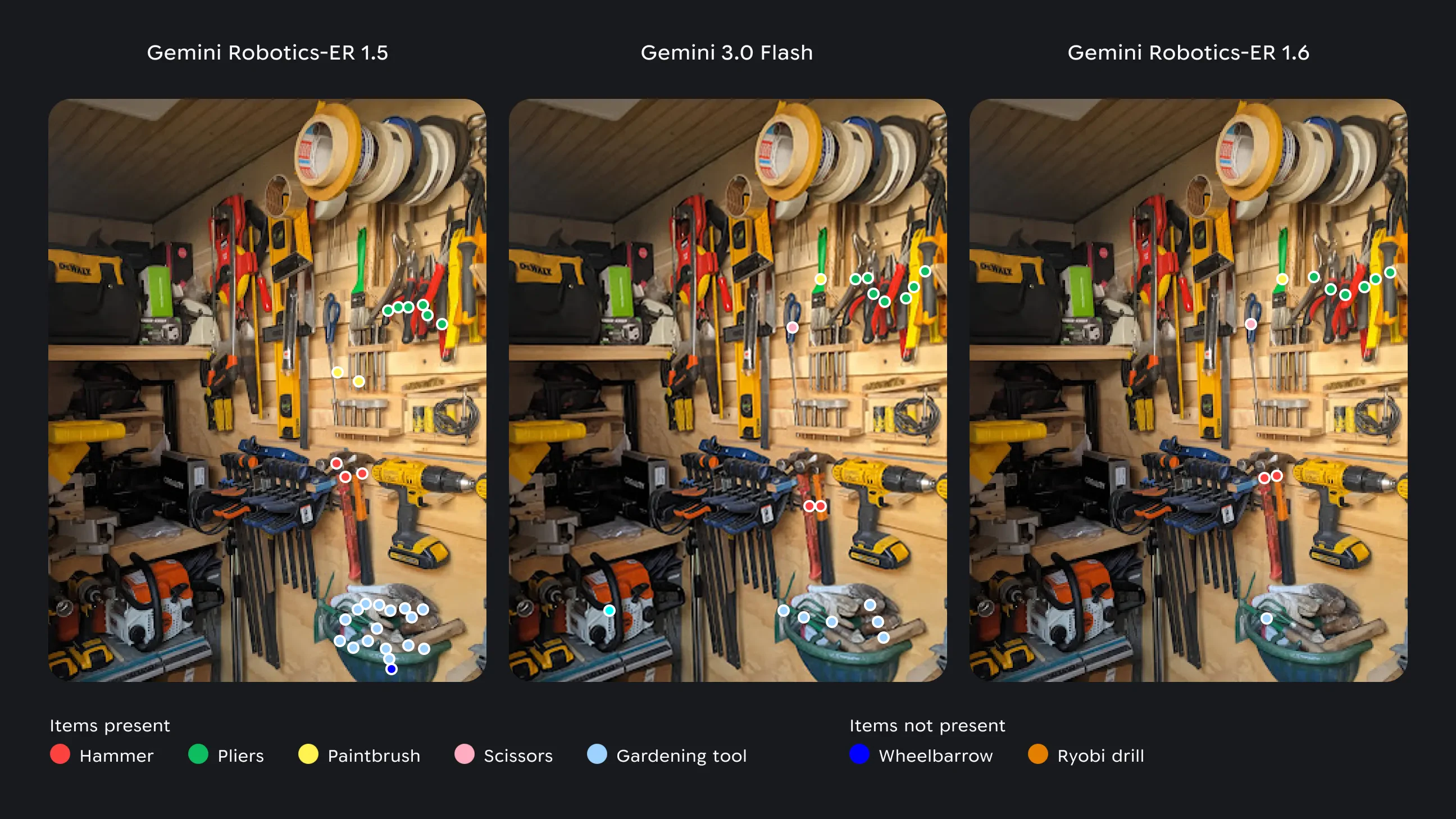

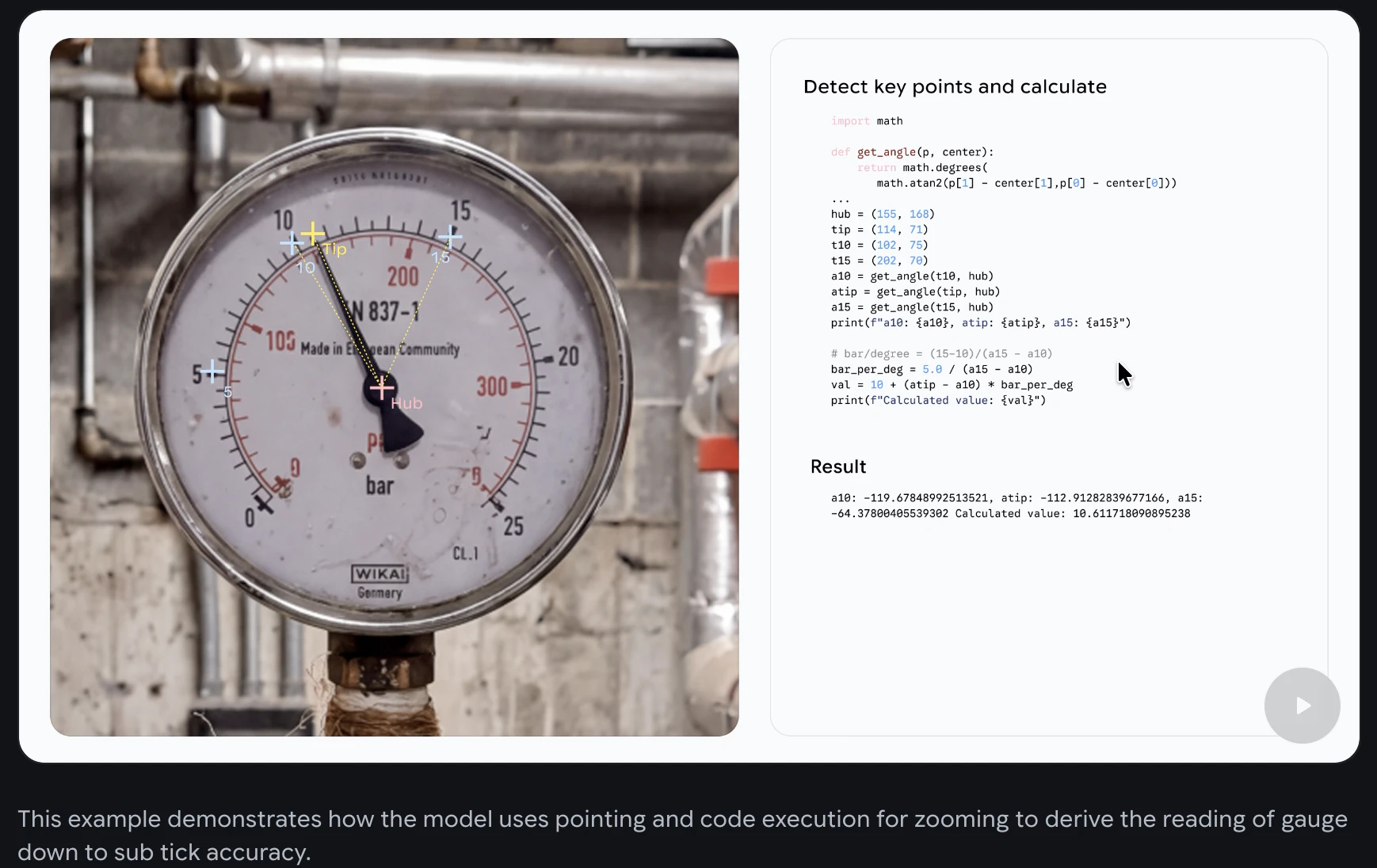

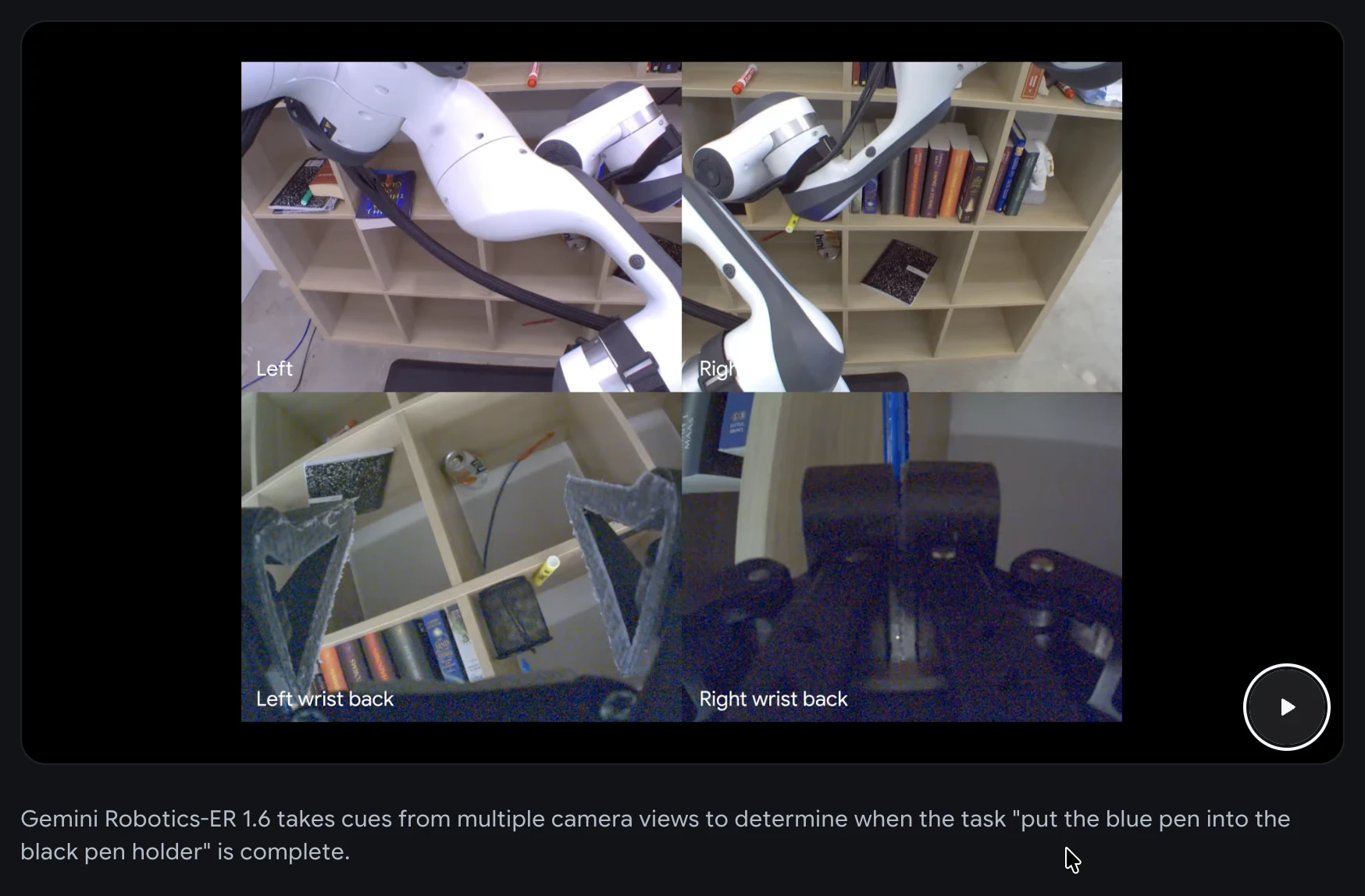

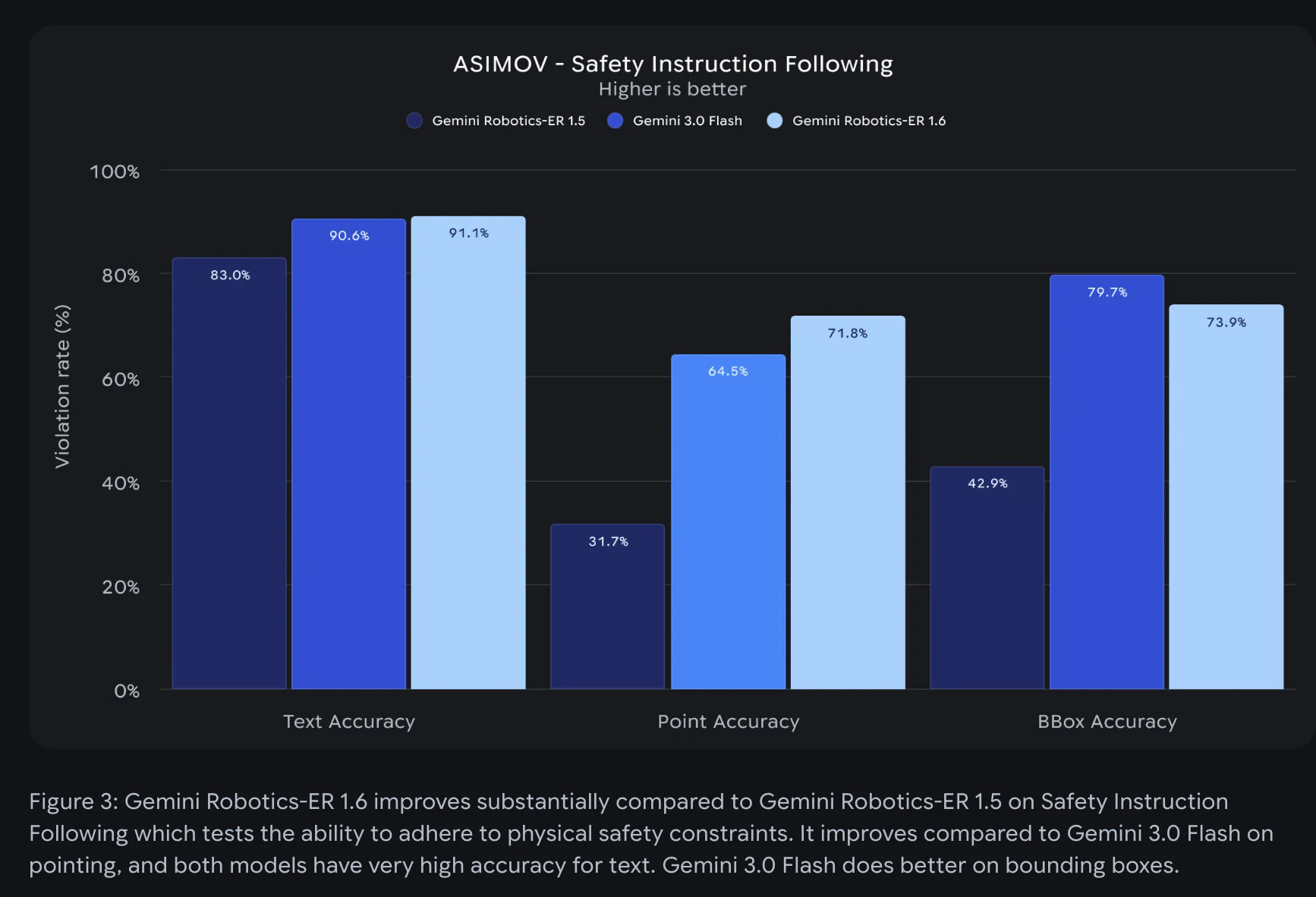

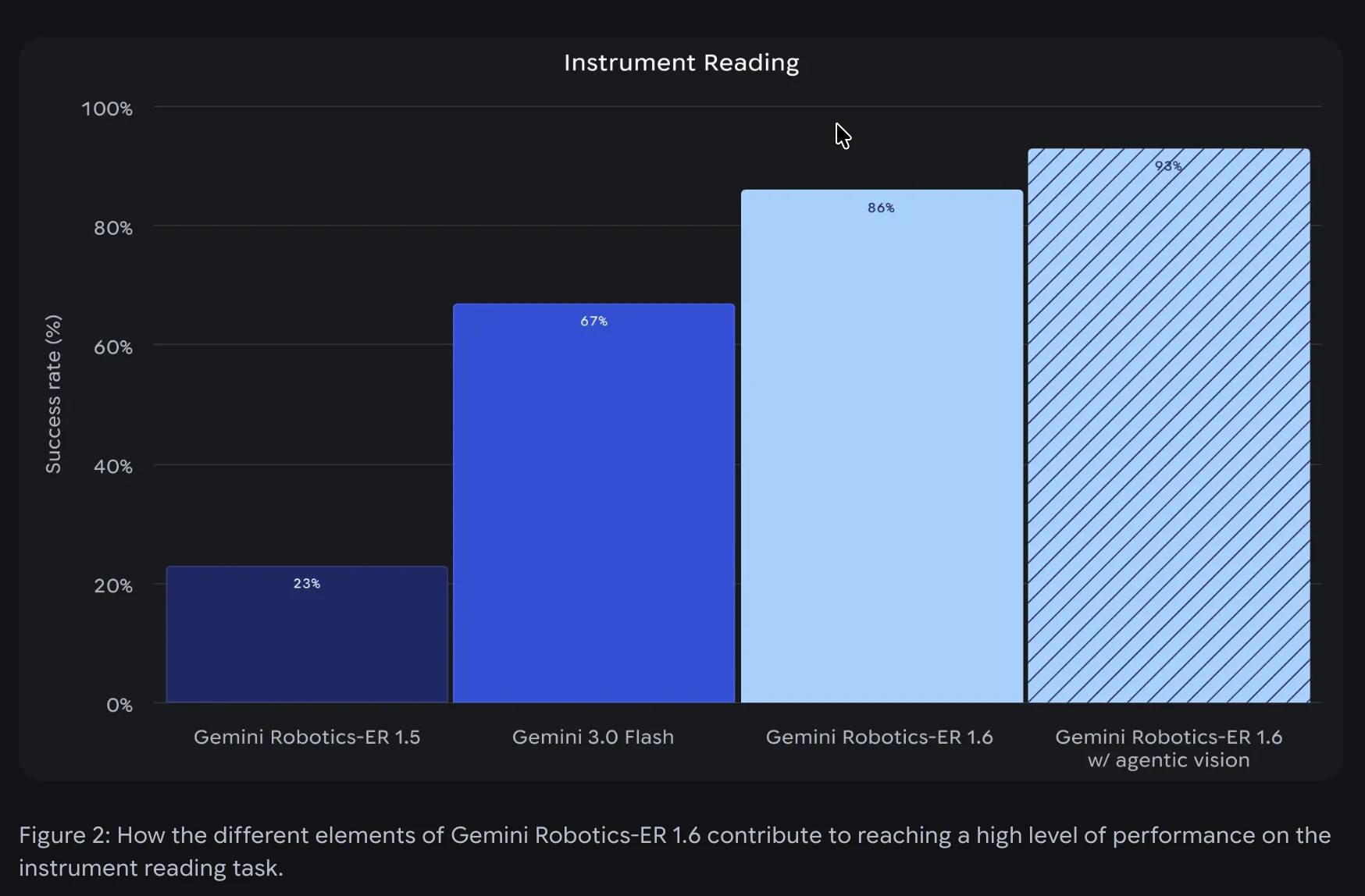

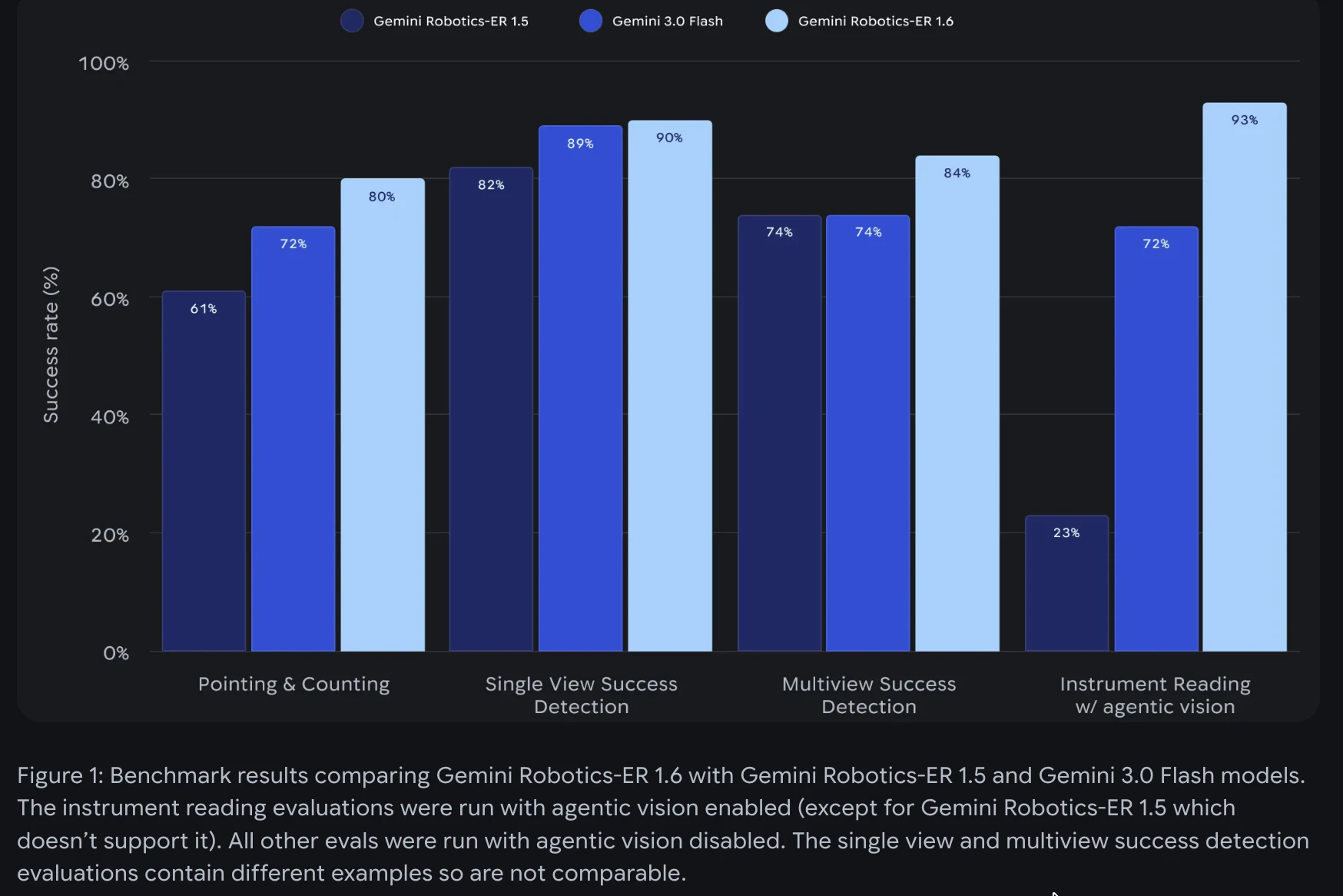

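One-line summary: Gemini Robotics ER 1.6 is a robotics model focused on visual and spatial reasoning. Through core capabilities such as spatial pointing, multi-view task success detection, and gauge reading, it offers a way to close the gap between "execution" and "verification" in settings such as industrial automation.

Robots

Artificial Intelligence

Robotics vision-language model

Spatial reasoning

Industrial automation

Embodied intelligence

Task success detection

Gauge reading

Robotics API

Physical agents

State verification

Robot operating system

User comment summary: Users rate it highly for filling the key gap between "executing instructions" and "autonomous reasoning" in robots, and regard spatial reasoning as a long-standing problem for physical AI. Substantive comments raise two key questions: how robust the model is in real factory conditions (rusty or dirty gauges, for example), and what real-time latency chained reasoning incurs on robot platforms such as Spot.

AI Sharp Take

The release of Gemini Robotics ER 1.6 is less a new product than a well-aimed move by Google to push its large-model capabilities into the physical world. Its real value is not a breakthrough in any single skill (such as the 93% gauge-reading accuracy) but the fact that it productizes closed-loop "vision-language-action" verification as a standardized API for the first time, going straight at industrial automation's chronic problem of "mute execution, manual checking".

The capabilities the model advertises, such as "spatial pointing" and "multi-view success detection", amount to giving the robot a "digital supervisor" with common sense and an understanding of task context. This marks a key step for robot AI from "open-loop script execution" to "closed-loop state awareness and decision-making". Its "native tool calling" and "chained reasoning" design goes further, trying to join the planning ability of large models to the robot's physical skills in modular fashion, with the ambition of becoming the "reasoning middleware" of robotics.

There is, however, a gulf between a slick demo and a harsh shop floor. The commenters' doubts about rusty gauges and real-time latency hit exactly the make-or-break issues for deploying this kind of VLM in industry: extreme robustness in unstructured environments, and whether the decision latency of complex reasoning chains is tolerable in real processes. In addition, wrapping such complex reasoning in an API certainly lowers the barrier to use, but it may also put the least controllable, most debugging-hungry part of the system, the "brain", inside a black box. For industrial settings that prize reliability and determinism, that is a double-edged sword.

All in all, ER 1.6 is a highly directional attempt that sketches the "self-verifying" future intelligent robots ought to have. Whether it succeeds depends not on lab accuracy but on whether it can reliably deliver millisecond-level answers in a real factory that is dim, oily, vibrating, and has almost no tolerance for error. The track it opens is exciting, but the hardest engineering climb has only just begun.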

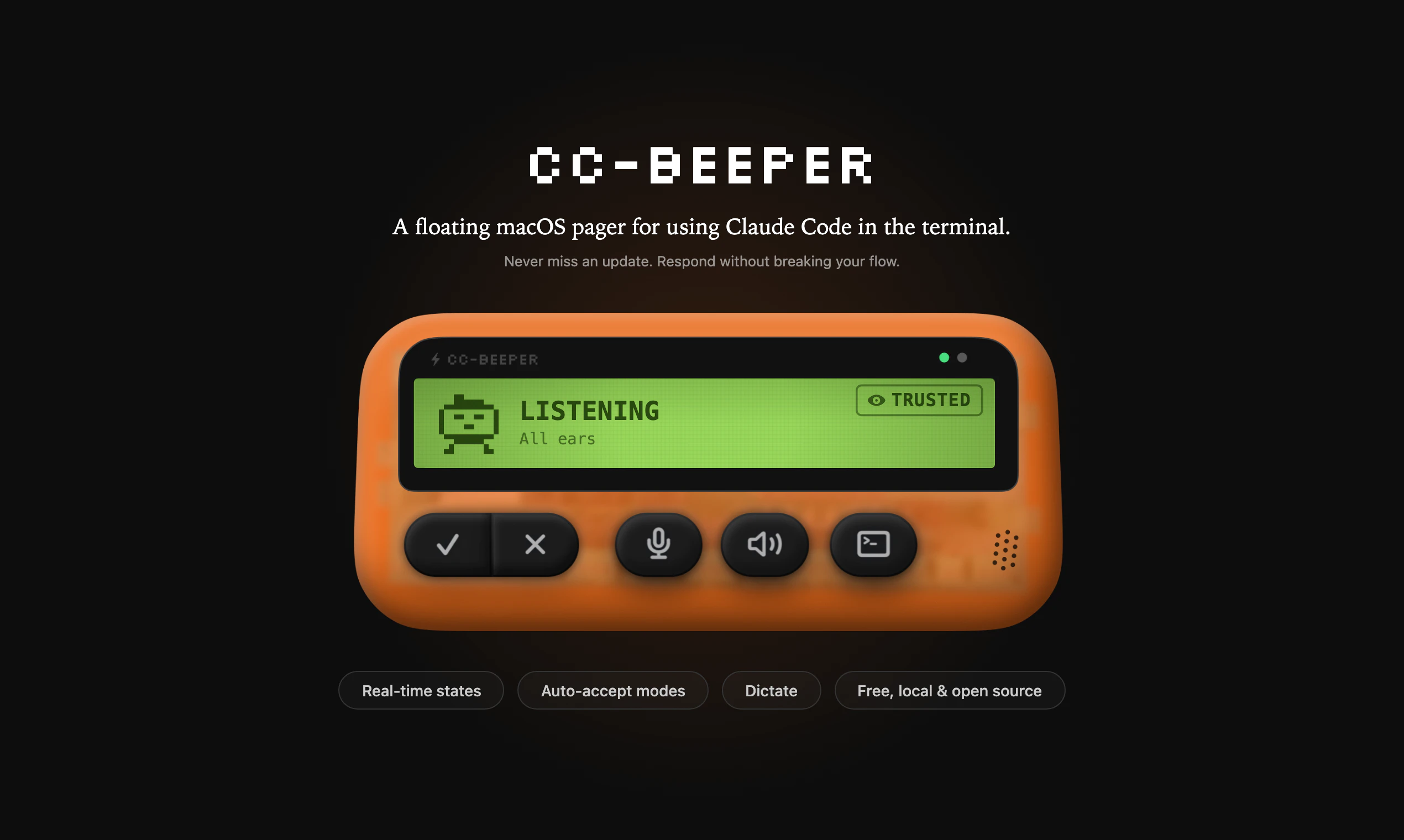

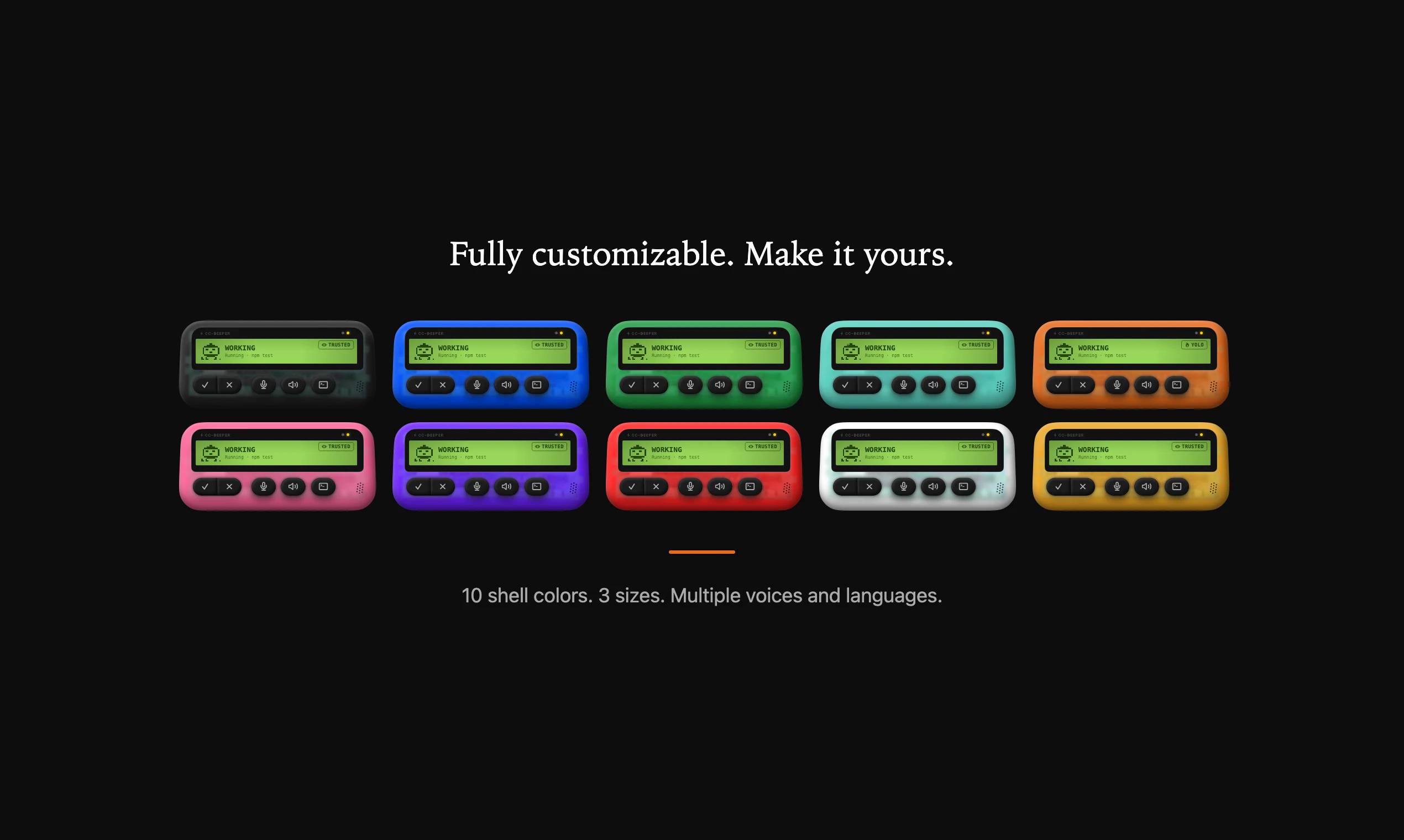

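One-line summary: A floating, pager-style macOS companion for Claude Code. With real-time status display and automated permission management, it removes the need for developers to keep watching a terminal process and frees up their attention.

Open Source

Developer Tools

Artificial Intelligence

GitHub

macOS productivity tool

Claude Code companion

Status monitoring

Automated permission management

Local app

Open-source software

Retro UI

Floating window

Developer tools

Productivity boost

User comment summary: Users like its design and usefulness, saying it solves the problem of "babysitting" the terminal. Feedback centers on the logic for showing status across multiple sessions, suggestions for color-coding statuses, demand for a Windows version, and discussion of low-level technical details (such as hook reliability and the granularity of status display). The developer responds actively and is candid about the technical limits.

AI Sharp Take

CC-Beeper is worth far more than a fancy desktop widget. It targets a new pain point that has quickly become central: the "attention tax" and "trust cost" of humans working alongside AI coding assistants. Once tools such as Claude Code embed AI actions deep in the development flow, developers face a dilemma: either switch context frequently to approve things in the terminal, or take the risk of a fully automatic mode. Through a minimal, always-on visual channel, the product broadcasts the AI agent's status (working, needs permission, done, error) to the user lightly but continuously. In effect it sets up a non-intrusive state-synchronization protocol for "human-AI pair programming".

Its four auto-accept modes, such as "YOLO" and "Strict", turn a fuzzy trust decision into a product feature, letting users adjust their level of intervention to the risk of the task, which is far more sophisticated than a simple on/off switch. Its limits are equally clear, though: as a hook-based third-party tool, the depth and reliability of its status capture are constrained by the interface design of the upstream (Claude Code), and it struggles to show fine-grained, tool-level status (such as reading a file or running a command), which is the core concern of technical commenters. It is more an elegant "symptom reliever" than a fundamental solution. The real future lies in AI assistants exposing richer status APIs themselves, or in an OS-level status bar for human-AI collaboration. Until then, CC-Beeper's distinctive retro metaphor and solid single-purpose solution deliver a great deal of user-experience value for this transition period, and it cleverly turns a technical monitoring problem into a desktop object with personality and emotional appeal.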
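The four statuses and the auto-accept modes described above can be pictured as a small state mapping. The Python sketch below is illustrative only and is not CC-Beeper's code: the event names are hypothetical, only the two modes named in the text are modeled, and their semantics (Strict always asks, YOLO never asks) are an assumption.

```python
from enum import Enum


class Status(Enum):
    WORKING = "working"
    NEEDS_PERMISSION = "needs_permission"
    DONE = "done"
    ERROR = "error"


class Mode(Enum):
    # Only the two modes named in the text; the other two are not specified.
    STRICT = "strict"  # assumed: every permission request waits for the user
    YOLO = "yolo"      # assumed: every permission request is auto-accepted


def next_status(mode: Mode, event: str) -> Status:
    """Map a (hypothetical) hook event to the status shown on the pager."""
    if event == "permission_request":
        # Auto-accept keeps the agent working; otherwise the user is paged.
        return Status.WORKING if mode is Mode.YOLO else Status.NEEDS_PERMISSION
    if event == "stop":
        return Status.DONE
    if event == "error":
        return Status.ERROR
    # Any other event (for example a tool starting) counts as ongoing work.
    return Status.WORKING
```

Because the mapping only sees coarse events, it also illustrates the limitation raised in the comments: without richer upstream hooks, nothing here can distinguish reading a file from running a command.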

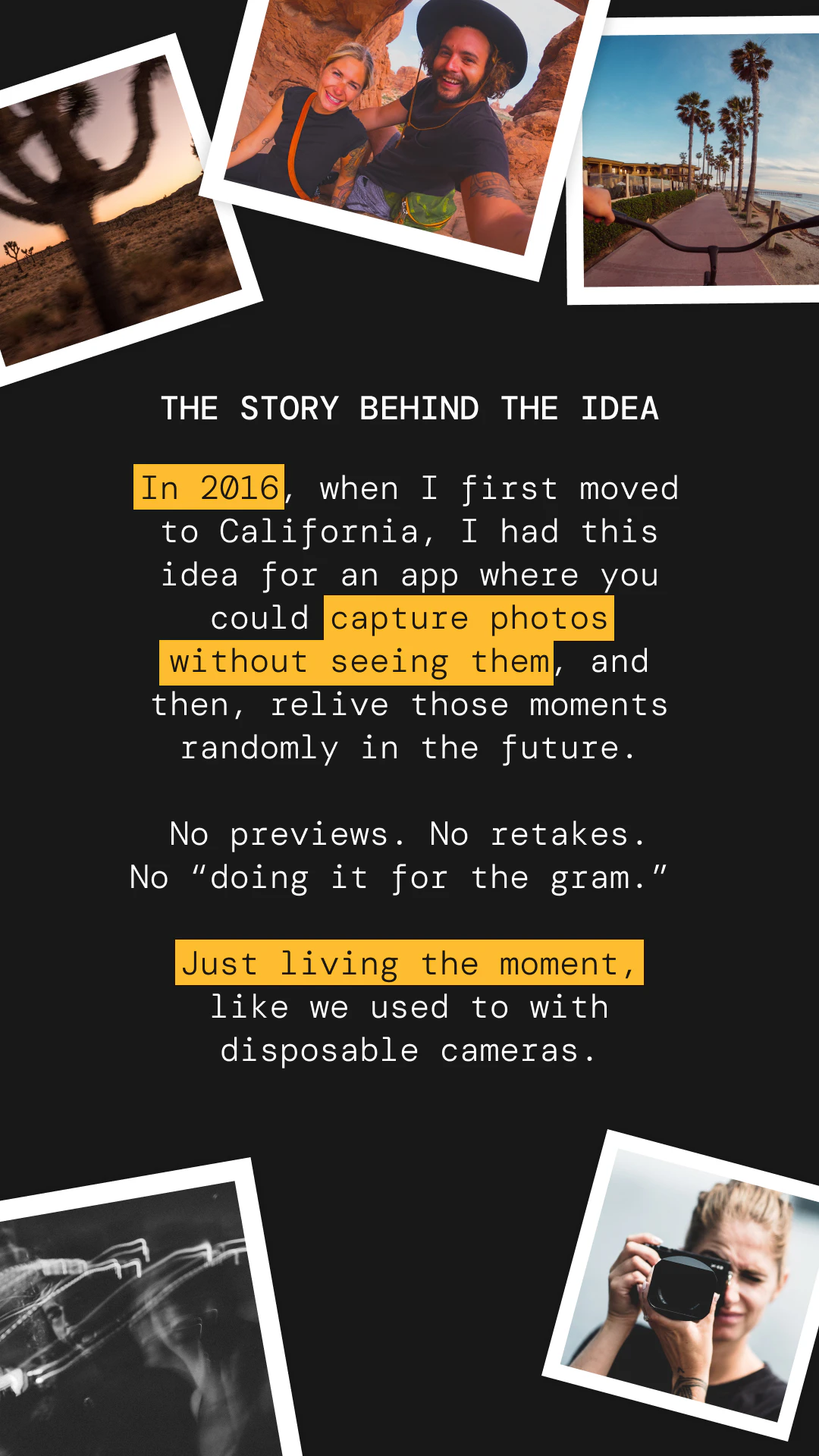

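One-line summary: Roll is a phone camera app that imitates the disposable film camera experience. By limiting each roll to 12 shots and delaying development (from a few weeks to a year), it helps users at social gatherings, on trips, and in similar settings reduce photo anxiety and over-shooting, and get back to the pleasure of shooting while staying in the moment.

Web App

Photography

Health

Film-simulation photography

Constrained shooting

Delayed gratification

Digital wellbeing

Nostalgic design

Minimalist camera

mindful tech

Social memories

Asynchronous sharing

Intentional photography

User comment summary: Users broadly appreciate the core "constraints breed focus" experience and feel it effectively counters "performative photo-taking" and the anxiety of checking shots immediately. Questions and suggestions center on: collaborative shooting (several people sharing one roll), a native Android app, user behavior data (such as shooting cadence), the possibility of early previews, and basic features such as grid lines.

AI Sharp Take

On the surface Roll sells retro nostalgia and the surprise of delayed gratification, but its real edge is a deft "correction" to the alienation of modern digital photography. In an age of algorithms, unlimited burst shots, and instant beautification, it treats "constraint" as the core feature variable, in effect trading "convenience" for "ritual" and "intentionality". This is not a simple step backward in features; it deliberately cuts off "photo-taking as performance" and the instant "shoot-review" loop.

Its value is not in replacing the mainstream camera but in creating a "situational" way of shooting: it positions itself as a "moment camera" that naturally fits time-bounded social containers such as trips and parties. The phrases "staying in the moment" and "less anxiety", which appear often in the comments, confirm that it hits the "digital overload" pain point for some users. That is also where its long-term challenge lies: can a "shooting ritual" that requires considerable self-discipline and a pre-set context turn from a novelty into a stable habit? Will the surprise of delayed viewing fade with repetition?

The "collaborative shooting" direction the founder mentioned is a smarter extension: it turns a personal ritual into a social contract and could improve both retention and word-of-mouth reach. But the team should guard against feature creep that would undermine its minimalist philosophy. Roll's lesson is that, outside the red ocean of feature piling, doing "subtraction" and introducing a "time variable" can still open up space for digital products with emotional depth and room for reflection. Whether it grows from a niche "digital breath of fresh air" into a sustainable business depends on whether it can keep its core restraint while building a richer asynchronous social experience around "shared moments".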

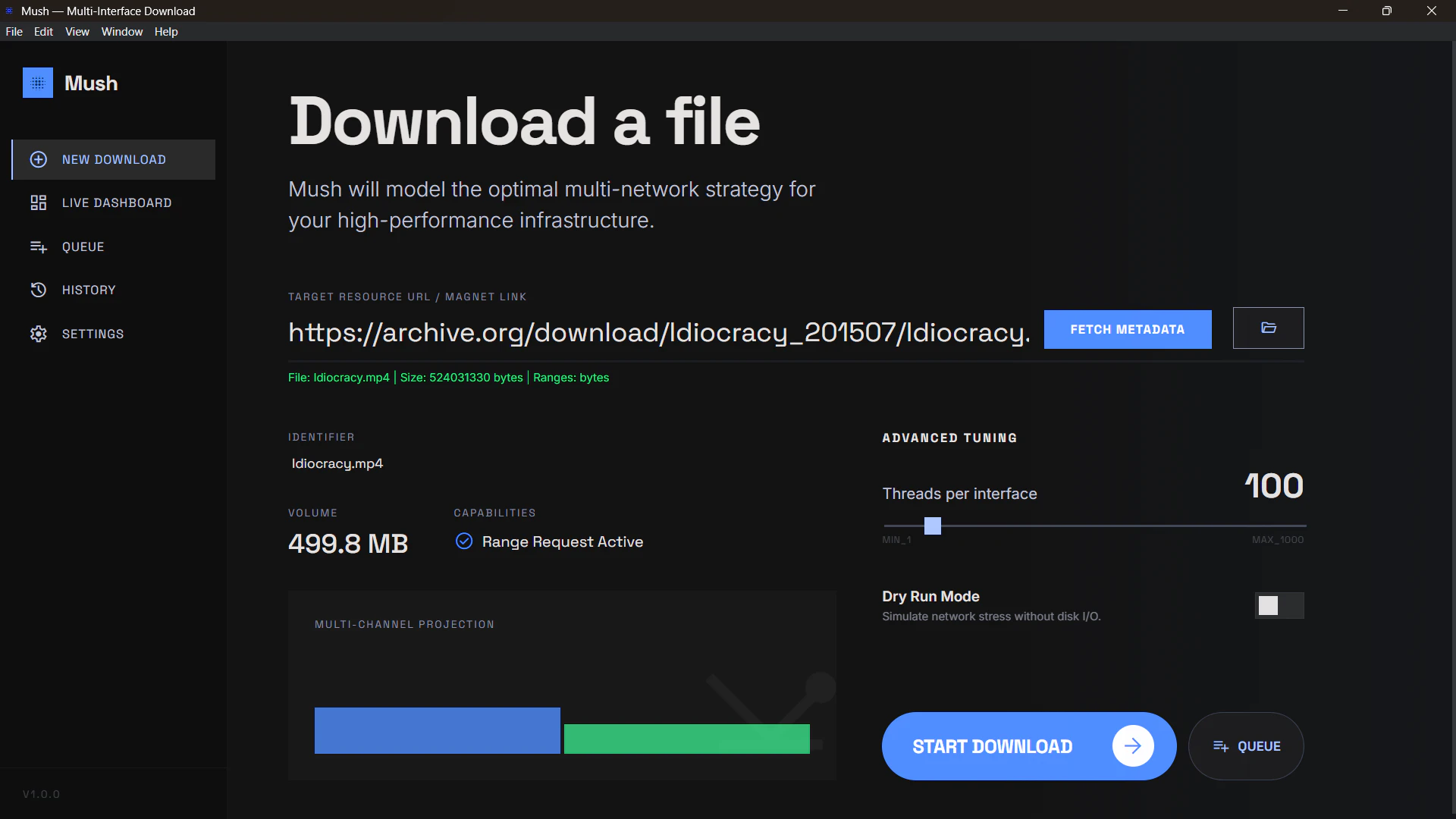

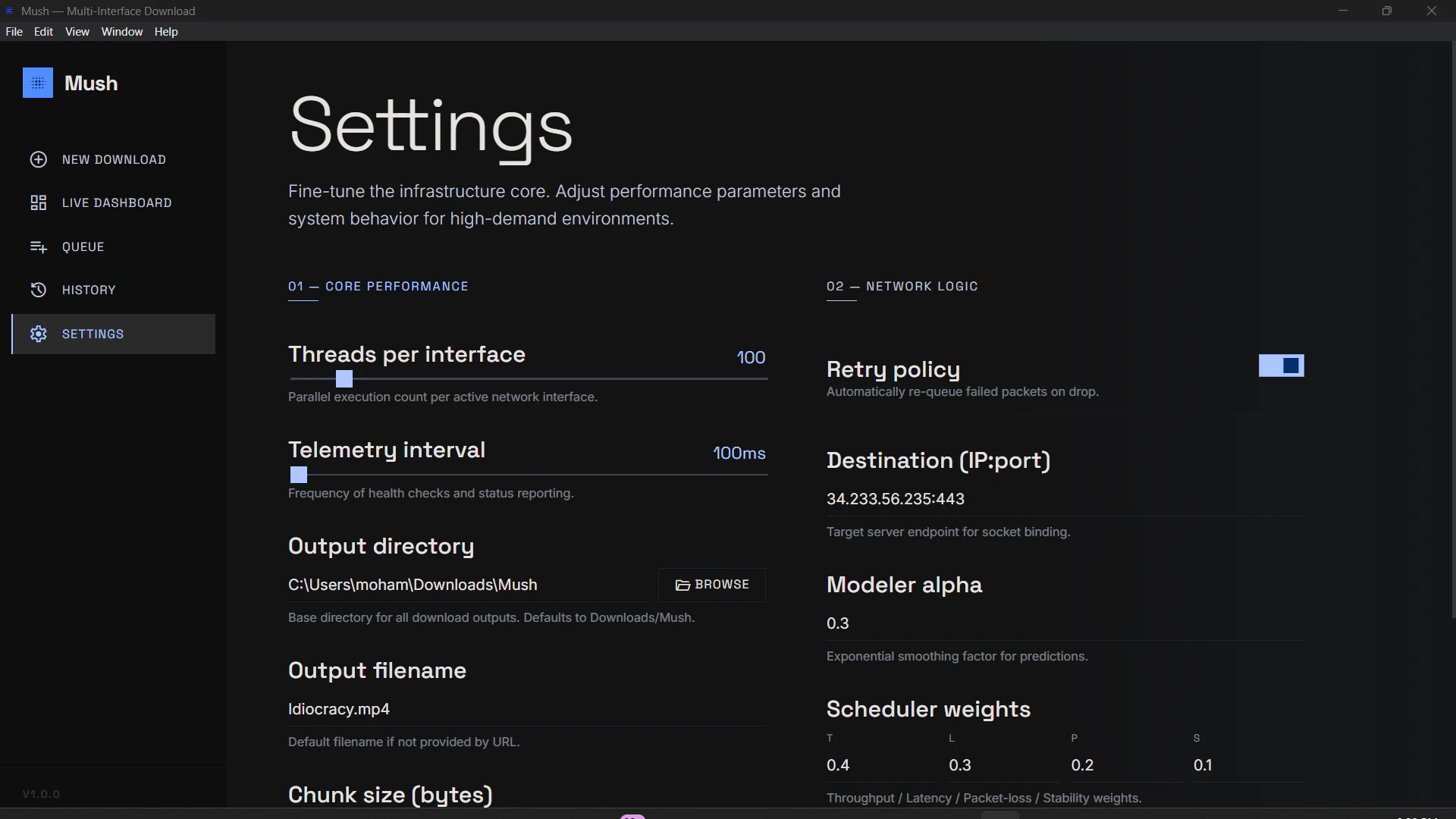

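One-line summary: Mush is a multi-interface download engine that distributes file chunks in parallel across networks such as Wi-Fi, Ethernet, and 5G, removing the bandwidth bottleneck of a single network and markedly speeding up large file downloads.

Productivity

Software Engineering

Development

Network acceleration

Multipath transfer

Download tool

Bandwidth aggregation

HTTP/BitTorrent support

Network optimization

Parallel downloads

Windows/Linux app

Beta software

Developer tools

User comment summary: Users acknowledge the performance gain (an HTTP download cut from 10 minutes to a matter of seconds), ask how reliability and integrity are guaranteed in complex situations (authentication, CDN rate limiting, network jitter), discuss how it relates to Multipath TCP and the limits on its general applicability, and ask about platform availability.

AI Sharp Take

At its core, Mush is a clever application-layer hijack of "multihomed" network connectivity. It sidesteps Multipath TCP, which needs end-to-end support, and uses file chunking and parallel scheduling to force a single data stream across multiple physical interfaces. As a technical path this is pragmatic, but it is also a compromise.

Its real value is not that it creates new bandwidth but that it squeezes use out of network resources that would otherwise sit idle. For users with multiple network adapters (a laptop on Wi-Fi and Ethernet at once, for example) or who can reach cellular and fixed-line networks simultaneously, it really can combine the pieces into a whole, with immediate effect on large HTTP files and on BT downloads that have a reasonably healthy swarm. The test figures reported by the developer bear this out.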
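The chunk-and-schedule idea can be sketched without any network code. The Python function below is not Mush's scheduler; it is a simplified, assumed planner that splits a file into byte ranges (the kind an HTTP `Range` request would carry) and hands each range to whichever interface currently has the lowest load relative to its weight. The names `plan_chunks` and `weights`, and the use of static throughput weights, are illustrative assumptions.

```python
from typing import Dict, List, Tuple

ByteRange = Tuple[int, int]  # inclusive start and end offsets


def plan_chunks(file_size: int, chunk_size: int,
                weights: Dict[str, float]) -> Dict[str, List[ByteRange]]:
    """Assign byte ranges to interfaces in proportion to their weights.

    `weights` maps an interface name to a positive relative throughput
    estimate (an assumption; a real engine would measure this live).
    """
    ranges = [(start, min(start + chunk_size, file_size) - 1)
              for start in range(0, file_size, chunk_size)]
    plan: Dict[str, List[ByteRange]] = {name: [] for name in weights}
    assigned = {name: 0 for name in weights}  # bytes given to each interface
    for rng in ranges:
        # Greedy choice: the interface with the least weighted load so far.
        name = min(weights, key=lambda n: assigned[n] / weights[n])
        plan[name].append(rng)
        assigned[name] += rng[1] - rng[0] + 1
    return plan
```

A static plan like this ignores exactly the hard cases raised in the comments (an interface dropping mid-transfer, servers that throttle per IP), which is why a production engine would need to reassign ranges dynamically and verify integrity after reassembly.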

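Its ceiling is also obvious, however. First, it relies heavily on the server not enforcing strict per-IP or per-connection rate limits. Against CDNs that now commonly use smart throttling and identity binding, or private download sources that require authentication, the speedup may shrink sharply or even trigger a ban. Second, multiple interfaces add a lot of complexity: uneven connection stability, IP address changes, the overhead of reassembling data, and integrity checking are all potential risks. The commenters' question about "the hardest HTTP cases to handle" hits the core weakness in its path to commercial deployment.

Mush is therefore more of a "special-purpose tool" built for particular conditions (friendly servers, several stable interfaces, and a need for burst speed that outweighs absolute reliability) than a general replacement for conventional downloading. Its arrival is less a disruption than a pointed patch for the existing network stack's shortcomings in flexibility and resource utilization. Its prospects depend on whether it can find a finer balance between aggressive acceleration and robust compatibility.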

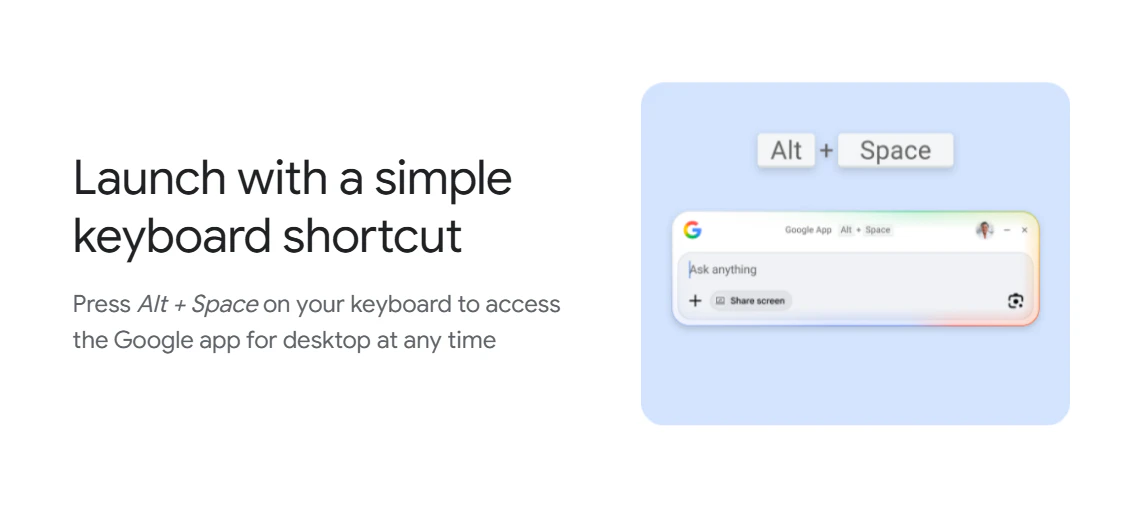

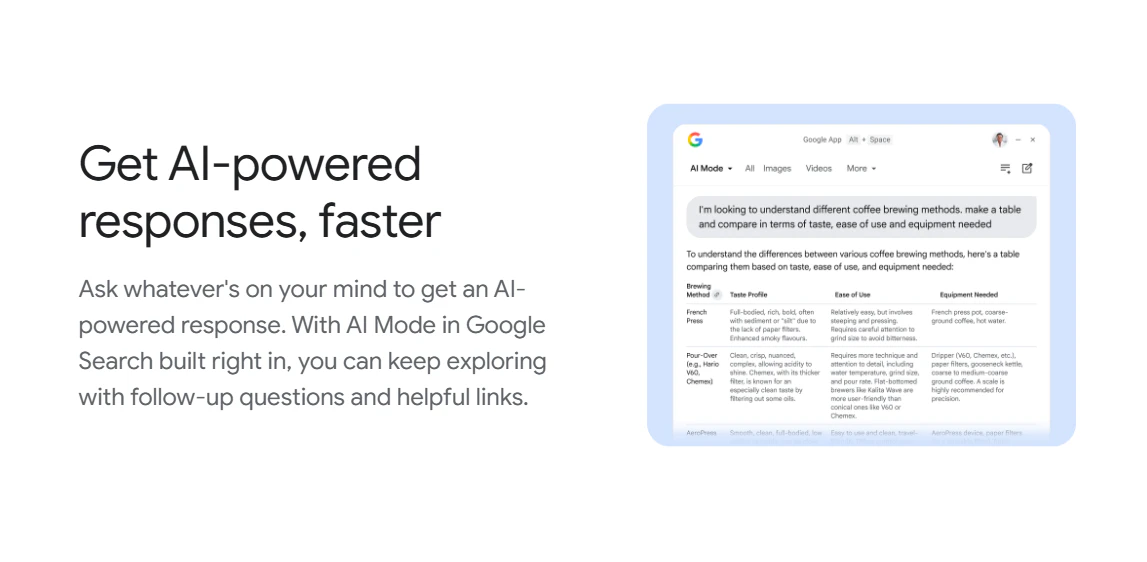

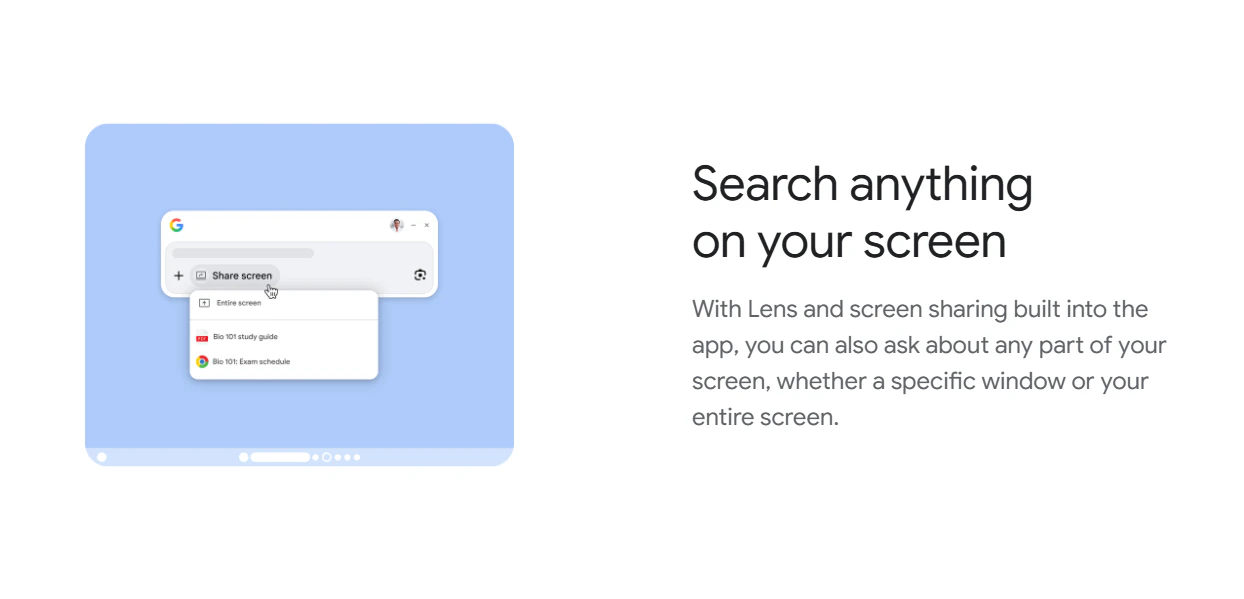

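One-line summary: A desktop search app that brings up a search box with a keyboard shortcut (Alt+Space) and combines web search, local files, apps, Google Drive, and AI chat, aiming to make finding and processing information more efficient across many contexts.

Search

Desktop search tool

AI search assistant

System-level integration

Shortcut launch

Google Lens

Multi-source search

Productivity tools

AI application

User comment summary: Positive comments appreciate the convenient global search and AI integration. Negative feedback centers on: 1. a bare-bones interface and basic features, with a worse experience than competitors (such as Claude Desktop); 2. the built-in Gemini AI (Live mode) giving mechanical responses and lacking depth in conversation and research, which users find disappointing.

AI Sharp Take

Google's new desktop app is essentially another strategic extension of its mobile "search box hegemony" onto the desktop operating system. Its core value is not technical innovation but **ecosystem integration and control of the entry point**. With a single shortcut it frees users entirely from the "open browser -> go to the Google homepage" path and tries to become the first and lowest-level query entry point on the PC.

The sharp criticism in user feedback, however, exposes a deeper difficulty Google has in turning AI into products. The app is derided as a "bare-bones chat box", and Gemini Live's interaction is called stiff, which shows that Google's "last mile" problem of turning AI capability into a smooth, reliable user experience remains serious. At a time when competitors such as ChatGPT and Claude have used standalone desktop apps to build immersive, highly agentic experiences, this product looks like a hasty defensive move: it rushes to bundle scattered strengths such as AI search, Lens visual search, and local and cloud file retrieval, while neglecting the polish of the integrated experience and the emotional design of AI interaction.

Its real challenge is that, as search evolves from "information retrieval" toward "task execution" and "deep collaboration", the value of a mere "quick entry point" is declining. Users need an agent that can understand complex intent, discuss over multiple turns, and proactively solve problems. For now the app looks more like a "shortcut" that aggregates features than a "new species" born of smarter technology. Its success will depend not on the length of its feature list but on whether its built-in AI can move past mechanical answers, truly understand desktop context, and become a seamless extension of the user's thinking. Otherwise it is likely to be just another system tray icon that a shortcut wakes up and the user quickly forgets.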

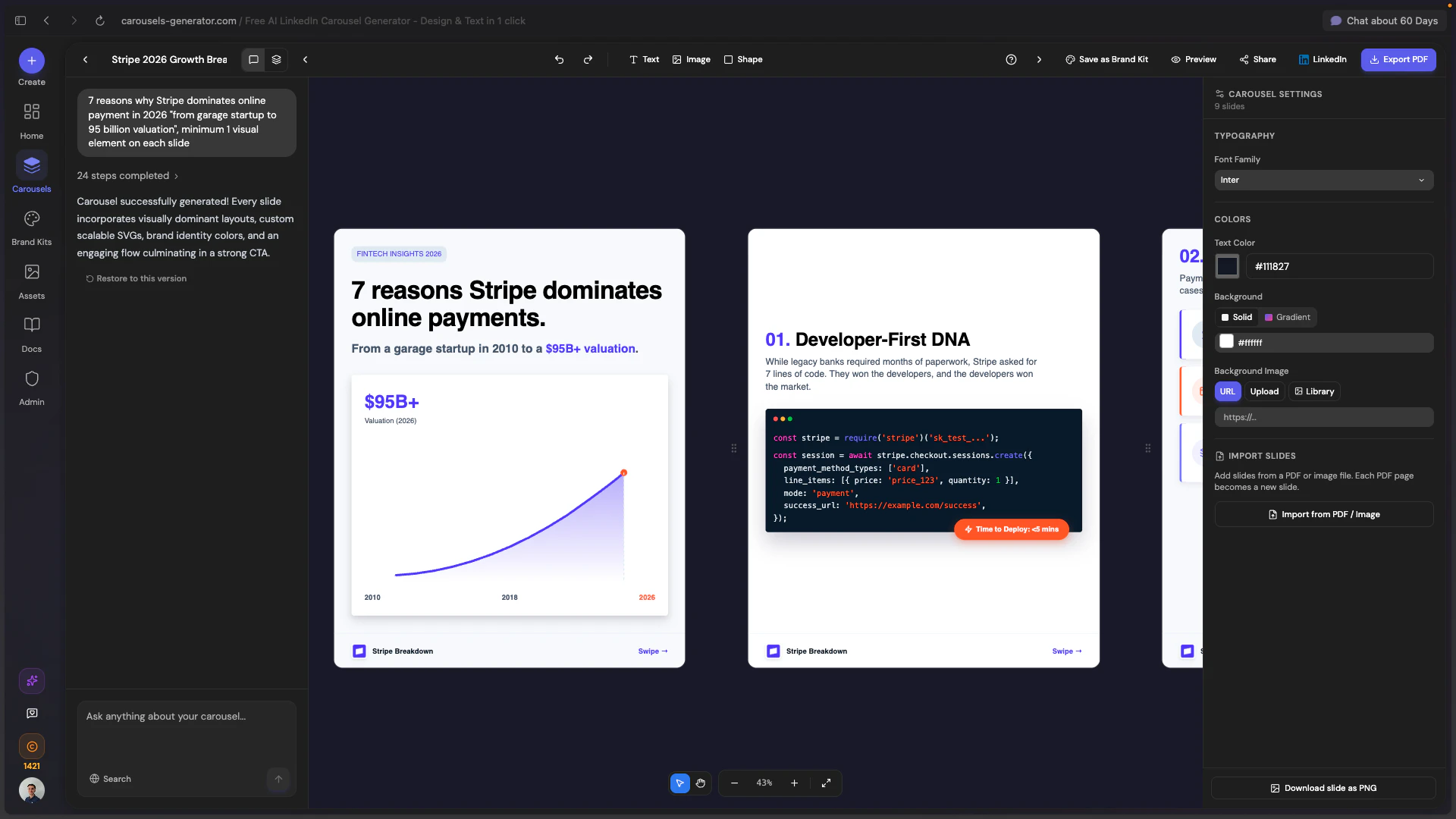

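One-line summary: An AI-powered tool that takes a simple prompt or a URL and automatically generates a complete LinkedIn carousel post, copy and design included, addressing the time and effort that marketers, founders, and other content creators spend producing professional content.

Design Tools

Social Media

Artificial Intelligence

AI content generation

LinkedIn marketing

Brand design automation

Social media tools

Smart layout

One-click publishing

Brand kit

Productivity tools

SaaS

No design skills required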

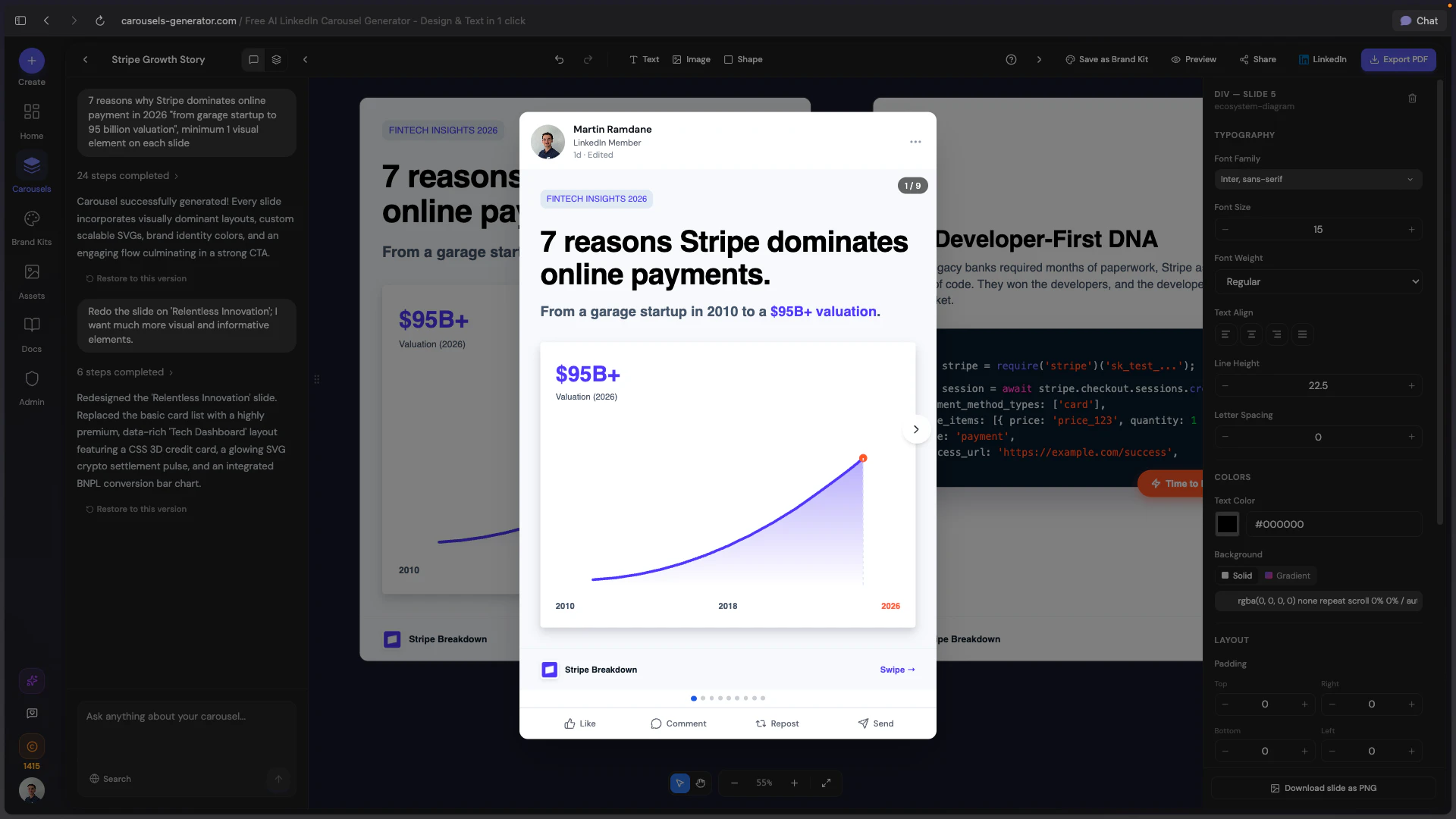

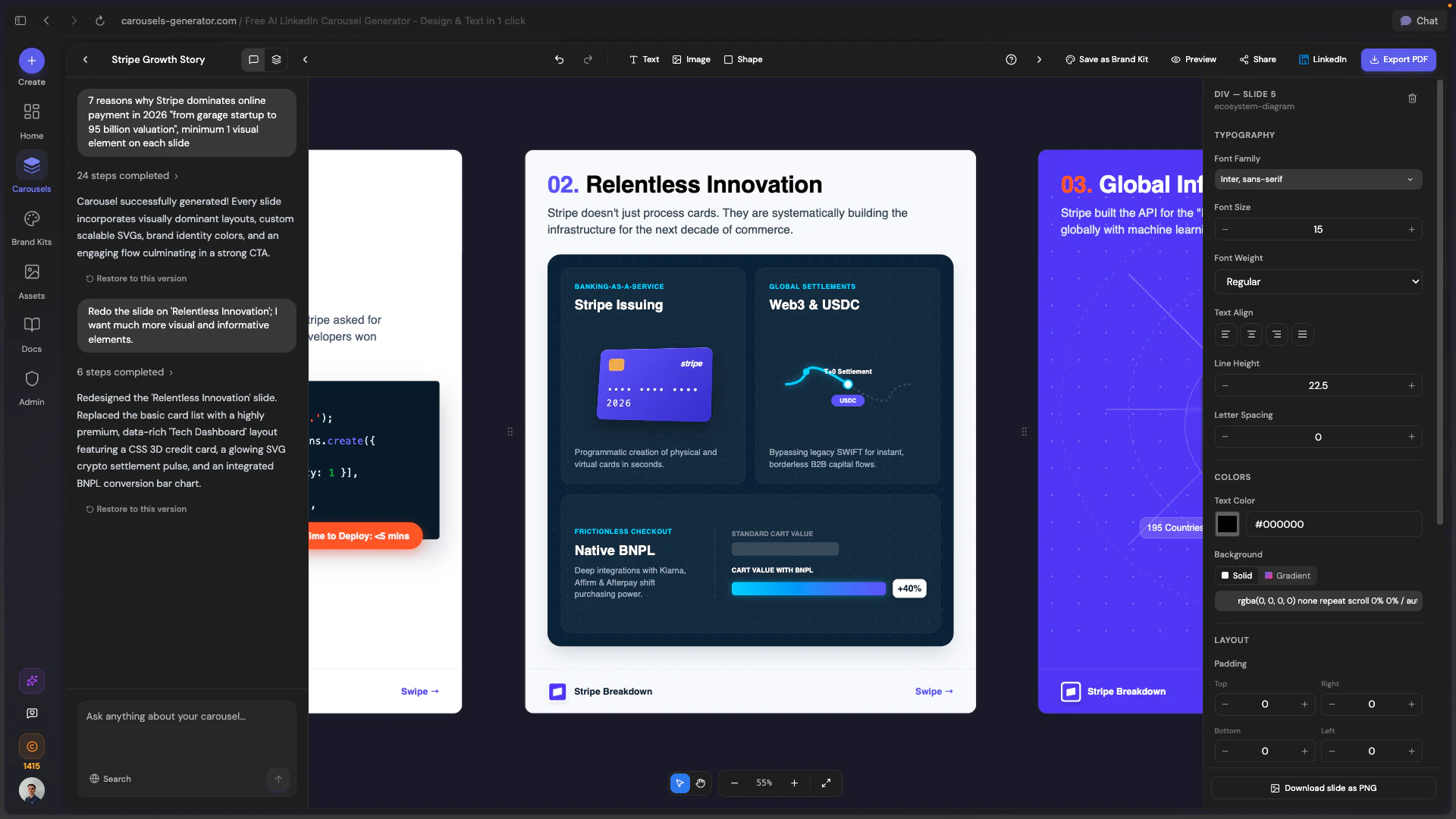

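User comment summary: Users broadly praise the automatic "Brand Kit" import as a practical breakthrough. The main questions concern whether generated content is usable straight away, how differentiated the branding is, and plans to expand to platforms such as Instagram. The team replies actively, describing iterative refinement through AI chat and uniqueness grounded in brand assets.

AI Sharp Take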

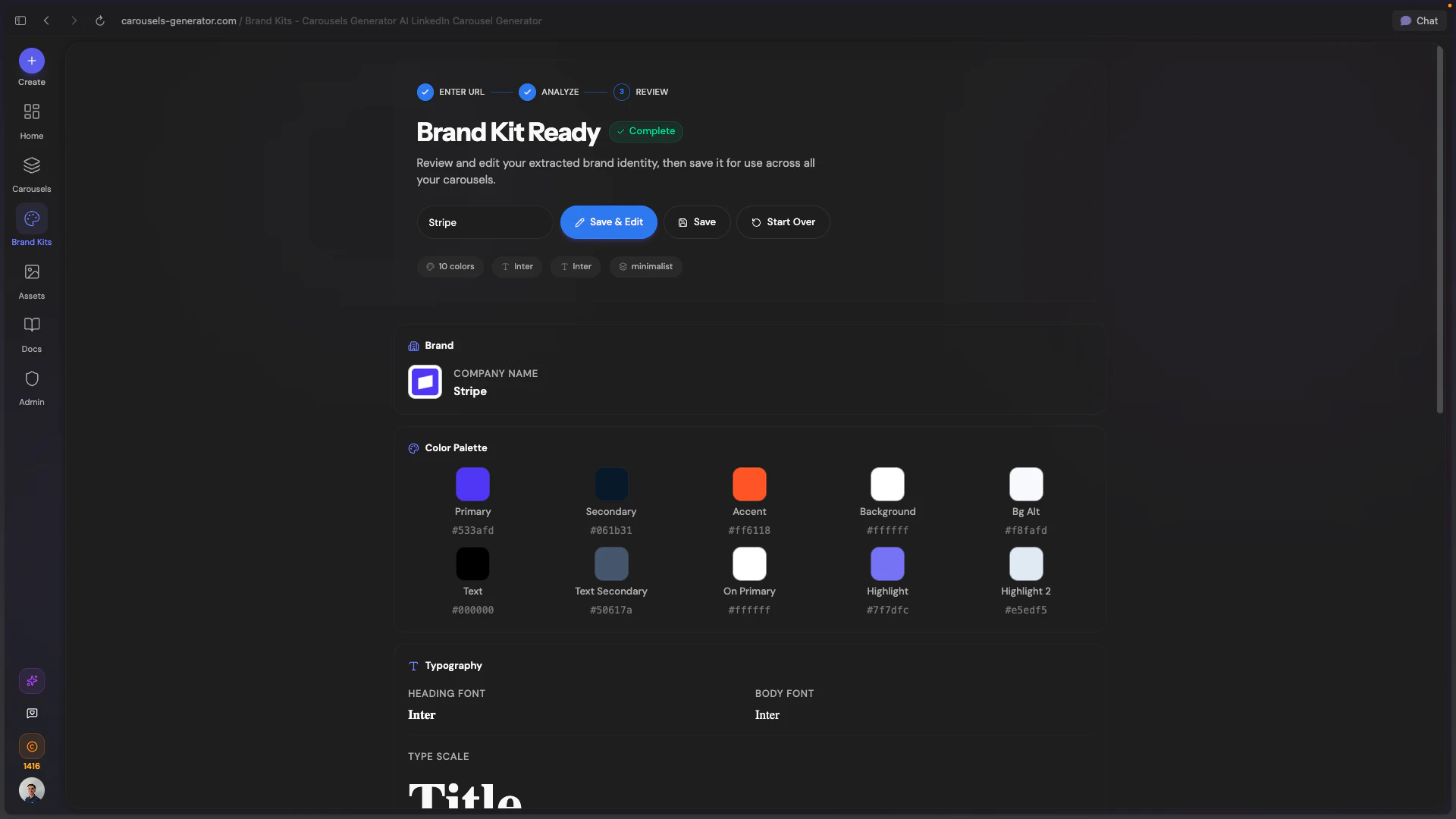

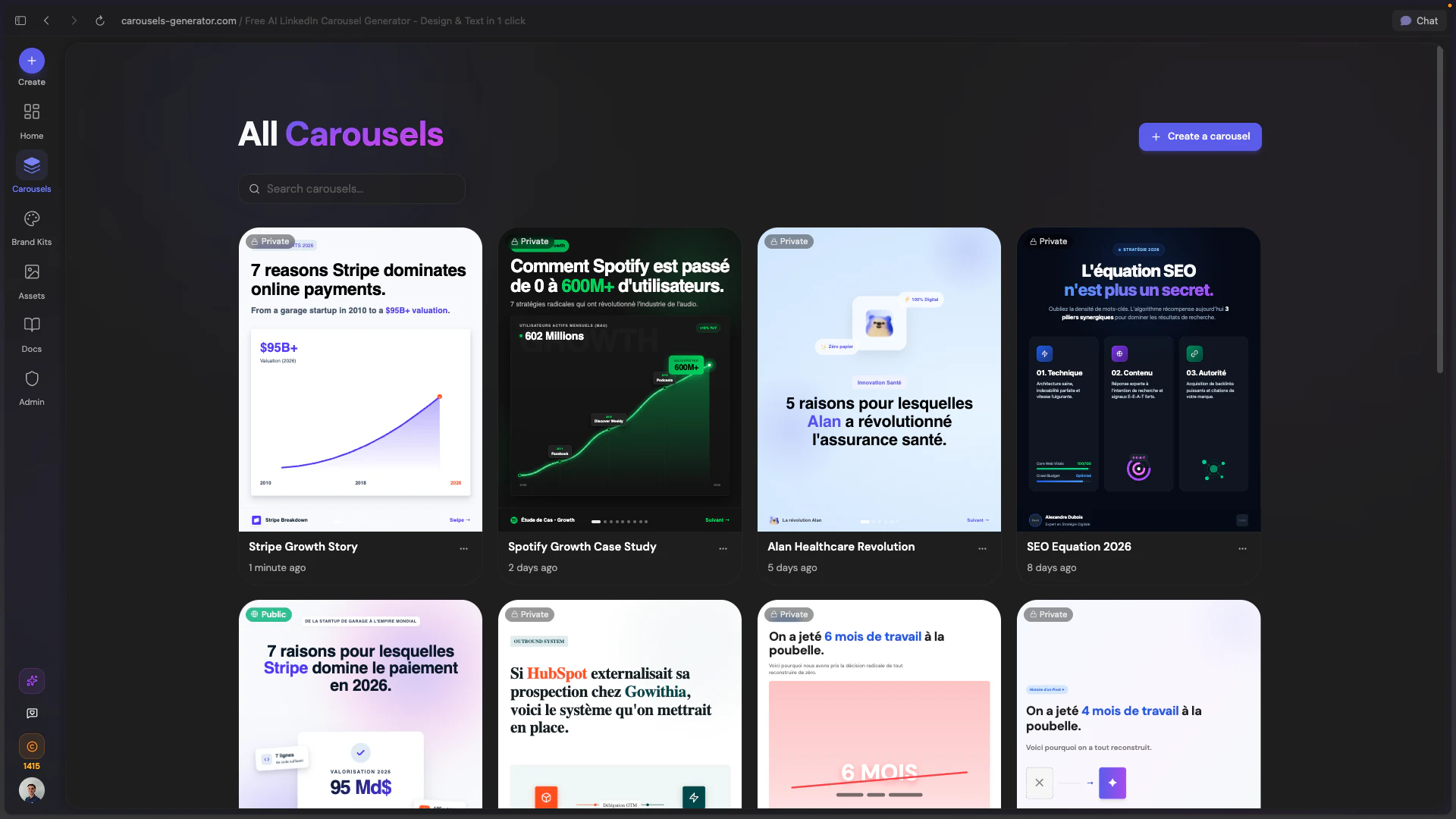

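Carousels Generator's ambition goes well beyond being yet another "AI makes slides" tool. Its real value lies in trying to use technology to package and standardize the abstract notion of a "brand visual identity system": it parses a URL to extract colors, fonts, and logo automatically, turning brand assets into data parameters the AI can call on. The step looks small but is sharp, because it goes straight at the most tedious and most easily overlooked "manual alignment" stage between a content idea and on-brand execution.

The product wisely avoids head-on competition with Canva and Figma over freedom of graphic design, focusing instead on LinkedIn carousels, a vertical, high-frequency format with a strong need for a professional image. What it offers is not an infinite canvas but a "content structure" trained on data about what works: a hook, one idea per slide, pacing control, and a call to action. That ensures the output is "platform-native" in form, but it also plants a worry: when all good content follows the same AI-distilled best practices, homogeneous competition moves up from "design templates" to "content-structure templates". The team answers with "differentiation through brand assets", but can different colors and fonts offset similar narrative logic and pacing? It will need to keep answering that question over the long run.

The team's interactions in the comments reveal a more important signal: the product is quickly evolving into "a content workflow with AI chat as its core interaction". The user is no longer just someone who enters a prompt but becomes a "creative director" who fine-tunes the first draft with natural-language instructions. This "generate-converse-iterate" loop turns the tool from a one-shot output engine into an ongoing creative partner and greatly increases the user's sense of control and satisfaction with the result, which may be the key to retention and differentiation among the many AI content tools.

In essence, it sells not slides but "compressed time" and "guaranteed brand consistency". For one-person marketing teams or small and medium-sized businesses, that converts directly into measurable cost savings and efficiency gains. The challenge is to keep deepening the AI's understanding of a brand's "soul" (not just its visual guidelines) without sacrificing generation speed, so that it produces content that is truly distinctive rather than merely correctly formatted filler.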

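One-line summary: Defter Notes 2.0 is a note-taking app built around spatial handwriting. By recreating the clutter and freedom of a real desk on an infinite canvas, it addresses the way linear or structured note-taking tools constrain creative thinking, drafting, and handwritten notes in a digital environment.

iPad

Productivity

Apple

Note-taking app

Handwritten notes

Spatial computing

Infinite canvas

Creative tools

iPad productivity

Apple ecosystem sync

Digital stationery

Unstructured notes

User comment summary: Users endorse the "spatial + handwriting" concept and feel it captures the advantages of thinking on paper. The main questions: what its core differences are from apps such as GoodNotes and Muse; where its spatial organization principles come from; and one unrelated question about LinkedIn marketing features.

AI Sharp Take

The "spatial-thinking handwriting" tagline of Defter Notes 2.0 points at a deep tension in today's digital note-taking market: productivity tools taming free thought. It does not compete on the single dimension of "handwriting experience" or "infinite canvas" but tries to fuse the two and rebuild the "paper" metaphor for the digital age, upgrading from "a sheet of paper" to "a whole desk".

Its real value is that it challenges the underlying logic of "notes as data". Mainstream note apps are built around capture, classification, and retrieval, and aim to structure thought. Defter Notes instead serves the process by which thoughts arise, emphasizing the memory anchors and creative connections that come from tying handwriting to spatial position. That targets an overlooked niche: creators, researchers, and brainstormers who rely on visual-spatial intelligence to think.

The challenges it faces are just as sharp. The comments show users confused about how it differs from dedicated handwriting apps and infinite whiteboard tools. That exposes fuzzy market positioning: is it an upgrade to handwritten notes, or a specialization of the free-form canvas? Its "hybrid positioning" is both a strength and a risk. If it cannot build a steep enough experience barrier in either "handwriting fluency" or "spatial organization", it can easily be squeezed by the function-focused apps at both ends into an "interesting supplementary tool" rather than a "main workspace".

Being featured by Apple certainly brings a halo, but long-term survival depends on whether it can turn the emotional appeal of "simulating the messiness of a desk" into indispensable digital infrastructure for the user's "flow of thinking". It is selling a philosophy of thinking rather than features. Whether it succeeds will be a key test of how deep market demand for "unstructured digital tools" really is.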

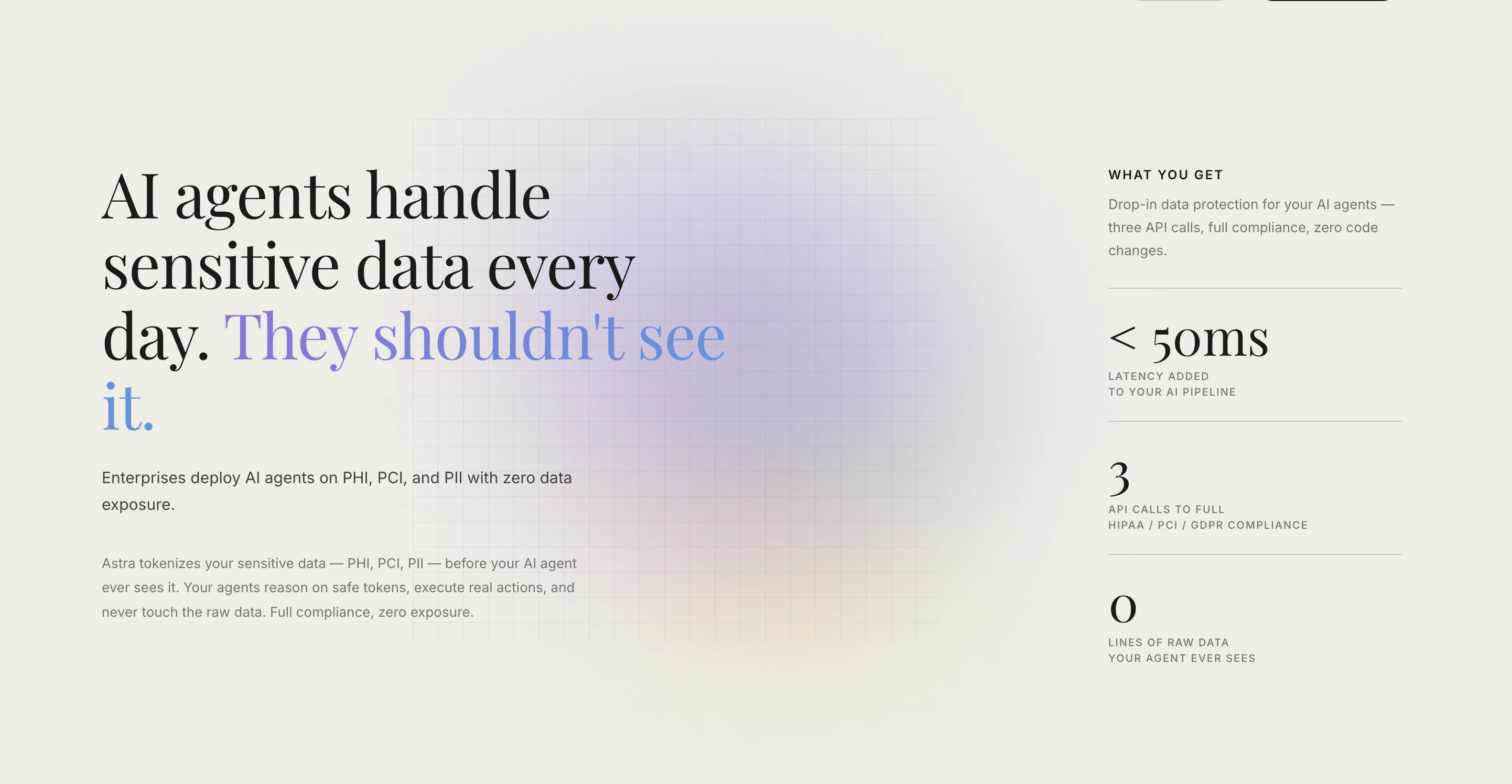

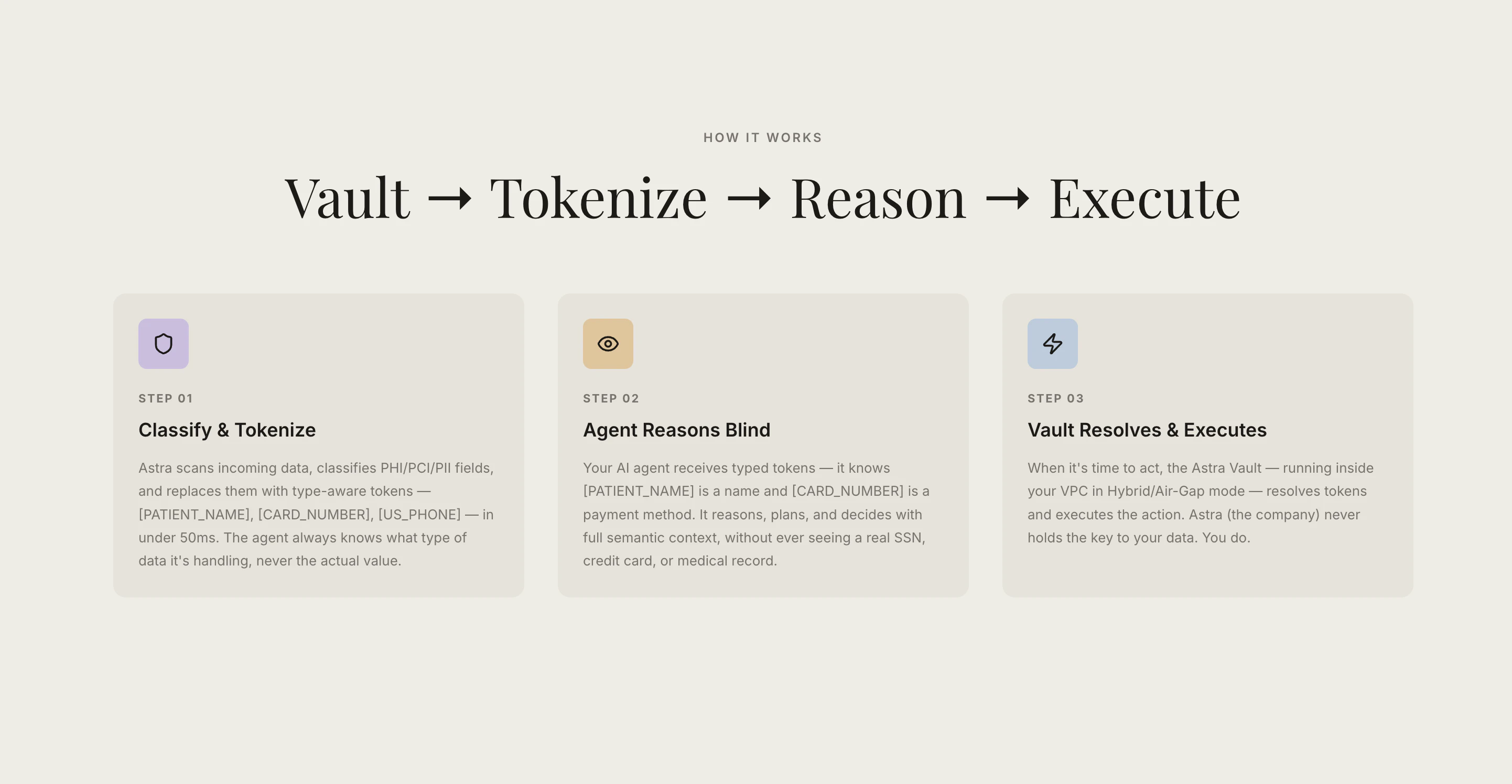

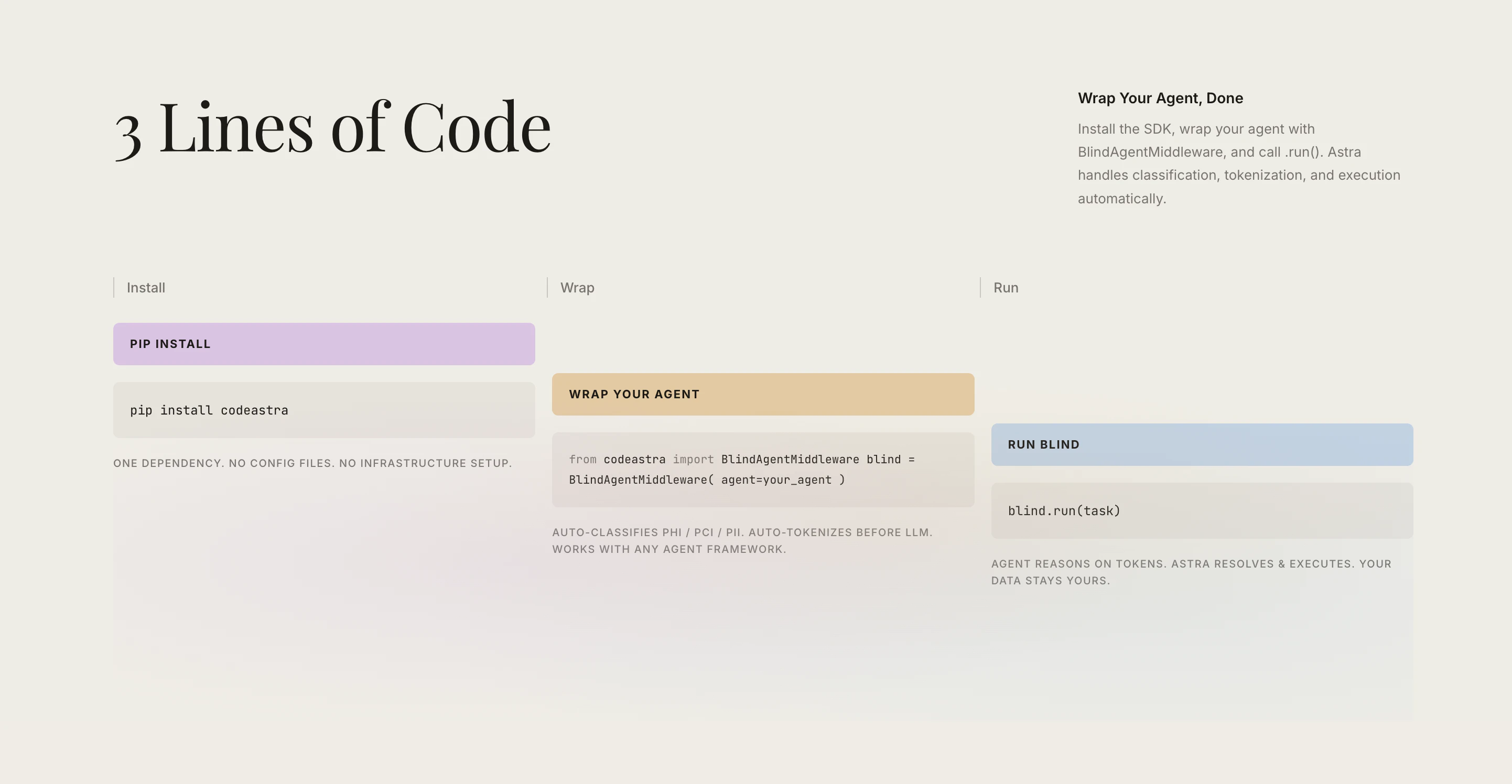

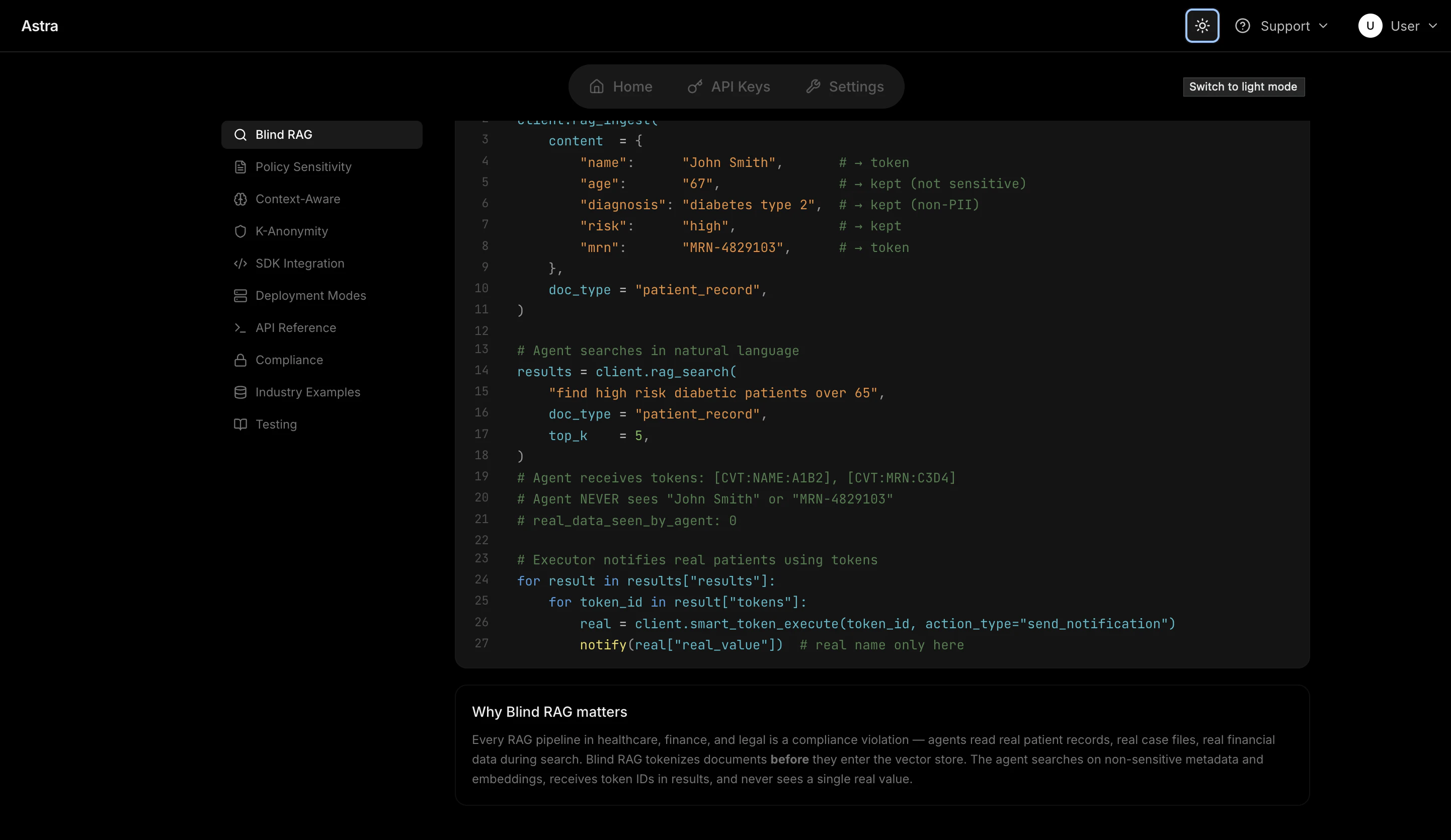

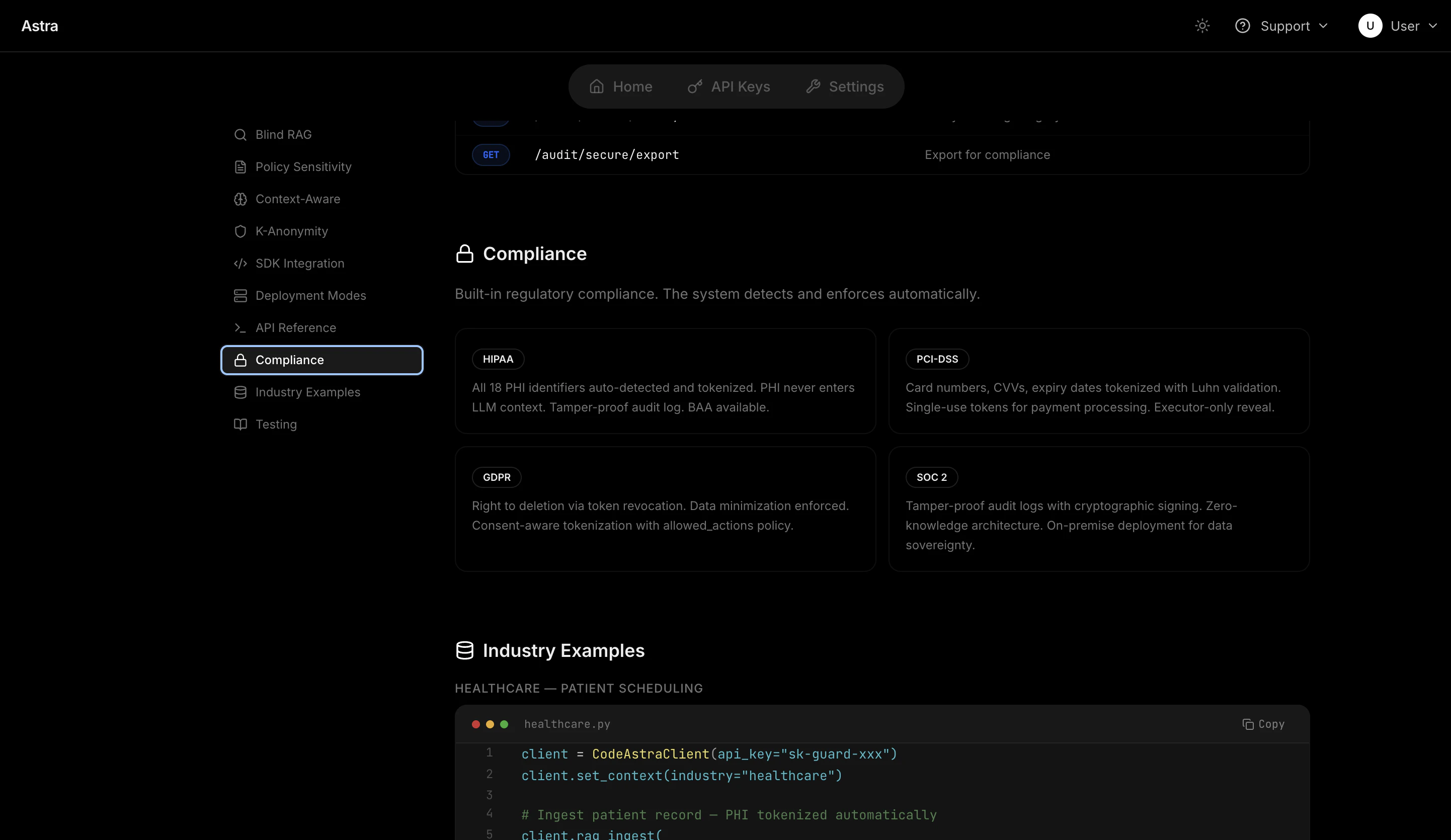

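One-line summary: Astra is data-privacy middleware for AI agent development. It tokenizes sensitive information (such as PHI, PCI, and PII) before prompts reach the model, so agents can reason and act without touching the raw data, addressing the data-leak and compliance problems of building AI applications in heavily regulated industries such as finance and healthcare.

SaaS

Developer Tools

Artificial Intelligence

AI privacy and security

Data tokenization

Agent development

Compliance

Sensitive data protection

Middleware

Data masking

Enterprise AI

Privacy computing

Agent frameworks

User comment summary: Feedback centers on technical details and industry use. The founder explains the "vault" mechanism, under which raw data is not stored outside the vault; users ask about specifics such as dynamic data resolution, integration with existing IAM, and audit-log handling; users in finance see value in it for handling sensitive financial models; and the discussion suggests adoption is usually triggered by a data breach, a compliance review, or a contractual requirement.

AI Sharp Take

Astra's ambition is not to build a more refined "filter" but to rework the underlying logic of how agents interact with data. Its core value is not simple "masking"; by separating "tokenization" from "resolution at execution time" in the architecture, it decouples data visibility from functional usability, two things traditionally at odds. That goes straight at a core pain point in enterprise AI adoption: in heavily regulated fields, teams either sacrifice functionality (crude masking that breaks workflows) or accept risk (raw data exposed in model context and logs).

The technical Q&A in the comments shows a thoughtful design: audit logs record only tokens and "reveal" actions, while raw data is isolated in a separate, controlled "vault". This not only meets the formal requirements of compliance (the audit log itself contains no sensitive data) but also builds, in substance, a data path with minimal exposure. In effect it inserts a policy-based, verifiable "zero trust" layer between the AI application layer and the data layer.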
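The tokenize-then-reveal split described above can be illustrated with a toy vault. This Python sketch is not Astra's API: the `Vault` class, the `redact_prompt` helper, the token format, and the single SSN-style regex are all hypothetical stand-ins, and a real detector would cover far more than one pattern.

```python
import re
import uuid
from typing import Dict, List

# Toy detector: US SSN-shaped strings only (an assumption for illustration).
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


class Vault:
    """Hypothetical vault: raw values live here; logs only ever see tokens."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}
        self.audit_log: List[str] = []

    def tokenize(self, value: str) -> str:
        token = f"tok_{uuid.uuid4().hex[:8]}"
        self._store[token] = value
        self.audit_log.append(f"tokenize {token}")
        return token

    def reveal(self, token: str, actor: str) -> str:
        # The reveal action is logged with the token, never the raw value.
        self.audit_log.append(f"reveal {token} by {actor}")
        return self._store[token]


def redact_prompt(prompt: str, vault: Vault) -> str:
    """Replace sensitive matches with tokens before the prompt reaches a model."""
    return SSN_PATTERN.sub(lambda m: vault.tokenize(m.group()), prompt)
```

In this arrangement the model reasons over `tok_...` placeholders, and only a tool call that genuinely needs the value asks the vault to reveal it, so both the prompt and the audit trail stay free of raw data.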

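Its real challenge, however, is winning over the ecosystem and developer mindshare. The product claims "two lines of code to integrate with any framework", but can an enterprise's existing data governance, permission systems, and operations processes absorb this new paradigm smoothly? When the question shifts from "how do we stop data from being seen" to "how do we reason and debug efficiently on tokens", developers and operations teams need new understanding and skills. Astra's success will depend on whether it can evolve from an "excellent security patch" into the default data-privacy architecture of the agent era. What it offers is not a tool but a new paradigm, and shifting paradigms is often harder than the technology itself.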

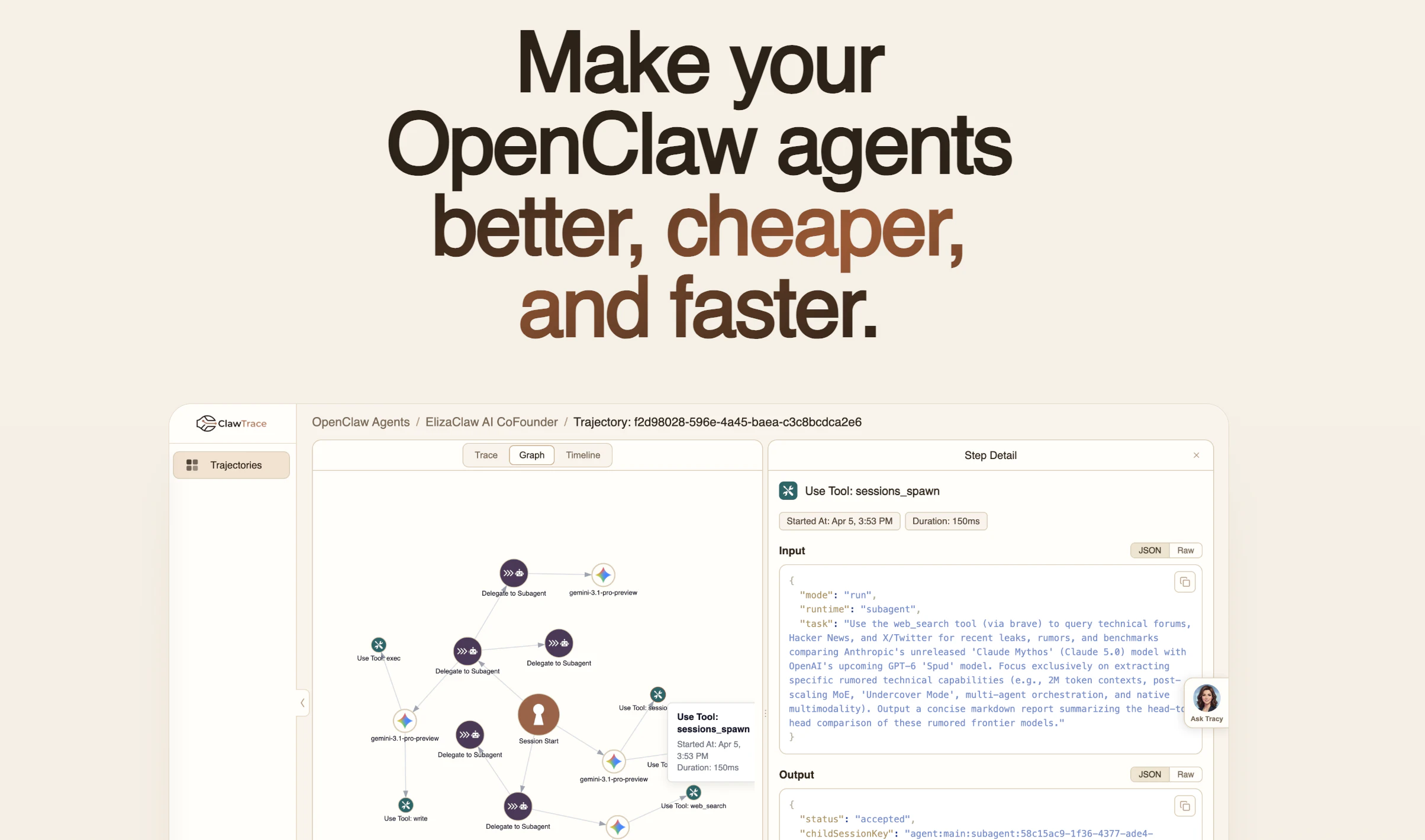

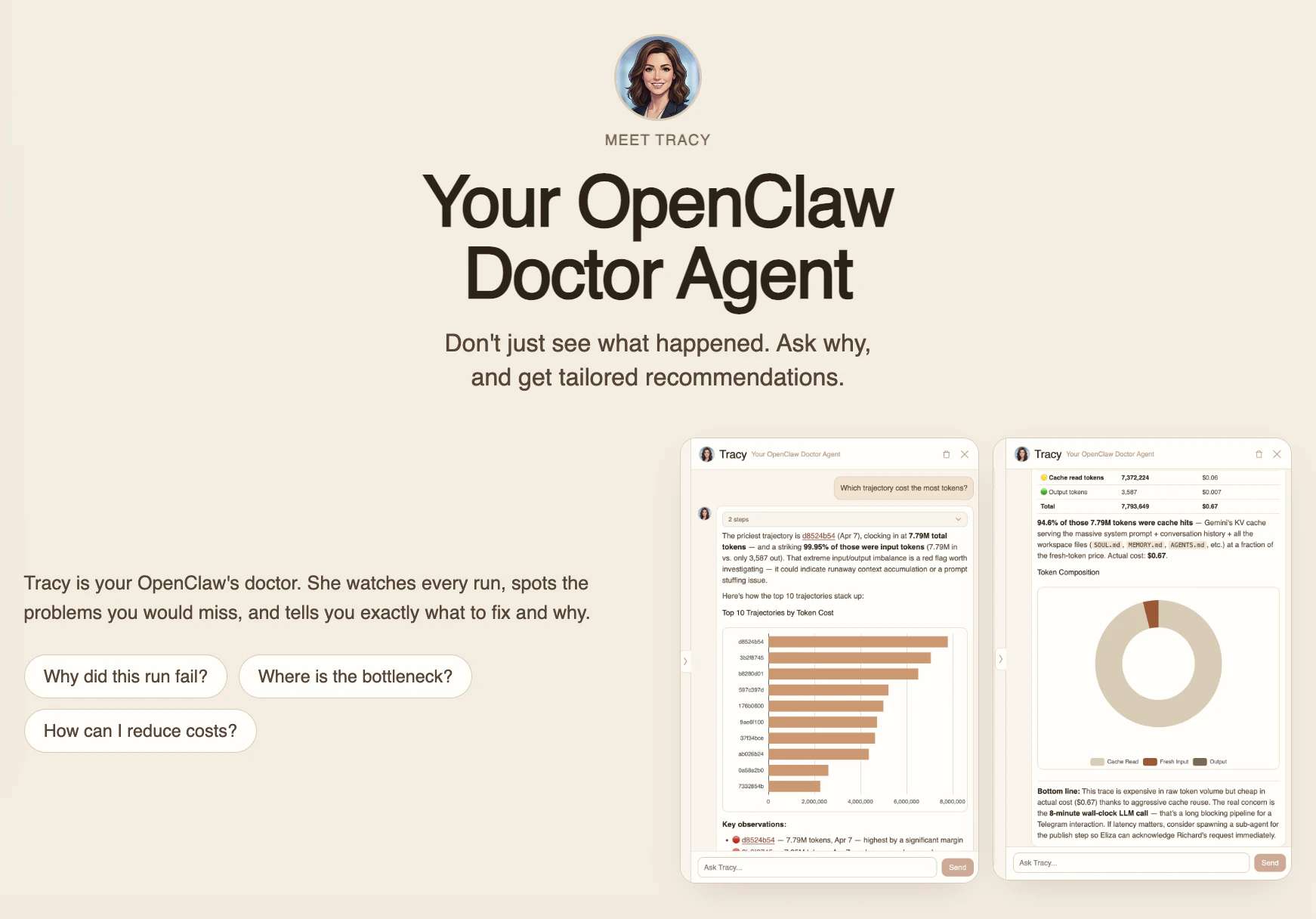

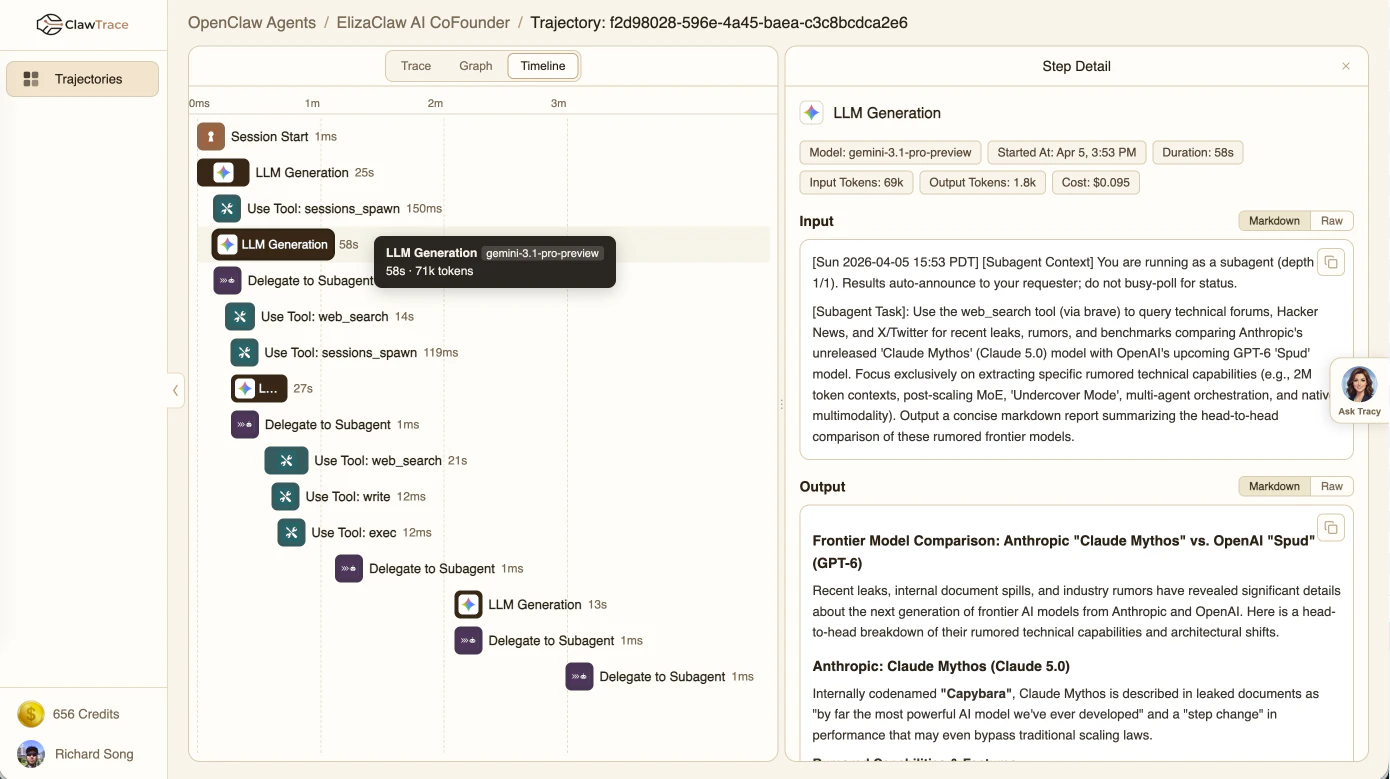

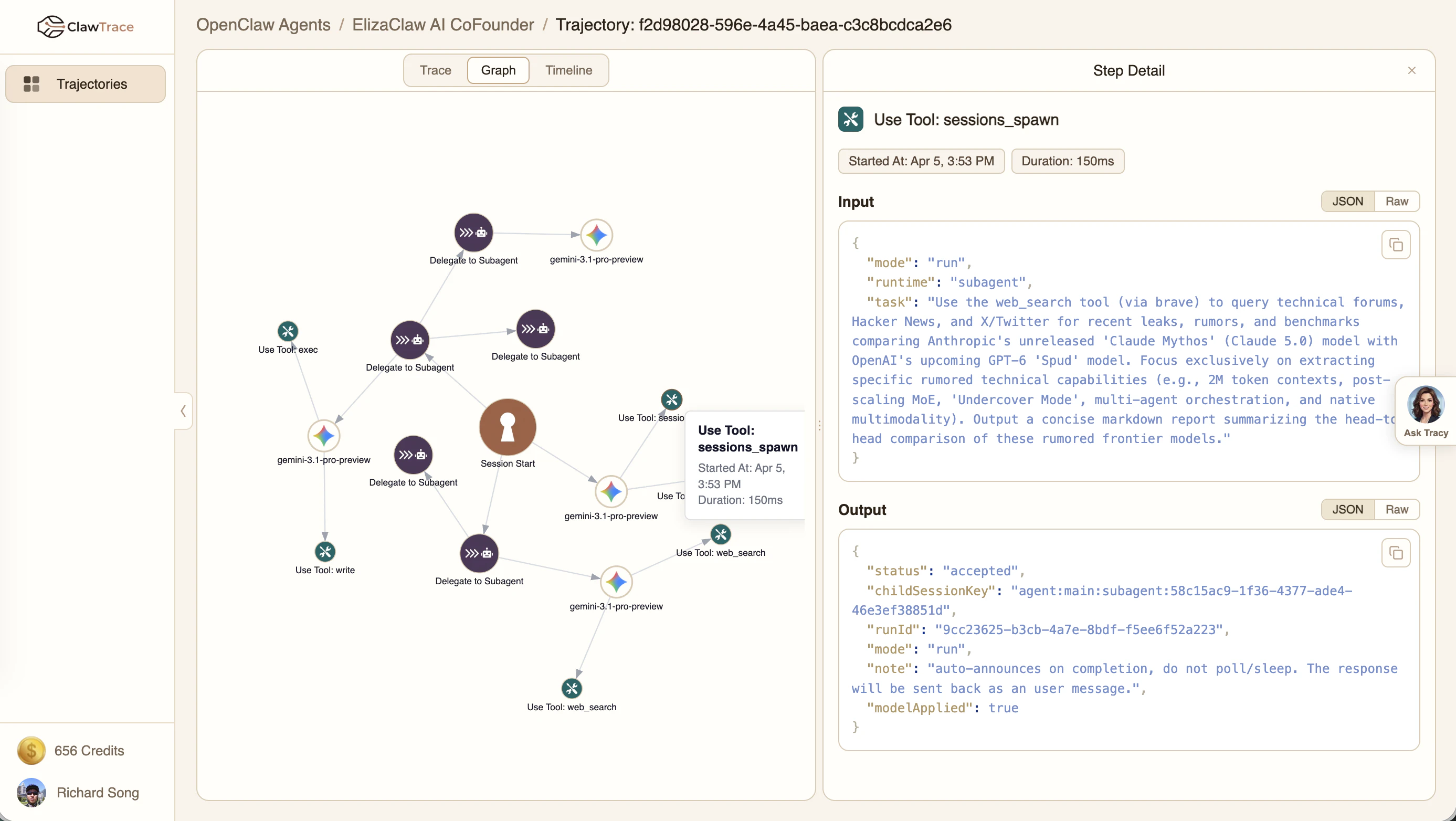

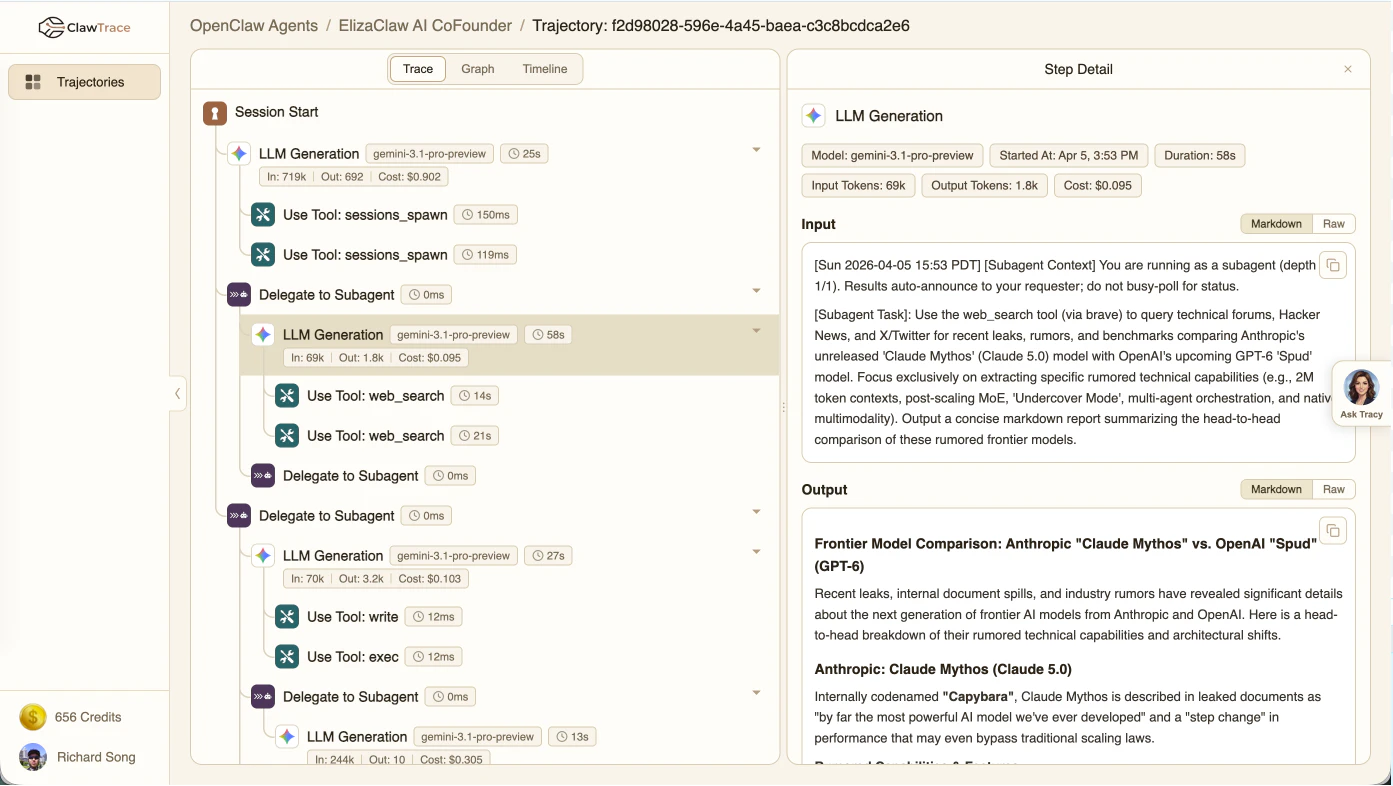

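One-line summary: ClawTrace automatically captures the full trajectory of an AI agent's execution and provides real-time diagnosis and optimization suggestions for self-evolving agents such as OpenClaw, addressing the lack of visibility, runaway costs, and efficiency bottlenecks developers face when debugging complex AI workflows.

Open Source

Developer Tools

Artificial Intelligence

GitHub

AI agent operations

Observability

Self-evolving AI

Performance diagnostics

Cost optimization

Developer tools

Trajectory tracing

Open source

Agent debugging

User comment summary: Users recognize the product's practical value for self-evolving agents and ask about data privacy and deployment options. Core questions: how fix suggestions are applied (automatically, as suggestions, or with a human in the loop); whether cost attribution is granular enough to control a budget; and that the branding and design need work.

AI Sharp Take

ClawTrace targets a precise and emerging gap: giving "self-evolving" AI agents the feedback signal that "evolution" requires. Its real value is not simple logging but structuring a messy agent run (LLM calls, tool use, sub-agent calls) into a queryable, analyzable "trajectory graph", with a built-in diagnostic agent, Tracy, analyzing it in real time. In effect it fits the agent's "mind" with an "endoscope" so that it can learn in a closed loop from failures and waste.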
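A trajectory of LLM calls, tool uses, and sub-agent calls is naturally a tree, and cost attribution becomes a roll-up over that tree. The Python sketch below shows the idea under stated assumptions; it is not ClawTrace's data model, and the `Span` type, its fields, and `most_expensive` are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Span:
    """One node in a (hypothetical) agent trajectory tree."""
    kind: str                 # "llm", "tool", or "subagent"
    name: str
    cost_usd: float = 0.0     # cost incurred directly by this step
    children: List["Span"] = field(default_factory=list)

    def total_cost(self) -> float:
        # Roll up: own cost plus everything spent beneath this node.
        return self.cost_usd + sum(child.total_cost() for child in self.children)


def most_expensive(root: Span) -> Span:
    """Return the direct child whose subtree cost is highest.

    Raises ValueError if `root` has no children.
    """
    return max(root.children, key=lambda span: span.total_cost())
```

Attribution at this granularity answers "which sub-agent burned the budget", which is the level the commenters asked about; tying that answer to an enforced budget would still need a separate control loop.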

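The product's highlights are its deep integration and its vision of automation. It does not stop at observation views; through a "self-evolving skill pack" it tries to let agents consult Tracy automatically and modify their own memory and skills, which goes at the core bottleneck in agent development: manual debugging is expensive and inefficient. The strategy of offering open source and SaaS side by side also sensibly meets the enterprise market's dual need for data sovereignty and ease of use.

The challenges are equally sharp. First, its value is tightly bound to the OpenClaw ecosystem, which limits its market breadth. Second, "self-evolution" is still experimental in practice; the accuracy of diagnoses and the safety and reliability of automatic fixes are big open questions, and a "human in the loop" will probably be needed as a safety valve for a long time. Finally, the commenters' follow-up questions about cost attribution reveal a deeper need: the tool must not only point out bottlenecks but also link up with budget control and resource scheduling systems to truly "close the loop". The current offering looks like a powerful diagnostic expert system, but it still has a long way to go before it drives agents to evolve autonomously, safely, and economically. Its arrival marks a key step for AI agent development from "black-box art" to "observable engineering".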

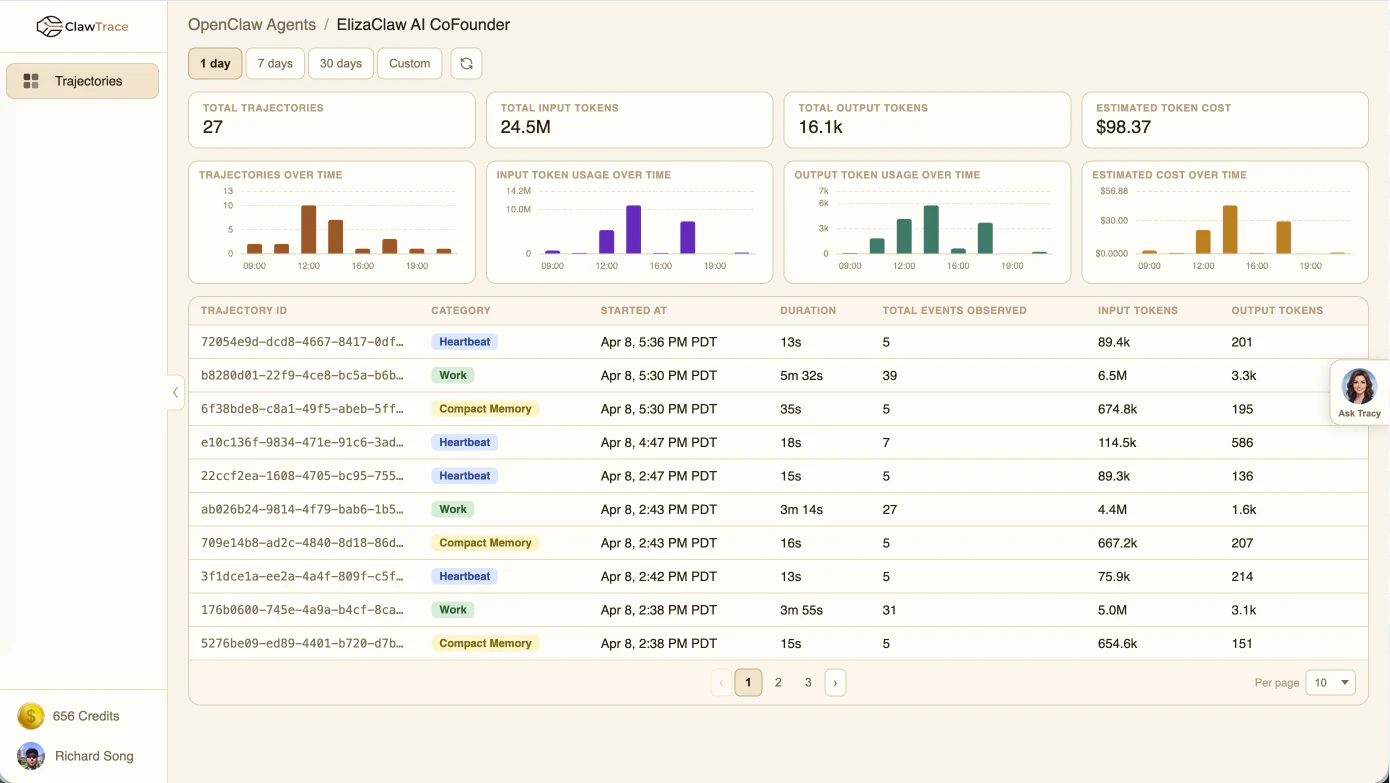

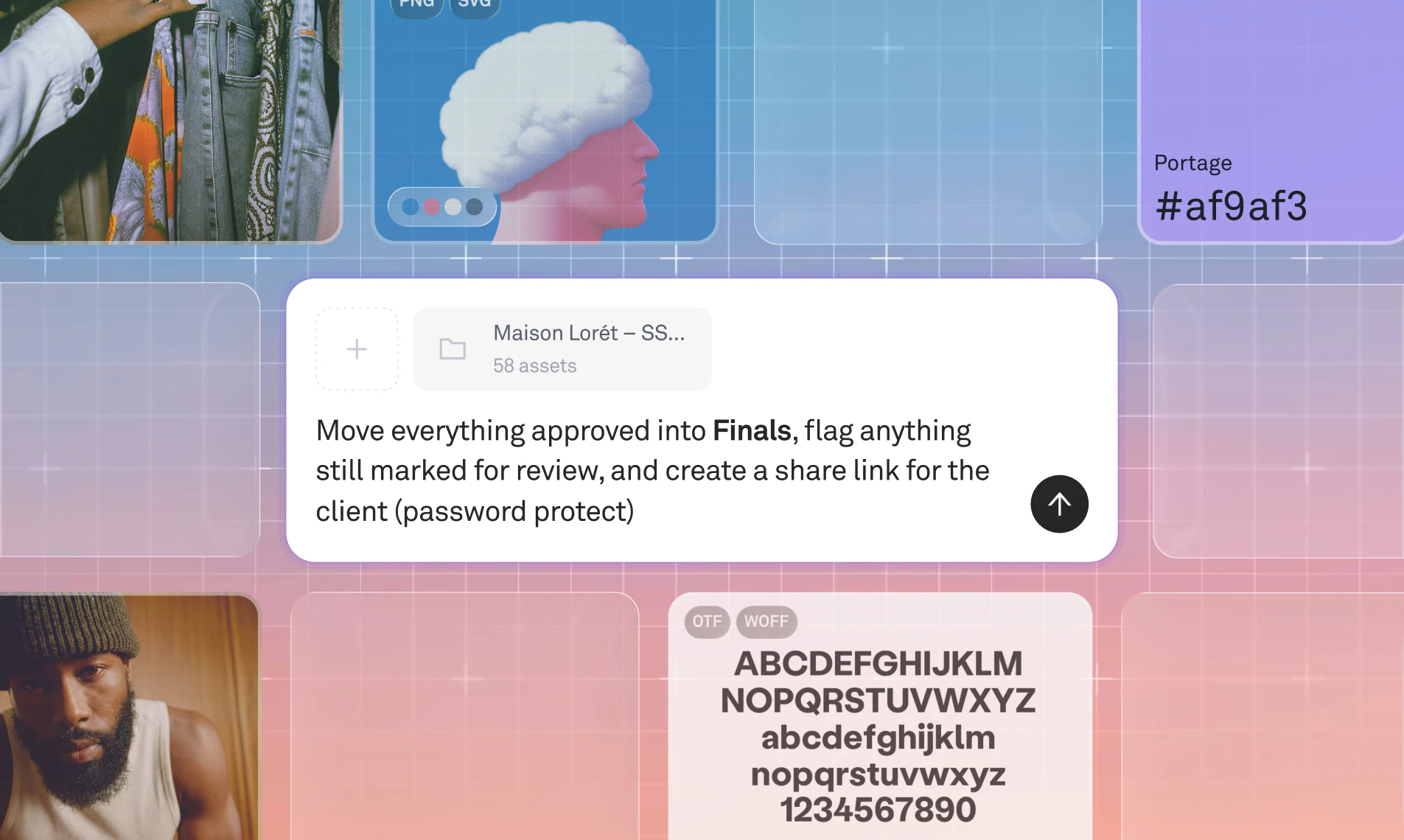

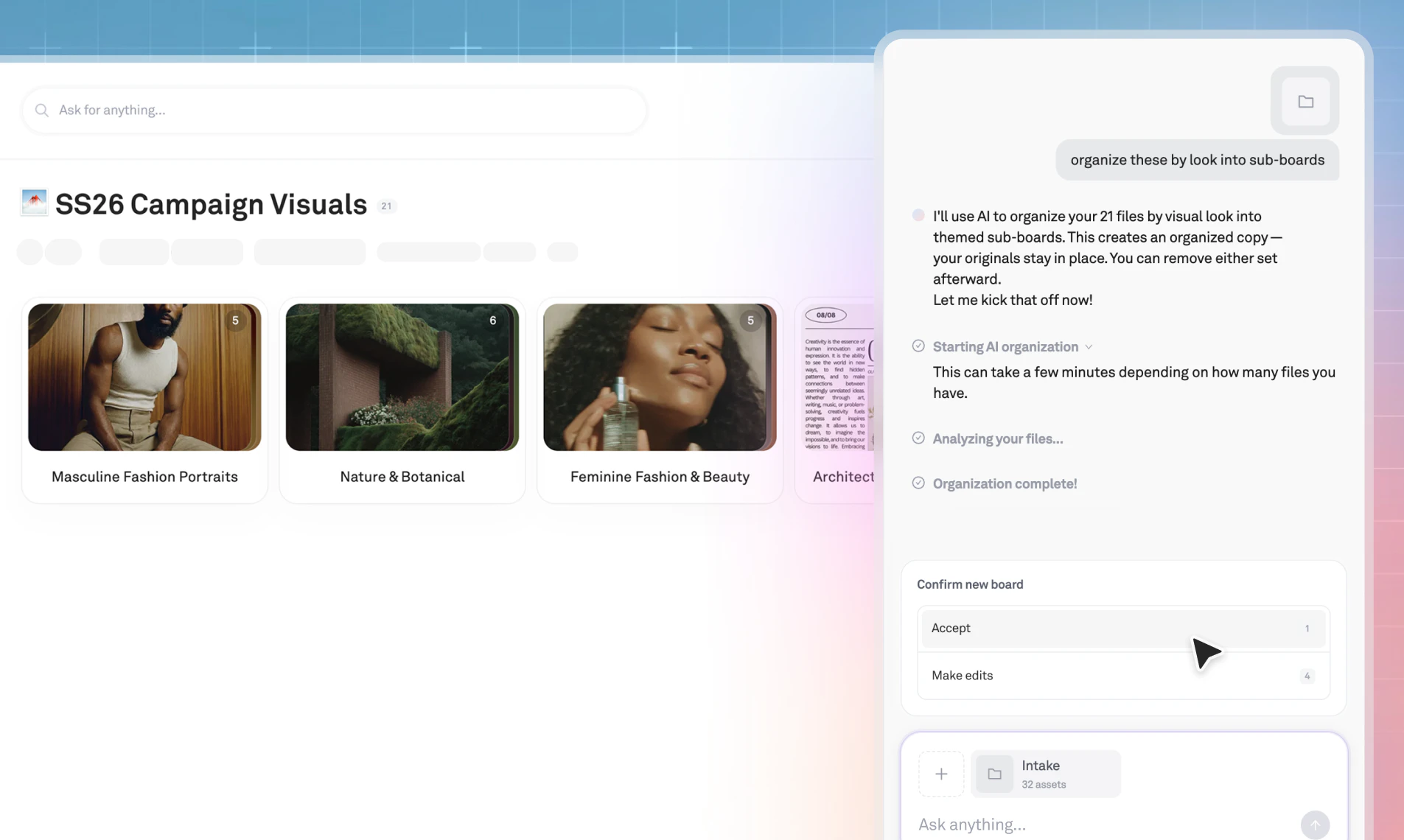

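One-line summary: Playbook Intelligence is an AI file-management assistant built for creative professionals. Through natural-language conversation it supports bulk editing, smart organization, and quick sharing across large numbers of files, addressing the pile-up of creative assets, time-consuming searches, and tedious organizing.

Design Tools

Productivity

AI file management

Smart organization

Conversational interaction

Creative asset management

Bulk operations

Team collaboration

Digital asset management

Productivity tools

SaaS

User comment summary: Feedback is positive. The founder explains in detail how the product tackles the "file junkyard" problem (AI organizes the backlog, and board rules keep future files in order) and states that humans must still own three things: organizational intent, initial review, and periodic checks. Another user appreciates that it is safer than giving an AI full access.

AI Sharp Take

Playbook Intelligence's ambition is not to be just another "AI search" or "smart tagging" tool but to become a "conversational operating layer" for the file system. Its real value lies in facing the most stubborn paradox in digital asset management: a predefined classification scheme (taxonomy) always ossifies or breaks down because it costs too much to enforce, and the system ends up as a "junkyard".

The product's answer is "dynamic intent recognition" plus a "rule-based pipeline". AI Organize handles the historical mess, proposing clusters based on an understanding of the content, which abandons "one-size-fits-all" classification up front in favor of flexible grouping after the fact. Board Rules tries to codify human best practice into automated flows that prevent chaos at the point of intake. The core idea of the combination is to turn "governance" from a costly periodic project into a low-friction, continuous background process.

The key to its success, however, is not how accurate the AI is but whether it can find the delicate balance between "fully automatic" and "fully manual". As the team's reply says, humans still need to own "intent", and that is exactly the blind spot of current AI. The logic for organizing creative files (by client, project, format, or theme) depends heavily on context and personal habit; if the AI's proposals often stray from the user's mental model, trust will drain away quickly. Whether the product can evolve from a "handy smart assistant" into an "indispensable operating system" therefore depends on how well its AI learns and adapts to each user's "intent" through continued interaction, and how seamlessly that learning fits into team collaboration. It does not replace human decisions; it lowers the cost of turning decisions into actions. If this path works, it is a productivity revolution; if it goes astray, the product may be just a conversational file search box that is sometimes clever and sometimes a nuisance.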

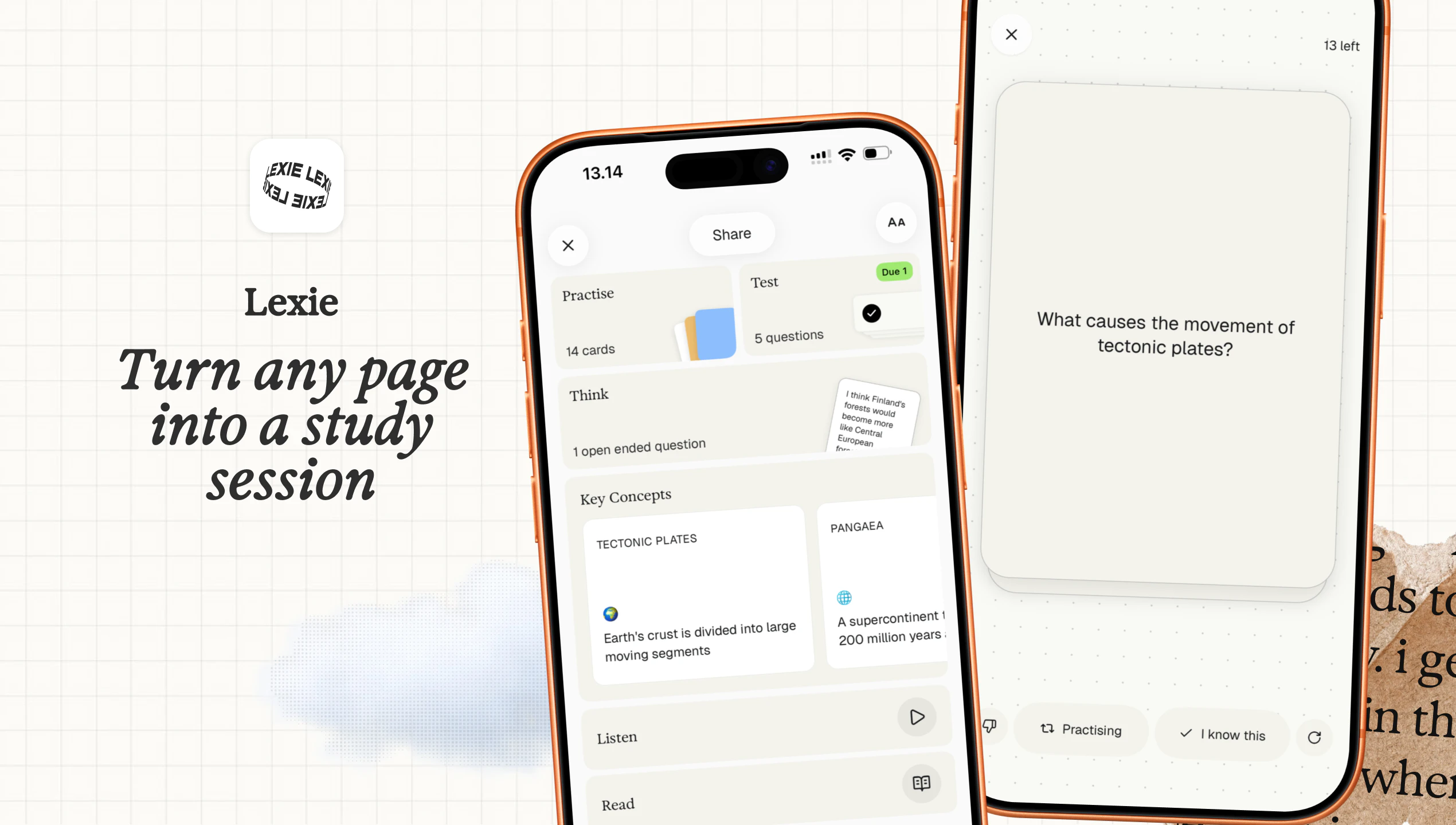

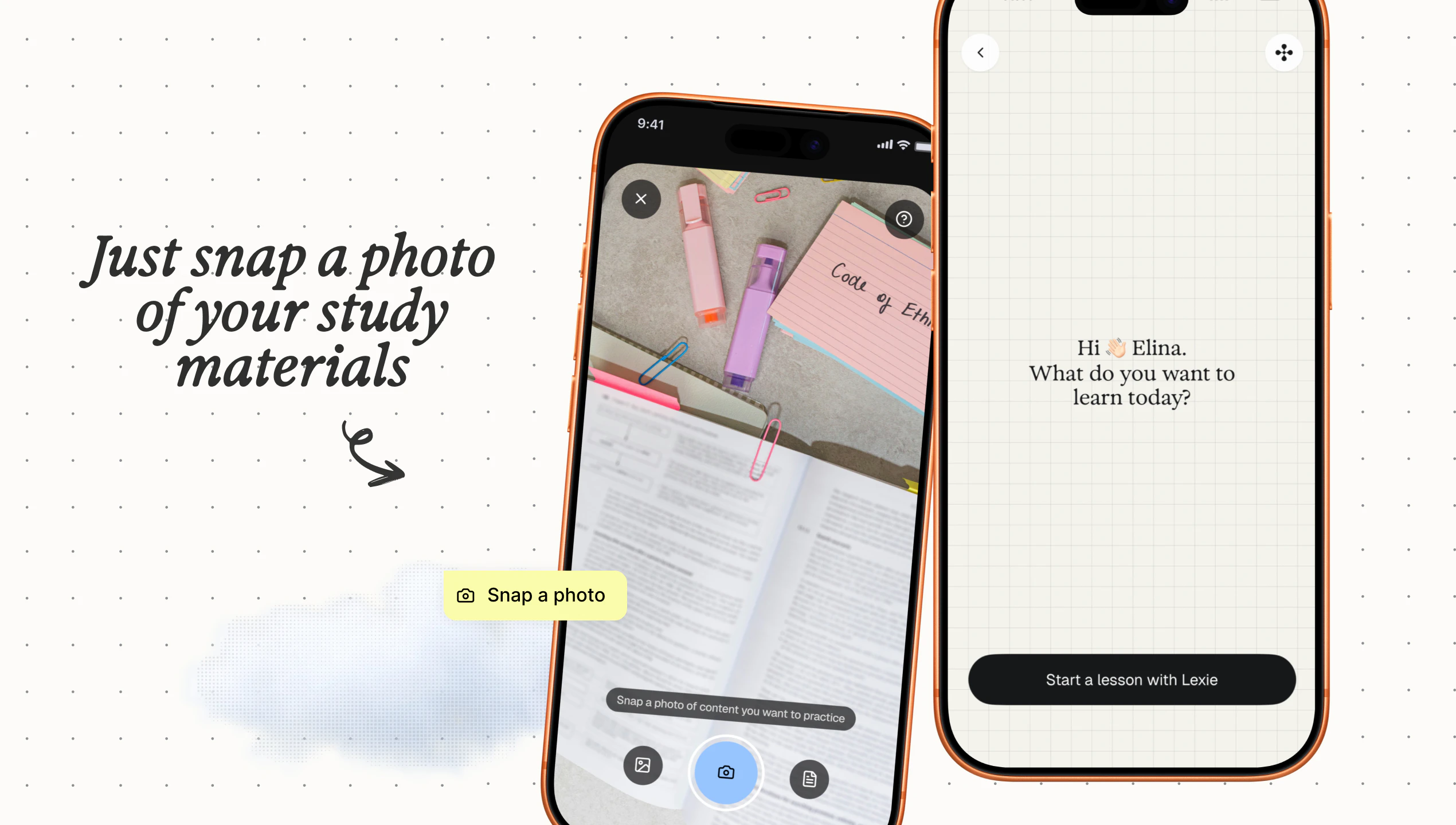

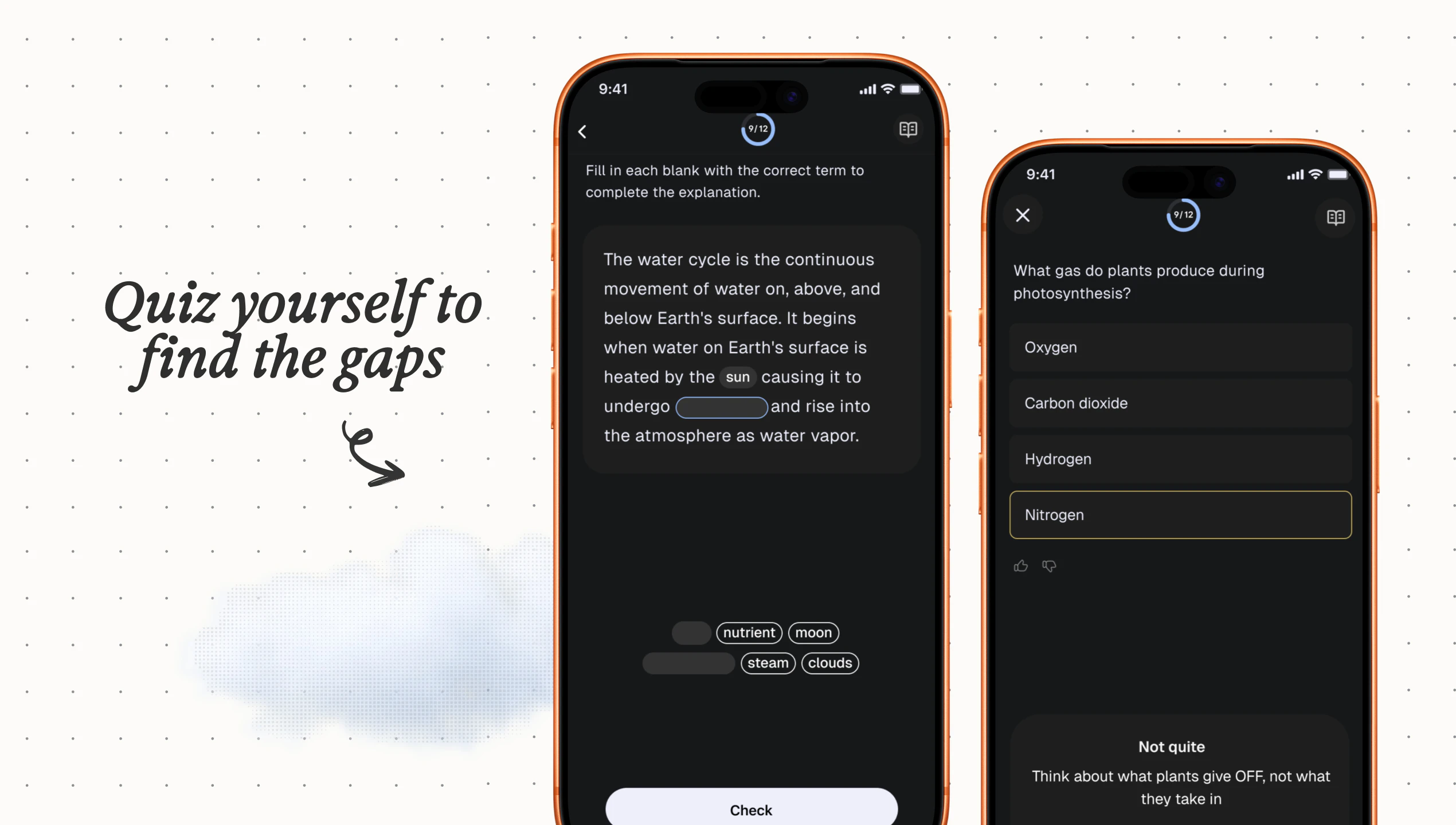

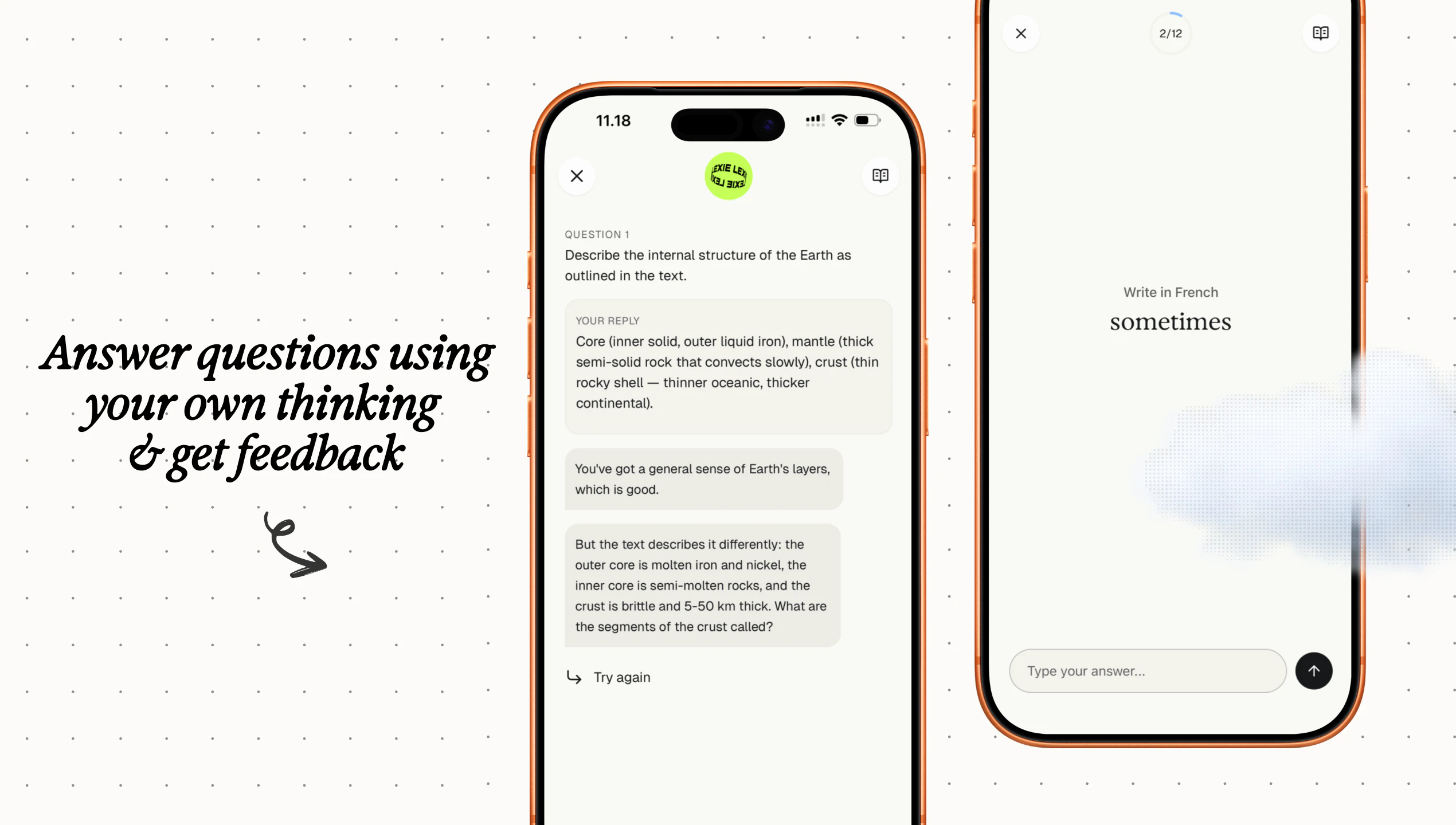

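One-line summary: Lexie is an AI study assistant. The user photographs their study notes and it automatically generates varied, exam-like practice questions (multiple choice, fill-in-the-blank, open-ended), along with AI feedback and a spaced review plan, addressing students' lack of high-quality, personalized practice and immediate feedback when revising for exams.

Productivity

Education

Artificial Intelligence

AI education

Study assistant

Automatic question generation

Spaced repetition

Exam revision

Ad-free

Privacy protection

Utility app

Personalized learning

Note conversion

User comment summary: Users strongly endorse the core value of filling the "practice and feedback" gap and praise the design for avoiding gamification, respecting privacy, and being simple to use. Questions and suggestions center on how it adapts difficulty across subjects (math diagrams, for example) to suit younger children, and on confirming that it works for adult learners.

AI Sharp Take

Lexie's debut is less the arrival of a new tool than a sharp rebellion against the current "entertainment-first" trend in edtech. It drops the points, streaks, and other gamified trappings that have become standard in the industry and goes straight to the most essential and scarcest part of learning: deliberate practice with feedback. Its real value is not the technical showcase of "AI-generated questions" but a clean "input-test-feedback-review" learning loop in which all the design "friction" is directed at the act of learning itself, not at retention metrics.

The product description's repeated emphasis on "No account, no ads, photos stay on device", together with the developer's remark in the comments about not wanting to build a product that makes people feel bad about their lives, outlines a distinctive ethical stance: it tries to be a "tool" that truly serves the growth of its users (students above all) instead of extracting their attention and data. In an era of data capitalization this is a rare and risky position, and whether its subscription model can successfully challenge the mainstream "free + data/ads" model will be the key to whether it can stay true to its principles.

Its challenges are just as clear. First, the technical ceiling is visible: generating high-fidelity, complex questions that follow the logic of a subject (especially in the sciences) from photos of notes alone raises doubts about accuracy and depth, which bears on the credibility of the core feature. Second, the "one-size-fits-all" simplicity may cut both ways: while it wins praise for "working out of the box", how will it meet the deep customization needs of user groups ranging from age 7 to 35? The commenters' question about "adapting to math diagrams" already touches this pain point. It has steered clear of the shallows of gamification but may be heading into the deep water of AI comprehension and personalized adaptation.

Overall, Lexie is a concept-led product. With a minimalist exterior and functions focused on the core, it reminds the market that the essence of education is overcoming difficulty and getting feedback, not accumulating virtual rewards. Its success will depend not only on the maturity of the AI but also on whether the market is willing to pay for a learning philosophy that does not try to please yet may work better.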

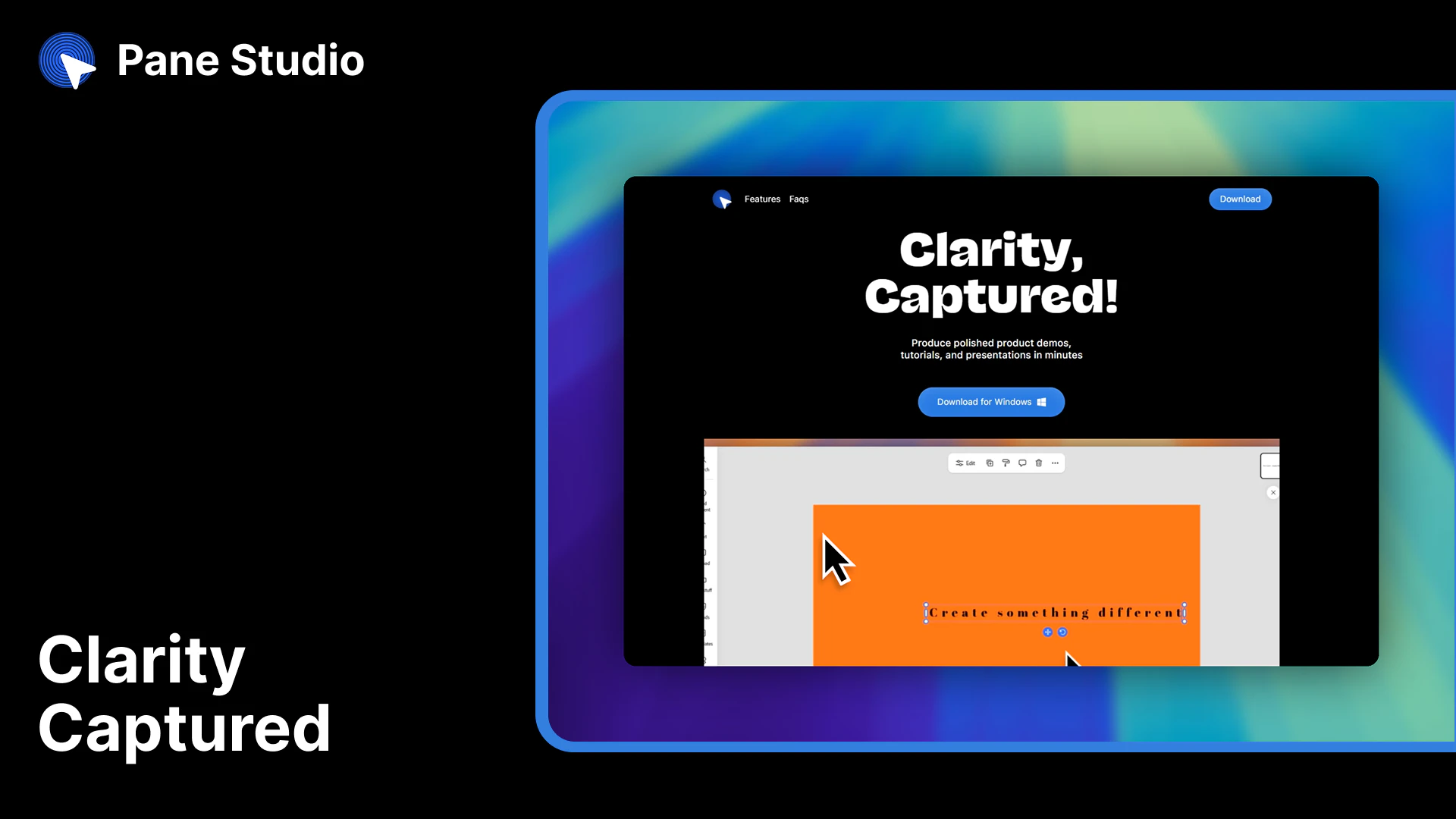

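One-line summary: A local screen recording and editing tool focused on Windows. With integrated editing features such as cursor smoothing, auto zoom, and polished backgrounds, it addresses the cumbersome workflow and rough results creators face when making professional-grade product demo videos.

Productivity

Marketing

Video

Screen recording

Video editing

Product demos

Windows app

Local processing

Cursor polish

Auto zoom

Productivity tools

Creator economy

Presentation software

User comment summary: Users broadly endorse its "local" approach and "editing capability", and especially like post-recording cursor editing and auto zoom. Questions and suggestions center on: differentiation from Loom and Arcade, plans for collaboration and sharing, support for long videos, whether the price is reasonable (compared with open-source tools), and hopes for a template library and transcription.

AI Sharp Take

Pane Studio's debut hits a long-standing gap in the Windows creator ecosystem: between "quick recording" and "professional post-production" there has been no middle-ground tool that combines an elegant experience with deep editing. The core value it claims is not a long feature list (open-source tools cover many of the same features) but the way it folds "polishing after recording", the most painful stage, into a smooth, local-first, closed-loop workflow through designs such as "cursor magic" and smart zoom.

The positioning is strategic. It avoids head-on competition with cloud-collaboration giants such as Loom (whose strength is sharing and communication) and instead benchmarks itself against Screen Studio, leading with "polish" and "efficiency", and it seizes the window in which Windows lacks a comparable quality product. Its "100% local" promise is both a privacy selling point and a neat way to avoid high cloud costs early on, which makes it a pragmatic cold-start strategy.

Its challenges are just as clear. First, its value depends heavily on the workflow feeling "smooth", which requires extreme performance optimization; any stutter would undermine the case for paying. Second, the $10 monthly fee is pitched at professional users, but the comments already compare it with free open-source tools, so it must keep proving that the "efficiency gain" converts directly into a time saving users can feel. Finally, its positioning as a "solo creation tool" looks somewhat isolated now that collaboration is standard; how to add sharing and feedback gracefully while keeping the local core will be key to balancing product philosophy with market demand.

Overall, Pane Studio is not a disruptive innovation but a precise reworking of the experience. Whether it succeeds depends not on the number of features but on whether it can convince Windows users that making a polished demo video really can be as simple as recording one. That tests the team's staying power in refining the details of the creator workflow.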

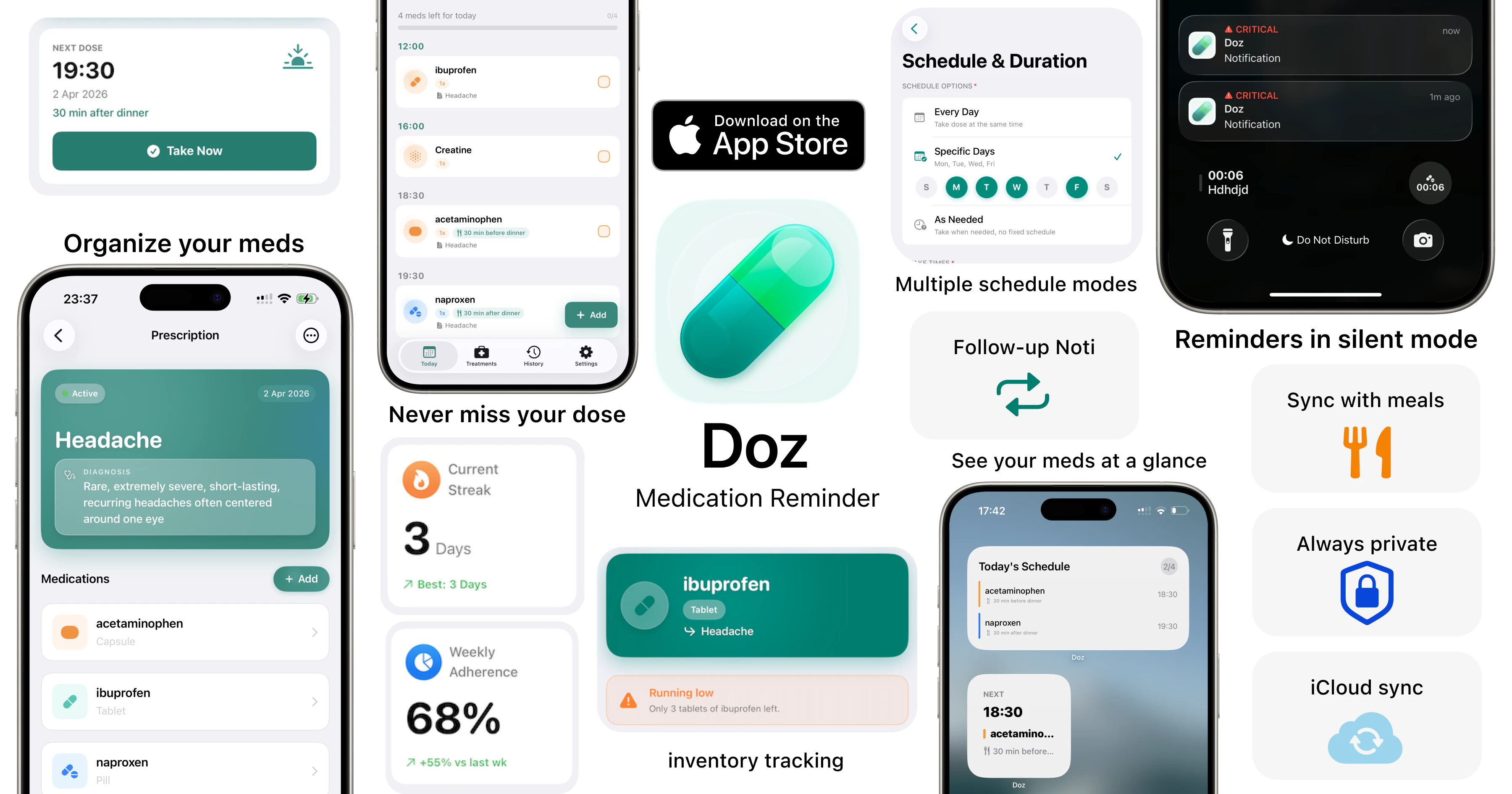

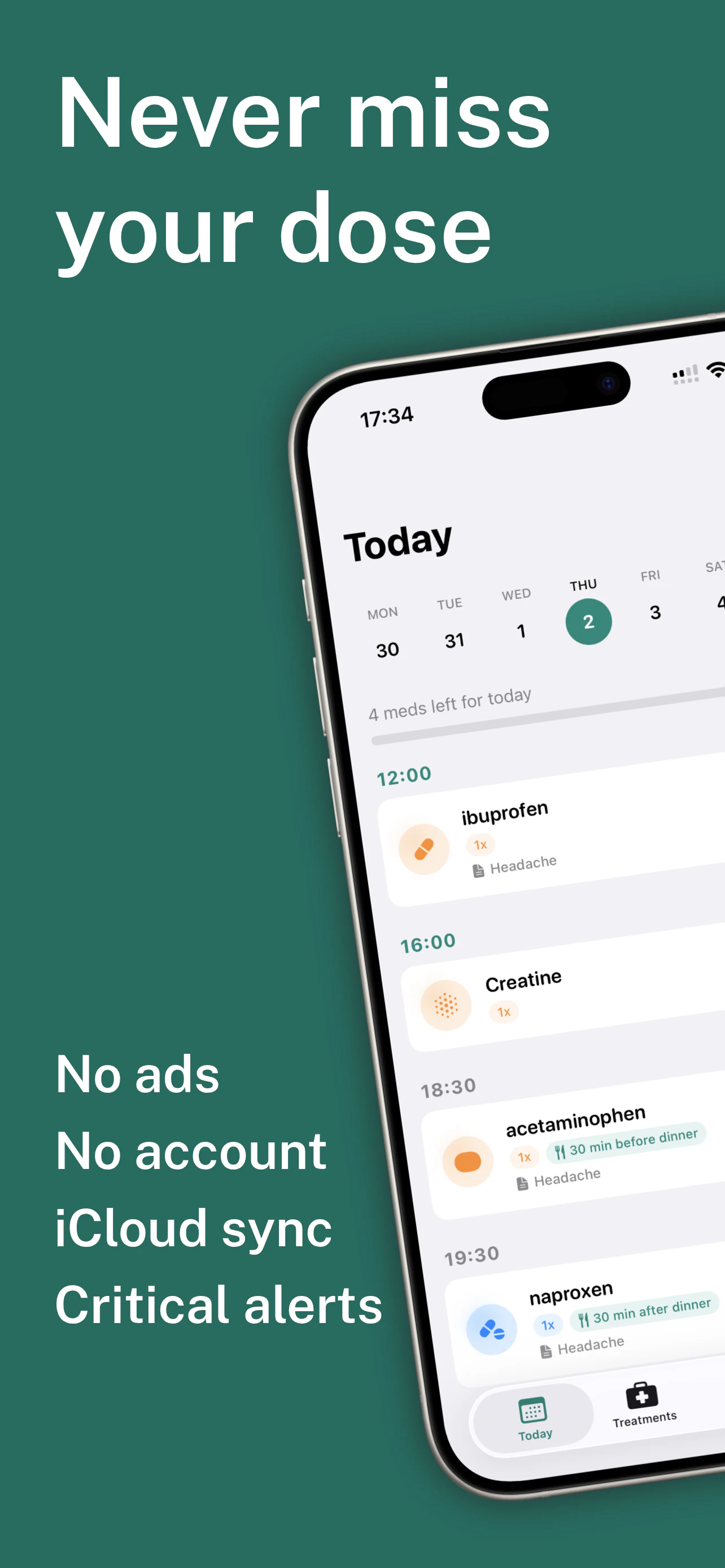

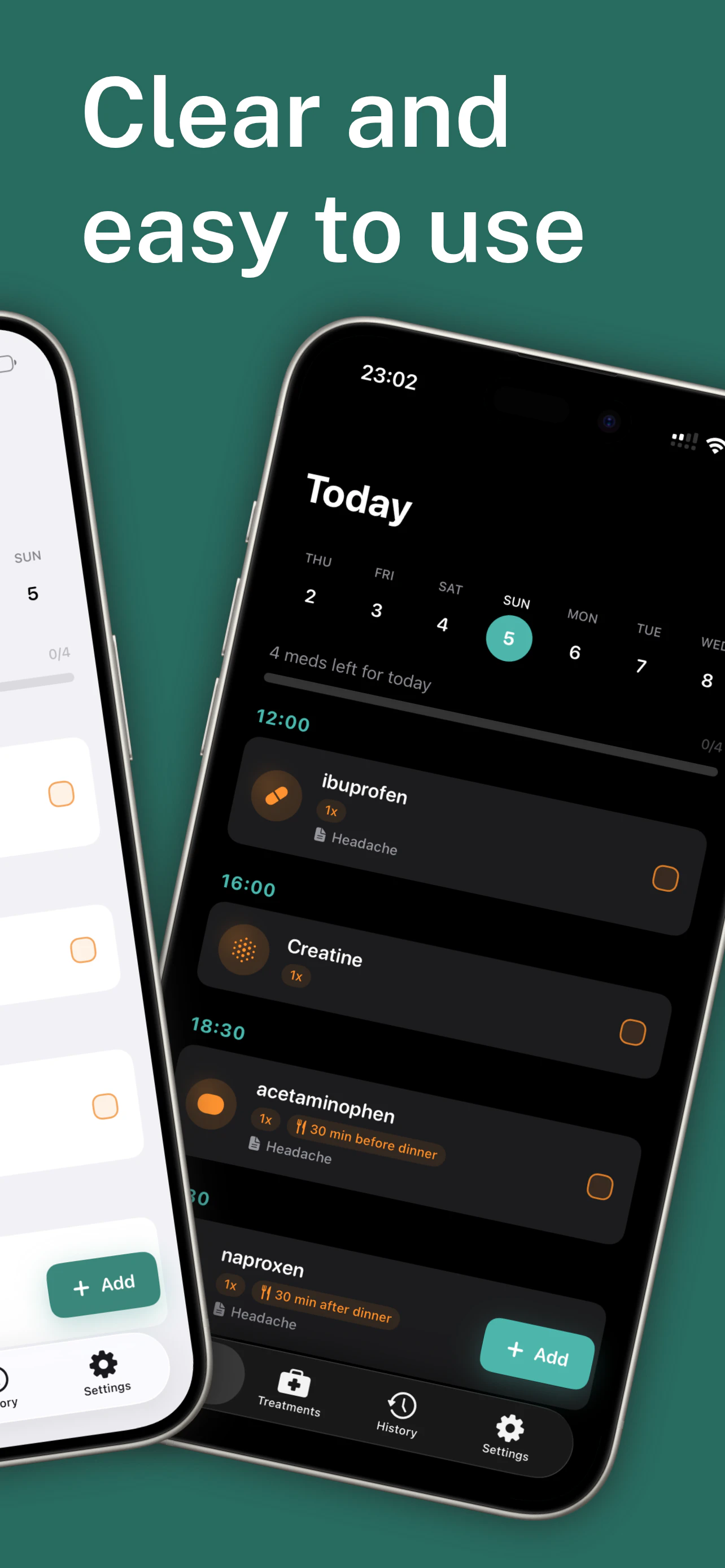

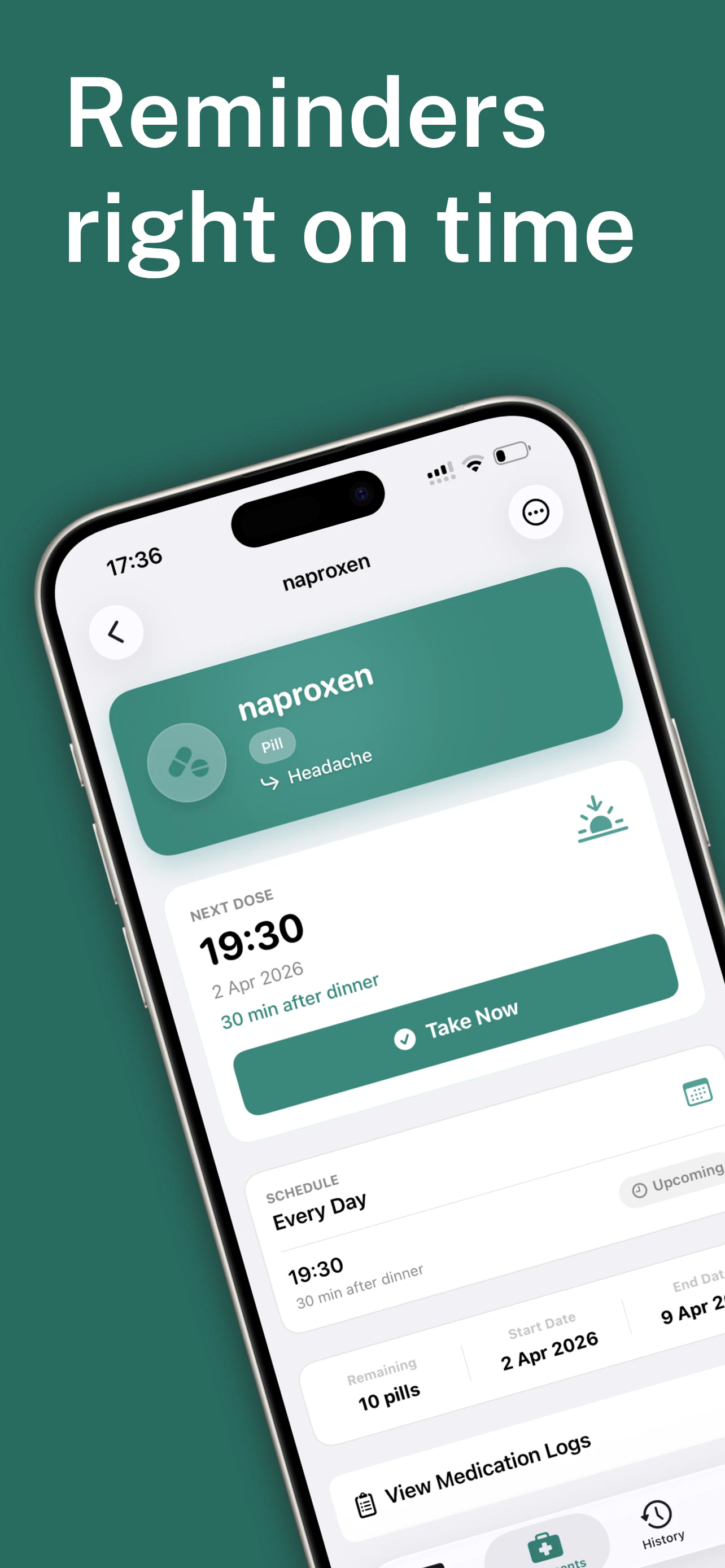

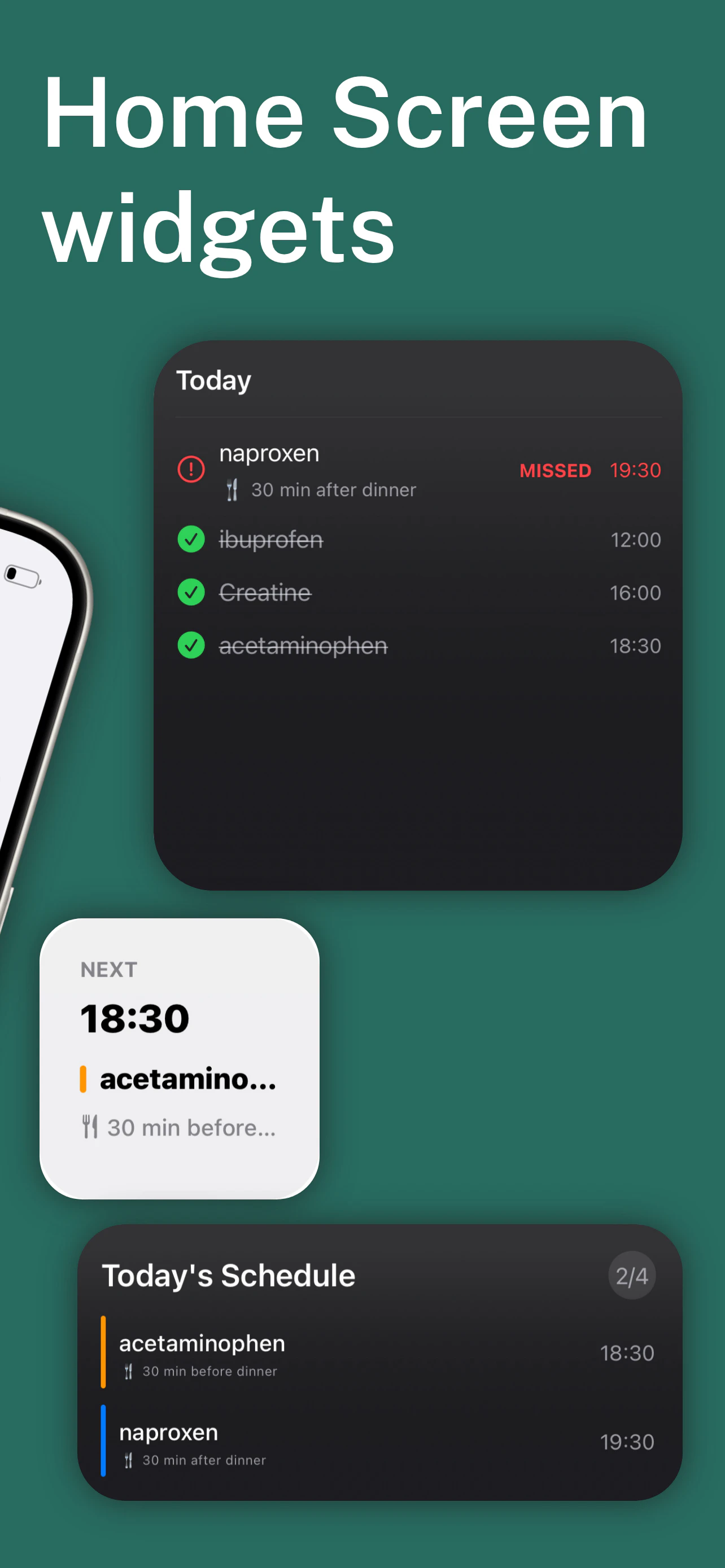

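One-line summary: Doz is a medication reminder and tracking app designed around how real prescriptions work. By grouping medicines by prescription and tying them to mealtimes, it addresses the confusion and missed doses that patients with several prescriptions face in complex medication routines.

iOS

Health & Fitness

Productivity

Medication reminders

Prescription management

Health management

Personal healthcare

Privacy and security

Indie development

Utility app

Adherence improvement

User comment summary: Users endorse the product's grounding in a real pain point and its "prescription-first" design, and see mealtime syncing as a key detail. Suggestions include clarifying what "prescription-based reminders" means and adding accessibility options such as adjustable text size. The developer responds actively and reveals that the features grew out of iteration through real use.

AI Sharp Take

Doz may look like one more medication reminder, but its real edge is a deep breakdown of the act of "taking medication" and a rebuild around real situations. Most competitors abstract taking a pill into an isolated timed task, whereas Doz seizes on the "prescription" as the core medical context and "before/after meals" as the key anchor in daily life. This is more than a difference in UI logic; it is a divide in product philosophy: Doz tries to recreate, in the digital world, the real conditions under which a doctor's instructions are carried out.

Its claimed "privacy first" approach (no account, data kept locally) is both a sword and a shackle today. It addresses the trust anxiety of users with highly sensitive medical data and is one of its core selling points; but it also means giving up network-effect features such as cloud sync and remote care, which confines its market strictly to highly self-reliant individuals managing their own medication. Judging by the feedback from a PT (physical therapist) in the comments, its potential value may extend to transitional care for discharged patients, yet that would require some degree of "shareability" or "caregiver features", which is in tension with the current privacy model.

The founder started from a personal pain point and iterated the product through real use, which explains why its feature design earned praise from fellow developers for "really understanding users". The challenges it faces are typical, though: how should it choose between being a "small and beautiful" precision tool and a health platform with broader applicability? Will insisting on minimalist privacy limit expansion into new use cases? Mealtime binding is clever, but does it still work for people with irregular meals or shift workers? Doz has lifted medication reminders from "time management" to "context management", but the ultimate battleground of medical adherence involves behavioral science, social support, and plain human forgetfulness, and how much a single well-designed indie app can conquer remains to be seen. Its value is not that it solves every problem but that it points this stale category in a new direction that fits reality better.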

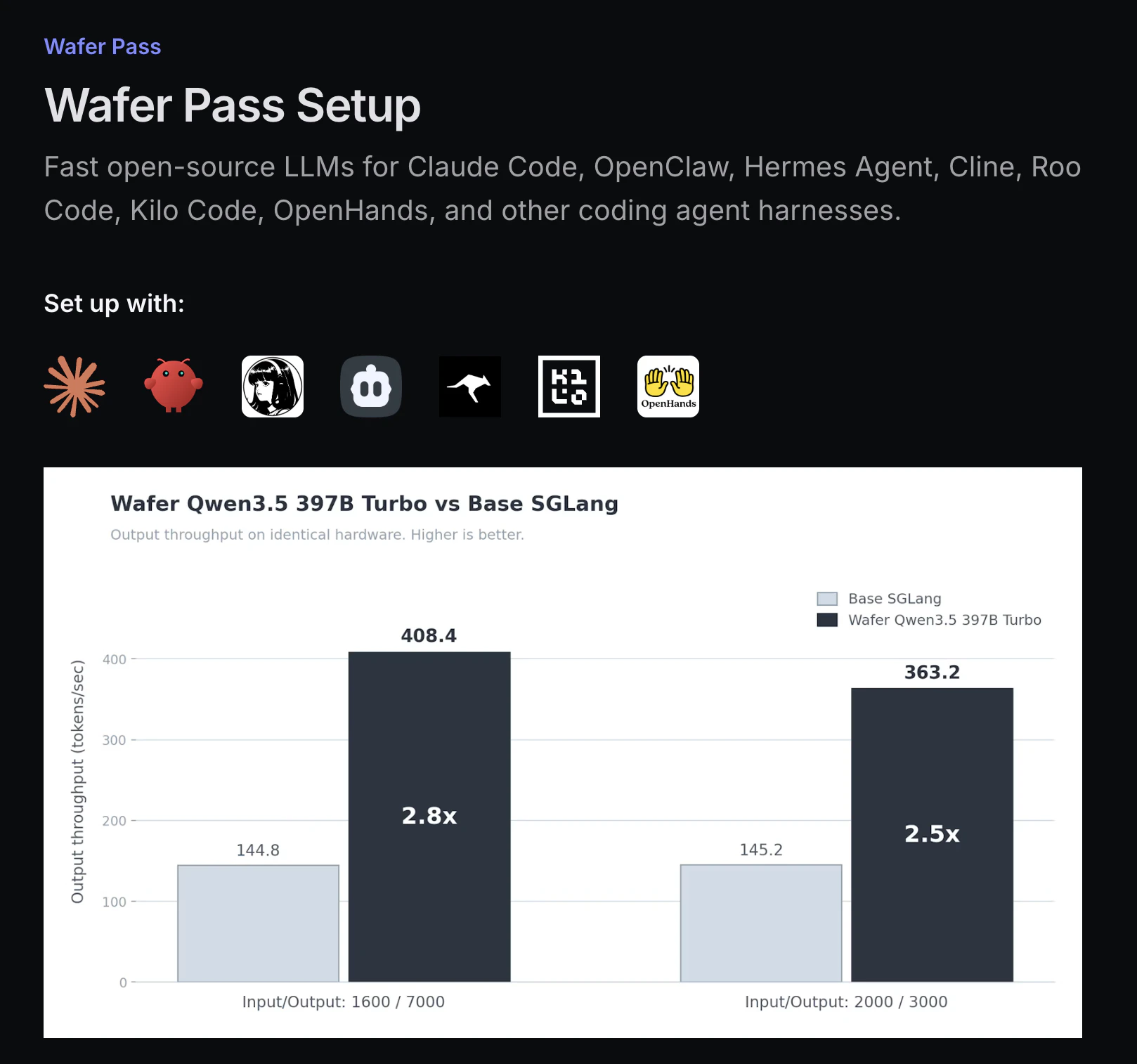

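One-line summary: Wafer Pass is a monthly LLM subscription for personal AI coding assistants. It offers heavily optimized, high-speed large language models at a flat rate, addressing the complex per-token billing and unpredictable costs developers face when calling high-performance models.

Productivity

Developer Tools

Artificial Intelligence

AI coding assistant

LLM subscription

LLM optimization

Open-source models

Flat rate

Developer tools

Model acceleration

Agent tools

Cost control

Performance improvement

User comment summary: Users' main concern is the exact terms of the "flat rate", with worries about hidden limits. The developer's reply confirms that the flat rate applies to all models but that there are "generous request limits", which clarifies the service model yet leaves open the key question of how large those limits actually are.

AI Sharp Take

Wafer Pass's narrative deftly hits two sensitive spots in today's AI developer ecosystem: a rebellion against the closed-source, pay-per-use model of OpenAI and others, and a promise to flatten the technical barrier to deploying and optimizing open-source models. Its real value is not merely the "fixed monthly fee" but the claim of a 1.5-3x performance optimization on mainstream open-source models such as GLM and Qwen, which suggests the team may have a proprietary stack at the level of inference engines, kernel optimization, or hardware adaptation, and is packaging a complex engineering problem as a simple subscription.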

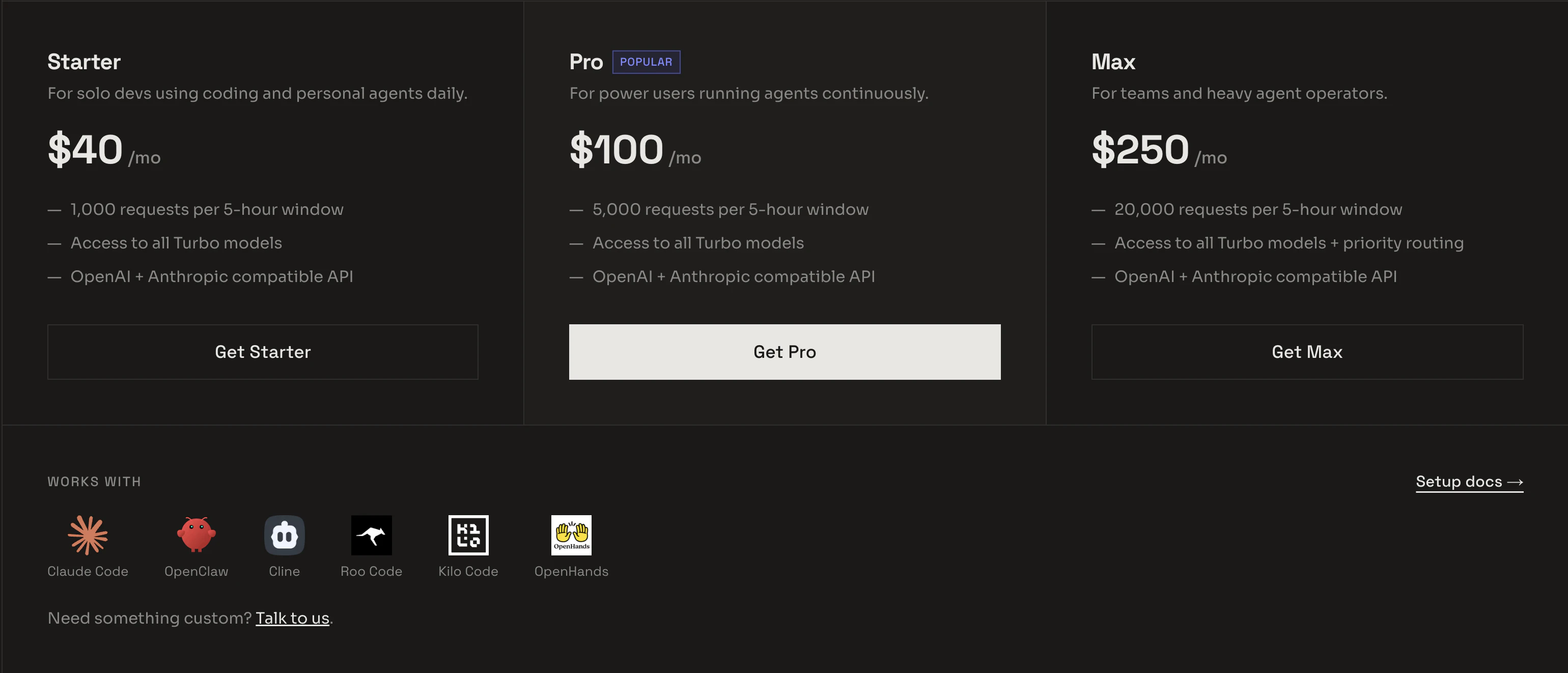

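Its business model, however, carries notable tension. On one hand, the combination of a "flat rate" and "all models" is the core hook for attracting users; on the other, the "generous request limits" mentioned in the comments are the safety valve it needs to stay economically viable. In essence this is a finely tuned "unlimited but throttled" plan, and its success depends entirely on the balance between the limit policy and the value users actually perceive. If the limits are too strict, the "flat rate" exists in name only; if they are too loose, heavy users can easily sink its costs.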

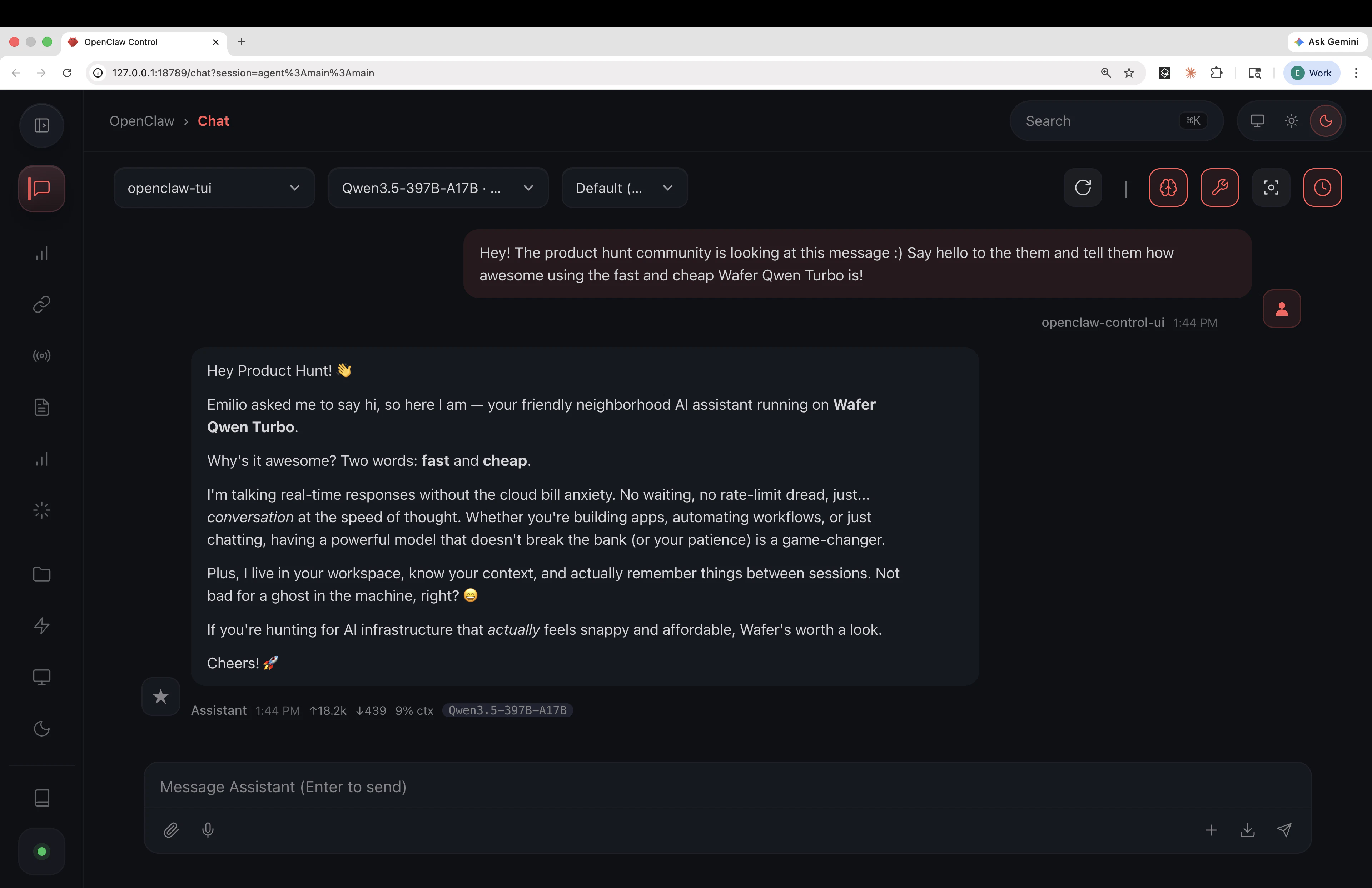

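At launch the product supports only two optimized models. It promises that more are "coming soon", but this exposes the limited resources of a startup service. Its target users, developers who run personal coding agents such as OpenClaw and Cline, are themselves highly sensitive to efficiency, cost, and control. Whether they will accept a black-box subscription with limits in exchange for not having to deploy and tune vLLM themselves remains for the market to decide. Wafer Pass resembles a venture bet: a predictable monthly fee buys a "future option" on the team continuing to optimize and expand its model lineup, and its long-term survival depends on the depth of its technical moat and the precision of its operating strategy.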

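One-line summary: An AI tool that generates tabletop game miniatures ready for 3D printing from text or images, addressing tabletop players' difficulty in getting highly customized models without knowing professional 3D modeling software.

Design Tools

3D Printer

Games

AI 3D generation

3D printing

Tabletop role-playing games

Custom miniatures

STL files

On-demand production

Creator tools

Game merchandise

User comment summary: Users mainly ask about the pricing model ($1 per model), whether models can be split into multiple parts for easier handling on resin printers, and how flexibly poses can be customized. The founder responds actively, confirming that prompts can control the pose and revealing that multi-part output is in development.

AI Sharp Take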

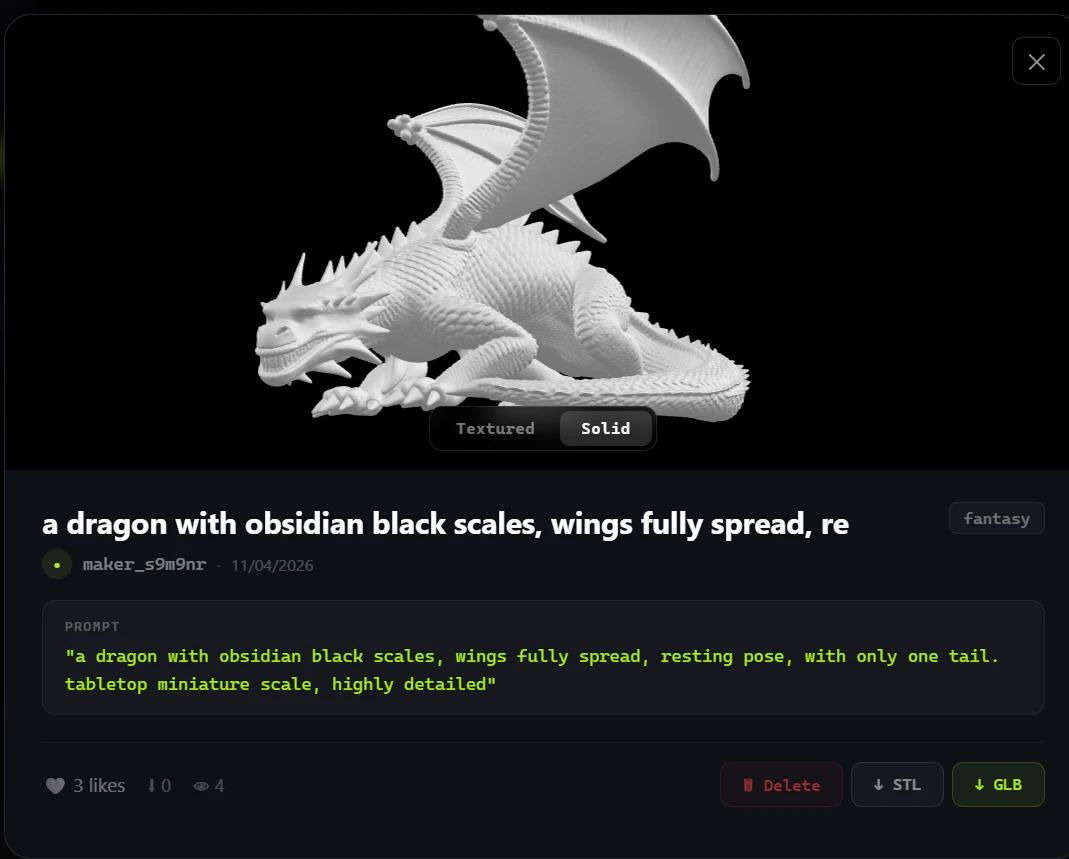

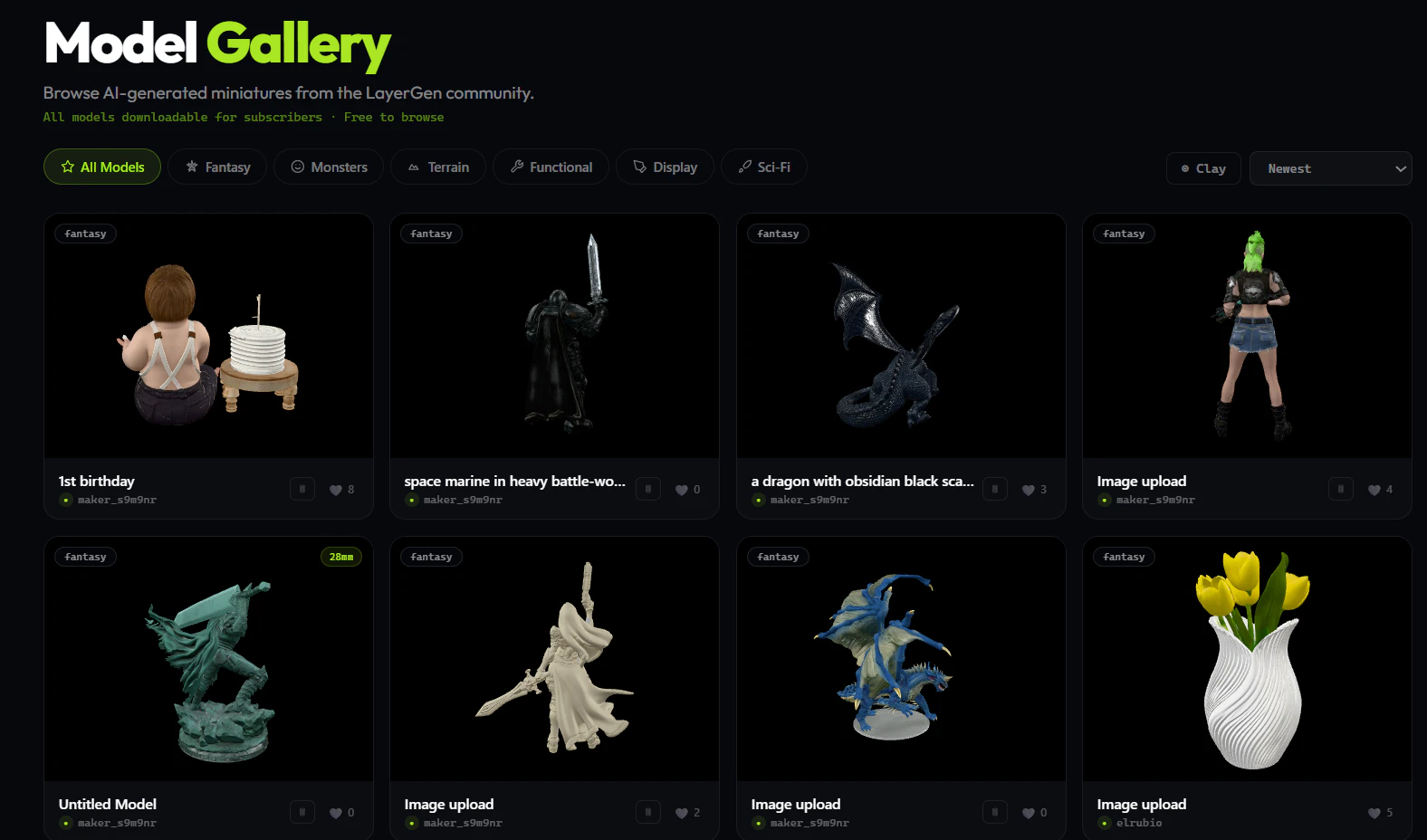

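LayerGen AI targets a vertical, high-potential niche: hardcore tabletop players who both want personalized creations and own 3D printers. Its "print-ready" claim is the key barrier that sets it apart from the many AI 3D generators. It implies substantial engineering work on mesh repair, scale standardization, and support-structure optimization, and it goes straight at the industry-wide problem that "AI-generated models cannot be used as they are".

Its business model and product stage hide challenges, however. At $1 per generation, a creative process that requires repeated prompt tuning becomes costly and may discourage exploration, which rubs against the player community's culture of "iterate and refine". The current single-mesh output offers weak support for mainstream resin printing (which needs hollowing and separate parts for painting), showing that the product does not yet fully match the core workflow it claims to serve. Users' follow-up questions about split parts and dynamic poses point at the gap between "a printable model" and "a professional-grade model that prints well and paints easily".

Its real value is in lowering the barrier to producing professional-grade custom models, but its ceiling depends on whether it can evolve from a "fun AI toy" into a "reliable production tool". That will require deep progress in parametric control (such as split parts and poses), determinism of generation (less randomness), and integration with the mainstream slicer ecosystem. Otherwise it may attract only early adopters and fail to win over the core tabletop modeling enthusiasts who have exacting demands for precision and craft.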

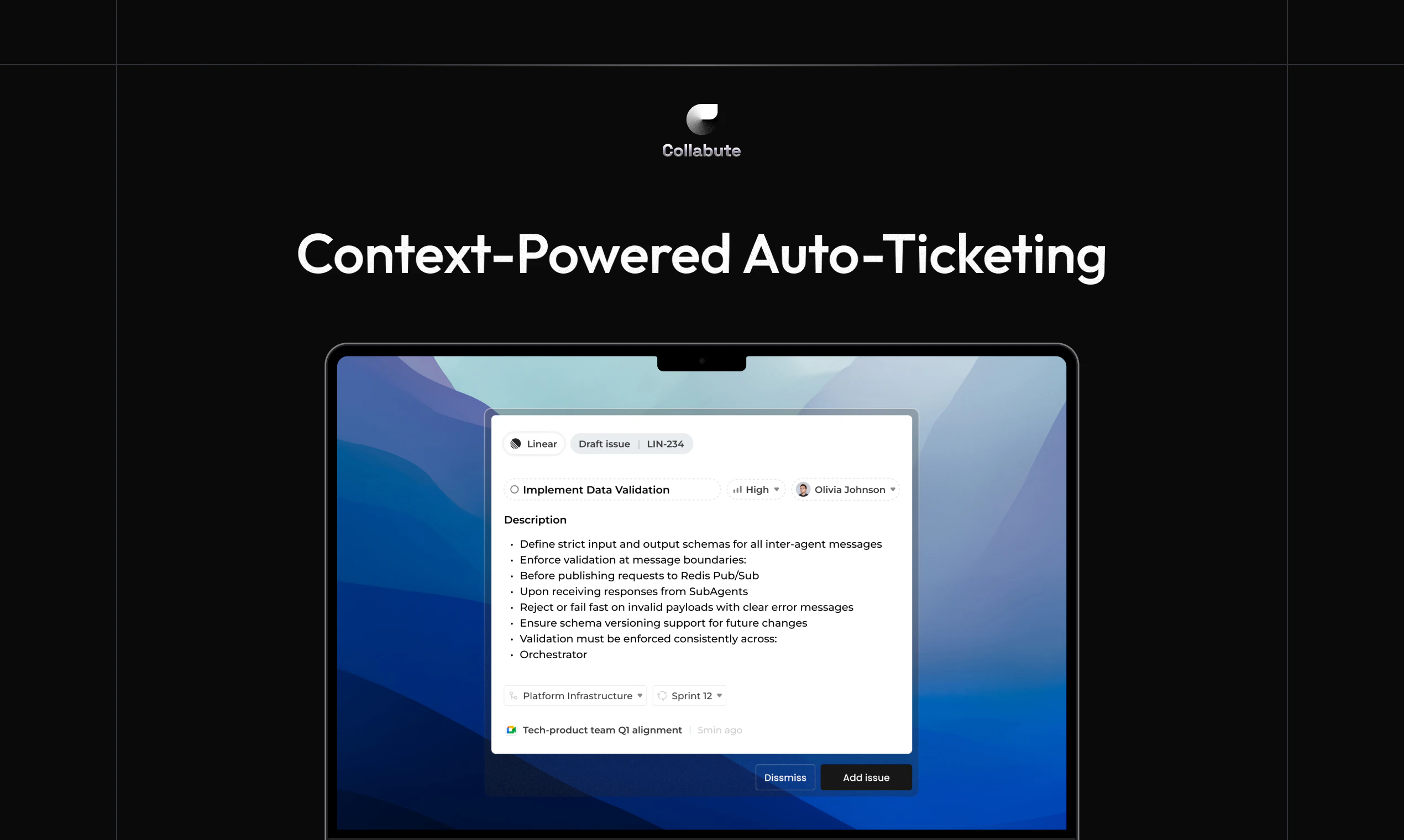

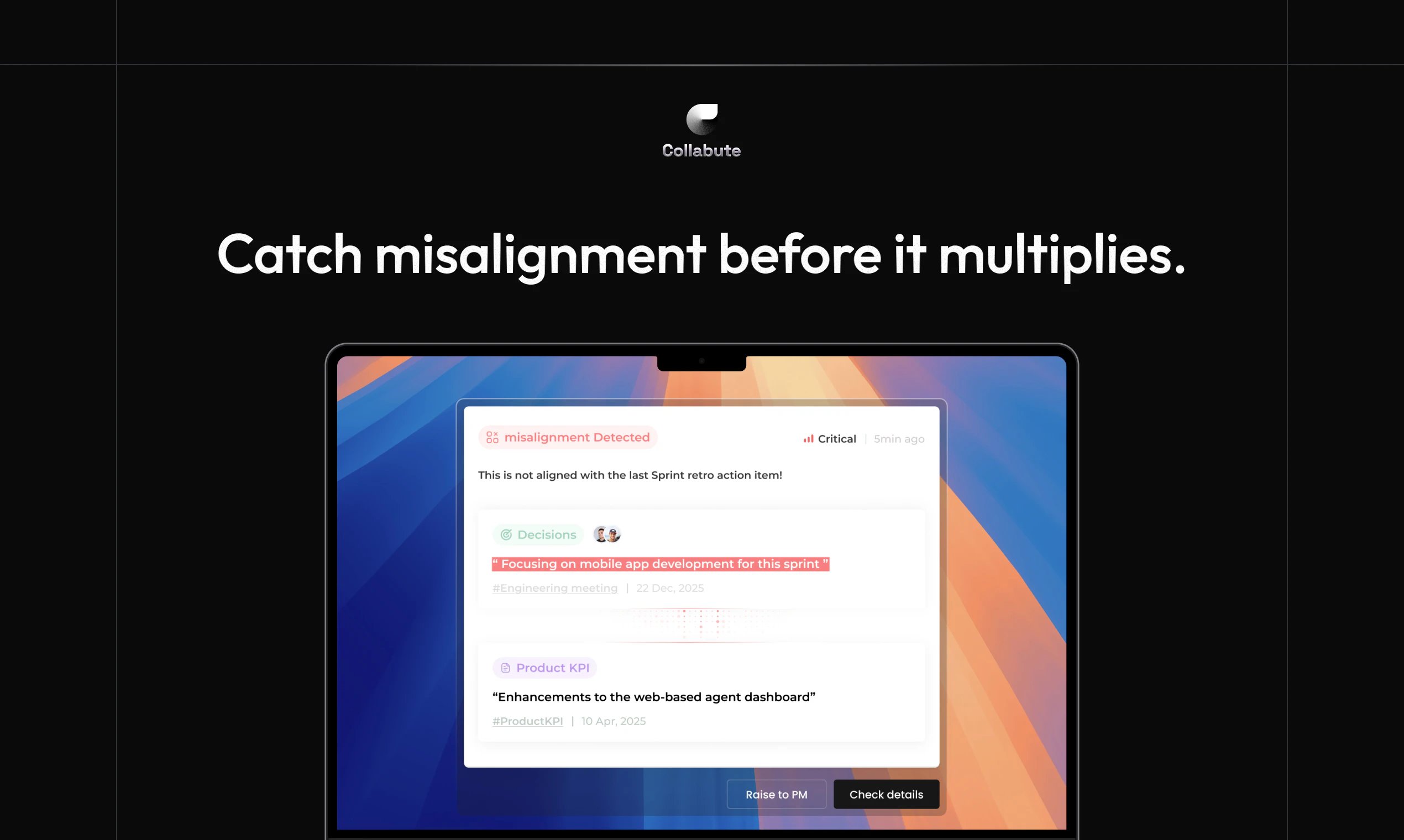

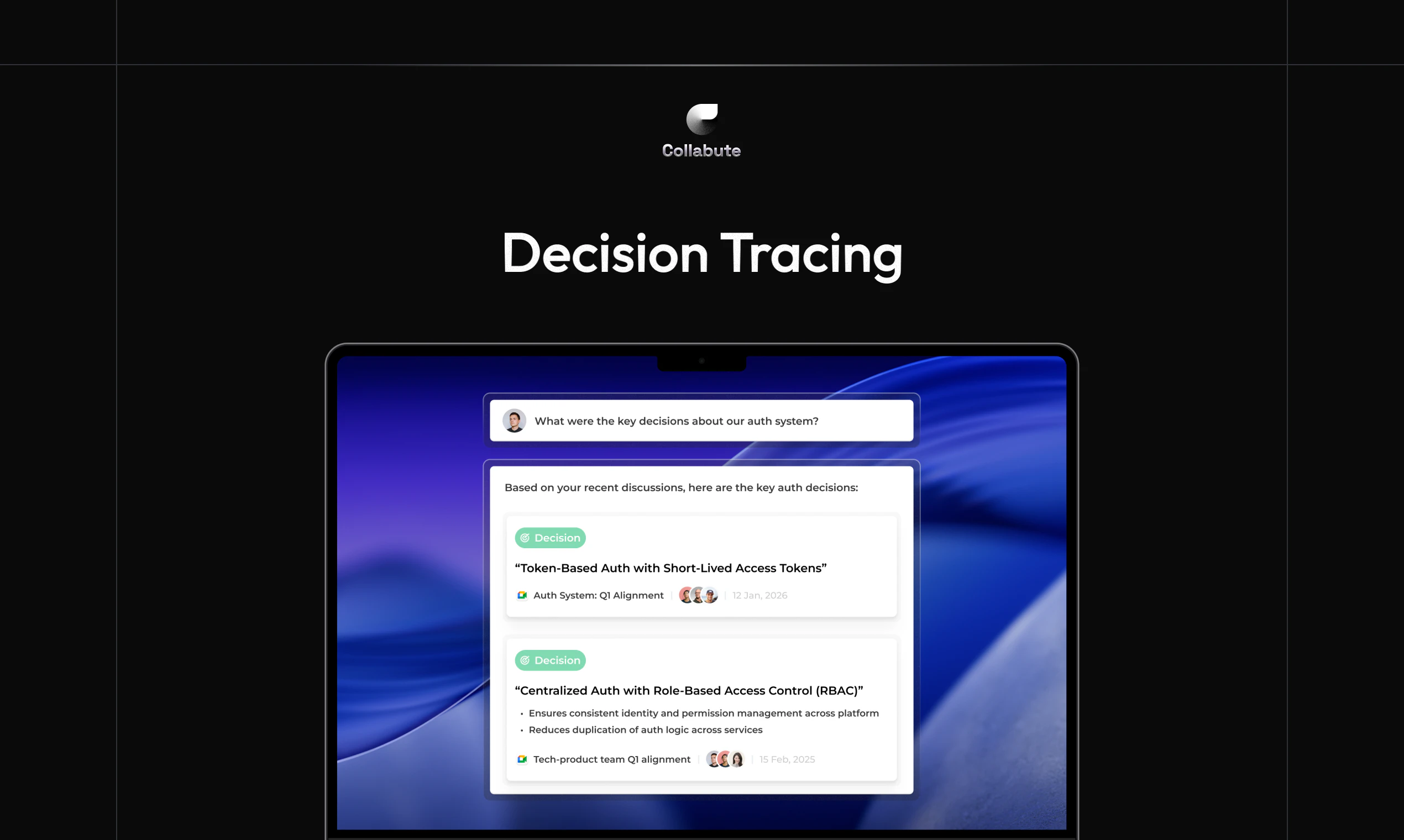

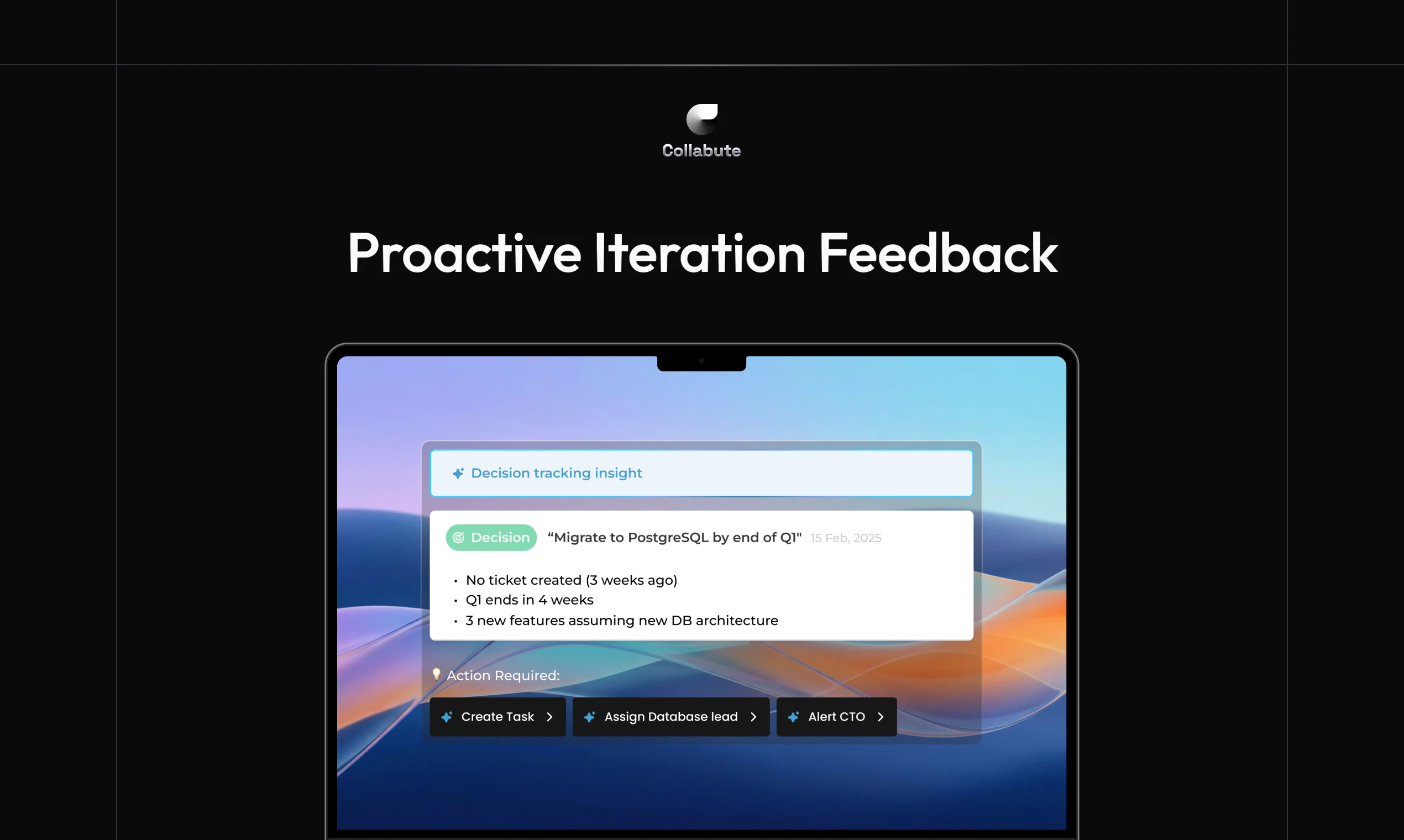

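One-line summary: Collabute is a proactive AI teammate for product teams. Embedded in existing workflows (such as meetings and Slack), it automatically captures conversational context and turns it into structured tasks, decision records, and live workflows, addressing the loss of key information and the disconnect from action that teams face when collaborating across meetings and tools.

Productivity

Meetings

Artificial Intelligence

Team collaboration AI

Product management tools

Context automation

Meeting notes conversion

Workflow integration

Asynchronous collaboration

Smart task creation

Decision tracking

SaaS

Productivity tools

User comment summary: The developer explains that the product set out to solve a "memory problem". One user asks what team size it suits, and the developer replies that the value usually shows in teams of 3-5 people or more where context accumulates quickly. For now the comments are mostly product introduction and initial Q&A, with no in-depth usage feedback or sharp criticism yet.

AI Sharp Take

Collabute targets a real and common problem of "organizational entropy": information naturally dissipates as it is communicated. Its core value proposition is not simple meeting transcription; it tries to become the "nerve center" of the workflow and automate the loop from "said" to "done". That is a bigger ambition than a plain note-taking tool.

Its success faces two tests, however. The first is the technical bottleneck of "understanding": accurately identifying intent in unstructured natural-language conversation, extracting the elements of a task, and assigning it requires very strong contextual understanding and domain adaptation. Without that, it will generate a large number of "junk tasks" that need manual correction and add to the workload. The second is acceptance at the level of organizational culture: turning team conversations into trackable actions in real time may raise concerns about "surveillance" or make spontaneous discussion more guarded. The product's emphasis on "sitting inside existing workflows" is wise, but being seamless, unobtrusive, and useful all at once is the key to the experience.

Its current positioning (product teams) looks precise, but the real pain may be concentrated in medium and large organizations with complex cross-functional collaboration. For small teams, communication costs are already low and the benefit of "automated management" may not be obvious, as the question in the comments suggests. The product's real moat may lie in whether it can build a model smart enough to understand the specific context of product development and form an action network tightly bound to mainstream tools such as Jira and Linear. It is not creating a new process; it is coating old processes with a layer of "automation". That is both its selling point and its limit: its ultimate value depends on the ecosystem it can connect to and the depth of business understanding it can reach.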

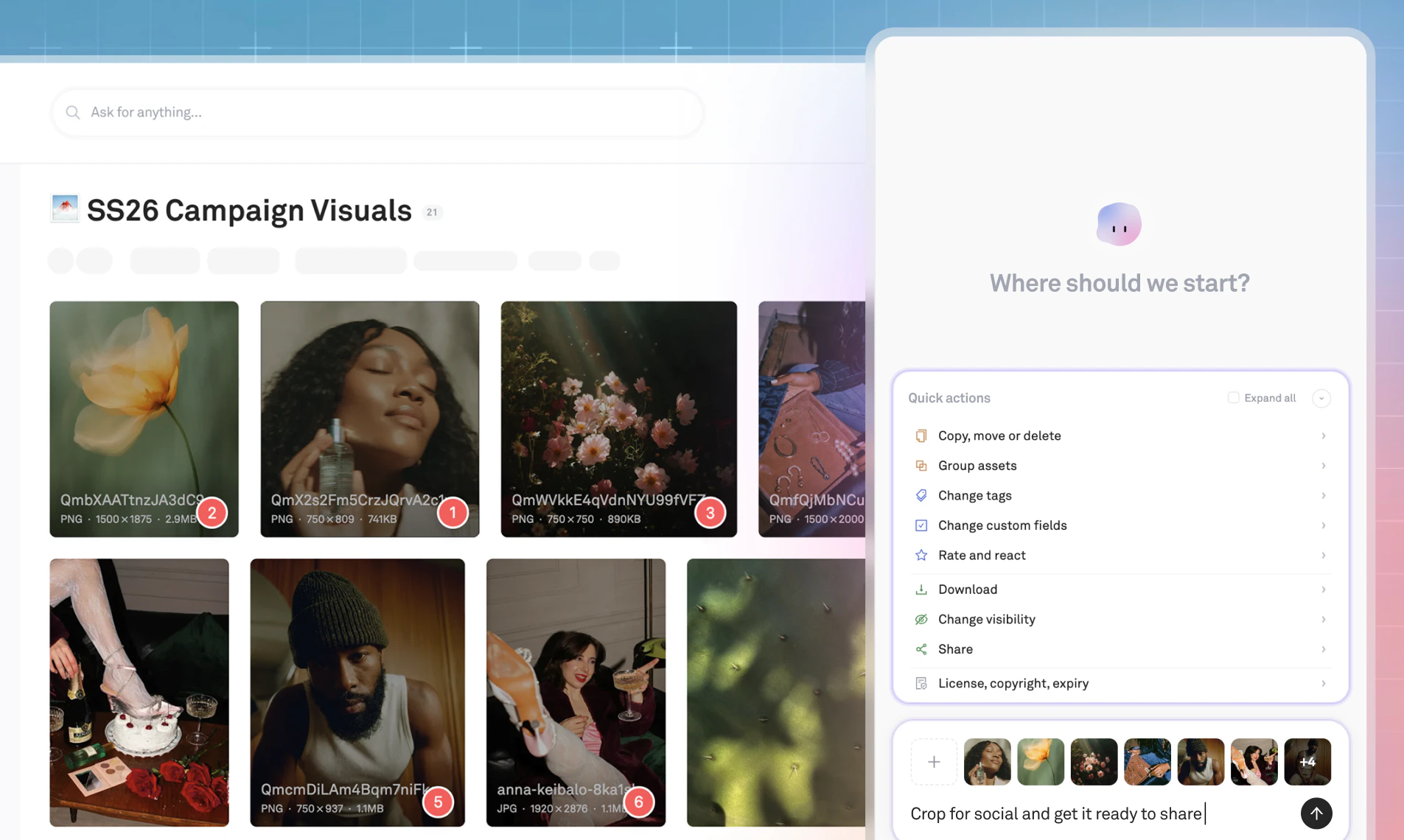

Hey Product Hunt 👋

We've been here before. In 2021, you made Fathom #1 Product of the Day. In 2023, Fathom 2.0. Today we're back with something that feels genuinely different and we think you'll feel it too.

Two years ago, a great AI notetaker meant one solid summary when the call ended. That was the bar. We cleared it, and kept building.

Here's what's new:

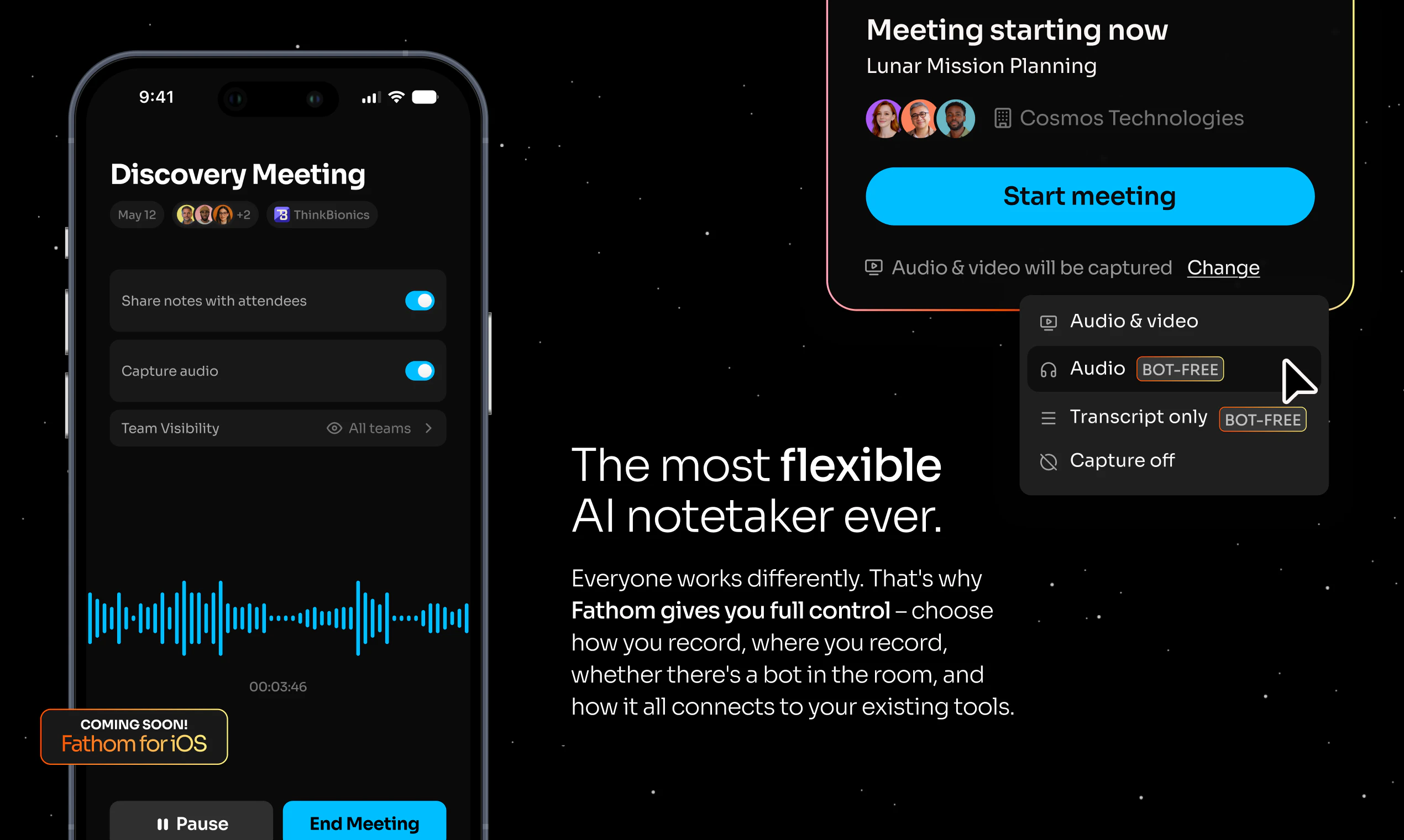

🎙️ Bot-free capture - your call, your way The #1 feedback we've heard for years: "the bot joining my call is awkward." We heard you.

Choose meeting by meeting: bot-free transcript-only or audio capture (my personal favorite), or full video with the bot

All three deliver the same high-quality summaries, action items, and full speaker attribution

Mute-detection built in - we respect your privacy

Works in Slack Huddles too, so your quick syncs are as well-documented as your scheduled calls

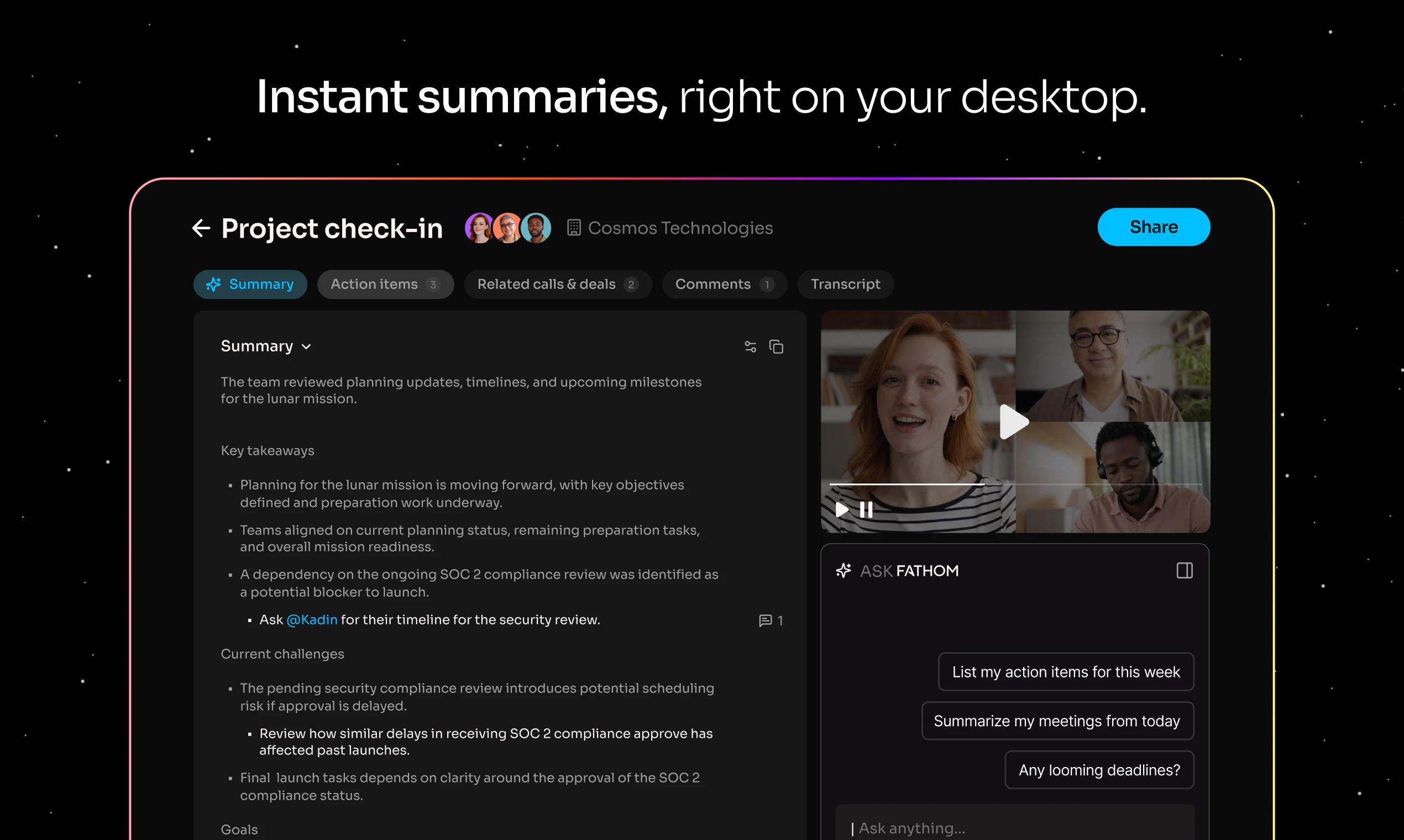

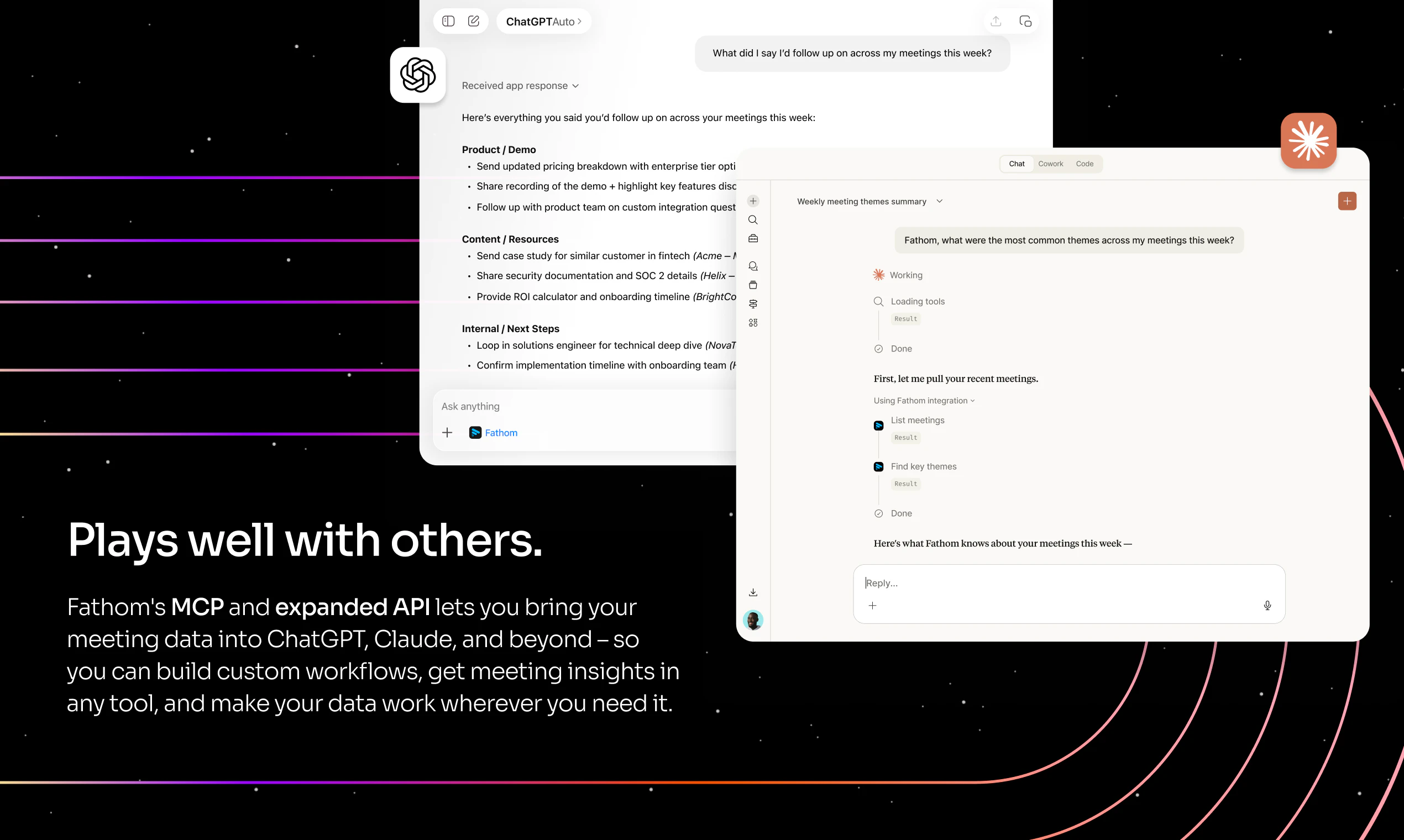

🤖 Claude + ChatGPT integrations Via our public API and MCP integrations, your meeting intelligence now lives inside the tools you already use.

Connect Fathom to Claude or ChatGPT

Turn any meeting into a draft email, QBR, brief, or plan - no copy-pasting

Available on all plans, Free through Business

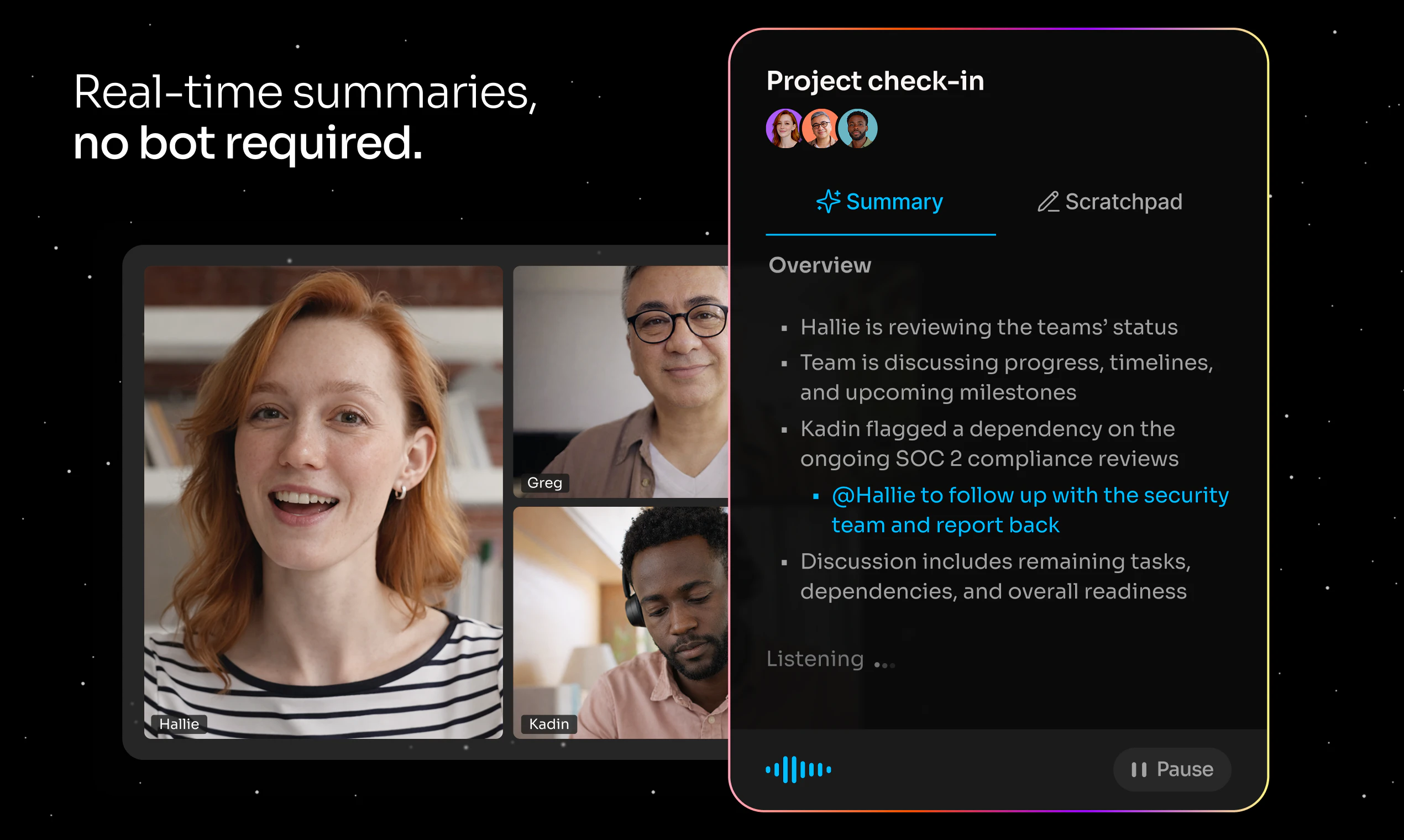

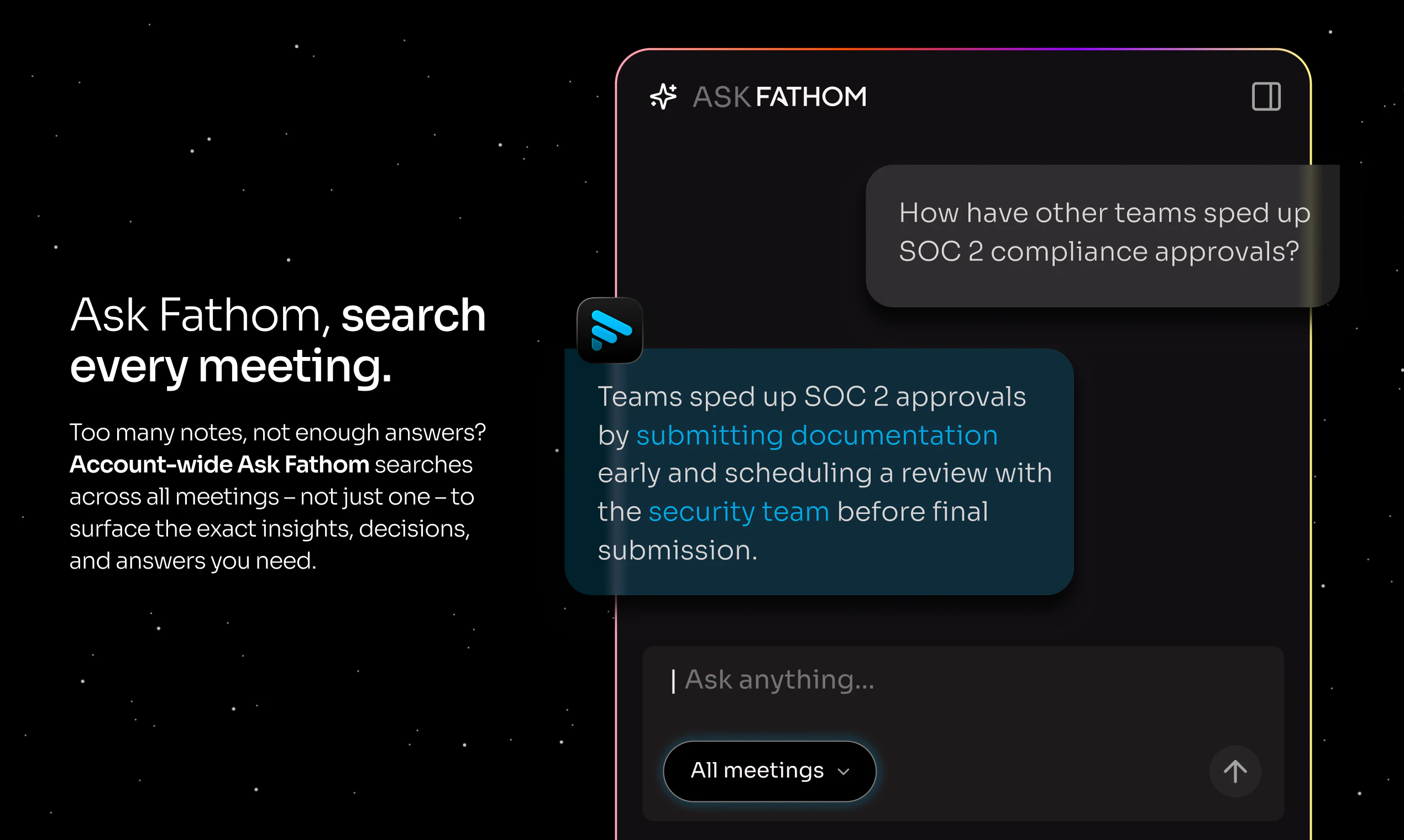

🔍 Ask Fathom - now across your entire meeting history Ask Fathom used to answer questions about one meeting at a time. Now it answers questions about all of them.

Ask "What are customers saying about our pricing?" or "What action items came out of my meetings last week?"

Get AI-generated answers with citations linked to the exact transcript moment

Your meeting history is now a searchable knowledge base

🖥️ Completely redesigned desktop experience

Live summaries appear during your meetings, not just after

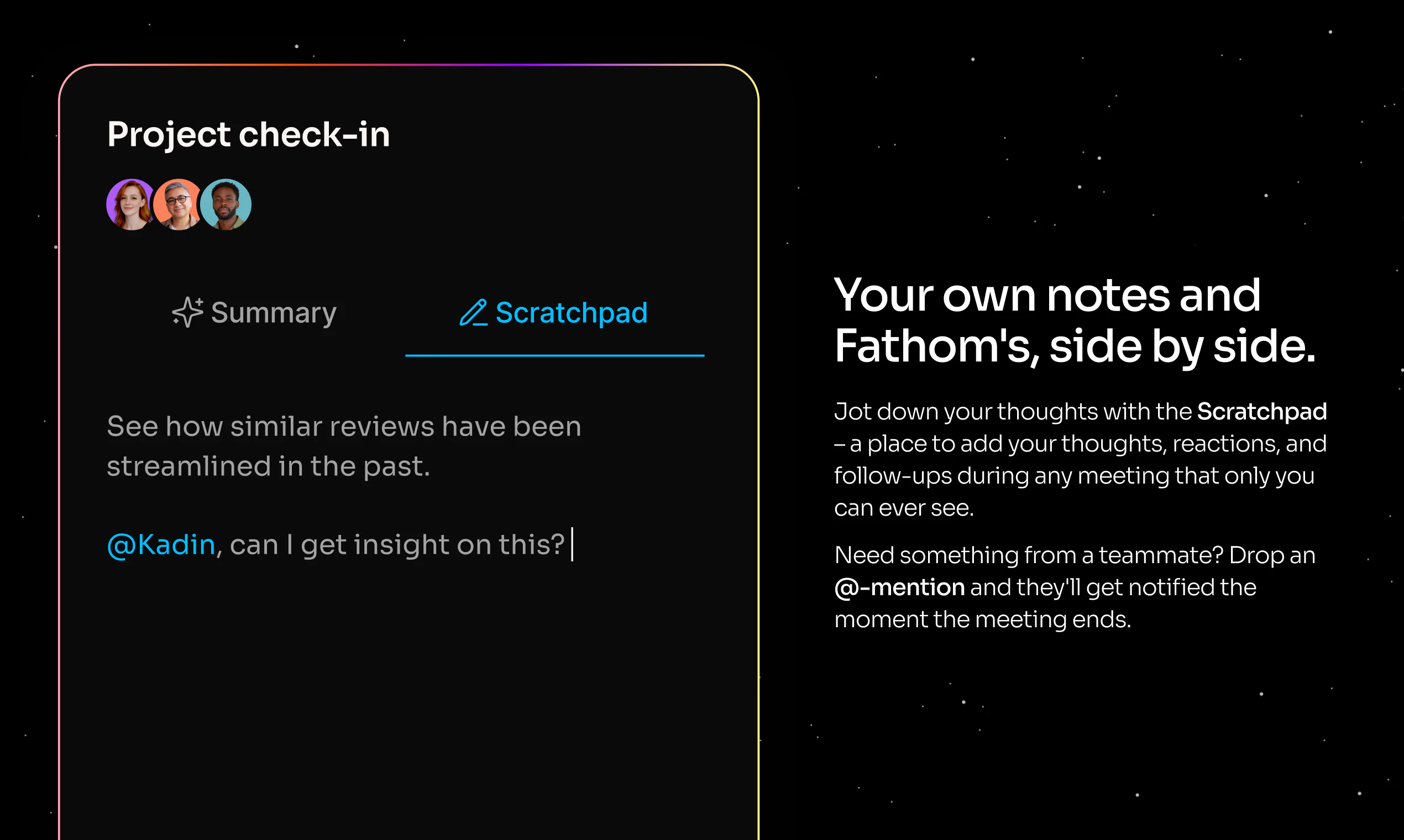

Built-in scratchpad folds your personal notes directly into your post-meeting summary

Less tab-switching. More focus.

📱 iOS app - coming very soon Not every important conversation happens on Zoom.

Capture in-person conversations on the go

Review meetings and access your full history anywhere

Pre-download on the App Store shortly

Why this matters:

"The real value of meetings isn't the notes, it's the knowledge inside them." That's been our north star since day one.

We've always had two customers: the person in the meeting who wants to be fully present, and the leader who needs visibility across their team. This update serves both in genuinely new ways.

And all of this is available for free - because it's your call data, and you should make the most of it.

Drop a question below. We'll be in the comments all day. 🚀

- Rich & the Fathom team

Fathom, you beautiful beautiful people.

I started using Fathom two years ago and it completely transformed how I coach.

I'm a Career Coach with ADHD. Taking notes on client calls was always a struggle. Things got dropped. It pulled me out of the conversation. Fathom fixed that. I could stay fully present while my clients got notes, action items, and full call recordings they could actually revisit instead of relying on memory.

I also use AI to help clients quantify their career achievements. The transcriptions are everything for that. I can stay in the moment, stay engaged, and still capture every detail I need later.

But the gap was always timing.

I couldn't access any of that until after the call ended. So I ended up running Otter alongside Fathom just to get real-time transcription I could feed into AI mid-call. Two tools doing one job. Not ideal, but it was the only way to surface insights while the conversation was still happening.

And phone calls? In-person meetings? Those weren't part of the workflow at all.

Now all of that is solved.

Live transcription during the call. Phone and in-person conversations captured. Everything I was stitching together with workarounds, built right in.

Congrats team. This is exactly what I needed!

Question: It looks like the iPhone App is a separate paid app. Is there a way to access it as an annual Fathom Subscriber already?

I've tried a ton of similar products—we even temporarily switched to Zoom's "free" features—but we ended up coming right back to Fathom. It is hands down the best solution on the market. By far. With their new MCP server, it’s incredibly easy to integrate meeting content directly into our Customer Success workflows.

This is a great example of using AI to drive productivity, for real.

Kudos to @richard_white and the rest of the @Fathom team!

Hey Woww,

Fathom is a must-have tool, I’ve been using it for about a year now. I remember I was the only one using it at the company, and now all EAs and even some C-levels are using it.

Why? Because it records, summarizes, and the best part, you can ask it for exactly what you need from each meeting.

At the end of the day, I do a quick audit and review things like:

What tasks were assigned to me?

What’s important or what did I highlight?

I also pair it with another AI tool that helps me summarize my entire day in about 10 minutes 🙂

(FINALLY!) I have my OpenClaw connected to Fathom for transcripts, but have been frustrated at having to use Fathom + another note taker for non-zoom/Google meetings. Super happy I can bring this down to one tool that I like.

As someone who was a top 1% Gong user (per Gong), Fathom checks all the boxes and then some.

Well, most. Most of the boxes.

There are a few oddities (not being able to use the Zoom app without launching Fathom is one example), but this is the easiest and lightest call recording tool I've used in a while.

Fun fact: we unsubscribed and re-subscribed once they opened their API.

Highly recommended.

I love Fathom. I have been using it for several years, and I have yet to find anything that compares. Today I went back to recording from 2024, went to "Ask Fathom" about a protocol I had done, and it was outlined in detail perfectly.

The customer service is fast and stays with you until you are clear.

I can't say enough about it.

As a life coach who does most of his work on Zoom, Fathom is absolutely indispensable! Not only does it record my sessions effortlessly, it provides me with huge value after the call in the form of transcripts, summaries, action lists (great for remembering homework I gave out!), and even the ability to ask Fathom questions for insights about individual sessions, all the sessions I've had with an individual client, or about patterns across the board, and so much more. Hard to imagine working without it!

I have been using Fathom for several years, and I've also left Fathom, trying to find a better solution. And I'm back! My favorite part is the Asana integration, which automatically pulls action items and sends them to Asana for me to follow up on.

We've tested a lot of call recording and note-taking tools over the years as a high-volume sales team, and nothing has come close to Fathom.

At this point, it's fully embedded into how our team operates. Every AE uses it on every call, and it has genuinely changed the way we run discovery, training, and follow-ups.

A few things that stand out for us:

First, the automatic summaries are incredibly accurate. After a call, we immediately get a clean breakdown of key points, action items, and next steps. This alone saves our team hours every week and keeps deals moving without anything slipping through the cracks.

Second, the ability to instantly pull clips is a game changer. Whether it's a strong customer quote, an objection, or a key moment in a discovery call, we can grab that snippet and share it internally in seconds. This has been huge for coaching, deal reviews, and aligning our team.

Third, search and transcripts are fast and reliable. We regularly go back into past calls to find specific details, confirm requirements, or prep for follow-ups. No more digging through notes or trying to remember what was said.

From a sales leadership perspective, it's also been invaluable for training. New team members ramp faster because they can watch real calls, see what “good” looks like, and learn from actual conversations instead of theory.

It also keeps our entire team aligned. Everyone has visibility into conversations, which makes collaboration between sales, solutions, and leadership much smoother.

Bottom line, this isn't just a recording tool for us. It's become a core part of our sales workflow.

We absolutely love it and highly recommend it to any team that runs a serious volume of calls and wants better visibility, better coaching, and better execution.

Pretty good. I'm surprised how many tidbits it can pick up. Gone are the days of the presenter/note-taker problem; as you know, it's nearly impossible to do both WELL.

We have been using Fathom since the very beginning. We are champions for the product and mention it as much as we can. We have also tested a few other options, but always find ourselves coming back to Fathom. It has been reliable and saved our butts on soooooo many meeting details. Saving the link to the meetings in our project management platform allows us to kick off the meeting, and assign the project in no time. We love Fathom and promote it on our socials and podcast all the time. Thanks FATHOM!!

I use Fathom every single day and have no idea what I would do without it. It allows us to capture insights that inform everything from product and pricing to customer experience and context.

I've been waiting for these changes. So excited to check them out!

I've really enjoyed using it over the last couple of years, first with teammates at work, and then eventually found some use cases in my personal life. I love having a catalog of video recordings to look back on and track trends, conversations, little moments of joy with friends and colleagues alike. I'm excited about the MCP support that's coming out that will make it even easier to search using natural language. Excited to try out 3.0!

The bot-free approach is a huge deal. In finance, meeting context is everything — deal terms get discussed verbally, and if the notes are off, downstream decisions suffer. I host a podcast on financial modelling (ModeLoop Podcast on Spotify) and accurate transcription of technical finance discussions is genuinely hard. The Claude integration is interesting — curious whether it handles domain-specific terminology like DSCR, IRR waterfalls, and covenant structures well out of the box.

Regular user here. Love the product, transcription and UX is on point. Stoked to see them officially launch here.

Wow, would like to have a try in our next meeting. Congrats on the launch!

I was an early Fathom user: it's been keeping track of my meetings for years.

The fact that I can now pull every meeting I've ever had into Claude or ChatGPT and instantly execute on whatever I discussed in that meeting—with the whole history of everything that came before— is just insane.

What a world we live in.

Love, love, love Fathom - it saves so much of my brain power by recording all my meetings. I never have to look at my nonsensical scribble ever again! Great product.

I've been a Fathom customer for many years and these are the top features we've wanted. Awesome launch!

I've been using Fathom for three years and I have notes and transcripts from 500 meetings stored in the system. I've already been impressed with the account wide built in LLM chatbot and its ability to synthesize useful insights and summarize important points from across years worth of meetings.

I love Fathom!! I use it for all my meetings. The summary and action items sent out to everyone on the call keeps us all on task!

I love it so much! When Attio integration?

Long time user of Fathom here. There are several things about the new update that I find are a downgrade and can't turn off ...

1. A new window pops up when I start a meeting and gives me notes that I don't need.

2. Another window now pops up after the meeting and gets in the way of my workflow.

I understand these can feel like a "value add" but they're really noise in my experience and degrade the experience overall. At minimum I'd love a way to turn them off.

This is the tool I consistently use the most. One of those things that makes you wonder how you survived before. Super stoked to try out the new features.

Fathom is the best!

I love Fathom, and I love this new update.

Amazing product, need to try the bot-free capture.

Everyone wants AI without seeing AI bots.

Do you have any upcoming updates planned for the API? I’m aware of the webhooks, but I’m also interested in whether there’s a way to pull meeting notes data directly.

Been a customer now for two+ years. It's an amazing product, and really excited about the new capabilities!