PH Hot List | 2026-04-07

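One-line summary: NovaVoice is a desktop voice operating system. Through context-aware dictation, cross-app voice commands, and a built-in AI assistant, it lets users work hands-free across writing, coding, and communication tasks without switching apps or typing, addressing two core pain points: frequent switching that breaks focus, and slow typing.

Productivity

Writing

Artificial Intelligence

Voice operating system

Voice dictation

AI assistant

Cross-app control

Productivity tool

Desktop efficiency

Voice commands

Smart transcription

Multilingual support

Accessibility aid

User comment summary: Users praise its multilingual support (for example Bengali) and the quality of the AI assistant, but note that transcription speed needs to improve. Their main concerns are differentiation from competitors (such as VoiceOS), privacy and data storage, safeguards against accidental actions, breadth of app integrations, and plans for iOS support. The team responded actively, stressing safety over speed, local data processing, and continuous improvement.

AI Sharp Take

NovaVoice's ambition is not to be a faster dictation tool but to become the "voice layer" of the desktop. Its real value lies in upgrading voice from a single text-input channel into a **system-level interaction protocol** that connects user intent, local applications, and AI capabilities.

The product sensibly avoids competing head-on with Siri or Cortana on general intelligence and focuses instead on productivity, a concrete, high-frequency, and acutely painful scenario. Its claimed "context-aware writing" and "cross-app voice commands" essentially stitch together the understanding of a large language model with the execution capabilities of the operating system. When a user says "ask Maria on WhatsApp whether the design is ready," it has to understand entity relationships and app logic and then carry out a multi-step operation. That goes well beyond dictation and into the deep end of voice automation.

Yet this is also its greatest risk and challenge. The comments show that users are instinctively wary about privacy (whether data is uploaded), safety (protection against accidental actions), and reliability (execution of complex commands). The team's cautious approach (safety over speed, no broad OAuth requests, user confirmation for critical actions) is commendable, but it may also cap how fluid the experience can be. This exposes a core tension: the appeal of voice interaction lies in being invisible and fluid, but once cross-app actions are involved, safety boundaries inevitably introduce confirmation steps and a sense of interruption.

In addition, its business model and ecosystem strategy remain unclear. As a tool that depends heavily on the APIs of other applications, the depth and breadth of its features are constrained by how open the external ecosystem is. The current limited integration list (such as WhatsApp and Gmail) looks more like a technical demo. To become a true "OS," it needs to build a strong developer ecosystem or strike partnerships with major platforms, which is no easy task for a startup team.

In sum, NovaVoice points in a highly forward-looking direction: using voice as the glue that breaks down the data and feature silos of desktop applications. But it faces a hard fight: finding a fine balance between safety and fluidity, between integration depth and development breadth, and between the ideal experience and technical reality. It may not replace the keyboard any time soon, but it could give specific scenarios (such as intensive office work and accessibility) and early adopters an exciting preview of the future of work.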

一句话介绍:Lessie AI是一款AI驱动的智能人脉搜索与连接代理,通过自然语言描述目标人群,自动完成跨平台精准查找、评估并执行个性化触达,在营销拓客、人才招聘、网红合作等场景下,解决了用户手动跨平台搜索、筛选效率低下、触达流程割裂的核心痛点。

Sales

Artificial Intelligence

Marketing automation

AI人脉搜索

智能拓客

自动化外联

招聘寻源

网红营销

AI智能体

销售自动化

开源技能

精准匹配

B2B营销

用户评论摘要:用户肯定其从“搜索到连接”的一体化流程和匹配精准度,尤其对开源技能、避免垃圾邮件、CRM集成、数据来源及小众领域效果提出具体疑问。团队积极回应,强调了基于证据的匹配、可控的个性化触达及未来开发方向。

AI 锐评

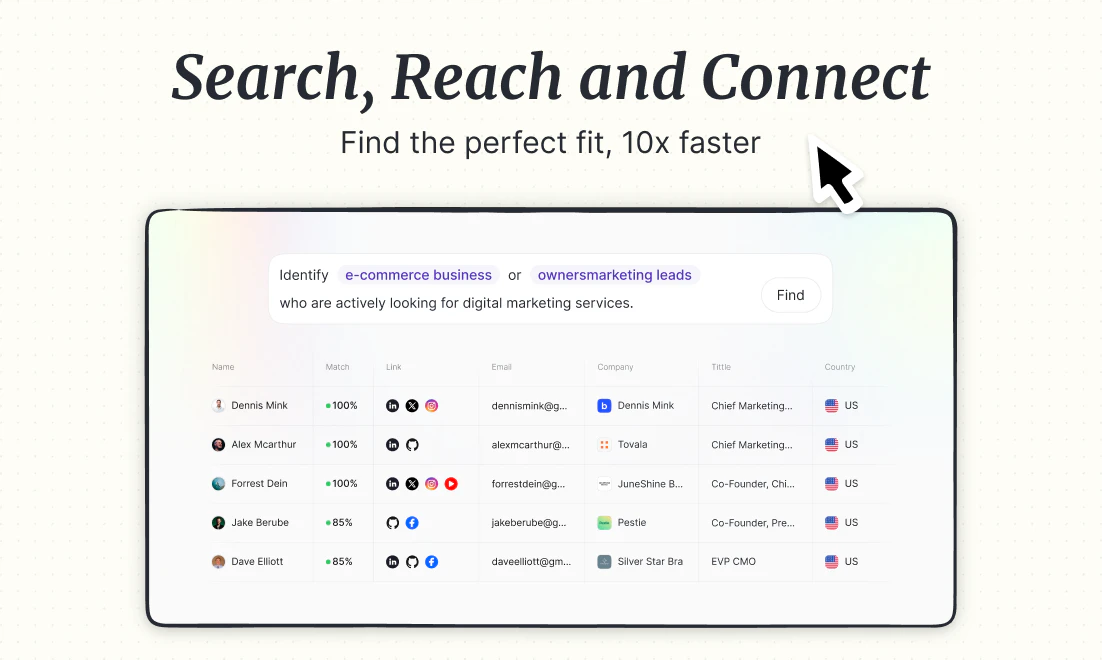

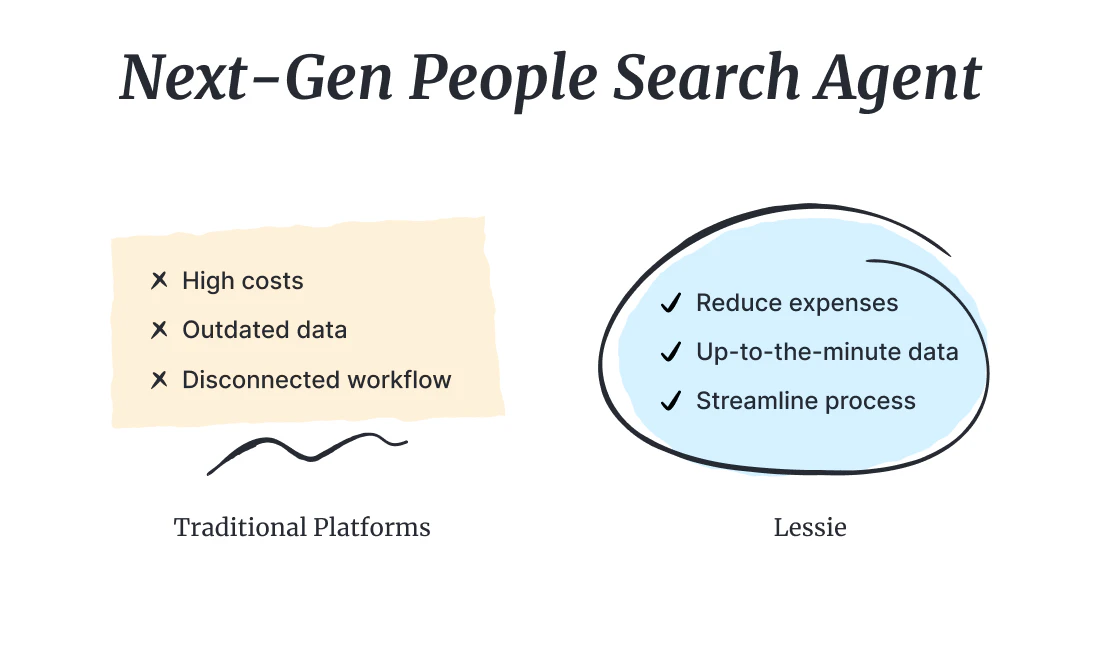

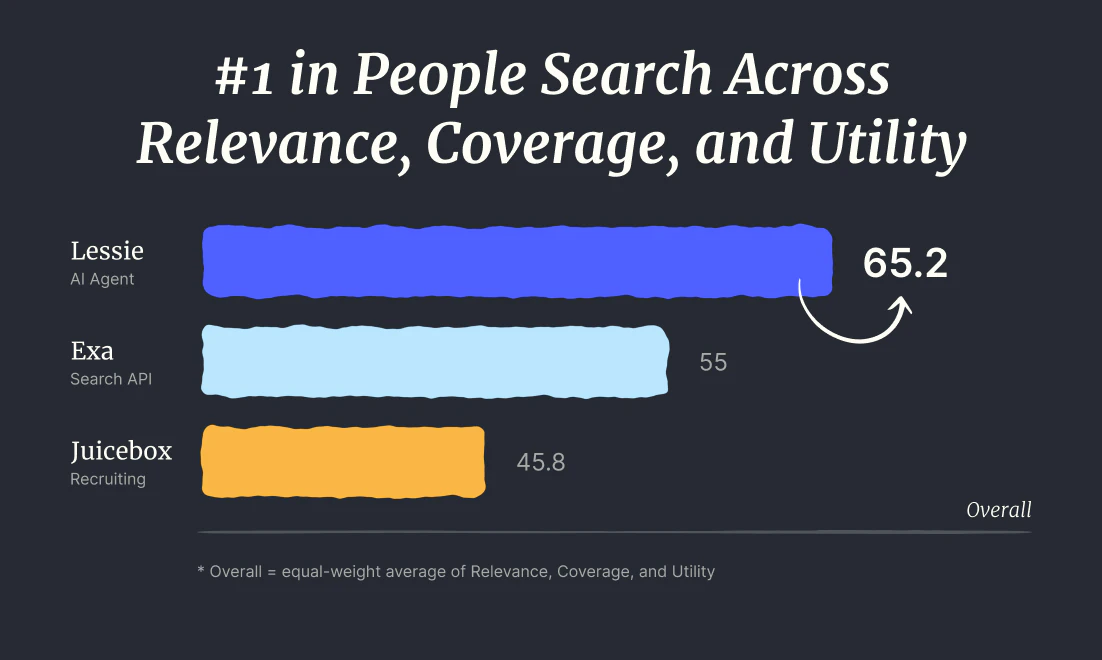

Lessie AI的亮相,与其说是推出了一个新工具,不如说是对“人脉搜索”这个陈旧品类进行了一次“AI原生”的重构。它的野心不在于成为另一个RocketReach或LinkedIn Sales Navigator的替代品,而在于试图用AI智能体彻底接管从“意图”到“连接”的完整工作流。

其宣称的“State-of-the-Art”匹配质量是核心壁垒,也是最大风险点。通过“描述而非筛选”,产品将理解模糊意图、进行跨网络推理和身份合成的重任完全交给了AI。这跳出了传统数据库的范式,理论上能发现隐藏关联,但“理解”的偏差和“合成”的幻觉可能带来精准度上的不确定性。团队开源核心技能模块是步高棋,既吸引了开发者生态构建护城河,也巧妙地将数据合规、基础设施成本等复杂问题部分转移,转而聚焦于智能体“大脑”的培育。

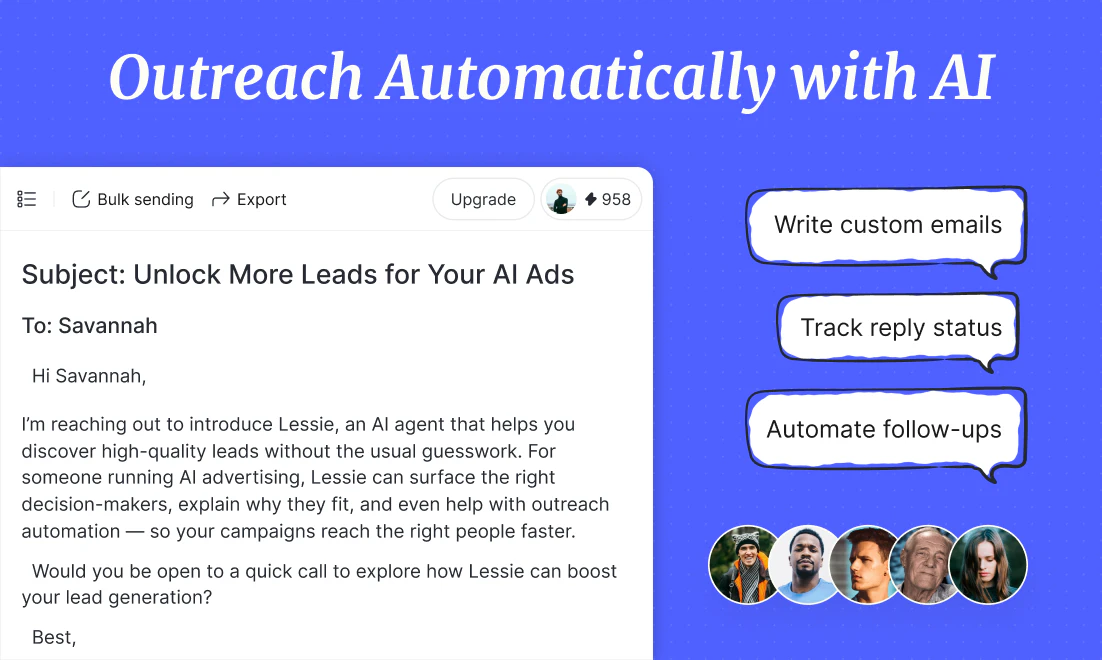

然而,其最锋利也最危险的功能是“自动化个性化触达与跟进”。这直接触碰了商业沟通中“效率”与“骚扰”的红线。尽管团队强调“Human-in-the-loop”和基于深度匹配的上下文,但在规模化应用中,如何保证每一封AI邮件都不滑向高级垃圾邮件,是产品伦理和实用性的双重考验。它可能成为销售团队的力量倍增器,也可能进一步污染本已不堪重负的收件箱。

本质上,Lessie AI贩卖的是一种“确定性的幻觉”。在信息过载的时代,它承诺将寻找“对的人”这个充满偶然性和劳动密集的过程,转化为一个可描述、可执行、可衡量的自动化任务。它的真正价值不在于更快地生成列表,而在于重新定义“寻找”这个动作——从被动筛选到主动告知,从工具使用到委托代理。成败关键在于,其AI“判断”的可靠性,能否真正支撑起用户委托的信任,并守住规模化外联的伦理边界。

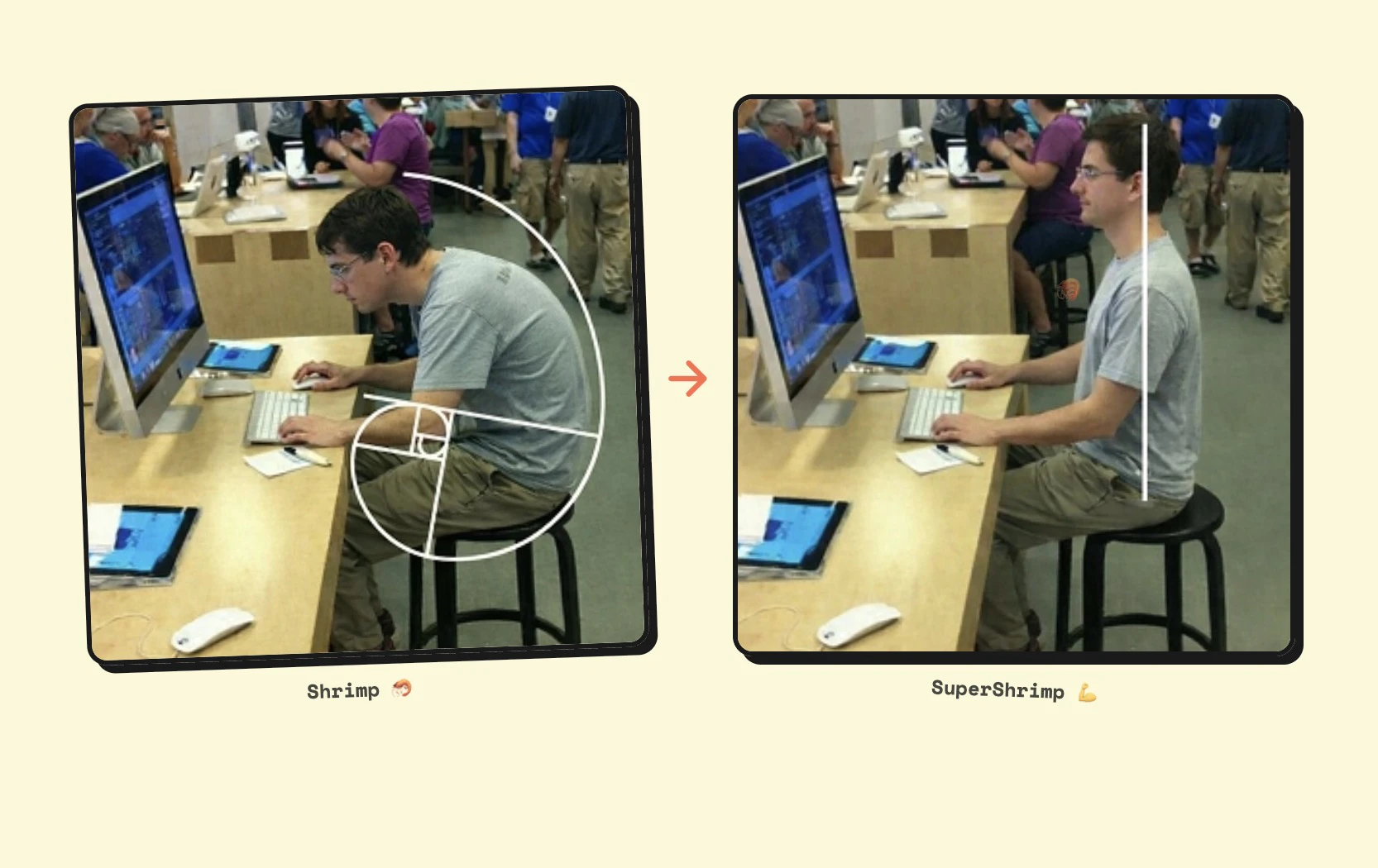

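One-line summary: A desktop app that uses the computer's camera and AI to monitor and correct the user's sitting posture in real time, helping people who work long hours at a desk improve poor posture and prevent health problems.

Health & Fitness

Health tech

Posture correction

AI computer vision

Productivity tool

Remote work

Mac app

Local processing

Gamification

Real-time feedback

No hardware required

User comment summary: Users broadly welcome the idea and the no-extra-hardware approach, and enjoy the gamification (the evolving shrimp). The main questions concern multi-monitor support, privacy and security (an always-on camera), detection accuracy, and which OS versions are supported.

AI Sharp Take

SuperShrimp targets a widespread but often overlooked pain point: posture management for office workers. Its core value lies not in a deep technical breakthrough but in a minimal, clever, low-cost way of turning the typically idle webcam into a health-intervention node that monitors passively and reminds actively. The product wisely avoids requiring extra hardware (such as wearables), which lowers the barrier to trying it and is its biggest growth lever.

Its challenges are just as clear. First, the technical ceiling is evident: posture assessment from a single monocular camera is of doubtful accuracy and reliability under complex sitting positions, changing light, or varied clothing, as comments from professional users already hint. Second, the privacy concern is hard to dispel: despite the emphasis on local processing, requiring the camera to stay on is a psychological and security hurdle for users, especially enterprise users. Finally, retention is uncertain: the initial gamified feedback (the evolving shrimp) is clever, but whether that novelty turns into lasting habit formation will decide whether it graduates from a fun gadget to an essential health assistant.

At its core, it is an excellent "awareness" tool. It may not deliver precise spinal biomechanics data as professional medical devices do, but it may well succeed at its central job: using immediate, unobtrusive reminders to keep pulling users' attention back to their posture, breaking the unconscious slide into a shrimp-like slouch. It is better positioned as a lightweight entry point into the health-management ecosystem than as a complete solution. If it keeps improving the stability of basic detection and deepens its value around "data insights" (such as daily posture reports) and "ecosystem links" (integration with health apps and office software), its prospects will be more secure. For now it is a clever MVP with strong viral potential, but moving from a passing hit to a lasting one will require sustained work on practical depth.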

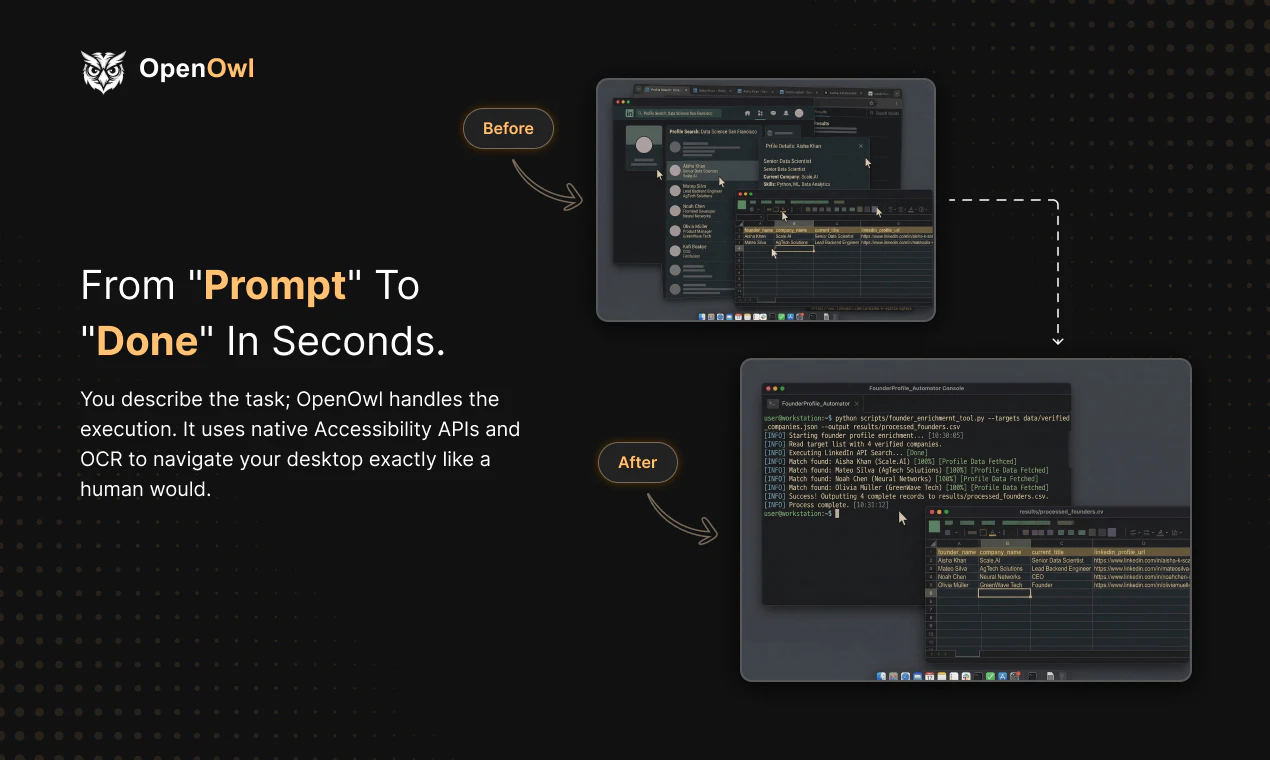

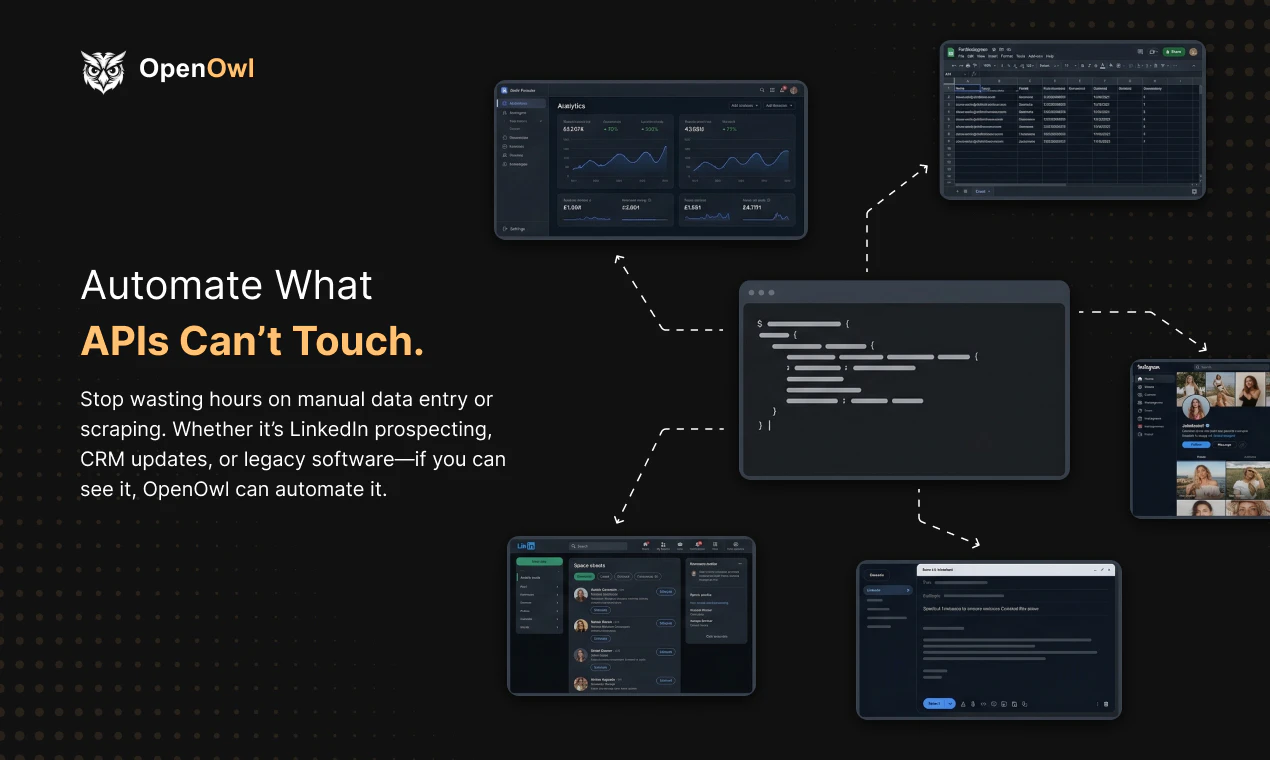

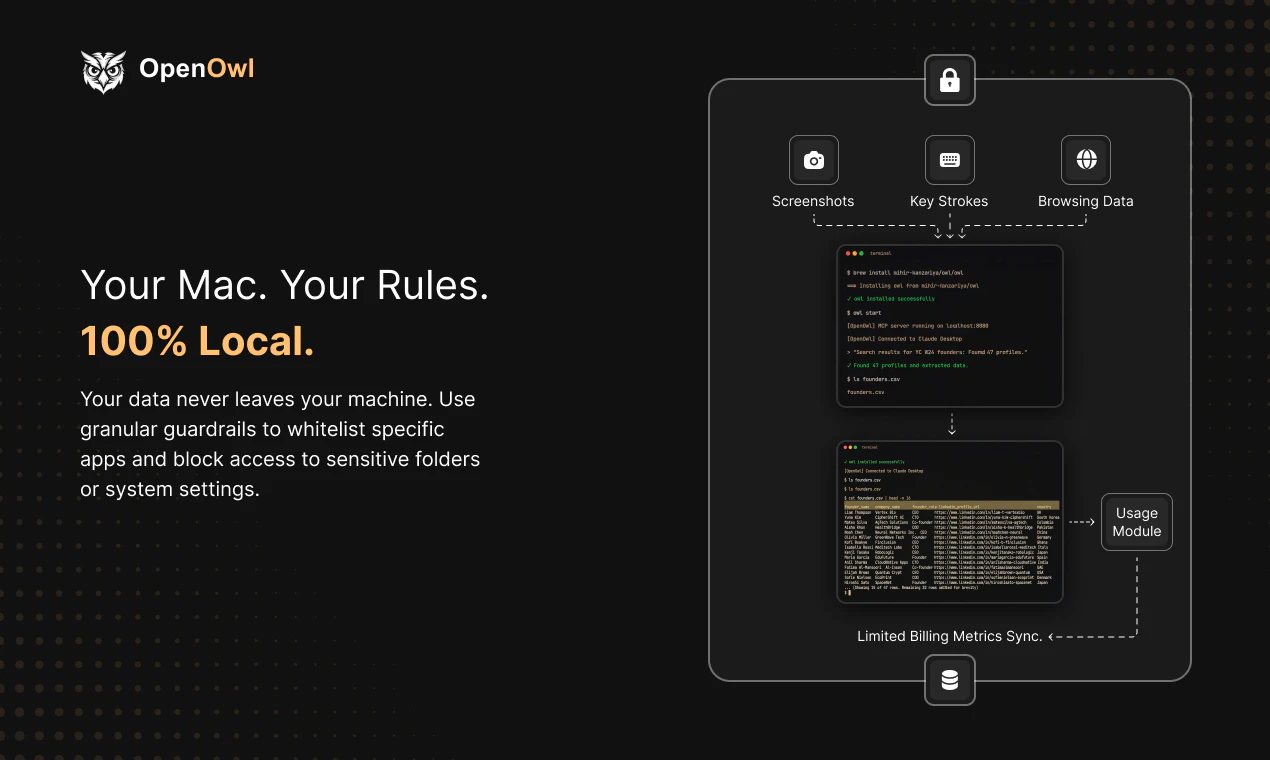

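One-line summary: OpenOwl is a macOS desktop automation agent. By letting an AI assistant "see" the screen and simulate human clicks and typing, it removes the tedium of manually carrying out AI-generated instructions in scenarios with no API support (such as LinkedIn prospecting or Shopify admin updates).

Productivity

Marketing

Artificial Intelligence

Desktop automation

AI agent

RPA

macOS tool

Runs locally

Human-AI collaboration

Browser automation

No-code automation

MCP ecosystem

Productivity tool

User comment summary: Users recognize its core value in bridging the "gap between cognition and execution" and appreciate the privacy protection of running locally. The main questions concern Windows/Android support, its ability to cope with websites' anti-scraping mechanisms, and how it differs from Claude's native features or other automation tools (such as Playwright).

AI Sharp Take

OpenOwl's debut is less a new tool than a precise demolition of the current boundary of AI applications. It neatly avoids the trap of reinventing the wheel: rather than having AI control the system internals directly, it acts as "hands and eyes" under the MCP protocol, grafting the planning ability of large models onto traditional UI automation. What is truly disruptive is its target, the "API desert": commercial software and web back ends that are outdated, closed, or designed to refuse open interfaces. In those settings even the most advanced AI could previously act only as an armchair strategist.

Yet its underlying logic of "simulating human operation" is both its strength and its Achilles' heel. The strength is remarkable compatibility and a lower barrier to entry; the weakness is stability and scaling. Questions in the comments about "anti-scraping mechanisms" and "handling partial failures" go to the heart of the matter: how robust is it against dynamic CAPTCHAs, unexpected pop-ups, or network fluctuations? Its claim to "observe and react like a human" depends heavily on the real-time judgment and cost of the underlying large model, and the free quota of 50 uses per day suggests its operations are not free to run. It also moves automation from "preset flows" to "real-time decisions," which means errors may escalate from a simple interrupted step to a less controllable drift in logic.

At its core, OpenOwl represents a pragmatic AI engineering mindset: in an imperfect (API-less) real world, find the most efficient compromise. It may not be the final answer, but it clearly marks a key hurdle the next generation of AI agents must clear: AI must not only think but also execute safely and reliably in messy real-world digital environments. Its success will depend on finding the best balance among flexibility, stability, and cost, not merely on being a flashy demo.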

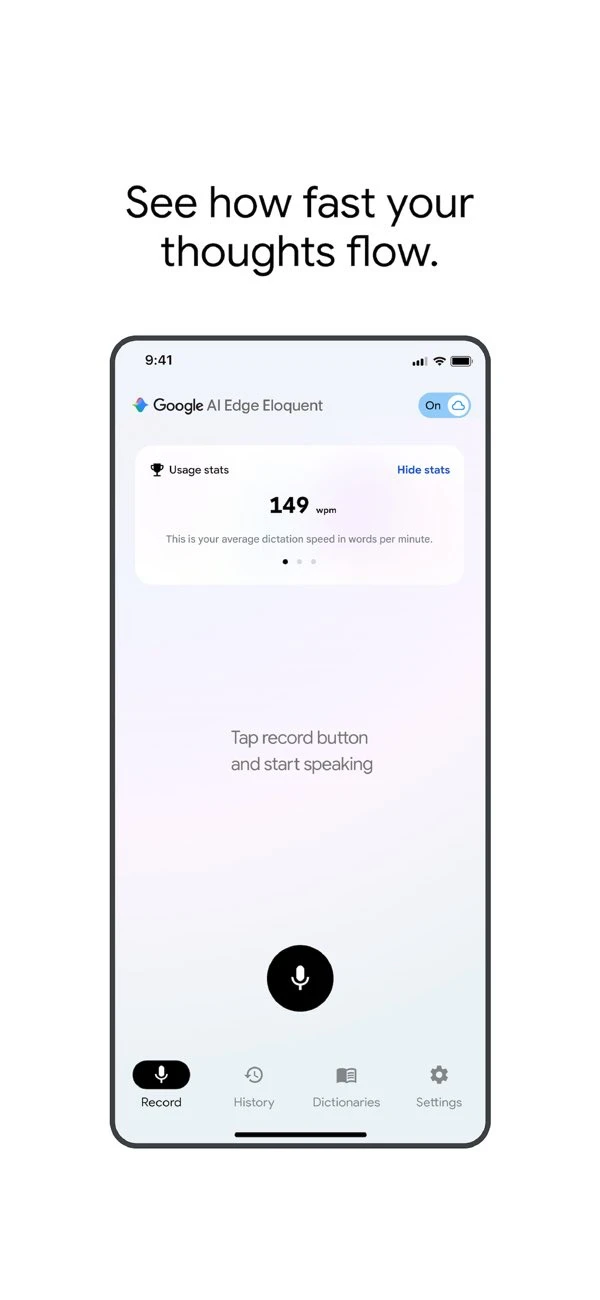

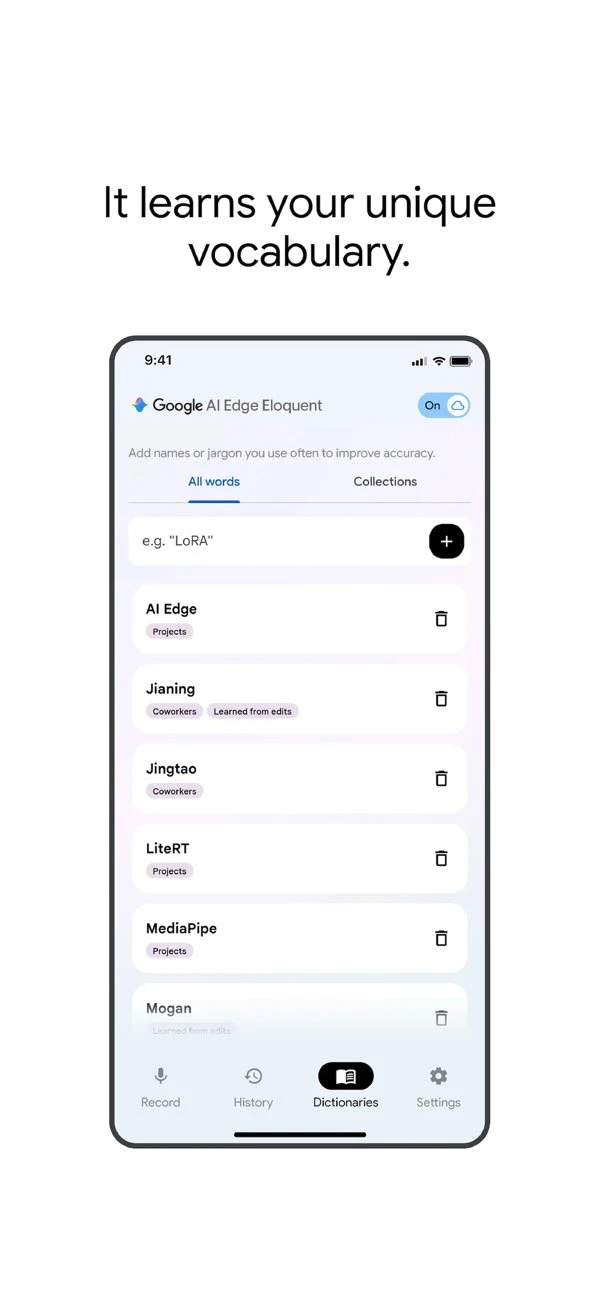

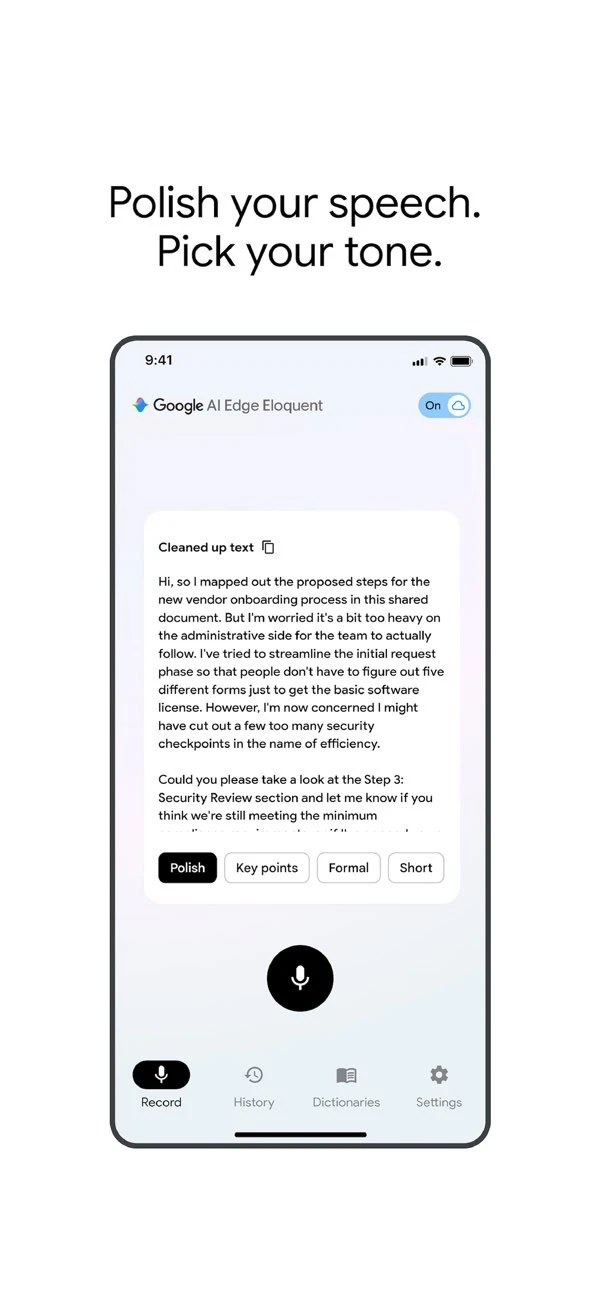

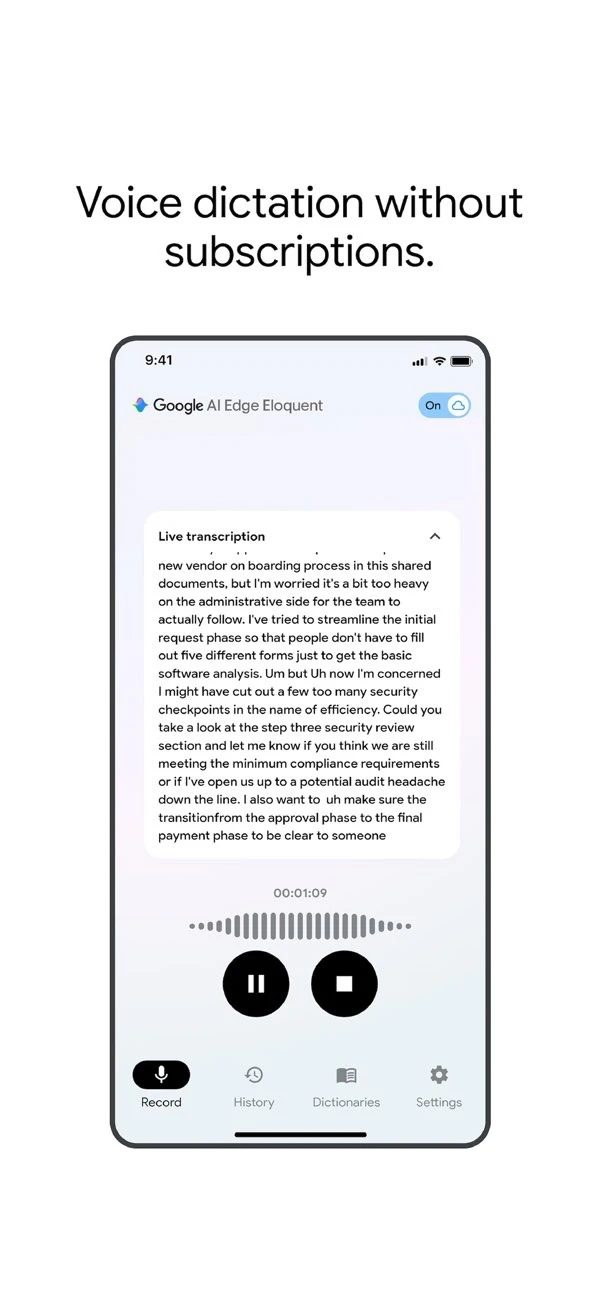

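One-line summary: An offline-first AI dictation and transcription app from Google that uses a local Gemma model to automatically filter out filler words and slips of the tongue, giving users a smooth way to draft text in privacy-sensitive settings.

Artificial Intelligence

Audio

AI voice transcription

Offline-first

Privacy and security

Gemma model

Local processing

Draft cleanup

Google app

Free tool

Cloud collaboration

User comment summary: Users praise the offline-first, privacy-protecting design and see it as a strong competitor to products such as Superwhisper. The main suggestions are to add keyboard input support for better in-app integration, and to watch its real-world accuracy on technical terminology and how its precision compares with cloud solutions.

AI Sharp Take

The release of Google AI Edge Eloquent is far more than one more option in a crowded AI transcription market. Its core value is that Google uses "offline-first" and a "local Gemma model" as a wedge into the most sensitive nerve of today's AI applications: data privacy and cloud dependence. The dual design (basic local processing plus advanced cloud refinement) is a shrewd market strategy. It serves extreme privacy scenarios (legal, medical, confidential meetings) while, through the optional Gemini cloud service, acknowledging the real limits of edge AI accuracy in complex scenarios.

Its "free" model, however, deserves scrutiny. It may well be a strategic move to start a data flywheel: attract many users to the local mode with a very low barrier, collect valuable edge cases and accent data, and feed them back into iterations of lightweight models such as Gemma, while positioning the advanced cloud features as a future paid add-on or a funnel into the Gemini ecosystem. Issues raised in user comments, such as "no keyboard integration" and "feels rushed," show that it is still at the "minimum viable product" stage, whose main purpose is to quickly test how strong real demand is for a privacy-first transcription tool.

The real challenge is balance. Locally, it must keep shrinking the model while improving accuracy, especially on technical terminology. In the cloud, it must clearly define the line between "basic free" and "premium paid," and avoid repeating the decline in experience that some AI tools have suffered by being free. If Google can use its vertical integration of chip optimization (Tensor) and model development (Gemma) to genuinely raise the ceiling of edge AI experiences, it may redefine the benchmark for "offline AI apps" rather than being just another voice-input front end tied to the cloud.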

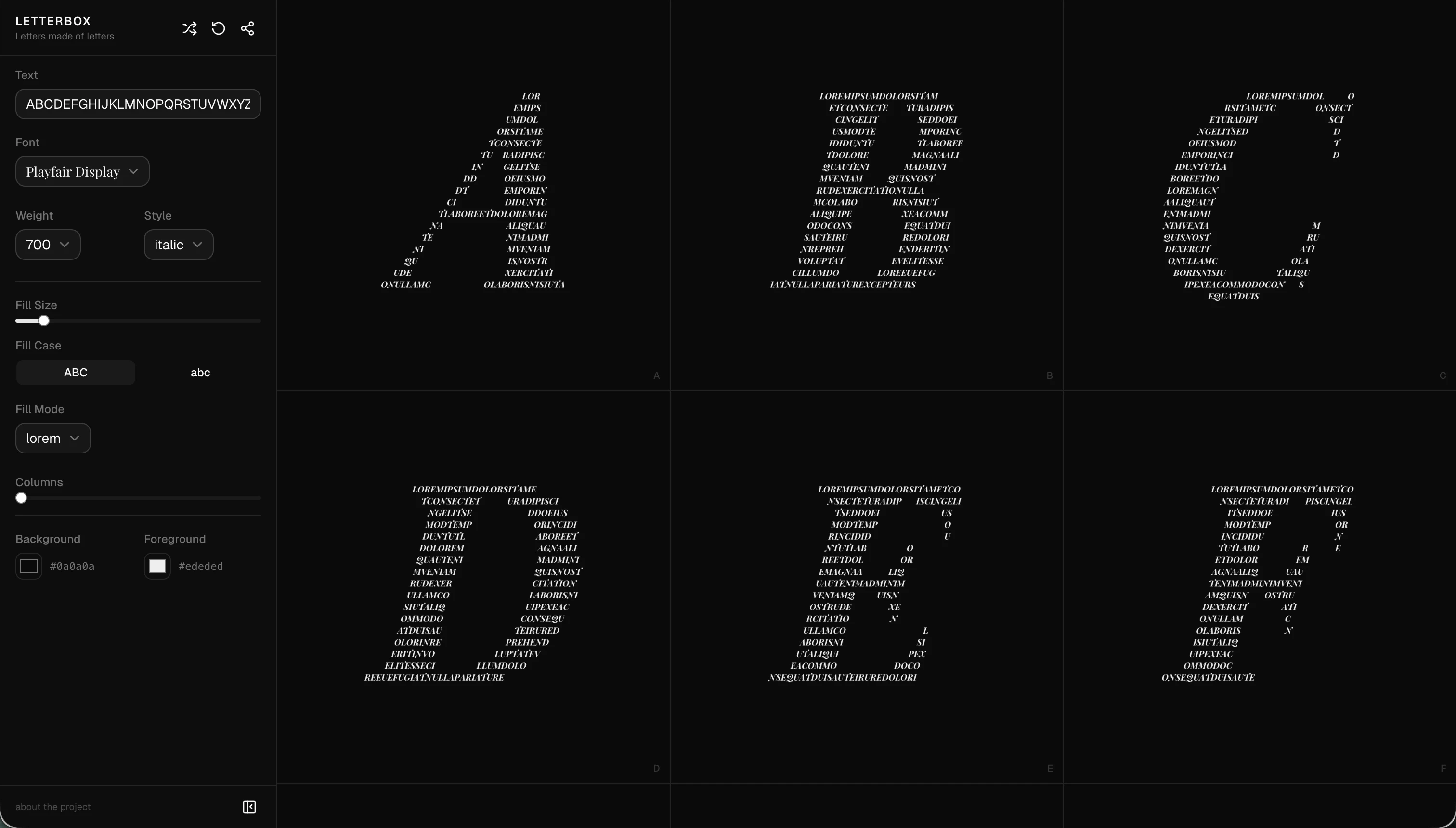

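One-line summary: Letterbox is an online tool that treats the letters themselves as a canvas and fills them with tiny text to create visual art typography. It lets designers and creative workers quickly produce richly textured, distinctive lettering, addressing the limits of traditional font tools in visual expressiveness and creative play.

Design Tools

Typography

Typeface design tool

Text art generator

Creative typography

Visual art

Online design

Free tool

Micro-text fill

Shareable designs

Web app

Design experiment

User comment summary: Users widely admire its creativity and fun, saying it lifts type from "readable" to "viewable" art. The core suggestions are: 1. add more controls (direction of the inner text flow, fine spacing adjustments); 2. strong demand for export/embed features for use in real projects; 3. a few users hit a technical issue with blurry rendering. The developer replied that export is already planned.

AI Sharp Take

At heart, Letterbox is not a productivity tool but a refined "type toy" and a generator of creative sparks. It cleverly seizes on an often overlooked dimension of type design: the potential of text as visual texture. By deconstructing each letter into a set of hundreds of micro-characters, it delays the act of reading so that viewing happens first, overturning the logic of traditional typography, in which conveying information is the highest rule.

Its real value is in lowering the barrier to making visual text art. Users need no complex graphics software or programming knowledge; by adjusting a few simple parameters (font, color, fill density) they can explore a vast range of random yet controllable visual combinations. This instant feedback, together with the lightweight sharing mechanism of encoding the design in the URL, fits the social-media era's need for fast creation and distribution.

However, the fervent demand for export in the comments shows that users want it upgraded from toy to tool. This is the classic paradox it faces: overemphasizing export quality and control precision could smother its relaxed, experimental core, while remaining a toy would sharply limit its lifespan and commercial value. The "embeddable interactive component" idea the developer mentioned may be a smarter path: turning dynamic text art into a new kind of web media element, which leaves more room for imagination than generating static images.

The current version resembles a fully functional "minimum viable product" that proves there is market interest in creative type play. The key to its next step is whether, while keeping its playful spirit, it can find an elegant way for these beautiful text experiments to take root and become part of the fabric of digital products rather than remaining screenshots. That tests the developer's discipline on product positioning and depth of understanding of creative workflows.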

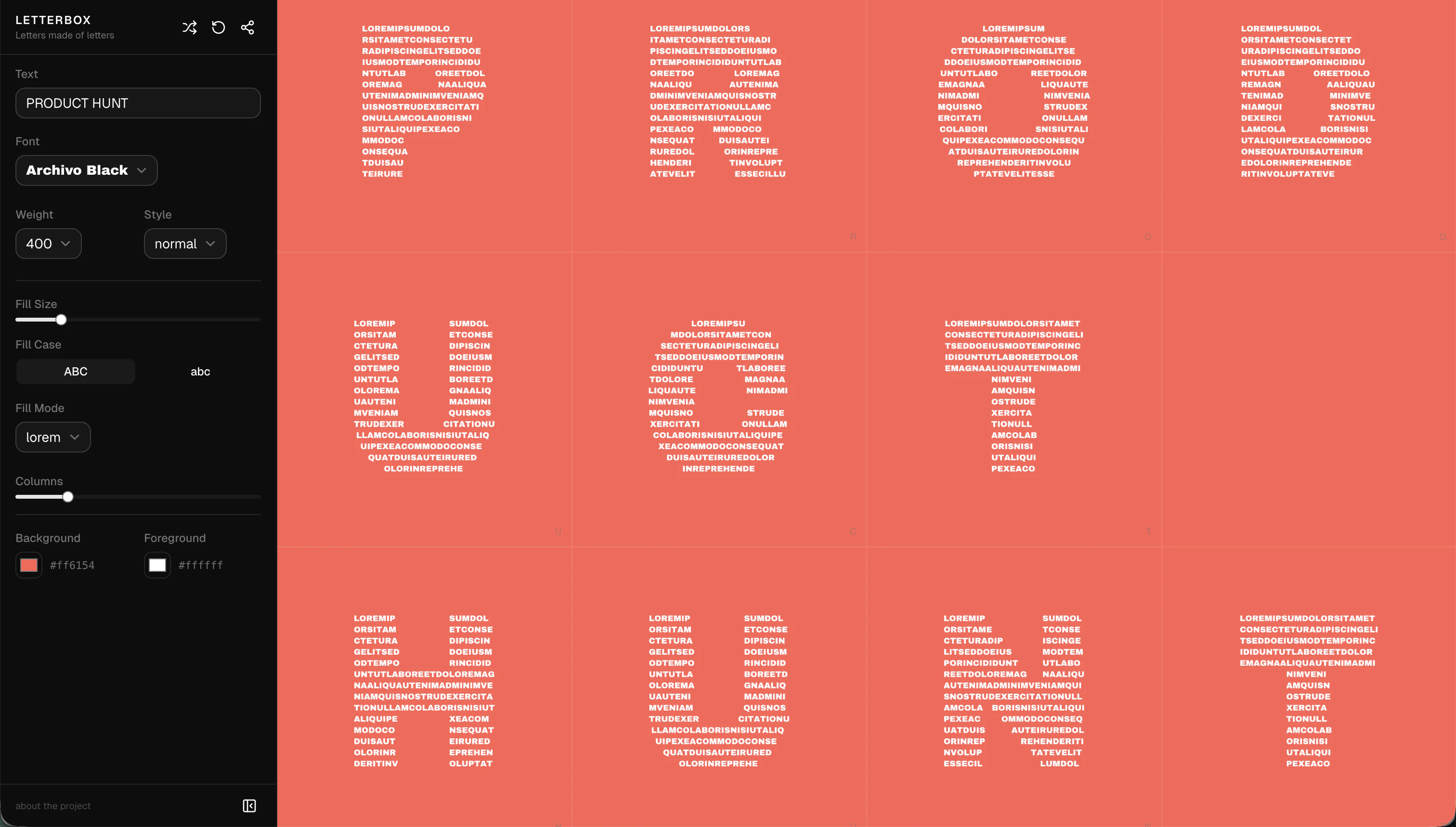

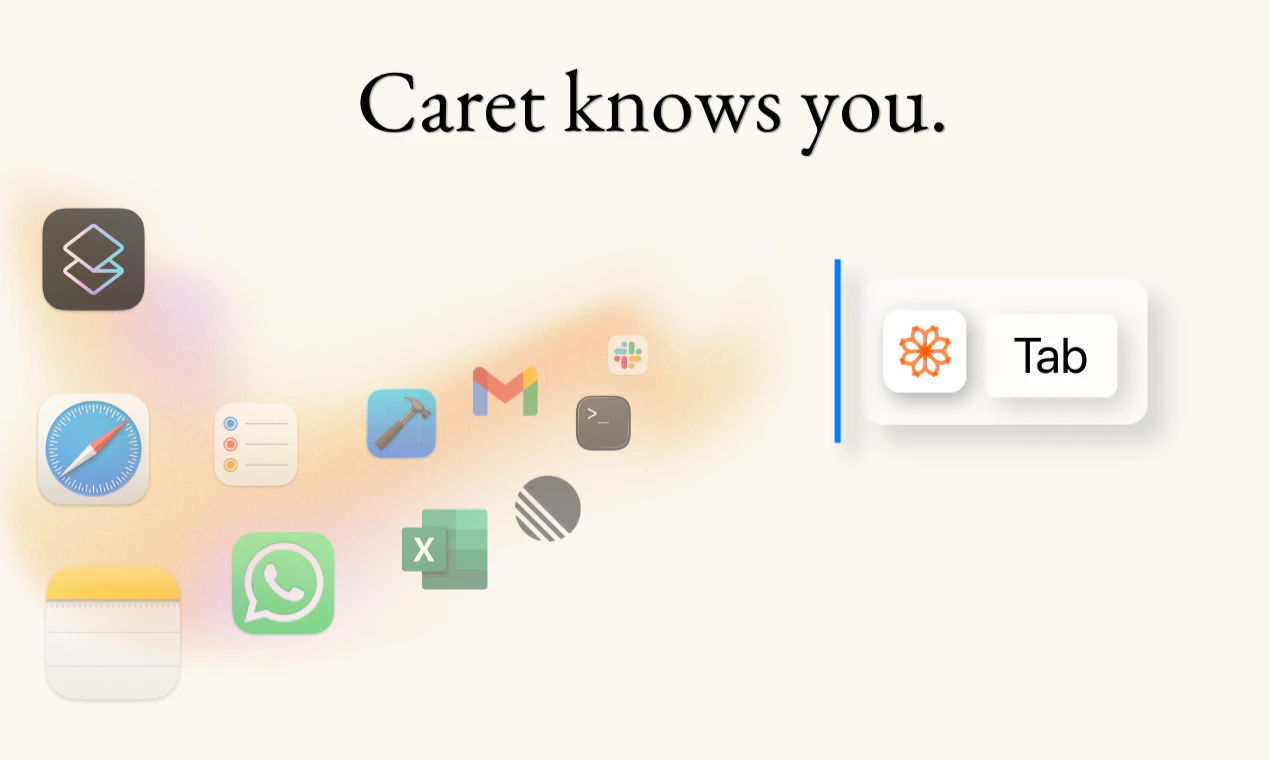

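One-line summary: Caret is a system-level AI typing assistant for macOS. Pressing Tab in any app triggers smart sentence completion based on the user's personal style and context, addressing the pain points of repetitive typing across apps, interrupted thinking, and frequent window switching.

Mac

Productivity

Artificial Intelligence

AI input completion

Productivity tool

macOS app

System-level integration

Privacy protection

Local learning

Personalization

Seamless interaction

Second brain

Autocomplete

User comment summary: Users broadly endorse the intuitive "press Tab to complete" interaction and the convenience of system-level integration. Core feedback centers on: expectations for its long-term learning; questions about privacy and security mechanisms (such as handling of sensitive fields) and data-localization strategy; the balance between speed and deep personalization; and comparisons with in-editor autocomplete.

AI Sharp Take

Caret's ambition is not to be a simple text-prediction tool but to become a "thought coprocessor" running at the operating-system level. Its real value lies in breaking two barriers. First, it breaks the app sandbox: using accessibility permissions to gather cross-app context brings it closer to the full picture of a user's real workflow than any AI assistant integrated at a single point. Second, it breaks the "averaged" replies of general-purpose AI: through a local, continuously learning "second brain" model it pursues extreme personalization, so that completions read as if the user wrote them.

Its challenges are equally sharp. First, the privacy trust barrier is very high: the double-edged nature of "reading all input" demands security design and transparency far beyond ordinary software. Second, there is the technical balance: the three-way trade-off among the local model's capability limits, response speed, and depth of personalization will decide whether it feels like mind reading or an annoying pop-up. Finally, there is market positioning: it is trying to replace not just copy and paste but users' ingrained, scattered input habits, and the cost of changing those habits is huge. If it succeeds, it will upgrade a basic interaction paradigm; if it fails, it may be just another accessibility permission that gets switched off. Success hinges on whether it can prove the need for "system-level learning" with near-invisible accuracy, so that users feel that handing over some privacy and habits is worth it.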

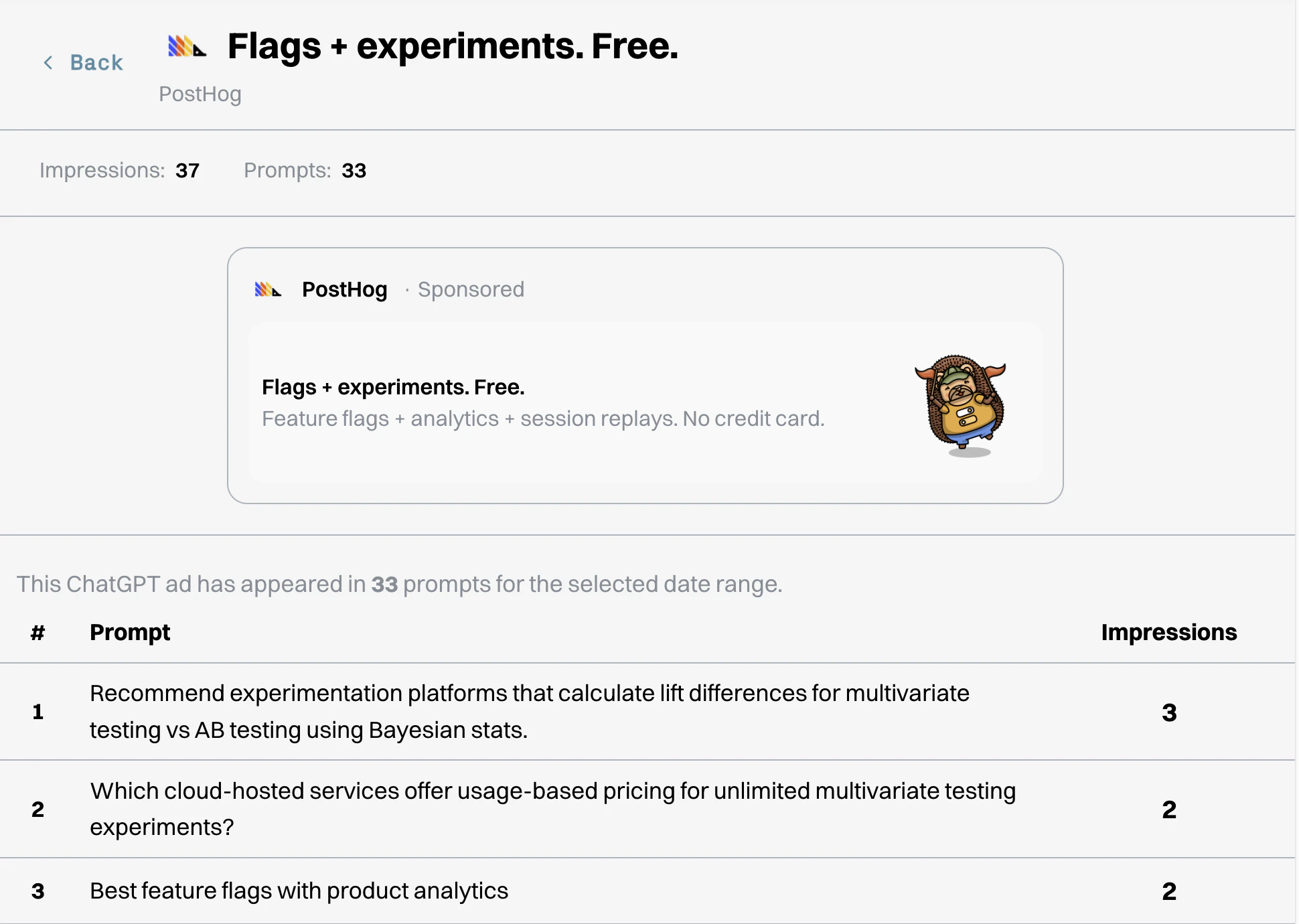

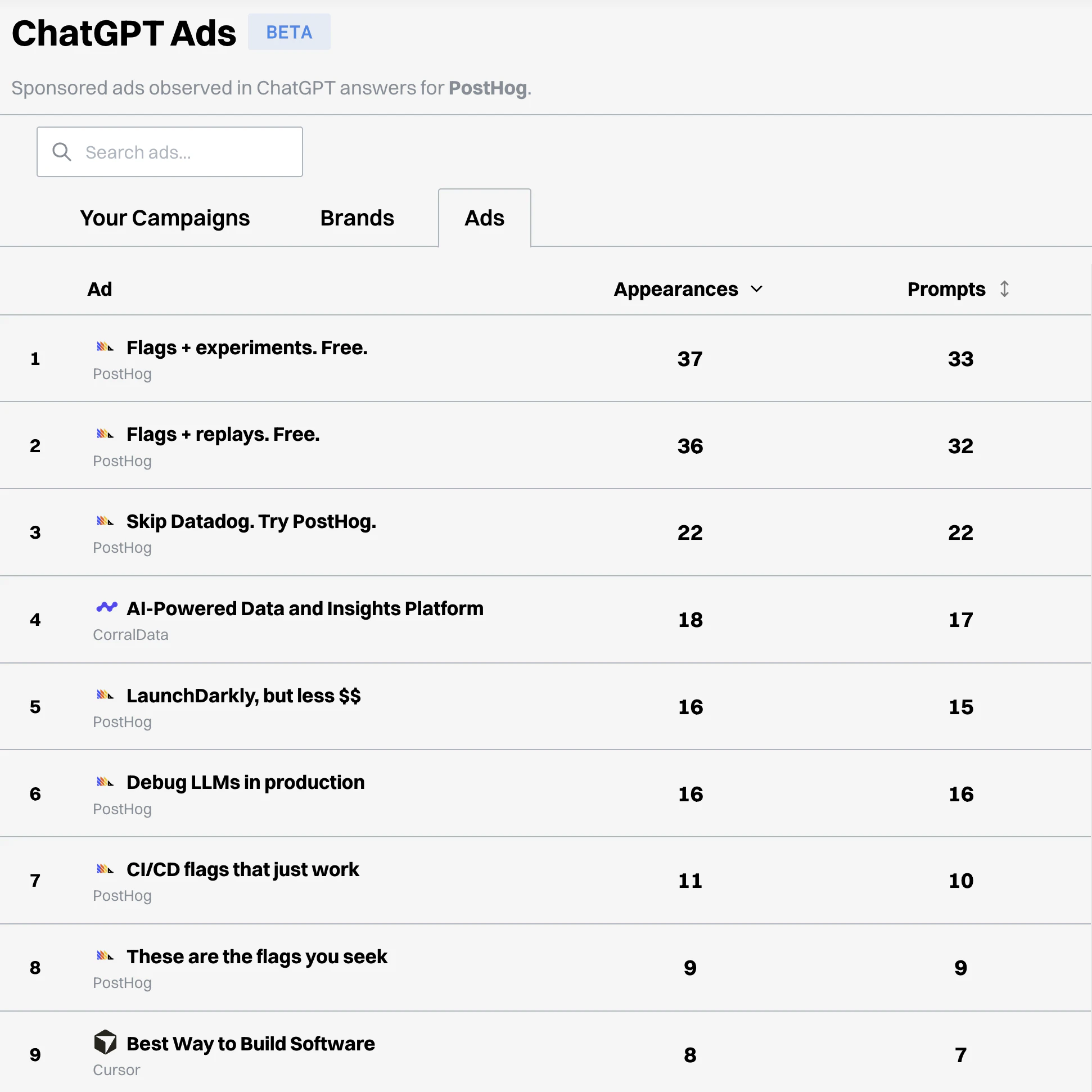

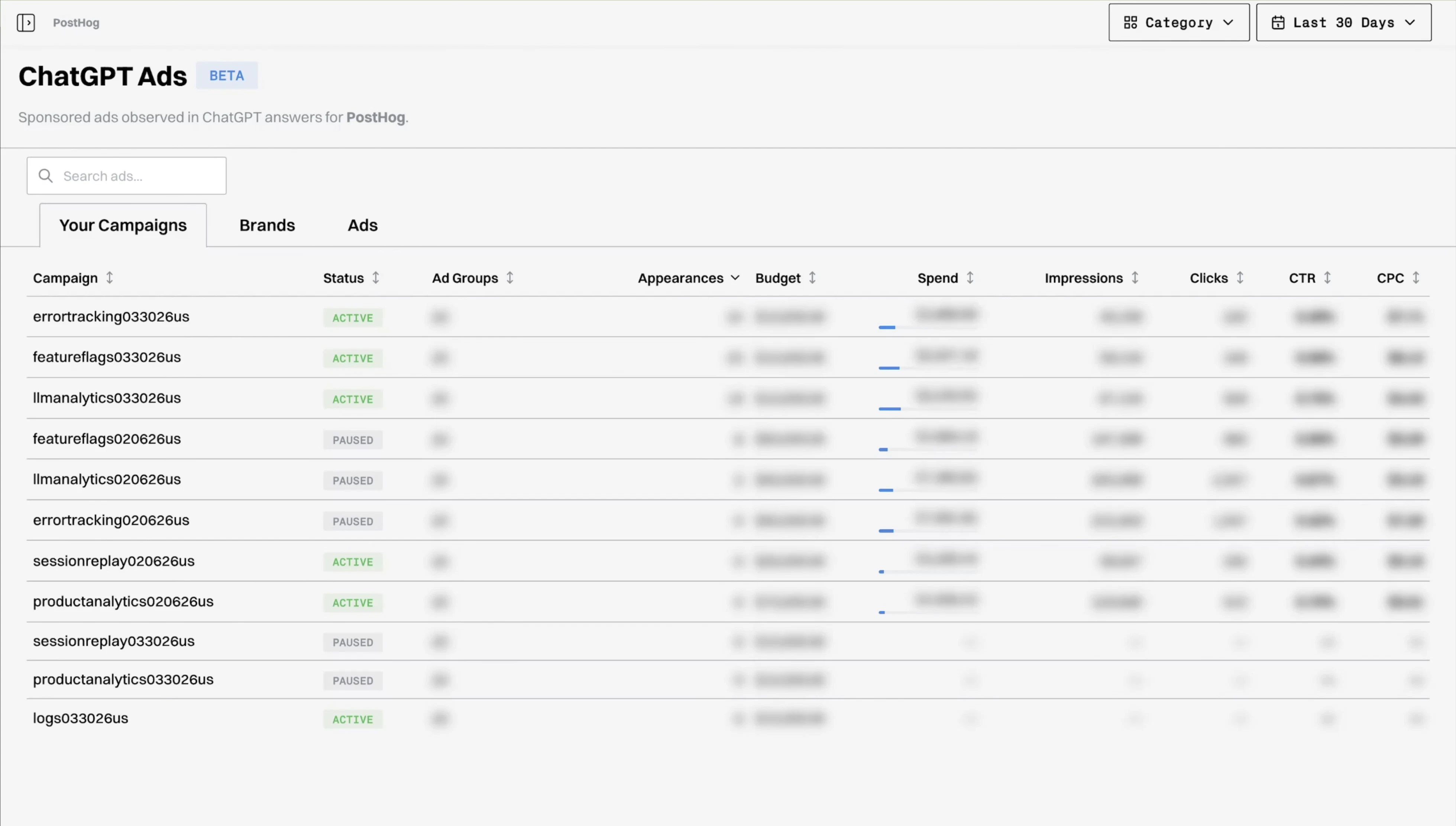

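One-line summary: Gauge gives marketers who advertise in ChatGPT competitor ad insights and an all-in-one management platform, addressing advertisers' lack of visibility and analytics in the emerging AI conversational ad ecosystem.

Marketing

Advertising

Artificial Intelligence

ChatGPT ad analytics

Competitor ad insights

Ad campaign management

AI conversational ads

Marketing intelligence

Ad performance tracking

API integration

Marketing SaaS

Ad tech

Competitive intelligence

User comment summary: The founder personally introduced the product's development background and customer cases. User feedback highlights the core value of seeing competitors' ad copy for specific prompts, which is seen as a major early competitive advantage. Another user said they look forward to using it.

AI Sharp Take

Gauge is essentially the intelligence radar and command center of an "AI-native ad ecosystem." Its real value lies not in simple ad management but in seizing the key window in which ChatGPT ads move from "black box" to "transparent," positioning itself as the "intelligence layer" of the whole ecosystem.

The product logic hits two hard needs. The first is reverse-engineering competitors' strategies. Keyword competition analysis is mature in traditional search advertising, but in LLM-driven conversations the ad-triggering logic is more complex and more opaque. Gauge claims it can look up ads by prompt, which gives marketers a telescope onto rivals' prompt tactics; in an early market whose rules are not yet set, intelligence is power. The second is its attempt to become a unified control panel for ad campaigns across APIs. This reflects a possible trend toward fragmentation in ChatGPT advertising (different models, different versions), and Gauge is staking out an early position as an aggregator.

Its core challenges and risks are equally sharp. First, the compliance and sustainability of its data acquisition are questionable. It relies heavily on OpenAI's API, and its features are tied directly to changes in OpenAI's ad system, so policy risk is very high. Second, its moat is shallow. Its feature modules (competitor analysis, data dashboards) are already a red ocean in traditional advertising; once the platform owner (such as OpenAI) offers similar basic functions itself, or large media groups enter, its room to survive will be squeezed hard. Finally, the ChatGPT ad ecosystem itself is still in very early, very small-scale testing, and whether this is a "pseudo-need" or "premature optimization" remains for the market to decide.

In short, Gauge is a nimble land grab that shows sharp ecosystem insight. But it is more a neat "tactical tool" than a "platform" with long-term strategic barriers. Its success depends less on how complete its features are than on how fast ChatGPT's ad business grows and how open or controlling OpenAI is toward its ecosystem. It is betting on the takeoff of an ecosystem and hoping to become an indispensable lubricant within it.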

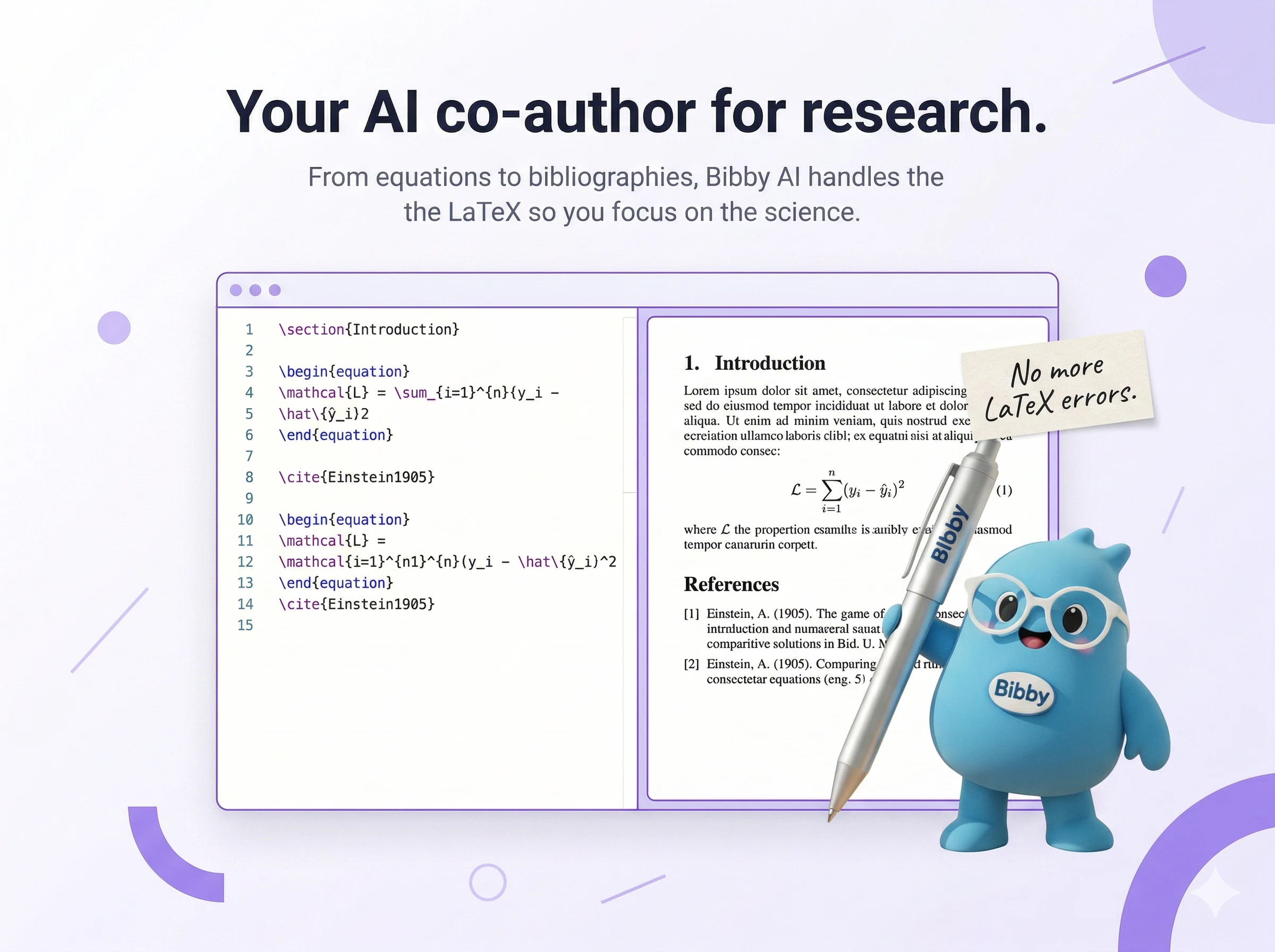

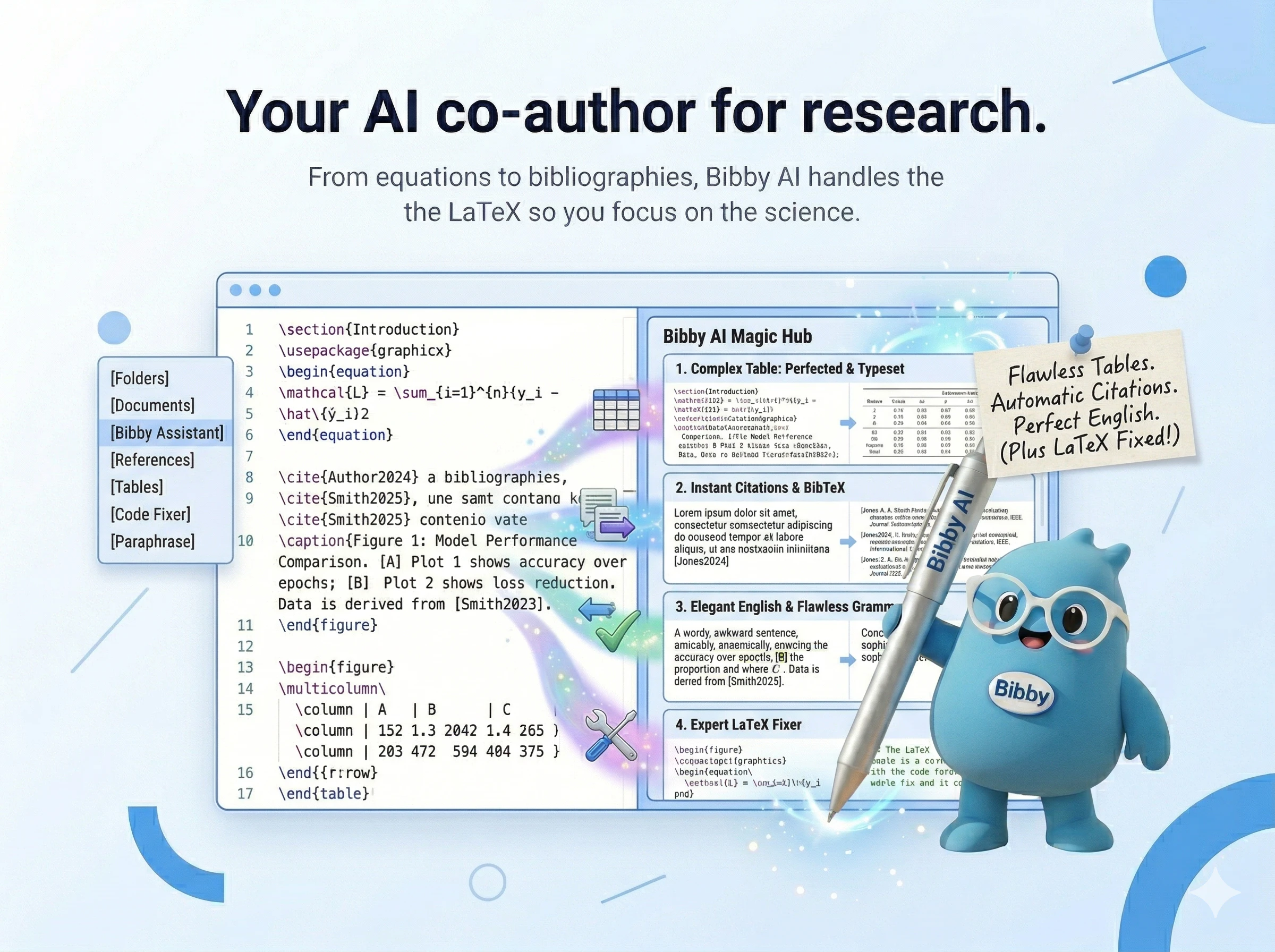

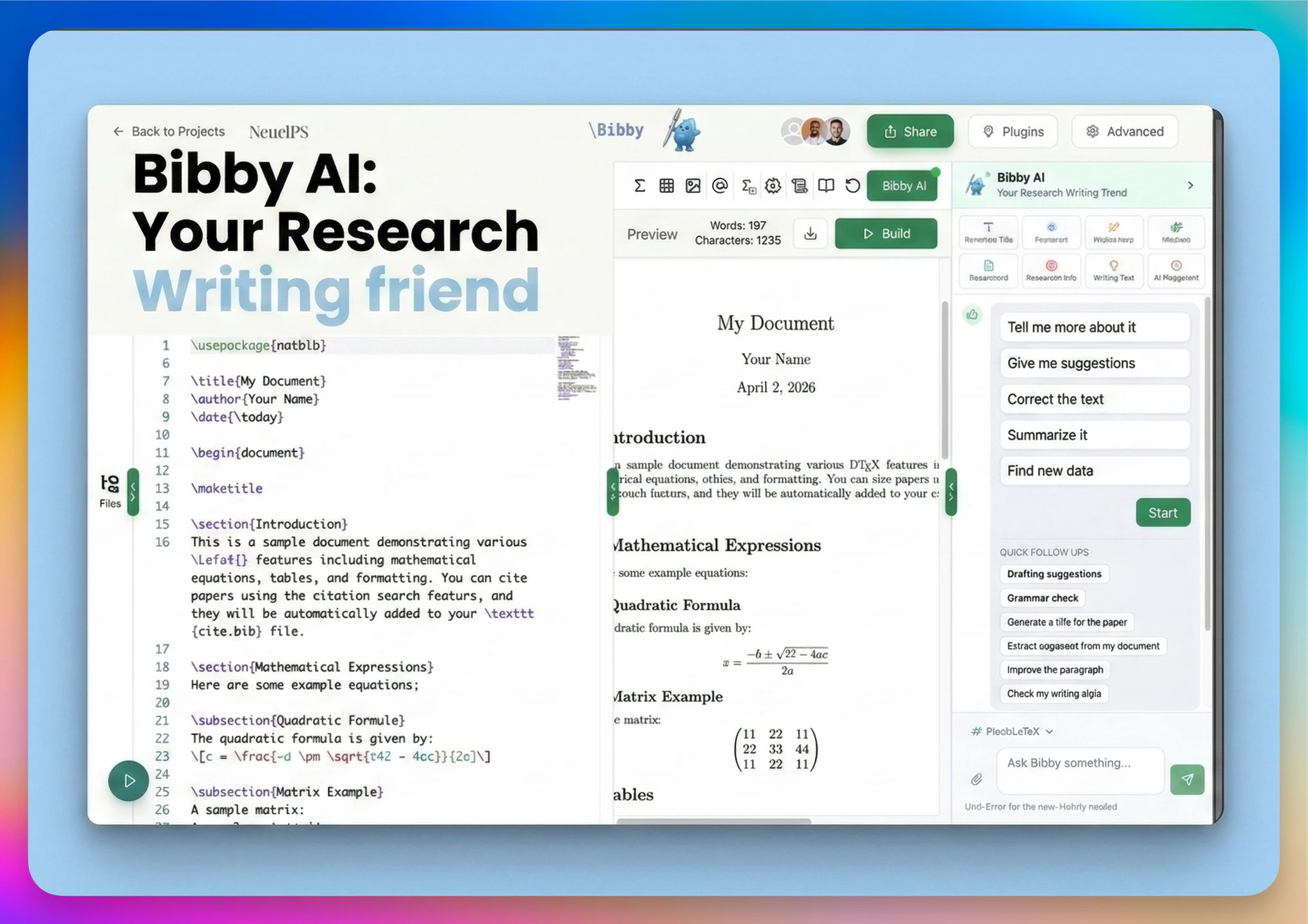

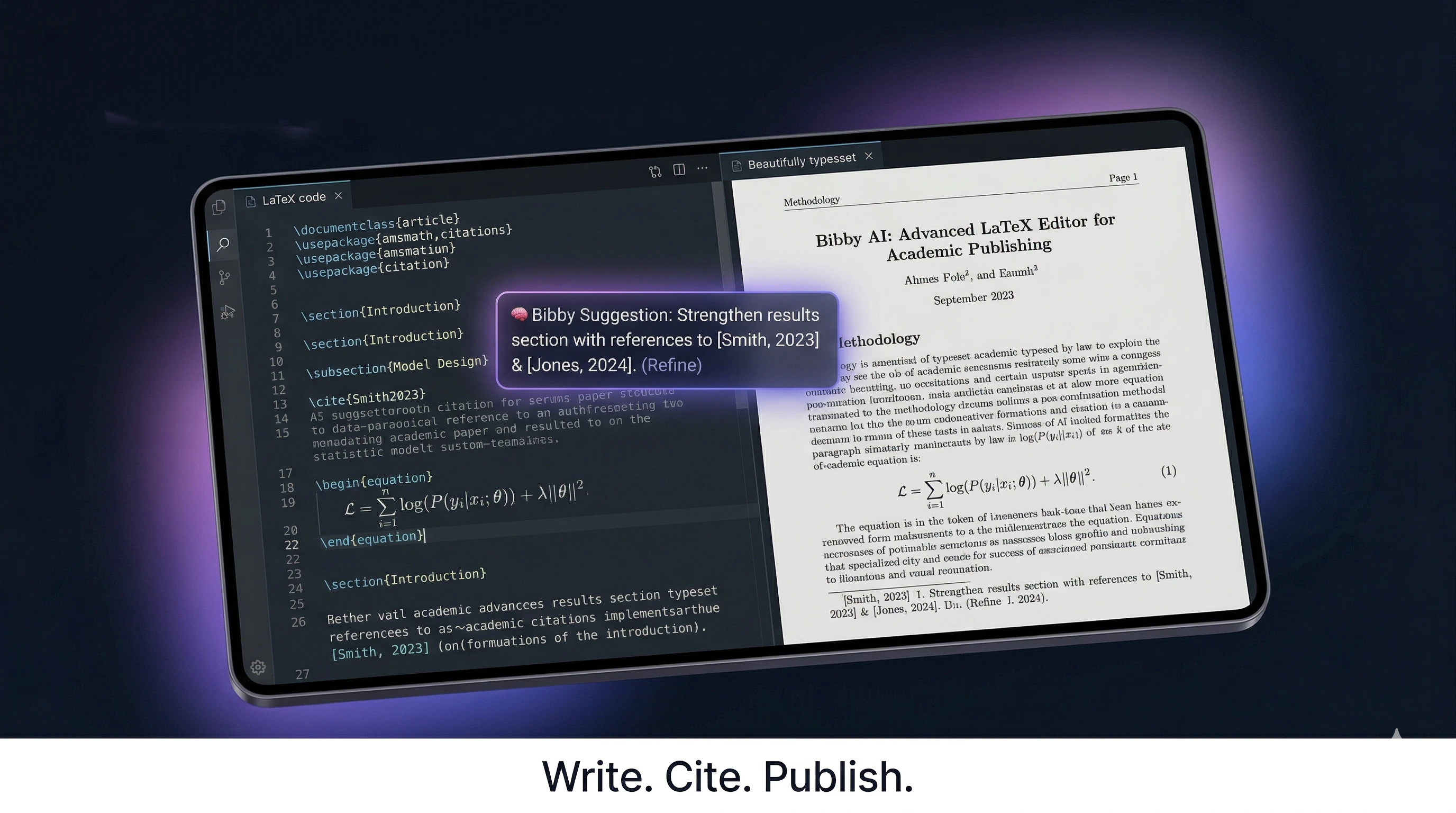

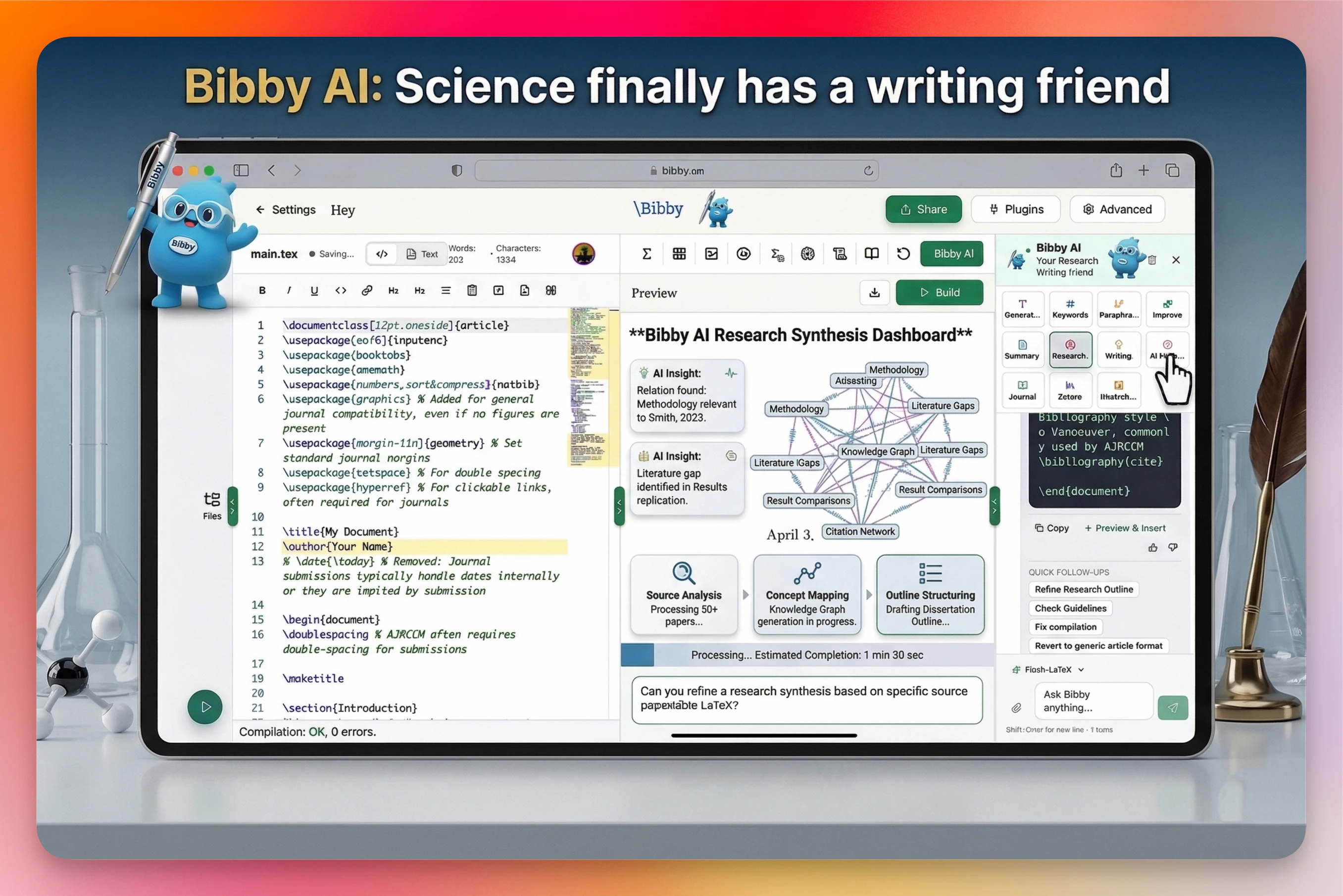

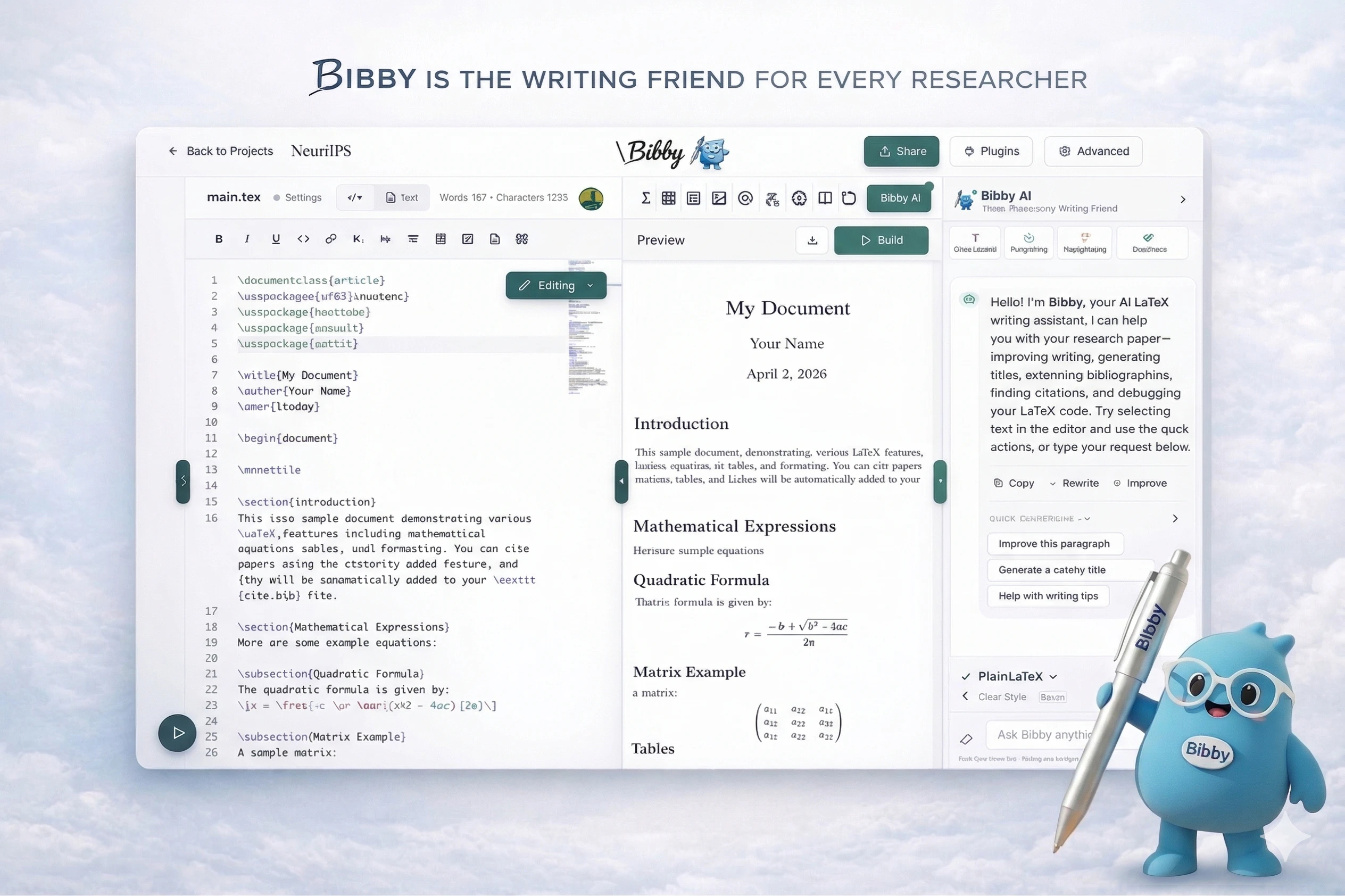

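One-line summary: Bibby AI is an AI research co-author. When researchers write papers, its literature mining, smart drafting, format and grammar checks, and one-click citations relieve them of time lost to tedious formatting, reference management, and writing instead of core research.

Productivity

Writing

Artificial Intelligence

AI research assistant

Paper writing

Reference management

Academic collaboration

LaTeX tool

Smart typesetting

Citation generation

Academic proofreading

AI co-author

Research efficiency tool

User comment summary: Feedback is positive; users say it goes straight at the inefficiencies of the research-writing process. The main questions and suggestions concern: the level of support for interdisciplinary research and complex formula recognition; hopes for long-term memory across projects; questions about the underlying LLM and technical details; and a suggestion to support personalized AI settings in team collaboration.

AI Sharp Take

Bibby AI is not yet another generic writing assistant; it is trying to become "embedded infrastructure" deep inside the academic production line. Its real value lies not in any flashy AI feature but in comprehensively quantifying and automating researchers' "hidden costs": it gathers the friction and waste scattered across LaTeX compilers, reference managers, journal submission systems, and even team communication into an intelligent layer whose context is the current paper.

The product description's "200M+ citations, 800+ journal templates" and its support for major publishers' templates suggest that its core moat may not be the AI model itself but deep adaptation to a highly structured, closed, and conservative academic publishing system. This looks more like a "modernizing rebuild" of the academic toolchain. Its challenges are just as sharp. First, the "AI co-author" positioning sits in a gray zone of academic ethics, and the question of where assistance ends and ghostwriting begins will follow it throughout. Second, a "deep research mode" that leans heavily on existing literature essentially reinforces existing research paradigms and may unintentionally suppress innovative or disruptive ideas. Finally, the leap from tool to ecosystem is crucial: whether it can break the entrenched individual work habits and institutional traditions of academic collaboration will decide whether it rises from "useful tool" to "essential platform" or ends up as just a feature plug-in for existing platforms such as Overleaf.

The founder's background as a former researcher lets the product hit the emotional pain point that formatting, not science, eats up researchers' lives. But its long-term success depends on finding a fine balance between raising efficiency and defending academic autonomy, and between integrating with existing systems and fostering new ways of working.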

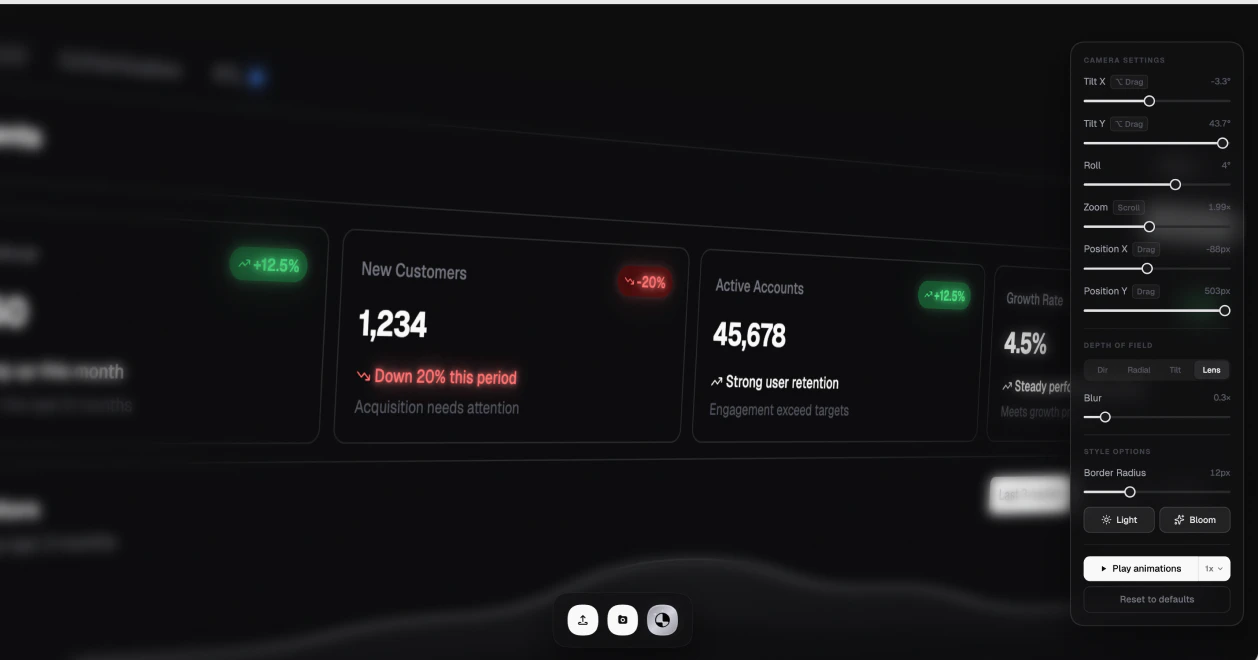

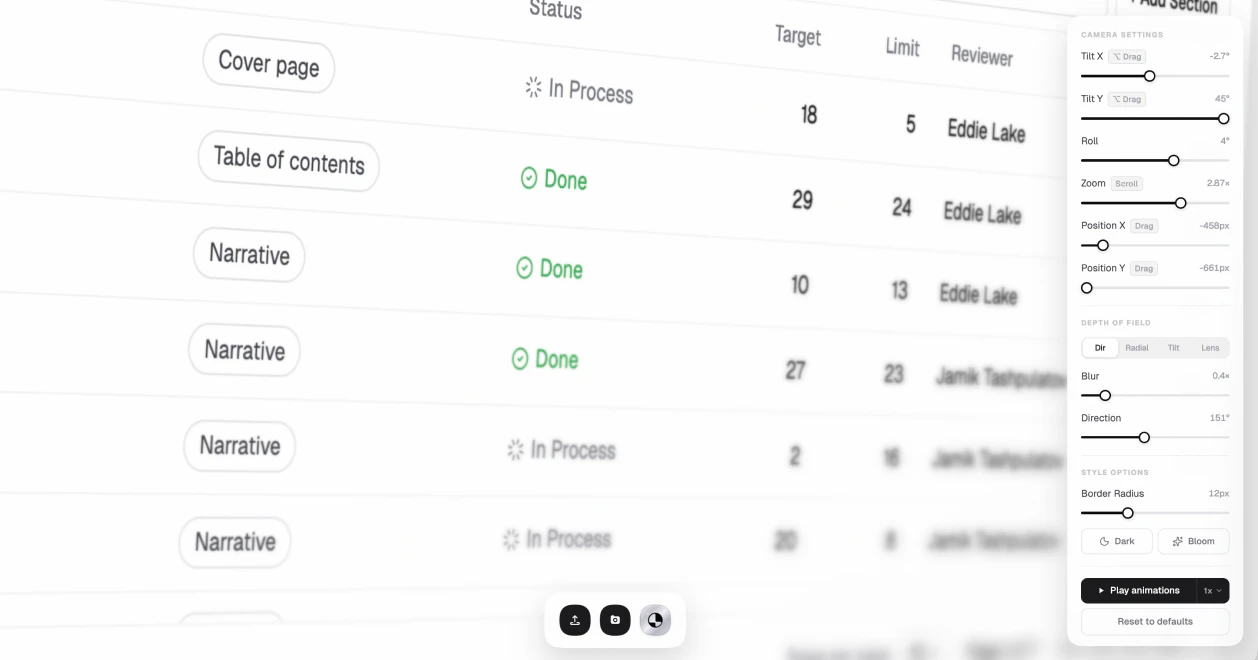

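One-line summary: A browser-based tool that lets designers and developers quickly turn ordinary interface screenshots into cinematic 3D device mockups with realistic depth-of-field blur, replacing a traditionally tedious and time-consuming production process.

Design Tools

Marketing

Developer Tools

UI design tool

Device mockups

Browser app

Design efficiency

3D effects

Screenshot beautification

Design showcase

One-click generation

User comment summary: Users value how it boosts design motivation and efficiency and lets them produce social media content quickly. The founder was active in the thread and shared a roadmap for a Chrome extension (adding animation to any website). Users asked how animation generation is implemented technically (generated from code, or requiring existing animations).

AI Sharp Take

At its core, Ultramock takes "Apple keynote"-grade visual packaging out of professional software and turns it into a product; its core value is instant gratification of "design vanity." It does not produce interfaces; it produces an interface's "highlight moment." That hits the weak spot of indie developers, startup teams, and marketers who prioritize features over packaging: they need the cheapest possible way to give their product a mature, polished look that wins attention.

The product neatly avoids head-on competition with full-featured design tools such as Figma and focuses on the last step of the design workflow: presentation. Its claimed "30 seconds" and "done in the browser" undercut the complex Photoshop workflow. But its ceiling is also obvious: it applies "makeup" to static results rather than handling a dynamic design process. The users' question (is animation generated or reused) points to its future challenge: if it only adds motion to existing elements, the technical barrier is limited; if it wants to generate animation intelligently from code, it enters another, more complex field.

The current one-time payment model seems nostalgic and risky amid the tide of software as a service; it could attract early users, or it could undermine long-term revenue stability. Overall, Ultramock is a sharp, precise "painkiller" tool, but to grow into a "vitamin" it must build a deeper technical moat in the automated generation of interactive demos and dynamic experiences.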

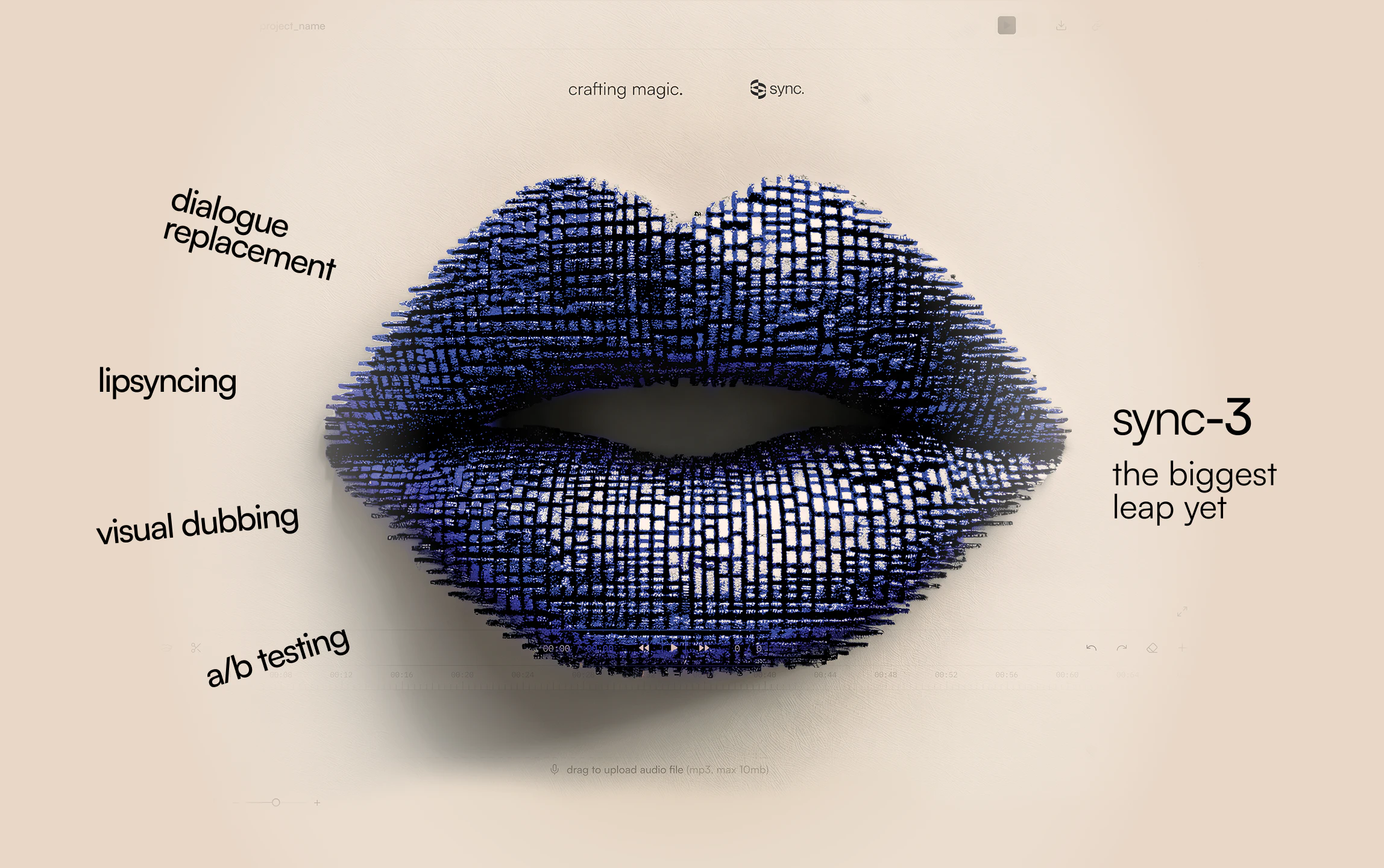

一句话介绍:sync-3是一款工作室级AI唇音同步与视觉配音工具,通过全局理解视频片段中的人物表现并一次性生成所有帧,解决了传统配音在特写、遮挡、极端角度等复杂场景下唇形不同步、情感失真的行业痛点。

Movies

Artificial Intelligence

Video

AI唇音同步

视觉配音

视频本地化

多语言支持

影视后期

人工智能模型

4K视频处理

Adobe插件

表演保持

口型生成

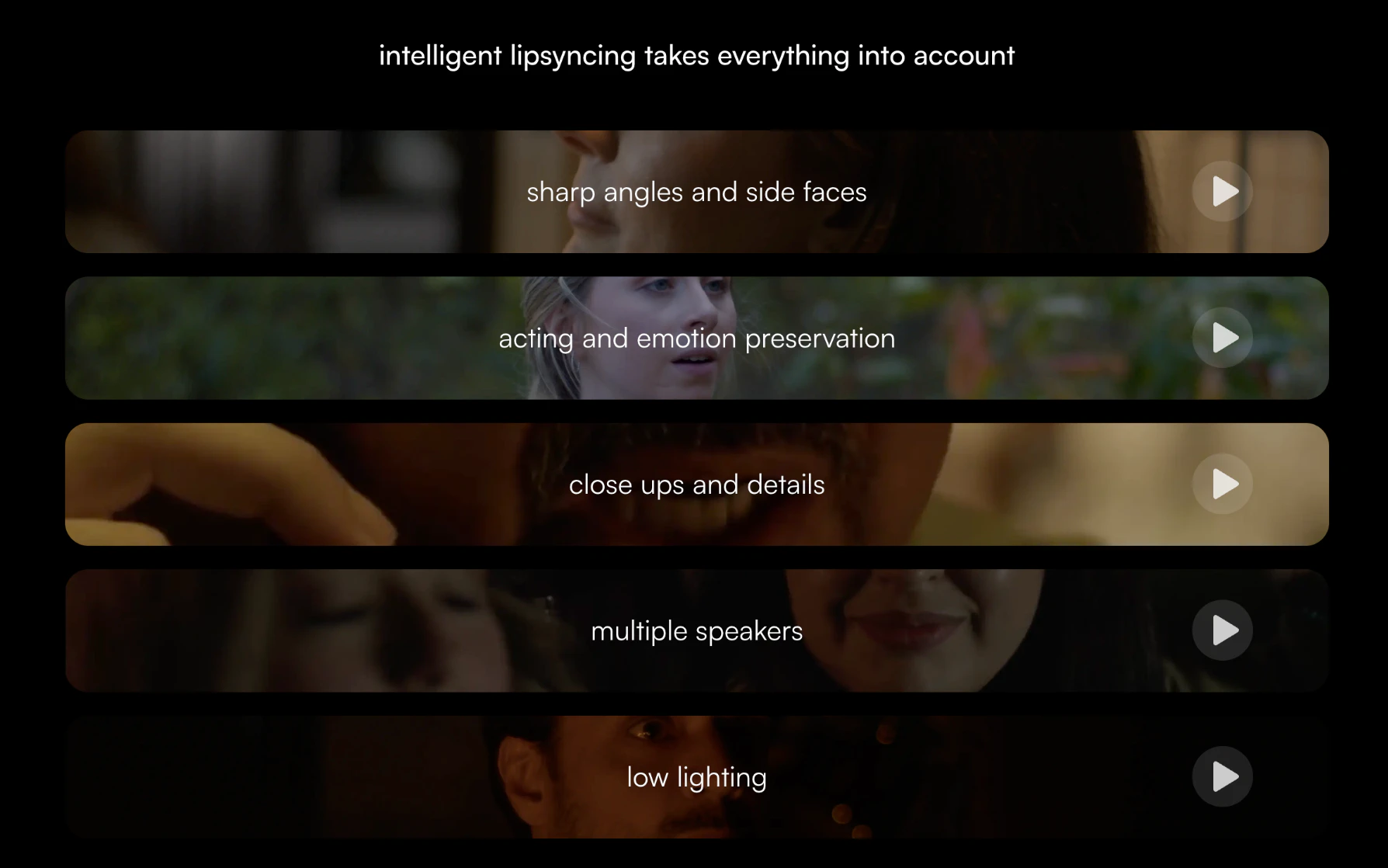

用户评论摘要:用户关注点集中在技术突破的真实生产可用性、多语言4K输出的工作流变革潜力,以及如何处理跨语言情感与唇形对齐的复杂边缘情况。开发者积极回应,强调产品为应对真实制作场景而构建,并解释了通过全面部区域再生来保持表演自然度的技术原理。

AI 锐评

sync-3所标榜的“从唇音同步到面部重新动画”的跨越,其核心价值并非单纯参数量的飙升,而在于其“全局理解”的范式转移。传统方法(包括其前代)将视频切割为孤立片段进行“缝补”,本质上是局部优化的堆砌,必然在镜头连贯性、遮挡与极端角度下崩坏。sync-3宣称的“一次性生成所有帧”,是对视频时序与空间上下文进行统一建模的尝试,这使其处理的不再是“一张张嘴”,而是“一个表演中的人”。

其真正锋芒,直指影视工业化中最昂贵、最棘手的环节之一:跨国界的内容本地化。支持95+种语言并保留原表演情感,这不仅是技术参数,更是商业野心的宣告——它瞄准的是全球流媒体与电影发行市场,试图将高昂的演员重拍或专业配音演员的“对口型”工作,转化为可批量、高效处理的AI后期流程。然而,其挑战也同样尖锐:其一,“表演”的微妙之处远超唇部肌肉运动,涉及整个面部肌群乃至微妙的头部姿态,16B参数是否足以编码人类表演的丰富性,仍需严苛的片场测试;其二,行业接受度,影视艺术创作者能否信任一个“理解表演”的AI来处理核心表演素材,涉及深刻的信任与版权伦理问题。

评论中关于跨语言情感对齐的提问切中要害,开发者的回复揭示了其技术路径:不满足于“贴嘴唇”,而是“再生面部区域”。这步棋险而高,它避免了强制对齐的机械感,但也将自己置于更复杂的评估标准之下——生成的面部是否在所有帧间保持绝对一致且无伪影?这对其模型的物理一致性与审美判断提出了更高要求。

总之,sync-3的价值在于它试图将AI从“特效工具”推向“制作基础架构”。若其宣称的可靠性在高压生产环境中被验证,它可能重塑从短视频到好莱坞的配音工作流;若其只是“更好的demo”,则仍难逃技术玩具的范畴。其成败,在于“理解表演”是营销话术,还是可工程化的现实。

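One-line summary: A productivity app that combines a real-time virtual study room with a lightweight social game, giving remote learners and workers who want a sense of company an online "third place" with no camera pressure where they can focus deeply.

Productivity

Music

Games

Virtual study room

Study with company

Productivity tool

Online community

Light gamification

White noise

Pomodoro timer

Habit tracking

Freemium

Indie game aesthetic

User comment summary: Users rate its study-together effect highly, especially the video-free sense of "body doubling" and the warm community atmosphere. Core users include exam-prep students, remote workers, and neurodivergent people (such as those with ADHD), who say it improves focus and helps build habits. The developer engages actively and keeps shipping updates based on feedback (such as the new habit tracking). The main draw is the community and the focused atmosphere; the gamified features are an optional bonus.

AI Sharp Take

The neat thing about lofi.town is that it invents no new need; it faithfully rebuilds an old productivity setting, the "co-presence" atmosphere of a library or café, and makes it digital and gamified. It sidesteps two main pain points of existing body-doubling apps, paywalls and mandatory video socializing, and instead offers low-pressure, high-atmosphere asynchronous companionship. In essence it sells a kind of "validated solitude": you know the people around you are real, yet you need not put on any social performance. That delicate balance is its core value.

The product appears to stitch together many elements (Pomodoro, habit tracking, light games), but its real moat is not these copyable features; it is the community culture now taking shape and the "place attachment" built by users logging in daily. Remarks in the comments such as "users have been logging in for over a year" and "it has become their own spot" show that it has gone beyond being a tool to become a "third place" with a sense of belonging. That stickiness and community atmosphere are hard for competitors to replicate in the short term.

Its challenges are just as evident. First, the business model is in doubt. The promise that "all core features are free" wins users quickly, but how can a complex virtual world be maintained sustainably and community quality protected? Would possible future monetization (such as cosmetic customization or premium spaces) damage the egalitarian, pressure-free atmosphere it has carefully built? Second, there is the paradox of scale and atmosphere: community growth may dilute the carefully maintained cozy feel, and moderation costs will rise steeply. Finally, there is the risk of feature sprawl: between "productivity tool" and "casual social game," it must keep defining its center of gravity to avoid pleasing neither side.

Overall, lofi.town is an elegant response to productivity and loneliness in the digital age. Whether it succeeds depends not on adding more mini-games but on turning today's fragile, precious sense of community into a scalable, sustainable ecosystem.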

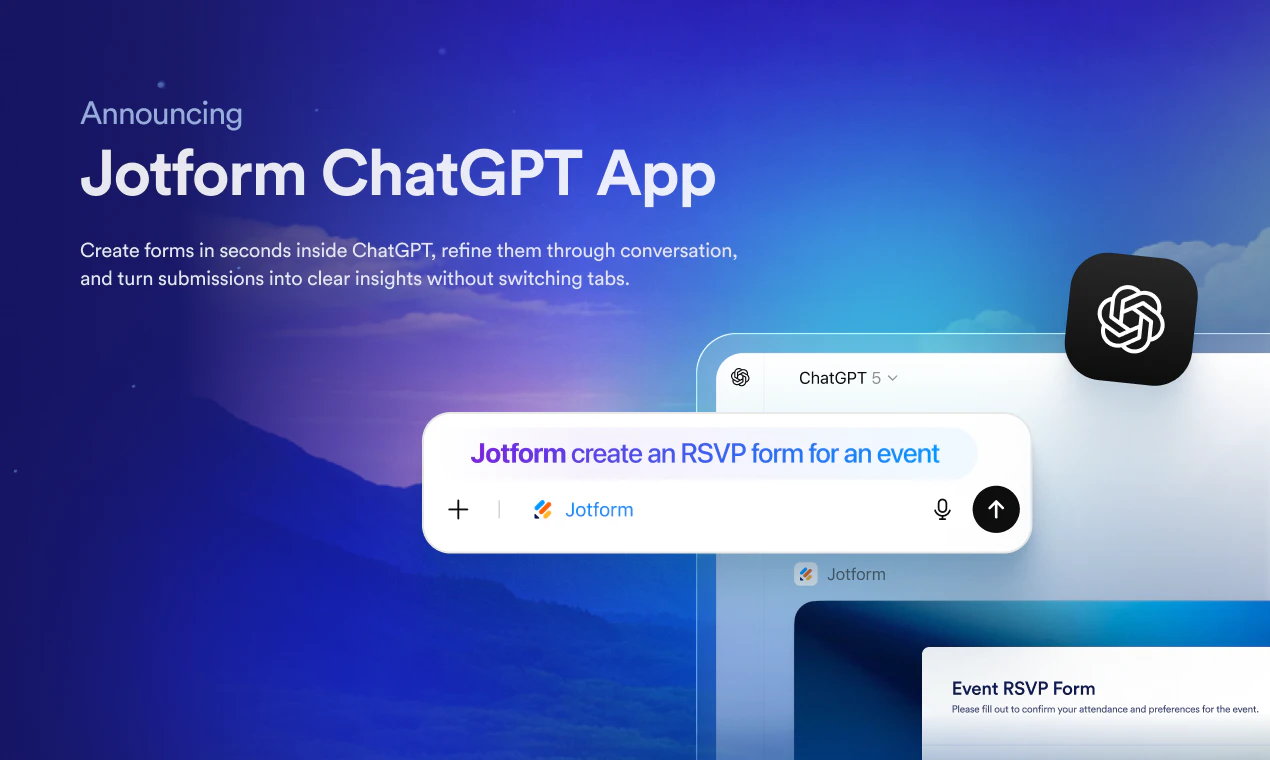

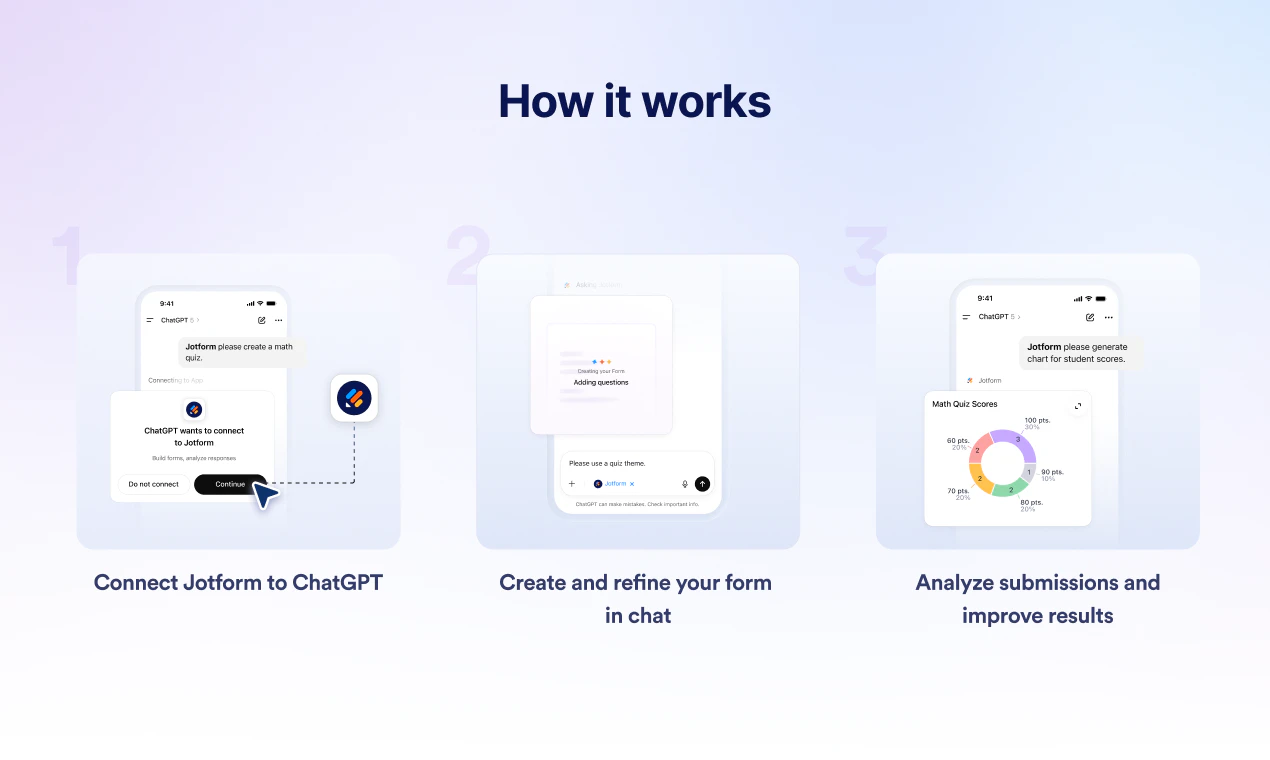

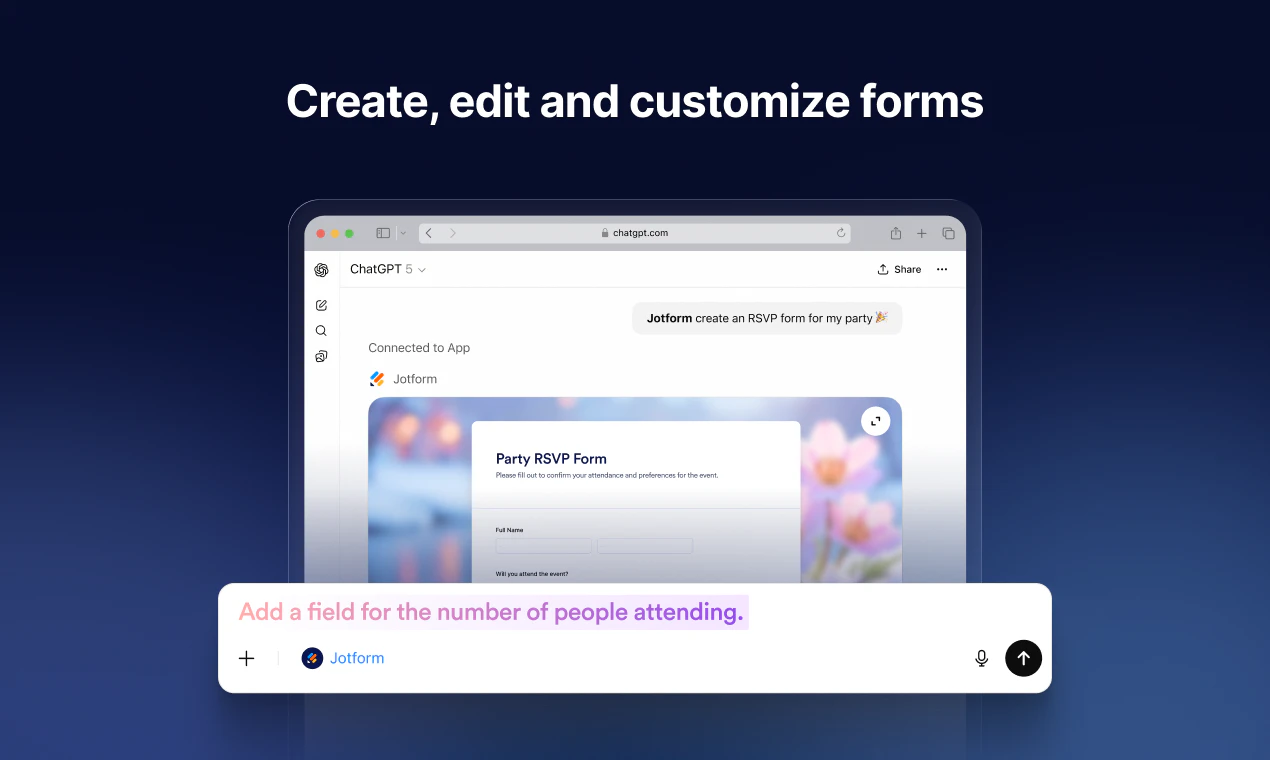

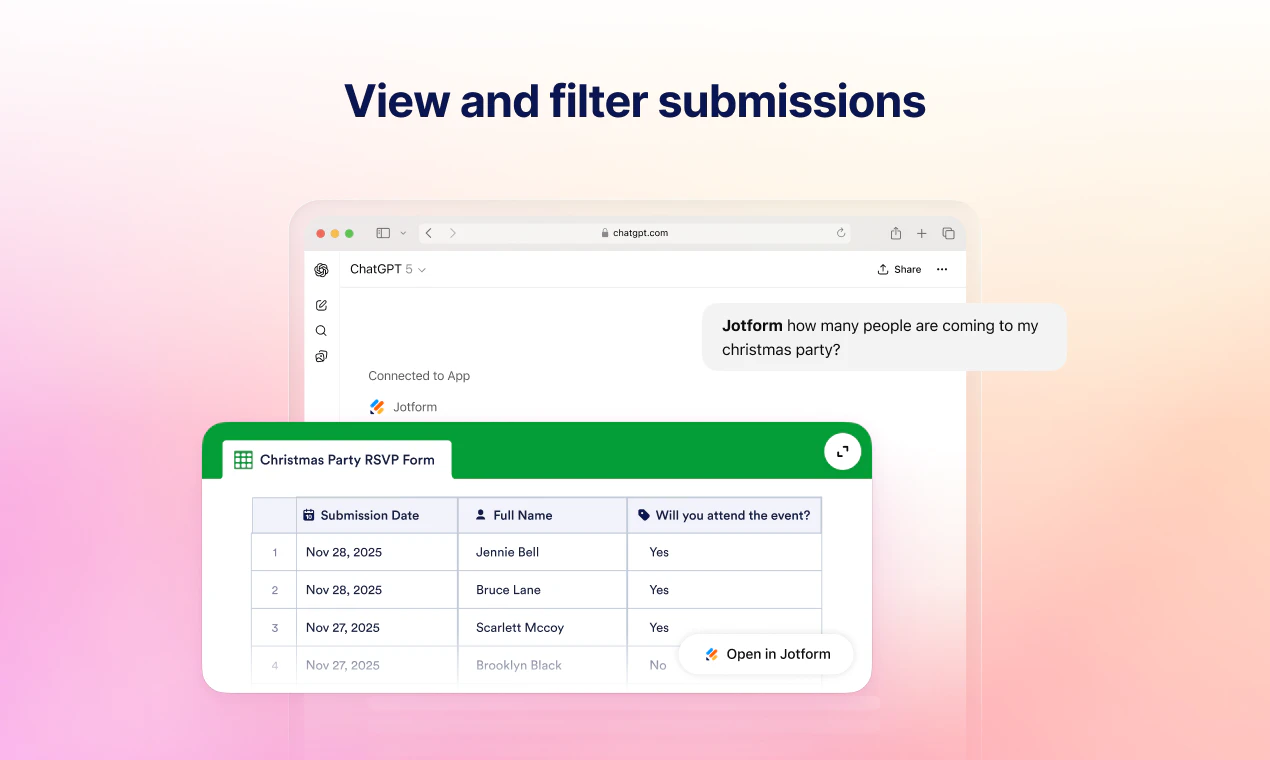

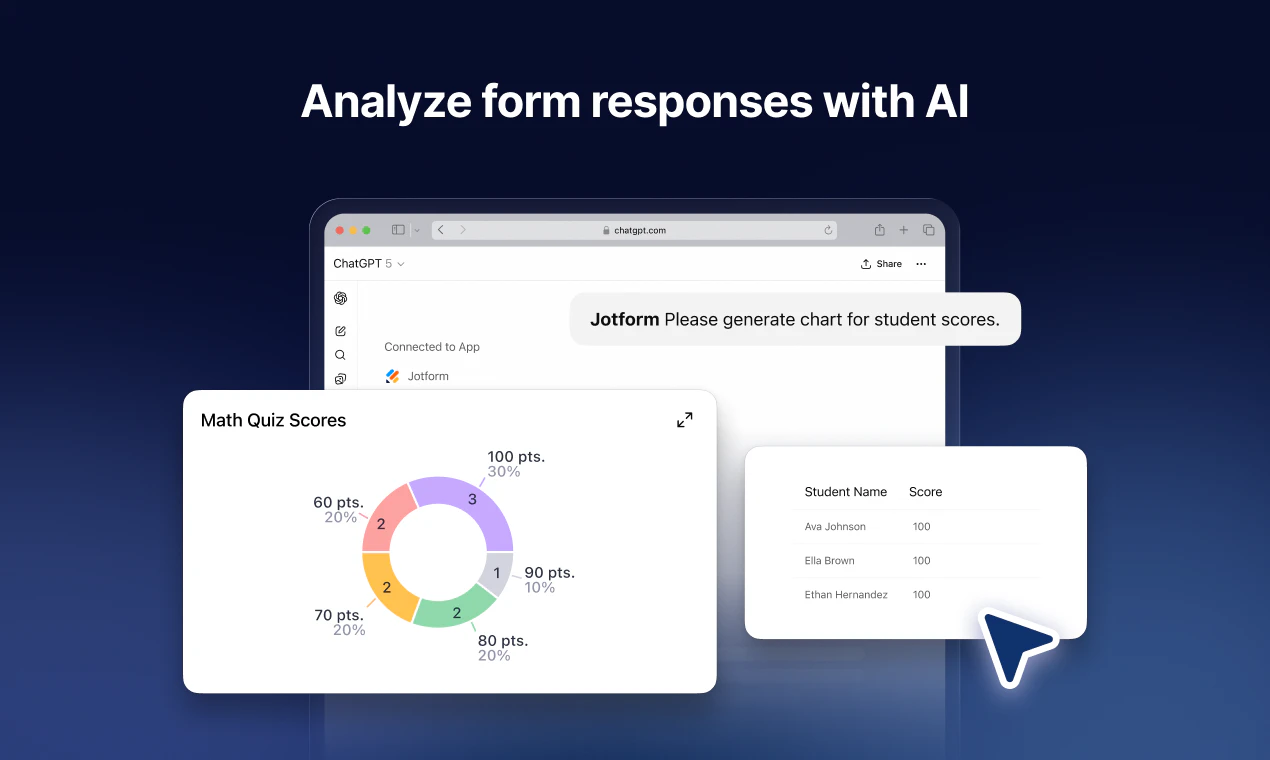

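One-line summary: Create forms and manage submission data directly inside a ChatGPT conversation, turning AI chat into an active data-collection tool and removing the inefficiency of switching between tools to handle structured data.

Productivity

Artificial Intelligence

No-Code

AI form generation

Conversational app

Data collection

Workflow integration

No-code tool

Real-time analytics

ChatGPT plugin

Automation

Team collaboration

User feedback

User comment summary: The comments were posted mainly by the product's founder to introduce the product's ideas and features, and amount to official promotion. There is currently no substantive feedback, question, or suggestion from independent users.

AI Sharp Take

Jotform's move is not a simple feature extension but a clever "jailbreak" of the AI assistant's role. Its core value is the attempt to upgrade ChatGPT from a hub for text generation and Q&A into a lightweight business middle layer with persistent data storage and operations. The product's pitch of "turning passive conversation into active data collection" goes straight at a core weakness of current LLM applications: the tension between the transience of conversations and the continuity of business data.

Its real challenge and test of value, however, lies in the accuracy of "intent detection" and in defining the boundaries of what it may do. Mapping unstructured natural-language instructions onto highly structured form data easily produces misunderstandings, which can make simple edits inefficient or even chaotic. The "real-time dashboard" vision currently looks closer to a data view inside a ChatGPT session; whether its analytical depth and customizability can replace professional BI tools remains to be seen.

In essence, this is a strategy to capture the entry point to users' workflows through the ChatGPT plugin ecosystem. It lowers the barrier to using form tools, but it may hide complex data-management logic behind simple conversation. That is a boon for ordinary users but may add new cognitive load in complex scenarios. Success depends on its ability to balance "smart enough" with "controllable enough"; otherwise it could easily end up as a showy feature demo.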

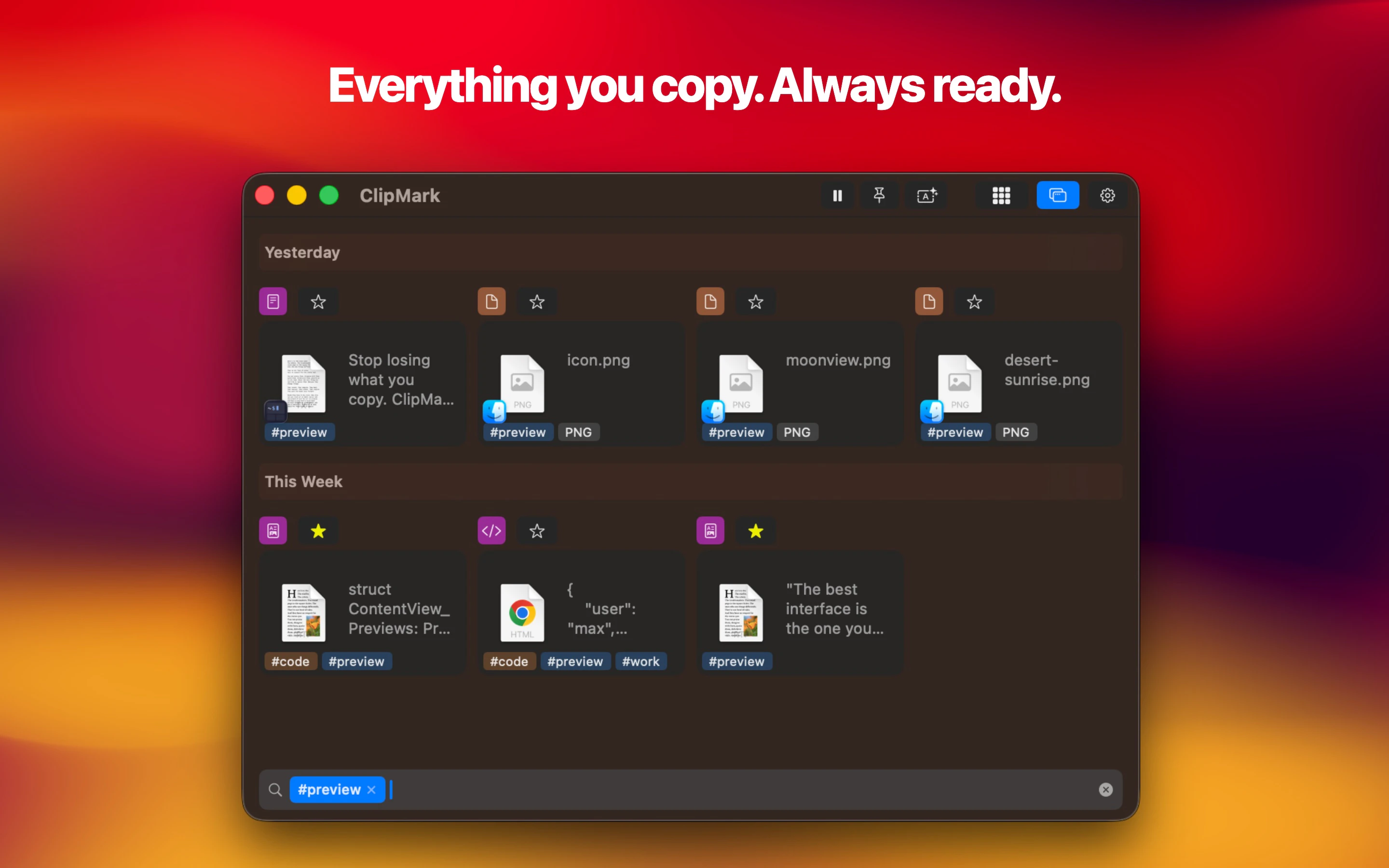

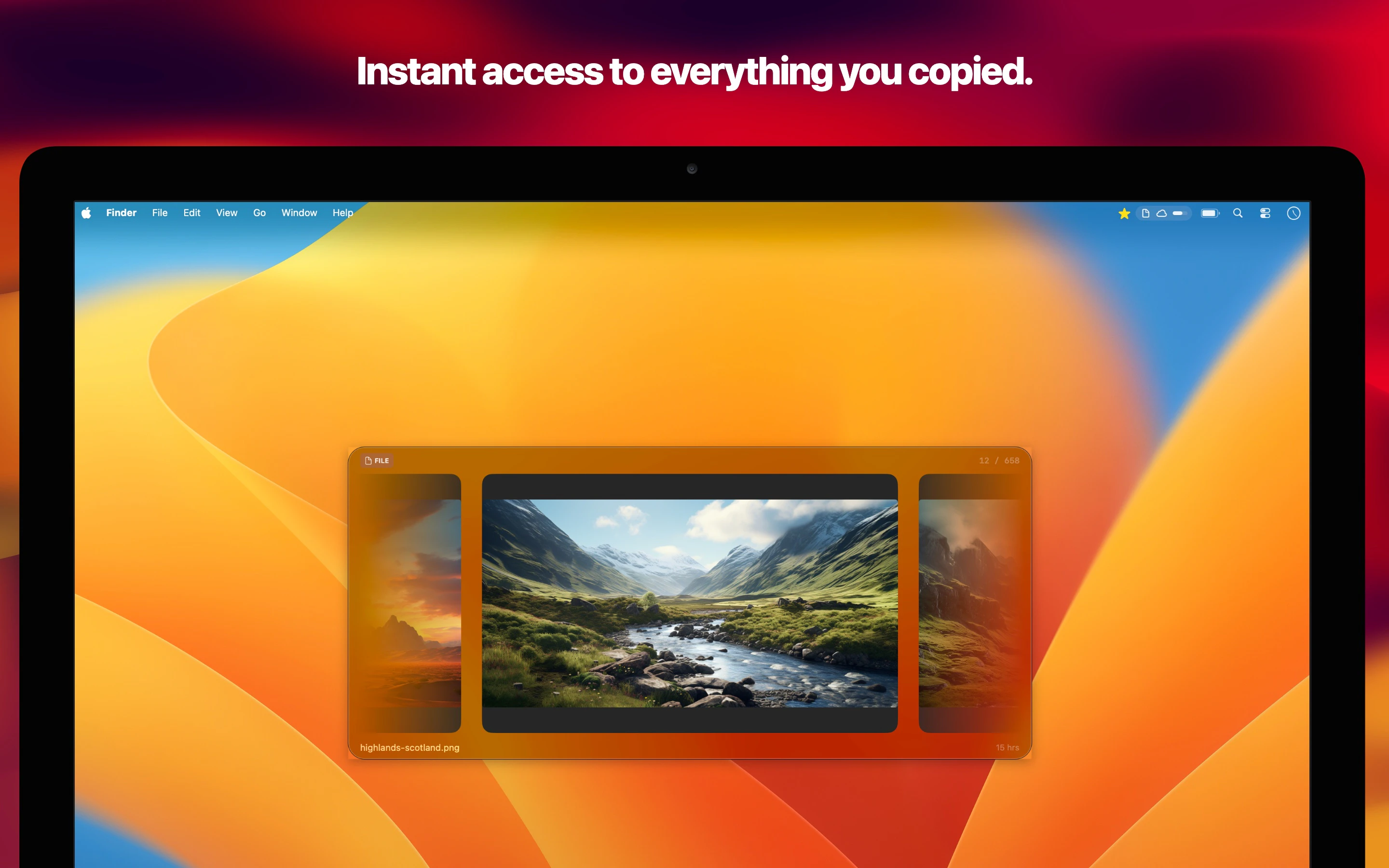

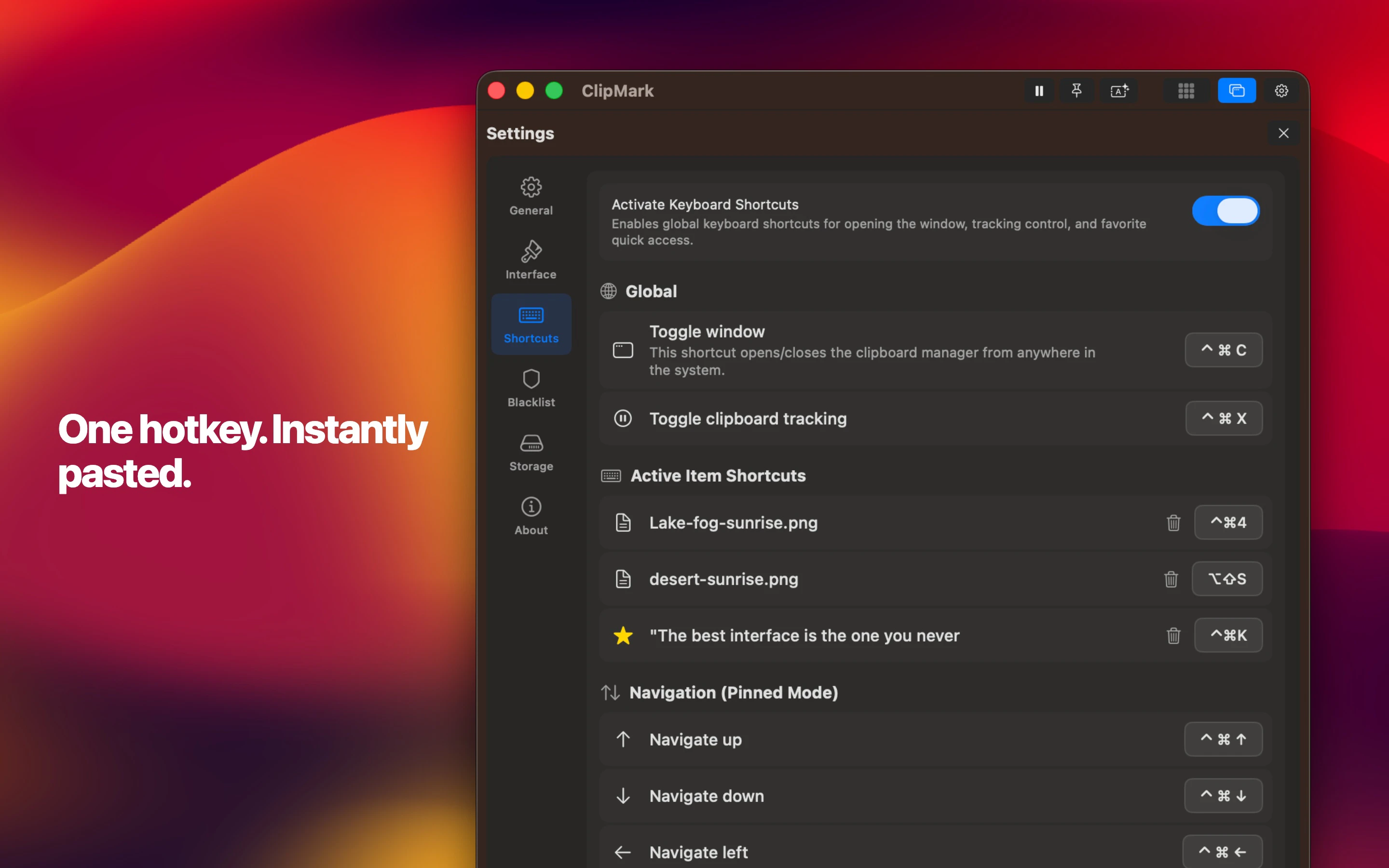

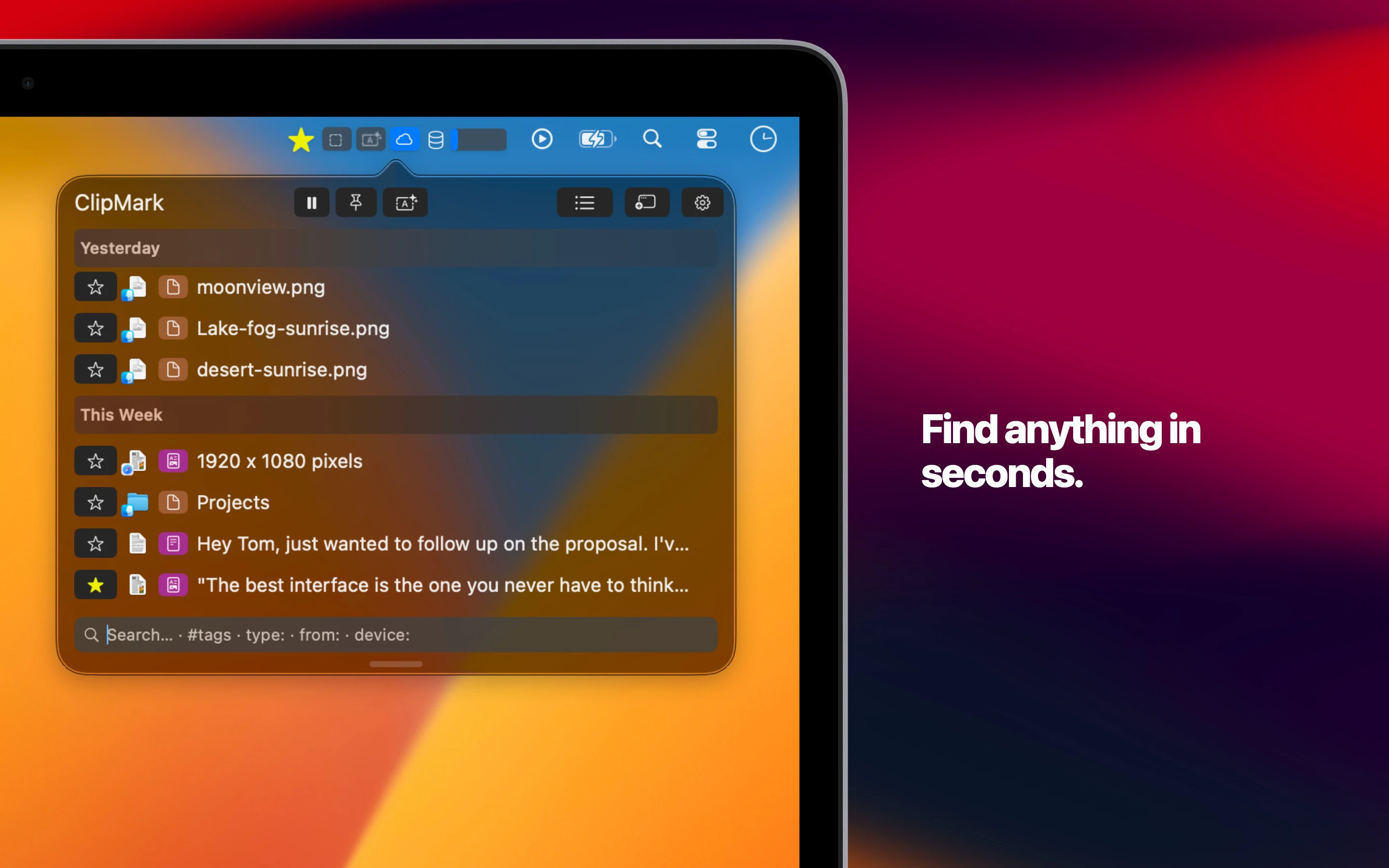

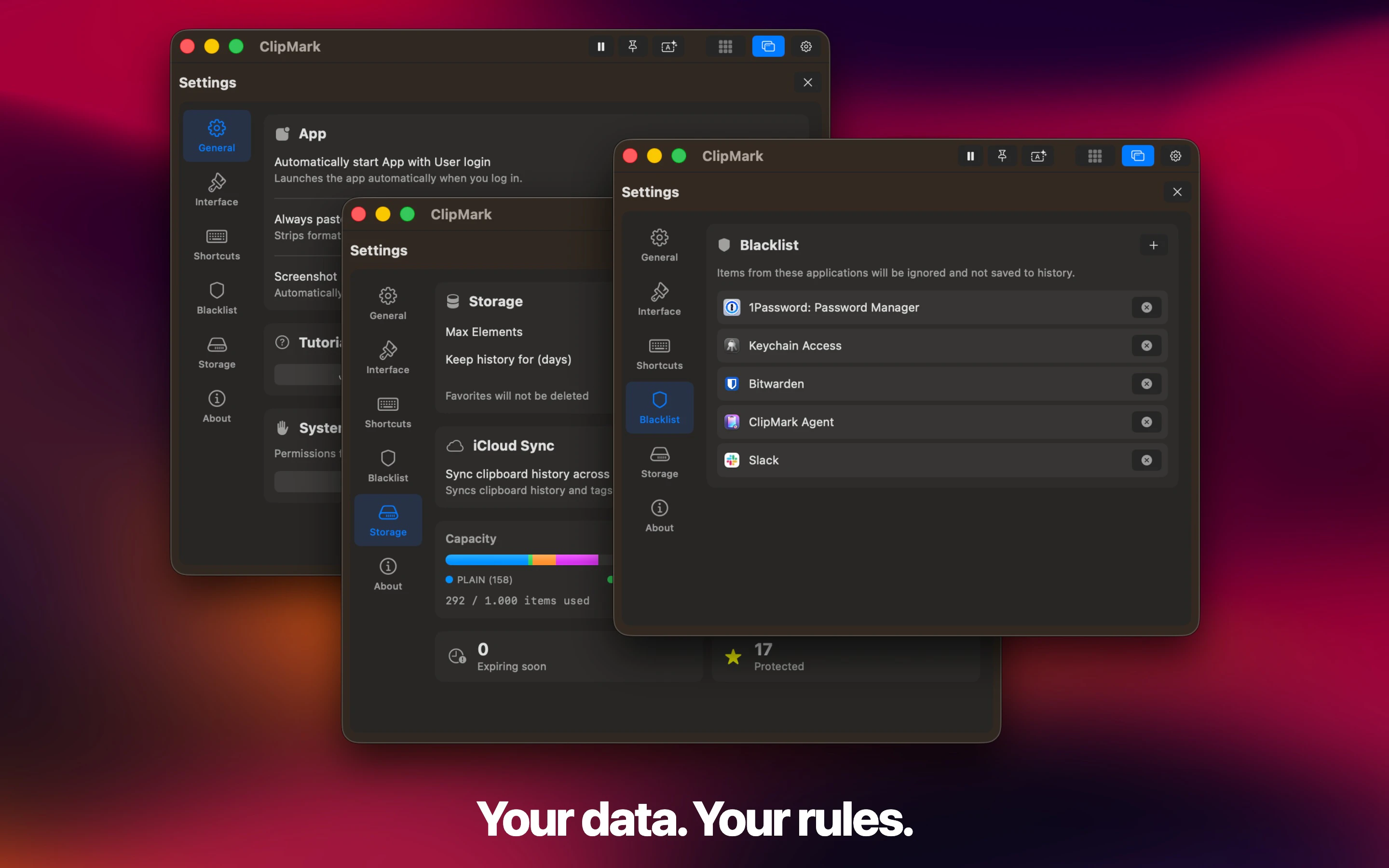

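One-line summary: ClipMark is a macOS clipboard manager that lets users browse and paste clipboard history quickly with a keyboard shortcut, solving the problem of copied content being easily lost and hard to reuse.

Mac

Productivity

Developer Tools

Clipboard manager

macOS app

Efficiency tool

Quick paste

Content search

Tag categorization

Code snippet management

Screenshot saving

Productivity boost

User comment summary: The developer personally introduced the product philosophy, stressing the dual design logic of "quick recall" and "search control." The other comment is a thank-you from a first-time launcher. No users have yet given direct feedback on problems or suggestions.

AI Sharp Take

ClipMark enters a well-worn field that still has real pain points: clipboard management. Its "never lose anything" claim goes to the core: because the system clipboard holds only one item at a time, important information is often overwritten by accident as users move it across apps and over time. The product's "Quick Recall" interaction (hold the shortcut, scroll to preview, release to paste) is the highlight. It tries to balance "fast retrieval without thinking" against "fine-grained management that requires organization," which is exactly the key dividing line for tools of this kind.

Its real challenge, however, is not implementing features but the cost of changing user habits and a crowded, competitive market. Conflicts between system-wide shortcuts and users' existing muscle memory, the potential system-resource cost of running continuously, and differentiation against mature competitors such as Paste and CopyClip are all issues it must face. Judging by the comments, it is still in a developer-led promotion phase and lacks stress-test feedback from real users. Whether the product's value holds up depends on whether "Quick Recall" is smooth enough to make users change their habits, and whether its "powerful search" stays accurate and fast after long-term accumulation. If it stops at being one more "good enough" clipboard manager, it will likely remain niche. It needs to prove that it is not a mere pile of features but a smart hub that fits seamlessly into, and genuinely improves, the information workflow.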

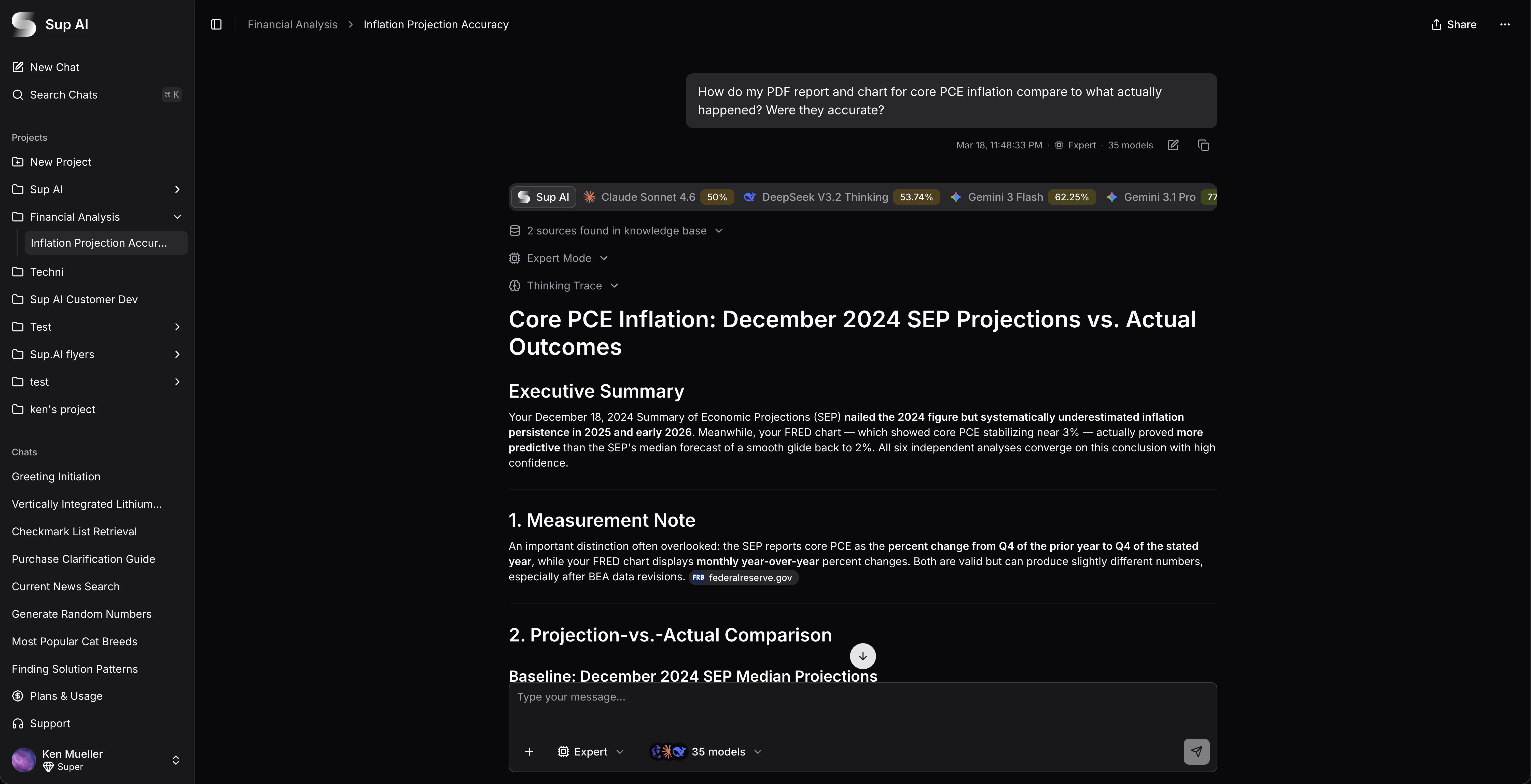

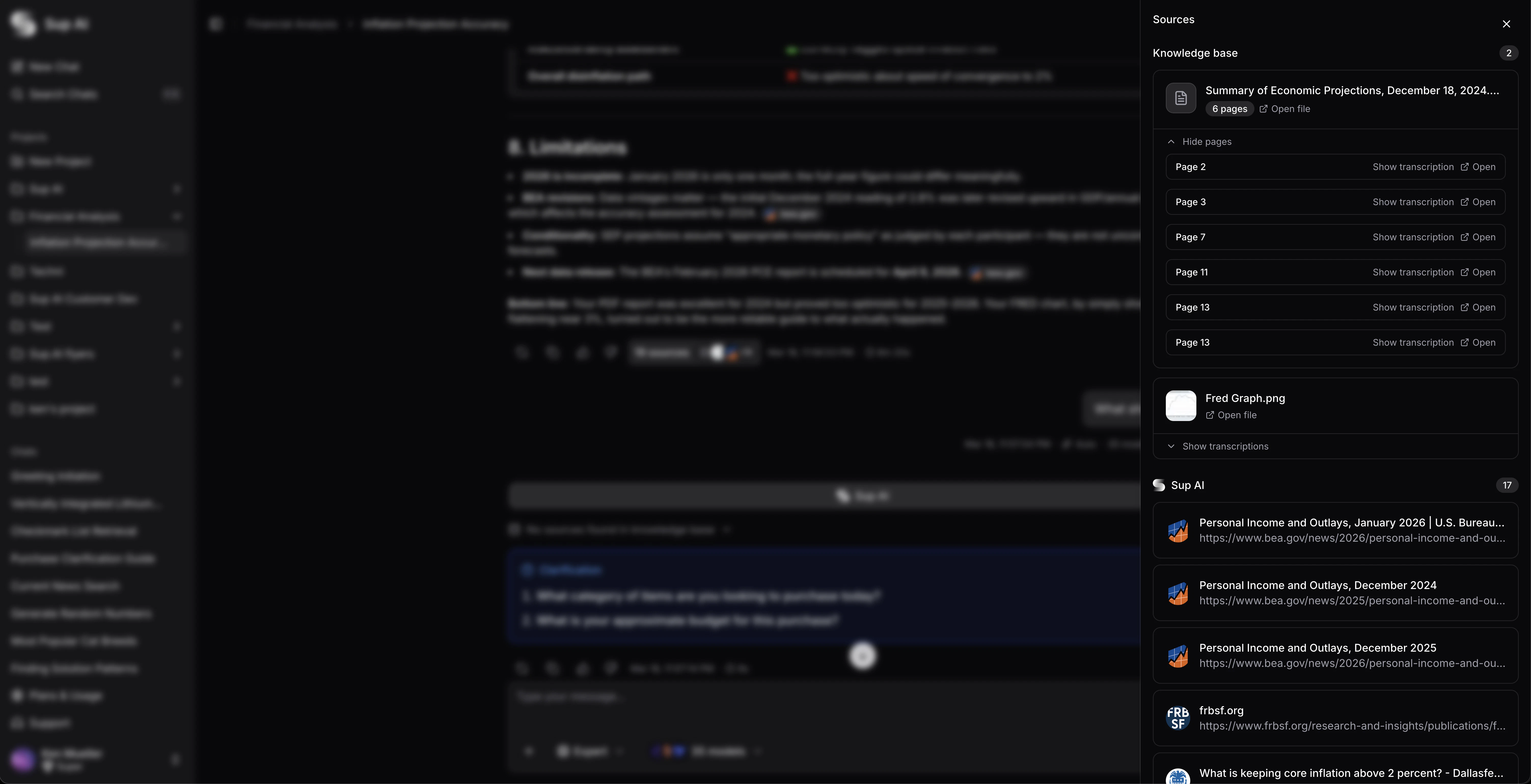

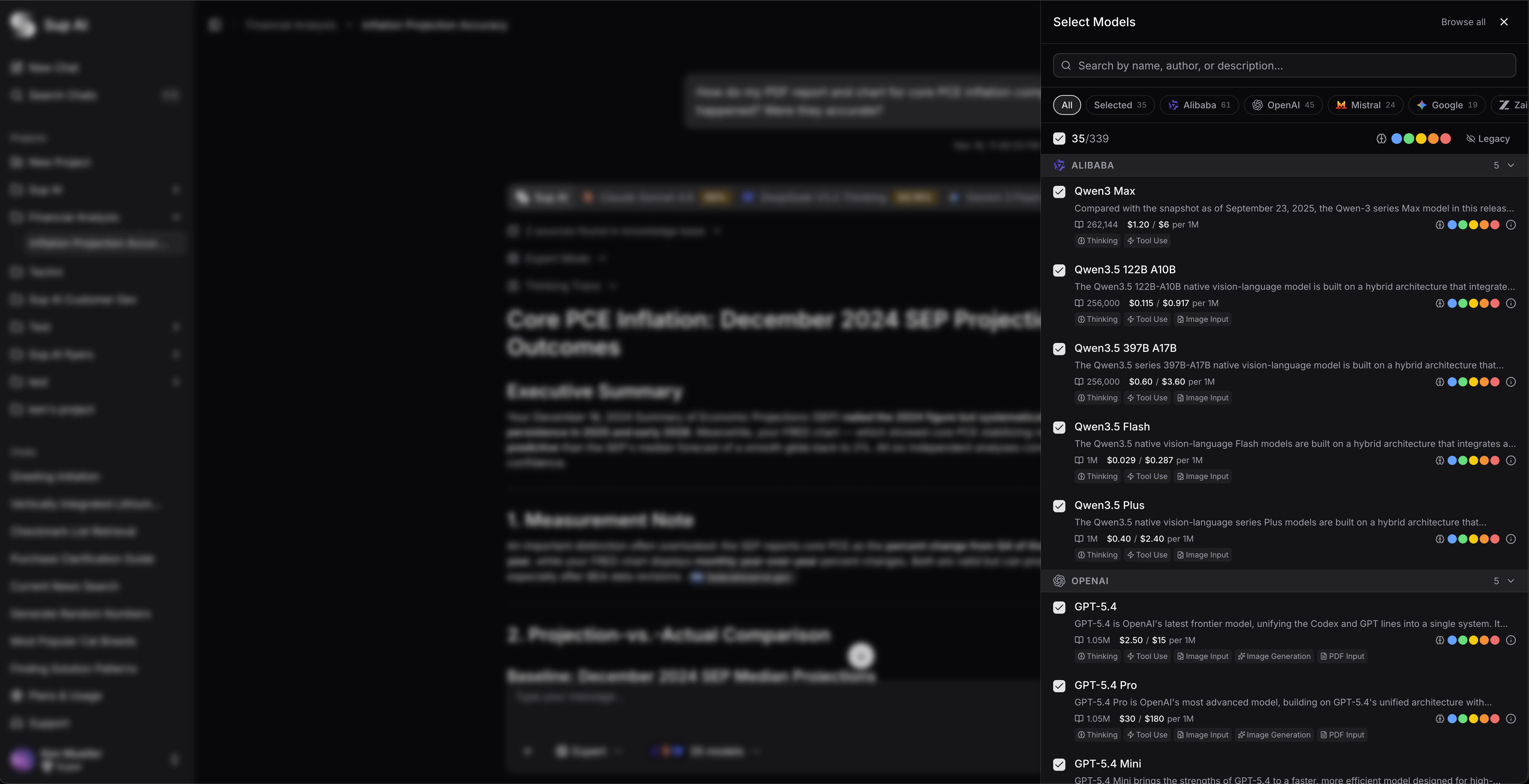

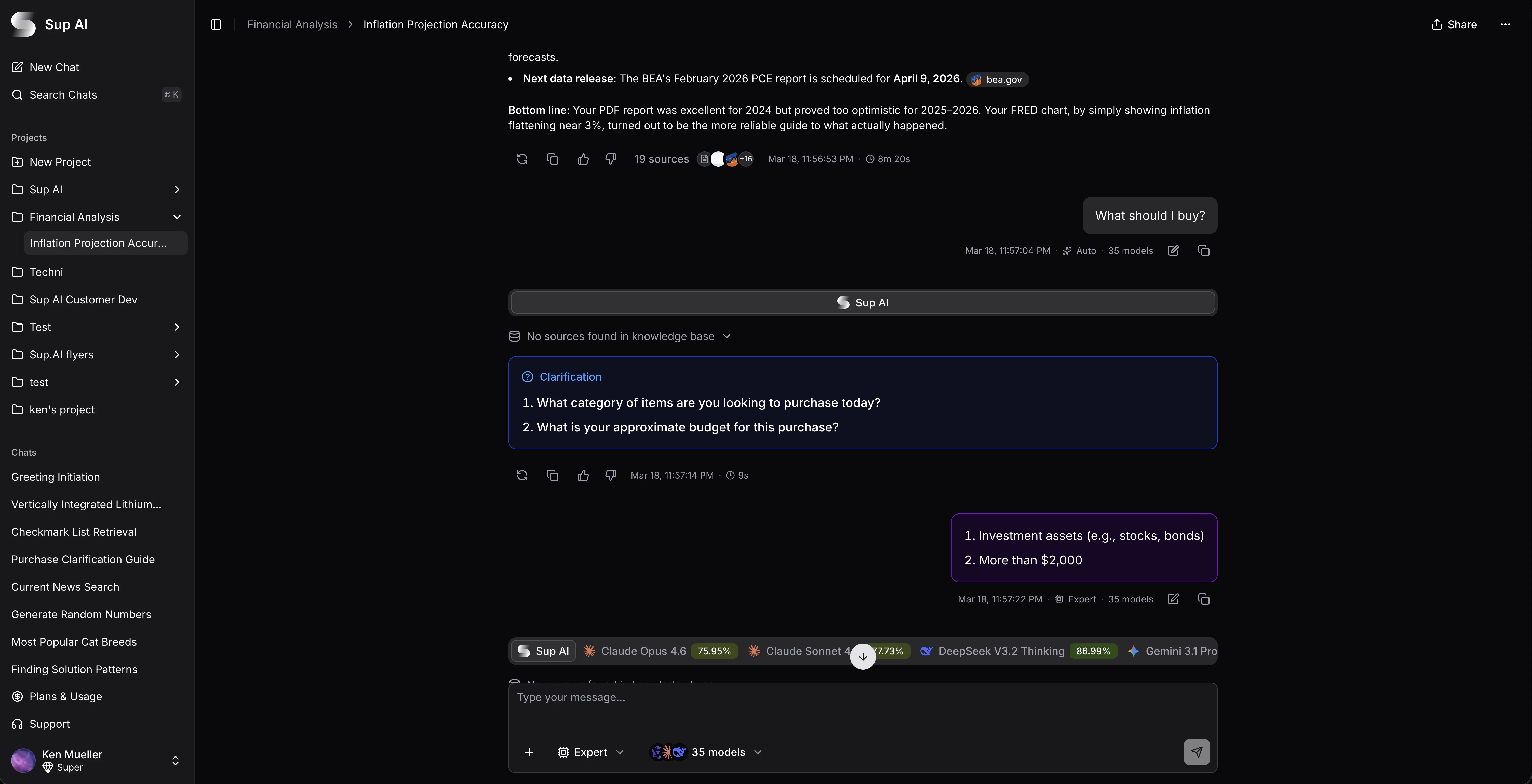

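One-line summary: Sup AI runs multiple large language models in parallel and synthesizes an answer by weighting them according to confidence entropy, markedly improving accuracy and reliability in AI question answering that demands high precision and low hallucination (such as handling sensitive information or academic research).

Productivity

Writing

Artificial Intelligence

AI model ensemble

Hallucination suppression

Confidence weighting

Entropy analysis

Accuracy improvement

Multi-model parallelism

Academic research tool

Information verification

API aggregation

Cost optimization

User comment summary: Users see its value for handling sensitive information. The main questions are: 1. whether it integrates deterministic tools (such as code execution) to ensure numerical accuracy; 2. the token consumption and cost optimization of running multiple models. The founder replied that the synthesizer can call code execution for verification, and explained that cost is controlled by using combinations of cheaper models and prompt caching.

AI Sharp Take

Sup AI's core selling point is not model innovation but engineering integration and a clever application of probability and statistics. It picks up on a central tension in current LLM development: the more capable the model, the harder its "black box" hallucinations are to eliminate, while different models' error patterns are not fully correlated. By calling many models in parallel (reportedly 339) and weighting their synthesis by the entropy of the output token probability distributions, it effectively quantifies and fuses "model consensus" with "internal confidence," trying to use statistical regularities to hedge the hallucination risk of any single model.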
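The idea of entropy-weighted synthesis can be illustrated with a minimal sketch. This is not Sup AI's actual implementation, which the source does not describe in detail; the function names (`mean_token_entropy`, `entropy_weighted_vote`), the `exp(-entropy)` weighting scheme, and the sample numbers are all assumptions made for illustration. Each model contributes an answer plus per-token probability distributions, and models whose distributions have lower average entropy (that is, are more confident) get a larger vote.

```python
import math
from collections import defaultdict


def mean_token_entropy(token_distributions):
    """Average Shannon entropy (in nats) over a model's per-token distributions."""
    entropies = [
        -sum(p * math.log(p) for p in dist if p > 0)
        for dist in token_distributions
    ]
    return sum(entropies) / len(entropies)


def entropy_weighted_vote(candidates):
    """Return the answer with the highest total weight, plus all scores.

    candidates: list of (answer, token_distributions) pairs, one per model.
    Weight = exp(-mean entropy): an assumed scheme in which lower entropy
    (higher confidence) gives a larger weight.
    """
    scores = defaultdict(float)
    for answer, dists in candidates:
        scores[answer] += math.exp(-mean_token_entropy(dists))
    best = max(scores, key=scores.get)
    return best, dict(scores)


# Hypothetical outputs from three models (made-up numbers).
candidates = [
    ("Paris", [[0.97, 0.02, 0.01], [0.9, 0.1]]),  # confident
    ("Paris", [[0.6, 0.3, 0.1], [0.7, 0.3]]),     # moderately confident
    ("Lyon", [[0.4, 0.3, 0.3], [0.5, 0.5]]),      # uncertain
]
best, scores = entropy_weighted_vote(candidates)
print(best, scores)
```

In this toy setup, agreement between models and low entropy both push an answer's score up, which mirrors the "consensus plus confidence" fusion described above. A real system would also need a fallback for APIs that do not expose token probabilities.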

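Its claim to lead the best single model by a wide margin on the "Humanity's Last Exam" benchmark should be viewed soberly. First, the benchmark is not widely known to the public, and its representativeness and authority remain to be tested. Second, the method depends heavily on whether an API exposes token probabilities (logprobs); for models that do not, the probabilities must be estimated, which introduces new uncertainty. Finally, its business model has shifted from "free but abused" to "prepaid verification." That is understandable, but raising the entry barrier from zero straight to 10 US dollars is a gamble in a fiercely competitive AI tools market and may turn away many curious early users.

The real value is in the idea: moving AI applications from the mindset of "seeking the single best model" to "building a dynamic network of models and a decision mechanism." But the ceiling is plain: 1. cost and latency are in inherent tension, since running many models in parallel necessarily adds overhead, and although the team says it has optimized this, it remains at a clear disadvantage compared with a single-model call; 2. its reliance on the correlation between entropy and accuracy is still empirical, and whether that holds across domains and question types is doubtful; 3. it does not fundamentally solve LLMs' factual errors but only filters and down-weights them probabilistically, so for blind spots or training-data biases shared by all the models, the method may fail.

In short, Sup AI is a clearly conceived, engineering-driven product aimed at a specific pain point (the need for high accuracy), more like "quality-control middleware for AI answers." It shows one direction for the era after large models: model orchestration and fusion. But its long-term viability depends not only on how well the technique generalizes but also on finding a sweet spot among cost, speed, and accuracy that the market will widely accept.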

一句话介绍:一款基于Metal GPU加速的macOS原生屏幕录制与多轨编辑一体化工具,为需要快速制作专业级演示视频的创作者解决了在多个软件间切换、手动精细编辑的效率痛点。

Design Tools

Social Media

Marketing

屏幕录制

视频编辑

macOS原生应用

GPU加速

AI自动化

品牌化工具

一次性付费

开发者工具

效率工具

用户评论摘要:用户普遍赞赏其原生性能、轻量体积及智能缩放功能。主要问题与建议包括:询问多品牌项目切换的便捷性、对模拟器设备框架支持的期待、以及自动化与手动控制之间的平衡。开发者积极回应,并提及已修复权限问题。

AI 锐评

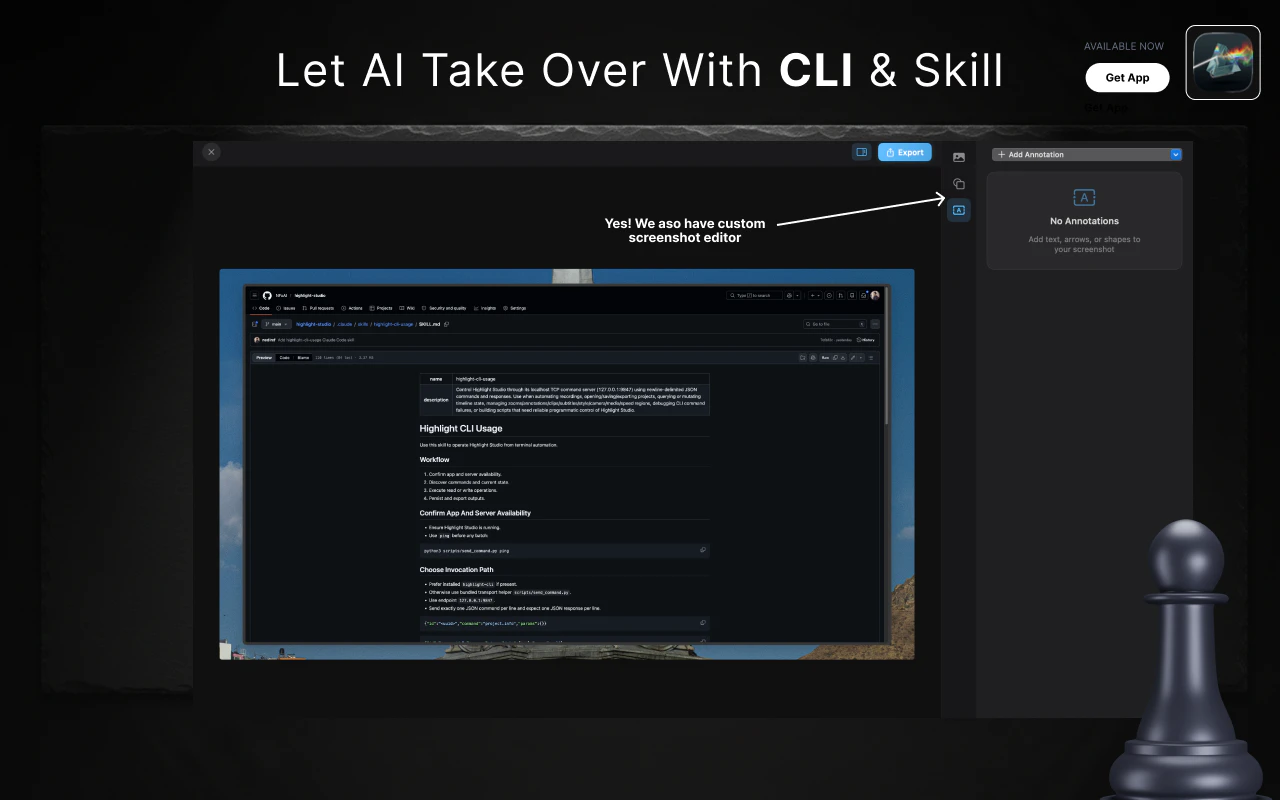

Highlight Studio的野心,在于用极致的原生技术栈(Swift+Metal)和AI自动化,试图重新定义“轻量级”屏幕录制编辑工具的天花板。它精准切入了一个市场缝隙:介于功能简陋的录屏工具与过于复杂的专业非线性编辑软件之间。其核心价值并非功能堆砌,而是通过“Smart Zoom”等基于系统级交互感知的AI功能,将高频、耗时的手动操作(如关键帧)转化为后台自动化流程,真正实现了“录制即开始编辑”的流畅体验。

然而,其真正的挑战在于定位的可持续性。一方面,它用终身买断制对抗订阅制,以原生性能对抗Electron套壳,这赢得了技术爱好者和预算敏感用户的好感。但另一方面,其“一体化”工具属性也可能面临两端挤压:上,有专业软件更强大的编辑能力;下,有操作系统原生录屏功能的免费与便捷。其提出的“40+ CLI命令供AI智能体编程操作”是一个极具前瞻性的差异化思路,试图将自己从用户工具升级为AI工作流的基础设施,但这部分需求的真实市场规模仍需验证。

总体而言,这是一款在技术执行上相当犀利的产品,抓住了效率创作者的核心痒点。但它能否从一个出色的工具成长为一个持久的品牌,取决于其能否围绕“AI赋能的内容创作流水线”构建更深的护城河,并妥善解决多场景、多客户端的细节兼容性问题。

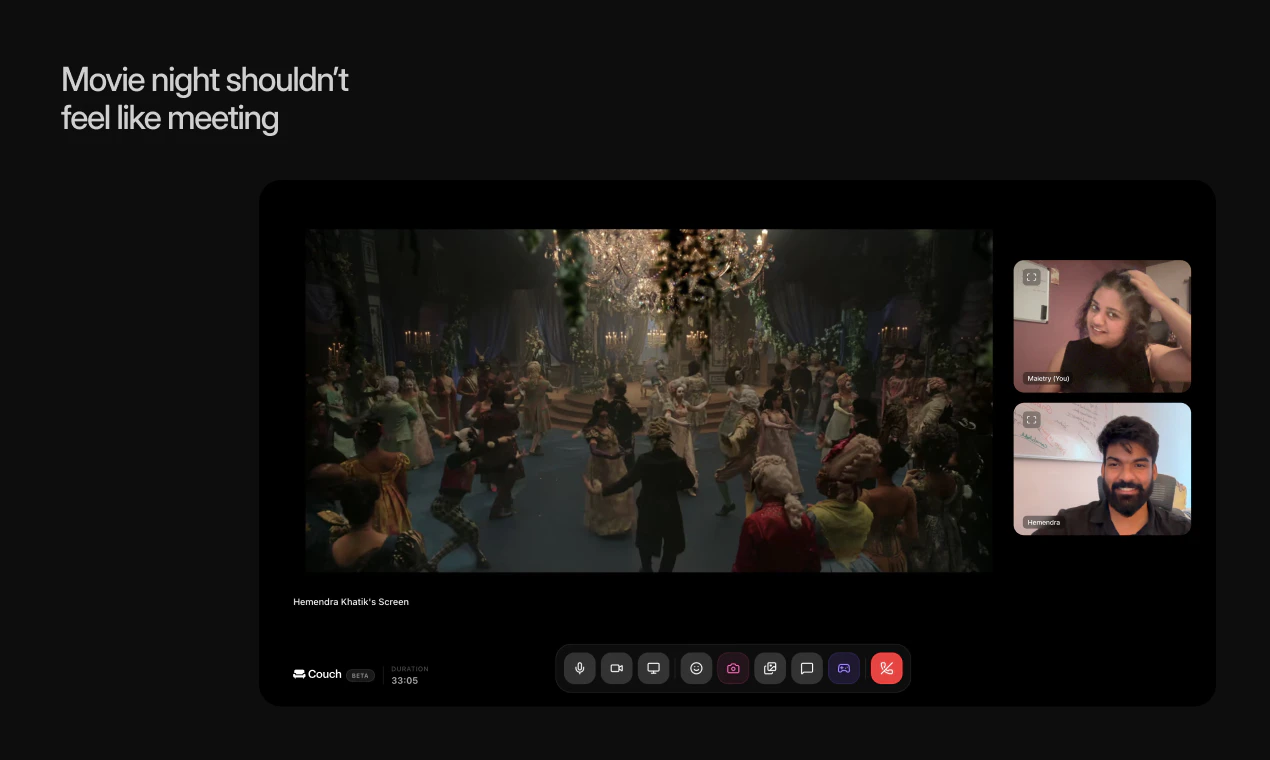

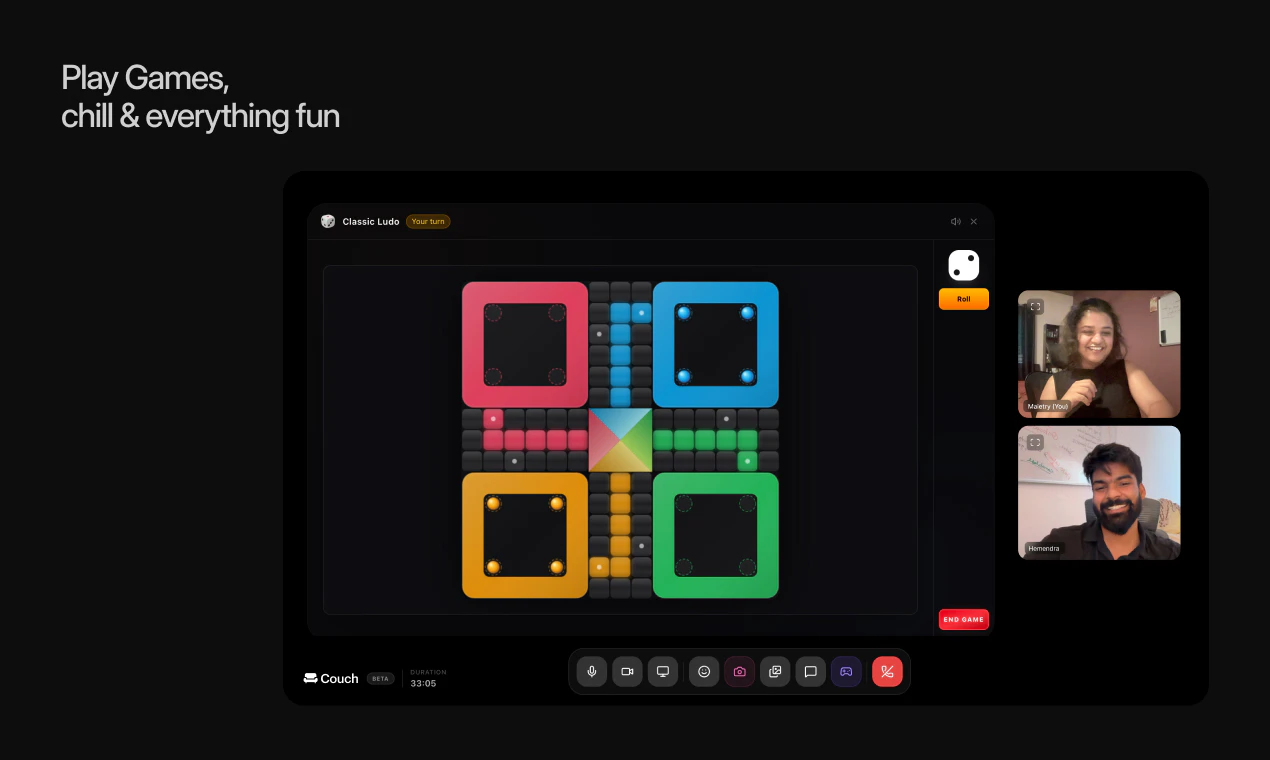

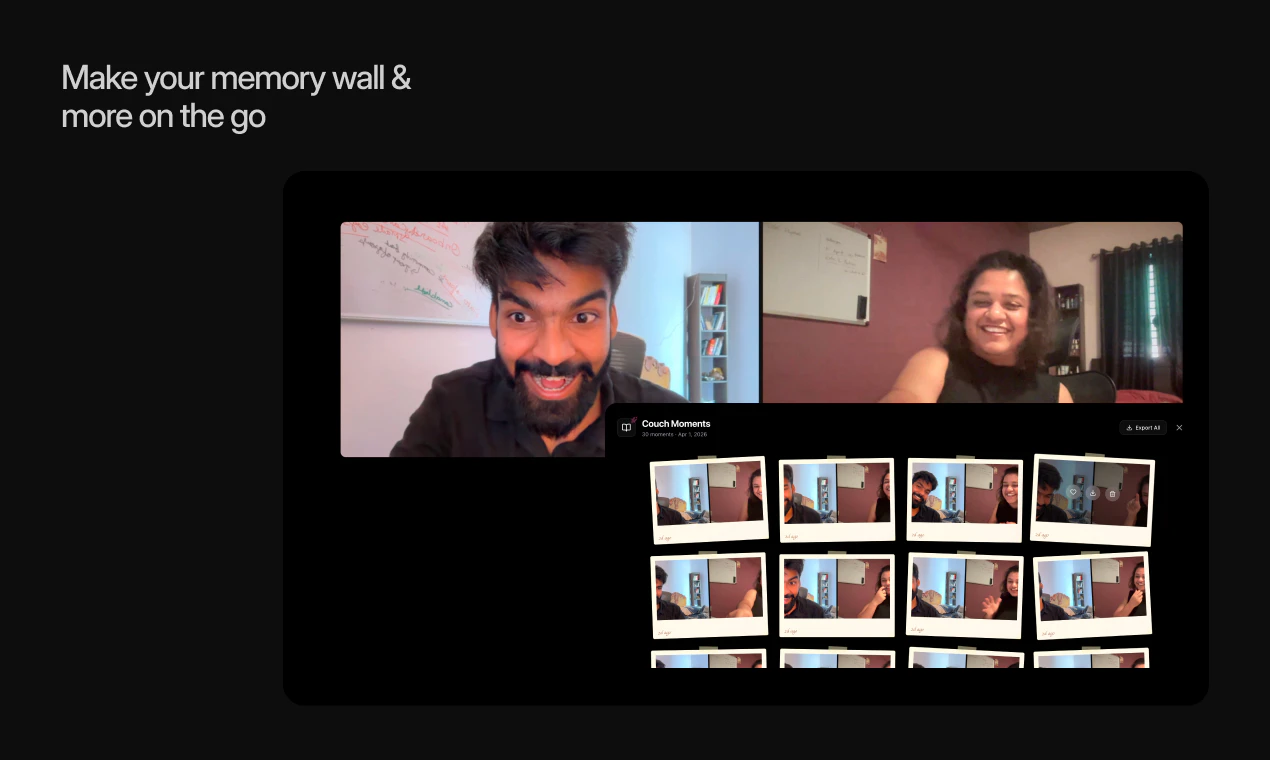

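One-line summary: Couchspace is an online shared space that combines synchronized viewing, built-in games, and live chat, giving friends and family in different places a simple, frictionless way to get together online and fixing the "feels like a meeting," disjointed experience of traditional video tools.

Movies

Streaming Services

Games

Online socializing

Virtual rooms

Synchronized viewing

Casual games

Remote gatherings

Digital couch

Integrated experience

Emotional connection

User comment summary: Users widely appreciate the warm, "not a meeting" experience, which fixes the patched-together feel of remote movie nights and gatherings. The main questions and suggestions concern: how it handles sharing restrictions on streaming platforms (such as Netflix); whether more board games will be integrated; and questions about time-zone handling and asynchronous connection features.

AI Sharp Take

Couchspace's ambition is not to be a feature-stuffed "Swiss Army knife" but to become the "emotional couch" of the digital age. Its real value lies in integrating scenarios rather than features. It recognizes that tools such as Zoom and Discord are at heart efficiency-oriented "digital meeting rooms" or "game command centers," whose UX design language fundamentally conflicts with the emotional need to relax and simply be together.

The product packages watching, gaming, and chatting into a lightweight container called a "room"; its core innovation is seamless switching within the "experience flow." Users need not hop between Netflix, game platforms, and messaging apps while coordinating over and over, which lowers the operational cost and mental burden of remote socializing and preserves the immersion of being together. Judging by the comments, this "not like a meeting" experience strikes an emotional chord and is key to the product's early resonance.

Its growth, however, faces a double challenge. On the surface is the technical and copyright-compliance challenge, such as the streaming sharing restrictions raised in the comments, which may leave it dependent on others in its core viewing scenario; it needs to explore legally compliant sync solutions or deepen cooperation with streaming platforms. Deeper down is the tension between scenario depth and retention. The "digital couch" concept is fresh, but if the built-in games and basic social features lack depth and regular updates, the novelty will fade. It must answer: once the delight of meeting up wears off, what keeps users around? Exclusive social games? Or evolving into community spaces built around shared interests?

Overall, Couchspace is a worthwhile experiment in experience design. It shows that in remote interaction, emotional experience can take priority over feature completeness. Its success will depend on whether, while keeping its lightweight, pressure-free character, it can build content or interaction loops attractive enough that users not only come to get together but want to keep coming back.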

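One-line summary: A standalone games app from Netflix for children aged 8 and under that builds interactive worlds on its popular kids' IP and gives parents an ad-free, in-app-purchase-free, safe entertainment option when there is no network (for example while traveling).

Kids

Education

Games

Children's educational entertainment

Standalone app

Ad-free

No in-app purchases

Offline games

IP spin-off

Subscription add-on

Parent-friendly

Preschoolers

Interactive experience

User comment summary: Users welcome the offline capability as practical for travel. Their main concern is how often game content will be updated and whether it stays engaging over time. One commenter astutely noted that its strategic value lies in building a closed "watch + play" IP ecosystem.

AI Sharp Take

Netflix Playground is no simple game bundle; it is a key move by Netflix to upgrade its large library of children's IP from "content consumption" to "interactive experience." It targets an overlooked pain point: in a children's app market full of ads, in-app purchases, and mandatory connectivity, it offers a clean, safe, offline-capable "digital playground." In essence, it quietly extends the value of the subscription from the right to watch to the right to experience.

What is really sharp is the ecosystem play. By turning well-known IP such as Peppa Pig and Sesame Street into games, Netflix is trying to create a loop of "watch, play, reinforce recognition, love watching even more," deepening children's emotional attachment to the platform and their time spent on it, and building a deeper moat. The move not only raises stickiness and membership value but also explores the long-term lifecycle value of IP, pushing the streaming wars deeper into a battle for users' time and attention.

The challenges are just as clear. As a latecomer, whether the quality and originality of its games can match rivals that have focused on children's educational games for years (such as Khan Academy Kids) remains to be seen. In addition, bundling it "free" with membership is attractive but may weaken the drive to invest in it as a standalone product. Without a steady supply of high-quality new games, it may end up as a glossy membership perk rather than a product that truly changes the category. Netflix needs to prove that it is not just a porter of IP but a creator of interactive experiences for children.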

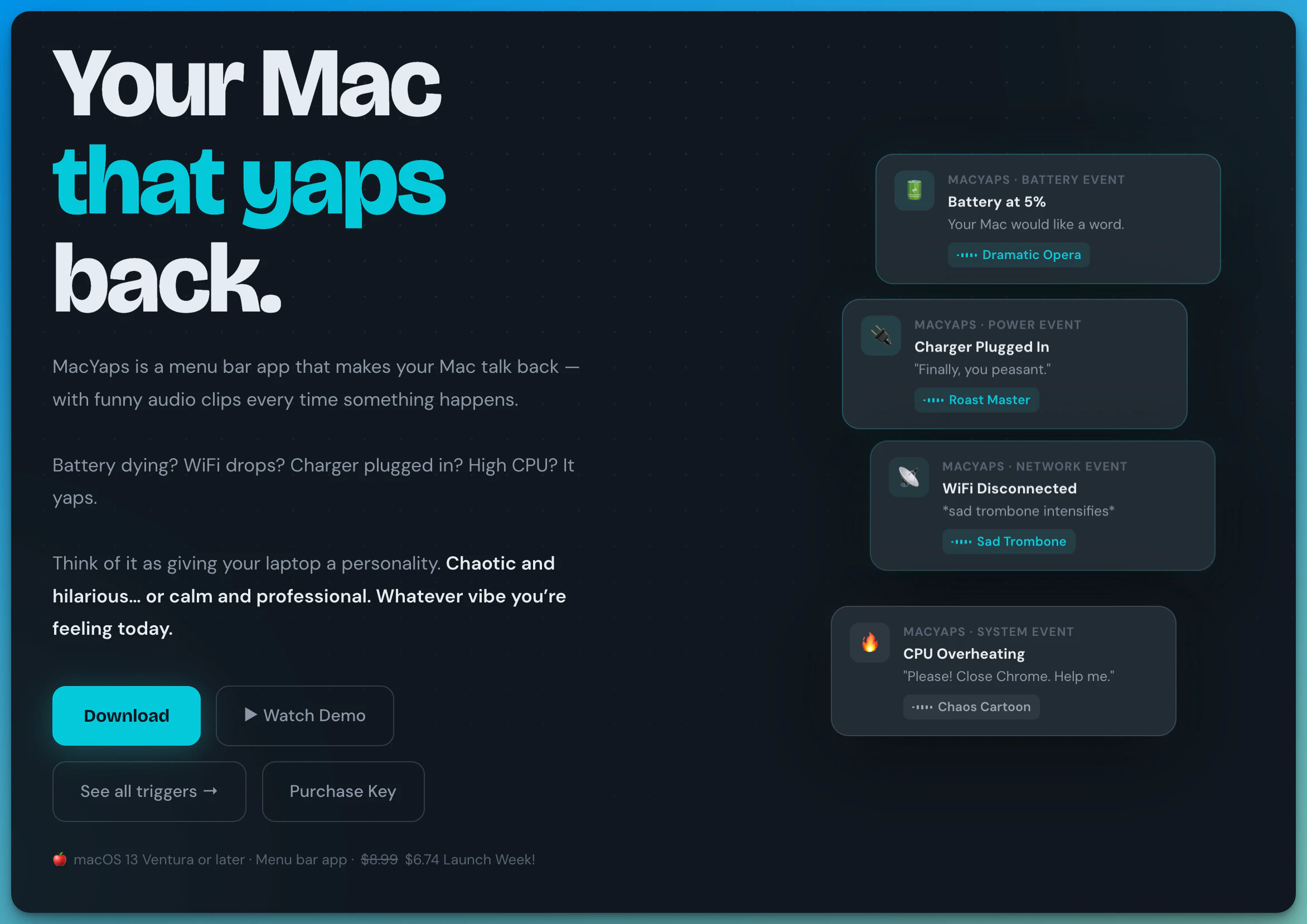

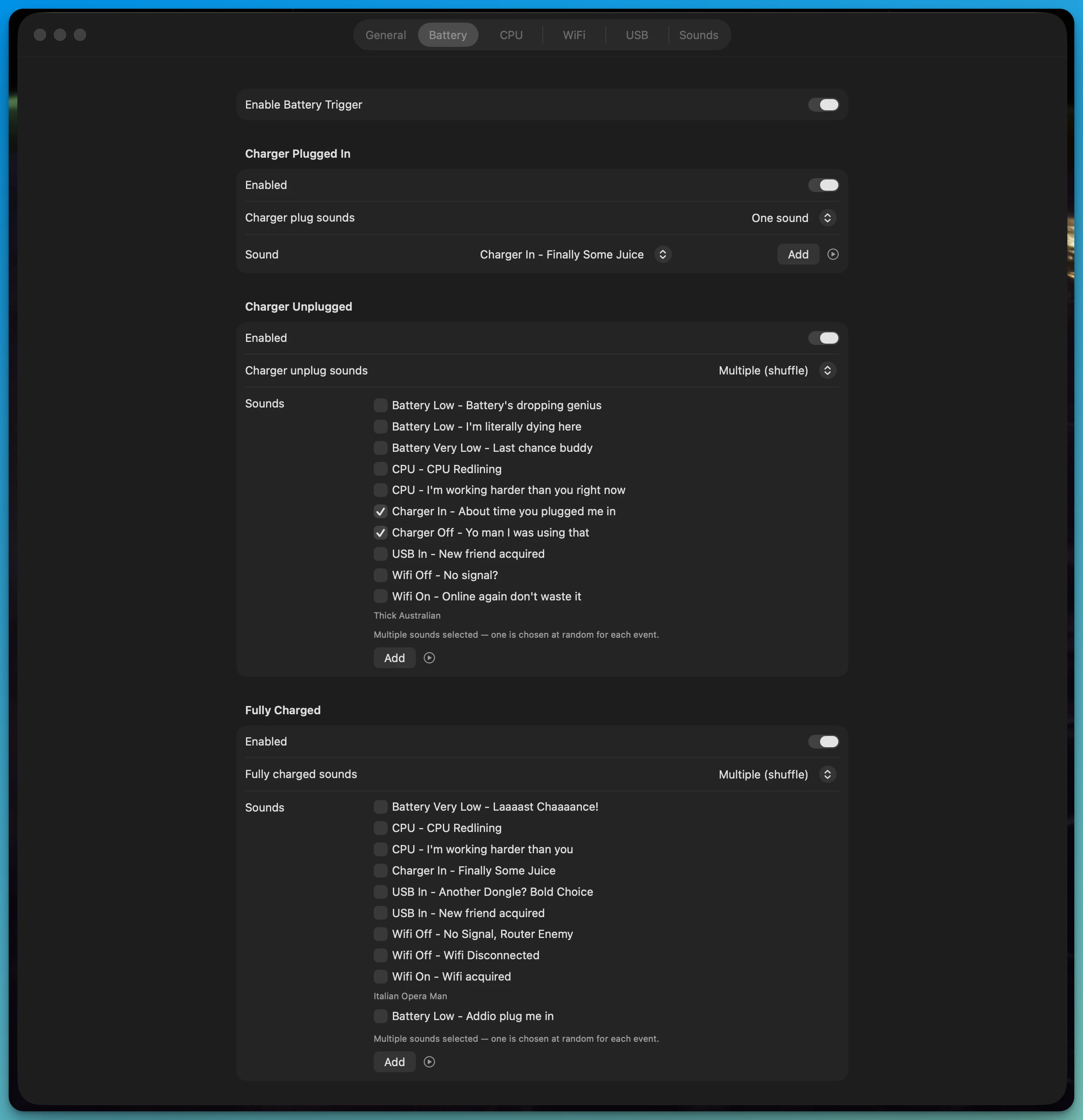

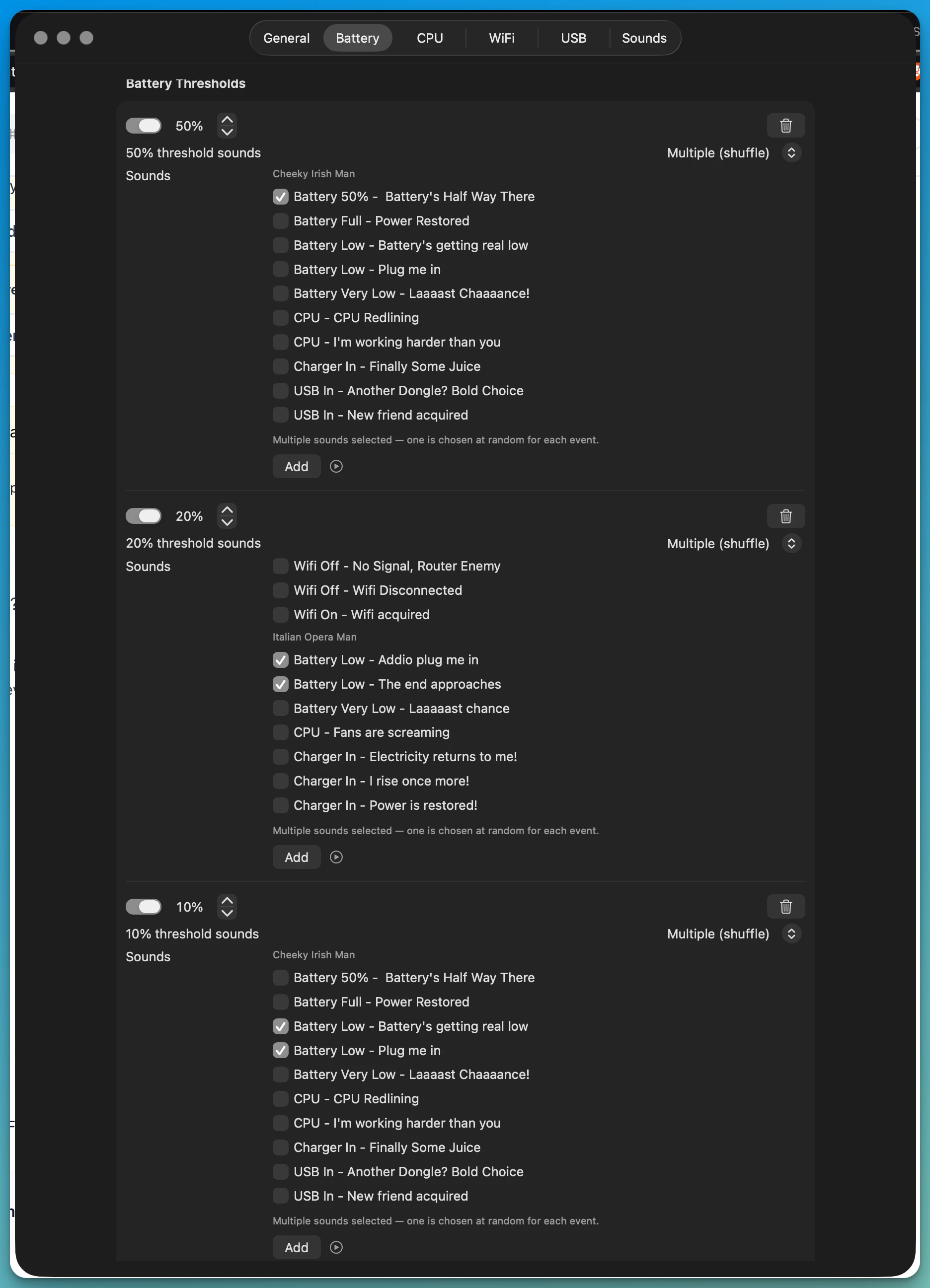

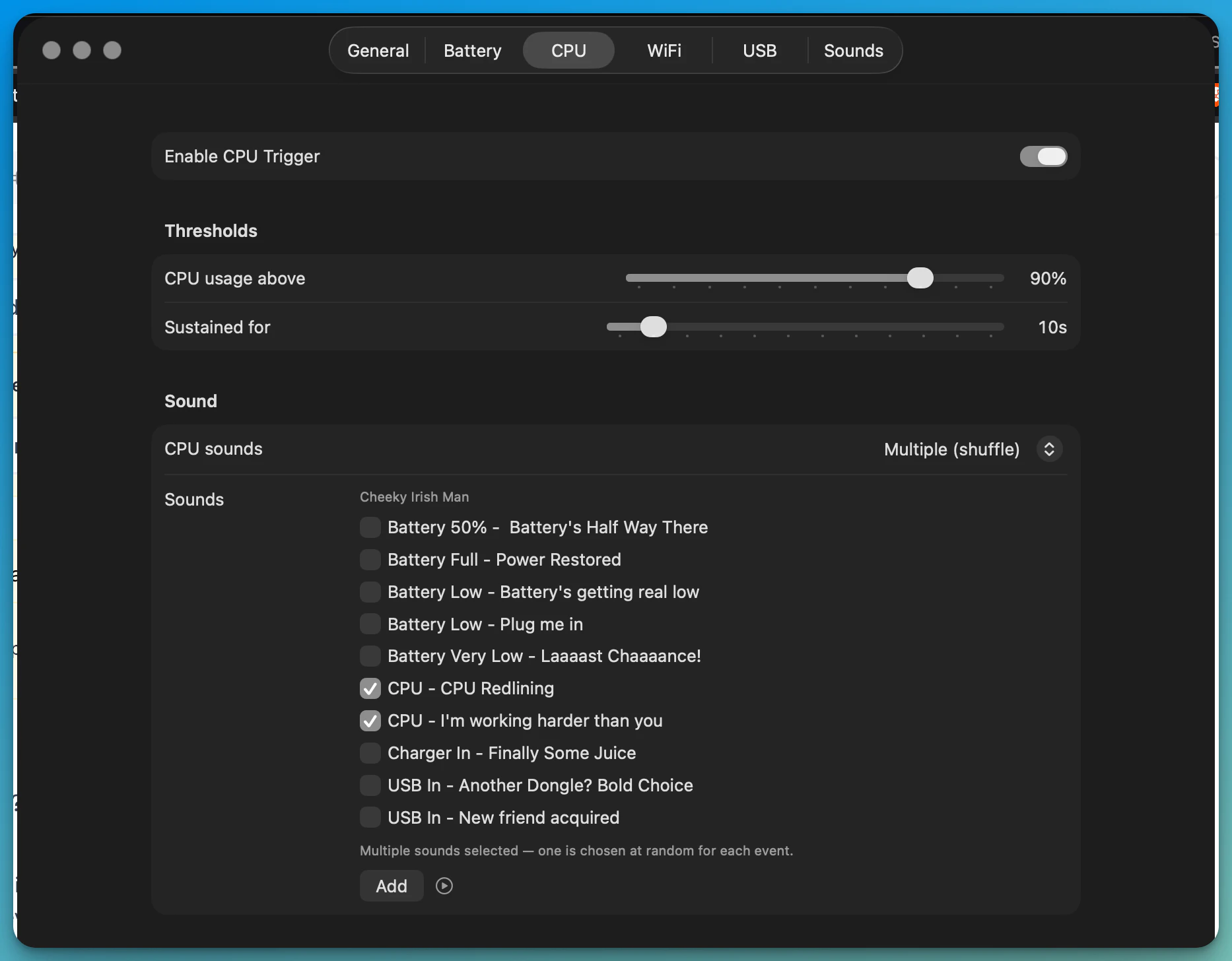

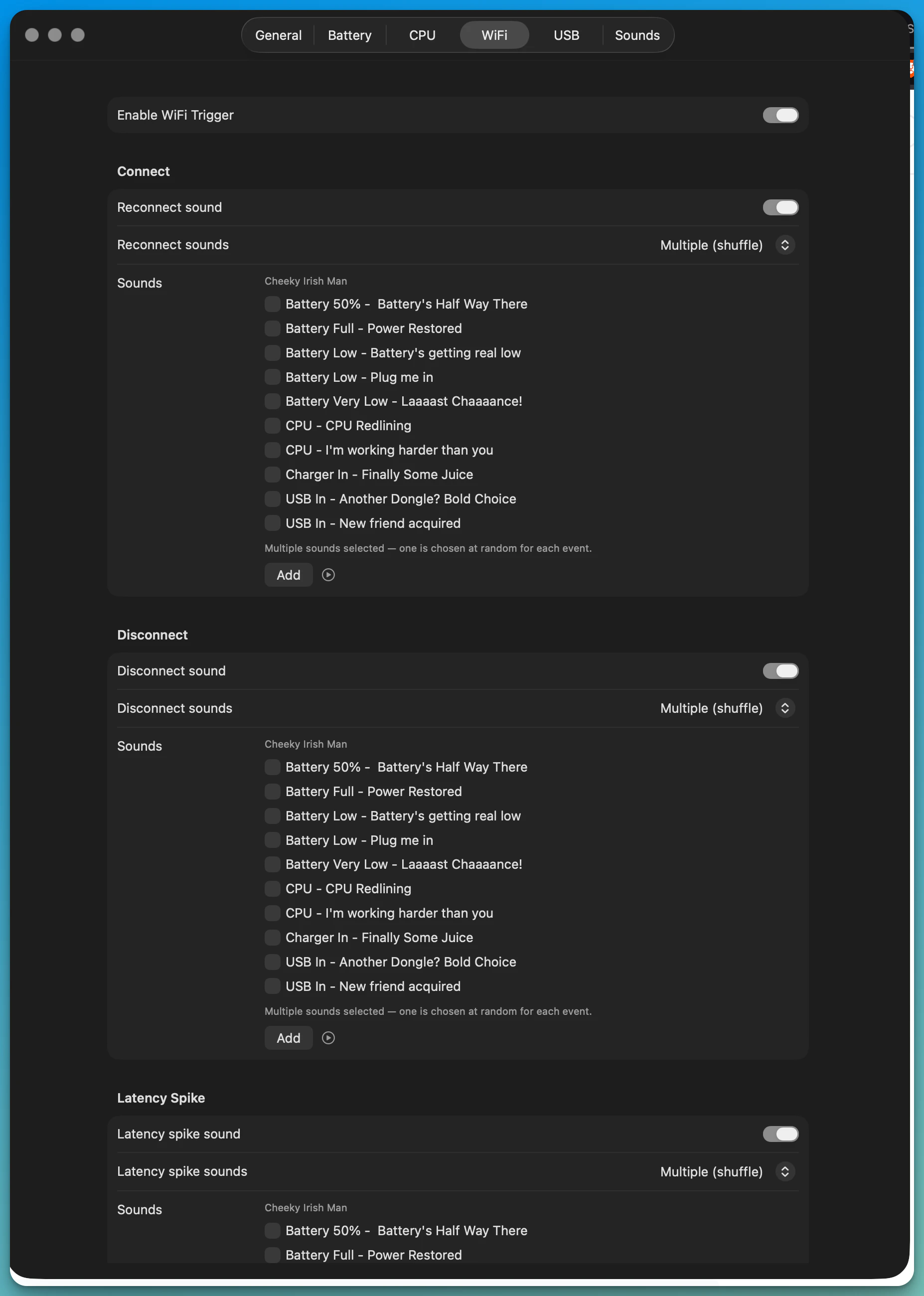

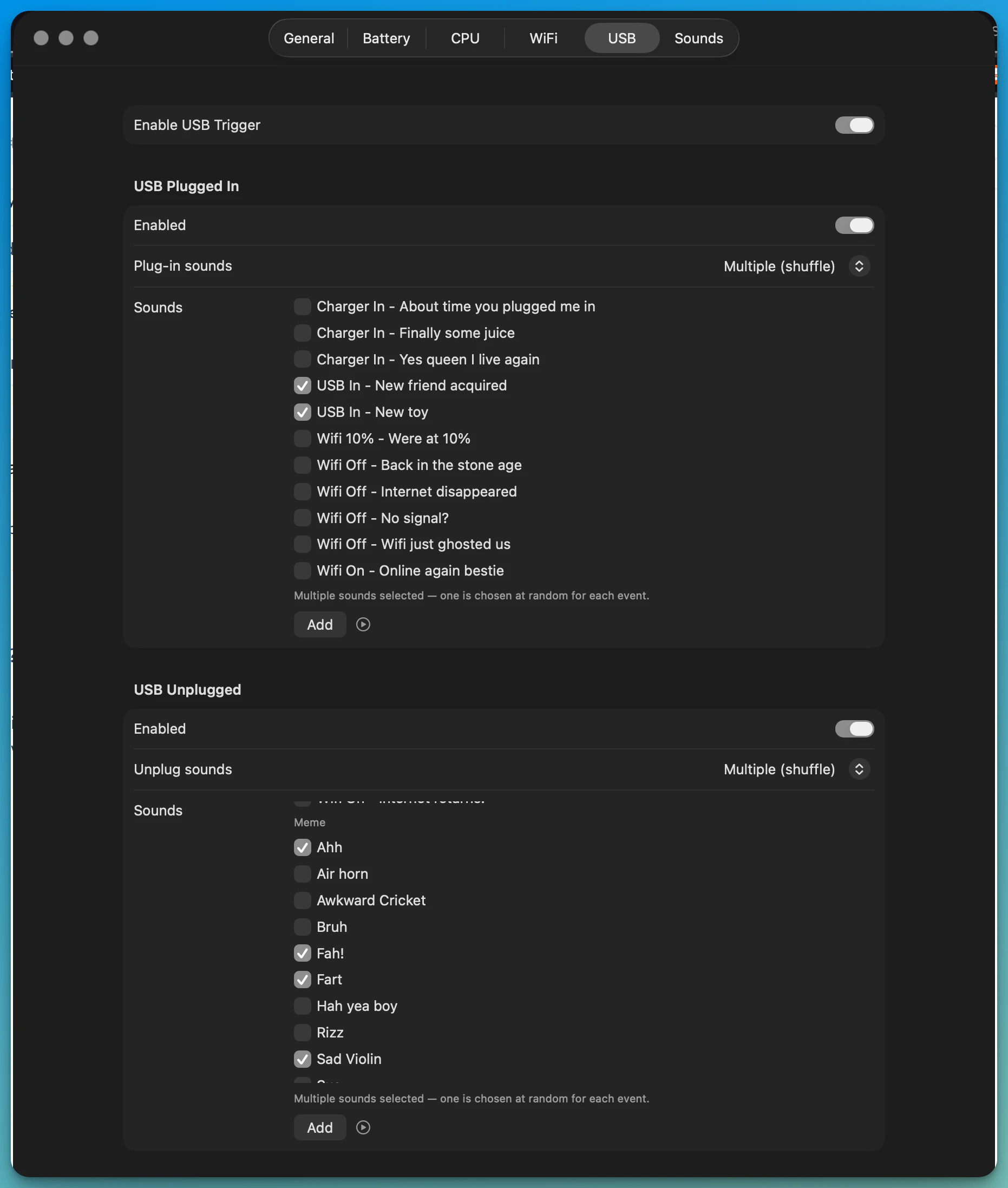

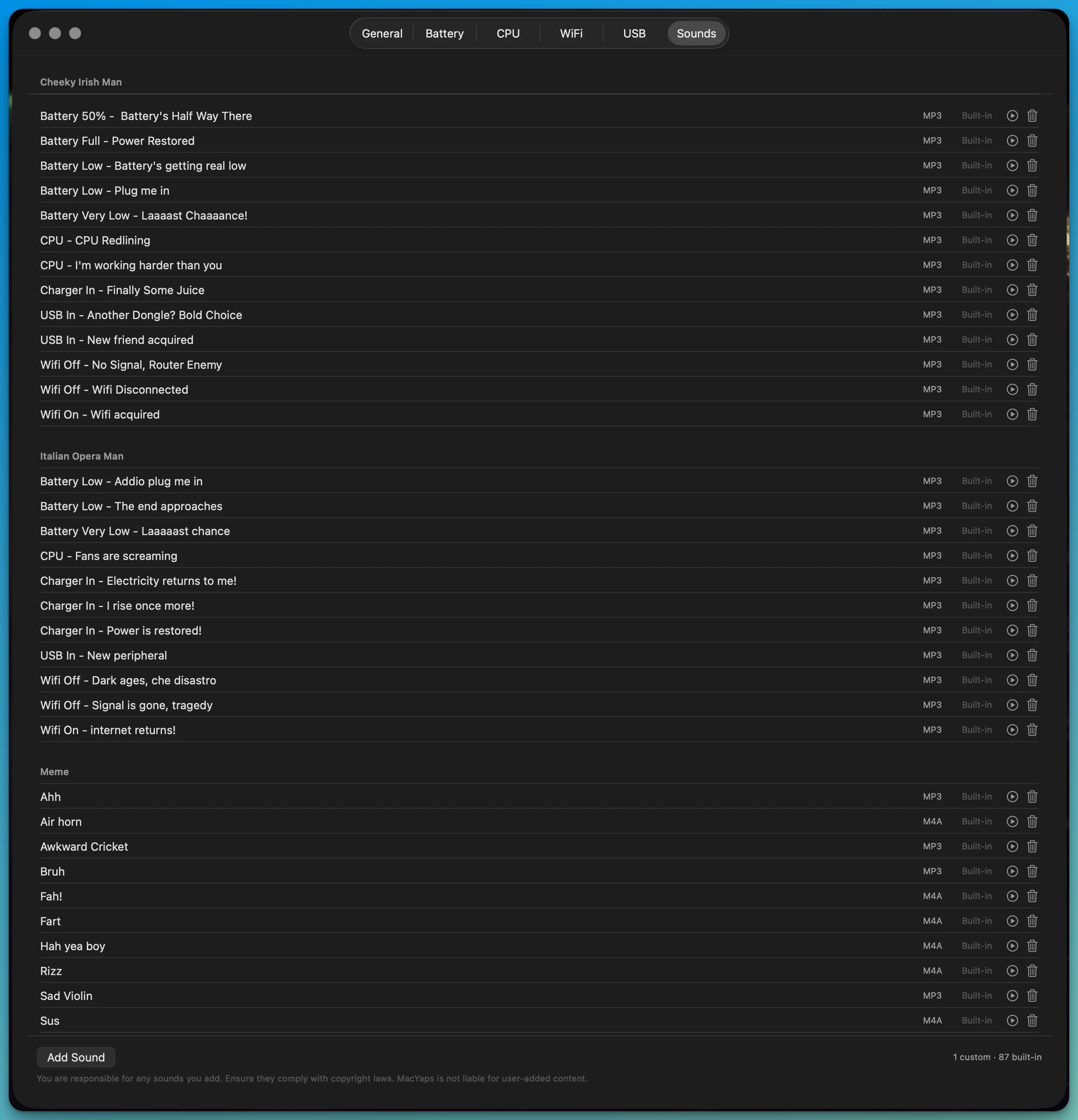

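One-line summary: MacYaps is a menu bar app that makes a Mac announce system events (such as low battery, Wi-Fi disconnection, or high CPU usage) with personalized voice lines or sound effects, addressing the lack of intuitive, fun alerts when the device's state changes.

Mac

Funny

Menu Bar Apps

macOS tool

System notifications

Personalized alerts

Voice feedback

Productivity enhancement

Fun app

Status monitoring

Custom sound effects

Menu bar app

User interaction

User comment summary: Users widely like the creativity and fun, especially the voice packs. The main suggestions include: adding sleep/wake triggers, more fine-grained context-aware responses (such as adjusting tone by urgency), and fixing the accuracy of voice-pack names (for example, the "Irish" accent is not authentic). The developer responded actively and promised new features.

AI Sharp Take

At its core, MacYaps turns dull system status monitoring from "passive checking" into "active announcement" and wraps it in a thick, emotional, entertaining sugar coating. Its real value is not a technical breakthrough (monitoring battery, network, or CPU is not hard) but a precise fit into a long-neglected interaction gap: the "silent anxiety" caused by cold status feedback between person and machine.

The product sensibly avoids head-on competition with professional monitoring tools and focuses on how the notification itself is expressed. With strongly personified voice packs such as a "sarcastic New Yorker" and a "dramatic opera voice," it turns system events that might signal a problem (such as low battery) into a mildly teasing interaction, easing users' negative feelings and even creating an odd sense of companionship. This is classic "experience economy" thinking: the function itself is replaceable, but the emotional value forms the barrier.

The challenges it faces are just as clear. First, once the novelty fades, whether frequent, repetitive voice alerts become a new nuisance that makes users switch it off quickly is key to retention. Second, judging by the comments, user needs are moving fast from "fun" to "useful," with requests for more precise context awareness and ties to practical functions such as battery health management. The product therefore has to find a steadier balance between amusing toy and thoughtful tool.

In the long run, MacYaps could go in two directions: keep deepening the entertainment side and become a subscribable "voice pack platform," or cautiously expand its utility and become a customizable, context-aware system assistant. It has successfully opened the market with humor, but to avoid becoming a short-lived novelty, its next step must be to work out how to weave this personified interaction more deeply and intelligently into the workflow rather than leaving it as decoration.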

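One-line summary: An MCP tool that gives AI coding assistants such as Claude Code design capabilities, addressing the core pain points in AI-assisted programming of low-quality generated UI design and disconnection from the existing codebase's design system.

Design Tools

Developer Tools

Artificial Intelligence

AI coding assistant

UI design tool

Model Context Protocol

Codebase awareness

Design system integration

Front-end development

Human-AI collaboration

Claude ecosystem

Developer productivity tool

Agent tool extension

User comment summary: The founder described the motivation for building it and the easy installation. Users want to know how the tool automatically identifies an existing design system, and worry that autonomous AI changes could break existing designs. The official reply stresses that the tool requires human review and can generate or match design specifications by analyzing the codebase.

AI Sharp Take

The release of AI Designer MCP goes straight at the gap between "creativity" and "engineering" in today's AI coding agents. Its claimed value is not to replace professional design tools such as Figma but to inject "design awareness" into the coding process as context, which is exactly the bottleneck as AI coding moves from implementing features to delivering finished products.

The product sensibly avoids the gimmick of "fully automated design" and positions itself as a "tool that augments agents." It does not promise to solve every design problem; it focuses on the precise scenario of "codebase awareness." That means its core value is not generating stunning original designs but making design output consistent and easy to integrate. In essence this is an engineering solution for "design standardization": by letting the AI understand the project's existing color, component, and layout conventions, it reduces the cost of later manual adjustment.

Its real challenges, however, are as deep as its value. First, the accuracy of "codebase awareness" sets the tool's upper limit. Deriving clear, usable design specifications (design tokens) from existing code is itself a hard technical problem, especially with messy legacy code. Second, it tries to balance "AI autonomy" with "human control." The concern in the comments is typical: developers want a "capable assistant," not a "self-willed artist." Positioning humans as "reviewers and directors" is a pragmatic strategy, but how smoothly the review and iteration flow is designed will directly affect the user experience.

In the long run, if tools like this succeed, their significance will be to blur the line between "coding" and "design" in the development process and push toward a continuous "design as code" workflow. For now it is more of a "patch" that makes up for base coding models' weaknesses in aesthetics and systematic thinking. Its success depends not only on its own technology but also on how well the underlying AI coding agents (such as Claude Code) understand and use these tool instructions. It is a targeted push on the "last mile" of the AI development ecosystem's experience, but one that will have to co-evolve with the whole ecosystem.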

👋 Hey Product Hunt!

I'm Rustam, founder of NovaVoice.

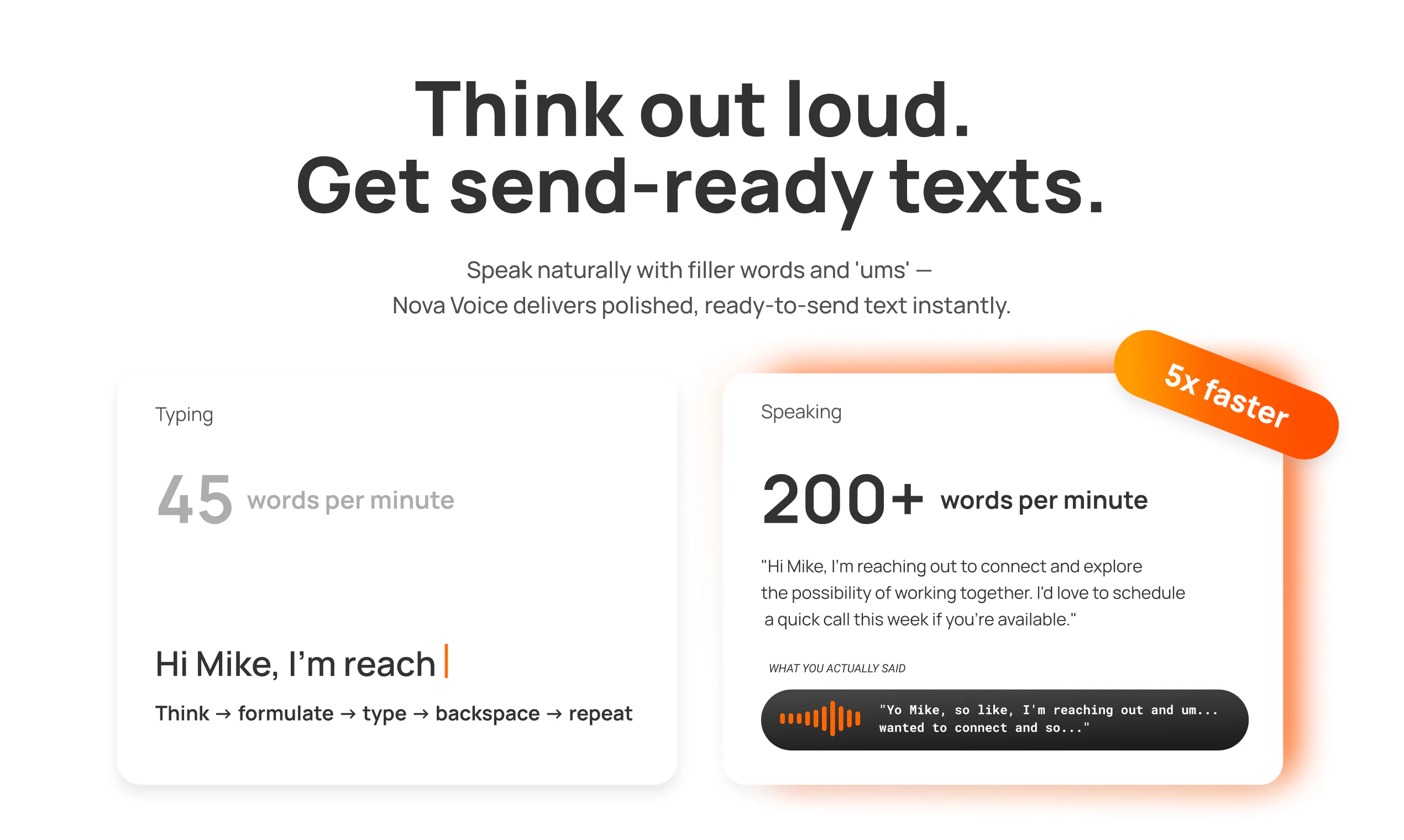

A few months ago, I realized: voice is the interface nature gave us. It's fast, intuitive, effortless. Yet when we sit at our computers, we default to typing — even though speaking is at least 4x faster.

That felt wrong.

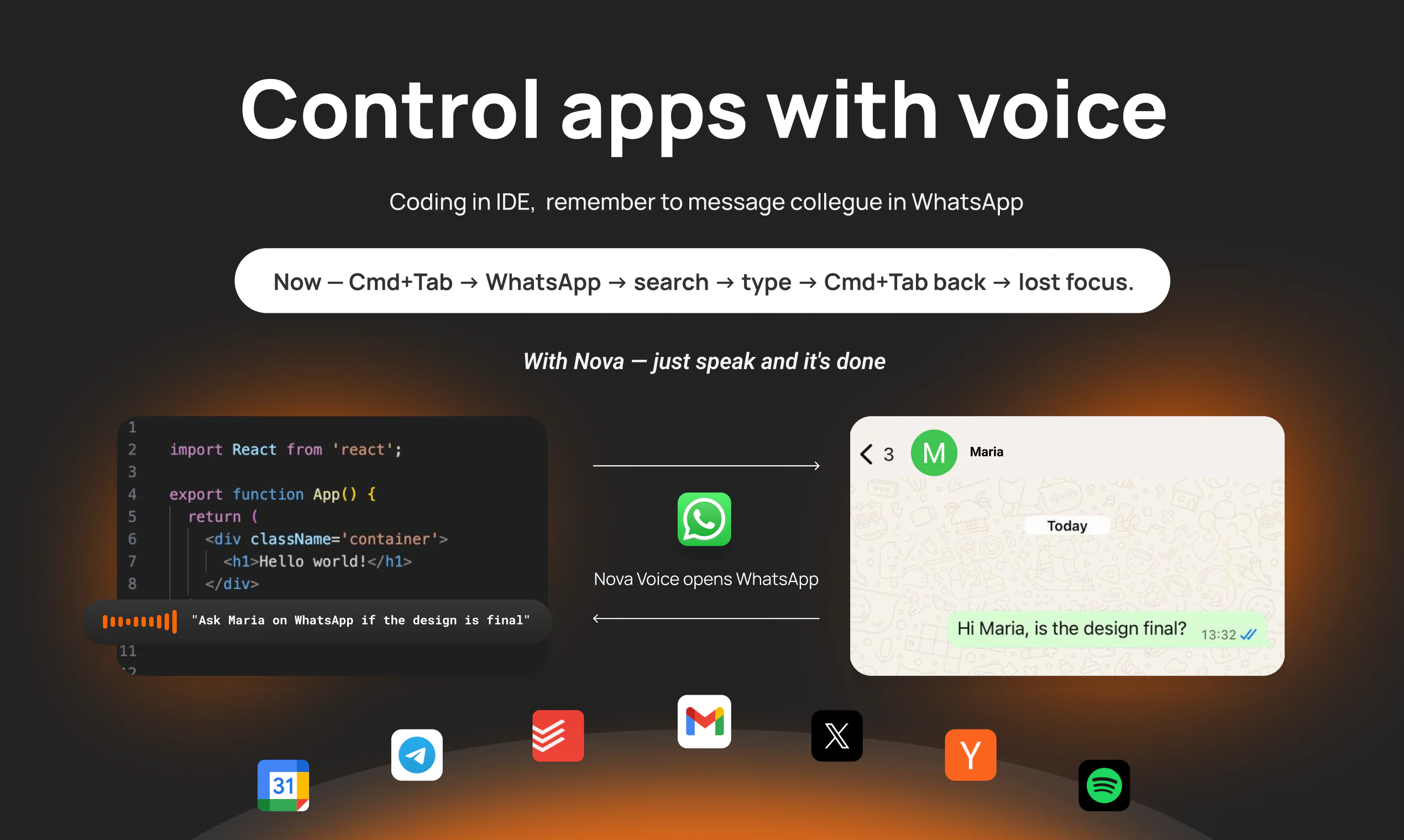

So my co-founders and I built NovaVoice — a Voice OS that writes, answers questions, and acts across your entire desktop. Not just dictation.

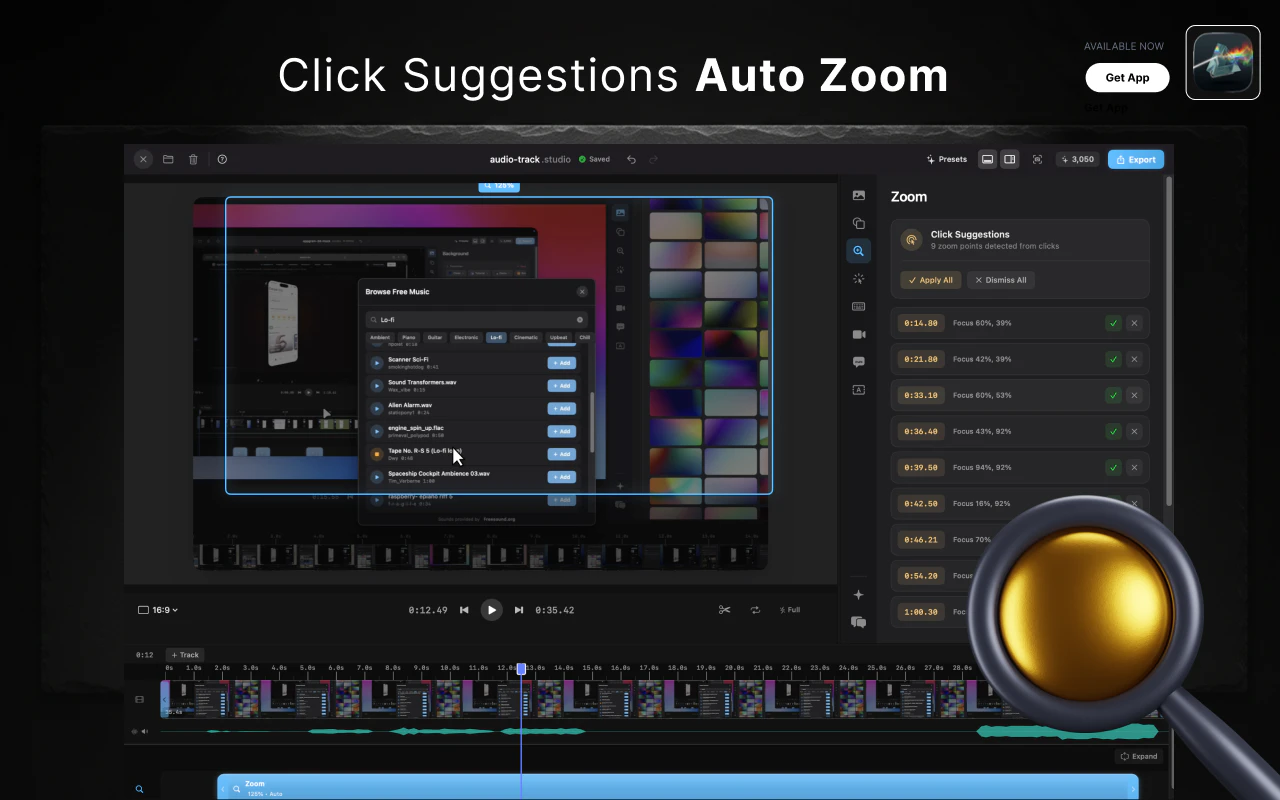

What makes NovaVoice different:

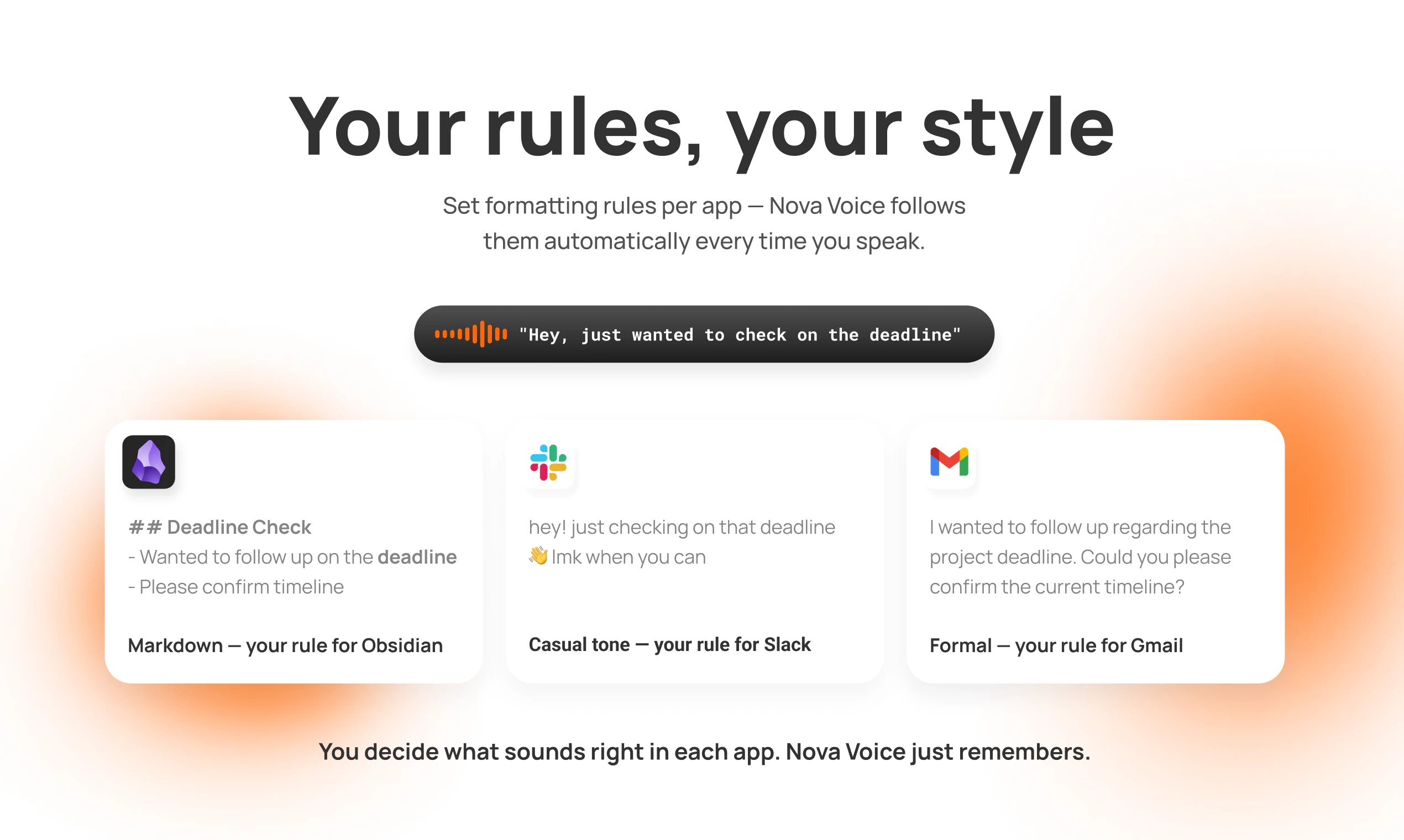

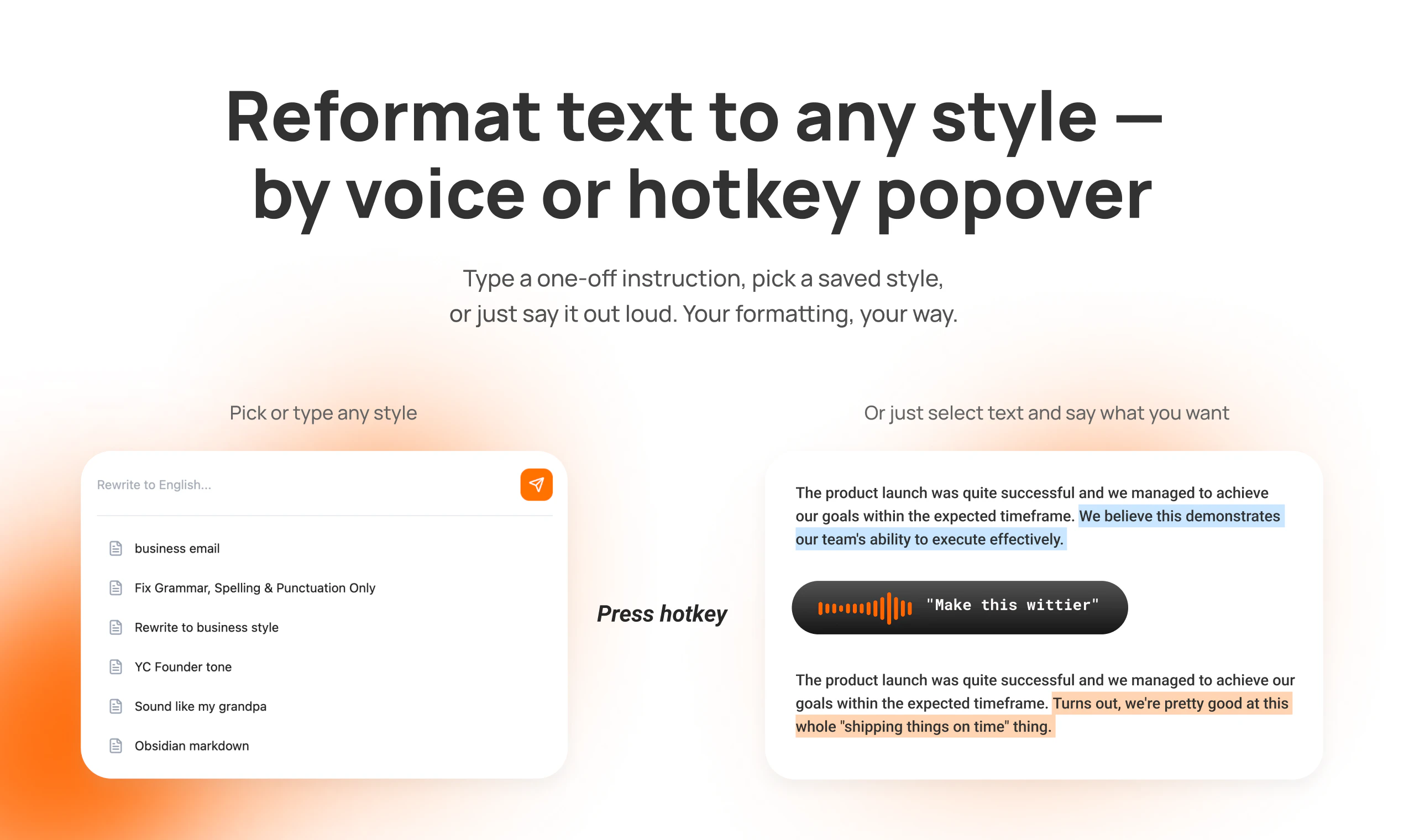

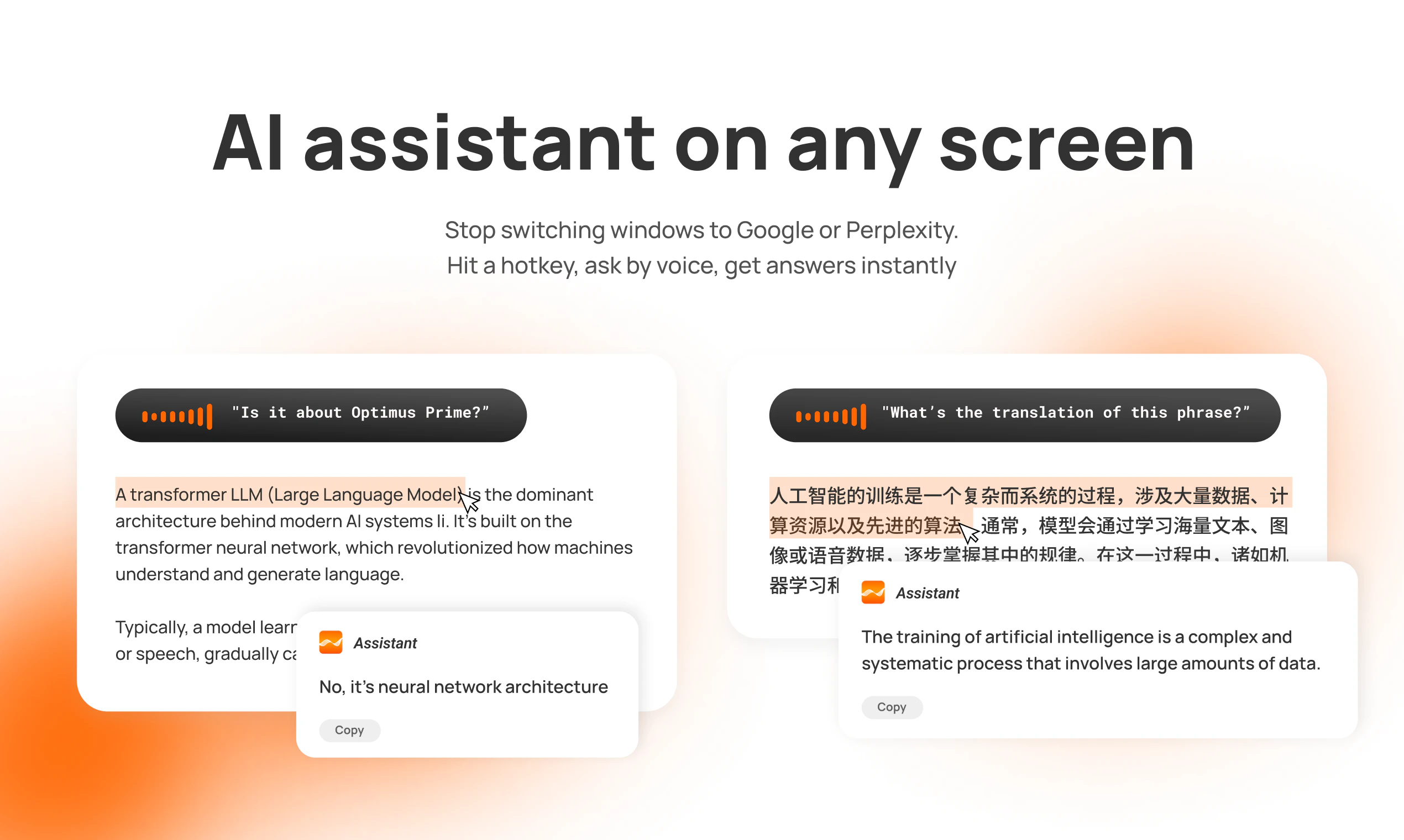

1. Context-aware writing — speak naturally in your email client, get professional emails. Dictate into Notion, get formatted Markdown. NovaVoice knows where you are and formats accordingly.

2. AI assistant on any screen — hit a hotkey, ask anything by voice. Translate text, get answers, research — no switching to browser or ChatGPT.

3. Voice commands across apps — you're coding. Say "Ask Maria in WhatsApp if design is ready." NovaVoice opens WhatsApp, finds Maria, drafts the message. You just hit send.

4. Smart popover — Reformat any text instantly using preset styles or type any custom instruction on the fly. No switching to Grammarly or ChatGPT to polish your writing.

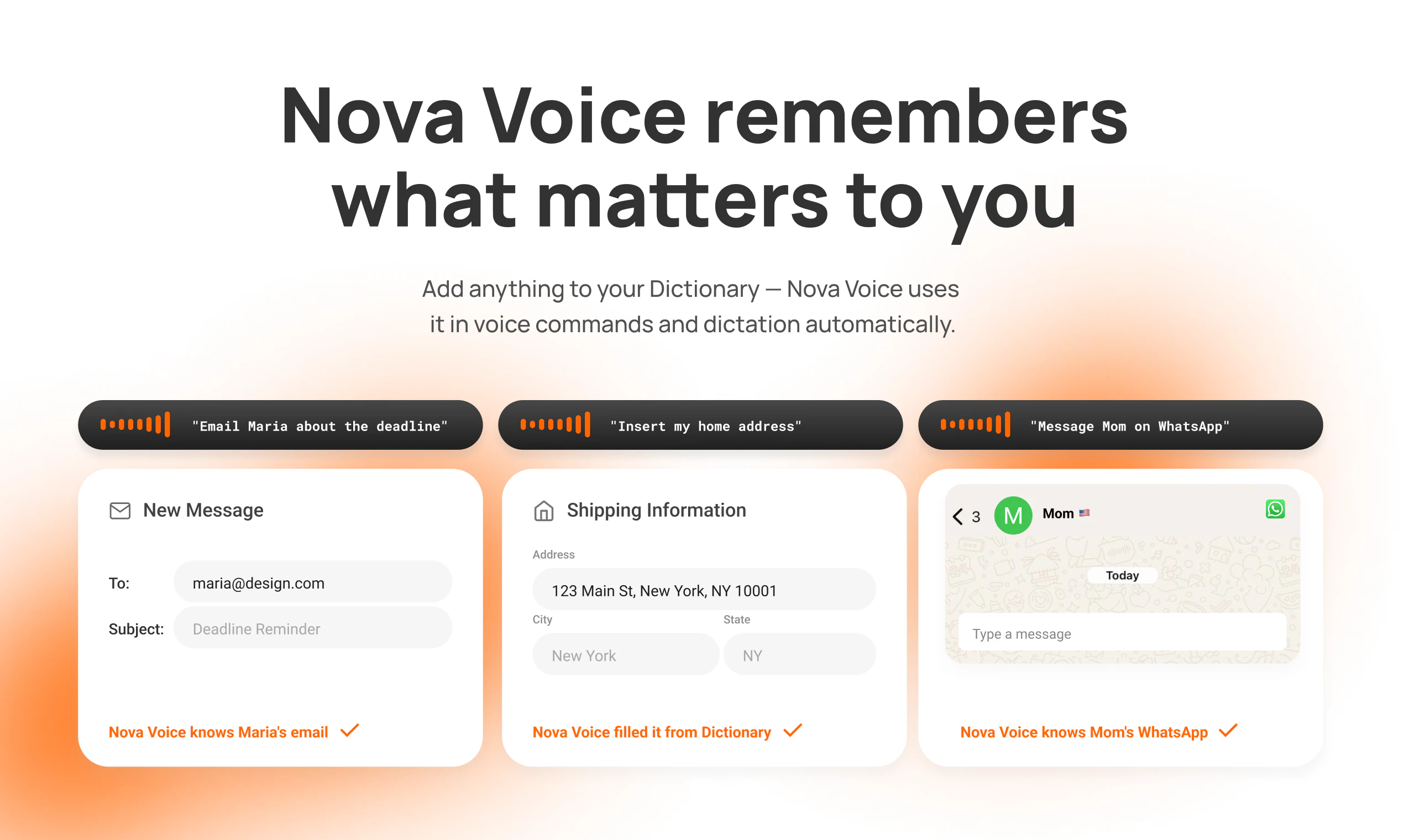

5. Custom Dictionary — NovaVoice remembers your shortcuts: contacts, addresses, loyalty numbers. Say "email Maria" or "insert home address" — no need to spell things out every time.

6. Cross-language quality — switch between languages mid-sentence. NovaVoice catches proper names, abbreviations, grammar automatically.
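The Custom Dictionary idea in item 5 can be pictured with a small sketch. This is only an illustration under stated assumptions, not NovaVoice's implementation: the `CUSTOM_DICTIONARY` contents, the `expand_shortcuts` helper, and the "insert <shortcut>" trigger pattern are all hypothetical.

```python
import re

# Hypothetical user dictionary: spoken shortcut -> stored text (made-up values).
CUSTOM_DICTIONARY = {
    "home address": "221B Example Street, Springfield",
    "loyalty number": "LN-0000-1234",
}


def expand_shortcuts(transcript, dictionary):
    """Replace 'insert <shortcut>' phrases in a transcript with stored values."""
    if not dictionary:
        return transcript

    # Look shortcuts up case-insensitively.
    lookup = {key.lower(): value for key, value in dictionary.items()}

    def replace(match):
        return lookup[match.group(1).lower()]

    # One pattern built from the known shortcuts, longest first so that
    # longer phrases win over shorter ones sharing a prefix.
    keys = sorted(lookup, key=len, reverse=True)
    pattern = r"\binsert (" + "|".join(re.escape(k) for k in keys) + r")\b"
    return re.sub(pattern, replace, transcript, flags=re.IGNORECASE)


print(expand_shortcuts("Ship it to insert home address please", CUSTOM_DICTIONARY))
```

Phrases that are not in the dictionary are left untouched, so an unknown shortcut simply stays in the transcript as spoken.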

I use NovaVoice daily for prompting AI models, writing emails, code comments, quick Slack messages, repetitive actions in daily apps, and asking the assistant by voice instead of googling — without breaking flow or switching windows.

What's next:

- More app integrations and voice commands

- Near-instant transcription

- Personalization that learns your writing style

We built this for productivity, but realized it has real impact for people with limited mobility. Voice isn't just faster — for some, it's essential.

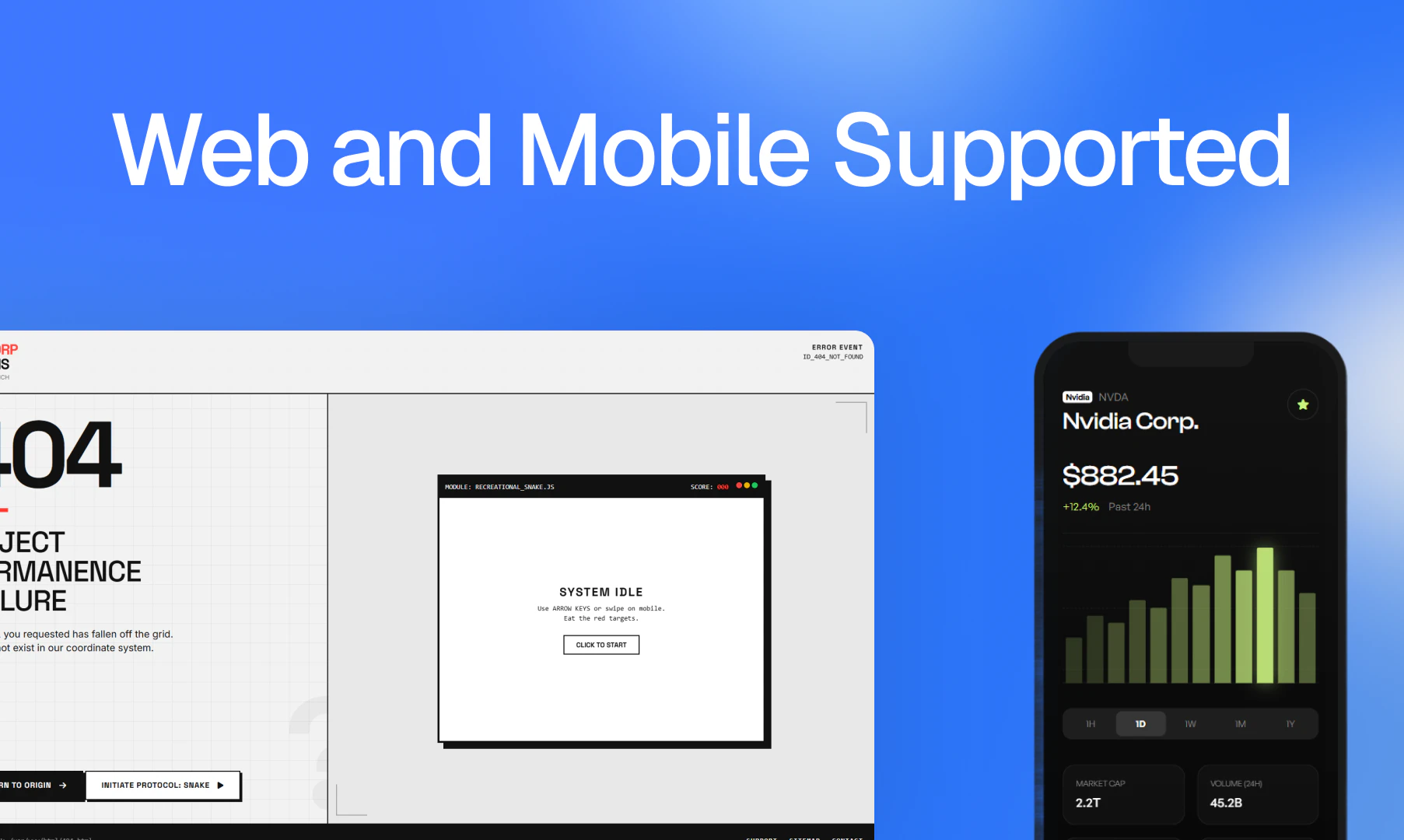

NovaVoice works on Mac & Windows.

We'd love to hear your feedback!

I have used Nova Voice (Free Version) and it worked fine for me. I am sharing my experience here:

1. The AI assistant is good. It answered all of my questions, so it will surely cut the time spent in ChatGPT or similar apps just to look up information or get answers.

2. I asked questions in English and also in Bengali (my mother tongue), and I was happily surprised that it worked in Bengali and gave me the information I was looking for in my mother tongue.

3. As for transcription, it worked perfectly for English speech typing or dictation. Auto punctuation is also up to the mark, and after you finish dictating you can improve your writing, which also worked well.

4. In Bengali, it worked well if I spoke slowly and clearly, whereas in English I could speak naturally and it got my words right. The thing is that most apps do not provide any quality output in my language at all, so Nova Voice really stands out in this regard. Of course, I would like even better support.

5. It will surely help writers and bloggers.

6. The only thing I wish were better is speed. I hope they will improve this in the next version.

Overall, I am satisfied with my experience.

Congrats on the launch!

I'm a big fan of voice and getting things done through dictation. Curious how this compares with and differs from @VoiceOS... The term itself is also highlighted on your webpage and in your marketing copy, which I found interesting.

been waiting for voice that handles navigation, not just transcription. which apps have you tested beyond the obvious ones?

@rustam_khasanov congrats on the launch! I didn't get whether it's a macOS app or something else. Does it have full control over my macOS?

Hi PH Community!

I am Anton, co-founder of NovaVoice. Try us and you will see why we earn attention!

As someone who loves WhisprFlow but can't work with it, because it's not fast enough and doesn't understand me well enough (I am surprised too), I am very hopeful here!

I explored your landing page and still have a couple of questions.

- What languages does NovaVoice support?

- Is it possible to use NovaVoice as a speech-to-text tool only, not as an agent? As a replacement for WhisprFlow

Good luck Rustam!

Really impressive work, congrats on the launch! NovaVoice’s transcription is surprisingly accurate. I was especially curious to test how it handles mixed language input, since I often switch between Portuguese and English mid-sentence (which usually breaks most tools), but it handled it much better than expected.

I also like the broader vision of a “Voice OS”, reducing context switching and moving closer to working at the speed of thought feels like a natural next step.

One thing I’d be curious to explore further is how it performs in more complex or noisy real-world scenarios, and how much control users have over formatting and actions. But overall, this is a very promising direction, excited to keep testing it.

This is a nice reminder that I shouldn't always be typing since voice dictation is so much faster. What languages does NovaVoice support right now?

I like the idea of a voice-first workflow, but the real challenge is always consistency once you move beyond demos. Acting across apps, formatting text, and staying context-aware sounds great, but in day-to-day messy usage things usually break or need correction. How close is this to something you can actually rely on without constantly fixing outputs or switching back to keyboard?

Interesting, and looks quite sophisticated!

But it won't be useful for me as I can't conceive what I want to write faster than I can type normally...

I'm using aqua voice. How does your product compare with it?

Congratulations on the launch.

I recently broke my arm, which slowed my writing. The default transcriptionist had all the garbage I'd mentioned, so I had to correct it, and my speed ultimately stalled. Your service helped me get back to my previous pace of communication with partners and maintain my polished style without any additional edits. Thank you!

Congratulations on the launch, team! Super excited to try Nova. I've never quite learned to type fast, let alone touch-type, and honestly, I'm happy not to have to learn that skill. Typing is one of the main limitations for me and it's frustrating, so I'm ready to be the #1 fan. Just one quirky question: does Nova support switching between languages in real time? :p

Voice is the way forward. Curious — are you using ElevenLabs under the hood for the voice layer, or have you built your own models? The quality bar they've set is wild and I'm interested in how new voice products are approaching it. Typing is the bottleneck for everything I do, happy to see people building real voice-first tools instead of bolting voice onto a text app.

Is support for other platforms planned (mobile/browser versions)?

Congratulations on the launch btw

My coworkers will definitely think I've lost my mind talking to myself all day :D But 4x speed is worth it!

just dropped superwhisper to use this, banger!

I'm an active MacWhisper user and I love the concept. It's definitely worth giving a try!

Congrats guys!

This looks like a massive time-saver. How well does the app handle ambient background noise if I am working in a busy coffee shop or office?

Oh, we put a lot into this one.

Proud of the team. :)

Hope NovaVoice ends up being the thing you reach for every time text input slows you down.