PH热榜 | 2026-04-13

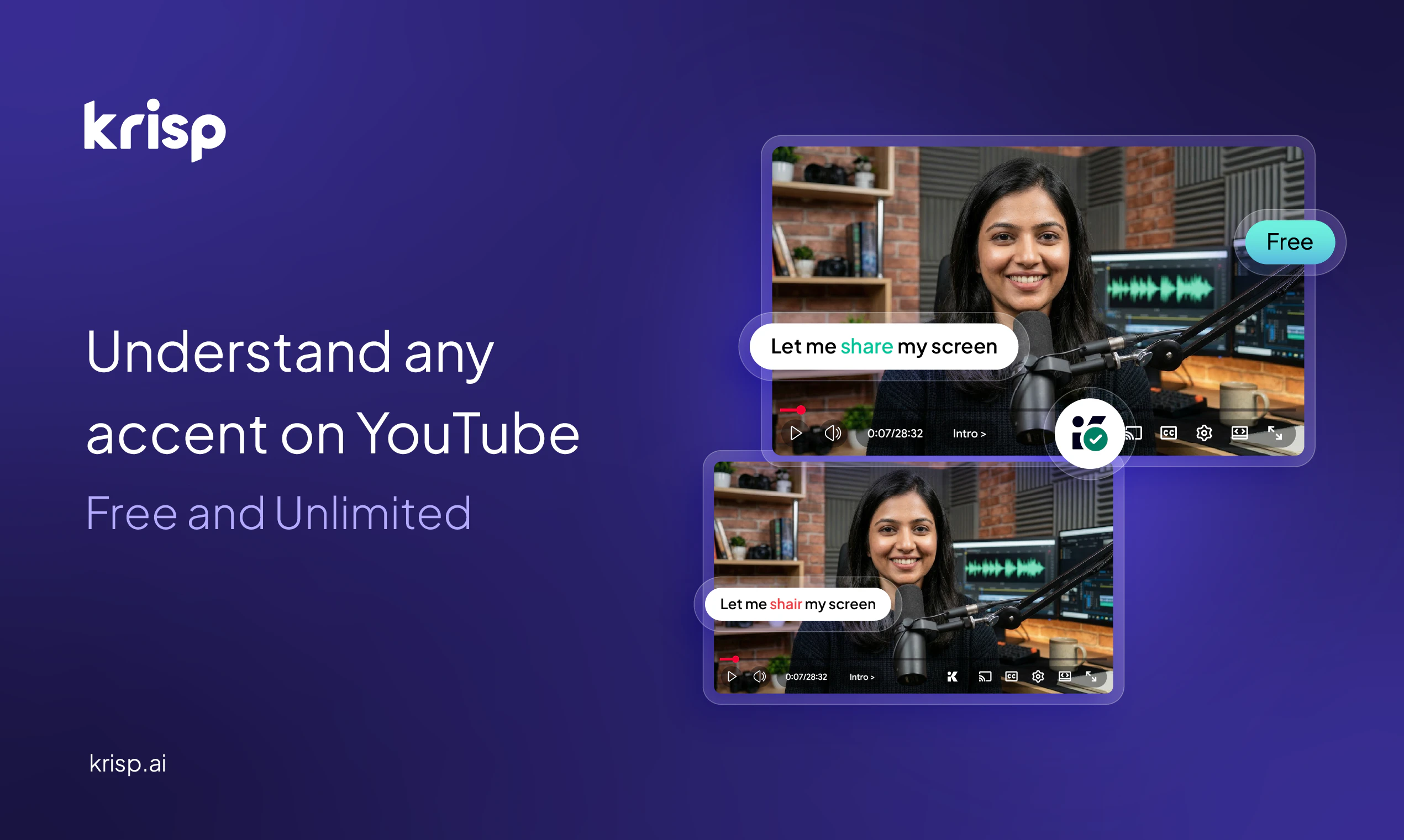

一句话介绍:一款免费的Chrome浏览器扩展,利用端侧AI实时转换YouTube视频中的口音,提升非母语英语内容(如技术讲座、课程)的清晰度和可理解性,解决了用户因口音障碍难以吸收优质内容的痛点。

Chrome Extensions

Productivity

Audio

浏览器扩展

口音转换

语音清晰化

AI音频处理

教育科技

无障碍辅助

实时处理

YouTube工具

端侧AI

内容可及性

用户评论摘要:用户普遍认为产品解决了真实痛点,尤其在理解印度等口音的技术讲座时效果显著。主要反馈包括:肯定其价值与易用性;询问支持的口音范围(团队回复重点支持印度、菲律宾等口音);关注语音的自然度、情感保留及与视频的同步性;建议扩展到创作端;并讨论该功能未来是否会被YouTube原生集成。

AI 锐评

Krisp此次推出的口音转换器,看似是功能微创新,实则精准刺入了一个被主流平台长期忽视的“口音鸿沟”市场。其真正价值不在于技术炫技,而在于对内容消费不平等现象的务实解构。YouTube拥有海量由非母语者创造的优质教育、技术内容,但口音屏障使得这些内容的有效传播大打折扣。字幕和调速是通用方案,但前者存在翻译失真和延迟,后者牺牲效率,均未直击“听不清”的核心。

产品巧妙地将经过会议场景验证的AI模型,以轻量的浏览器扩展形式嵌入最大的视频平台,实现了近乎零成本的用户触达和教育。其“端侧AI”的强调,不仅关乎隐私和延迟,更深层次是降低了平台集成的技术顾虑与合规风险,为未来可能的B端合作或收购埋下伏笔。从评论看,用户最关切的并非技术原理,而是效果边界(口音覆盖度、情感保留)和体验完整性(音画同步)。这正是产品的挑战所在:在“口音标准化”与“发言人音色特质保留”之间走钢丝。过度优化前者,可能导致语音“机器人化”,损耗教学情感;过度强调后者,则可能削弱清晰化效果。

它的出现,预示着一个新维度的媒体无障碍标准正在被定义——从“看到文字”到“听清声音”。然而,其商业模式的长远性存疑。作为免费扩展,它无疑是出色的用户获取和品牌展示工具,但最终价值闭环可能需要依赖向B端(如在线教育平台)的技术输出,或促使YouTube这类巨头将其内化为付费功能。它此刻的成功,恰恰在于它指出了巨头的盲区,但这也可能加速巨头亲自下场的进程。

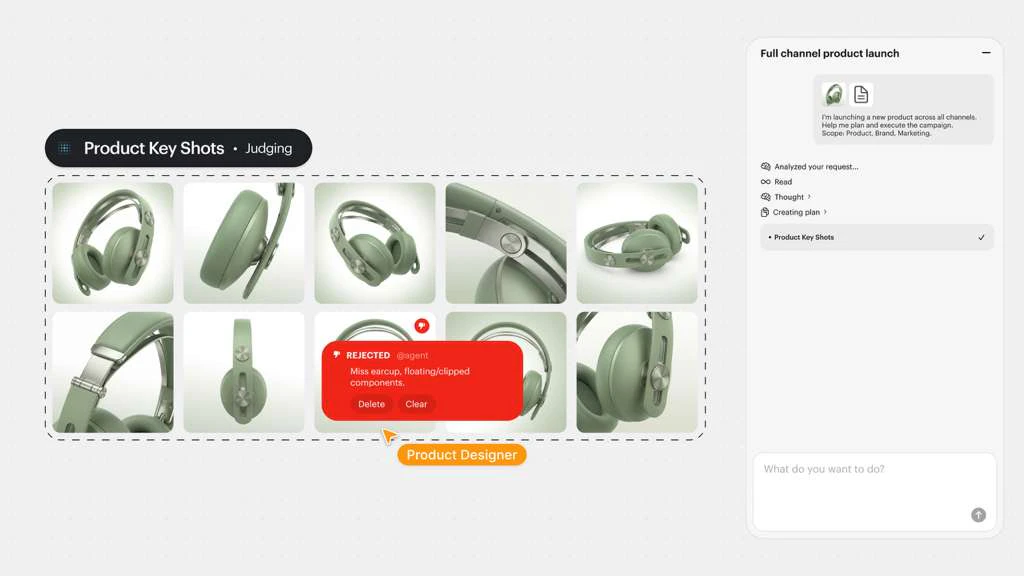

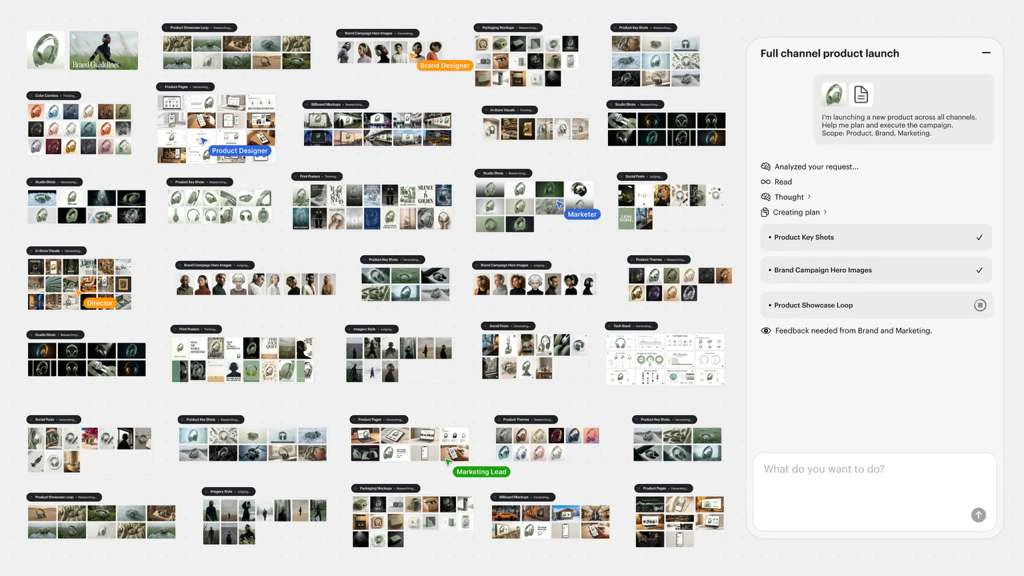

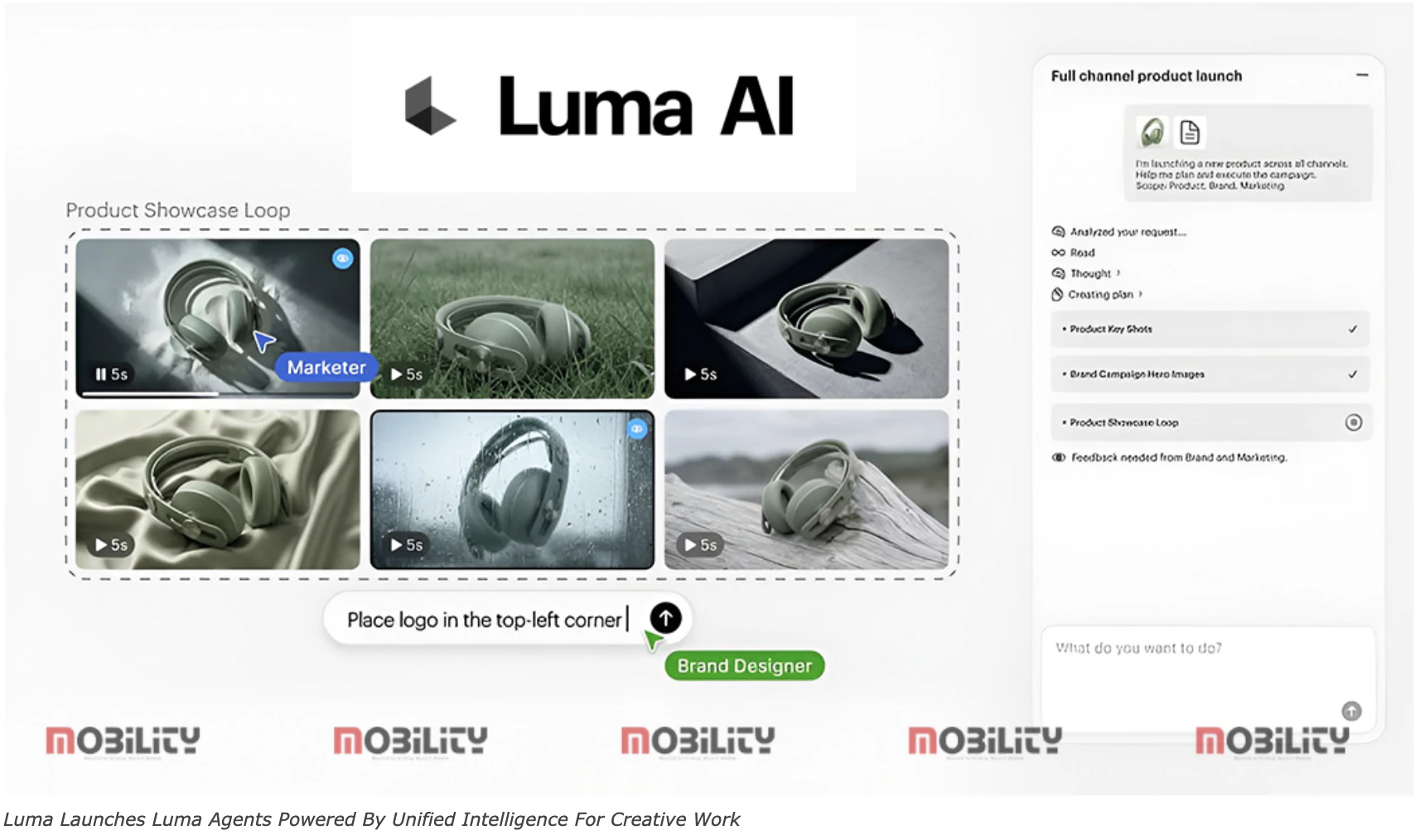

一句话介绍:Luma Agents是一款面向创意团队和代理商的AI智能体平台,通过在一个共享工作流中贯通视频、图像和音频的规划、生成与迭代,解决了多工具间创作流程割裂、上下文丢失及效率低下的核心痛点。

Design Tools

Social Media

Marketing

AI智能体

多模态内容生成

创意生产管线

品牌营销

视频本地化

社交媒体广告

创意协作平台

端到端工作流

上下文保持

用户评论摘要:用户普遍认可“端到端共享上下文”的价值,认为其解决了创意资产风格不连贯的根本问题。主要关注点在于:实际工作流中团队协作与单会话的上下文传递范围、AI迭代过程中人工控制权的平衡、以及产品是替代还是补充现有工具栈。部分用户建议集成更先进的生成模型并开放试用。

AI 锐评

Luma Agents的野心不在于推出又一个孤立的AI生成工具,而在于试图重构数字创意生产的工作流本身。其宣称的“共享上下文端到端”是击中当前行业要害的精准定位——它将矛头指向了创意生产中长期存在的“缝合怪”困境,即不同模态、不同环节的产出在技术层面合格,却在品牌调性与创意内核上脱节。

产品的真正价值,在于将AI智能体从“执行者”定位向“协作者”推进。它不再仅仅是听令生成一张图或一段视频,而是试图理解并承载一个完整的创意简报(Creative Brief),并让这份理解贯穿于跨模态、多格式的批量生产与迭代中。这对于品牌营销、电商素材等强调高度一致性与快速规模化生产的场景,具有显著的效率提升潜力。

然而,其面临的挑战同样尖锐。首先,“上下文”的深度与保真度是技术黑盒,智能体对品牌“神韵”的理解能否达到资深创意总监的精度存疑。其次,评论中关于“控制权”的疑问直指核心:在赋予AI规划与迭代能力后,人类创意者如何确保主导权而非被流程裹挟?这涉及到工具哲学的根本转变。最后,市场采纳路径很可能如评论所预测——先作为现有工具链的补充层(Layering),而非颠覆性替代。只有当其在复杂、边缘案例中证明其可靠性与产出质量后,才可能引发工作流的彻底重构。

因此,Luma Agents是一次极具前瞻性的赛道卡位,它描绘了未来AI赋能创意生产的理想图景:一个无缝、智能、保持一致性的管线。但其成功与否,取决于能否将“共享上下文”这一美好概念,转化为创意团队可感知、可信任、且不可或缺的生产力基石,而非又一个增加复杂性的中间件。

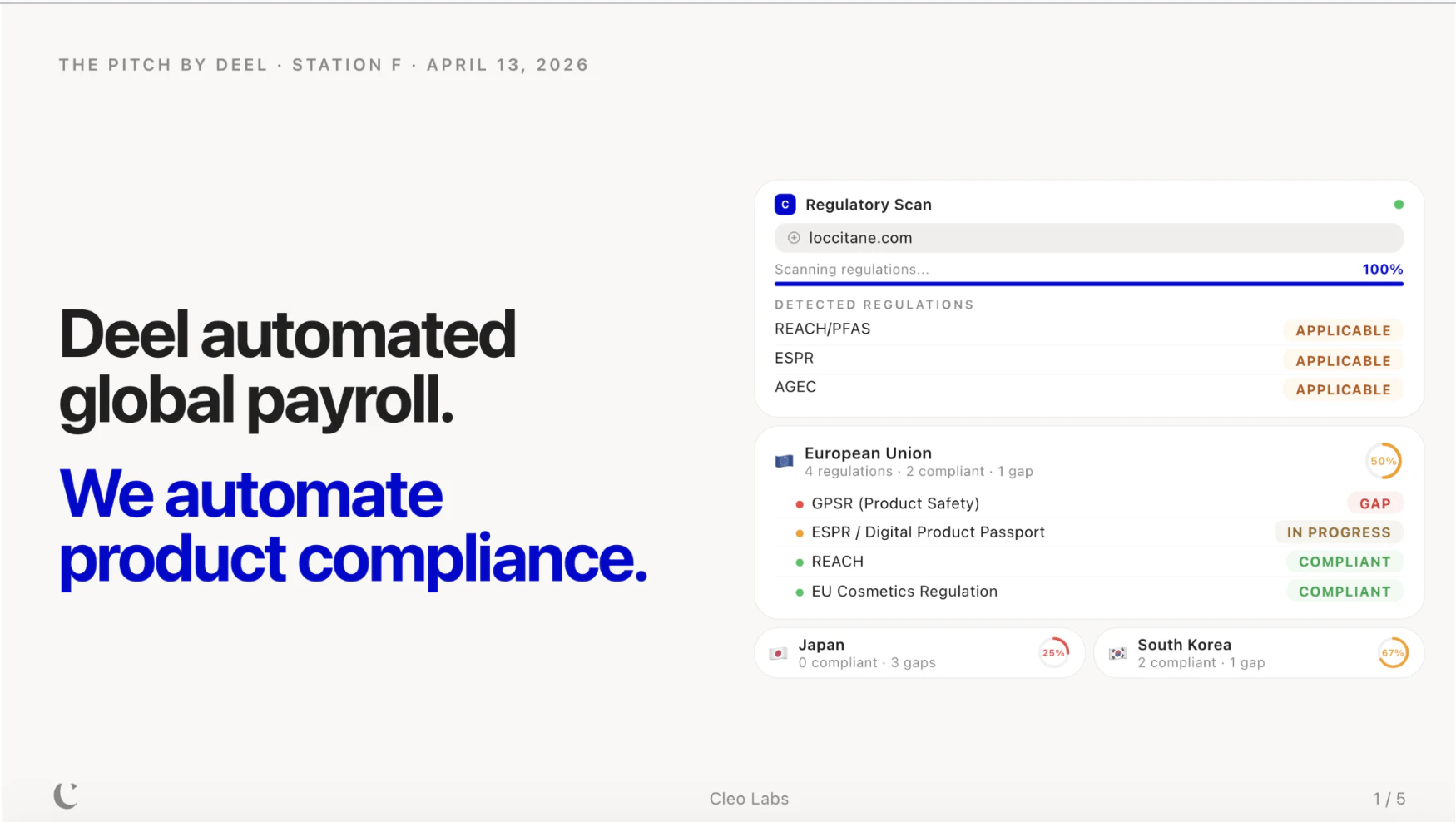

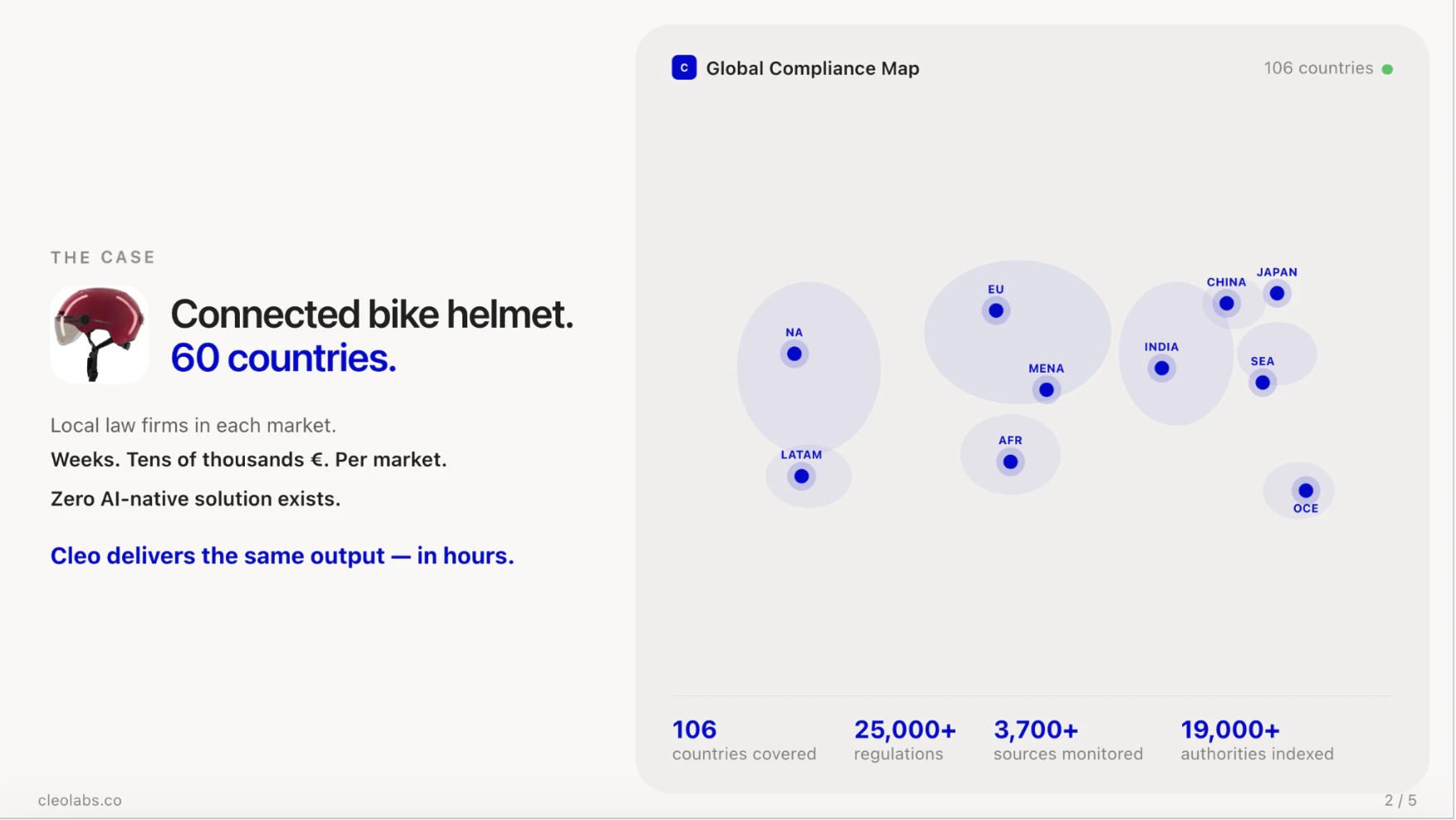

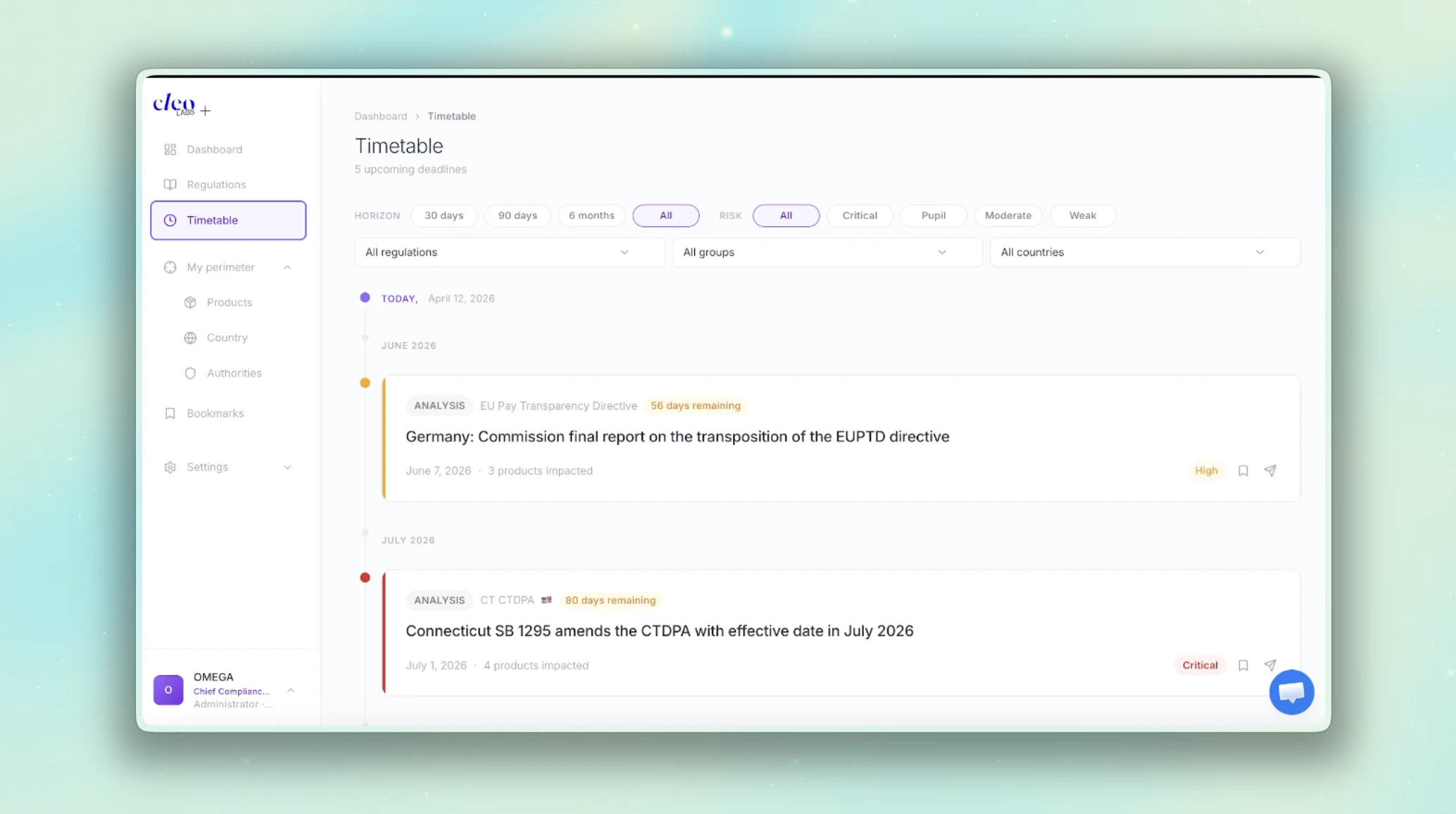

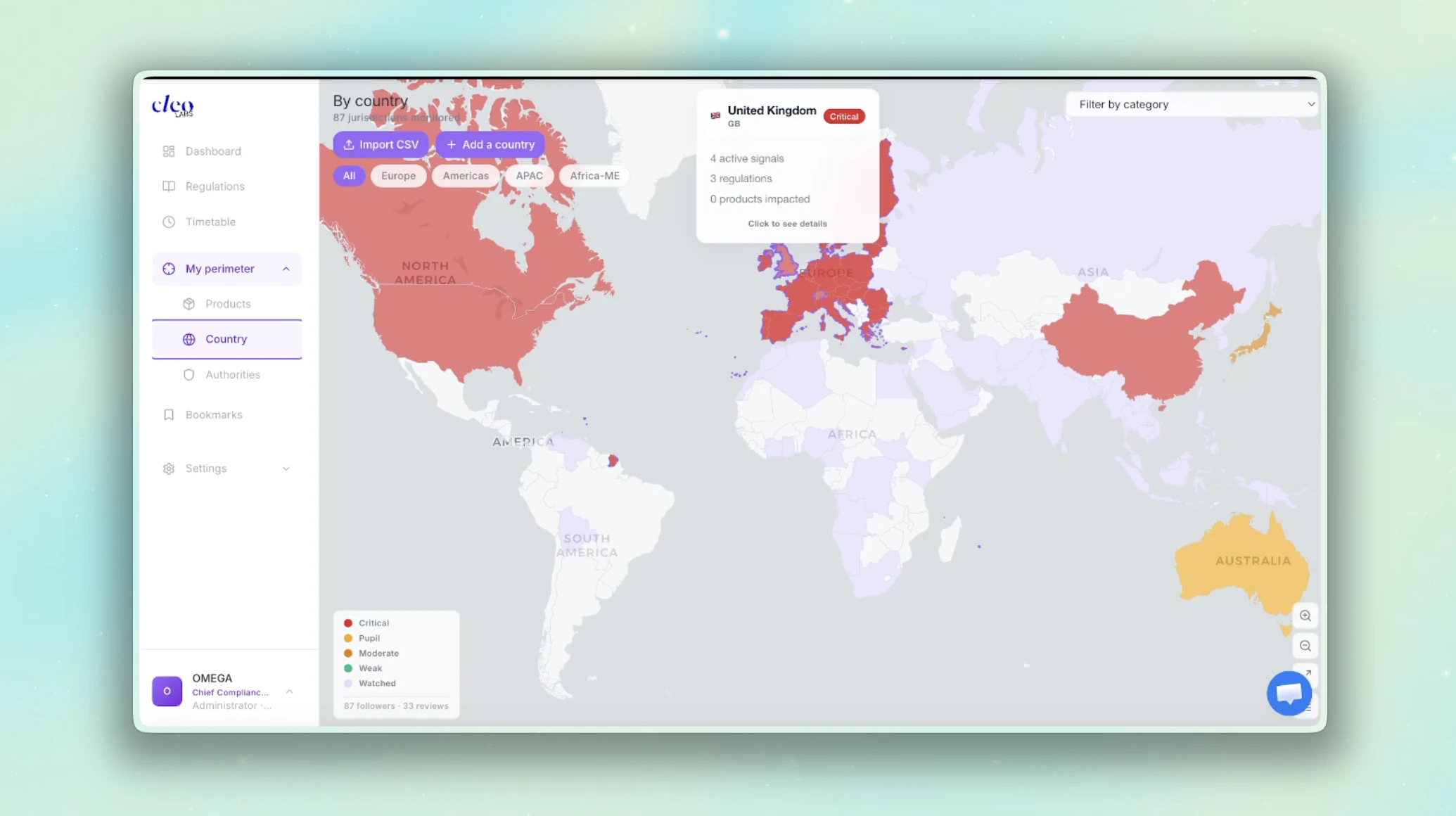

一句话介绍:Cleo Labs 是一款通过多智能体AI管道自动扫描全球106个国家、超1.9万个监管机构,为实体产品卖家提供精准、结构化全球合规图谱的工具,解决了企业跨境销售时面临的法规复杂、多变且难以追踪的核心痛点。

Legal

Artificial Intelligence

全球合规自动化

RegTech

实体产品合规

AI法律科技

跨境贸易

监管情报

多智能体AI

数字产品护照

供应链合规

市场准入分析

用户评论摘要:用户关注产品是同时展示所有适用法规还是择一处理(确认展示全部),适用阶段(证实适用于市场准入前规划与持续监控),以及小团队能否无需专家直接使用。建议包括集成Shopify等平台,并高度认可其“AI速度+法律专家验证”的混合模式与解决实际痛点的价值。

AI 锐评

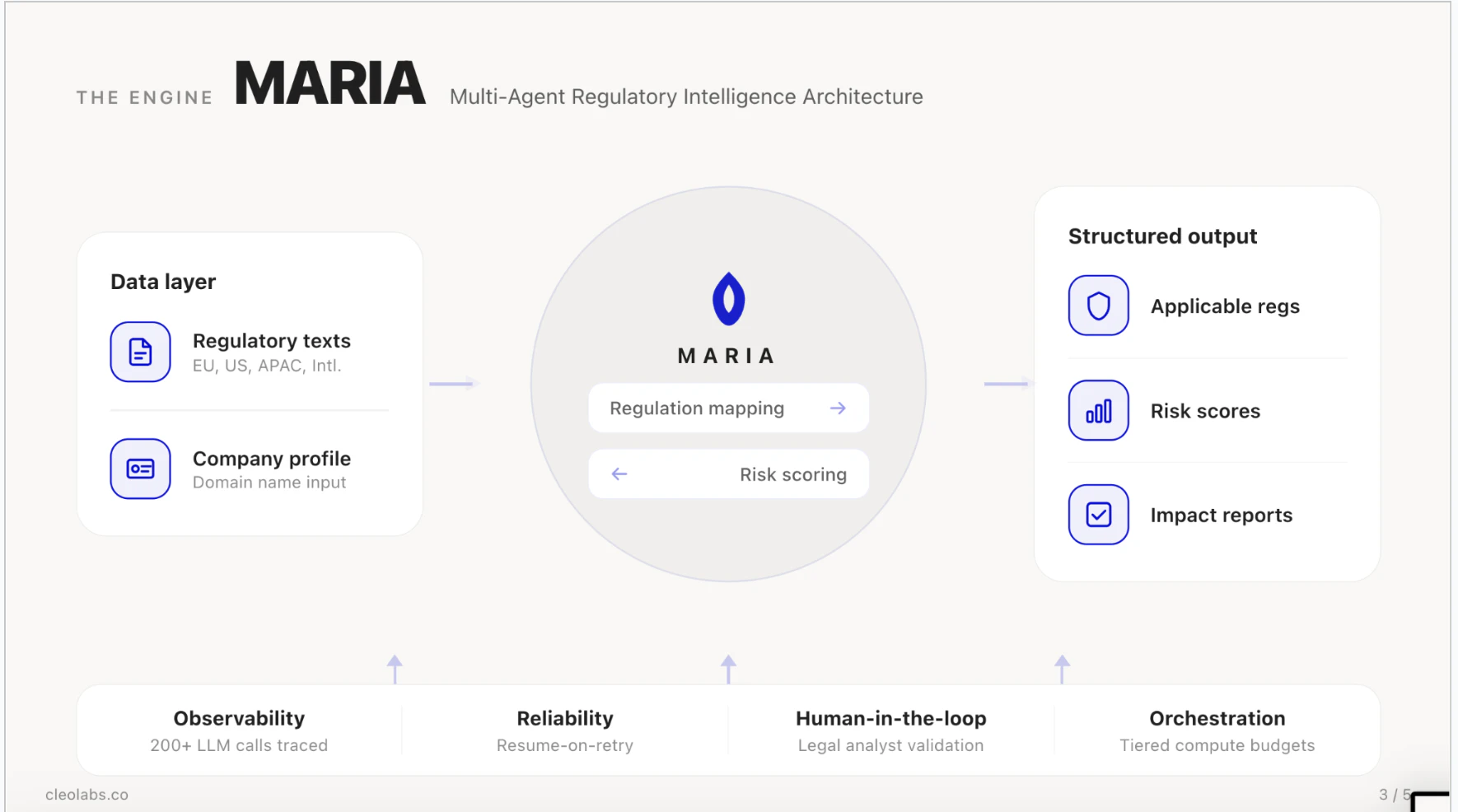

Cleo Labs 切入了一个被严重低估的“硬骨头”市场:实体产品的全球合规。其宣称的价值并非简单的信息聚合,而在于用一套名为MARIA的多智能体AI管道,试图将高度非结构化、分散且动态的各国监管条文,转化为结构化的、可操作的合规清单。这背后的真正挑战不是数据量,而是理解的准确性、更新的及时性以及对法规冲突的识别能力。

产品最犀利的卖点在于“人类在环验证”。在合规领域,AI的“幻觉”是致命伤,单纯依赖大语言模型输出无法建立信任。Cleo通过法律专家对AI输出进行校验,本质上是在用AI承担繁重的初筛和监测工作,而将最终的质量控制锚定在人的专业上。这是一种务实的“AI增强”模式,而非天真的“AI替代”模式,符合企业客户在关键任务上的风险厌恶心理。

其长期战略押注“数字产品护照”等监管趋势极具前瞻性。这不仅是工具,更是试图成为未来产品合规数据的基础设施。然而,其面临的考验同样严峻:如何保证对19,000多个监管源头的覆盖深度而不仅仅是广度?如何定价才能让早期创业公司和大型跨国品牌都觉得物有所值?以及,当法规解释存在灰色地带时,其“验证”的法律责任边界如何界定?如果它能持续证明其图谱的可靠性与行动指导的有效性,它确实有可能重塑企业全球化扩张的合规成本结构与决策流程。

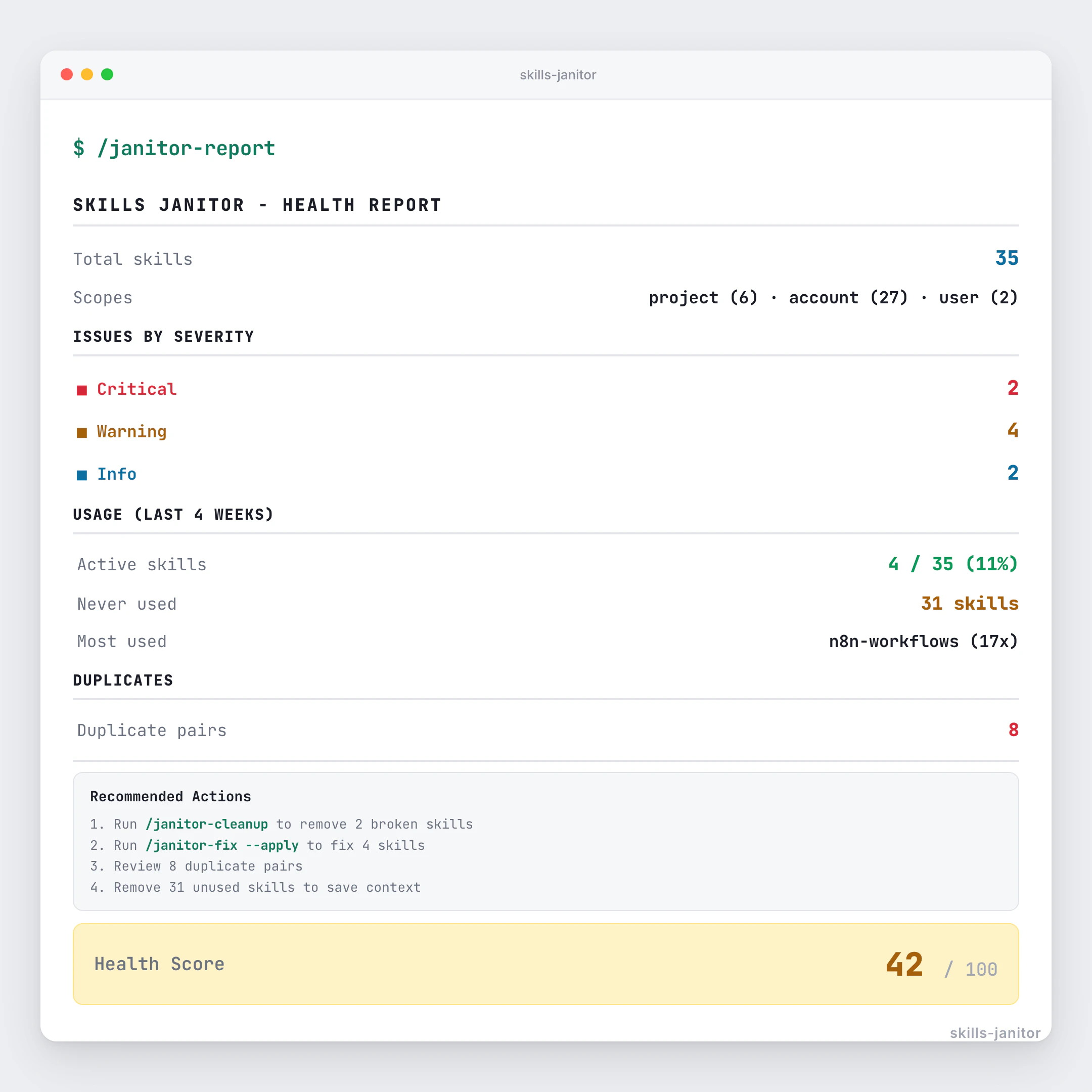

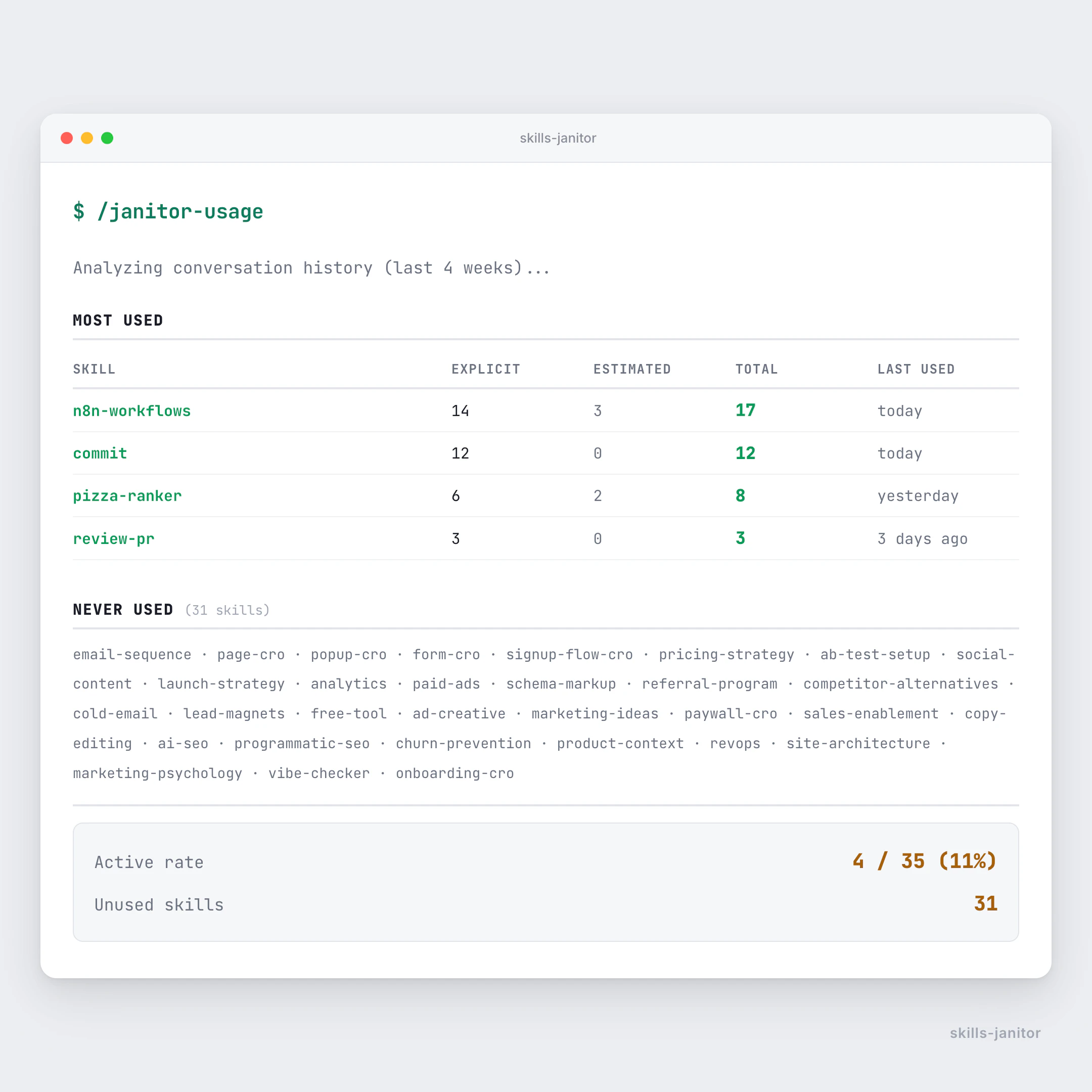

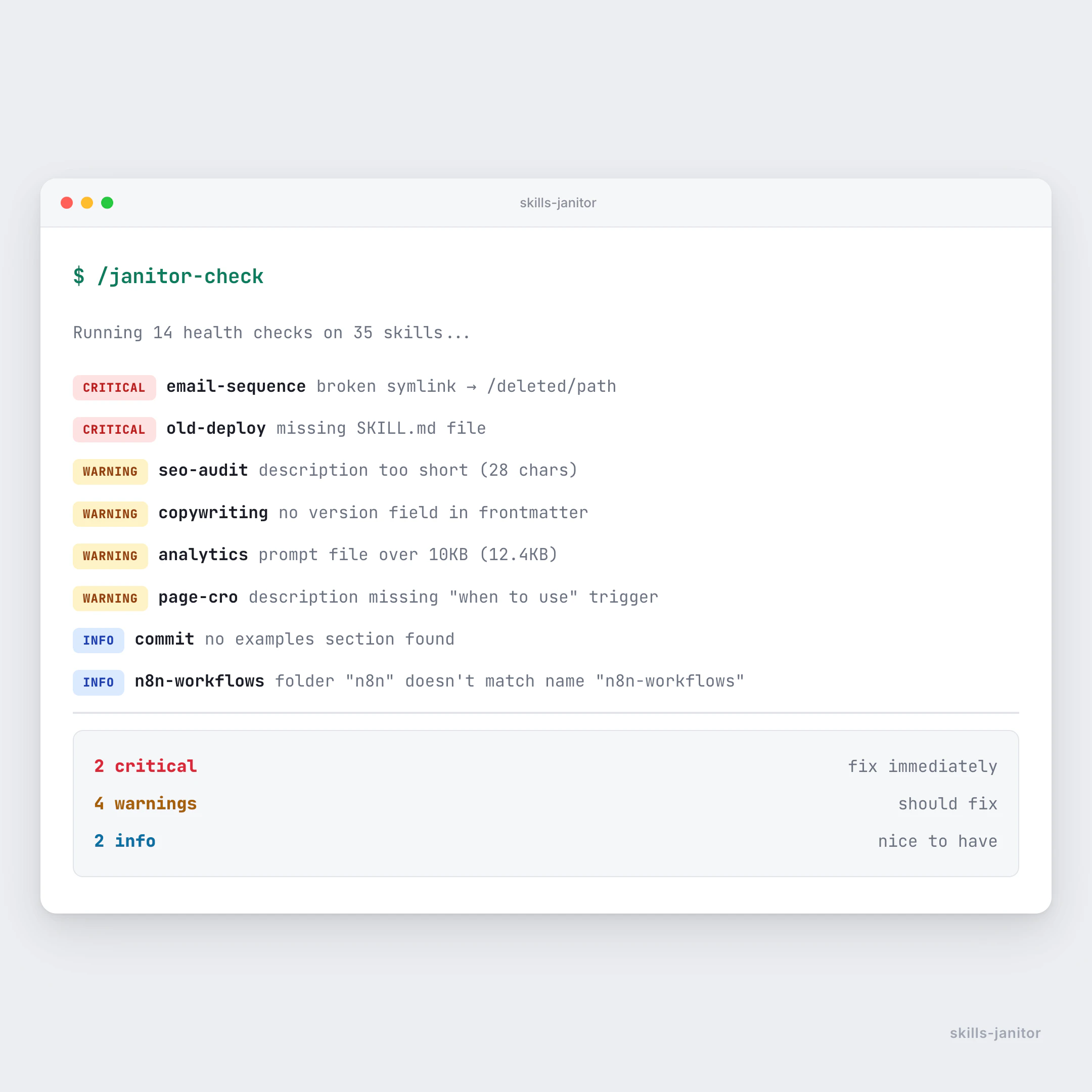

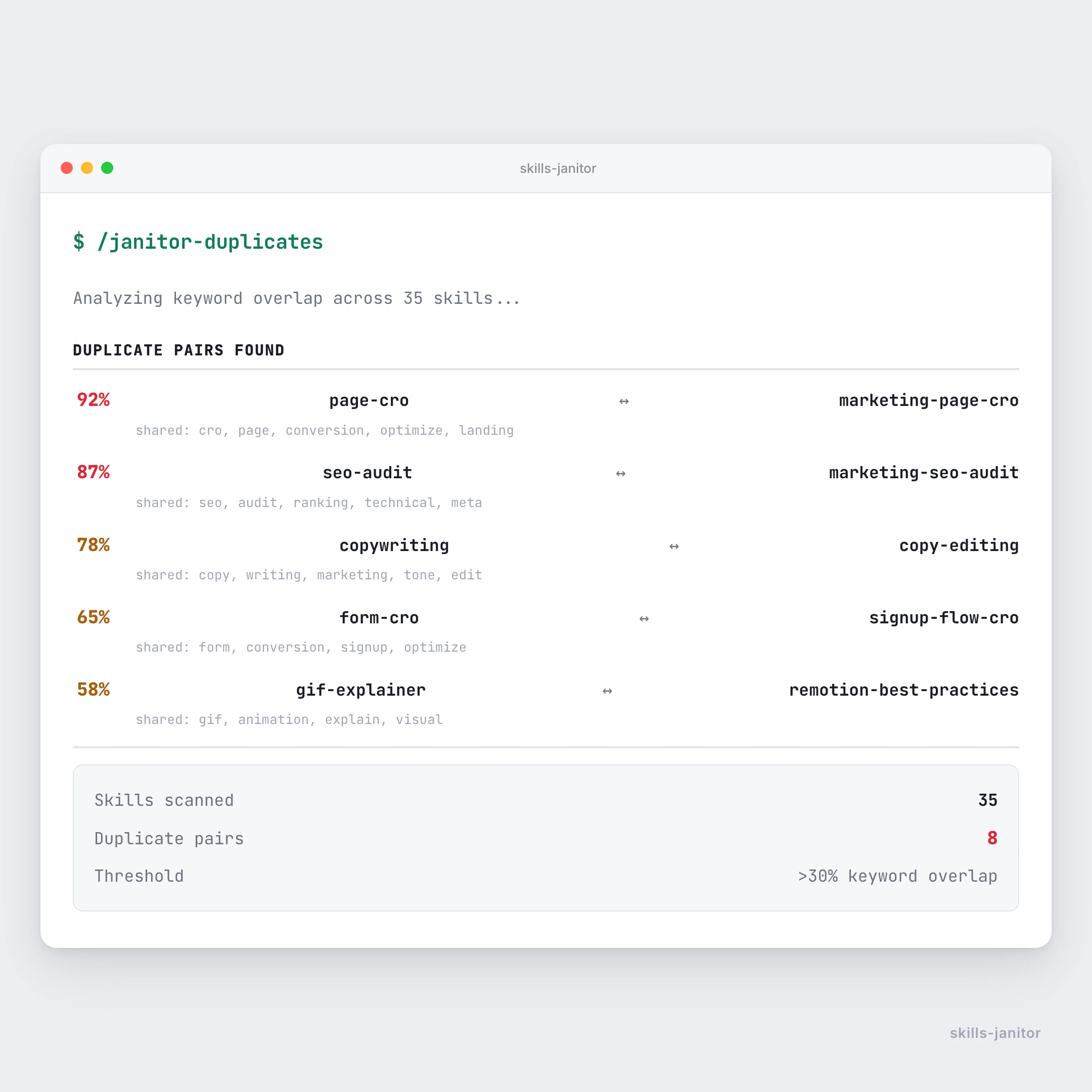

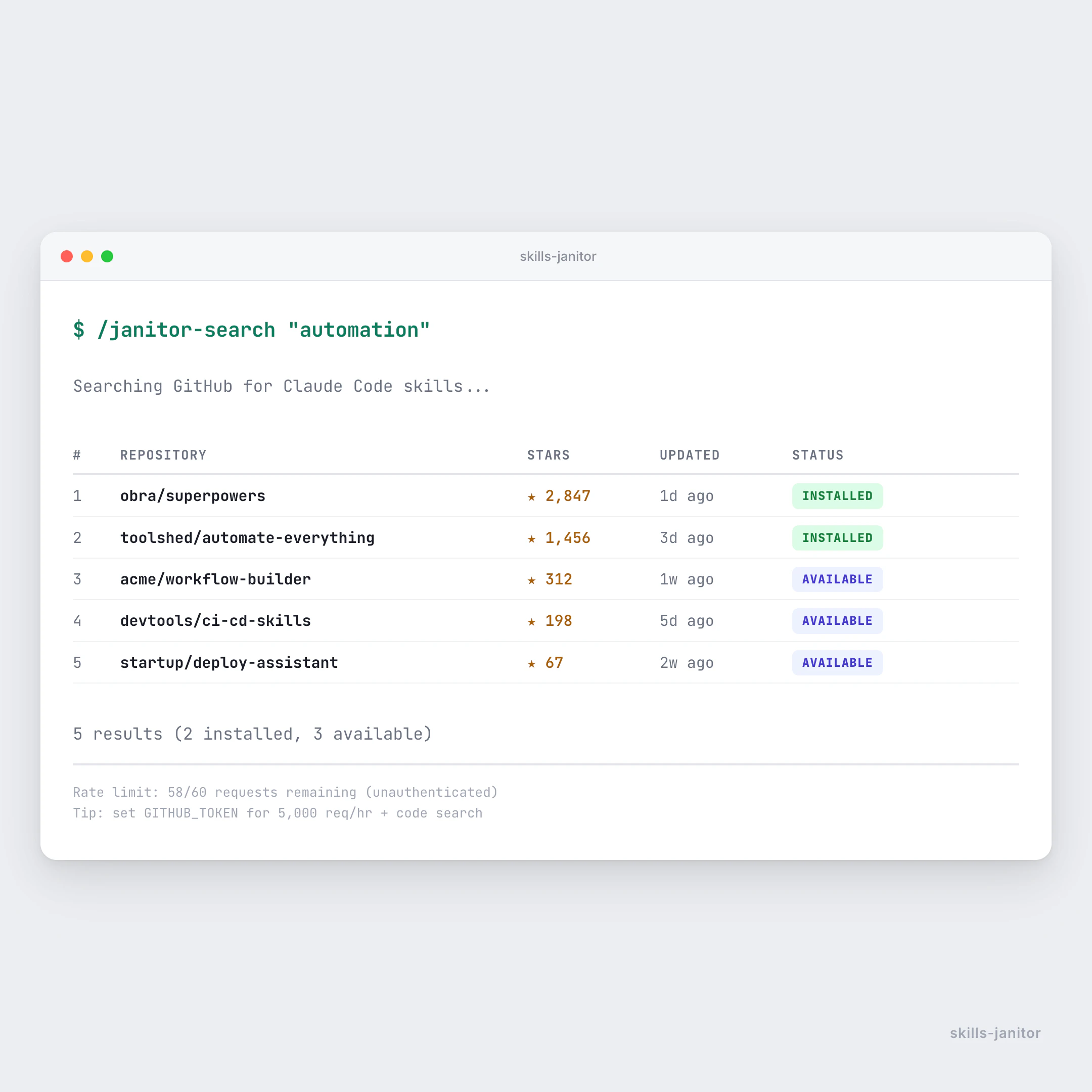

一句话介绍:一款用于审计、去重、整理和追踪Claude Code技能使用情况的本地脚本工具,解决了AI技能库臃肿、重复和难以管理的问题。

Open Source

Developer Tools

Artificial Intelligence

GitHub

AI技能管理

开发工具

代码审计

去重优化

开源工具

上下文窗口优化

Claude生态

效率工具

本地脚本

用户评论摘要:用户普遍认同技能库臃肿痛点,11%使用率引发共鸣。核心关注点包括:重复技能检测的具体算法、使用数据来源(是否分析本地日志)、未来是否会基于使用模式或Token成本识别低效技能,以及如何集成到自动化清理流程中。

AI 锐评

Skills Janitor揭示了一个正在浮现的“AI后工具化”市场。其真正价值不在于简单的文件清理,而在于首次为AI技能生态提供了“可观测性”。当开发者热衷于为Claude等AI编码助手堆砌技能时,却忽略了两个致命问题:一是未经治理的技能库会持续吞噬宝贵的上下文窗口,直接拉低每次查询的性价比;二是技能之间的隐性冲突与功能重叠,可能导致AI行为不可预测。

产品思路犀利地指向了AI原生工作流的一个盲点——我们习惯于用人类项目管理思维管理代码,却尚未建立管理AI能力的范式。它本质上是一个“AI技能治理平台”的雏形。用户评论中关于“基于Token成本分析”和“自动化每周清理”的建议,恰恰点明了其未来可能演进的商业方向:从清理工具变为优化AI计算资源消耗的必备套件。

然而,其当前形态也暴露了局限性。作为Bash+Python脚本,它更偏向极客用户,未能将数据转化为更直观的优化洞察。开源免费虽利于传播,但若不能持续迭代出更深层的分析功能(如技能调用链分析、场景化使用建议),很可能被集成到更完整的AI开发平台中,成为一个功能模块。它捅破了AI技能管理的第一层窗户纸,但能否从“脚本”进化为“标准”,取决于其能否定义出下一代AI技能管理的核心指标与最佳实践。

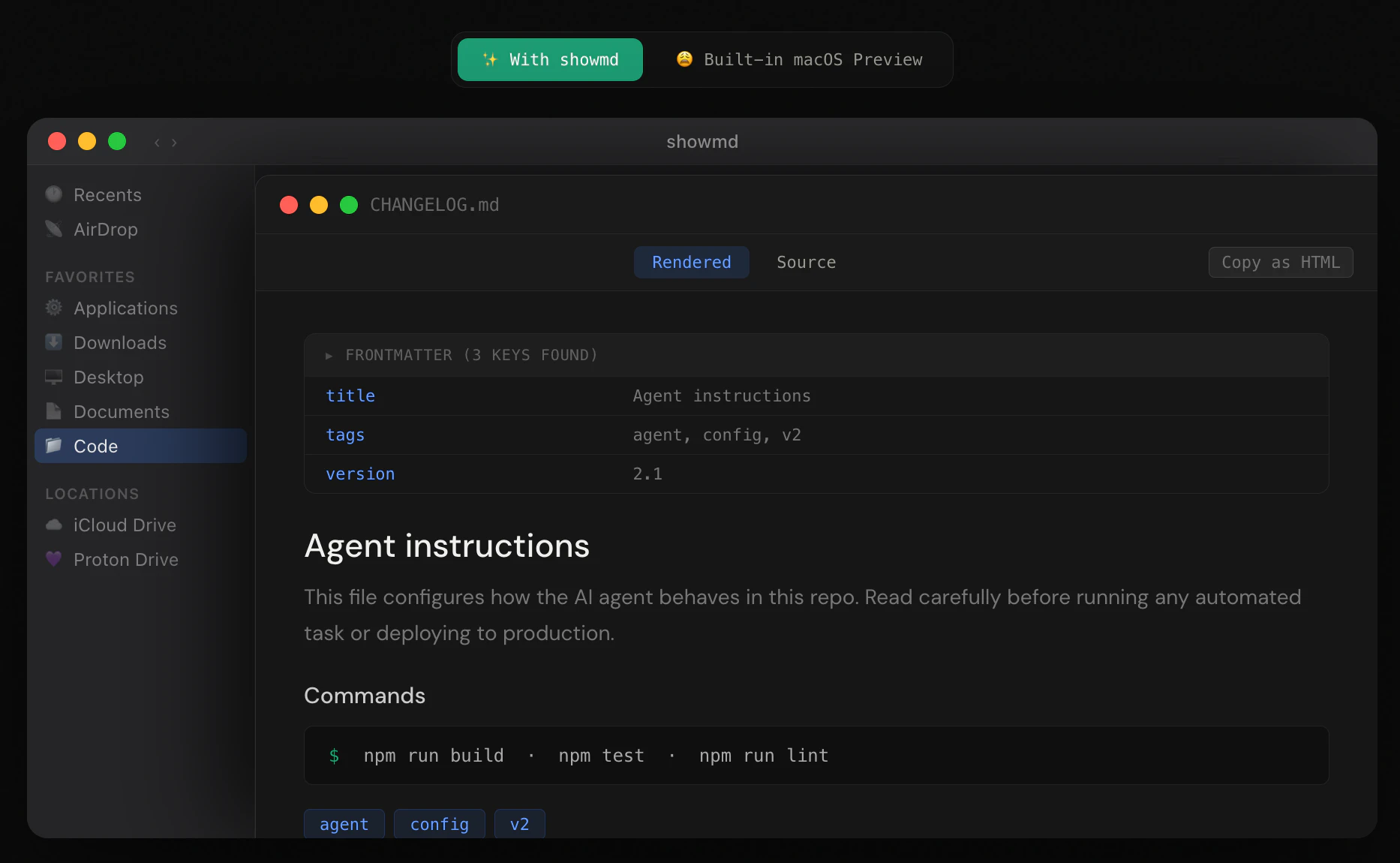

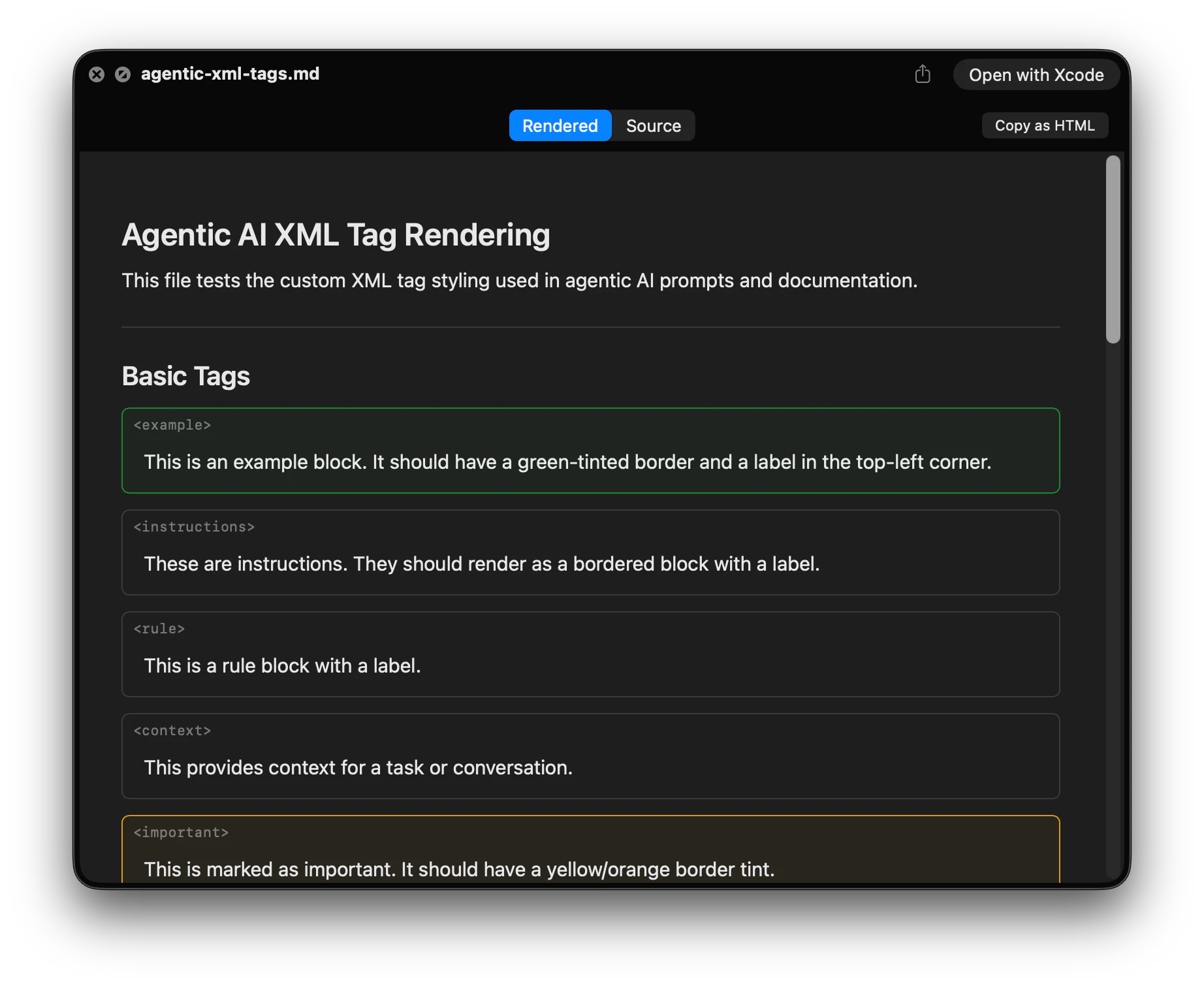

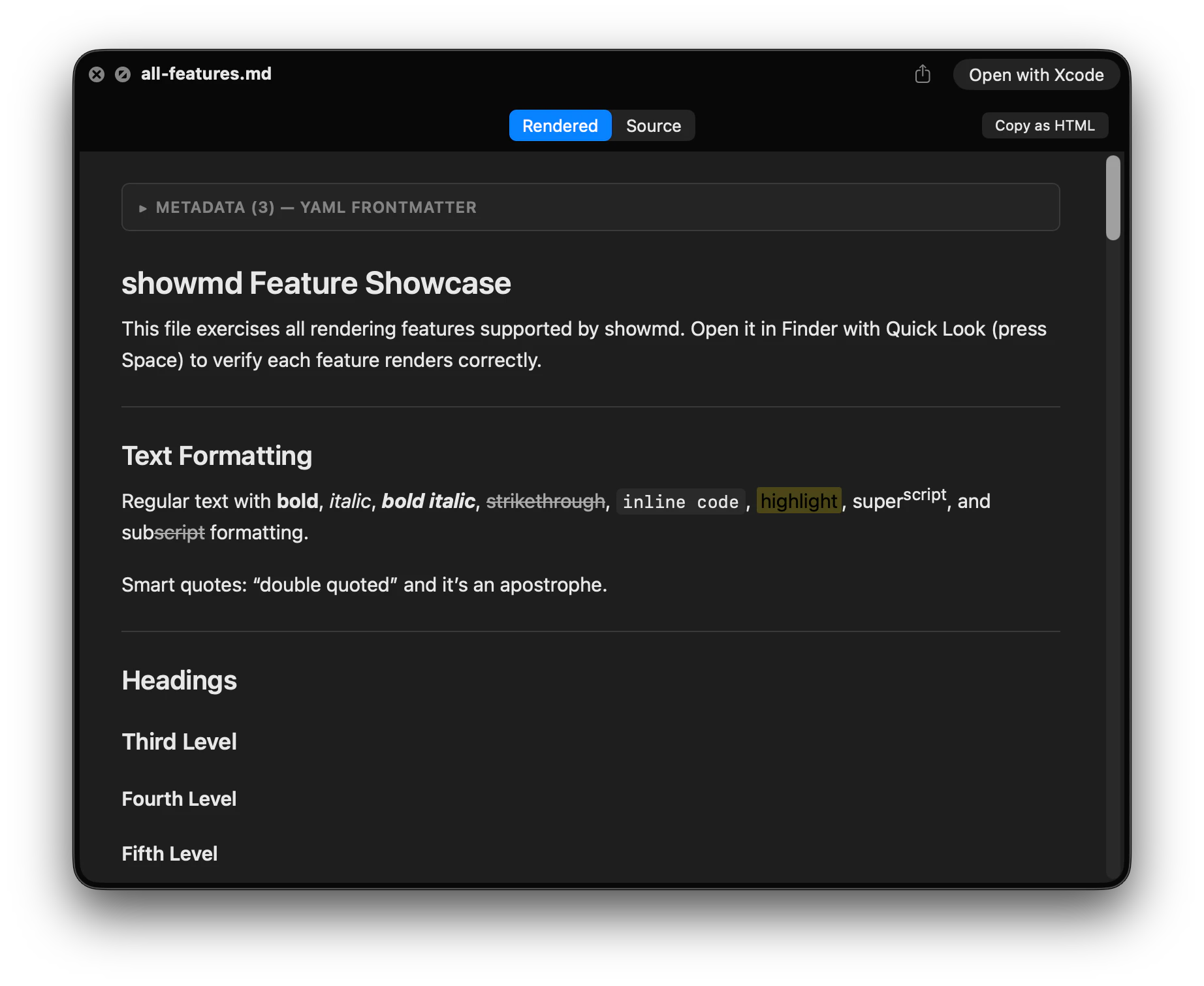

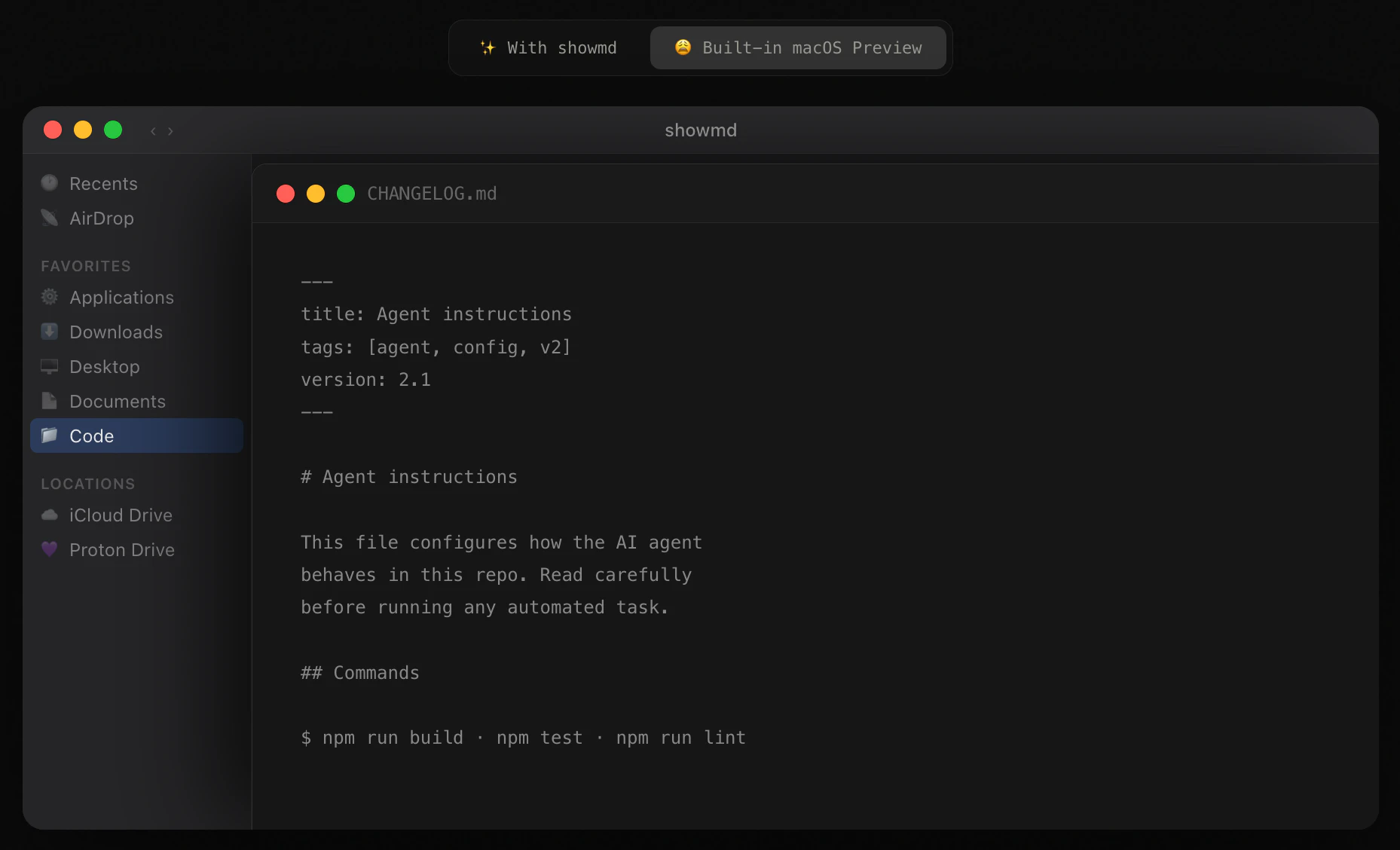

一句话介绍:一款免费的macOS快速查看扩展,在Finder中按空格即可将Markdown文件(尤其是含YAML元数据和AI代理XML标签的文件)渲染为美观易读的预览,解决了开发者日常查看复杂Markdown源码时体验割裂的痛点。

Mac

Open Source

User Experience

GitHub

macOS工具

快速查看扩展

Markdown预览

开发者工具

生产力工具

离线应用

AI工作流

YAML渲染

开源工具

用户评论摘要:用户普遍赞赏其解决了长期痛点,特别是对AI工作流中复杂Markdown的渲染效果。反馈包括安装时的网络报错(已解决)、对实时预览功能的询问,以及对其精准市场定位(反“纯文本”哲学)的肯定。

AI 锐评

showmd的价值远不止于“又一个Markdown预览器”。它精准地捕捉到了一个被主流工具忽视的生态位变迁:Markdown已从简单的写作语法,演变为AI代理时代承载复杂结构化指令(YAML元数据、自定义XML标签)的配置与文档载体。传统预览器固守“纯文本”教条,在此场景下已构成体验断层。

其真正犀利之处在于“理解上下文”。它将YAML前端元数据解析为可折叠表格,将AI代理标签渲染为带标签的边框区块,这本质上是对文件语义的解读和可视化重构,而不仅是样式渲染。这标志着预览工具从“格式转换”向“内容理解”迈出了一小步,贴合了当下AI开发工作流中人类需要频繁审阅、调试结构化提示词的实际需求。

然而,其作为Quick Look扩展的形态,既是优势也是局限。优势在于深度集成系统,体验无缝;局限在于功能场景被严格限定在“预览”,无法介入编辑流。用户关于“实时预览”的询问恰恰击中了这一边界。产品目前明智地选择了做深单点体验,而非泛功能覆盖。其成功揭示了工具演化的一个方向:在基础技术栈(如Markdown)被赋予新内涵的转折点,通过深度适配新场景的微观创新,能迅速建立竞争壁垒。但长远看,其理念若被主流编辑器吸收内化,其独立价值将面临挑战。

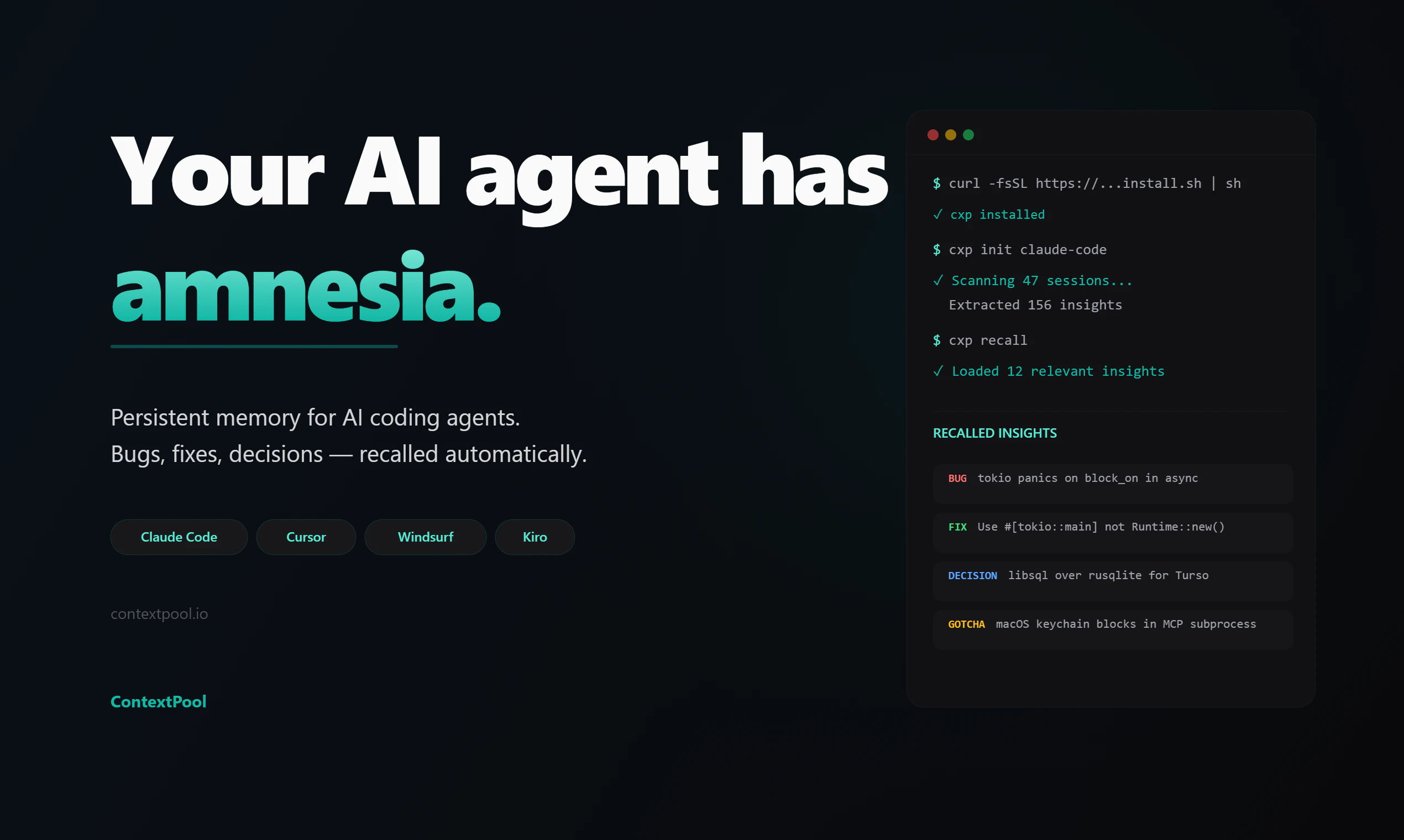

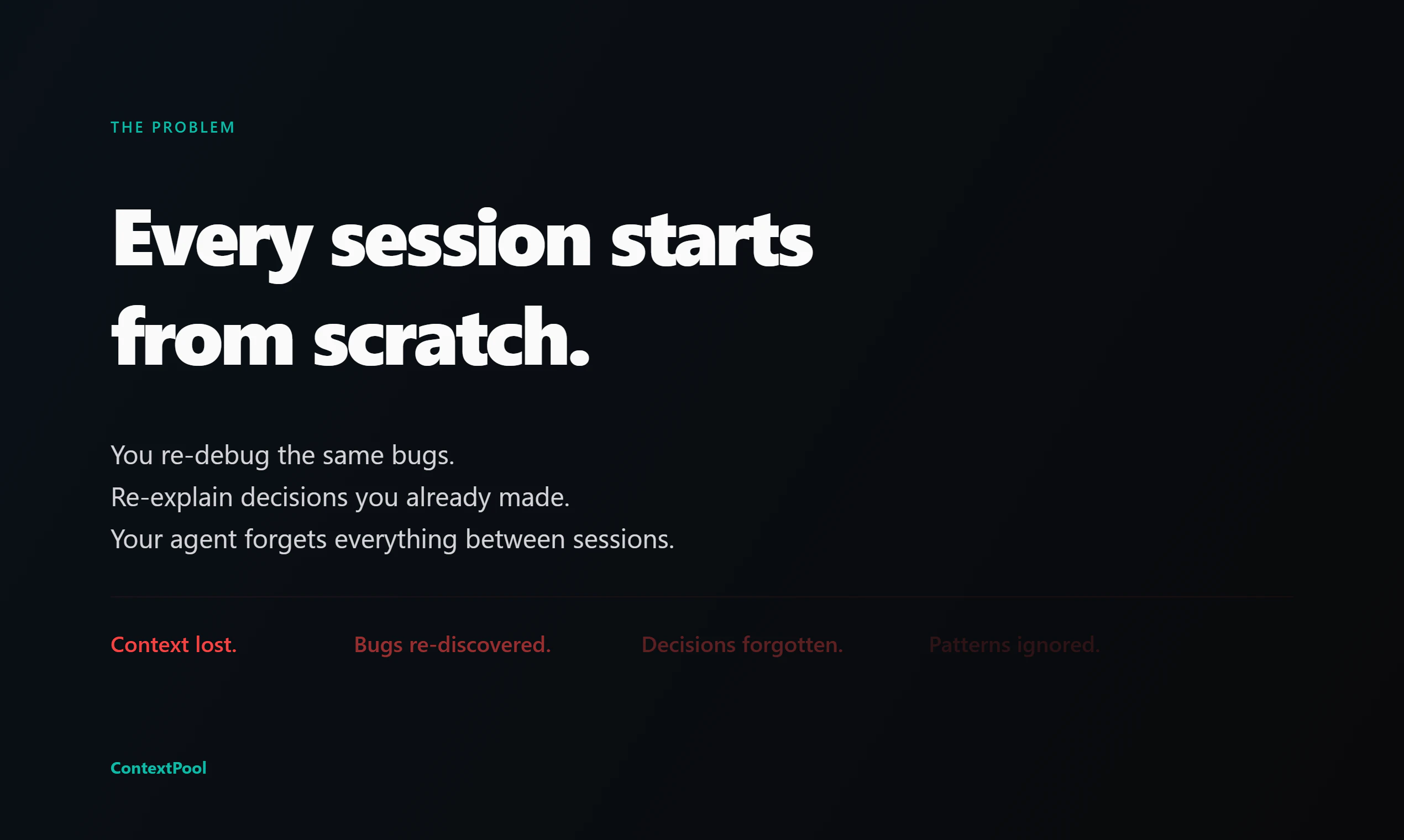

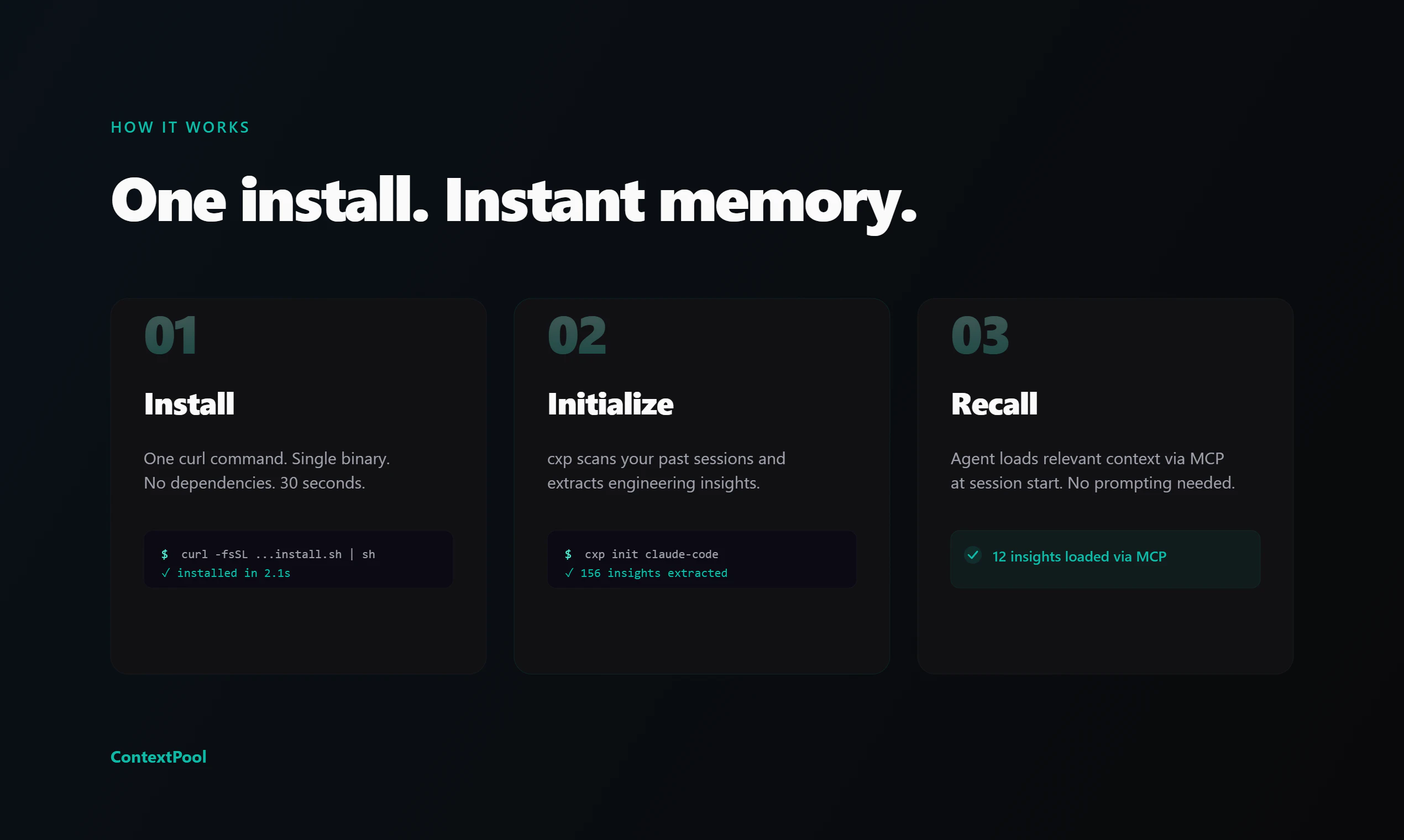

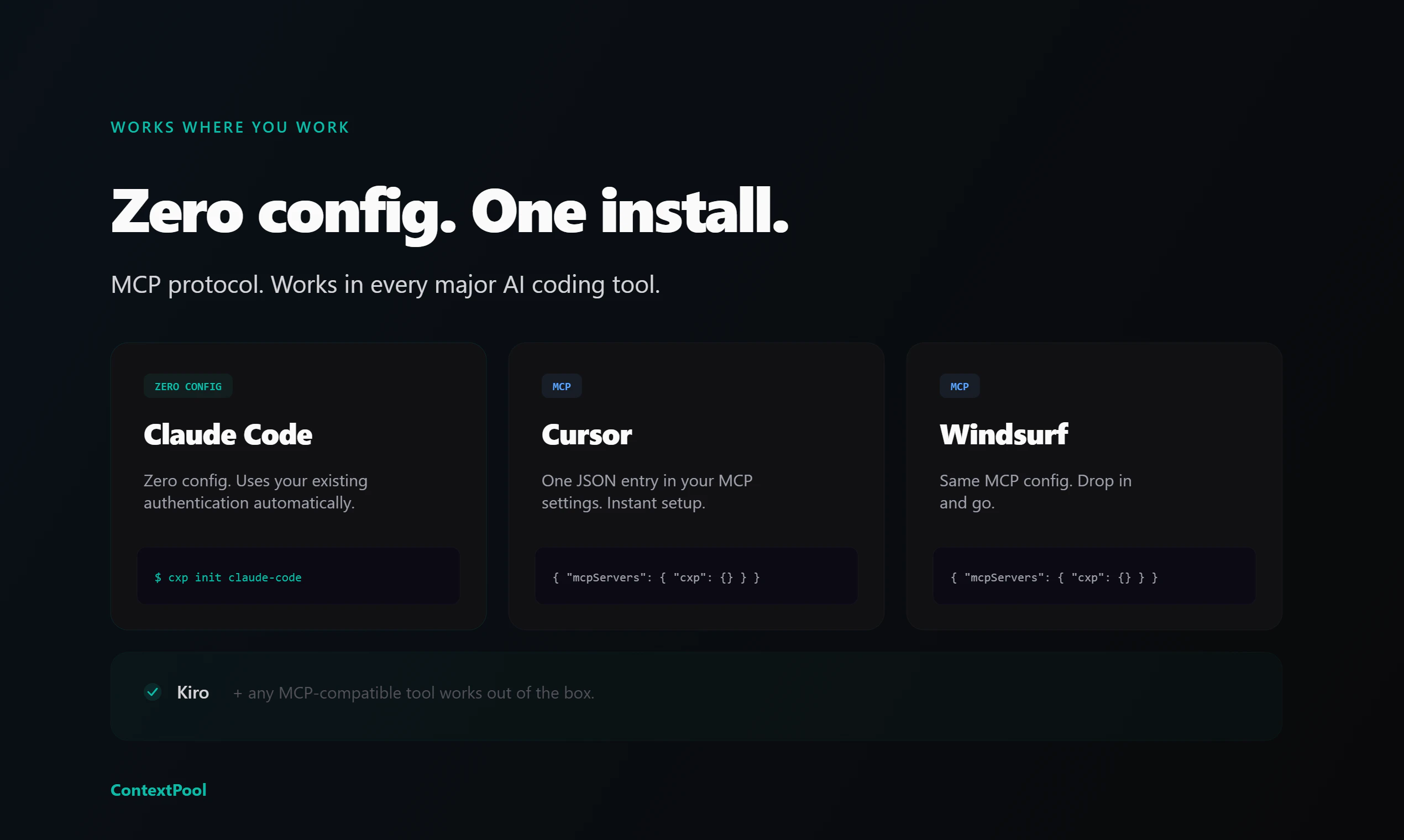

一句话介绍:ContextPool为AI编程助手(如Cursor、Claude Code)提供持久记忆层,自动从历史会话中提取工程洞察,解决开发者反复向AI重述项目背景、已修复Bug和设计决策的痛点。

Open Source

Developer Tools

Artificial Intelligence

GitHub

AI编程助手

持久记忆

开发效率工具

上下文管理

团队知识库

开源

MCP协议

本地优先

工程洞察提取

代码会话管理

用户评论摘要:用户普遍认可其解决“AI健忘症”的核心痛点,尤其关注团队记忆、多项目/多技术栈支持、过时/错误记忆的清理机制、以及从大量会话中提取高价值洞察的有效性。部分用户将其与手动维护文档的方式对比,询问其增量价值。

AI 锐评

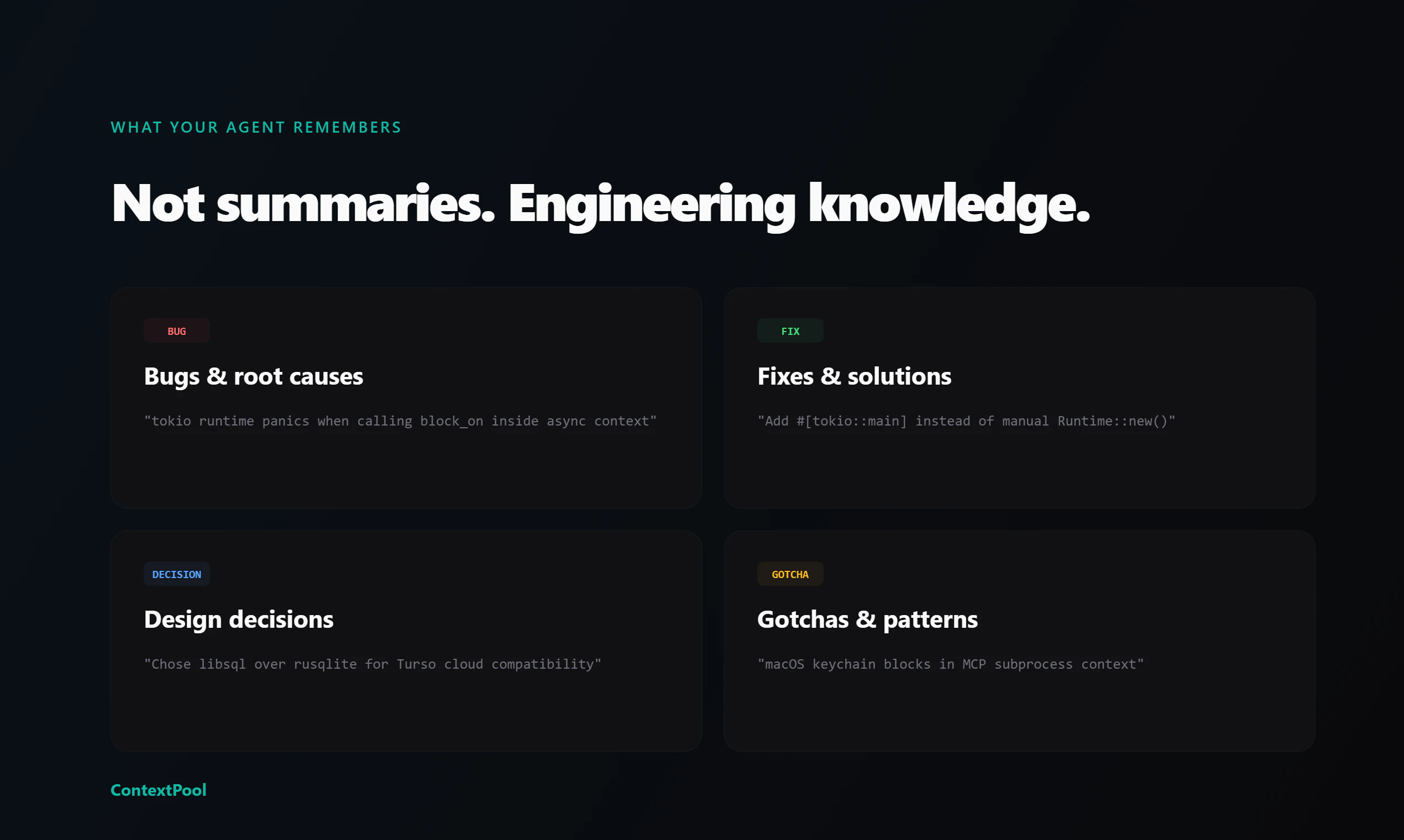

ContextPool切入了一个精准且日益凸显的“AI后遗症”市场:随着开发者深度依赖AI结对编程,会话的“原子性”和“失忆性”成了新的效率瓶颈。它并非简单缓存聊天记录,而是试图构建一个结构化的、可检索的“工程记忆体”,其真正价值在于将非结构化的对话流,蒸馏为可被后续AI直接消费的“提示词增强块”。

产品设计体现了对开发者心理和工程现实的洞察:本地优先保障隐私与控制权;以MCP协议集成,避免生态锁死;输出Markdown格式,保持LLM友好性。然而,其面临的挑战远大于技术实现。首当其冲的是“记忆污染”问题——过时、错误或矛盾的决策如何被识别和清理?虽然团队提到了未来规划,但这本质是一个知识管理难题,而非单纯的技术问题。其次,“提取”的可靠性存疑:LLM能否从冗长会话中准确识别出真正关键、普适的“洞察”,而非无关噪音?这直接决定了工具是“智能摘要”还是“垃圾生成器”。

最值得玩味的是其“团队同步”功能。它试图将个人记忆升格为组织资产,但评论中“第二个文档坟墓”的担忧一针见血。如果缺乏基于使用反馈的动态权重、生命周期管理和权威裁决机制,共享池极易沦为信息沼泽。产品目前将冲突解决方案抛回给AI和用户,这在早期可行,但在规模下可能适得其反。

总体而言,ContextPool是一次必要的尝试,它标志着AI编程工具从“单次会话工具”向“持续学习系统”演进的关键一步。但其长期成功的标尺,不在于它记住了多少,而在于它如何优雅地“忘记”和“优选”,从而真正成为团队中那位“不忘事、不啰嗦、且经验持续增长”的沉默伙伴。

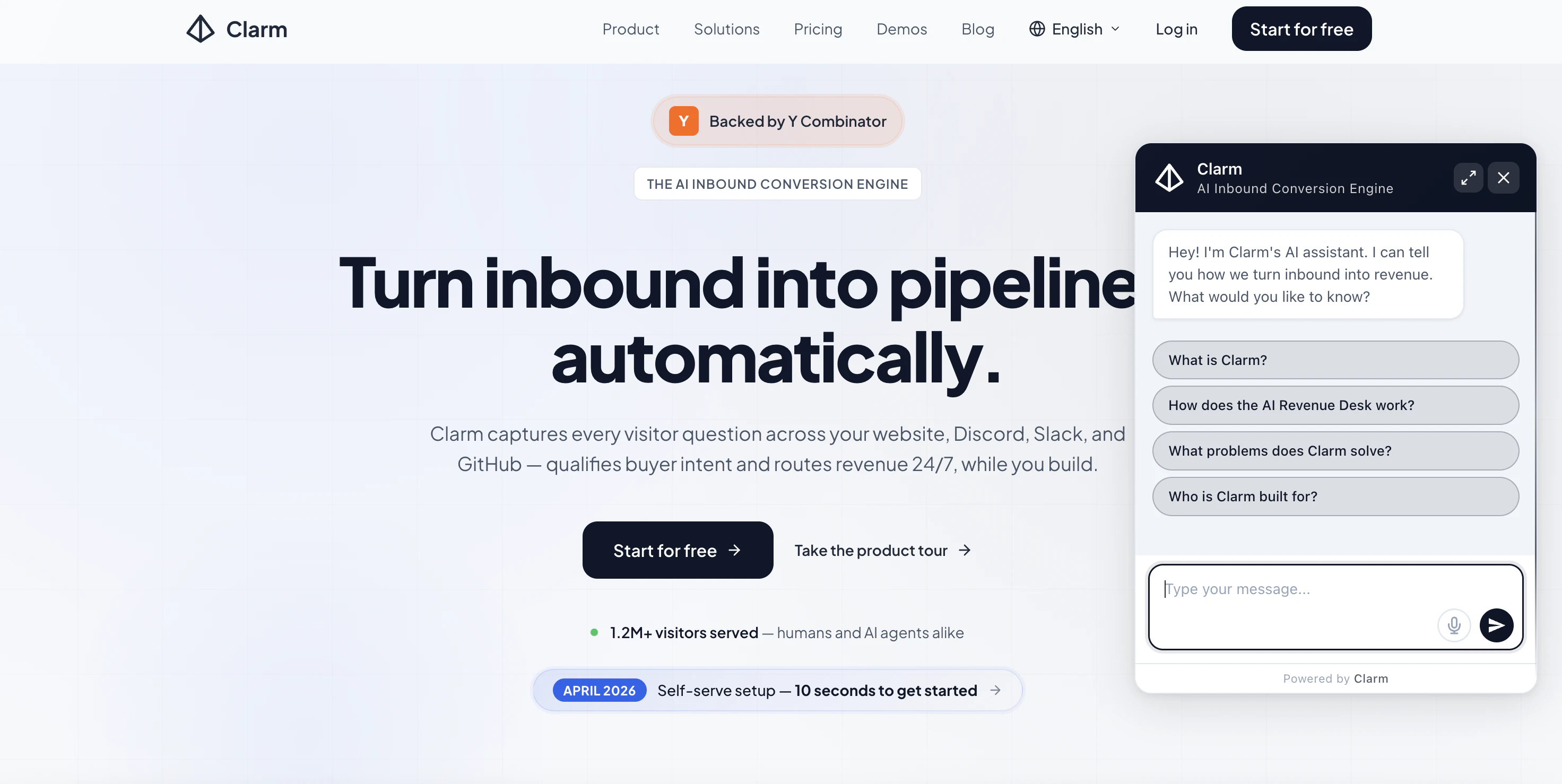

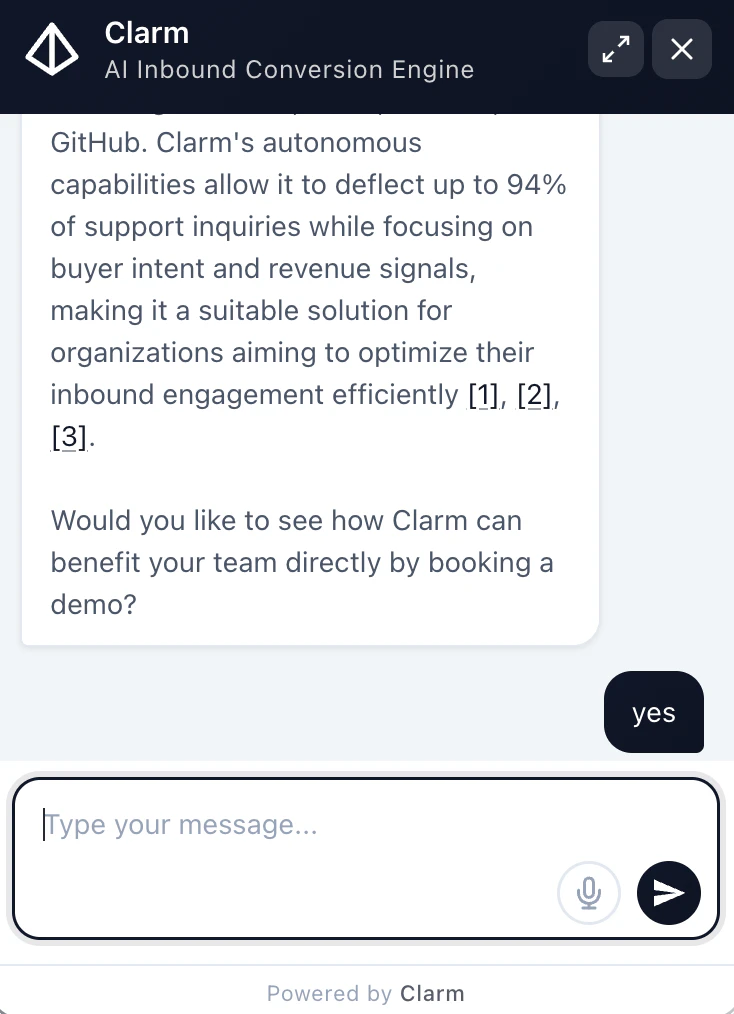

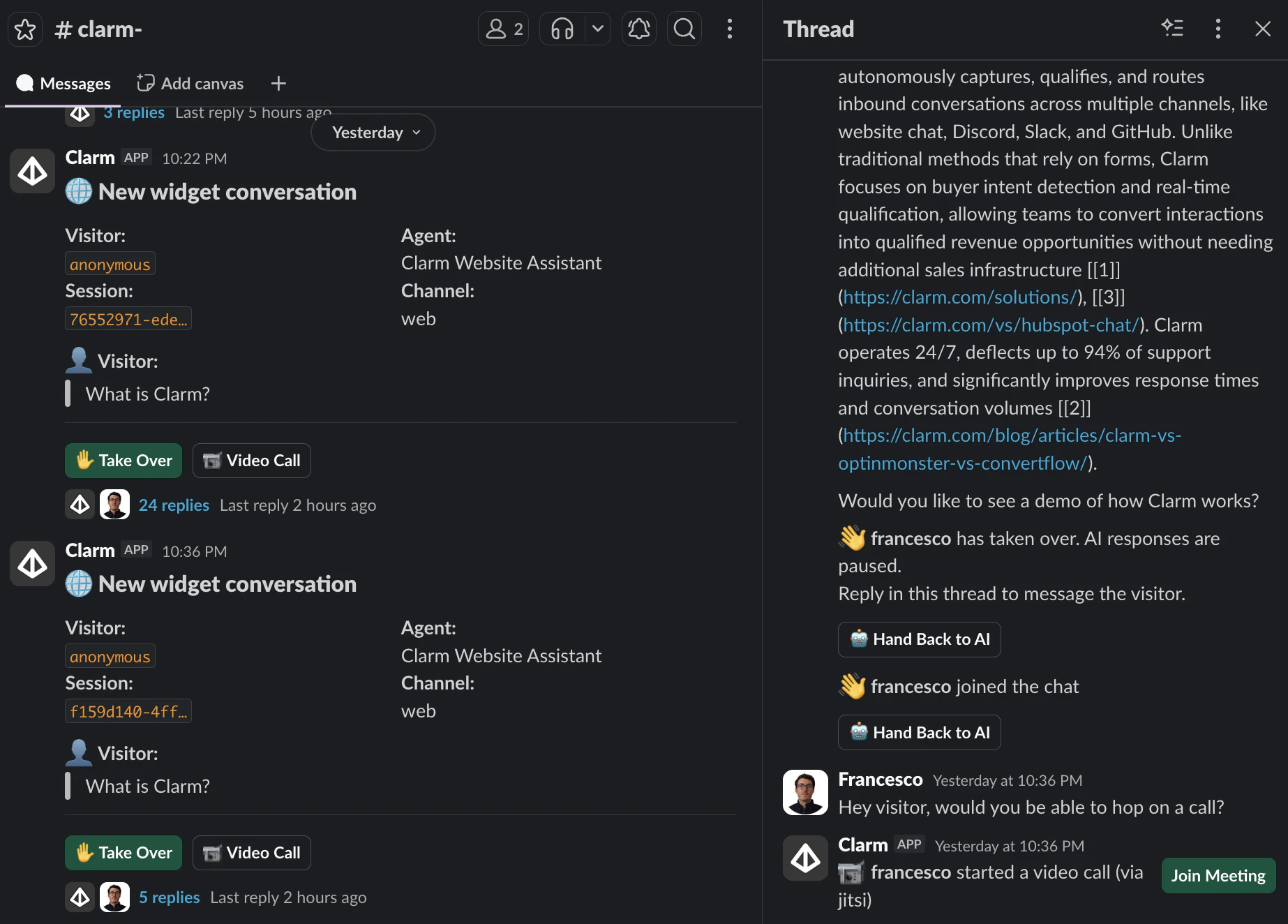

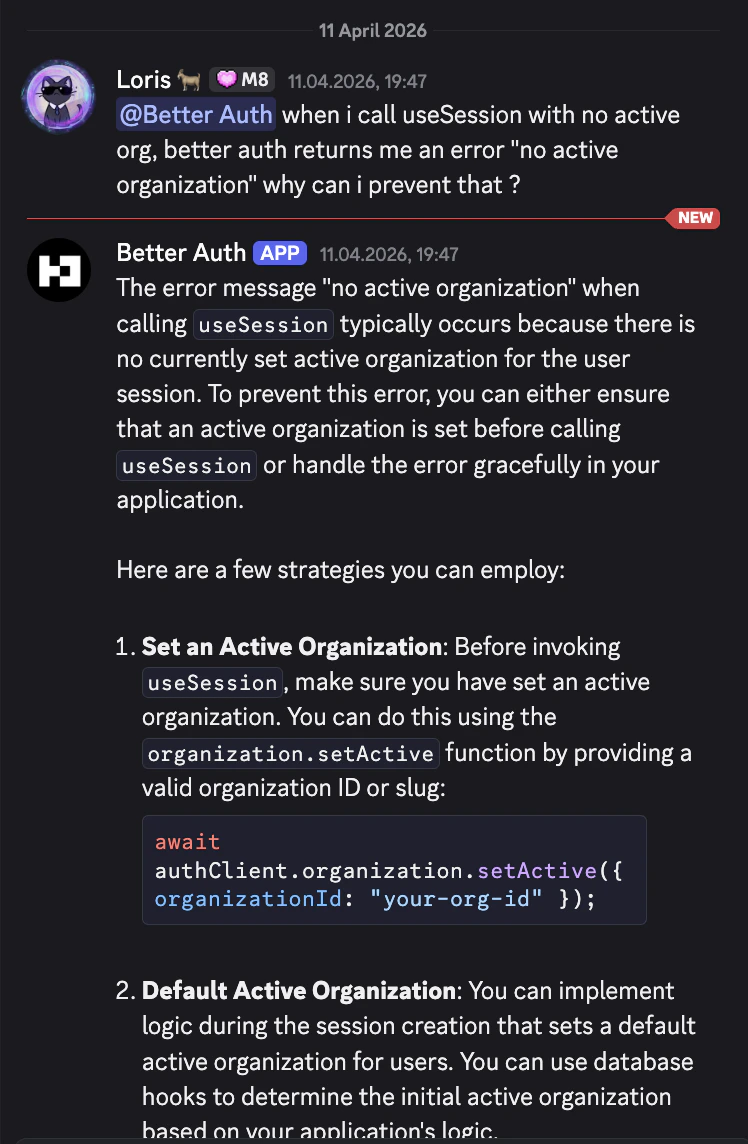

一句话介绍:Clarm是一款AI驱动的多渠道潜在客户捕获与筛选层,通过在网站、Slack、Discord、GitHub和邮箱等渠道自动与访客互动,智能判断购买意向并精准路由,解决了企业在非工作时间或高流量场景下潜在销售线索大量流失的痛点。

Productivity

SaaS

Artificial Intelligence

AI销售助手

潜在客户筛选

多渠道互动

销售自动化

线索路由

B2B工具

YC孵化

SOC2合规

HIPAA合规

转化率优化

用户评论摘要:用户肯定其多渠道集成与提升销售线索的效果,创始人透露电商类客户转化率提升显著。主要问题与建议集中在:1) 请求更多案例数据和基准;2) 询问与LinkedIn等平台的集成可能;3) 关注路由准确性的长期评估与反馈机制;4) 关心知识库(如文档、代码库)更新的同步准确性;5) 询问与现有工具(如Crisp)的兼容性。

AI 锐评

Clarm的定位并非又一个聊天机器人,而是一个“AI驱动的入站转化层”,其真正价值在于将企业从“守株待兔”式的被动获客,转向在用户自然停留的数字场景中进行主动、智能的意图筛选与分流。产品敏锐地抓住了两个关键趋势:一是用户交互偏好从表单和静态页面向即时聊天的迁移;二是企业增长对合规性与全渠道覆盖的硬性要求。

其犀利之处在于“筛选”与“路由”的双重能力。它不满足于仅仅增加互动,而是旨在通过AI判断,将“询问定价的访客”、“在文档中徘徊40分钟的技术负责人”与“普通浏览者”区别对待,并将高价值线索实时推送给正确的人或下一步流程。这直接攻击了传统销售漏斗中最大的效率黑洞——线索浪费与响应延迟。创始人声称的“6倍销售相关消息增长”,其巨大提升空间恰恰源于传统B2B网站极低的基线互动率。

然而,光环之下亦有隐忧。首先,其核心壁垒在于意图分类模型的精准度与深度行业适配能力。评论中关于“路由质量长期评估”、“金融行业自定义筛选标准”的提问,直指其产品能否从“不错”变为“不可或缺”的关键。其次,尽管支持多渠道,但用户对LinkedIn集成的需求揭示了重要场景的缺失。最后,“半小时部署”的便捷性是一把双刃剑,在降低使用门槛的同时,也可能让企业低估了知识库优化与路由规则配置所需的持续运营成本。

总体而言,Clarm展现了一个清晰的愿景:成为企业数字前端的智能中枢。但它面临的挑战同样清晰:在避免成为“更复杂的聊天插件”的同时,需持续深化其AI的决策智能,并构建可验证的ROI闭环,才能真正坐实“转化层”的定位。

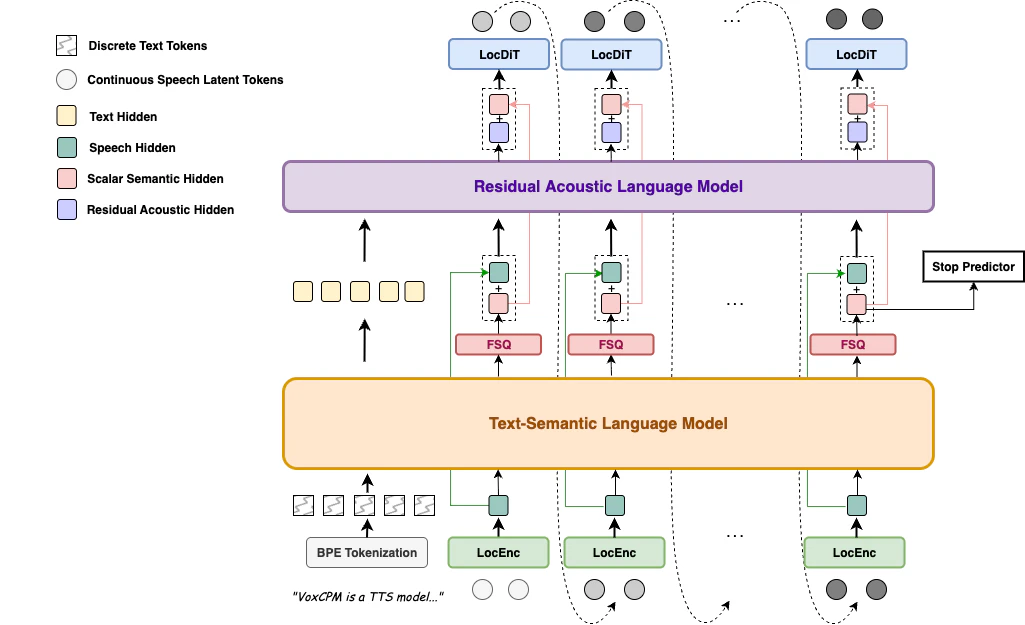

一句话介绍:VoxCPM2是一款仅20亿参数的开源文本转语音模型,通过文本描述直接生成或克隆可控音色,并输出48kHz高品质音频,解决了音视频创作、营销等领域中寻找定制化人声门槛高、流程繁琐的痛点。

Open Source

Artificial Intelligence

Audio

开源TTS模型

语音合成

音色设计

语音克隆

多语言支持

高保真音频

实时流式处理

轻量化AI

Apache-2.0协议

音频工作流

用户评论摘要:用户普遍惊叹其“能力密度”,对仅用文本提示生成新音色的“语音设计”功能感到惊喜。有评论者询问其在营销、播客等场景的实际应用案例。另有专业开发者关注其多语言混合发音的处理能力,体现出对生产环境实用细节的关切。

AI 锐评

VoxCPM2的发布,与其说是一次参数竞赛的胜利,不如说是一次对开源语音合成应用范式的精准重构。其真正价值不在于堆砌“48kHz”、“30种语言”等规格参数,而在于用仅2B的轻量级模型,将“语音设计”这一高阶概念产品化——让用户通过自然语言描述直接生成音色,这实质上将声音从“寻找/克隆”的稀缺资源,变成了“按需描述生成”的可设计元素,大幅降低了创意门槛。

然而,其面临的挑战同样清晰。评论中关于多语言混合发音的疑问,正戳中了当前TTS模型在复杂、自然语境下的通病:技术演示惊艳,但生产环境中的鲁棒性、一致性和情感细微控制仍是难关。所谓的“生产就绪”RTF指标,在真实工作流中能否经受住复杂脚本、长文本连贯性以及多说话人场景的考验,仍需观察。

它最大的冲击力在于其开源协议与轻量化体量。Apache-2.0许可使其能无障碍地嵌入各类商业产品,而2B参数则意味着更低的部署成本和硬件门槛,有望真正推动高质量语音合成从云端API服务下沉到边缘设备和个人工作台。但这把“利剑”也指向了自身:如何构建可持续的开发者生态与商业模式,来支撑其长期迭代,将是其能否从“惊艳的开源项目”蜕变为“定义行业的标准”的关键。它的出现,迫使整个行业重新思考:语音合成的核心价值,究竟是为已有声音做复制,还是为人类想象力提供新的发声工具?

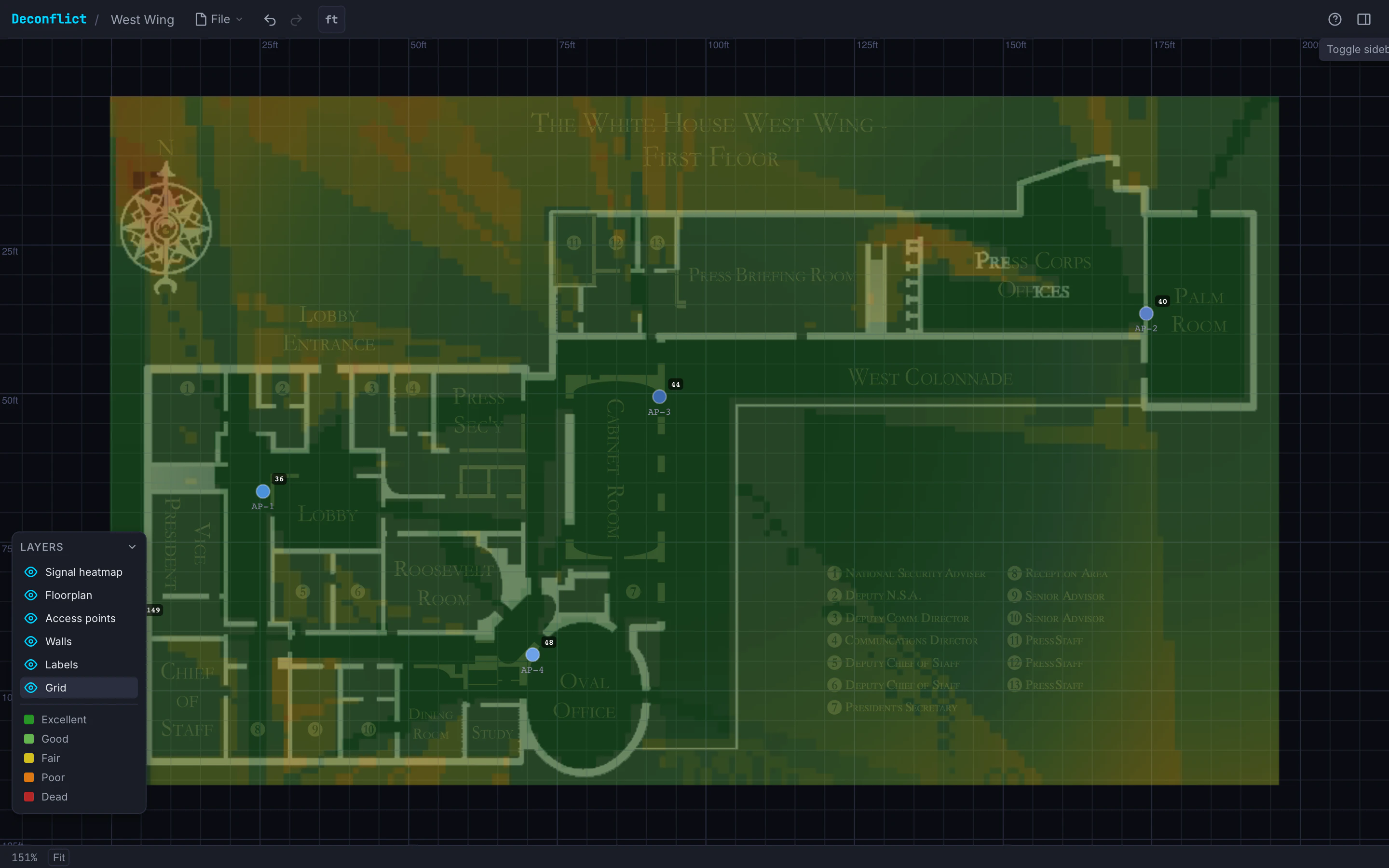

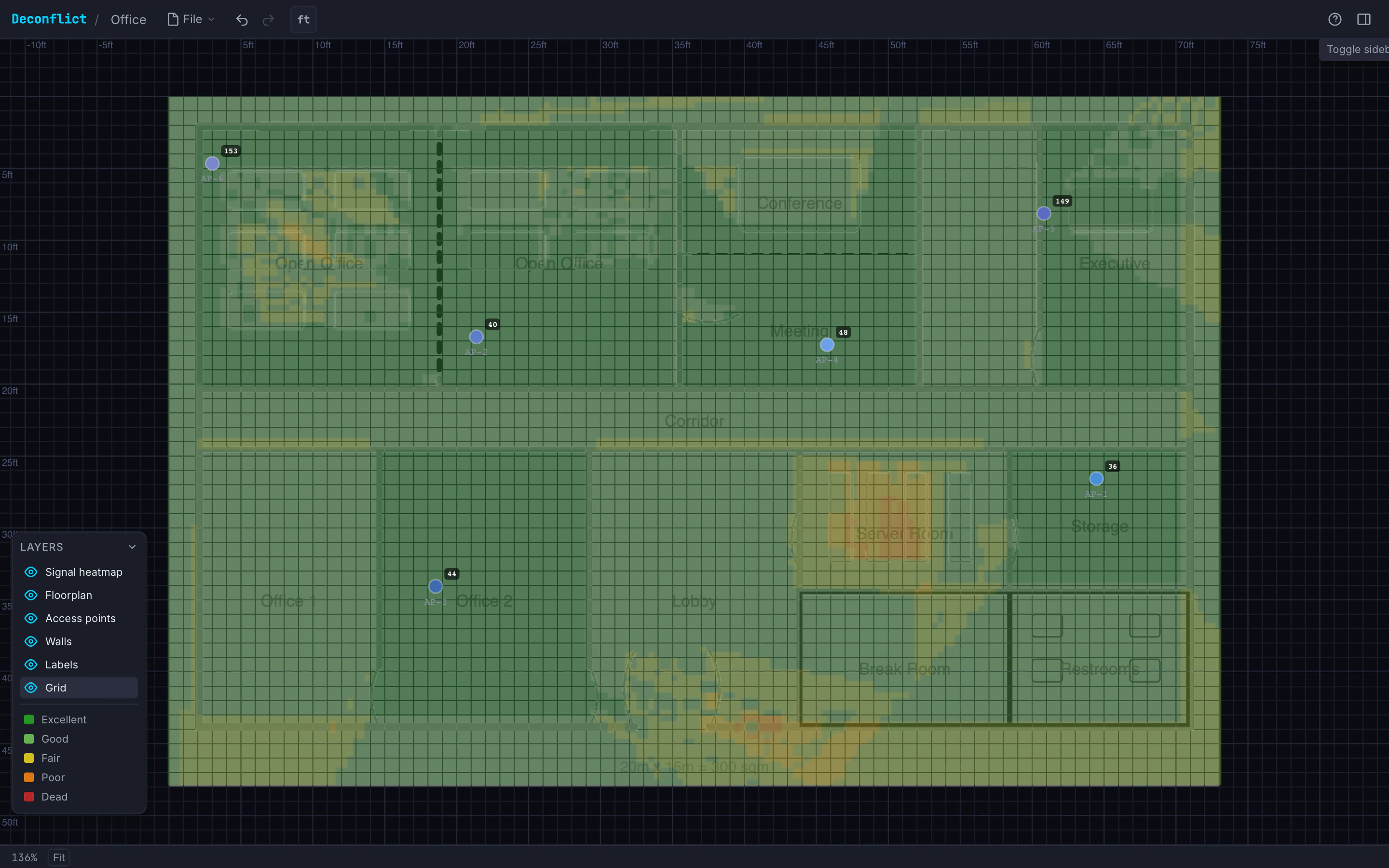

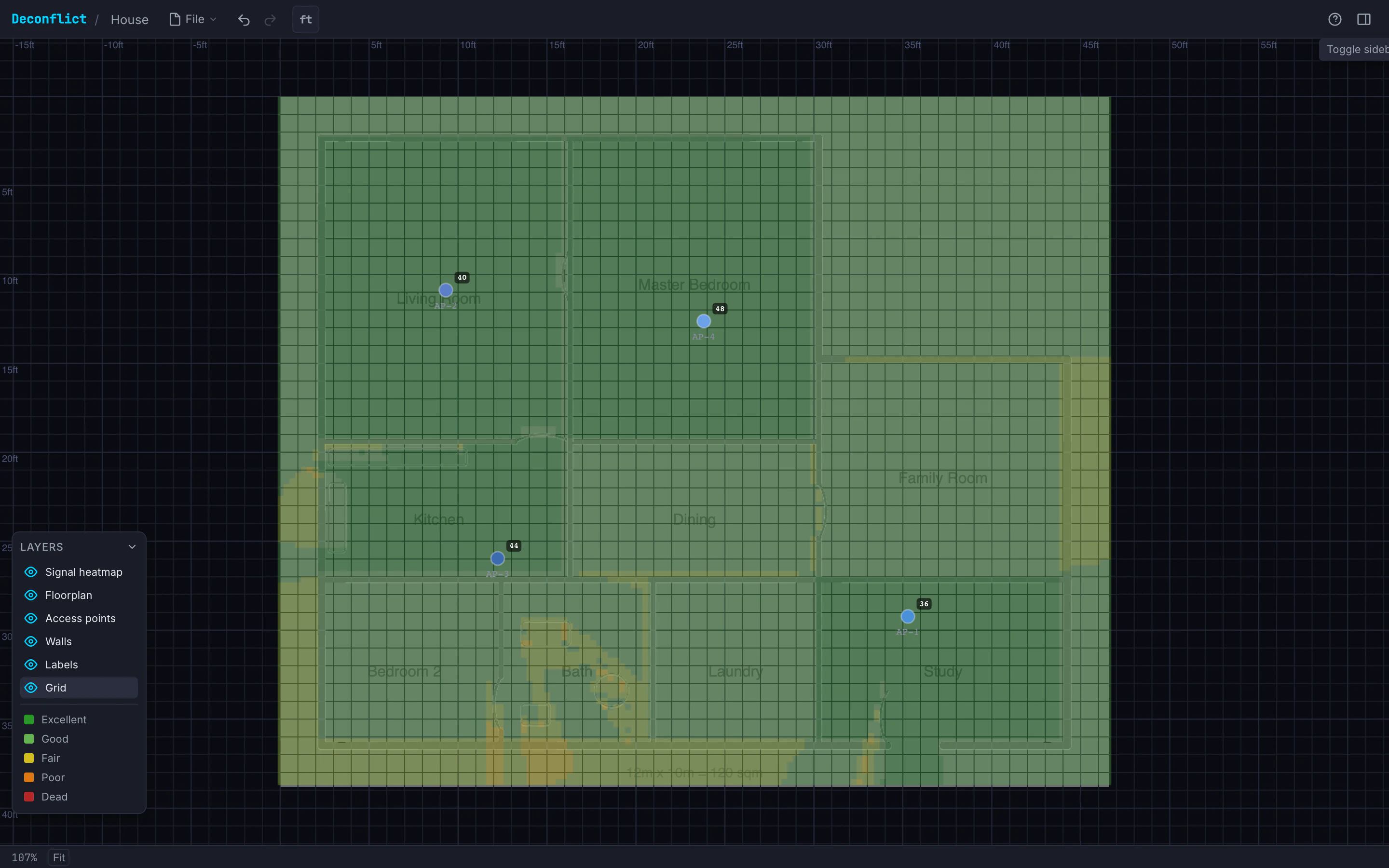

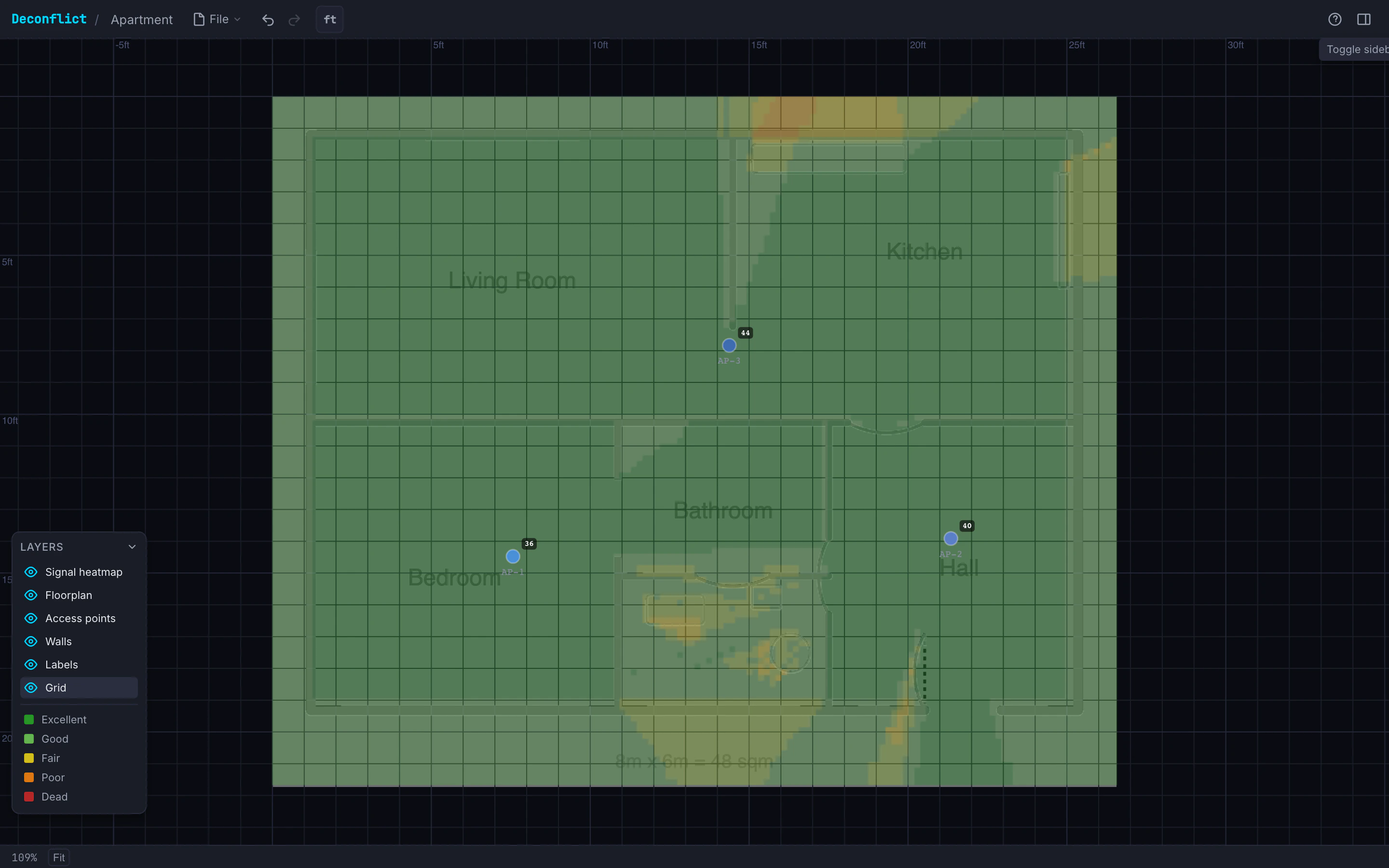

一句话介绍:一款免费开源的浏览器端WiFi规划工具,通过上传平面图、放置真实AP模型并模拟信号穿墙衰减,帮助家庭用户和IT人员可视化无线覆盖、自动分配信道并优化AP位置,解决WiFi盲区与信号干扰的痛点。

Design Tools

Open Source

Developer Tools

GitHub

WiFi规划工具

网络优化

信号模拟

开源

浏览器应用

信道分配

室内覆盖

AP部署

射频衰减

网络可视化

用户评论摘要:用户普遍赞赏其轻量、实用及逼真的材料衰减模型。主要问题与建议包括:期待多层建筑支持、设备密度加权优化、性能加载延迟(尤其特定地区),以及询问模型准确性。开发者积极回应,确认相关功能已在规划中。

AI 锐评

Deconflict的价值核心在于将昂贵的专业无线网络规划能力“平民化”。它并非又一个简单的信号模拟器,其关键突破在于引入了基于真实物理属性的材料衰减参数和实时信道干扰计算,这直接击中了家庭用户和小型企业主“知其然不知其所以然”的痛点——他们能感受到信号差,却无法量化一堵混凝土墙带来的高达12dB的信号损失。

然而,其“免费开源”和“浏览器运行”的双重特性是一把双刃剑。优势在于零门槛、易传播,迅速吸引了被Ekahau等企业级软件价格劝退的用户。但劣势同样明显:受限于浏览器算力和2D射线模型,它无法处理复杂的3D多径效应和家具遮挡,这决定了其天花板是“精准的规划工具”而非“高保真仿真工具”。开发者对此有清醒认知,定位在“合理范围内的实用”。

从评论互动看,产品的成功在于精准解决了“规划”这一环节,但用户已开始提出“运营”层面的需求,如基于设备密度的优化。这揭示了其从“部署工具”向“网络健康管理平台”演进的潜在路径。当前最大的挑战并非功能,而是作为开源项目如何平衡性能优化(如评论提及的加载延迟)与开发可持续性。若能建立社区生态,吸引贡献者共同优化引擎与模型,它有望成为中小场景无线网络规划的事实标准。

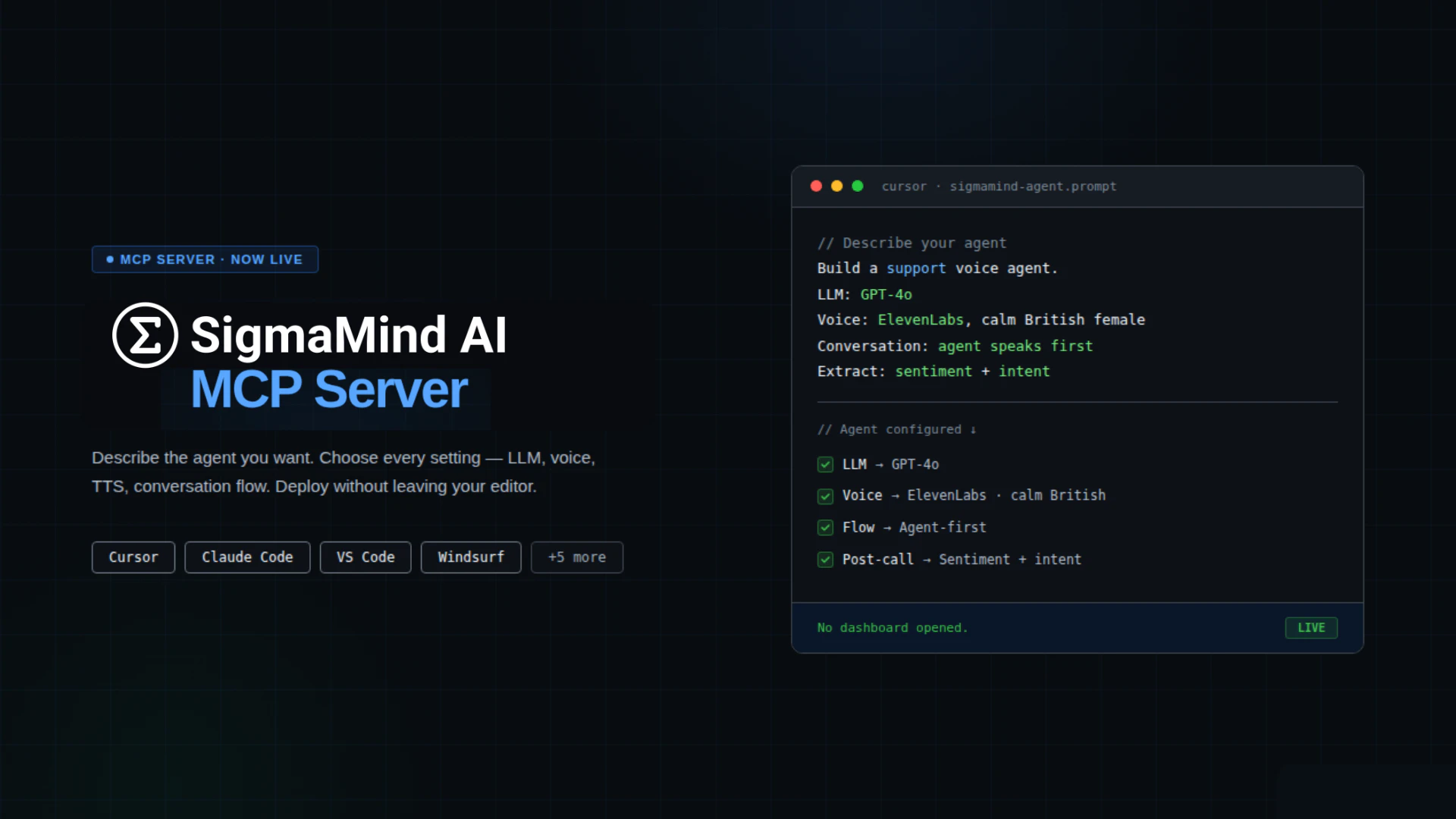

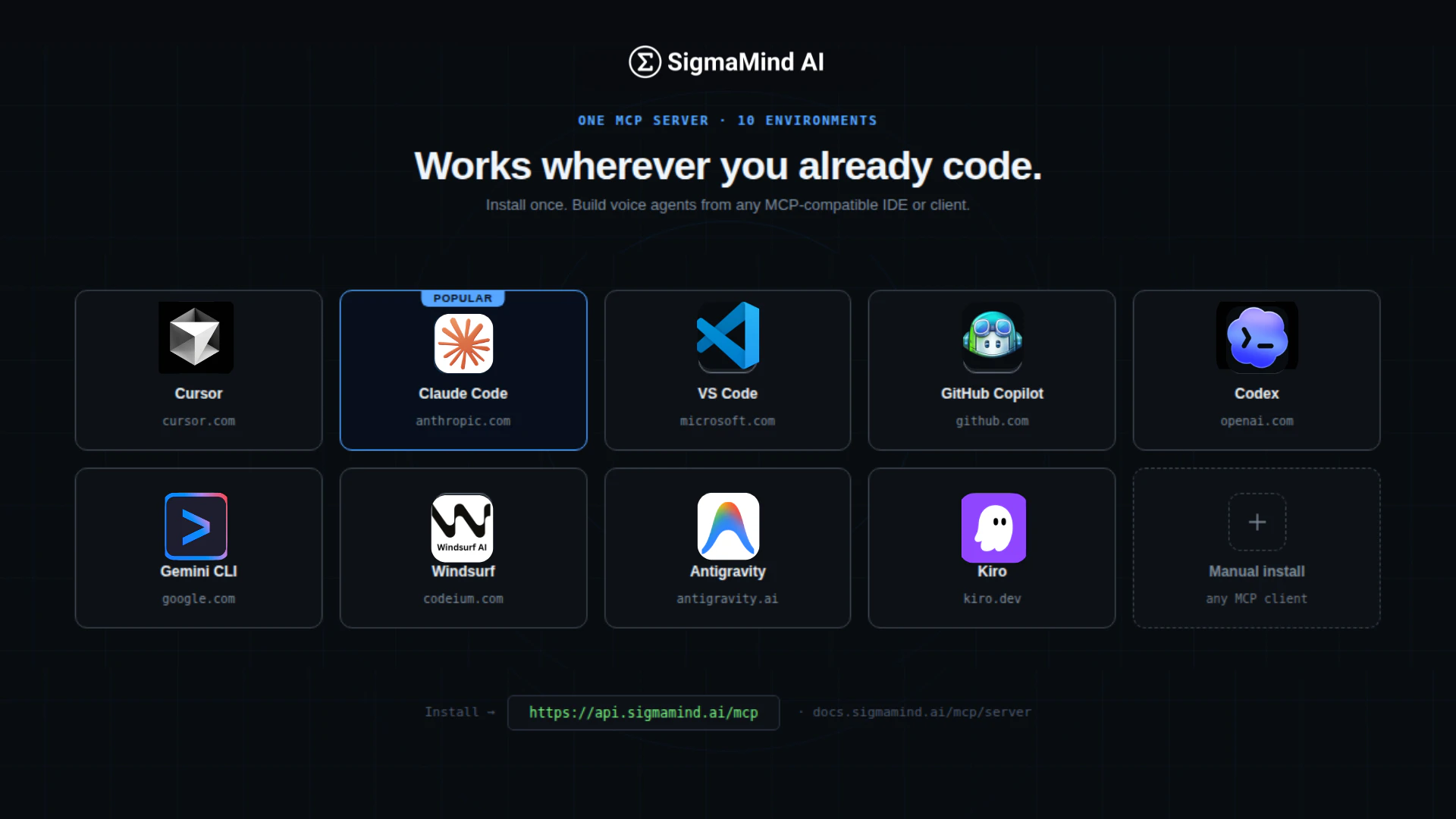

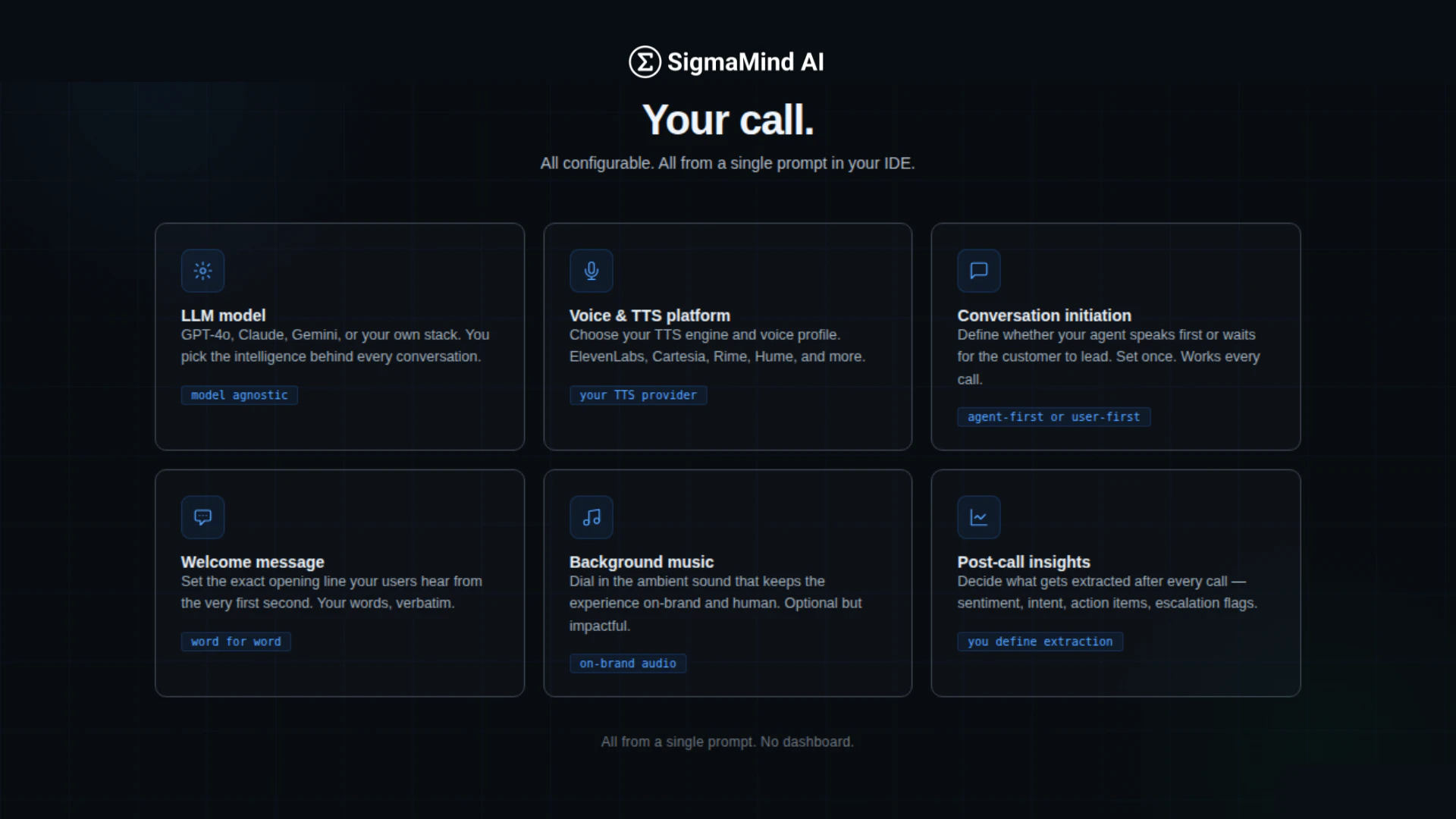

一句话介绍:一款将完整语音AI技术栈(如智能体、通话、号码等)封装为MCP协议工具的服务器,让开发者能在IDE内通过自然语言指令快速构建、测试和部署低延迟语音AI助手,解决了开发者在不同平台间频繁切换、配置流程繁琐的核心痛点。

API

Developer Tools

Artificial Intelligence

语音AI开发平台

MCP协议集成

开发者工具

AI智能体编排

低延迟语音

代码环境集成

自然语言配置

生产级基础设施

电话系统集成

工作流优化

用户评论摘要:用户肯定其简化语音AI“布线”复杂度的价值,认为是从“基础设施”转向“可访问性”的关键。主要疑问集中于:长期定位是停留在MCP协议层,还是转向更结果导向的产品;在并行工具调用时,如何保障可观测性和调试;与自行集成开源方案的核心差异(在于生产级的稳定性和规模化能力)。

AI 锐评

SigmaMind MCP 表面上是一个将语音AI功能引入IDE的MCP服务器,但其真正的颠覆性在于对“开发范式”的改造。它没有选择在模型能力或语音质量上做增量优化,而是精准地切入了AI工程化中最隐秘的痛点——上下文切换与系统集成损耗。开发者不再需要为配置一个语音智能体而在仪表盘、API文档和代码编辑器之间疲于奔命,这本质上是通过协议层将“基础设施”彻底“接口化”。

然而,其当前的定位存在一个显著的张力。产品名和宣传紧扣“MCP”这一技术协议,这固然能吸引早期开发者与技术决策者,但也可能将其禁锢在“为技术而技术”的工具范畴。正如评论所指,当并行工具调用成为常态,复杂的生产调试和观测需求会迅速浮出水面,这远非一个简洁的提示词所能解决。产品的下一阶段挑战,将从“如何便捷地创建”转向“如何可靠地观测、管理和优化”这些分布式的语音智能体。

它的核心价值并非仅仅是“sub-800ms延迟”或“噪声消除”,而是通过深度集成工作流,将语音AI从需要专门运维的“系统级项目”,降维成开发者可在编码流中随时调用的“功能模块”。如果它能成功跨越从“惊艳的演示”到“坚如磐石的工程平台”这道鸿沟,并平衡好底层协议透明性与上层业务成果可见性,它有望定义下一代语音AI应用的开发标准。否则,它可能只是技术栈中又一个精美的“桥接器”。

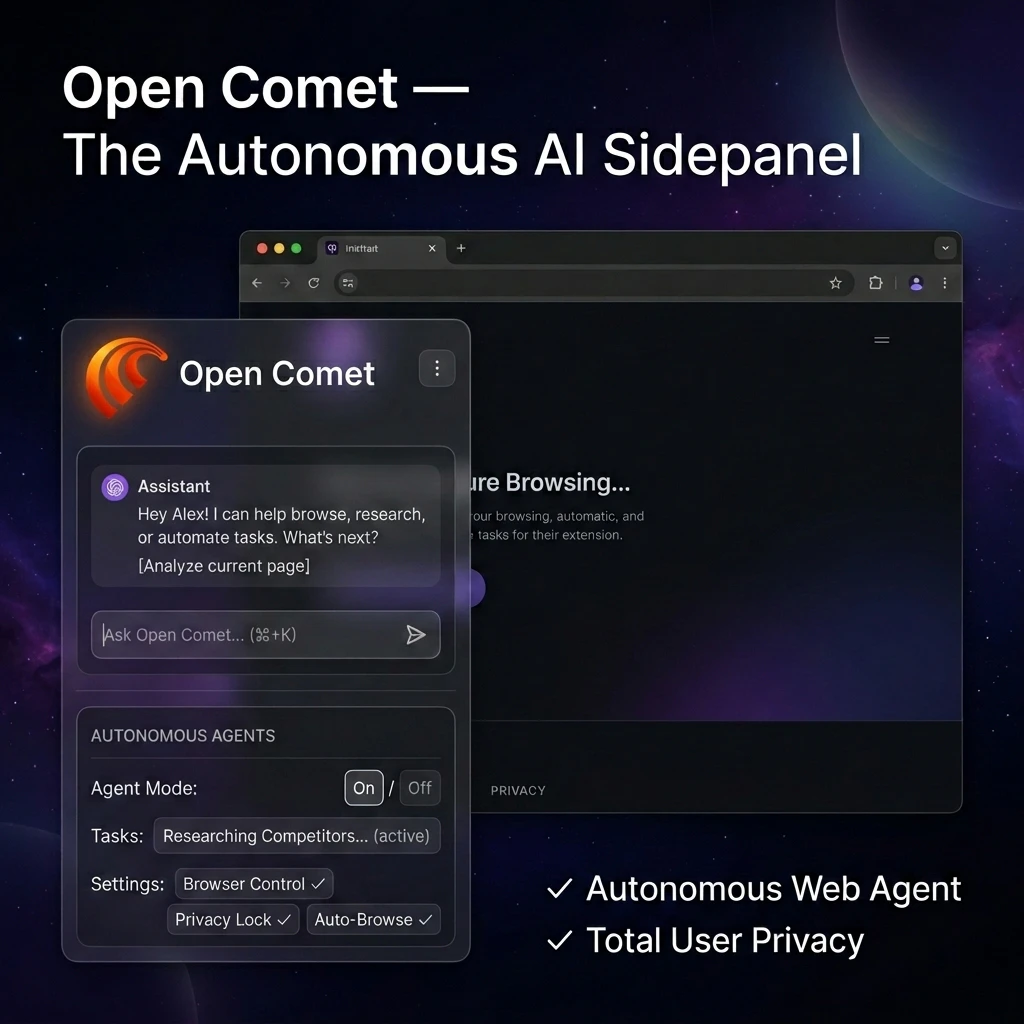

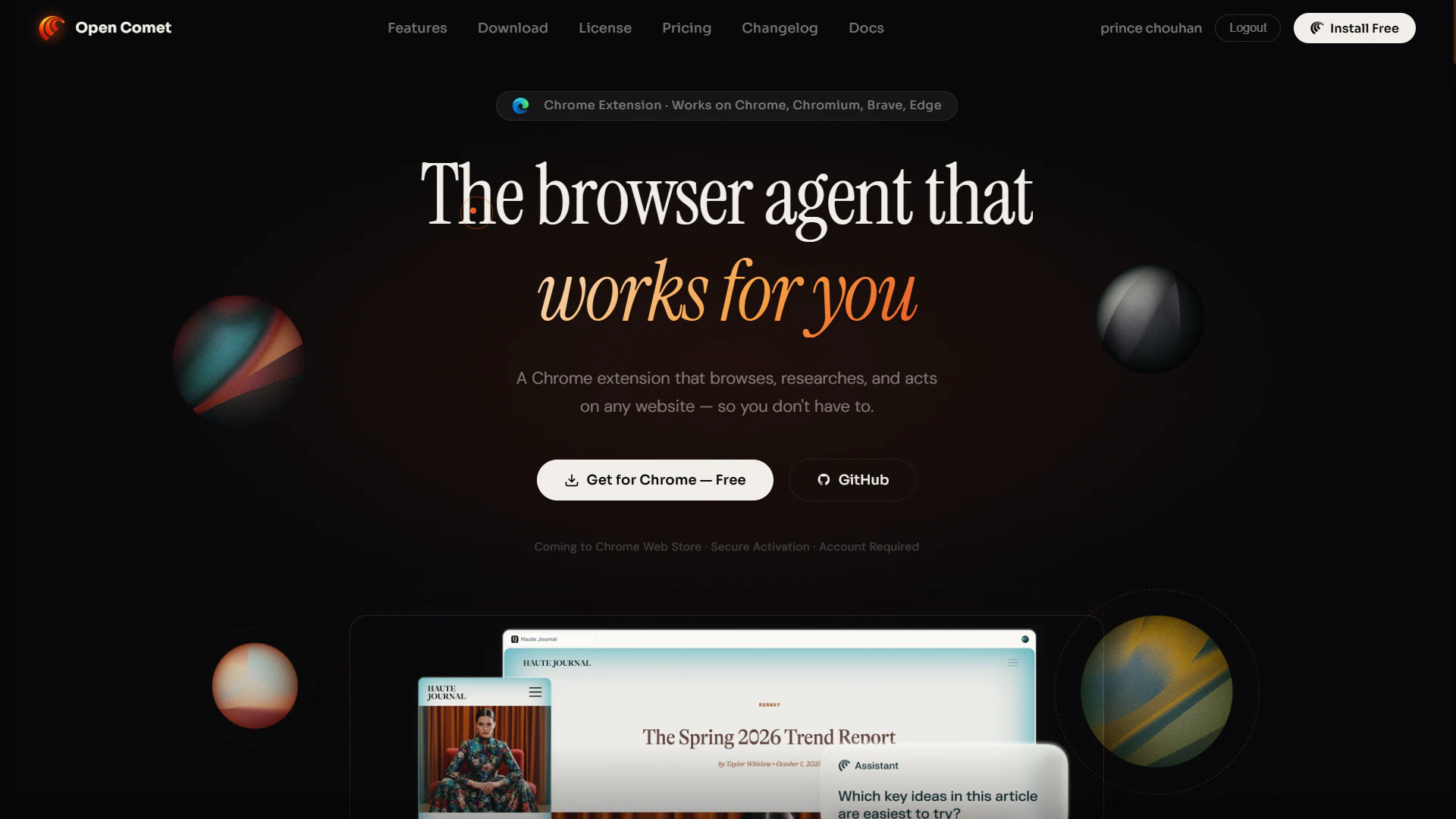

一句话介绍:Open Comet是一款运行在浏览器侧边栏的自主AI智能体,能在用户真实登录的网页环境中自动执行多步骤研究与任务,解决了用户在深度信息搜集和重复性网页操作中需频繁切换、手动操作的效率痛点。

Chrome Extensions

Productivity

Task Management

GitHub

AI浏览器智能体

自主网页操作

本地化隐私

浏览器扩展

多步骤工作流

人机协同

网页自动化

零数据架构

深度研究工具

企业级推理

用户评论摘要:用户普遍赞赏其本地存储的隐私设计,并重点关注其实际能力边界:能否在已登录网站(如LinkedIn)执行操作、如何处理高风险动作、应对会话持久性与反爬机制的稳定性,以及信息来源的交叉验证能力。开发者回复确认了人机协同机制及当前局限性。

AI 锐评

Open Comet的野心在于将“智能体”从聊天框的囚笼中解放,植入真实的浏览器环境。其宣称的“高保真”与“零数据架构”直击当前AI助手的两大软肋:操作环境的隔阂与数据隐私的黑箱。产品价值并非在于其全知全能,而在于它选择了一条务实的路径——不试图重建浏览器或破解反爬,而是依附于用户现有会话,以“人在回路”的方式实现可控自动化。这本质上是将智能体降格为“超级宏”,虽在自主性上做了妥协,却在实用性与安全性上找到了一个现阶段更可能落地的平衡点。

然而,其面临的挑战与所有浏览器自动化工具同源:动态网页的不可预测性、反自动化机制的不断升级,以及多步骤任务中的状态漂移问题。评论中关于“源可靠性验证”和“STORM研究循环”的质疑,恰恰暴露了其从“自动化脚本”迈向“可信研究助手”之间的巨大鸿沟。当前版本更像是一个概念验证,证明了侧边栏智能体的可行性,但其真正的“企业级推理”能力,仍有待于在复杂、长链条的真实业务场景中得到严酷检验。它的出现,标志着AI应用正从“问答”走向“操作”,但距离真正的“自主”,还有一片名为“可靠性”的荒野需要穿越。

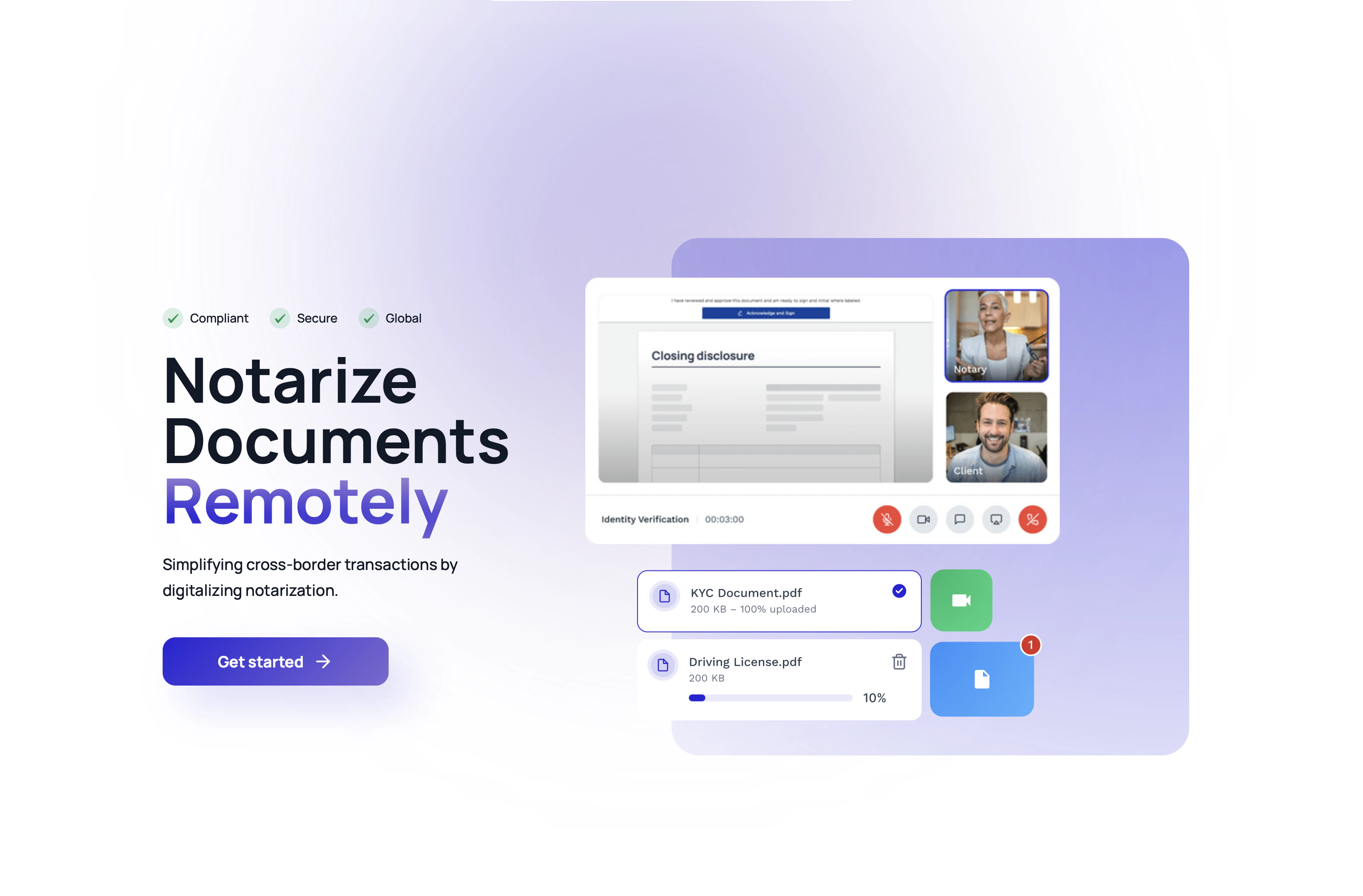

一句话介绍:Legitify 提供完全数字化的合规跨境公证与海牙认证流程,为企业和个人解决了因传统纸质、线下、跨国界公证手续带来的耗时、高成本与进度阻滞的核心痛点。

Productivity

Legal

Artificial Intelligence

跨境公证

数字公证

远程身份验证

电子签名

海牙认证

法律科技

合规工作流

跨境业务

效率工具

用户评论摘要:用户反馈肯定了产品在德国等数字化进程缓慢地区的价值。创始人详细阐述了产品愿景与功能。主要问题集中于支持国家范围、如何同步当地政策变化,官方回复解释了其基于欧盟/英国公证网络与《海牙公约》的合规模式。

AI 锐评

Legitify 瞄准的并非一个新鲜概念——远程在线公证(RON),但其真正的锋芒在于“跨境”与“合规”的交叉点。它本质上是一个精心构建的合规层与连接器:上游整合欧盟、英国等地已获法律许可的公证人网络,下游利用《海牙认证公约》构建跨境效力,将自身塑造成一个标准化的数字中间件。

产品价值不在于技术突破(身份验证、电子签名已属成熟),而在于对复杂法律地缘版图的解构与重组。它解决的痛点是“不确定性”:将传统流程中“寻找合规路径”的隐性成本(研究当地法律、寻找可靠公证人、协调物流)转化为确定性的数字服务。其宣称的50+司法管辖区有效性,正是这种“合规即服务”能力的体现。

然而,其最大的挑战与护城河也在于此。合规是动态的,各国对远程公证的态度和政策处于演变中。产品的扩张速度将严重受限于与各地监管的磨合及公证网络的拓展能力。评论中关于政策同步的质疑直指核心。此外,在非海牙公约成员国或对远程公证持保守态度的关键市场(评论中德国用户的期盼反衬出现实阻力),其效力可能受限,这或许是其当前聚焦欧盟与英国的原因。

总体而言,Legitify是全球化数字浪潮与本地化法律传统之间矛盾的一个优雅解决方案。它并非颠覆法律,而是优化法律的“接口”。其成功与否,不取决于技术流量,而取决于其法律网络扩张的深度与广度,以及在快速变化的监管环境中维持合规稳定的运营能力。它为企业提供的,是让“公证”这个传统摩擦点,从一项需要专门管理的合规项目,退隐为一项可按需调用的基础服务。

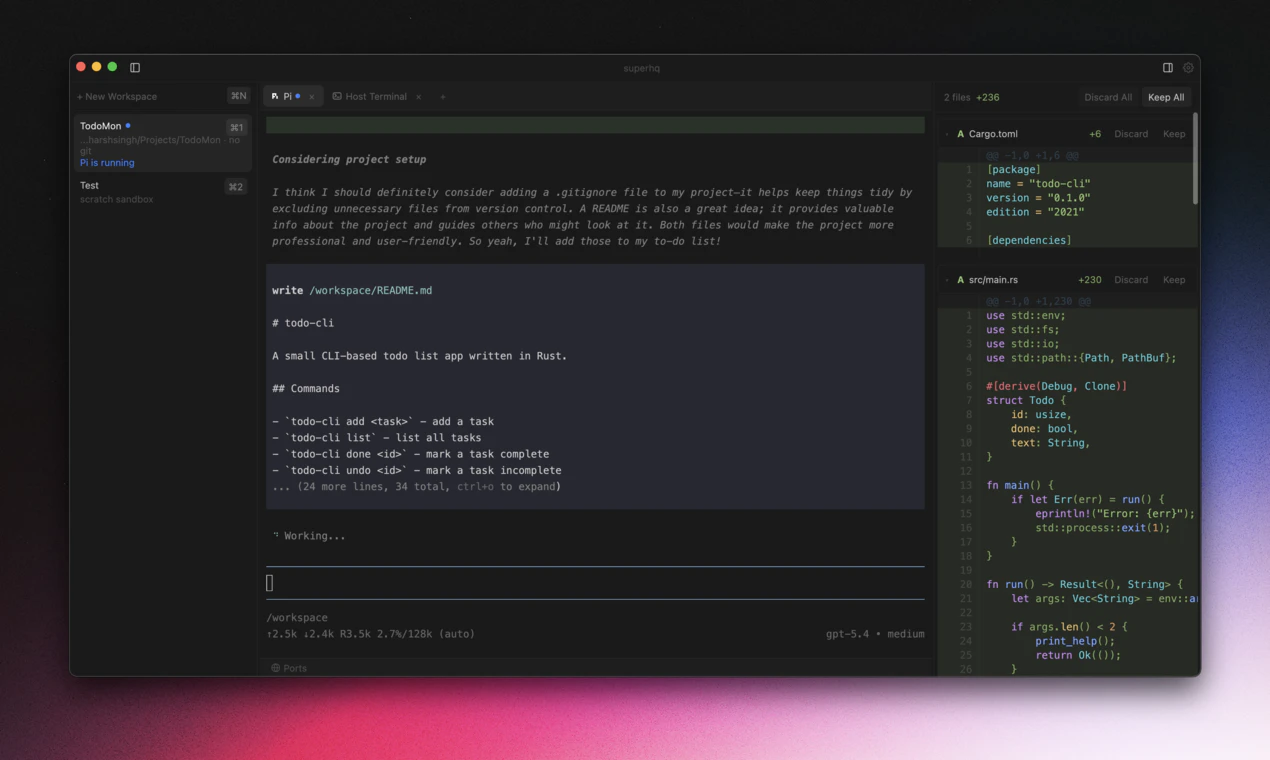

一句话介绍:SuperHQ通过在隔离的微型虚拟机中运行AI编程助手,解决了开发者在本地使用多AI代理时代码安全与系统隔离的核心痛点。

Productivity

Developer Tools

Artificial Intelligence

GitHub

AI编程助手

代码安全

沙箱隔离

微虚拟机

开发工具

代理编排

本地部署

密钥管理

用户评论摘要:用户肯定其将隔离作为系统设计核心的突破性思路,并询问多代理状态同步与差异合并的技术细节。开发者确认当前仅支持macOS,Windows与Linux版本正在社区开发中。

AI 锐评

SuperHQ看似是又一个AI编程工具,但其真正的锋芒在于对“信任”这一根本问题的工程化解构。在AI代理狂热追逐能力的当下,它冷静地将“隔离”从可选项提升为架构基石,这无异于一次范式纠偏。

其价值并非仅仅是“更安全地运行Claude或Codex”。其深层创新在于三重解耦:一是通过微虚拟机实现代理行为与宿主环境的物理隔离,二是通过临时文件系统覆盖层实现过程与结果的分离,三是通过本地认证网关实现密钥与执行环境的逻辑隔离。这构建了一个可审计、可回滚的AI协作框架,将黑箱操作转变为白箱工作流。

然而,其挑战同样鲜明。创始人提到的“多代理对同一仓库状态的收敛”问题,直指分布式版本控制与AI非确定性输出的本质矛盾。此外,从极客工具迈向大众平台,其面临的将是易用性、性能开销与跨平台一致性的经典三角难题。当前macOS优先的策略也揭示了其早期定位——服务于对安全有极致要求的高端开发者。

本质上,SuperHQ贩卖的不是效率,而是控制权。在AI时代,这或许是一种更为稀缺和高级的生产力。

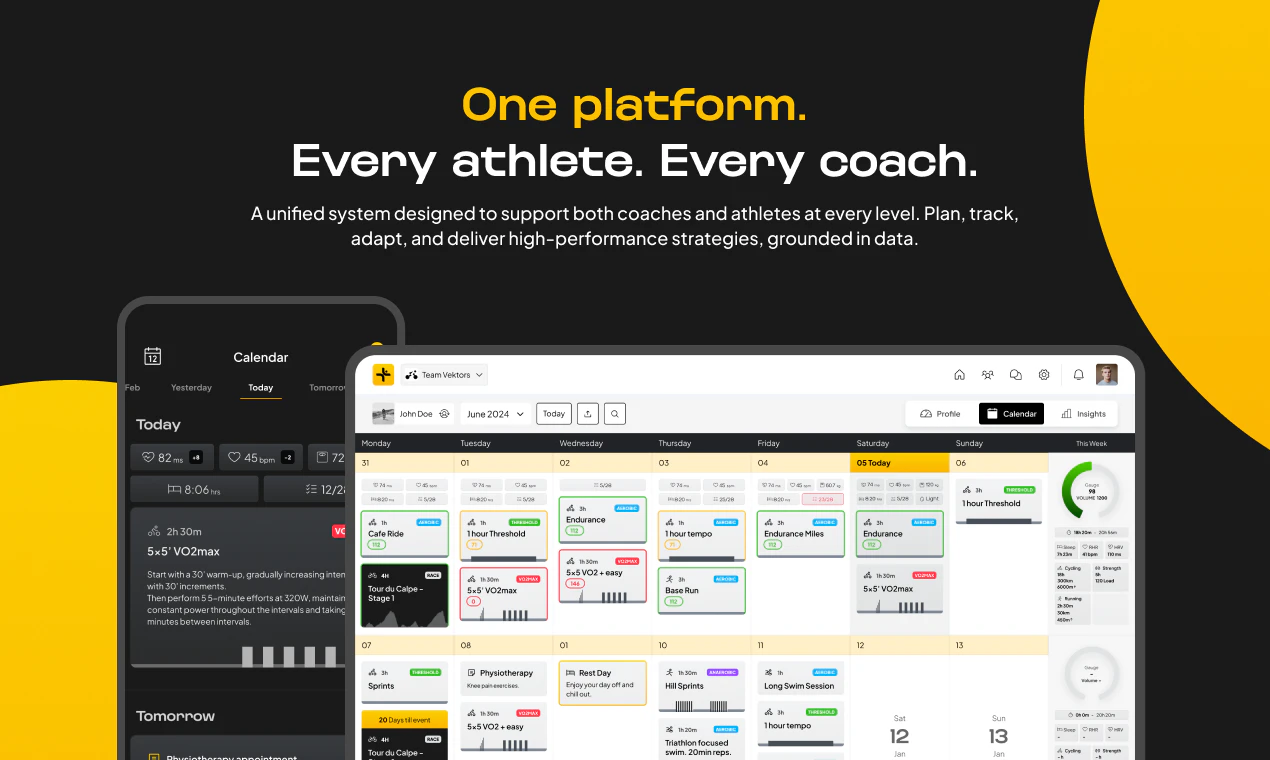

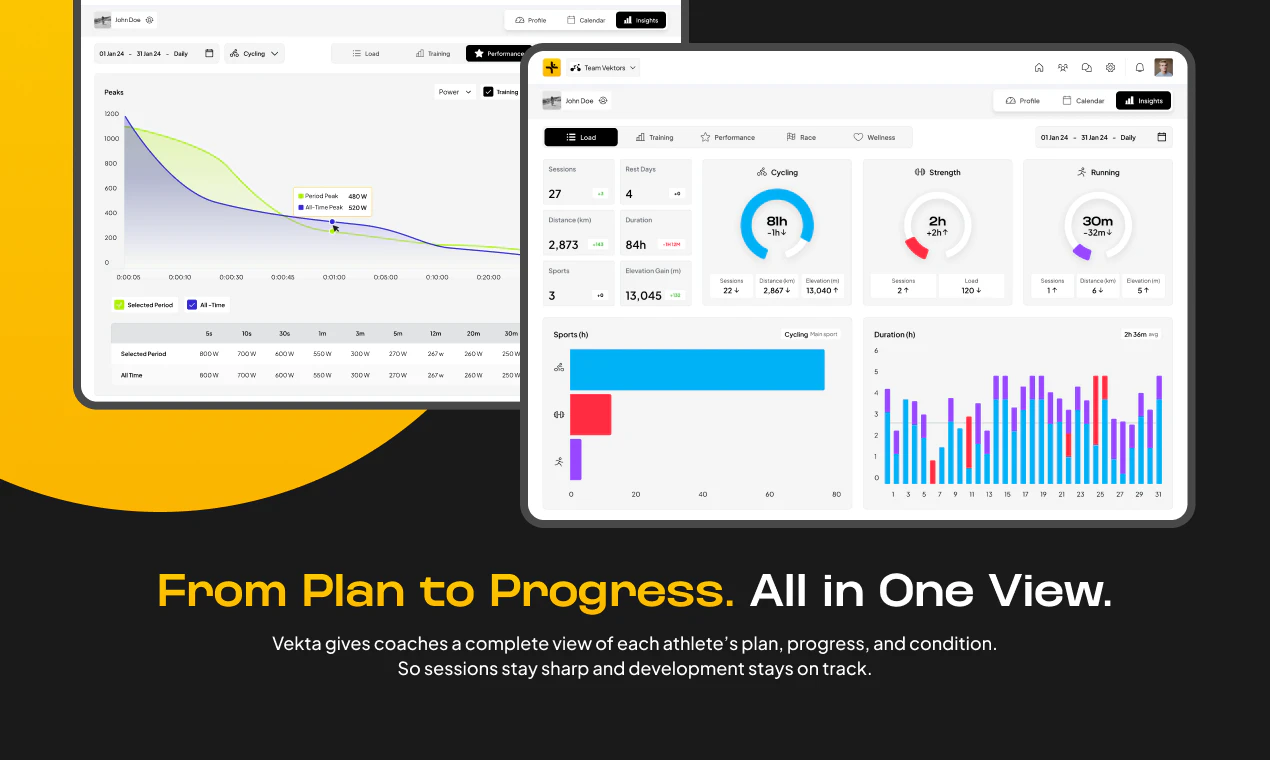

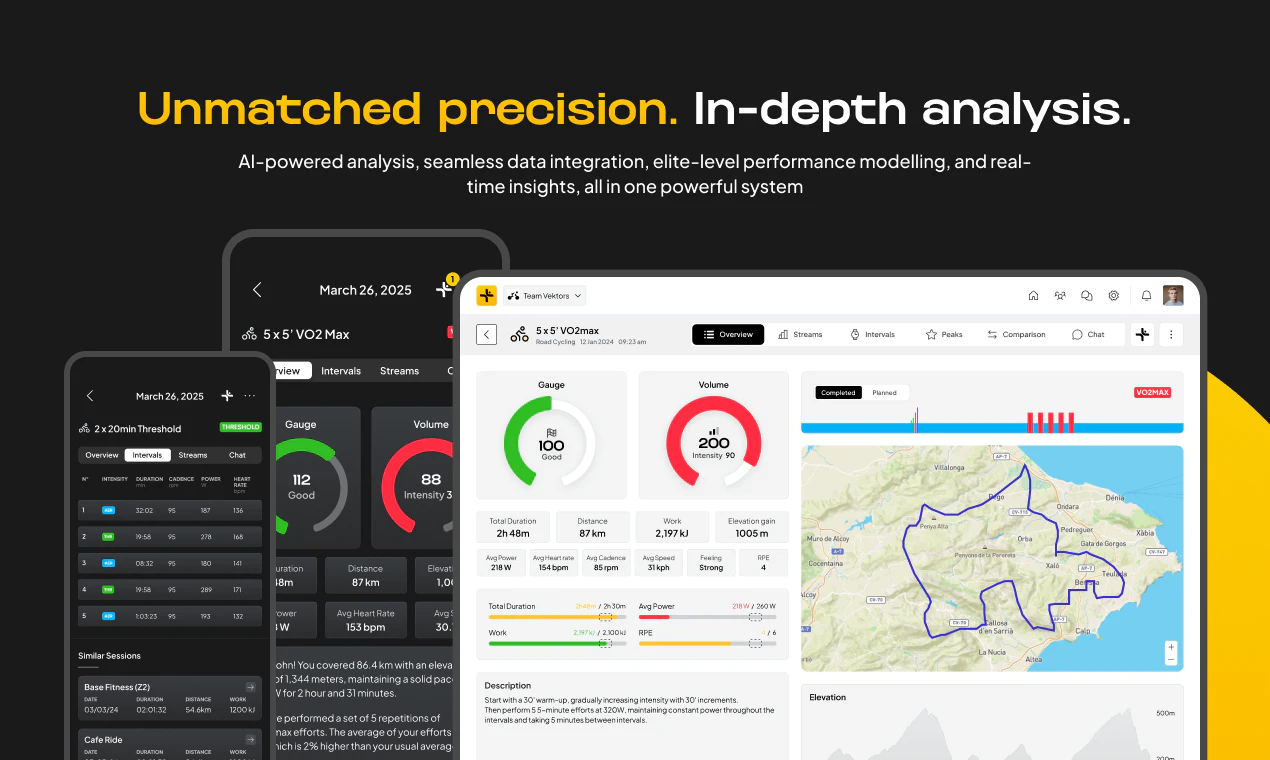

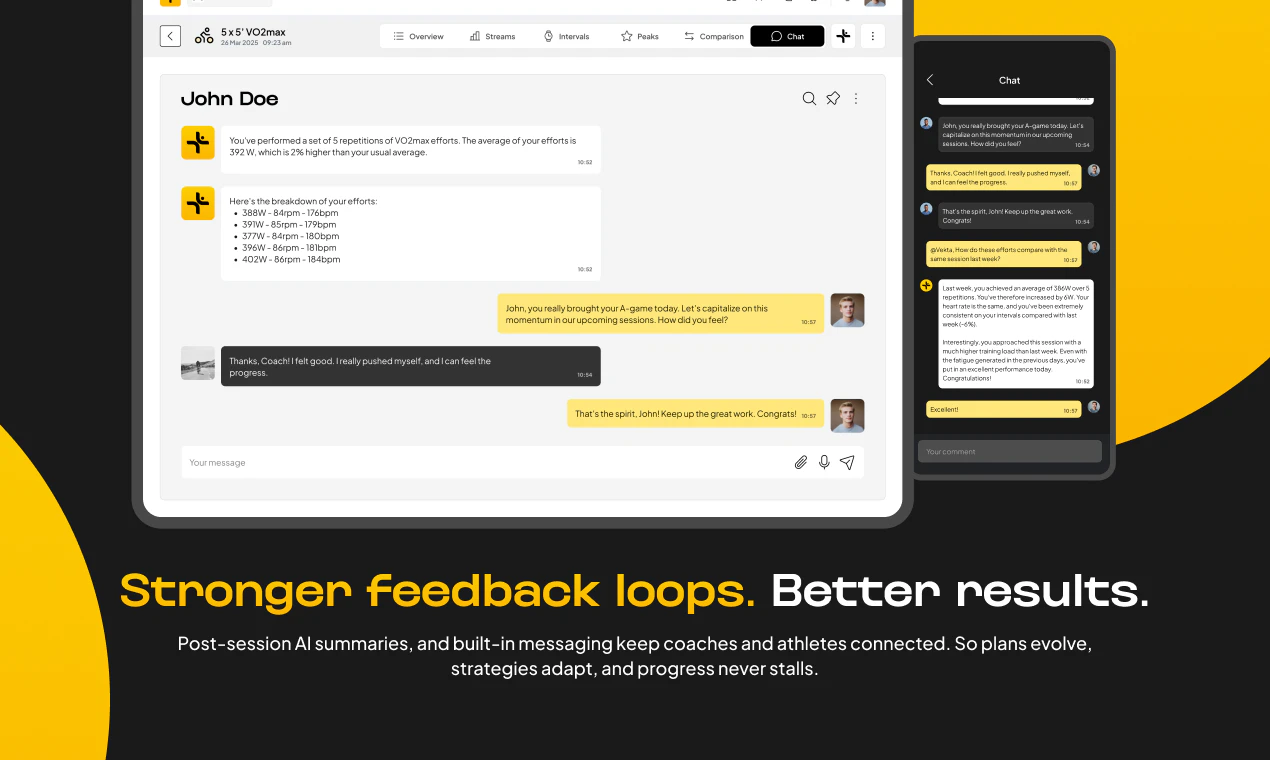

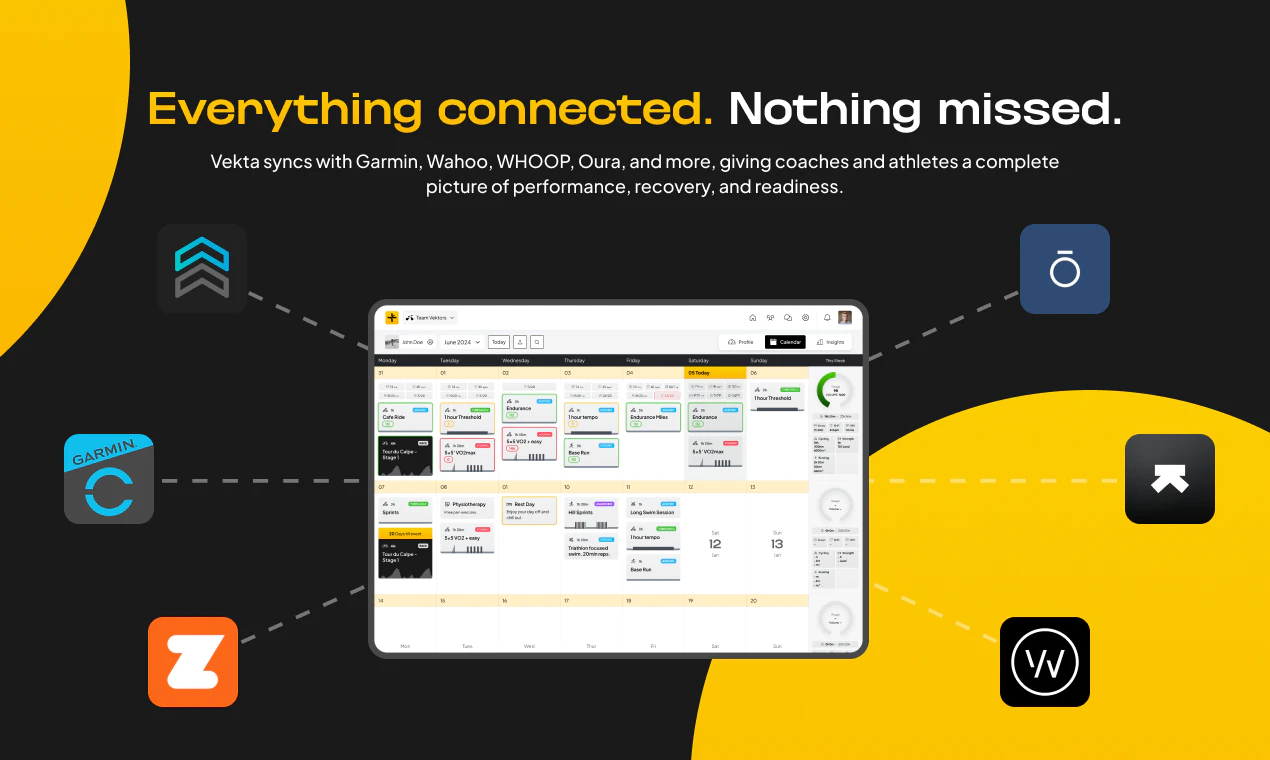

一句话介绍:Vekta是一个将人类专业知识与AI相结合的平台,为耐力运动教练和运动员整合训练计划、分析与洞察,解决数据繁杂却难以转化为有效性能提升方案的痛点。

Health & Fitness

Sports

AI训练平台

耐力运动

数据分析

教练辅助

职业体育

个性化训练

绩效管理

体育科技

SaaS

用户评论摘要:用户肯定其将数据转化为实际性能提升的价值,特别赞赏其构建教练与运动员共享模型的理念。核心建议是关注新用户的“顿悟时刻”设计,以推动在职业队伍之外的广泛采用。

AI 锐评

Vekta切入了一个精准且高价值的细分市场——职业与严肃业余耐力运动训练。其真正的颠覆性不在于简单的“AI+体育”概念,而在于试图重构训练系统中的核心生产关系:将教练的经验智慧与AI的数据处理能力置于一个共享的、动态的“性能模型”之中。这直击了当前运动科技领域的普遍软肋——数据烟囱与决策孤岛。产品标榜的超越FTP、采用临界功率模型等技术点,是服务于严肃运动员的性能“硬核”需求,是其专业性的护城河。

然而,其面临的挑战同样清晰。首先,从“为职业车队服务”到“为普通教练和运动员所用”之间存在巨大的产品与市场匹配鸿沟。职业场景有专职人员消化复杂数据,而大众市场需要极致的简洁与自动化。评论中提及的“新用户顿悟时刻”恰恰点中了这一命门:如何让非顶级用户在第一时间感知到价值,而非被复杂模型吓退?其次,其商业模式可能受限于耐力运动本身的小众市场天花板。虽然客单价高,但规模扩张需要将产品逻辑泛化到更广泛的健身或健康领域,这可能与其“专业性能”的定位产生冲突。最后,作为数据驱动平台,其长期价值取决于数据的闭环与模型的进化,这需要庞大的用户基数和持续的行为数据输入,在早期如何打破这个循环是关键。

总体而言,Vekta展现了一个专业、深度的产品方向,但它的成功将不取决于其技术或概念有多先进,而取决于其能否在保持专业深度的同时,完成从“职业装备”到“专业工具”的优雅降维,找到那个关键的、可规模化的用户体验支点。

一句话介绍:deckpipe.dev 是一款“智能体优先”的幻灯片渲染引擎,它允许AI智能体通过JSON描述幻灯片内容并自动渲染成演示文稿,解决了在AI协作场景中快速、灵活生成结构化视觉材料的痛点。

Artificial Intelligence

AI智能体工具

幻灯片自动生成

MCP服务器

无头渲染引擎

开发者工具

JSON驱动

人机协作

开源生态

效率工具

内容编排

用户评论摘要:用户肯定其“将智能留给智能体”的极简设计哲学,认为能节省时间。主要反馈集中在样式控制力上,询问用户与智能体在结构和样式上的权限划分。创始人回应目前样式基础,强调与智能体协同迭代内容的工作流。

AI 锐评

deckpipe.dev 表面是一个幻灯片工具,实质是AI智能体时代的“标准化输出接口”。其真正价值不在于渲染能力本身,而在于通过定义一种极简的JSON协议,将幻灯片这种高度非结构化的创意产物,变成了AI智能体可理解、可操作、可生成的标准化数据对象。

这步棋看似简单,实则犀利。它精准地预判了未来AI工作流的痛点:当每个个体都拥有一个主智能体时,核心矛盾将从“如何让AI执行任务”转向“如何让AI与复杂的人类工具链对话”。市面上一众SaaS忙于在自家产品上“螺栓式”地集成AI功能,导致智能碎片化、上下文割裂。deckpipe反其道而行,主动做“笨”的、被动的渲染层,将所有的内容决策与逻辑推理权交还给中心化的智能体。这符合技术演进的底层逻辑——专业化分工。AI负责思考和结构化,专用工具负责高保真呈现。

然而,其挑战也同样明显。首先,其市场天花板与“智能体优先”范式的普及速度强绑定,目前仍属早期开发者需求。其次,将设计控制权过度让渡给AI,在当前AI审美与设计一致性尚不成熟的阶段,可能难以满足专业级演示需求,使其易被定位为“快速草稿工具”。它的成功,将不取决于自身功能多强大,而取决于它能否成为AI智能体世界中最流行、最通用的那款“幻灯片MCP服务器”,构建起生态护城河。这是一场关于标准与协议的豪赌。

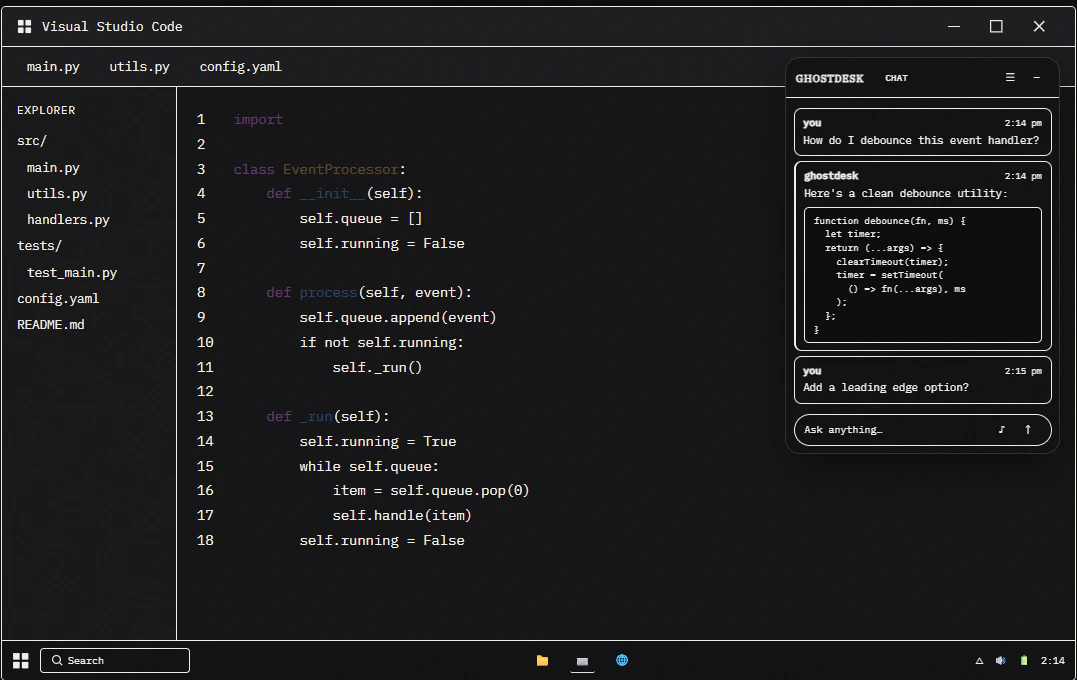

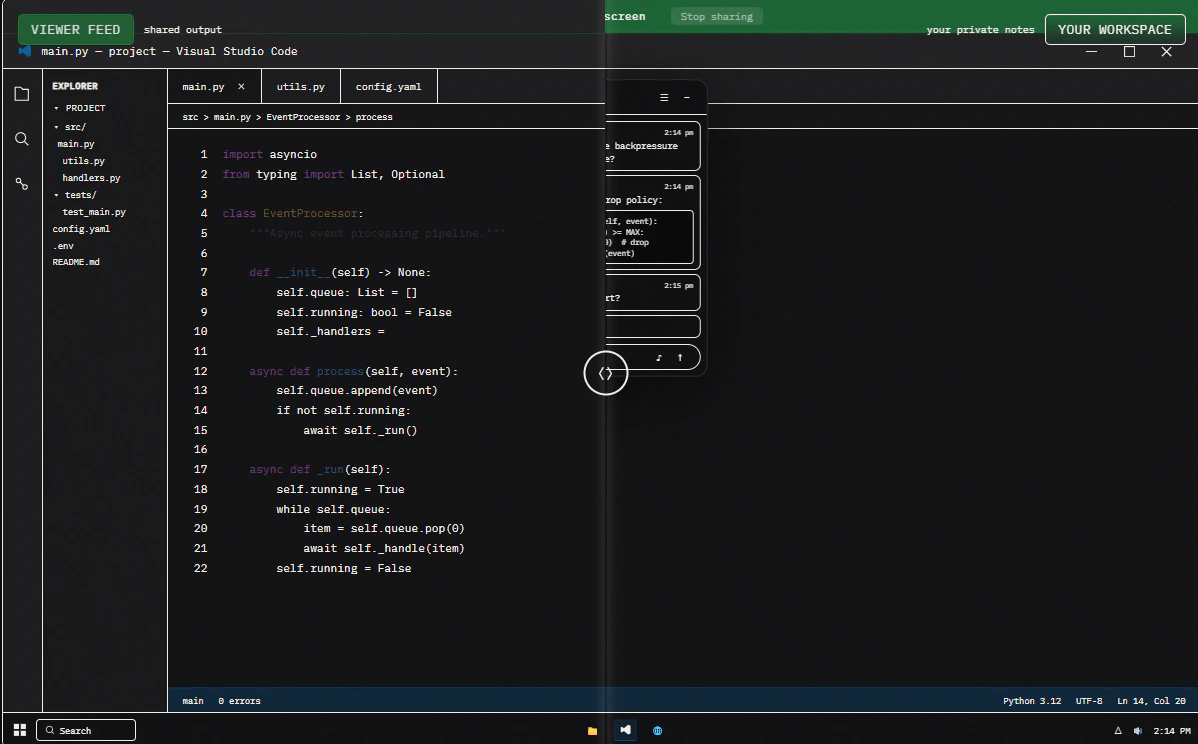

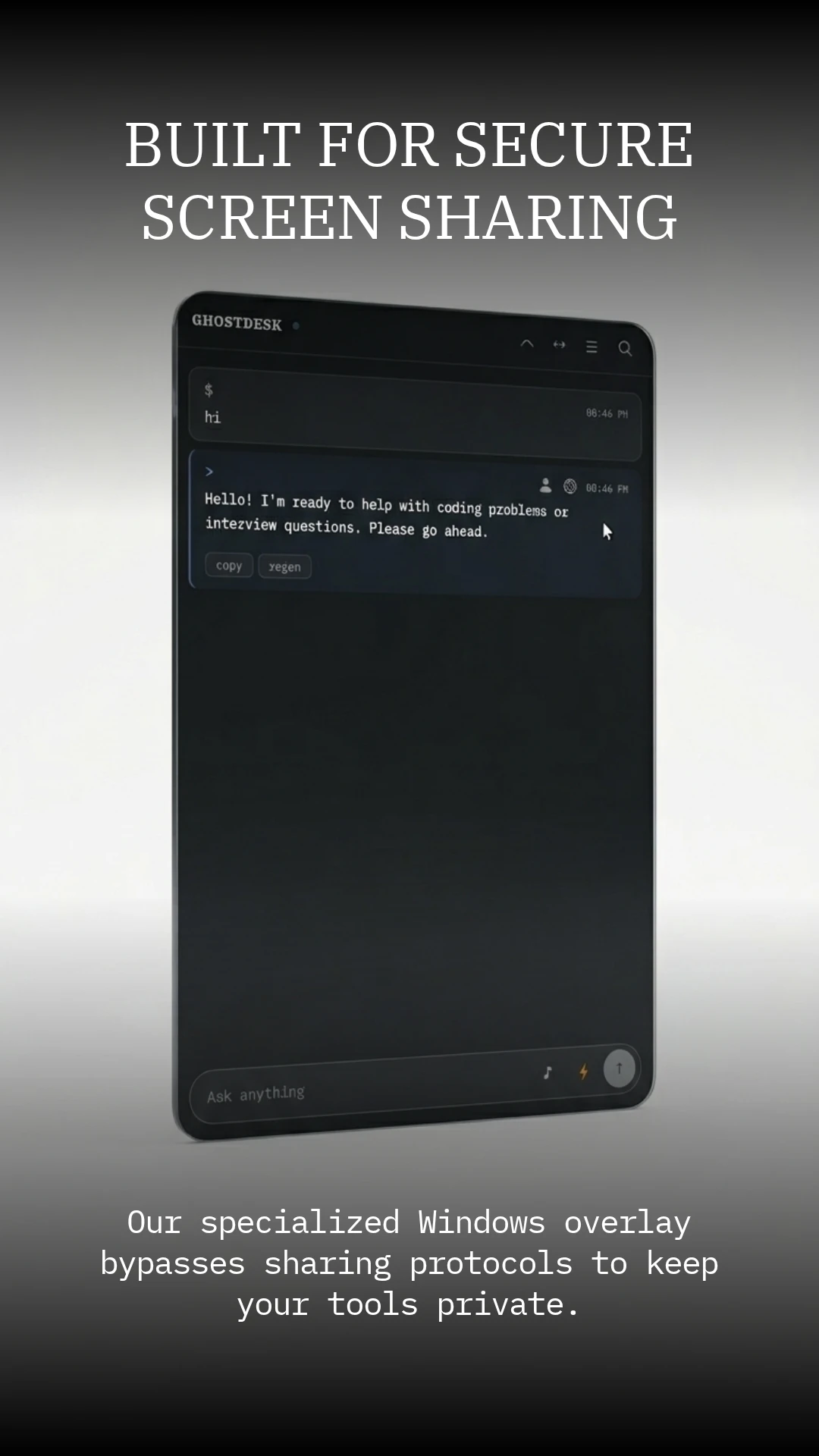

一句话介绍:GhostDesk是一款完全隐身的桌面AI助手,在面试、高利害会议等高压场景中,为用户提供实时转录、AI答案和屏幕分析支持,同时规避监考和录屏软件的检测,解决用户因紧张遗忘或需要即时信息支援的痛点。

SaaS

Developer Tools

Artificial Intelligence

AI助手

实时转录

屏幕OCR

隐身模式

防检测

面试辅助

会议效率

高压场景

桌面应用

生产力工具

用户评论摘要:用户关注与竞品Cluely的对比,开发者回应在延迟、OCR、隐身模式和价格上有优势。用户询问技术实现细节(虚拟麦克风驱动或系统输出)。有评论建议品牌定位应从“隐身”转向“高压场景下的优势赋能”。

AI 锐评

GhostDesk 2.0精准切入了一个灰色但需求强烈的利基市场:在受监控或高压力环境中寻求不对称信息优势的用户。其核心价值并非简单的“AI副驾驶”,而是一个“数字护身符”,旨在缓解用户在关键场合下的表现焦虑与认知过载。

产品介绍的“隐身”特性是一把双刃剑。从技术角度看,针对专业监考工具的规避能力是其最犀利的卖点,也构成了短期壁垒。然而,这也将产品置于道德与合规的模糊地带,可能限制其主流化发展和长期品牌形象。评论中关于“定位优势而非隐身”的建议极为中肯,这暗示了产品真正的用户心理诉求:他们需要的不是“作弊工具”,而是一种能提升自信、保障稳定发挥的“增强层”。

从功能集成看,语音转录、OCR与AI应答的闭环设计确实针对了实时交互场景的痛点。但深度挑战在于:在完全隐身的前提下,如何提供流畅、不干扰主任务的交互体验?目前的“不可见”模式可能将交互成本转移到了用户的注意力分配上,如何确保AI提示本身不成为新的认知负担,是设计上的关键。

此外,其商业模式高度依赖于特定场景(如在线面试、考试)的持续存在与监管技术的不对称性。一旦监管技术升级或平台规则收紧,其核心优势可能迅速消退。因此,GhostDesk的长期路径,或许需要从“规避检测”转向“合规赋能”,例如专注于为练习、复盘或无障碍支持等正当场景提供增强服务,从而建立更可持续的产品生命线。

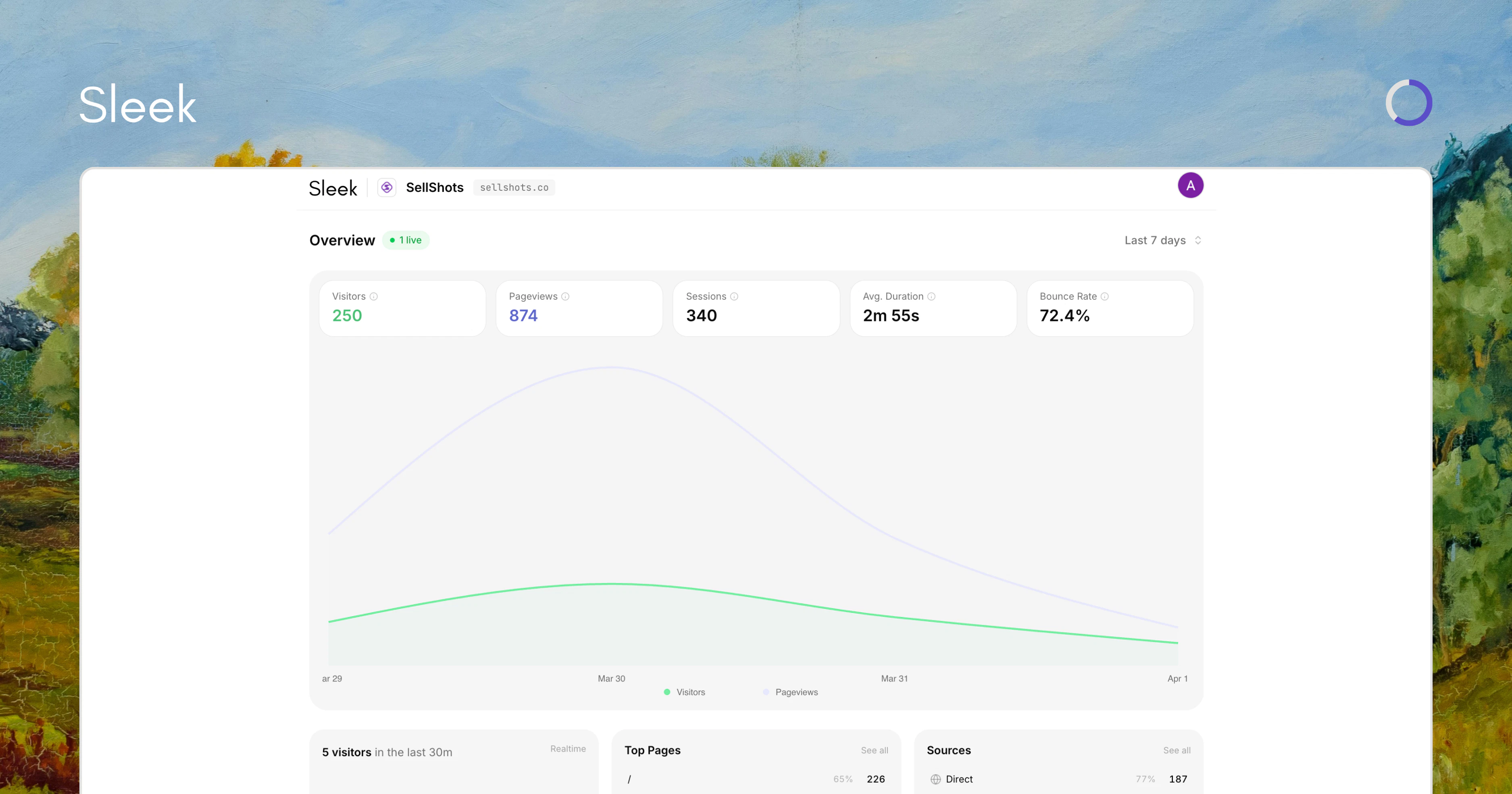

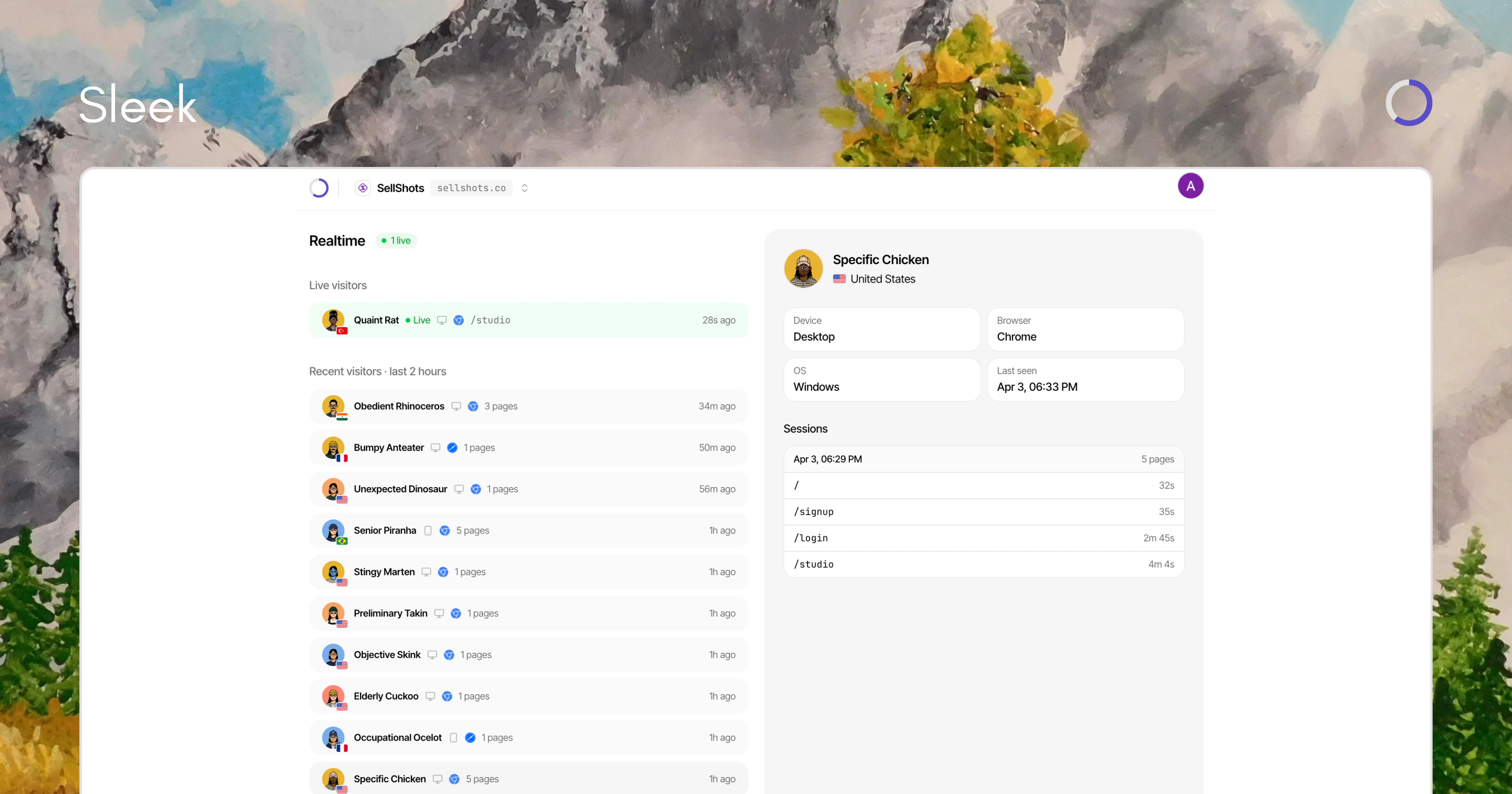

一句话介绍:一款将Stripe实时收入数据与网站流量数据并排展示的分析工具,解决了市场运营人员无法快速、直观地判断流量波动是否真正带来收入的痛点。

Analytics

SaaS

Developer Tools

收入归因分析

实时数据仪表板

Stripe集成

营销效果分析

SaaS工具

数据可视化

隐私安全

轻量级应用

效率工具

用户评论摘要:用户普遍认可产品核心价值:将收入与流量实时关联,流程轻量即时。主要反馈集中在功能深度上:询问数据粒度(能否追踪到具体渠道或活动),以及对产品未来扩展时如何保持简洁性的关切。

AI 锐评

Sleek Analytics 推出的这款产品,精准地刺中了现代增长团队的一个经典盲区:数据孤岛带来的决策迟滞。它没有选择构建一个庞杂的全能BI系统,而是扮演了一个极为锋利的“手术刀”角色——单点切入,直连Stripe与流量数据,实现近乎零延迟的收入可视化。其宣称的“Restricted key only”和“Privacy-first”是切入企业级市场的聪明策略,用技术手段降低了安全顾虑的接入门槛。

然而,其真正的挑战与价值天花板也在此刻显现。从评论中用户的追问可以看出,当前版本提供的可能还是一个“宏观真相”。一旦用户尝到甜头,必然会要求更“微观的归因”:这笔收入是来自自然搜索、付费广告还是网红推广?这要求产品必须向后整合更复杂的归因模型和数据管道,向前则可能需对接Google Analytics、Meta Ads等多平台。这与评论中担忧的“如何保持轻量”形成了核心矛盾。

因此,这款产品的未来,取决于团队在“功能深度”与“体验简洁”这个经典对立面上所做的权衡。它可能成为一款优雅的、面向中小团队或初创公司的核心监控仪表盘,也可能以此为楔子,逐步侵入更广阔的商业智能与分析市场。它的出现本身,就是对那些操作笨重、设置复杂的传统分析工具的一次犀利批判。

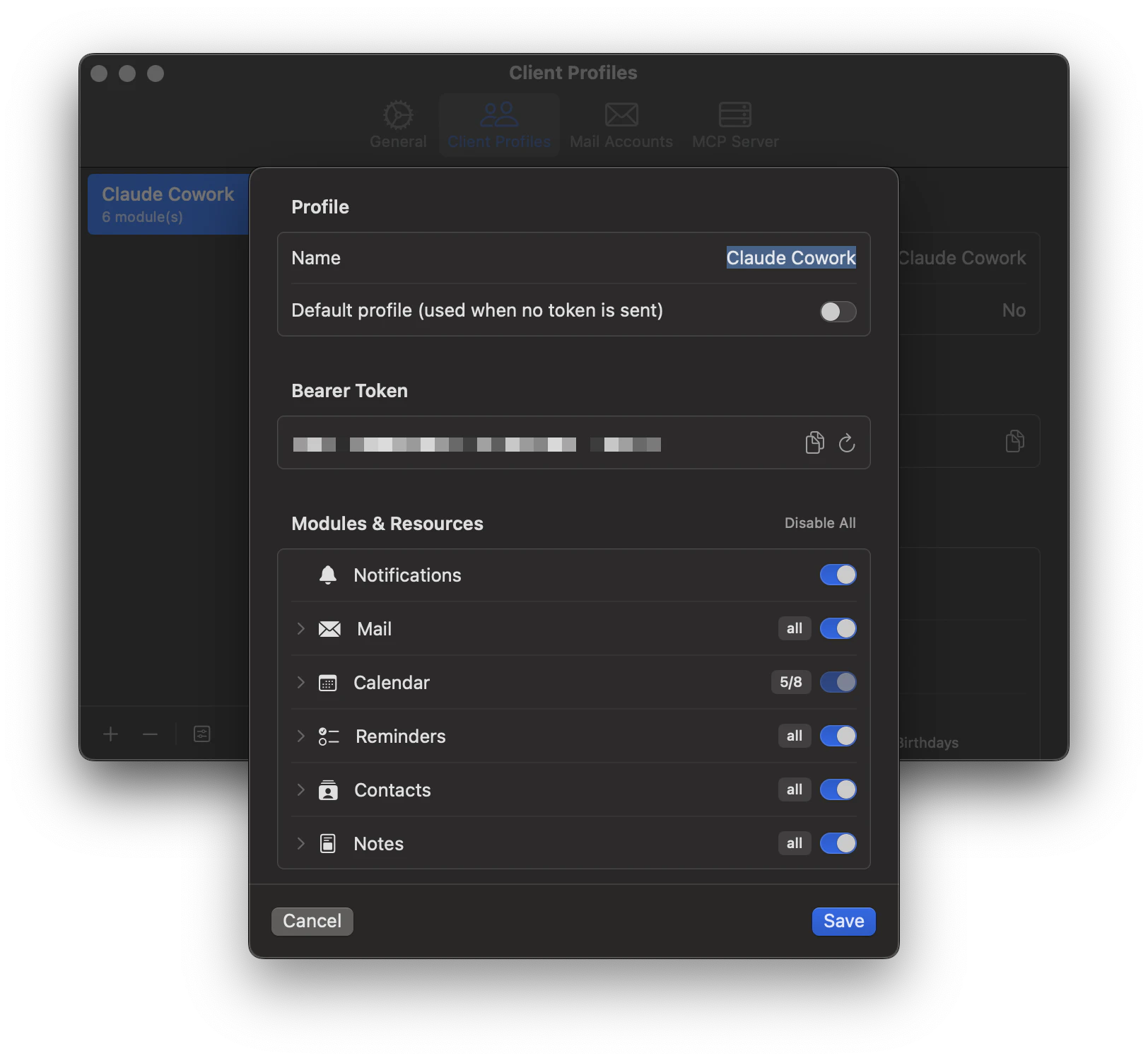

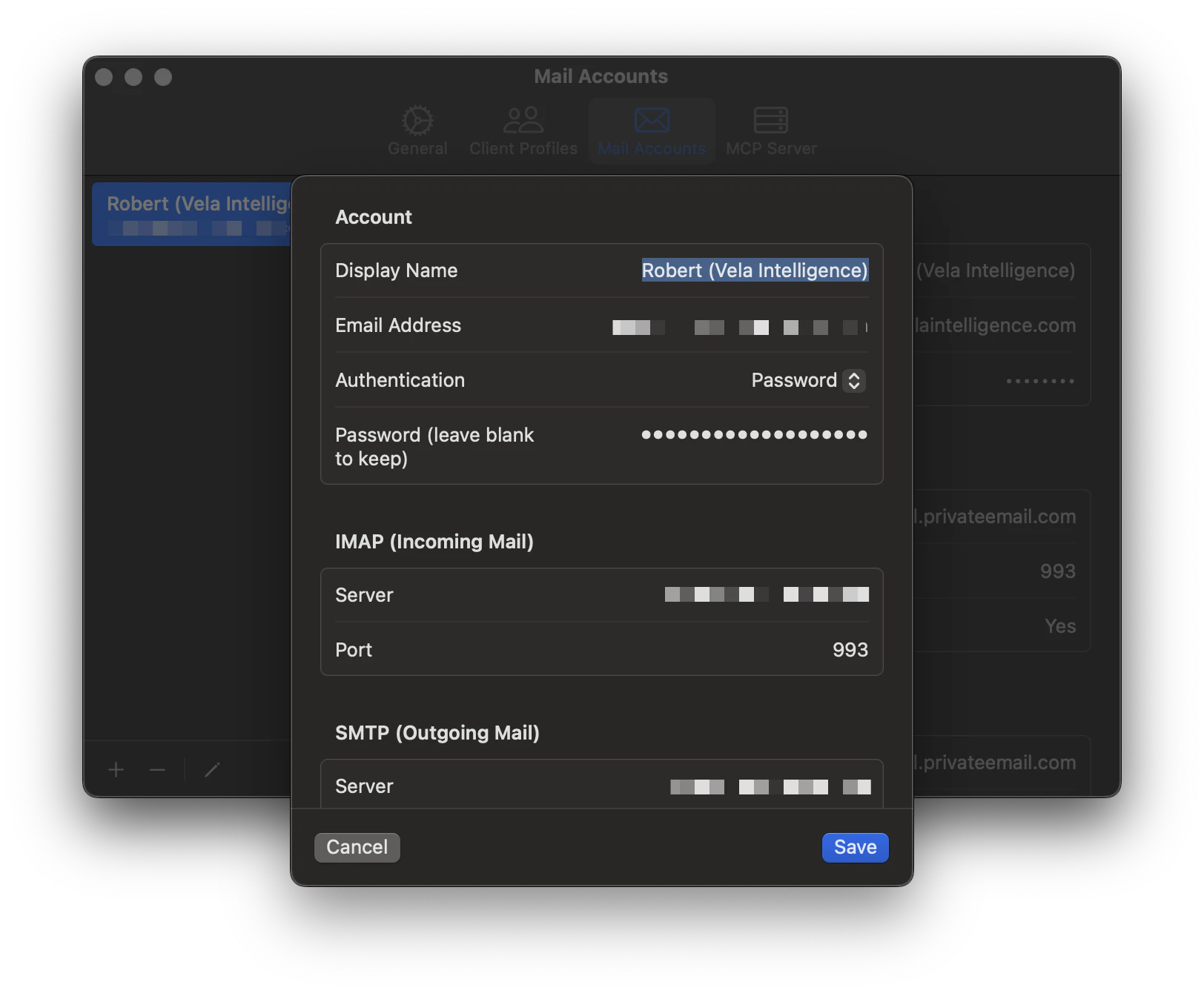

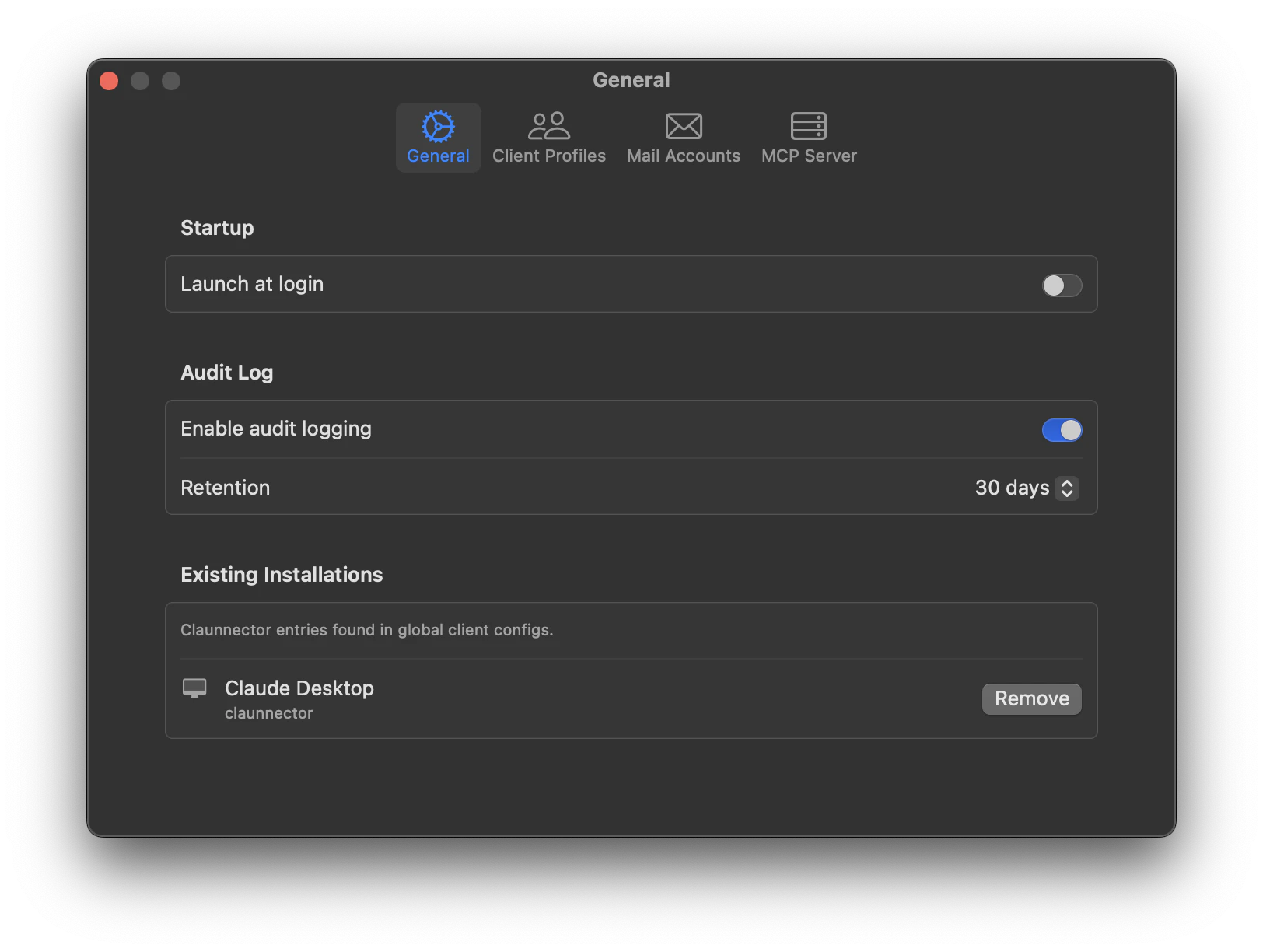

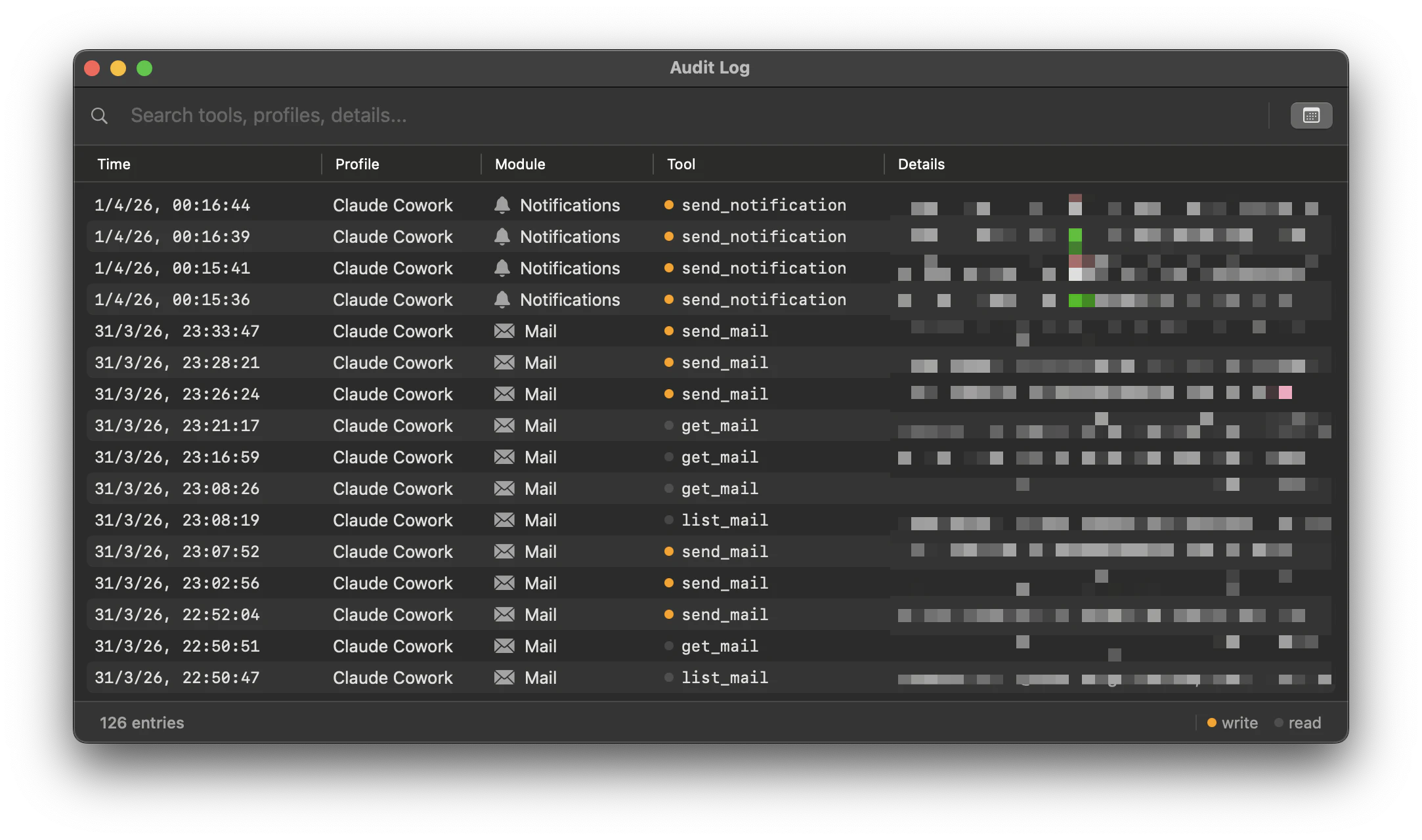

一句话介绍:Claunnector是一款macOS菜单栏应用,通过本地运行MCP服务器,让Claude等AI工具能安全读写本地邮件、日历等数据,解决了用户在追求AI效率时担忧隐私泄露的核心痛点。

Email

Productivity

Menu Bar Apps

AI工具集成

本地化部署

数据隐私

macOS生产力工具

MCP服务器

菜单栏应用

权限管理

审计日志

无云化

一键安装

用户评论摘要:开发者自述产品源于个人对AI无法安全交互本地应用的痛点。用户肯定其本地化运行解决了信任问题,同时指出产品功能强大但略显技术化,建议优化新用户引导流程以更快实现“顿悟时刻”。

AI 锐评

Claunnector看似是又一个AI集成工具,但其真正价值在于精准切中了当前AI应用生态中最脆弱的信任环节。在各大厂商竞相将用户数据上传云端进行模型训练的时代,它反其道而行之,以“数据永不离开本地”作为核心卖点,这并非简单的功能差异化,而是对AI隐私焦虑的一次外科手术式打击。

产品通过本地MCP服务器架构,将AI能力从“云上黑箱”转变为“本地可控工具”,其客户端权限配置和完整审计日志功能,实质上是在用户与AI之间建立了一套可追溯的问责机制。这解决了专业用户(尤其是处理敏感信息的商务、法律人士)既想利用AI提升效率,又极度忌惮数据泄露的核心矛盾。

然而,其挑战同样明显。首先,重度依赖本地算力可能限制处理复杂任务的能力,与云端AI的规模优势形成天然鸿沟。其次,“仅Claude自动连接”的现状暴露了其对单一生态的早期依赖,能否成为跨AI平台的通用数据桥梁存疑。最后,正如用户所指出的,平衡“强大控制力”与“用户易用性”将是其破圈关键——隐私爱好者青睐的复杂配置,恰恰是大众用户的使用门槛。

本质上,Claunnector不是AI能力的创造者,而是AI与本地环境之间“受信任的中间人”。它的出现标志着AI应用正从“功能竞赛”进入“信任基建”的新阶段。如果它能成功教育市场并建立标准,其价值将远超一个工具,而可能成为未来桌面AI交互的基础协议层。但这条路的前提是,它必须从极客的“玩具”平稳走向大众的“工具”。

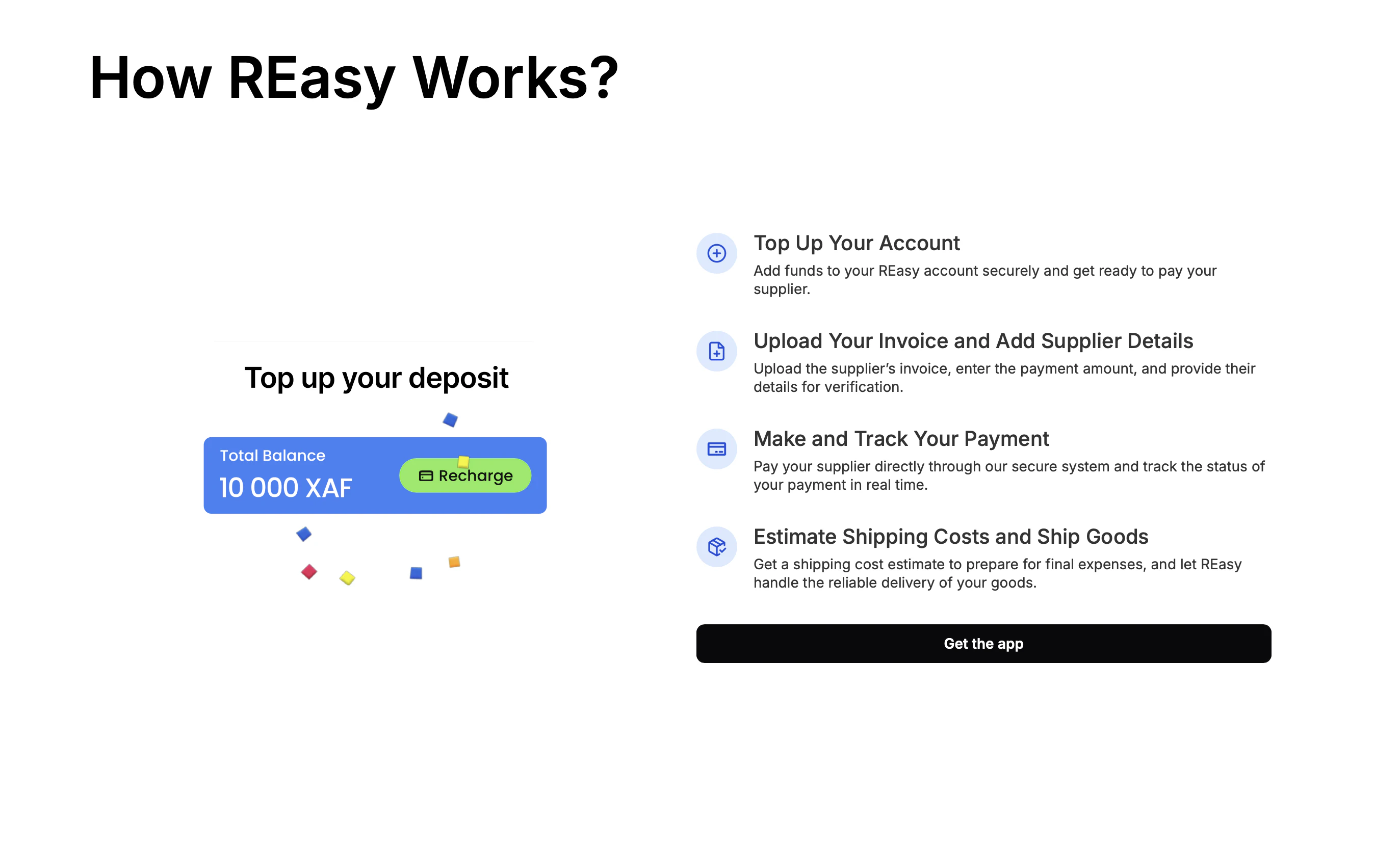

一句话介绍:REasy为非洲小型进口企业提供一站式操作系统,在跨境贸易场景下,通过整合供应商审核、支付、物流与清关等环节,解决供应链碎片化导致的利润侵蚀痛点。

Payments

跨境贸易操作系统

非洲中小企业

B2B进口

SaaS平台

供应链整合

跨境支付

物流管理

海关清关

新兴市场

数字化转型

用户评论摘要:目前提供的评论列表为空,无法获取用户直接反馈。建议后续通过用户访谈或市场调研收集关于产品易用性、服务覆盖范围、费用结构及本地化适配等维度的具体意见。

AI 锐评

REasy瞄准了一个极具潜力却长期被主流SaaS厂商忽视的利基市场——非洲中小进口商。其宣称的“操作系统”定位,本质上是对跨境贸易中“脏活累活”的数字化整合,野心不小。非洲跨境贸易的痛点并非秘密:碎片化的服务商、高昂的信任成本、复杂的清关手续,这些都精准地吞噬着本就微薄的利润。REasy若能真正打通从寻源到交付的全链条,其价值将远不止于工具,而是成为贸易基础设施的一部分。

然而,其面临的挑战同样尖锐。首先,“全链路”意味着要与无数本地化、非标准的环节搏斗,从喀麦隆起步能否形成可复制的模式存疑。其次,支付与金融是核心,但在外汇管制普遍、金融基建薄弱的地区,其解决方案的合规性与稳定性将经受严峻考验。最后,作为平台,其核心壁垒在于双边网络效应——吸引足够多的进口商与供应商。在信任缺失的市场,冷启动难度极大,可能需要重度线下服务介入,这又将拖慢扩张速度与利润率。

产品标语中的“操作系统”一词颇具战略考量,意在彰显其基础性与不可或缺性。但现阶段,它更可能是一个“重度垂直的工作流协同平台”。其真正的试金石在于:能否将线下极度复杂的贸易流程,抽象为线上足够简单、可靠且低成本的标准化产品。若成功,它不止是一个APP,而是成为非洲跨境贸易的“数字守门人”;若失败,则可能陷入为特定地区提供定制化IT服务的泥潭。在资本与耐心双重稀缺的非洲创业环境中,这是一场与时间的豪赌。

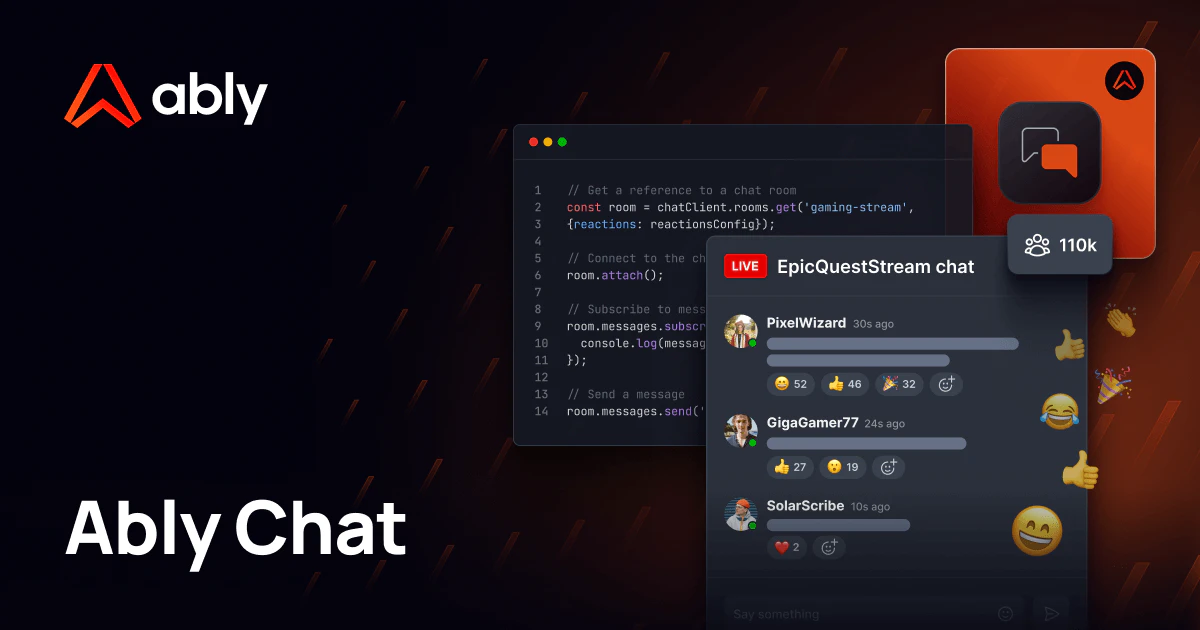

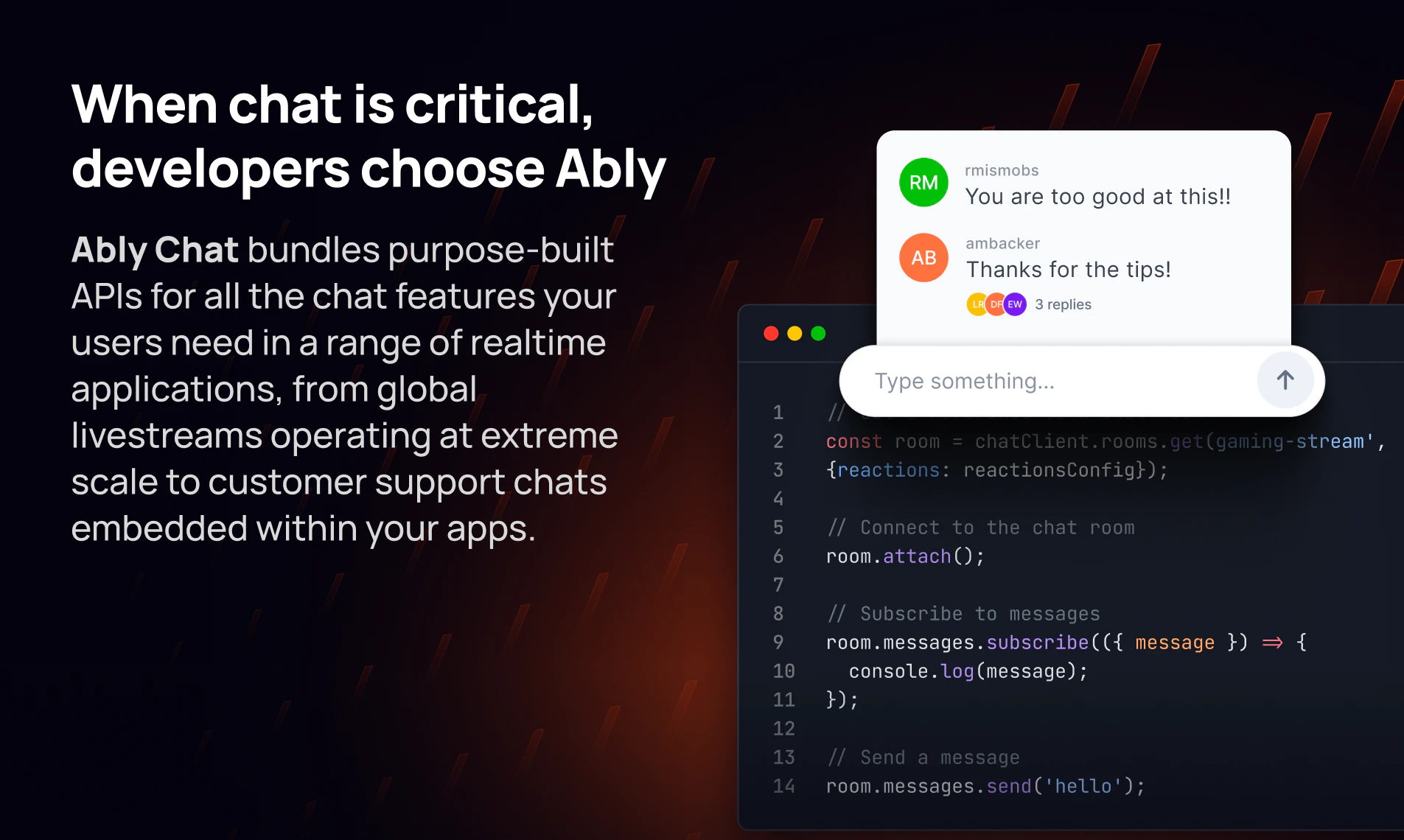

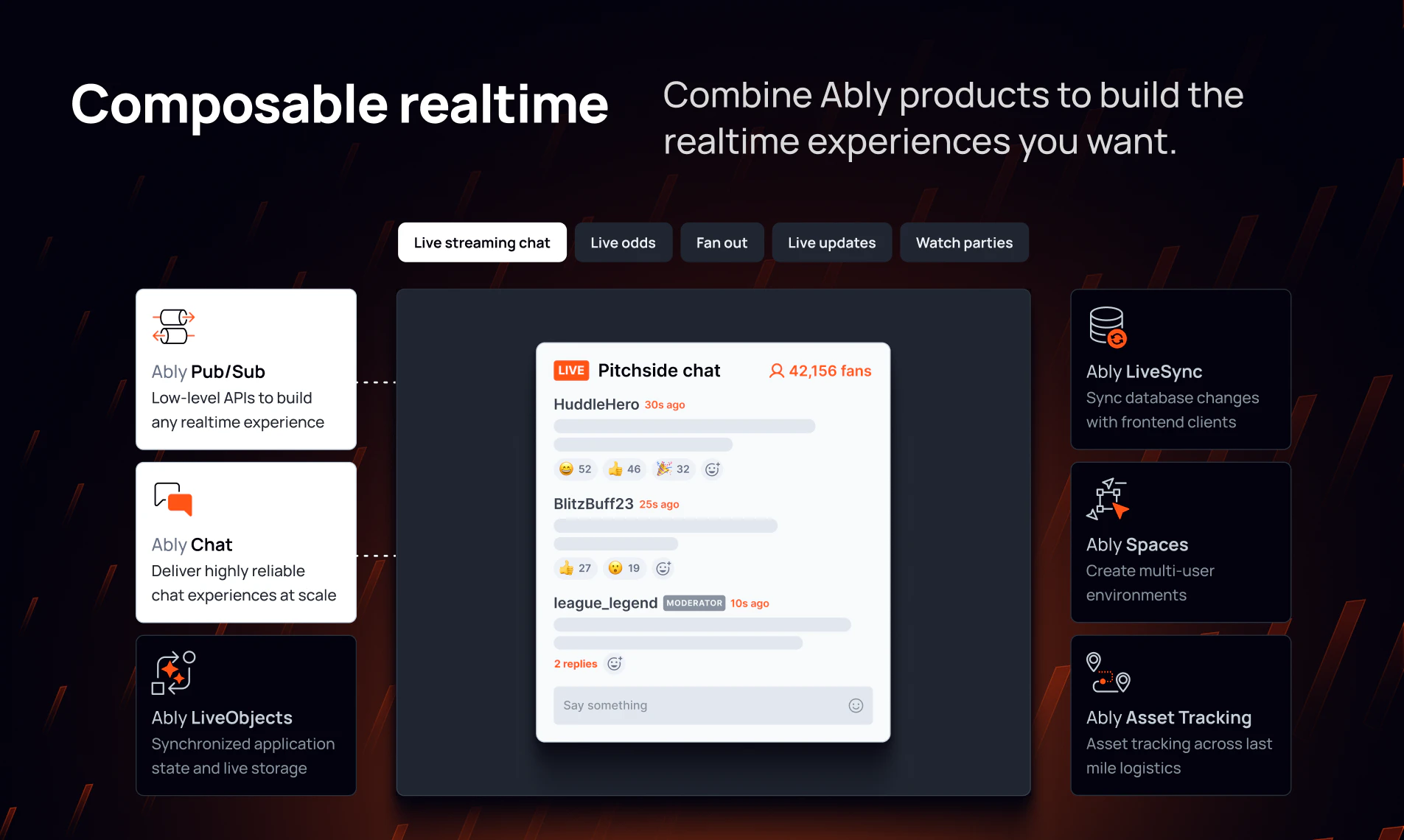

一句话介绍:Ably Chat为开发者提供了一个专为大规模实时通信设计的API,解决了在直播、游戏、客服等高并发场景下,构建稳定、可扩展聊天功能的复杂技术难题。

Messaging

Developer Tools

Chat rooms

实时通信API

开发者工具

可扩展架构

聊天功能

直播互动

游戏内通讯

SDK

企业级基础设施

高可用性

用户评论摘要:用户关注定价模式与大规模使用的成本;认可其在直播、游戏等高并发场景的价值,强调消息顺序和稳定性是关键;赞赏其开箱即用的功能(如输入提示)节省开发成本;并提及对AI智能体集成等前沿演进的兴趣。

AI 锐评

Ably Chat的亮相,精准刺中了实时通信领域的“阿克琉斯之踵”——规模。它不只是在功能列表上做加法,而是将“严肃规模”作为核心价值主张,这一定位本身就极具穿透力。产品介绍中反复强调的“消息顺序保证”、“99.999%可用性”和“全球边缘网络”,并非锦上添花,而是直面直播、游戏等场景下,海量并发导致的消息乱序、延迟、崩溃等核心痛点。这暗示其底层可能采用了更严谨的分布式共识机制,而不仅仅是简单的Pub/Sub。

用户评论也印证了这一点:资深开发者关心的不是花哨的UI套件,而是“分区下的消息顺序保证”和“定价如何随使用量扩展”。这揭示了两个关键挑战:一是技术上的“正确性”在规模压力下成本极高;二是商业上的“可预测性”,避免因成功(用户量暴增)而导致的成本失控。Ably Chat若真能在这两点上给出优雅解,其价值将远超一个功能丰富的API,而是成为数字业务敢于部署实时互动功能的“信心基础设施”。

然而,其真正的试金石并非功能完备性,而是在极端场景下的实际表现与成本曲线。在拥挤的CPaaS市场中,它必须证明自己不仅是另一个“功能更多”的聊天API,而是能在关键时刻扛住“最终BOSS”的工程级解决方案。否则,它可能只是技术栈中的一个可选项,而非必选项。

Hey PH!

We launched Accent Conversion for meetings back in March. People loved it, but we kept hearing the same request: "Can I use this on YouTube?"

So we went and looked. Pick any popular lecture by a non-native English speaker and scroll to the comments. It's always the same: "Can't understand what he's saying." "Had to watch at 0.75x." "Captions are completely wrong."

Millions of views. Brilliant speakers. Huge chunk of the audience rewinding or just leaving.

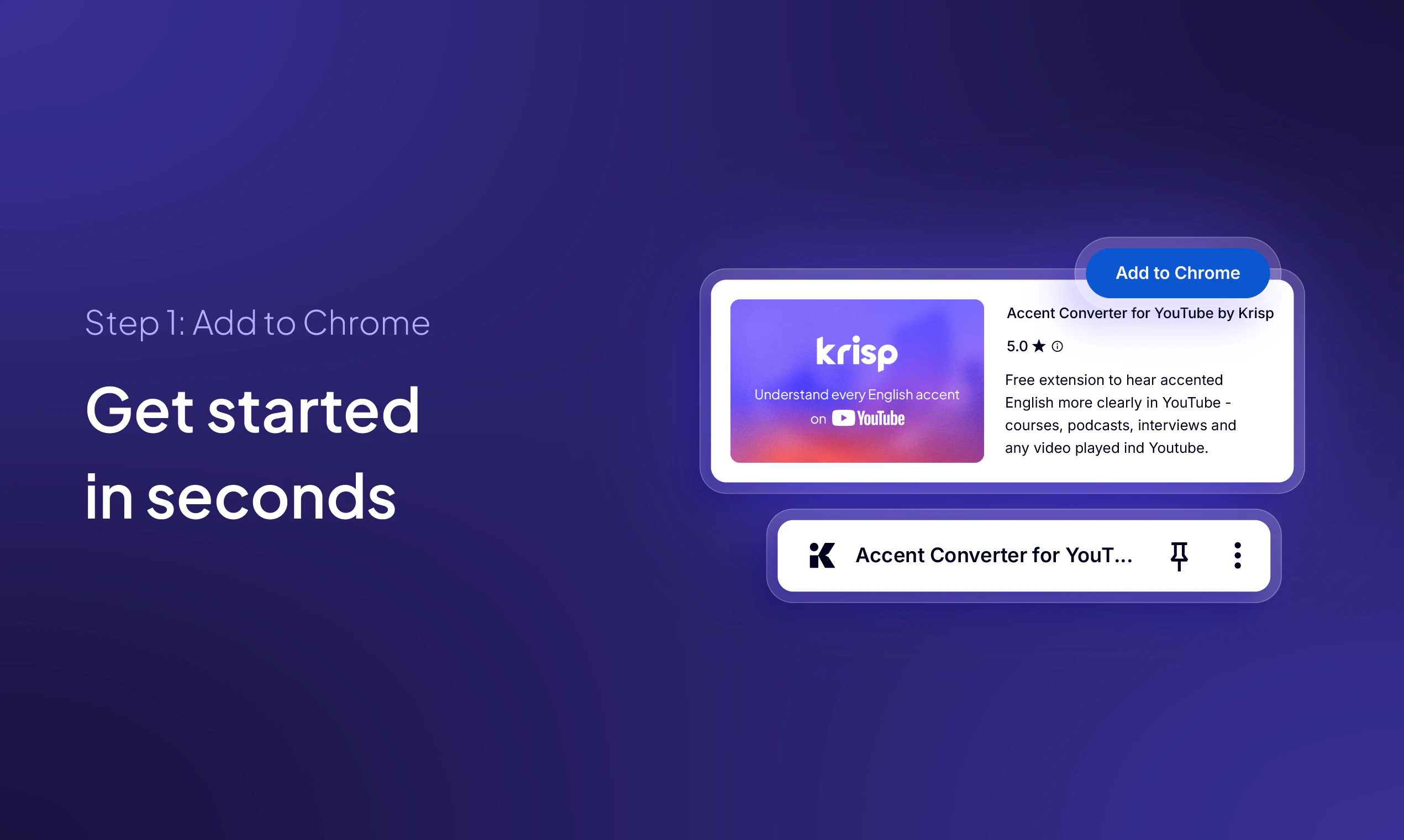

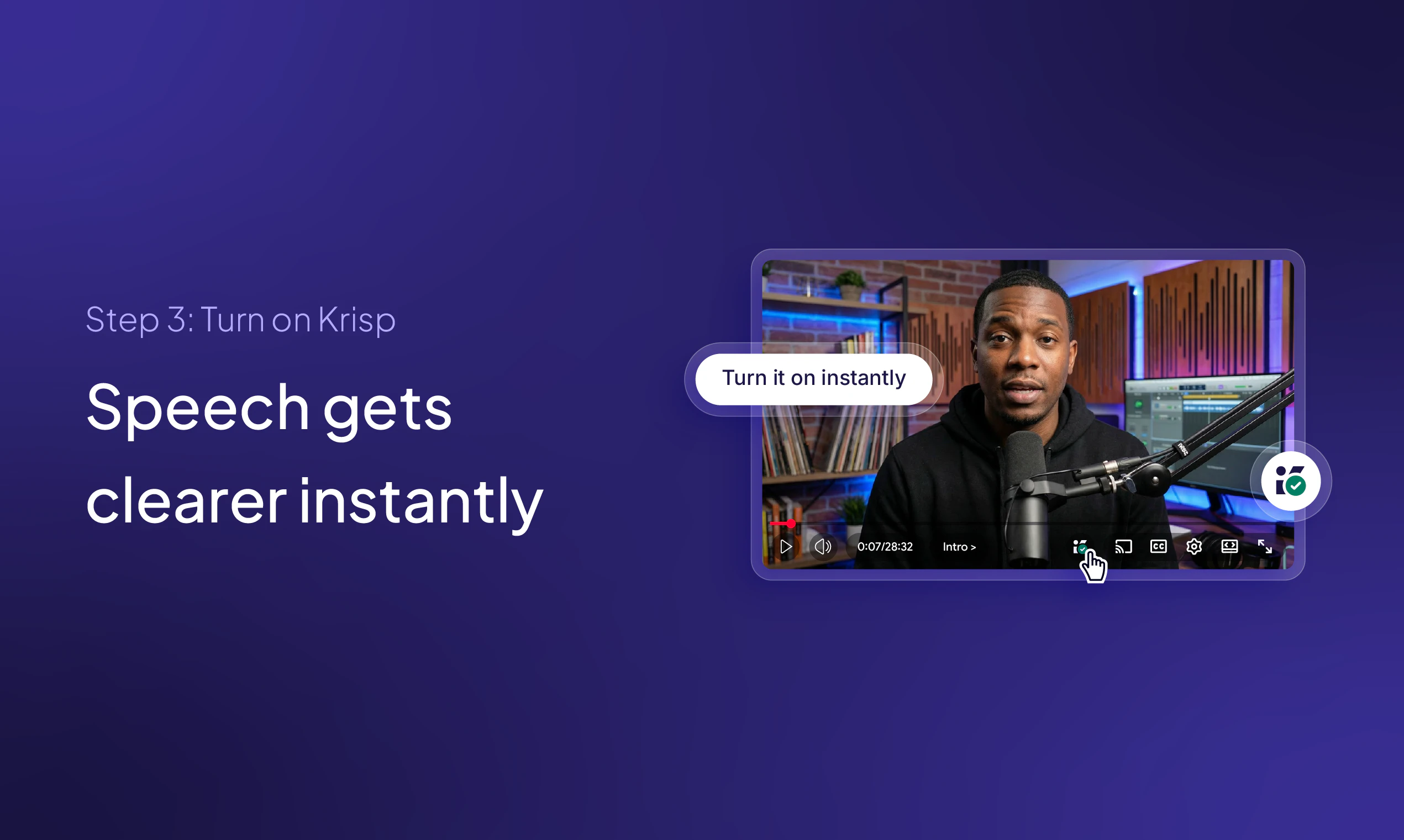

That felt wrong. The content is right there. The only thing in the way is accent clarity. So we packaged our on-device AI into a free Chrome extension.

Open a YouTube video, toggle it on, speech gets clearer. That's it.

Ask me anything, I'm here all day.

Great job Krisp team! This is a life-saver 🤌

btw, what accents does it support?

Most tools focus on captions, but real-time accent normalization in audio addresses a different problem! Curious how it handles heavily accented technical content like coding tutorials or medical lectures where vocabulary matters as much as clarity. Congrats on the launch, team Krisp!

So freaking useful! Do you see accent conversion becoming as standard as captions or playback speed on video platforms or maybe youtube will add this feature itself??

We built it for builders like us 🎉 Everyone I know who watches Vibe coding tutorials needs to try this.

Looks Neat! Can we take it beyond meeting rooms ? Can we use this for voice over for my content, with natural emotion ?

We are Curious how natutal the converted voice sounds across different accents. does it preserve the speaker’s tone or lean more neutral?

Just tried and it's amazing! Can't believe Youtube couldn't come up with this feature all these years!

Just installed it. Tried it on an NPTEL lecture and before/after is impressive

intresting approach, but I hope it doesn’t distort the speaker’s tone too much. That natural flow is still important for learning.

Cool !! I usually spend half of my time on technical tutorials trying to decipher specific terms that get lost in translation or heavy accents.

Does this handle real time processing well enough ? there is no weird audio lag with the video ? if the sync is tight , this is very useful .

This feels like when captions first became standard on YouTube. Once you have it you wonder how you watched without it

Does it affect the voice (changes it, or maybe makes it robotic)?

Heheh! Need this. As a non-native english speaker we constantly deal with it and I'm sure many founders gonna feel the same Asti! Wish you all the best here

I can finally understand my CS lectures

Now I can go back to my resolution to learn Python, since I will actually understand the content. :)

You know, 40 seconds of video and everything is clear. The main feature is clear!)

love the idea. very useful for native language speakers. what's the accuracy of these models, the cost of a wrong accent can be significant in some use cases like critical meetings.

I have struggled in meetings, where people don't have good microphones or are sitting away from the laptop or a lot of background noise, If Krisp can support that as well it will be awesome.

But that will be real time.

Finally. I spend half my day on YouTube watching technical documentation and deep-dives, and it's a constant struggle when the auto-captions can't parse technical jargon because of a thick accent. I usually end up wasting time rewinding or just giving up on the video entirely.

Seeing this as an on-device Chrome extension is interesting from a performance standpoint. I'm curious about the browser overhead—have you guys noticed any significant impact on CPU or RAM usage during longer 30+ minute lectures?

This is a genuine friction point for the global dev community. Great to see a practical use case for on-device AI that isn't just another chatbot. Good luck with the launch!

How is this different from auto-captions? Is it actually changing the audio?

This is one of those apps you only appreciate once you’ve tried it in a noisy environment. Curious how it performs with more complex background noise like cafés or street traffic.

This is a huge deal for educational content. I teach an Excel for Financial Modelling course on Udemy (https://www.udemy.com/course/excel-for-financial-modelling/) and a big chunk of my students are non-native English speakers working in finance globally. Accent barriers in video-based learning are real, and on-device AI that solves this without requiring the creator to re-record is brilliant. Curious if you're seeing higher retention rates on videos where accent conversion is active?

This is what I can genuinely useful, practical leverage of AI, very nice. I have one question and one suggestion.

Question: does it work well also when people are talking on another or only for solo speakers?

Suggestion: Make also a funny/gimicky version where everyone can switch their voices to anything they like - I always wanted to sound like British royalty :)

YouTube has had speed control since forever but accent was always the missing piece. I've rewound the same sentence four times trying to catch a word. One toggle sounds right - this doesn't need to be complicated.